I.

An article by Adam Grant called Differences Between Men And Women Are Vastly Exaggerated is going viral, thanks in part to a share by Facebook exec Sheryl Sandberg. It’s a response to an email by a Google employee saying that he thought Google’s low female representation wasn’t a result of sexism, but a result of men and women having different interests long before either gender thinks about joining Google. Grant says that gender differences are small and irrelevant to the current issue. I disagree.

Grant writes:

It’s always precarious to make claims about how one half of the population differs from the other half—especially on something as complicated as technical skills and interests. But I think it’s a travesty when discussions about data devolve into name-calling and threats. As a social scientist, I prefer to look at the evidence.

The gold standard is a meta-analysis: a study of studies, correcting for biases in particular samples and measures. Here’s what meta-analyses tell us about gender differences:

When it comes to abilities, attitudes, and actions, sex differences are few and small.

Across 128 domains of the mind and behavior, “78% of gender differences are small or close to zero.” A recent addition to that list is leadership, where men feel more confident but women are rated as more competent.

There are only a handful of areas with large sex differences: men are physically stronger and more physically aggressive, masturbate more, and are more positive on casual sex. So you can make a case for having more men than women… if you’re fielding a sports team or collecting semen.

The meta-analysis Grant cites is Hyde’s, available here. I’ve looked into it before, and I don’t think it shows what he wants it to show.

Suppose I wanted to convince you that men and women had physically identical bodies. I run studies on things like number of arms, number of kidneys, size of the pancreas, caliber of the aorta, whether the brain is in the head or the chest, et cetera. 90% of these come back identical – in fact, the only ones that don’t are a few outliers like “breast size” or “number of penises”. I conclude that men and women are mostly physically similar. I can even make a statistic like “men and women are physically the same in 78% of traits”.

Then I go back to the person who says women have larger breasts and men are more likely to have penises, and I say “Ha, actually studies prove men and women are mostly physically identical! I sure showed you, you sexist!”

I worry that Hyde’s analysis plays the same trick. She does a wonderful job finding that men and women have minimal differences in eg “likelihood of smiling when not being observed”, “interpersonal leadership style”, et cetera. But if you ask the man on the street “Are men and women different?”, he’s likely to say something like “Yeah, men are more aggressive and women are more sensitive”. And in fact, Hyde found that men were indeed definitely more aggressive, and women indeed definitely more sensitive. But throw in a hundred other effects nobody cares about like “likelihood of smiling when not observed”, and you can report that “78% of gender differences are small or zero”.

Hyde found moderate or large gender differences in (and here I’m paraphrasing very scientific-sounding constructs into more understandable terms) aggressiveness, horniness, language abilities, mechanical abilities, visuospatial skills, tendermindedness, assertiveness, comfort with body, various physical abilities, and computer skills.

Perhaps some people might think that finding moderate-to-large differences in mechanical abilities, computer skills, etc supports the idea that gender differences might play a role in gender balance in the tech industry. But because Hyde’s meta-analysis drowns all of this out with stuff about smiling-when-not-observed, Grant is able to make it sound like Hyde proves his point.

It’s actually worse than this, because Grant misreports the study findings in various ways [EDIT: Or possibly not, see here]. For example, he states that the sex differences in physical aggression and physical strength are “large”. The study very specifically says the opposite of this. Its three different numbers for physical aggression (from three different studies) are 0.4, 0.59, and 0.6, and it sets a cutoff for “large” effects at 0.66 or more.

On the other hand, Grant fails to report an effect that actually is large: mechanical reasoning ability (in the paper as Feingold 1998 DAT mechanical reasoning). There is a large gender difference on this, d = 0.76.

And although Hyde doesn’t look into it in her meta-analysis, other meta-analyses like this one find a large effect size (d = 1.18) for thing-oriented vs. people-oriented interest, the very claim at the center of the argument Grant is trying to rebut.

So Grant tries to argue against large thing-oriented vs. people-oriented differences by citing a meta-analysis that doesn’t look into them at all. He then misreports the findings of that meta-analysis, exaggerating effects that fit his thesis and failing to report the ones that don’t. Finally, he cites a “summary statistic” that averages away the variation we’re looking for by combining it with a bunch of noise, and claims the noise proves his point even though the variation is as big as ever.
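To make the “summary statistic” complaint concrete, here is a minimal sketch with entirely made-up numbers. It assumes a toy battery of 100 traits, 78 with a negligible difference and 22 with a difference of size `big`, and a “small” cutoff of d ≤ 0.35; the cutoff, the battery, and the function names are my own illustrative assumptions, not figures taken from Hyde’s paper. The point is only that the “share of differences that are small” stays the same no matter how large the remaining differences are.

```python
def share_small(effect_sizes, small_cutoff=0.35):
    """Fraction of effect sizes whose magnitude is at or below the cutoff.

    small_cutoff=0.35 is an assumed threshold for illustration only.
    """
    small = [d for d in effect_sizes if abs(d) <= small_cutoff]
    return len(small) / len(effect_sizes)


def toy_battery(big):
    """Hypothetical battery: 78 negligible differences plus 22 of size `big`."""
    return [0.05] * 78 + [big] * 22


for big in (0.5, 1.0, 2.0):
    # The headline statistic does not move as the non-small effects grow.
    print(f"non-small effects of d={big}: share small = {share_small(toy_battery(big)):.2f}")
```

However big the 22 interesting effects get, the headline number does not budge, which is why it cannot tell you anything about them.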

II.

Next, Grant claims that there are no sex differences in mathematical ability, and also that the sex differences in mathematical ability are culturally determined. I’m not really sure what he means [EDIT: He means sex differences that exist in other countries] but I agree with his first argument – at the levels we’re looking at, there’s no gender difference in math ability.

Grant says that these foreign differences in math ability exist but are due to stereotypes, and so are less noticeable in more progressive, gender-equitable nations:

Girls do as well as boys—or slightly better—in math in elementary, but boys have an edge by high school. Male advantages are more likely to exist in countries that lack gender equity in school enrollment, women in research jobs, and women in parliament—and that have stereotypes associating science with males.

Again, my research suggests no average gender difference in ability, so I can’t speak to whether these differences are caused by stereotypes or not. But I want to go back to the original question: why is there a gender difference in tech-industry-representation [in the US]? Is this also due to stereotypes and the effect of an insufficiently gender-equitable society? Do we find that “countries that lack gender equity in school enrollment” and “stereotypes associating science with males” have fewer women in tech?

No. Galpin investigated the percent of women in computer classes all around the world. Her number of 26% for the US is slightly higher than I usually hear, probably because it’s older (the percent women in computing has actually gone down over time!). The least sexist countries I can think of – Sweden, New Zealand, Canada, etc – all have somewhere around the same number (30%, 20%, and 24%, respectively). The most sexist countries do extremely well on this metric! The highest numbers on the chart are all from non-Western, non-First-World countries that do middling-to-poor on the Gender Development Index: Thailand with 55%, Guyana with 54%, Malaysia with 51%, Iran with 41%, Zimbabwe with 41%, and Mexico with 39%. Needless to say, Zimbabwe is not exactly famous for its deep commitment to gender equality.

Why is this? It’s a very common and well-replicated finding that the more progressive and gender-equal a country, the larger gender differences in personality of the sort Hyde found become. I agree this is a very strange finding, but it’s definitely true. See eg Journal of Personality and Social Psychology, Sex Differences In Big Five Personality Traits Across 55 Cultures:

Previous research suggested that sex differences in personality traits are larger in prosperous, healthy, and egalitarian cultures in which women have more opportunities equal with those of men. In this article, the authors report cross-cultural findings in which this unintuitive result was replicated across samples from 55 nations (n = 17,637).

In case you’re wondering, the countries with the highest gender differences in personality are France, Netherlands, and the Czech Republic. The countries with the lowest sex differences are Indonesia, Fiji, and the Congo.

I conclude that whatever gender-equality-stereotype-related differences Grant has found in the nonexistent math ability difference between men and women, they are more than swamped by the large opposite effects in gender differences in personality. This meshes with what I’ve been saying all along: at the level we’re talking about here, it’s not about ability, it’s about interest.

III.

We know that interests are highly malleable. Female students become significantly more interested in science careers after having a teacher who discusses the problem of underrepresentation. And at Harvey Mudd College, computer science majors were around 10% women a decade ago. Today they’re 55%.

I highly recommend Freddie deBoer’s Why Selection Bias Is The Most Powerful Force In Education. If an educational program shows amazing results, and there’s any possible way it’s selection bias – then it’s selection bias.

I looked into Harvey Mudd’s STEM admission numbers, and, sure enough, they admit women at 2.5x the rate of men. So, yeah, it’s selection bias.

I don’t blame them. All they have to do is cultivate a reputation as a place to go if you’re a woman interested in computer science, attract lots of female CS applicants, then make sure to admit all the CS-interested female applicants they get. In exchange, they get constant glowing praise from every newspaper in the country (1, 2, 3, 4, 5, 6, 7, 8, 9, 10, etc, etc, etc).

How would we know this was selection bias if we couldn’t just look at the numbers? The graph that Grant himself cites just above this statement shows that, over the same ten year period, percent women CS graduates has declined nationwide. This has corresponded with such a massive push to get more women in tech that…well, that a college which succeeds will get constant glowing praise from every newspaper in the country even when they admit they’re using selection bias. Do you think no one else has tried? Every college diversity office in the country is working overtime to try to get more women into tech, there are women in tech scholarships, women in tech conferences, women in tech prizes – and, over the period that’s happened, Grant’s own graph shows the percent of women in tech going down.

(I don’t understand why it’s going down as opposed to steady, but my guess is a combination of constant messaging that there are no women in tech making women think it isn’t for them, plus the effect from society getting more gender-equitable that we described in Part II – ie we’re now less like Zimbabwe, and so we can’t expect our gender ratios to be as good as theirs are).

Look. If I recruit only gingers, and I admit only gingers, I can get a 100% ginger CS program. That doesn’t mean I’ve proven that gingers are really more interested in CS than everyone else, and it was just discrimination holding them back. It means I’ve done what every single private school and college does anyway, all the time – finagle with admissions to make myself look good.

[EDIT: Some further discussion by Mudd students in the comments here]

IV.

Back to Grant:

4. There are sex differences in interests, but they’re not biologically determined.

The data on occupational interests do reveal strong male preferences for working with things and strong female preferences for working with people. But they also reveal that men and women are equally interested in working with data.

So why are there so many more male than female engineers? Because women have systematically been discouraged from working with computers. Look at trends in college majors: since the 1980s, the proportion of female majors has gone up in science and medicine and law, but down in computer science.

Before we discuss this, a quick step back.

In the year 1850, women were locked out of almost every major field, with a few exceptions like nursing and teaching. The average man of the day would have been equally confident that women were unfit for law, unfit for medicine, unfit for mathematics, unfit for linguistics, unfit for engineering, unfit for journalism, unfit for psychology, and unfit for biology. He would have had various sexist justifications – women shouldn’t be in law because it’s too competitive and high-pressure; women shouldn’t be in medicine because they’re fragile and will faint at the sight of blood; et cetera.

As the feminist movement gradually took hold, women conquered one of these fields after another. 51% of law students are now female. So are 49.8% of medical students, 45% of math majors, 60% of linguistics majors, 60% of journalism majors, 75% of psychology majors, and 60% of biology postdocs. Yet for some reason, engineering remains only about 20% female.

And everyone says “Aha! I bet it’s because of negative stereotypes!”

This makes no sense. There were negative stereotypes about everything! Somebody has to explain why the equal and greater negative stereotypes against women in law, medicine, etc were completely powerless, yet for some reason the negative stereotypes in engineering were the ones that took hold and prevented women from succeeding there.

And if your answer is just going to be that apparently the negative stereotypes in engineering were stronger than the negative stereotypes about everything else, why would that be? Put yourself in the shoes of our Victorian sexist, trying to maintain his male privilege. He thinks to himself “Well, I suppose I could tolerate women doctors saving my life. And if I had to, I would accept women going into law and determining who goes free and who goes to jail. I’m even sort of okay with women going into journalism and crafting the narratives that shape our world. But women building bridges? NO MERE FEMALE COULD EVER DO SUCH A THING!” Really? This is the best explanation the world can come up with? Doesn’t anyone have at least a little bit of curiosity about this?

(and I don’t think it’s just coincidence – ie I don’t think it’s just that a bunch of head engineers happened to be really sexist, and a bunch of head doctors happened to be really non-sexist. The same patterns apply through pretty much every First World country, and if it were just a matter of personalities you would expect them to differ from place to place.)

Whenever I ask this question, I get something like “engineering and computer science are two of the highest-paying, highest-status jobs, so of course men would try to keep women out of them, in order to maintain their supremacy”. But I notice that doctors and lawyers are also pretty high-paying, high-status jobs, and that nothing of the sort happened there. And that when people aren’t using engineering/programming’s high status to justify their beliefs about gender stereotypes in it, they’re ruthlessly making fun of engineers and programmers, whether it’s watching Big Bang Theory or reading Dilbert or just going on about “pocket protectors”.

Meanwhile, men make up only 10% of nurses, only 20% of new veterinarians, only 25% of new psychologists, about 25% of new pediatricians, about 26% of forensic scientists, about 28% of medical managers, and 42% of new biologists.

Note that many of these imbalances are even more lopsided than the imbalance favoring men in technology, and that many of these jobs earn much more than the average programmer. For example, the average computer programmer only makes about $80,000; the average veterinarian makes about $88,000, and the average pediatrician makes a whopping $170,000.

As long as you’re comparing some poor woman janitor to a male programmer making $80,000, you can talk about how it’s clearly sexism against women getting the good jobs. But once you take off the blinders and try to look at an even slightly bigger picture, you start wondering why veterinarians, who make even more money than that, are even more lopsidedly female than programmers are male. And then you start thinking that maybe you need some framework more sophisticated than the simple sexism theory in order to predict who’s doing all of these different jobs. And once you have that framework, maybe the sexism theory isn’t necessary any longer, and you can throw it out, and use the same theory to predict why women dominate veterinary medicine and psychology, why men dominate engineering and computer science, and why none of this has any relation at all to what fields that some sexist in the 1850s wanted to keep women out of.

So let’s look deeper into what prevents women from entering these STEM fields.

Does it happen at the college level? About 20% of high school students taking AP Computer Science are women. The ratio of women graduating from college with computer science degrees is exactly what you would expect from the ratio of women who showed interest in it in high school (the numbers are even lower in Britain, where 8% of high school computer students are girls). So differences exist before the college level, and nothing that happens at the college level – no discriminatory professors, no sexist classmates – changes the numbers at all.

Does it happen at the high school level? There’s not a lot of obvious room for discrimination – AP classes are voluntary; students who want to go into them do, and students who don’t want to go into them don’t. There are no prerequisites except basic mathematical competency or other open-access courses. It seems like of the people who voluntarily choose to take AP classes that nobody can stop them from going into, 80% are men and 20% are women, which exactly matches the ratio of each gender that eventually get tech company jobs.

Rather than go through every step individually, I’ll skip to the punch and point out that the same pattern repeats in middle school, elementary school, and about as young as anybody has ever bothered checking. So something produces these differences very early on. What might that be?

Might young women be avoiding computers because they’ve absorbed stereotypes telling them that they’re not smart enough, or that they’re “only for boys”? No. As per Shashaani 1997, “[undergraduate] females strongly agreed with the statement ‘females have as much ability as males when learning to use computers’, and strongly disagreed with the statement ‘studying about computers is more important for men than for women’.” On a scale of 1-5, where 5 represents complete certainty in gender equality in computer skills, and 1 complete certainty in inequality, the average woman chooses 4.2; the average male 4.03. This seems to have been true since the very beginning of the age of personal computers: Smith 1986 finds that “there were no significant differences between males and females in their attitudes of efficacy or sense of confidence in ability to use the computer, contrary to expectation…females [showed] stronger beliefs in equity of ability and competencies in use of the computer.” This is a very consistent result and you can find other studies corroborating it in the bibliographies of both papers.

Might girls be worried not by stereotypes about computers themselves, but by stereotypes that girls are bad at math and so can’t succeed in the math-heavy world of computer science? No. About 45% of college math majors are women, compared to (again) only 20% of computer science majors. Undergraduate mathematics itself more-or-less shows gender parity. This can’t be an explanation for the computer results.

Might sexist parents be buying computers for their sons but not their daughters, giving boys a leg up in learning computer skills? In the 80s and 90s, everybody was certain that this was the cause of the gap. Newspapers would tell lurid (and entirely hypothetical) stories of girls sitting down to use a computer when suddenly a boy would show up, push her away, and demand it all to himself. But move forward a few decades and now young girls are more likely to own computers than young boys – with little change in the high school computer interest numbers. So that isn’t it either.

So if it happens before middle school, and it’s not stereotypes, what might it be?

One subgroup of women does not display these gender differences at any age. These are women with congenital adrenal hyperplasia, a condition that gives them a more typically-male hormone balance. For a good review, see Gendered Occupational Interests: Prenatal Androgen Effects on Psychological Orientation to Things Versus People. They find that:

Consistent with hormone effects on interests, females with CAH are considerably more interested than are females without CAH in male-typed toys, leisure activities, and occupations, from childhood through adulthood (reviewed in Blakemore et al., 2009; Cohen-Bendahan et al., 2005); adult females with CAH also engage more in male-typed occupations than do females without CAH (Frisén et al., 2009). Male-typed interests of females with CAH are associated with degree of androgen exposure, which can be inferred from genotype or disease characteristics (Berenbaum et al., 2000; Meyer-Bahlburg et al., 2006; Nordenström et al., 2002). Interests of males with CAH are similar to those of males without CAH because both are exposed to high (sex-typical) prenatal androgens and are reared as boys.

Females with CAH do not provide a perfect test of androgen effects on gendered characteristics because they differ from females without CAH in other ways; most notably they have masculinized genitalia that might affect their socialization. But, there is no evidence that parents treat girls with CAH in a more masculine or less feminine way than they treat girls without CAH (Nordenström et al., 2002; Pasterski et al., 2005). Further, some findings from females with CAH have been confirmed in typical individuals whose postnatal behavior has been associated with prenatal hormone levels measured in amniotic fluid. Amniotic testosterone levels were found to correlate positively with parent-reported male-typed play in girls and boys at ages 6 to 10 years (Auyeung et al., 2009).

The psychological mechanism through which androgen affects interests has not been well-investigated, but there is some consensus that sex differences in interests reflect an orientation toward people versus things (Diekman et al., 2010) or similar constructs, such as organic versus inorganic objects (Benbow et al., 2000). The Things-People distinction is, in fact, the major conceptual dimension underlying the measurement of the most widely-used model of occupational interests (Holland, 1973; Prediger, 1982); it has also been used to represent leisure interests (Kerby and Ragan, 2002) and personality (Lippa, 1998).

In their own study, they compare 125 people and find a Things-People effect size of -0.75 – that is, the difference between CAH women and unaffected women is more than half the difference between men and unaffected women. They write:

The results support the hypothesis that sex differences in occupational interests are due, in part, to prenatal androgen influences on differential orientation to objects versus people. Compared to unaffected females, females with CAH reported more interest in occupations related to Things versus People, and relative positioning on this interest dimension was substantially related to amount of prenatal androgen exposure.

What is this “object vs. people” distinction?

It’s pretty relevant. Meta-analyses have shown a very large (d = 1.18) difference in healthy men and women (ie without CAH) in this domain. It’s traditionally summarized as “men are more interested in things and women are more interested in people”. I would flesh out “things” to include both physical objects like machines as well as complex abstract systems; I’d also add in another finding from those same studies that men are more risk-taking and like danger. And I would flesh out “people” to include communities, talking, helping, children, and animals.
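For a rough sense of what an effect size like d = 1.18 means in practice, here is a minimal sketch. It assumes both groups are normally distributed with equal variance, which is my simplifying assumption and not something the meta-analyses are quoted as saying; the function names are mine too. Under that assumption, the chance that a randomly chosen member of the higher-scoring group outscores a randomly chosen member of the other group is Φ(d/√2), and the shared area under the two curves is 2Φ(−|d|/2).

```python
from math import erf, sqrt


def normal_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + erf(x / sqrt(2.0)))


def probability_of_superiority(d: float) -> float:
    """P(random draw from the higher group exceeds a random draw from the lower group).

    Assumes two normal distributions with equal variance (an assumption).
    """
    return normal_cdf(d / sqrt(2.0))


def overlap_coefficient(d: float) -> float:
    """Shared area of two equal-variance normal curves whose means differ by d."""
    return 2.0 * normal_cdf(-abs(d) / 2.0)


# Effect sizes mentioned in this post: physical aggression (0.4),
# mechanical reasoning (0.76), things-vs-people interest (1.18).
for d in (0.4, 0.76, 1.18):
    print(f"d={d}: P(superiority)={probability_of_superiority(d):.3f}, "
          f"overlap={overlap_coefficient(d):.3f}")
```

For d = 1.18 the first number comes out to roughly 0.8. That is a big difference, but the two distributions still overlap substantially, which fits the point that none of this applies to any given man or woman.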

So this theory predicts that men will be more likely to choose jobs with objects, machines, systems, and danger; women will be more likely to choose jobs with people, talking, helping, children, and animals.

Somebody armed with this theory could pretty well predict that women would be interested in going into medicine and law, since both of them involve people, talking, and helping. They would predict that women would dominate veterinary medicine (animals, helping), psychology (people, talking, helping, sometimes children), and education (people, children, helping). Of all the hard sciences, they might expect women to prefer biology (animals). And they might expect men to do best in engineering (objects, machines, abstract systems, sometimes danger) and computer science (machines, abstract systems).

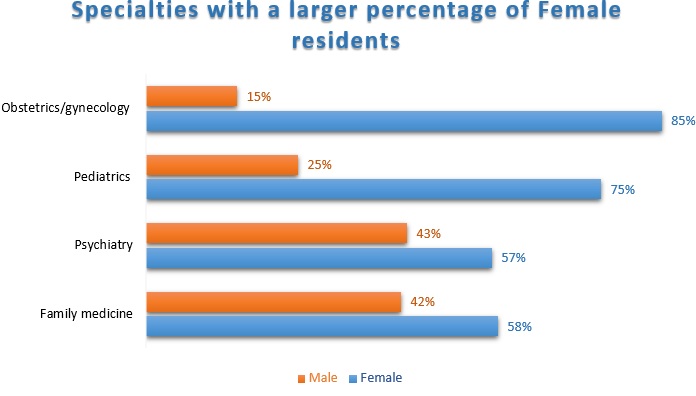

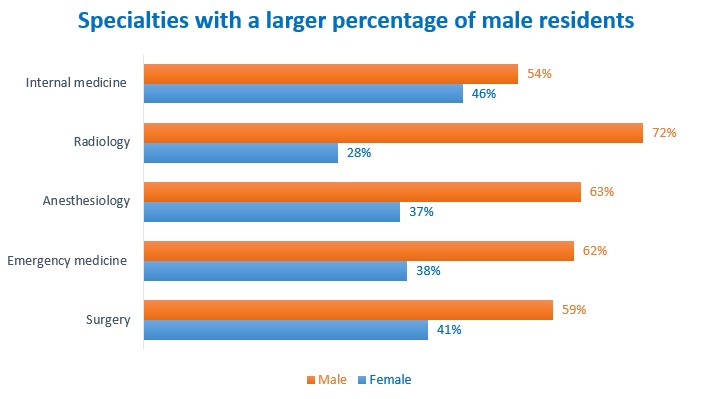

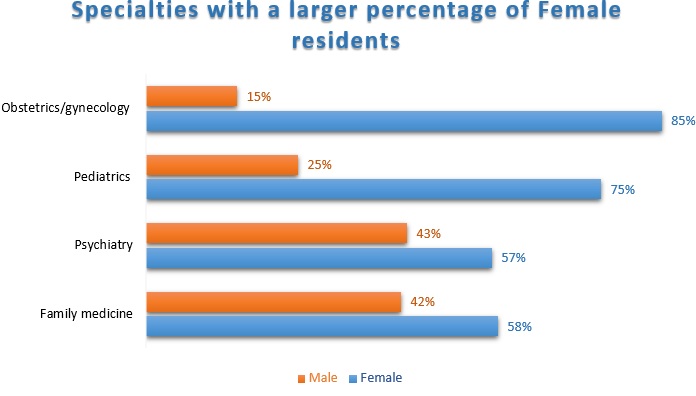

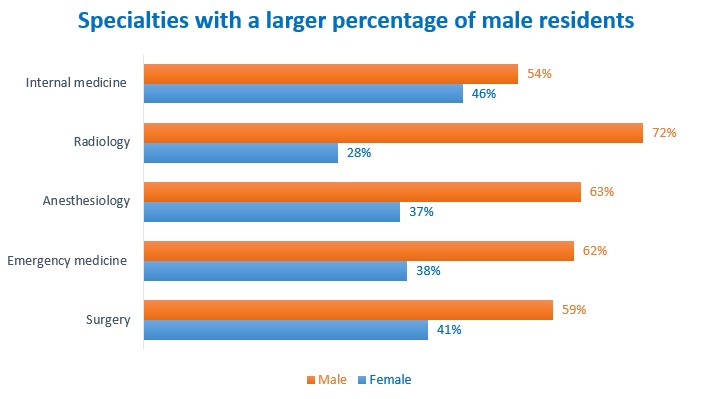

I mentioned that about 50% of medical students were female, but this masks a lot of variation. There are wide differences in doctor gender by medical specialty. For example:

A privilege-based theory fails – there’s not much of a tendency for women to be restricted to less prestigious and lower-paying fields – Ob/Gyn (mostly female) is extremely lucrative, and internal medicine (mostly male) is pretty low-paying for a medical job.

But the people/thing theory above does extremely well! Pediatrics is babies/children, Psychiatry is people/talking (and of course women are disproportionately child psychiatrists), OB/GYN is babies (though admittedly this probably owes a lot to patients being more comfortable with female gynecologists) and family medicine is people/talking/babies/children.

Meanwhile, Radiology is machines and no patient contact, Anaesthesiology is also machines and no patient contact, Emergency Medicine is danger, and Surgery is machines, danger, and no patient contact.

Here’s another fun thing you can do with this theory: understand why women are so well represented in college math classes. Women are around 20% of CS majors, physics majors, engineering majors, etc – but almost half of math majors! This should be shocking. Aren’t we constantly told that women are bombarded with stereotypes about math being for men? Isn’t the archetypal example of children learning gender roles that Barbie doll that said “Math is hard, let’s go shopping?” And yet women’s representation in undergraduate math classes is really quite good.

I was totally confused by this for a while until a commenter directed me to the data on what people actually do with math degrees. The answer is mostly: they become math teachers. They work in elementary schools and high schools, with people.

Then all those future math teachers leave for the schools after undergrad, and so math grad school ends up with pretty much the same male-tilted gender balance as CS, physics, and engineering grad school.

This seems to me like the clearest proof that women being underrepresented in CS/physics/etc is just about different interests. It’s not that they can’t do the work – all those future math teachers do just as well in their math majors as everyone else. It’s not that stereotypes of what girls can and can’t do are making them afraid to try – whatever stereotypes there are about women and math haven’t dulled future math teachers’ willingness to complete difficult math courses one bit. And it’s not even about colleges being discriminatory and hostile (or at least however discriminatory and hostile they are it doesn’t drive away those future math teachers). It’s just that women are more interested in some jobs, and men are more interested in others. Figure out a way to make math people-oriented, and women flock to it. If there were as many elementary school computer science teachers as there are math teachers, gender balance there would equalize without any other effort.

I’m not familiar with any gender breakdown of legal specialties, but I will bet you that family law, child-related law, and various prosocial helping-communities law are disproportionately female, and patent law, technology law, and law working with scary dangerous criminals are disproportionately male. And so on for most other fields.

This theory gives everyone what they want. It explains the data about women in tech. It explains the time course around women in tech. It explains other jobs like veterinary medicine where women dominate. It explains which medical subspecialties women will be dominant or underrepresented in. It doesn’t claim that women are “worse than men” or “biologically inferior” at anything. It doesn’t say that no woman will ever be interested in things, or no man ever interested in people. It doesn’t even say that women in tech don’t face a lot of extra harassment (any domain with more men than women will see more potential perpetrators concentrating their harassment on fewer potential victims, which will result in each woman being more harassed).
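The parenthetical about harassment is just arithmetic, and a toy model makes it explicit. This is a sketch under loudly stated assumptions: a fixed fraction of men are harassers regardless of workplace, each produces the same number of incidents, and incidents are spread evenly over the women present. The rate of 5% and the function name are hypothetical placeholders, not data.

```python
def incidents_per_woman(n_men, n_women, harasser_rate=0.05, incidents_per_harasser=1.0):
    """Expected incidents per woman in a toy workplace.

    harasser_rate and incidents_per_harasser are hypothetical placeholders;
    the model assumes incidents are spread evenly across all women present.
    """
    total_incidents = n_men * harasser_rate * incidents_per_harasser
    return total_incidents / n_women


balanced = incidents_per_woman(n_men=50, n_women=50)
lopsided = incidents_per_woman(n_men=80, n_women=20)

# With identical per-man behavior, the 80/20 workplace concentrates
# more incidents on each woman than the 50/50 one.
print(f"50/50 workplace: {balanced:.2f} incidents per woman")
print(f"80/20 workplace: {lopsided:.2f} incidents per woman")
print(f"ratio: {lopsided / balanced:.1f}x")
```

In this toy setup the ratio depends only on the gender mix, not on the assumed harasser rate, so the same men behaving the same way produce a worse experience per woman in the more lopsided field.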

It just says that sometimes, in a population-based way that doesn’t necessarily apply to any given woman or any given man, women and men will have some different interests. Which should be pretty obvious to anyone who’s spent more than a few minutes with men or women.

V.

Why am I writing this?

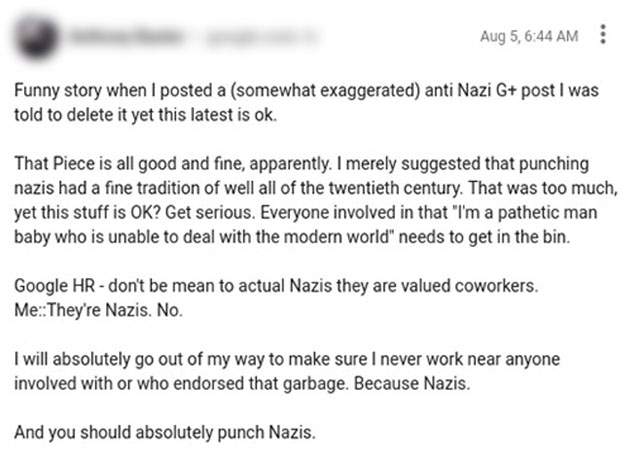

Grant’s piece was in response to a person at Google sending out a memo claiming some of this stuff. Here is a pretty typical response that a Googler sent to that memo – I’ve blocked the name so this person doesn’t get harassed over it, but if you doubt this is real I can direct you to the original:

A lot of people without connections to the tech industry don’t realize how bad it’s gotten. This is how bad. It would be pointless trying to do anything about this person in particular. This is the climate.

Silicon Valley was supposed to be better than this. It was supposed to be the life of the mind, where people who were interested in the mysteries of computation and cognition could get together and make the world better for everybody. Now it’s degenerated into this giant hatefest of everybody writing long screeds calling everyone else Nazis and demanding violence against them. Where if someone disagrees with the consensus, it’s just taken as a matter of course that we need to hunt them down, deny them the cloak of anonymity, fire them, and blacklist them so they can never get a job again. Where the idea that we shouldn’t be a surveillance society where we carefully watch our coworkers for signs of sexism so we can report them to the authorities is exactly the sort of thing you get reported to the authorities if people see you saying.

On the Twitter debate on this, someone mentioned that people felt afraid to share their thoughts anymore. An official, blue-checkmarked Woman In Tech activist responded with (note the 500+ likes):

This is the world we’ve built. Where making people live in fear is a feature, not a bug.

And: it can get worse. If you only read one link, let it be this one about the young adult publishing industry. A sample quote:

One author and former diversity advocate described why she no longer takes part: “I have never seen social interaction this fucked up,” she wrote in an email. “And I’ve been in prison.”

Many members of YA Book Twitter have become culture cops, monitoring their peers across multiple platforms for violations. The result is a jumble of dogpiling and dragging, subtweeting and screenshotting, vote-brigading and flagging wars, with accusations of white supremacy on one side and charges of thought-policing moral authoritarianism on the other. Representatives of both factions say they’ve received threats or had to shut down their accounts owing to harassment, and all expressed fear of being targeted by influential community members — even when they were ostensibly on the same side. “If anyone found out I was talking to you,” Mimi told me, “I would be blackballed.”

Dramatic as that sounds, it’s worth noting that my attempts to report this piece were met with intense pushback. Sinyard politely declined my request for an interview in what seemed like a routine exchange, but then announced on Twitter that our interaction had “scared” her, leading to backlash from community members who insisted that the as-yet-unwritten story would endanger her life. Rumors quickly spread that I had threatened or harassed Sinyard; several influential authors instructed their followers not to speak to me; and one librarian and member of the Newbery Award committee tweeted at Vulture nearly a dozen times accusing them of enabling “a washed-up YA author” engaged in “a personalized crusade” against the entire publishing community (disclosure: while freelance culture writing makes up the bulk of my work, I published a pair of young adult novels in 2012 and 2014.) With one exception, all my sources insisted on anonymity, citing fear of professional damage and abuse.

None of this comes as a surprise to the folks concerned by the current state of the discourse, who describe being harassed for dissenting from or even questioning the community’s dynamics. One prominent children’s-book agent told me, “None of us are willing to comment publicly for fear of being targeted and labeled racist or bigoted. But if children’s-book publishing is no longer allowed to feature an unlikable character, who grows as a person over the course of the story, then we’re going to have a pretty boring business.”

Another agent, via email, said that while being tarred as problematic may not kill an author’s career — “It’s likely made the rounds as gossip, but I don’t know it’s impacting acquisitions or agents offering representation” — the potential for reputational damage is real: “No one wants to be called a racist, or sexist, or homophobic. That stink doesn’t wash off.”

Authors seem acutely aware of that fact, and are tailoring their online presence — and in some cases, their writing itself — accordingly. One New York Times best-selling author told me, “I’m afraid. I’m afraid for my career. I’m afraid for offending people that I have no intention of offending. I just feel unsafe, to say much on Twitter. So I don’t.” She also scrapped a work in progress that featured a POC character, citing a sense shared by many publishing insiders that to write outside one’s own identity as a white author simply isn’t worth the inevitable backlash. “I was told, do not write that,” she said. “I was told, ‘Spare yourself.’”

Another author recalled being instructed by her publisher to stay silent when her work was targeted, an experience that she says resulted in professional ostracization. “I never once responded or tried to defend my book,” she wrote in a Twitter DM. Her publisher “did feel I was being abused, but felt we couldn’t do anything about it.”

Parts of tech are already this bad. For the rest of you: it’s what you have to look forward to.

It doesn’t have to be this way. Nobody has any real policy disagreements. Everyone can just agree that men and women are equal, that they both have the same rights, that nobody should face harassment or discrimination. We can relax the Permanent State Of Emergency around too few women in tech, and admit that women have the right to go into whatever field they want, and that if they want to go off and be 80% of veterinarians and 74% of forensic scientists, those careers seem good too. We can appreciate the contributions of existing women in tech, make sure the door is open for any new ones who want to join, and start treating each other as human beings again. Your co-worker could just be your co-worker, not a potential Nazi to be assaulted or a potential Stalinist who’s going to rat on you. Your project manager could just be your project manager, not the person tasked with monitoring you for signs of thoughtcrime. Your female co-worker could just be your female co-worker, not a Badass Grrl Coder Who Overcomes Adversity. Your male co-worker could just be your male co-worker, not a Tool Of The Patriarchy Who Denies His Complicity In Oppression. I promise there are industries like this. Medicine is like this! Loads of things are like this! Lots of tech companies are even still like this! This could be you.

Adam Grant seems like a good person. He is superficially doing everything right. He’s not demanding people feel afraid, or saying that everyone who disagrees with him is a fascist. He’s just trying to argue the science.

But I think he’s very wrong about the science. I think Hyde’s article is a gimmick which buries very real differences under a heap of meaningless similarities. I think that it’s inappropriate to cite it to respond to claims of specific differences that it didn’t investigate. I think that claims of a gender-equitable-society-effect in a different domain are inappropriate given the clear opposite effect in the domain being talked about. I think it’s wrong to privilege likely-selection-biased evidence from a single college over all the evidence from the country as a whole. I think it’s wrong to suppose unique stereotypes in tech and engineering domains with no theory of how they got there, when there are non-stereotype-based theories that better explain the evidence. And I think it’s wrong to ignore all the studies about congenital adrenal hyperplasia.

And I think that, in being wrong about the science, he’s (probably unintentionally) giving aid and comfort to the people who have admitted that turning tech into a climate of fear and threats of violence is the end goal.

Grant is one of the few people doing the virtuous thing and trying to debate this without calling for other people’s deaths. I’m trying to do the virtuous thing and respond to him. But I worry that lots of people on Grant’s side aren’t as virtuous as he is, and I don’t know how to protect anybody from that except by begging people to please look at the science and try to get it right.

[EDIT: Prof. Grant responded to me via email; with his permission I’ve posted his response as a comment below (ie here). My thoughts on his response are in comments below that.]