[Previously in series: Antidepressant Pharmacogenomics: Much More Than You Wanted To Know; SSRIs: Much More Than You Wanted To Know, etc. This is all preliminary and you should not take it as a reason to change successful medical care. None of this necessarily applies to your particular case and you should talk to your doctor if you have questions about that.]

I. Confessions Of A Gatekeeper

I didn’t realize how much of a psychiatrist’s time was spent gatekeeping Adderall.

The human brain wasn’t built for accounting or software engineering. A few lucky people can do these things ten hours a day, every day, with a smile. The rest of us start fidgeting and checking our cell phone somewhere around the thirty minute mark. I work near the financial district of a big city, so every day a new Senior Regional Manipulator Of Tiny Numbers comes in and tells me that his brain must be broken because he can’t sit still and manipulate tiny numbers as much as he wants. How come this is so hard for him, when all of his colleagues can work so diligently?

(it’s because his colleagues are all on Adderall already – but telling him that will just make things worse)

He goes on to give me his story about how he’s at risk of getting fired from his Senior Regional Manipulator Of Tiny Numbers position, and at this rate he’s never going to get the promotion to Vice President Of Staring At Giant Spreadsheets, so do I think I can give him some Adderall to help him through?

Psychiatric guidelines are very clear on this point: only give Adderall to people who “genuinely” “have” “ADHD”.

But “ability to concentrate” is a normally distributed trait, like IQ. We draw a line at some point on the far left of the bell curve and tell the people on the far side that they’ve “got” “the disease” of “ADHD”. This isn’t just me saying this. It’s the neurostructural literature, the genetics literature, a bunch of other studies, and the Consensus Conference On ADHD. This doesn’t mean ADHD is “just laziness” or “isn’t biological” – of course it’s biological! Height is biological! But that doesn’t mean the world is divided into two natural categories of “healthy people” and “people who have Height Deficiency Syndrome”. Attention is the same way. Some people really do have poor concentration, they suffer a lot from it, and it’s not their fault. They just don’t form a discrete population.

Meanwhile, Adderall works for people whether they “have” “ADHD” or not. It may work better for people with ADHD – a lot of them report an almost “magical” effect – but it works at least a little for most people. There is a vast literature trying to disprove this. Its main strategy is to show Adderall doesn’t enhance cognition in healthy people. Fine. But mostly it doesn’t enhance cognition in people with ADHD either. People aren’t using Adderall to get smart, they’re using it to focus. From Prescription stimulants in individuals with and without attention deficit hyperactivity disorder:

It has never been established that the cognitive effects of stimulant drugs are central to their therapeutic utility. In fact, although ADHD medications are effective for the behavioral components of the disorder, little information exists concerning their effects on cognition…stimulant drugs do improve the ability (even without ADHD) to focus and pay attention.

I cannot tell you how much literature there is trying to convince you that Adderall will not help healthy people, nor how consistently college students disprove every word of it every finals season.

That makes “only give Adderall to people with ADHD” a moral judgment, not a medical one. Adderall doesn’t “cure” the “disease” of ADHD, at least not in the same way penicillin cures syphilis. Adderall will give everyone better concentration, and we’ve judged that it’s okay for people with terrible concentration to use it to overcome their handicap, but not okay for people with already-fine concentration to use it to become superhuman.

We could still have a principled definition of ADHD. It would be something like “People below the Nth percentile in ability to concentrate.” Instead, we use the DSM, which advises us to diagnose people with ADHD if they say they have at least five symptoms from a list. The list has things like “often has difficulty sustaining attention” and “often has difficulty organizing tasks”. How often? You know, often! And if you work as a Senior Regional Manipulator Of Tiny Numbers, you’re going to have attention problems a lot more “often” than the rest of us.
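The percentile rule is easy to state precisely. Here is a minimal sketch. It assumes, hypothetically, that concentration could be measured on a normally distributed scale with mean 100 and standard deviation 15, like IQ. No such validated scale is implied here, and the names are illustrative:

```python
from statistics import NormalDist

# Hypothetical scale: concentration scored like IQ (mean 100, SD 15).
# These parameters are illustrative assumptions, not a real instrument.
CONCENTRATION = NormalDist(mu=100, sigma=15)

def cutoff_for_percentile(n: float) -> float:
    """Score below which a person falls in the bottom n percent."""
    return CONCENTRATION.inv_cdf(n / 100)

def below_nth_percentile(score: float, n: float) -> bool:
    """Percentile-based rule: True if the score is under the Nth-percentile cutoff."""
    return score < cutoff_for_percentile(n)

for n in (2, 5, 10):
    print(f"N = {n}: cutoff {cutoff_for_percentile(n):.1f}")
```

On this made-up scale, N = 2, 5, and 10 put the cutoff at roughly 69, 75, and 81. The code makes explicit that picking N is a choice someone has to make, not something the bell curve supplies.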

So the DSM criteria are kind of meaningless, but that’s fine, because people can just lie about them anyway.

There are whole websites for this: How To Convince Your Shrink You Have ADHD, How To Get Your Doctor To Prescribe You Adderall In Five Easy Steps, et cetera. But I can’t imagine most people need them. Just talk about all the times in your life that you had attention and concentration problems, and if your doctor asks you a more specific question (“Do you often lose things?”) you give the obvious right answer (“Wow, it’s like you’ve known me my whole life!”).

Aren’t psychiatrists creepy wizards who can see through your deceptions? There are people like that. They’re called forensicists, they have special training in dealing with patients who might be lying to them, and they tend to get brought in for things like evaluating a murderer pleading the insanity defense. They have a toolbox of fascinating and frequently hilarious techniques to ascertain the truth, and they’re really good at their jobs.

But me? At best, I can have a vague suspicion you’re not telling the truth. And how many patients genuinely in need of treatment do I want to risk accidentally rejecting just so I can be sure of thwarting you? A lot of 100% honest psychiatric patients’ stories are pretty unbelievable, really, and I don’t want to have to treat every patient like a convicted murderer. Unless you give me some specific reason to doubt you, I start with the assumption that you’re telling the truth.

Think about how wasteful all of this is. We throw people in jail for using Adderall without a prescription. We expel them from colleges. We fight an expensive and bloody War on Drugs to prevent non-prescription-holders from getting Adderall. We create a system in which poor people need to stretch their limited resources to make it to a psychiatrist so they can be prescribed Adderall, in which people without health insurance can never get it at all, in which DEA agents occasionally bust down the doors of medical practices giving out Adderall illegally. All to preserve a sham in which psychiatrists ask their patients “Do you have ADD symptoms?” and the patients say “Oh, yeah, definitely,” and then the psychiatrists give them Adderall. It’s like adding twenty layers of super-reinforced concrete to a bunker with a wide-open front door.

(Also, if by some chance a psychiatrist doesn’t give a patient Adderall, that patient practically always goes to another psychiatrist, and that next psychiatrist does. Trust me, no matter how unsuitable a candidate you are, no matter how bad a liar you are, somewhere there is a psychiatrist who will give you Adderall. And by “somewhere”, I mean it will take you three tries, tops.)

Psychiatrists’ main response to this perverse and unwinnable system is to give people Adderall, but feel guilty about it. Somebody should do an anthropological study on this, but my preliminary observations:

Some people will lecture their patients on how Medication Can Never Address The Root Cause Of A Problem, and the patient will agree that Medication Can Never Address The Root Cause Of A Problem, and then the psychiatrist will give them Adderall and feel good about it.

Some people will discuss alternative options, like behavioral treatments, or non-stimulant medications, and the patient will come back in a month and say that the behavioral treatments didn’t work, and then the psychiatrist will give them Adderall and feel good about it.

Some people will give their patients a formal test where they have to answer questions like “I often have trouble concentrating – strongly disagree, disagree, neutral, agree, or strongly agree?” Then the patient will give whatever answers get them Adderall, the psychiatrist will add up all the answers and score the test and find that it means the patient needs Adderall, and then the psychiatrist will give the patient Adderall and feel good about it.

Some people will occasionally find some little issue with one patient’s story, deny them Adderall, and then ride out the moral high for weeks, feeling so virtuous that they can give the next few people Adderall and feel good about it.

Some people will demand multiple evaluation sessions, lots of laboratory tests, make a patient tell them their whole life story. And after learning that they had a bad relationship with their stepfather in 8th grade and still have sexual hangups over that time they ejaculated prematurely with Sally one time in freshman year, the psychiatrist will give the patient Adderall and feel good about it.

I have been guilty of all of these at one time or another. I still wrestle with these issues a lot. The latest step in my evolving position was reading Kelsey’s blog post about having ADHD and trying to get Adderall. Her doctor gave her a list of things she had to do before he would give her Adderall, and she – having ADHD – got distracted and never did any of them.

(by my calculations, that decreased Kelsey’s effectiveness by 20%, thus costing approximately 54 billion lives.)

So lately I’ve been trying to be smarter about all this. What about good old consequentialism? Most people will get some benefit from Adderall, but it’s a powerful drug with a lot of potential risks. Maybe I should figure out exactly how bad the risks are, and then I can figure out how bad people’s concentration problems would have to be for the risks to be outweighed by the benefits.

Trying to discover the risks of Adderall is a kind of ridiculous journey. It’s ridiculous because there are two equal and opposite agendas at work. The first agenda tries to scare college kids away from abusing Adderall as a study drug by emphasizing that it’s terrifying and will definitely kill you. The second agenda tries to encourage parents to get their kids treated for ADHD by insisting Adderall is completely safe and anyone saying otherwise is an irresponsible fearmonger. The difference between these two situations is supposed to be whether you have a doctor’s prescription. But what if you are the doctor, trying to decide who to prescribe it to? Then what? All they tell you in medical school is to give it to the people who actually have ADHD – which, I repeat, is kind of meaningless.

This post records my attempt to figure out something better. Apologies for the length.

II. Medical Risks

Most people on stimulants will have some minor side effects. Feeling jittery, feeling cold, feeling sick, leg cramps, arm cramps. Some will feel “like a robot” or otherwise psychologically uncomfortable. But these don’t discourage me from giving stimulants to people who need them. If someone needs the drugs, let them try them, see how many side effects they get, and decide for themselves whether it’s worth it.

I’m much more concerned about side effects that are permanent and dangerous. These people give us a list:

Sounds pretty bad. On the other hand, I’ve prescribed Adderall to lots of people and none of them have ever gotten any of these things, except mild hypertension. How common are these, really?

The best source for exact numbers is the guidelines by sinister-sounding European organization EUNETHYDIS. I’ll use US medical database UpToDate as a secondary source. Both lump together Adderall and Ritalin – something I’ll be doing too throughout most of this essay, except where it becomes important to distinguish them.

Seizures: EUNETHYDIS doesn’t believe this happens at normal doses. They write:

There are occasionally concerns that, as with other psychotropics, ADHD medications may lower the seizure threshold so as to cause seizures in previously seizure-free individuals. However, in prospective trials, retrospective cohort studies and post-marketing surveillance in ADHD patients without epilepsies, the incidence of seizures did not differ between ADHD pharmacotherapy and placebo [relative risk (RR)] for current versus non-use for methylphenidate, 0.8; RR for atomoxetine, 1.1

UpToDate is so unimpressed by this that they don’t even mention it. If you ask them about seizure risk for ADHD medications, they start telling you about bupropion. Overall I wouldn’t give these medications to people with a known seizure disorder without a neurologist’s approval, but they seem pretty okay otherwise.

Hypertension: Broad agreement from both sources that stimulants cause hypertension. EUNETHYDIS says 1-4 mm Hg systolic, UpToDate says 3-8 mm Hg.

The main problem with hypertension is that it increases risk for things like heart attacks. I calculated an average 40-year-old’s risk of heart attack and got 1% over 10 years. Adding on an average Adderall-related increase in blood pressure, I got 1.1%.

What about in high-risk adults? I calculated risk for a 60-year-old smoker with high cholesterol and high blood pressure. He has a 30.5% base risk of heart attack over 10 years. Then I added in a typical Adderall-related rise in blood pressure, and he ended up at 32.0%. That is 1.5 percentage points over 10 years, so Adderall only increased risk by something on the order of 1/1000 per year, even in this worst-case scenario. Also, I never meet 60-year-old smokers asking for Adderall. Overall this seems not too interesting.
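Converting a 10-year risk into a per-year figure takes a subtraction and a division. Here is a minimal sketch. It takes the 10-year risks above as given from whatever risk calculator produced them, and it assumes the extra risk is spread evenly across the decade. That is a linear approximation:

```python
def extra_risk_per_year(base_10yr_pct: float, on_drug_10yr_pct: float) -> float:
    """Convert two 10-year absolute risks (in percent) into an approximate
    extra annual risk (as a probability), assuming the excess is spread
    evenly over the 10 years."""
    return (on_drug_10yr_pct - base_10yr_pct) / 100 / 10

average_40yo = extra_risk_per_year(1.0, 1.1)
high_risk_60yo = extra_risk_per_year(30.5, 32.0)

print(f"average 40-year-old: about 1 in {1 / average_40yo:,.0f} per year")
print(f"high-risk 60-year-old: about 1 in {1 / high_risk_60yo:,.0f} per year")
```

The worst case comes out to about 1 in 667 per year, or roughly 1.5 in 1000. The average 40-year-old comes out to about 1 in 10,000 per year.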

I haven’t looked as closely into other hypertension-related problems like kidney disease, but these seem like things you’ll hopefully have plenty of warning about, giving you time to talk to your doctor about whether to stop stimulants.

Heart Attack and Stroke: My usual sources fail me here, but BioMed Central Cardiovascular Disorders comes to the rescue. They review three major studies on stroke and heart attack in stimulant patients.

Study #1 finds that stimulant users have 3x more risk of transient ischaemic attack (a small mini-stroke that does no lasting damage), but no increased risk of stroke.

Study #2 is the best and biggest study, and finds that stimulants actually reduce heart attack and stroke. They suspected “healthy-user bias”; that is, only healthy people would use such a supposedly-dangerous medication.

Study #3 is the most recent, and found no increased risk of heart attack or stroke.

UpToDate writes:

Patients receiving stimulant therapy visited the emergency department or clinician office more frequently than those who were not treated with medications because of cardiac symptoms (10.9 versus 9.1 events per 1000 patient-years, adjusted hazards ratio 1.2, 95% CI 1.04-1.38) [26]. The cardiac symptoms included syncope, tachycardia, or palpitations. However, the group that received stimulant therapy was more likely to receive other psychotropic medications (antidepressants and antipsychotic agents), be male, and be non-Hispanic. The incidence of fatal and serious cardiac abnormalities was low and not different between the two groups, and was similar to the rates seen in the general pediatric population.

The roughly 2/1000 extra ER visits per patient-year (10.9 versus 9.1 per 1000) sound bad, but “palpitations” means “your heart feels like it’s beating in a weird way”, and Adderall clearly causes this, so my guess is this is mostly just people feeling this and freaking out. I have had patients call me after feeling this and freaking out, and we dealt with it, and they were fine. If I hadn’t been available, maybe they would have gone to the ER and turned themselves into a statistic.
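The UpToDate figures can be turned into an absolute excess and a crude rate ratio. This is a small sketch. The crude ratio ignores the confounders (other psychotropics, sex, ethnicity) that the adjusted hazard ratio of 1.2 accounts for:

```python
def excess_rate(treated_per_1000: float, untreated_per_1000: float) -> tuple[float, float]:
    """Return (absolute excess per 1000 patient-years, crude rate ratio)."""
    return treated_per_1000 - untreated_per_1000, treated_per_1000 / untreated_per_1000

excess, crude_ratio = excess_rate(10.9, 9.1)
print(f"{excess:.1f} extra visits per 1000 patient-years; crude ratio {crude_ratio:.2f}")
```

The result is 1.8 extra visits per 1000 patient-years. The crude ratio is about 1.20, close to the adjusted figure.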

There might be some bias in these studies, but overall there doesn’t seem to be much evidence this is worth worrying about unless your risk of heart attack or stroke is already really high.

Psychosis: I saw this a lot when I worked in inpatient. Somebody would take five times the recommended dose, or take more Adderall every time they felt tired until they hadn’t slept for a week, and then they would start hearing voices or feeling like something was crawling on their skin. After a day or two off Adderall, and a night or two getting a normal amount of sleep, they’d be fine. Take enough stimulants and you will become psychotic – but it’s rare on prescribed doses, and it usually resolves pretty quickly.

What dose can cause psychosis? Amphetamine-Induced Psychosis says:

Early studies demonstrated that amphetamines could trigger acute psychosis in healthy subjects. In these studies, amphetamine was given in consecutively higher doses until psychosis was precipitated, often after 100–300 mg of amphetamine. The symptoms subsided within 6 days.

Compare this to the standard daily dose of Adderall of about 10 – 60 mg.

Can psychosis ever happen at normal doses? EUNETHYDIS is skeptical. They write:

Data from population-based birth cohorts indicate that self-reported psychotic symptoms are common and may occur in up to 10% of 11-year-old children. In contrast, the prevalence of psychotic symptoms in children treated with ADHD drugs from RCTs is reported as only 0.19%. While this very low observed event rate in trials is likely to reflect a lack of systematic assessment and reporting, there is no compelling evidence to suggest that the observed event rate of psychotic symptoms in children treated with ADHD drugs exceeds the expected (background) rate in the general population. In the US FDA analysis, ADHD drug overdoses did not contribute significantly to reports of psychosis adverse events.

So basically, “kids are always kind of weird, studies say kids aren’t weird on Adderall, clearly they’re not paying attention, but it doesn’t look like things got any worse.”

UpToDate links these people, who say:

We analyzed data from 49 randomized, controlled clinical trials in the pediatric development programs for these products. A total of 11 psychosis/mania adverse events occurred during 743 person-years of double-blind treatment with these drugs, and no comparable adverse events occurred in a total of 420 person-years of placebo exposure in the same trials. The rate per 100 person-years in the pooled active drug group was 1.48. The analysis of spontaneous postmarketing reports yielded >800 reports of adverse events related to psychosis or mania. In approximately 90% of the cases, there was no reported history of a similar psychiatric condition. Hallucinations involving visual and/or tactile sensations of insects, snakes, or worms were common in cases in children.

I think their use of “psychotic events per person-year” is misleading. Their study includes 5717 people, which means that for them to have 743 person-years each person must have been monitored for two months or so. But if you’re going to get psychotic on stimulants, usually it’s right after the stimulant is started. That means it might be better framed as “11/5717 patients had a psychotic event”, or even “one in every five hundred patients had a psychotic event”. Note that this matches the 0.19% number given by EUNETHYDIS. And the most common psychotic event was a feeling of snakes or insects on the skin which resolved after the drug was stopped, so we’re not talking “person is forever schizophrenic” here.
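The two framings come from the same three numbers. This sketch assumes, as the paragraph above does, that all 5717 people contributed to the 743 person-years on active drug. It also assumes each event was a different patient's first episode:

```python
PEOPLE = 5717        # patients in the pooled trials, per the text above
PERSON_YEARS = 743   # person-years of double-blind active treatment
EVENTS = 11          # psychosis/mania adverse events on active drug

rate_per_100_py = EVENTS / PERSON_YEARS * 100     # the paper's framing
avg_followup_months = PERSON_YEARS / PEOPLE * 12  # implied average exposure
per_patient_risk = EVENTS / PEOPLE                # "per patient" framing

print(f"{rate_per_100_py:.2f} events per 100 person-years")
print(f"average exposure about {avg_followup_months:.1f} months per person")
print(f"{per_patient_risk:.2%} of patients, about 1 in {PEOPLE / EVENTS:.0f}")
```

This reproduces the paper's 1.48 per 100 person-years. It also implies an average exposure of about 1.6 months and a per-patient figure of 0.19%, or about 1 in 520.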

Also, I feel like EUNETHYDIS makes a good point with the “kids are always weird” thing. Here’s one of the psychotic events mentioned in the paper:

A spontaneous report from the manufacturer of Strattera (atomoxetine) described a 7-year-old girl who received 18 mg daily of atomoxetine for the treatment of ADHD. Within hours of taking the first dose, the patient started talking nonstop and stated that she was happy. The next morning the child was still elated. Two hours after taking her second dose of atomoxetine, the patient started running very fast, stopped suddenly, and fell to the ground. The patient said she had “run into a wall” (there was no wall there). The reporting physician considered that the child was hallucinating. Atomoxetine was discontinued.

Have these people ever seen a child?

The methylphenidate prescribing information suggests a 0.1% risk of psychosis, which matches the other two studies pretty well.

Does stimulant psychosis always get better after the stimulant is discontinued? My strong impression is “yes”, but I am told that this study claims 5% to 15% of stimulant psychosis patients do not recover. I cannot find the full text to figure out exactly what they mean, and it looks like it was done on chronic meth addicts rather than prescription users.

So a few lines of evidence converge on the estimate that 0.1% – 0.2% of children who use prescription stimulants become psychotic. I don’t know the numbers for adults, but a few people who read drafts of this article mentioned they had personally seen someone get psychotic on Adderall, which anecdotally argues for a higher rate. I don’t know whether those people were using it correctly or taking anything else alongside it. Of people who get psychotic on Adderall, perhaps 5-15% stay psychotic after discontinuation (I predict this figure comes from meth-heads and is exaggerated).
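Multiplying the two ranges gives a rough figure for long-term psychosis. This is a back-of-the-envelope sketch. It assumes the onset and persistence estimates can be combined independently, even though the persistence figure comes from a different population (chronic meth users), which likely inflates it:

```python
# Ranges taken from the text above; multiplying them assumes the two
# estimates are independent and apply to the same population.
onset_range = (0.001, 0.002)       # 0.1%-0.2% become psychotic
persistence_range = (0.05, 0.15)   # 5%-15% of those do not recover

low = onset_range[0] * persistence_range[0]
high = onset_range[1] * persistence_range[1]

print(f"long-term psychosis: {low * 10_000:.1f} to {high * 10_000:.1f} per 10,000 users")
```

The result is roughly 0.5 to 3 per 10,000 users. That is consistent with treating long-term psychosis risk as on the order of 1/10,000.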

Aggressive Behavior: This is just going to be the same as psychosis. Adderall isn’t going to magically turn gentle old grandmothers into killing machines. If you’re already a kind of violent guy, and you take a lot of Adderall, maybe it’ll push you over the edge.

Sudden Death: This is usually cardiovascular – something goes very wrong with your heart and it stops beating without warning. But UpToDate writes:

Reports of unexpected deaths of children receiving stimulant therapy have led to concerns that these medications increase the risk of cardiovascular (CV) adverse events, including sudden unexpected deaths (SUD) [1,2]. However, large cohort studies have not shown an increased risk of serious CV adverse events in children treated with stimulant therapy compared with the general pediatric population…

Among adult patients who are either current or new users of stimulant medications, there appears to be no increased risk of serious CV events. This was illustrated in a large retrospective cohort study of adults (age range 25 to 64 years) based on data from four large health plans that was done in parallel with the study performed in children discussed above [3,16]. Multivariant analysis demonstrated a lower risk of serious CV events (defined as myocardial infarction, stroke, and sudden cardiac death) in individuals who were current users of stimulant therapy versus nonusers (relative risk [RR] 0.83, 95% CI 0.72-0.96). In new users of ADHD medications compared with controls, the risk of serious CV events was even lower (RR 0.77, 95% CI 0.63-0.94). However, there may be a modest amount of healthy-user bias that favored the current users of stimulant therapy. To adjust for this potential bias, a multivariant analysis that compared current users with individuals who had used stimulant therapy more than one year ago (defined as remote use) found no difference in the risk of serious CV events (RR 1.03, 95% CI 0.86-1.24). The crude incidence of serious CV events in the overall cohort was 1.34 per 1000 person-years. These results showing no increased risk of serious CV events are consistent with previously discussed studies in pediatric patients.

And EUNETHYDIS:

when the number of patient-years of prescribed medication was incorporated into the evaluation, the frequency of reported sudden death per year of ADHD therapy with methylphenidate, atomoxetine or amfetamines among children was 0.2–0.5/100,000 patient-years [99]. The analysis of 10-year adverse-event reporting in Denmark resulted in no sudden deaths in children taking ADHD medications [5]. While it is recognised that adverse events are frequently under-reported in general, it is likely that sudden deaths in young individuals on relatively new medications may be better reported. Death rates per year of therapy, calculated using the adverse events reporting system (AERS) reports and prescription data, are equivalent for two ADHD drugs (dexamfetamine and methylphenidate): 0.6/100,000/year [37]. (The accuracy of these estimates is limited however, for instance because in moving from number of prescriptions to patient-year figures assumptions must be made about the length of each prescription). It seems likely, using these best available data, and assuming a 50% under-reporting rate, that the sudden death risk of children on ADHD medications is similar to that of children in general.

Despite this, I am always very wary prescribing stimulants to anyone with any history of heart problems. I always make these people go see a cardiologist. The cardiologist always says yeah, sure, whatever, but it makes me feel a lot better.

In General: Probably the most informative passage I’ve seen on the medical risks of stimulants is this one from Misuse Of Study Drugs:

In 1990, there were about 271 emergency room reports involving methylphenidate, 1,727 in 1998, and 1,478 in 2001 [32]. The total number of emergency department visits resulting from use of all psychotherapeutic CNS stimulants was 4091 in 1998, 3644 in 1999, 3336 in 2000, 3146 in 2001 and 3275 in 2002 [33]. There are approximately 25 emergency room deaths per year among up to 3 million users of prescription stimulant drugs (including both those medically prescribed and not prescribed these drugs). Thus, the likelihood of dying from such drugs appears to be approximately 1 in 120,000.
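The 1 in 120,000 figure is a single division, read here as a per-year risk. The source says "up to" 3 million users, so this is the most favorable reading: a smaller user base would mean a higher per-user risk. The pool also mixes prescribed and non-prescribed use:

```python
deaths_per_year = 25
users = 3_000_000  # "up to 3 million", so this gives the lowest per-user risk

print(f"about 1 in {users / deaths_per_year:,.0f} users per year")
```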

But isn’t 25 deaths per year still bad?

Here’s another passage from the same source:

Intravenous use of prescription stimulants is particularly dangerous. In particular, intravenous (IV) abuse of methylphenidate may result in talcosis. Talcosis is a reaction to talc, a filler and lubricant in methylphenidate and other oral medication. This inflammation reaction occurs in the lungs and related consequences include lower lobe panacinar emphysema.

People aren’t dying because their psychiatrist gave them Adderall 10 mg bid. They’re dying because they ground it up, injected it into their bloodstream, and had their lungs turn into talc. The people dying of stimulant use are doing things so horrifying you could not possibly imagine them even if you took ten times your prescribed dose of Adderall and used all of it to focus on writing a report on the most horrifying ways you could possibly use Adderall. Did you know that 13% of Massachusetts college students have ground up Ritalin and snorted it up their nose? Did you know the first case report of Ritalin abuse involved a patient who was taking 125 Ritalin pills daily? All of these people are out there, and still only 25 people die of stimulant-related causes per year!

My impression is that, in particularly at-risk people, stimulants may add +1/1000 to the risk of heart attacks per year, and +1/10,000 risk of long-term psychosis. Everything else in this category can be rounded down to zero.

III. Addiction

What about addiction risk?

The data on this are really poor because it’s hard to define addiction. If a prescription stimulant user uses their stimulants every day, and feels really good on them, and feels really upset if they can’t get them…well, that’s basically the expected outcome.

Wilens et al find that over ten years, 10% of adolescents surveyed got high on their medication, and 22% sometimes used more than prescribed. Does that mean those 10% or 22% are “addicted”? Not really – some of them probably just had one tough day, took two Adderall that day, and took none the day after. As for getting high – well, a lot of people get high on alcohol who aren’t alcoholics, and a lot of people get stoned who nobody would call addicted to marijuana.

A lot of studies in this area ask a somewhat different question: whether children put on stimulants are more likely to become addicted to drugs in general as adults. Most of them find these children are less likely, which is hypothesized to be an effect of successfully treating their ADHD.

And there’s a book on narcolepsy which apparently claims that between less than 1% and 3% of people taking stimulants for that condition get addicted, but I can’t track down their methodology or really anything beyond one reference. And narcoleptics are a different population than ADHD patients and results might not generalize (though that number sounds kind of right).

I don’t think there are good data here, but my intuitions and personal experience are that “addiction” of the sort you get with heroin or tobacco is very rare, at least when responsible people without a personal or family history of addictive behavior take stimulants as prescribed. Most people agree the risk is lower for extended-release stimulants (eg Adderall XR), and very low for Vyvanse.

IV. Tolerance

Tolerance is when you keep needing more and more of a drug to get an effect. In the worst cases, your baseline changes so that you need the drug to feel normal. The concern is that long-term use of Adderall will make your attention naturally worse, so that medicated-you is only as good at concentrating as unmedicated-you was before, and unmedicated-you is even less attentive.

We know tolerance occurs over the short-term, and we encourage patients to take a few days off Adderall every week or two to let their bodies reset. More concerning is whether it happens over the space of years, where people’s bodies adjust in a more permanent way.

The best study of this phenomenon was the Multimodal Treatment of ADHD (MTA) study, which randomized children to be treated with stimulants or “behavioral therapy” (eg learning coping skills, etc). Behavioral therapy for ADHD is not very good and I interpret it as a nice way of saying placebo.

For the first year, the kids getting stimulants did much better on all metrics than behavioral-therapy-only. For the second year, they did a little better. By the third year, they were the same. In the eighth year, which was as long as anyone kept checking, they were still the same.

This is pretty concerning. It sounds like over three years people’s bodies built up some tolerance to stimulants, after which they provided no further benefit. The only saving grace is that there’s no evidence of stimulants ever making people worse than normal (even in people who later stopped the medications).

People have critiqued this study on the grounds that although they started off giving the experimental group stimulants vs. the control group behavioral therapy, any patient could switch treatments at any time and many of them did. By year three when the groups equalized, only 66% of the medication group was on medication, and a full 43% of the therapy-only group was. So maybe this just drowned out any original effect?
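The critics' "drowned out" argument can be made concrete with a crude model. Assume, purely for illustration, that every child on medication gets the same fixed benefit. Also assume that who switches is unrelated to outcome (the study authors report finding no evidence of such selection bias through 3 years). Under those assumptions, the between-group gap is the per-child effect times the difference in the share of each group actually medicated:

```python
def diluted_difference(full_effect: float, share_treated_a: float, share_treated_b: float) -> float:
    """Between-group difference if only medication status mattered and
    each medicated child got the same benefit `full_effect`."""
    return full_effect * (share_treated_a - share_treated_b)

# Year-three medication rates from the text: 66% vs 43%.
remaining = diluted_difference(1.0, 0.66, 0.43)
print(f"observed gap would be about {remaining:.0%} of the true per-child effect")
```

With 66% versus 43% medicated, the gap would shrink to about 23% of the per-child effect. That is much smaller, though not zero.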

The authors of the study are not convinced:

It is tempting to conclude that intensive medication management beyond 14-months could have resulted in continued differences between the randomly assigned treatment groups…In a previous multimodal treatment study where medication was carefully titrated and monitored for two years, treatment gains were maintained for the entire period. However, after 14 months the MTA became an uncontrolled naturalistic follow-up study and inferences about potential advantages that might have occurred with continued long-term study-provided treatment are speculation. Moreover, with one exception (math achievement), children still taking medication by 6 and 8 years fared no better than their non-medicated counterparts despite a 41% increase in the average total daily dose, failing to support continued medication treatment as salutary (at least, continued medication treatment as monitored by community practitioners)…Finally, a previous analysis of the MTA data through 3 years did not provide evidence that subject selection biases towards medication use in the follow-up period accounted for the observed lack of differential treatment effects.

Thus, although the MTA data provided strong support for the acute reduction of symptoms with intensive medication management, these long-term follow-up data fail to provide support for long-term advantage of medication treatment beyond two years for the majority of children—at least as medication is monitored in community settings.

As far as I can tell, pretty much everyone has ignored this, using the usual range of meaningless excuses like “Well, treatment must be individualized to the patient”.

This is very tempting, because for example I have a lot of patients who have been on stimulants for decades, are still very excited about them, and think they’re doing great. Every so often these patients go off their stimulants, are very unhappy, and insist on going back on them again. They say that pre-stimulant, they were scatterbrained and always losing things and missing appointments and failing to do work, and now, after ten years of stimulant treatment, they feel great.

We can imagine ways these people are wrong. Maybe the stimulants worked for the first three years, stopped working so gradually they didn’t notice, and now they only notice the difference between being on stimulants (baseline) and immediate post-stimulant withdrawal (very bad). But this would require a lot of people to be really wrong about their internal experience.

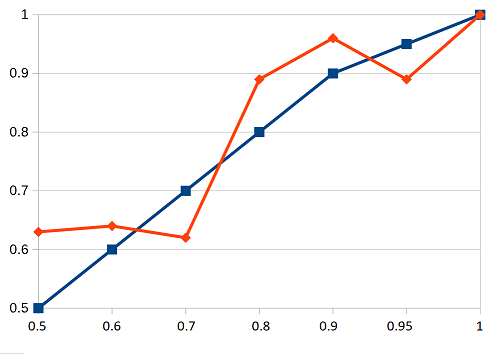

I asked a question on the Slate Star Codex survey about this. People who had been on Adderall for more than one month were asked to tell me whether they had no tolerance problems, some tolerance requiring dose escalation, or high tolerance that made the medications stop working entirely. The preliminary results:

Adderall for between one month and one year: (n = 124)

62 (50%) No tolerance, worked as well as ever

57 (46%) Some tolerance, or required dose escalation, but still worked well in general

5 (4%) High tolerance, stopped working

Adderall for one to five years: (n = 117)

33 (28%) No tolerance, worked as well as ever

78 (67%) Some tolerance, or required dose escalation, but still worked well in general

6 (5%) High tolerance, stopped working

Adderall for more than five years: (n = 59)

23 (39%) No tolerance, worked as well as ever

33 (56%) Some tolerance, or required dose escalation, but still worked well in general

3 (5%) High tolerance, stopped working

In all three groups, nearly everyone fell into either “no tolerance” or “some tolerance but still worked well”, with only about 5% saying the tolerance became a big problem. This matches my clinical experience. So either I’m right, or the problem where they get confused and forget their baseline is affecting my survey-takers.
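The survey percentages can be rechecked from the raw counts. This is a minimal sketch that simply recomputes each group's shares; the short category labels (`none`, `some`, `high`) are abbreviations introduced here for readability.

```python
# Recompute the survey percentages from the raw counts reported above.
# Counts are copied from the preliminary Slate Star Codex survey results;
# "none", "some" and "high" are hypothetical shorthand labels for the three
# tolerance categories.

survey = {
    "1 month to 1 year": {"none": 62, "some": 57, "high": 5},
    "1 to 5 years": {"none": 33, "some": 78, "high": 6},
    "more than 5 years": {"none": 23, "some": 33, "high": 3},
}


def percentages(counts: dict[str, int]) -> dict[str, int]:
    """Return each category's share of the group, rounded to whole percent."""
    total = sum(counts.values())
    return {label: round(100 * n / total) for label, n in counts.items()}


for duration, counts in survey.items():
    shares = percentages(counts)
    print(f"{duration} (n = {sum(counts.values())}): {shares}")
```

This reproduces the reported figures. "High tolerance" stays at 4 to 5% in every group, while the split between the other two categories moves from 50/46 to 28/67 to 39/56.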

There are occasional claims that magnesium or some other substance can help reverse Adderall tolerance. As far as I know these have never really been investigated.

So: there’s no good evidence that taking Adderall will actively make your ADHD worse in the long run. There is good evidence from the MTA trial that its advantage over non-medication treatment shrinks to zero over the space of a few years, apparently contradicted by the personal experiences of doctors and patients. Overall not sure what to do with this one.

V. Neurotoxicity

There’s some evidence that amphetamines can cause permanent cellular damage, but it’s not clear whether this happens in humans at typical therapeutic doses.

If you give rats very high doses of IV amphetamines, they accumulate so much dopamine in the cytoplasm of their neurons that it causes oxidative stress and destroys dopaminergic nerve terminals. This doesn’t happen to rats at doses matching human doses of Adderall. But it does happen at those doses to squirrel monkeys. At least this is the claim:

Adult baboons and squirrel monkeys were treated with a 3:1 mixture of D/L–amphetamine similar to the pharmaceutical Adderall for 4 weeks. Plasma concentrations of amphetamine (136±21 ng/ml) matched the levels reported in human ADHD patients after amphetamine treatment lasting 3 weeks (120–140 ng/ml) or 6 weeks in the highest dose (30 mg/day) condition (120 ng/ml). When the animals were killed 2 weeks after the 4-week amphetamine treatment period, both non-human primate species showed a 30–50% reduction in striatal dopamine, its major metabolite (dihydroxyphenylacetic acid (DOPAC)), its rate-limiting enzyme (tyrosine hydroxylase), its membrane transporter and its vesicular transporter. These consequences are similar, if not identical to the effects of neurotoxic doses in rodents.

I’m not really sure what they’re getting at here – surely they’re not saying just one month of Adderall permanently decreases striatal dopamine by 50%? But it sounds like something bad is happening, and since humans are more like monkeys than rats, maybe there’s cause for concern.

What would it look like if people got this kind of brain damage? One likely possibility is Parkinson’s disease, a condition caused by poor dopaminergic function in the brain. If you were going to tell a story about how Adderall could cause long-term neurotoxic damage, it would look like gradual decrease of brain dopaminergic function without obvious effects through most of the lifespan (since most people have dopaminergic function to spare). As the patient got older and started naturally losing brain function, Parkinson’s would appear. This happens to genetically and environmentally predisposed people anyway (which is why old people get Parkinson’s so often), but in this scenario amphetamine use would present an extra risk factor.

Several studies have shown that meth addicts do have higher rates of Parkinson’s disease. This one says people hospitalized for meth addiction are 60% more likely to get Parkinson’s than people hospitalized for other reasons. This one finds Parkinson’s rates three times higher in meth addicts compared to non-drug-users.

What about at therapeutic doses? This article claims there was a study that found people who used Benzedrine and Dexedrine (early forms of prescription amphetamine) in the 1960s have rates of Parkinson’s disease about 60% higher than non-users today, but I can’t find the study itself and I don’t know the methodology. Another study finds similar results. Since both ADHD and stimulant addiction are very hereditary, you could make an argument that people who already have problems with their dopamine system are more likely to get Parkinson’s later on. There’s a little bit of conflicting evidence for this. Also, ADHD patients might have three times the rate of dementia with Lewy bodies, a condition closely related to Parkinson’s. On the other hand, there doesn’t seem to be any genetic connection. Overall my guess is this is not what’s going on.

About 1-2% of people will get Parkinson’s if they live long enough. If Adderall increases that risk by 60%, that works out to an extra 0.6 to 1.2 percentage points, so presumably it could cause roughly a 1% absolute increase in risk.
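The step from a relative to an absolute risk is just multiplication, sketched below using the rough figures from the text (a 1-2% lifetime baseline and a 60% relative increase). Neither input is a precise estimate; the function name is hypothetical and the code only illustrates the arithmetic.

```python
# Convert a relative risk increase into an absolute one.
# Inputs are the rough figures from the text: a 1-2% lifetime baseline risk
# of Parkinson's disease and a 60% relative increase. These are illustrative,
# not precise epidemiological estimates.

def absolute_increase(baseline_risk: float, relative_increase: float) -> float:
    """Extra absolute risk (as a fraction): baseline * relative increase."""
    return baseline_risk * relative_increase


for baseline in (0.01, 0.02):
    extra = absolute_increase(baseline, 0.60)
    print(f"baseline {baseline:.0%} -> {baseline + extra:.1%} "
          f"(+{extra:.1%} absolute)")
```

A 1% baseline becomes 1.6% and a 2% baseline becomes 3.2%, so the extra risk lands between 0.6 and 1.2 percentage points.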

Some people claim various substances (magnesium, minocycline, etc.) will protect your brain from amphetamine neurotoxicity. None of these has been studied anywhere near deeply enough to make me feel comfortable relying on it.

The good news is that as far as anyone can tell, Ritalin doesn’t cause these problems, even if you give it to rats at super-high doses. It seems to be a difference in the mechanism of action. I’ve been talking about Adderall this whole post because it’s the most commonly-used stimulant and some studies have suggested it’s more effective for a few people, but this might be a strong argument in favor of starting with Ritalin and only switching to Adderall if Ritalin fails. [EDIT: Never mind, recent studies suggest Ritalin is just as likely to cause this problem.]

So overall there is plausible, but not incontrovertible, evidence linking Adderall to a somewhat increased risk of Parkinson’s disease in old age.

VI. Summary

My impression is that the risks of proper, medically supervised Adderall use are the following:

1. High risk of minor short-term side effects that might make you want to stop taking the medication, but no long-term issues from them.

2. Extremely low risk of serious medical side effects like stroke or heart attack, except maybe in a few very vulnerable populations.

3. Maybe one percent risk, but not literally zero risk, of addiction if patients are well-targeted by their doctors and use the medication responsibly.

4. Perhaps a one in five hundred risk, but not literally zero risk, of psychosis. Some anecdotal evidence suggests it is more common than this. Most of these cases will be mild and resolve quickly. Some sources report that a very small number of cases of stimulant-induced psychosis may be permanent, though I still find this hard to believe.

5. Some evidence for tolerance after several years, though most patients will continue to believe it is helping them. No sign of supertolerance where it actually makes the condition worse.

6. Plausibly a 60% increase in relative risk (roughly +1% absolute risk) of Parkinson’s disease with long-term use.

7. Unknown unknowns.

Of these, I find the psychosis, tolerance, and Parkinson’s to be the most concerning. But I am pretty upset about the overall terrible state of this research. In particular, nobody except the MTA investigators takes the possibility of tolerance seriously, and the MTA results really ought to have inspired a lot more soul-searching and hand-wringing than they actually did. The numbers on addiction and psychosis are inexcusably terrible given how easy they would be to collect. Getting good data on the Parkinson’s risk would be harder, but one so-far-unexplored possibility would be to compare past prescription Adderall history to past prescription Ritalin history in Parkinson’s patients to adjust for the potential ADHD confounder. I really think somebody should do this.

Despite all this, I compare these risks to the risks of eating one extra strip of bacon per day and decide that overall this is not enough for me to stop prescribing stimulants to patients who I think might benefit from them. These are about the standard level of side effects for a powerful medication and I think there’s a major role for these in ADHD treatment as long as patients are well-informed about the risks they’re taking.

PS: I don’t accept blog readers as patients, and I won’t prescribe you Adderall just because you liked this post.