[Epistemic status: Pretty good, but I make no claim this is original]

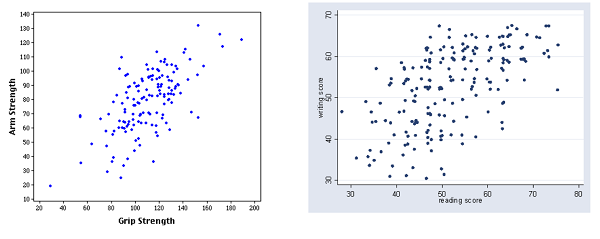

A neglected gem from Less Wrong: Why The Tails Come Apart, by commenter Thrasymachus. It explains why even when two variables are strongly correlated, the most extreme value of one will rarely be the most extreme value of the other. Take these graphs of grip strength vs. arm strength and reading score vs. writing score:

In a pinch, the second graph can also serve as a rough map of Afghanistan

Grip strength is strongly correlated with arm strength. But the person with the strongest arm doesn’t have the strongest grip. He’s up there, but a couple of people clearly beat him. Reading and writing scores are even less correlated, and some of the people with the best reading scores aren’t even close to being best at writing.

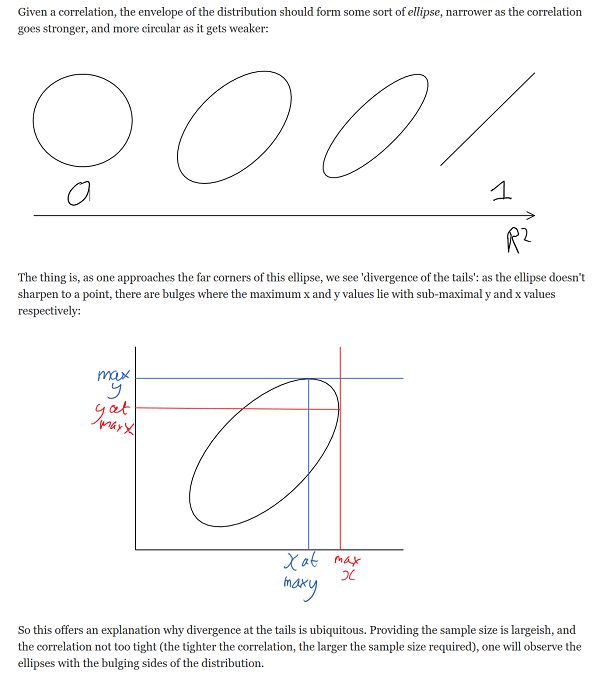

Thrasymachus gives an intuitive geometric explanation of why this should be; I can’t beat it, so I’ll just copy it outright:

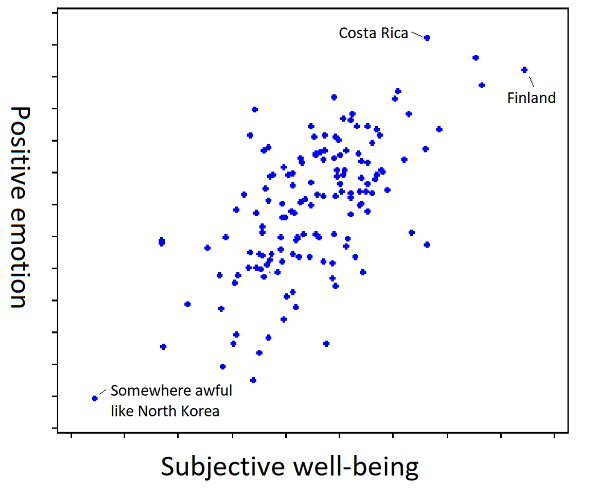
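The effect is easy to reproduce in a quick simulation. The sketch below is only an illustration under assumptions that are not in the original post: both traits are standard normal, they are related by a single correlation `rho`, and the population size and trial count are arbitrary. The function name `same_top_fraction` is made up for this example.

```python
import numpy as np

def same_top_fraction(rho, n_people=1000, n_trials=200, seed=0):
    """Fraction of simulated populations in which the person with the
    highest x (say, arm strength) also has the highest y (grip strength)."""
    rng = np.random.default_rng(seed)
    x = rng.standard_normal((n_trials, n_people))
    noise = rng.standard_normal((n_trials, n_people))
    # Standard construction of a variable whose correlation with x is rho.
    y = rho * x + np.sqrt(1.0 - rho**2) * noise
    # For each trial (row), check whether the same person tops both traits.
    return float(np.mean(x.argmax(axis=1) == y.argmax(axis=1)))

for rho in (0.0, 0.5, 0.9, 0.99, 1.0):
    print(f"rho={rho:.2f}  same person tops both: {same_top_fraction(rho):.2f}")
```

Only at `rho = 1.0` is the top person guaranteed to be the same on both traits; for anything less, the two champions are often different people, even though both will usually be near the top on either measure.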

I thought about this last week when I read this article on happiness research.

The summary: if you ask people to “value their lives today on a 0 to 10 scale, with the worst possible life as a 0 and the best possible life as a 10”, you will find that Scandinavian countries are the happiest in the world.

But if you ask people “how much positive emotion do you experience?”, you will find that Latin American countries are the happiest in the world.

If you check where people are the least depressed, you will find Australia starts looking very good.

And if you ask “how meaningful would you rate your life?” you find that African countries are the happiest in the world.

It’s tempting to completely dismiss “happiness” as a concept at all, but that’s not right either. Who’s happier: a millionaire with a loving family who lives in a beautiful mansion in the forest and spends all his time hiking and surfing and playing with his kids? Or a prisoner in a maximum security jail with chronic pain? If we can all agree on the millionaire – and who wouldn’t? – happiness has to at least sort of be a real concept.

The solution is to understand words as hidden inferences – they refer to a multidimensional correlation rather than to a single cohesive property. So for example, we have the word “strength”, which combines grip strength and arm strength (and many other things). These variables really are heavily correlated (see the graph above), so it’s almost always worthwhile to just refer to people as being strong or weak. I can say “Mike Tyson is stronger than an 80 year old woman”, and this is better than having to say “Mike Tyson has higher grip strength, arm strength, leg strength, torso strength, and ten other different kinds of strength than an 80 year old woman.” This is necessary to communicate anything at all and given how nicely all forms of strength correlate there’s no reason not to do it.

But the tails still come apart. If we ask whether Mike Tyson is stronger than some other very impressive strong person, the answer might very well be “He has better arm strength, but worse grip strength”.

Happiness must be the same way. It’s an amalgam of a bunch of correlated properties like your subjective well-being at any given moment, and the amount of positive emotions you feel, and how meaningful your life is, et cetera. And each of those correlated properties is also an amalgam, and so on to infinity.

And crucially, it’s not an amalgam in the sense of “add subjective well-being, amount of positive emotions, and meaningfulness and divide by three”. It’s an unprincipled conflation of these that just denies they’re different at all.

Think of the way children learn what happiness is. I don’t actually know how children learn things, but I imagine something like this. The child sees the millionaire with the loving family, and her dad says “That guy must be very happy!”. Then she sees the prisoner with chronic pain, and her mom says “That guy must be very sad”. Repeat enough times and the kid has learned “happiness”.

Has she learned that it’s made out of subjective well-being, or out of amount of positive emotion? I don’t know; the learning process doesn’t determine that. But then if you show her a Finn who has lots of subjective well-being but little positive emotion, and a Costa Rican who has lots of positive emotion but little subjective well-being, and you ask which is happier, for some reason she’ll have an opinion. Probably some random variation in initial conditions has caused her to have a model favoring one definition or the other, and it doesn’t matter until you go out to the tails. To tie it to the same kind of graph as in the original post:

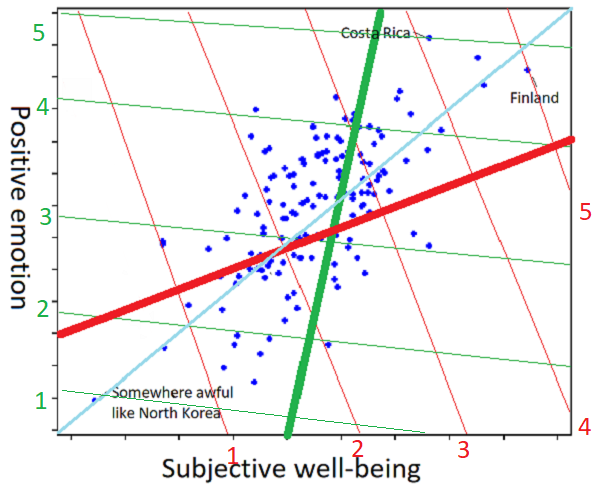

And to show how the individual differences work:

I am sorry about this graph, I really am. But imagine that one person, presented with the scatter plot and asked to understand the concept “happiness” from it, draws it as the thick red line (further towards the top right part of the line = more happiness), and a second person trying to do the same task generates the thick green line. Ask the first person whether Finland or Costa Rica is happier, and they’ll say Finland: on the red coordinate system, Finland is at 5, but Costa Rica is at 4. Ask the second person, and they’ll say Costa Rica: on the green coordinate system, Costa Rica is at 5, and Finland is at 4 and a half. Did I mention I’m sorry about the graph?

But isn’t the line of best fit (here more or less y = x = the cyan line) the objectively correct answer? Only in this metaphor where we’re imagining positive emotion and subjective well-being are both objectively quantifiable, and exactly equally important. In the real world, where we have no idea how to quantify any of this and we’re going off vague impressions, I would hate to be the person tasked with deciding whether the red or green line was more objectively correct.

In most real-world situations Mr. Red and Ms. Green will give the same answers to happiness-related questions. Is Costa Rica happier than North Korea? “Obviously,” they both say in unison. If the tails only come apart a little, their answers to 99.9% of happiness-related questions might be the same, so much so that they could never realize they had slightly different concepts of happiness at all.
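For anyone who prefers arithmetic to my graphs, here is a minimal sketch of the red-line/green-line idea. Everything in it is hypothetical: the country coordinates are invented (they are not survey data and do not match the numbers read off the graph), and the two axis vectors simply stand in for two people who weight the same two ingredients slightly differently.

```python
import numpy as np

# Made-up coordinates: (subjective well-being, positive emotion), 0 to 10.
countries = {
    "Finland": np.array([8.0, 5.0]),
    "Costa Rica": np.array([5.0, 8.0]),
    "North Korea": np.array([1.0, 1.0]),
}

# Each person's private "happiness axis" is a direction in this plane.
red_axis = np.array([0.8, 0.6])    # leans toward subjective well-being
green_axis = np.array([0.6, 0.8])  # leans toward positive emotion

def happiness(axis, country):
    """Project a country onto one person's happiness axis."""
    return float(axis @ countries[country])

def happier(axis, a, b):
    """Return whichever of two countries scores higher on the given axis."""
    return a if happiness(axis, a) > happiness(axis, b) else b

print(happier(red_axis, "Finland", "Costa Rica"))        # Mr. Red's answer
print(happier(green_axis, "Finland", "Costa Rica"))      # Ms. Green's answer
print(happier(red_axis, "Costa Rica", "North Korea"))    # easy case
print(happier(green_axis, "Costa Rica", "North Korea"))  # same easy case
```

With these invented numbers the two axes disagree about Finland versus Costa Rica and agree about Costa Rica versus North Korea, which is the whole point: the disagreement only shows up when the two ingredients pull in different directions.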

(is this just reinventing Quine? I’m not sure. If it is, then whatever, my contribution is the ridiculous graphs.)

Perhaps I am also reinventing the model of categorization discussed in How An Algorithm Feels From The Inside, Dissolving Questions About Disease, and The Categories Were Made For Man, Not Man For The Categories.

But I think there’s another interpretation. It’s not just that “quality of life”, “positive emotions”, and “meaningfulness” are three contributors which each give 33% of the activation to our central node of “happiness”. It’s that we got some training data – the prisoner is unhappy, the millionaire is happy – and used it to build a classifier that told us what happiness was. The training data was ambiguous enough that different people built different classifiers. Maybe one person built a classifier that was based entirely on quality-of-life, and a second person built a classifier based entirely around positive emotions. Then we loaded that with all the social valence of the word “happiness”, which we naively expected to transfer across paradigms.
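A toy version of the classifier story, as a sketch only. The training examples, the threshold of 5, and both classifier functions are invented for illustration; nothing here is a claim about how people actually learn concepts.

```python
# Hypothetical training data: (quality_of_life, positive_emotion, label).
# In the training set the two features always move together.
training = [
    (9, 9, "happy"),    # the millionaire
    (8, 9, "happy"),
    (1, 1, "unhappy"),  # the prisoner
    (2, 1, "unhappy"),
]

def classify_by_quality_of_life(qol, emotion):
    """One person's classifier: looks only at quality of life."""
    return "happy" if qol >= 5 else "unhappy"

def classify_by_positive_emotion(qol, emotion):
    """Another person's classifier: looks only at positive emotion."""
    return "happy" if emotion >= 5 else "unhappy"

def accuracy(classifier):
    """Share of training examples the classifier labels correctly."""
    hits = sum(classifier(q, e) == label for q, e, label in training)
    return hits / len(training)

# A tail case unlike anything in the training data:
# high quality of life, low positive emotion.
tail_case = (9, 3)

print(accuracy(classify_by_quality_of_life))     # fits the training data
print(accuracy(classify_by_positive_emotion))    # fits it equally well
print(classify_by_quality_of_life(*tail_case))   # one verdict
print(classify_by_positive_emotion(*tail_case))  # the opposite verdict
```

Both classifiers are perfect on the training data, so the data cannot tell them apart. They only reveal that they are different once the features stop moving together.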

This leads to (to steal words from Taleb) a Mediocristan resembling the training data where the category works fine, vs. an Extremistan where everything comes apart. And nowhere does this become more obvious than in what this blog post has secretly been about the whole time – morality.

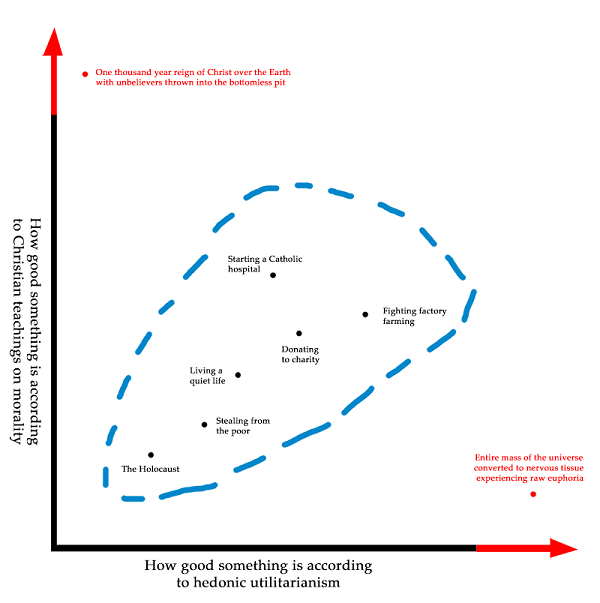

The morality of Mediocristan is mostly uncontroversial. It doesn’t matter what moral system you use, because all moral systems were trained on the same set of Mediocristani data and give mostly the same results in this area. Stealing from the poor is bad. Donating to charity is good. A lot of what we mean when we say a moral system sounds plausible is that it best fits our Mediocristani data that we all agree upon. This is a lot like what we mean when we say that “quality of life”, “positive emotions”, and “meaningfulness” are all decent definitions of happiness; they all fit the training data.

The further we go toward the tails, the more extreme the divergences become. Utilitarianism agrees that we should give to charity and shouldn’t steal from the poor, because Utility, but take it far enough to the tails and we should tile the universe with rats on heroin. Religious morality agrees that we should give to charity and shouldn’t steal from the poor, because God, but take it far enough to the tails and we should spend all our time in giant cubes made of semiprecious stones singing songs of praise. Deontology agrees that we should give to charity and shouldn’t steal from the poor, because Rules, but take it far enough to the tails and we all have to be libertarians.

I have to admit, I don’t know if the tails coming apart is even the right metaphor anymore. People with great grip strength still had pretty good arm strength. But I doubt these moral systems form an ellipse; converting the mass of the universe into nervous tissue experiencing euphoria isn’t just the second-best outcome from a religious perspective, it’s completely abominable. I don’t know how to describe this mathematically, but the terrain looks less like tails coming apart and more like the Bay Area transit system:

Mediocristan is like the route from Balboa Park to West Oakland, where it doesn’t matter what line you’re on because they’re all going to the same place. Then suddenly you enter Extremistan, where if you took the Red Line you’ll end up in Richmond, and if you took the Green Line you’ll end up in Warm Springs, on totally opposite sides of the map.

Our innate moral classifier has been trained on the Balboa Park – West Oakland route. Some of us think morality means “follow the Red Line”, and others think “follow the Green Line”, but it doesn’t matter, because we all agree on the same route.

When people talk about how we should arrange the world after the Singularity when we’re all omnipotent, suddenly we’re way past West Oakland, and everyone’s moral intuitions hopelessly diverge.

But it’s even worse than that, because even within myself, my moral intuitions are something like “Do the thing which follows the Red Line, and the Green Line, and the Yellow Line…you know, that thing!” And so when I’m faced with something that perfectly follows the Red Line, but goes the opposite directions as the Green Line, it seems repugnant even to me, as does the opposite tactic of following the Green Line. As long as creating and destroying people is hard, utilitarianism works fine, but make it easier, and suddenly your Standard Utilitarian Path diverges into Pronatal Total Utilitarianism vs. Antinatalist Utilitarianism and they both seem awful. If our degree of moral repugnance is the degree to which we’re violating our moral principles, and my moral principle is “Follow both the Red Line and the Green Line”, then after passing West Oakland I either have to end up in Richmond (and feel awful because of how distant I am from Green), or in Warm Springs (and feel awful because of how distant I am from Red).

This is why I feel like figuring out a morality that can survive transhuman scenarios is harder than just finding the Real Moral System That We Actually Use. There’s a potentially impossible conceptual problem here, of figuring out what to do with the fact that any moral rule followed to infinity will diverge from large parts of what we mean by morality.

This is only a problem for ethical subjectivists like myself, who think that we’re doing something that has to do with what our conception of morality is. If you’re an ethical naturalist, by all means, just do the thing that’s actually ethical.

When Lovecraft wrote that “we live on a placid island of ignorance in the midst of black seas of infinity, and it was not meant that we should voyage far”, I interpret him as talking about the region from Balboa Park to West Oakland on the map above. Go outside of it and your concepts break down and you don’t know what to do. He was right about the island, but exactly wrong about its causes – the most merciful thing in the world is how so far we have managed to stay in the area where the human mind can correlate its contents.

right up until the transit map, it looks like a job for principal component analysis.

I mean, not really? If you’re doing PCA, you’re assuming that your coordinates are on comparable scales. And Scott’s point is that they usually aren’t.

Say more words? I don’t think anything about PCA requires your inputs to have similar variance or magnitudes.

True, that was more ambiguous than it could have been. By “comparable scale”, I didn’t mean “comparable variance”, I meant “comparable dimensionality”. Part of Scott’s argument about happiness seems to be “when discussing the concept of ‘happiness’, some people will weight ‘subjective well-being’ more heavily, and others will weight ‘positive emotion’ more heavily”.

If you did PCA on that raw data and used the first component as a joint happiness score, you’re assuming that “subjective well-being” and “positive emotion” as measured have comparable dimensionality.

This might be redundant with SystematizedLoser, but PCA tells you what single measure captures as much variance across multiple measures as possible. That makes it a great data reduction technique, but it can’t solve the ultimate problem that we don’t know whether positive emotions or subjective well-being are more important to “happiness.” If you have an understanding of happiness where positive emotions are irrelevant and subjective well-being is everything (maybe you’re a Vulcan philosopher), the best single measure of happiness from a measure of subjective well-being and positive emotion is one that loads 100% on subjective well-being. If you think only positive emotions matter (you’re a hedonist), you would pick a measure that loads 100% on positive emotions.

PCA would be a solution if you thought there really was some fundamental concept called happiness, and you could get at it by asking about subjective well-being, or positive emotions, or a sense of meaning, and some of those descriptions of the thing called happiness resonate more in some cultures than others, so they aren’t perfectly correlated despite measuring the same thing. Then you’d basically want to grab the common variance of all those measures, call that happiness, and dump the rest of the variance as being cultural resonance and idiosyncrasy.

> Say more words?

Maybe it’s because I just woke up, but this is the most perfect way to ask for elaboration ever and I marvel I have never seen it done until now.

I don’t think that’s true; the eigenvalues that you get in the middle of PCA tell you the scale.

I think you either have to give up on scale as they say, or give up on variance (which makes the resulting weights depend on the population sampled, and that seems not good). And in all cases, you give up on the linearity of each scale, which is probably also kind of arbitrary in most cases.

i wasn’t so much worried about computing exact values, as using the terminology of principal components to think about what’s being discussed. if you’re super concerned about computing some things, there’s a lot written about scaling / scale-invariant / whatever variations on PCA.

so, very hand-wavily applying a bit of the terminology of PCA:

we can look at the strength chart, and quickly say “it makes sense to talk about ‘strength’ without always breaking it down into two (or more!) subcomponents, because the first principal component of this strength data captures 98% of the variation. we’ll call it strength. we’ll perhaps call the second component ‘popeye’, and then we’re all done.”

for the happiness discussion, we can boil it down to some statements like “there’s disagreement about the direction of the first principal component” and maybe “if the first principal component accounts for 99.9% of the variation, then their answers to 99.9% of happiness-related questions will be the same, so that they could never realize they had slightly different concepts of happiness at all”

and about the final transit-map/lovecraft bit, maybe we could say “human moral systems seem to hang out in a nice little linearized region of morality space, but maybe morality space is horrifyingly nonlinear outside of that region.”

(i’m not particularly endorsing any of these statements, and i’m certainly not suggesting that they’re better than what’s been written. just… noting that there may be some more concise terminology available for the subject.)

Z-Score normalize, then PCA! 😎
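To make the scale question in this thread concrete, here is a small sketch using only NumPy. It assumes synthetic "grip" and "arm" data with a correlation of about 0.95, and it deliberately records grip in units 100 times smaller than arm; the helper names `first_component` and `zscore` are made up for this example.

```python
import numpy as np

def first_component(data):
    """Return (unit direction, share of total variance) of the first
    principal component; rows are people, columns are measures."""
    centered = data - data.mean(axis=0)
    cov = np.cov(centered, rowvar=False)
    eigvals, eigvecs = np.linalg.eigh(cov)  # eigenvalues in ascending order
    return eigvecs[:, -1], float(eigvals[-1] / eigvals.sum())

def zscore(data):
    """Rescale every column to mean 0 and standard deviation 1."""
    return (data - data.mean(axis=0)) / data.std(axis=0)

rng = np.random.default_rng(0)
rho = 0.95
grip = rng.standard_normal(2000)
arm = rho * grip + np.sqrt(1 - rho**2) * rng.standard_normal(2000)

# Same people, but grip is recorded in units 100 times smaller than arm.
raw = np.column_stack([100.0 * grip, arm])

raw_dir, raw_share = first_component(raw)
z_dir, z_share = first_component(zscore(raw))

print("raw units: direction", np.round(np.abs(raw_dir), 3), "share", round(raw_share, 3))
print("z-scored : direction", np.round(np.abs(z_dir), 3), "share", round(z_share, 3))
```

On raw units the first component points almost entirely along whichever measure happens to have the bigger numbers. After z-scoring, two measures always get equal loadings, and the share of variance is (1 + r) / 2 for sample correlation r. So standardizing removes the dependence on units, but it does so by fiat: it treats one standard deviation of each measure as equally important, which is a choice about weights, not a discovery.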

“right up until X, it looks like a job for Y” is sort of a theme of this post isn’t it?

Doesn’t this fall afoul of what Scott was saying, that it’s not just happiness that’s a messy ball of things you’d want to do component analysis on? The subparts of happiness are themselves a messy ball? So just like it’s hard to compare what different people mean by such and such an amount of happiness, making people’s happiness at least partially incommensurable, it’s hard to compare what one person means by meaningfulness or positive emotion as well, and so on. That makes whatever you get out of PCA really, really difficult to interpret.

I don’t understand this. The point about divergent tails is they are in the same ballpark (the guy with the strongest grip also scores very highly on arm strength and vice versa) whereas the desired end results for moral systems can be, and in fact are, diametrically opposite to each other. So how is the one a metaphor for the other?

It isn’t. Scott says as much:

Divergent tails are behavior of a system in the limit as the correlation coefficient approaches (but does not equal) one.

The process of tail-divergence produces noticeable, but subtle, results whereby you get different answers to the same question (‘who is the strongest man alive’) depending on which of two strongly correlated measures you use. Whether you use grip strength or arm strength as your metric, whoever you name as strongest man will still be really strong in the eyes of other people who measure differently.

The mess of diametrically opposite ‘optimal’ results for different morality systems is an example of the same system in action, as the correlation coefficient approaches (but does not equal) zero.

The same process generates far more conspicuous results when used on uncorrelated moral precepts that just happen to produce compatible results over a small region of linear morality-space. For instance, ‘the moral thing to do is whatever God said to do in the Bible’ versus ‘the moral thing to do is to maximize the amount of pleasure being experienced, integrated from the present into the infinite future.’ These rules produce comparable results over a simple space of simple morality questions, but then diverge very rapidly outside of it.

It’s as if we had two people who answered “who is the strongest man” by picking Mike Tyson (“strongest physical muscles and most personal fighting skill”) and Fred Rogers (“strongest conscience and empathy”). Totally divergent results; the two men could not be less alike.

So the connection is in the similar underlying process that produces results in two cases that are different but analogous, in that the difference in results between the cases can be easily explained and predicted by what’s going on inside the process.
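One way to put numbers on the "correlation near one versus near zero" contrast, as a sketch under an assumption the thread does not state: suppose the two measures X and Y are standardized and jointly normal with correlation ρ. Then the standard conditional-distribution result is:

```latex
Y \mid X = x \;\sim\; \mathcal{N}\!\left(\rho x,\; 1 - \rho^{2}\right),
\qquad
\mathbb{E}[\,Y \mid X = x\,] = \rho x .
```

So someone 4 standard deviations out on X is expected to sit about 3.8 standard deviations out on Y when ρ = 0.95, and close to average on Y when ρ is near 0. Note that under this simple model a low correlation gives "unrelated," not "opposite"; actively opposed extremes would need something beyond it, such as a relationship that changes sign outside the familiar region.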

Are they though? The bulk of many (most?) moral systems have a sort of meaty common core that looks pretty similar (treat other people with respect, tell the truth, work hard, etc.). It’s only when you push these to the ends that they start to diverge.

Stretching the ellipse geometry analogy, the point would be:

No matter how correlated (thin diagonal ellipse), if you zoom in enough on the tip, it looks roundish. So, when life happens in the middle, both axes represent the same thing. But, conditioned on living on the extremes, they have nothing to do with each other. It may be that they are the same, it may be that they are opposite, but the sameness is lost.

I tend to say that words are fuzzy collections of related concepts, rather than hidden inferences, but I think we are gesturing at the same underlying idea in either case. Certainly, I think that we learn what words mean via repeated associations rather than via a clear-cut articulation or expression of what they mean. However, I don’t think framing people’s divergent views on morality and happiness as a matter of what parameters people fixate on in their discovery of the words “happiness” or “good” is right.

This is interesting, possibly useful, and as of the transit map, quite possibly transitions from essay on moral intuitions into performance art.

I’ll just be over here happily pondering all the expansive moral directions one can explore using the Richmond Amtrak connection.

-Scott Alexander

A decent argument for conservatism when exploring the space of morality: “Don’t get carried away, kid.”

Yeah, that’s my takeaway too. Stay in the ellipse. If your morality is making you think that you might want to destroy the entire universe to prevent suffering, or put the world’s population on drugs, don’t do that. Stick with things that are broadly agreed to be good, by at least a few moral systems (even if a couple disagree; unanimity isn’t always possible). This makes sense because you can’t attain perfect certainty that your moral system is the right one or will work when you get to extreme cases.

This is a very good heuristic for almost anyone who lacks the power to actually implement such choices. That is to say, everyone now alive.

It’s problematic IF you like to think about AI singularities, existential risk, theology, fantasy novels, and other scenarios devised by the brain. Scenarios where a being actually has the power to reshape the known world according to their desires, and where nothing, not even random friction and bad luck, can stop them.

Because then you actually have to answer the question.

Suppose you’re a demiurge responsible for designing an afterlife. Now, Even Bigger God help you, you actually have to decide:

“So, suppose we give everyone who is, on balance, nice, a harp, and make them sing hymns inside big cubes made out of semi-precious stones. Meanwhile they’ll be watching the people who were, on balance, naughty writhe in a lake of eternal fire. Is this a better or worse thing to do for everyone than putting all the brave people in an eternal feast hall, while all the cowardly people rot in eternal darkness? Or maybe I should just remake reality as perfect consciousnesses sitting on lotus thrones where everyone shares perfect knowledge and equanimity about all things? Or maybe everyone should just sort of… cease when they die, you know, to raise the stakes?”

…

At this point, which afterlife you design will depend heavily on which things you value highest, and you may be assured that there will be a long line in the Celestial Complaints Department from all the now-dead souls that think you got it wrong. Well, except in the world where everyone just sort of ceases. There you have a solid 0 1-star ratings out of 0 ratings total!

Forget the demiurge–imagine you’re a liberal politician who has to decide whether to cut a deal with your conservative counterpart to fund education by agreeing not to fund abortion. (Or imagine you’re a conservative politician forced to fund abortion in order to make a deal that brings jobs to your state…) Of course, in this case you’re dealing with people with *different moral priors*, even outside polarized systems like ours where ‘owning the libs’/‘sticking it to the bigots’ is a major goal.

I think the way to be a conservative demiurge/friendly AI would be to ignore the tail and try to make things like the center, only more so.

The systems have very different tails, but in the center they seem to agree about some things. Life is generally good, happiness is generally good, knowledge is generally good, etc. Just amplify those things without maximizing any one thing.

Don’t kill everyone and convert the universe to orgasmium, just make everyone happier through normal means.

Don’t turn everyone into disembodied contemplative consciousnesses, just make everyone smarter and more knowledgeable. Superhumanly so if you have the resources.

Don’t throw naughty people into a lake of fire, just give them a punishment proportionate to their crimes.

Give everyone a big feast with bigger portions for the brave, maybe throw some people in darkness for a temporary period of time proportionate to their cowardice.

Don’t go full Repugnant conclusion, just create some new people but also devote lots of resources to making existing people happier.

Enhance everyone’s lifespan as much as you can, but don’t do anything crazy like make a trillion brain emulators of one optimal person and kill everyone else.

That’s the way to be a conservative demiurge. And I can’t help but notice that the scenarios I’m describing, while they may not be optimal according to any axis’ tail, sound a lot more like our intuitive sense of what a utopia would be than any of the tail scenarios.

True.

I guess my point was you can easily run into difficult moral decisions once you have any kind of power. You have $10,000 to spend on social programs as a small-town mayor…do you fight homelessness or opioid addiction?

I don’t think this issue requires an AI singularity to be relevant. There are plenty of cases here in the real world where the tails diverge, and where we have to make meaningful choices based on that. We might not be able to take the red line all the way to Richmond or the green line all the way to Fremont, but we can still choose between going up to Downtown Berkeley or down to Hayward, and there’s still a good deal of distance between them.

For instance, one common argument that I hear from traditionalists is that most people were happier living under traditional family structures, even if they had less freedom to choose how to live their lives. I don’t buy this argument: for one thing, I’m skeptical that people were really that much happier (it’s not like we have a lot of statistical data on happiness levels in pre-industrial Western countries); for another, we’re currently in a transitional period where the old cultural norms haven’t entirely faded away yet, and a lot of the pressures faced by modern Westerners could result from being caught between two worlds with heavy but mutually exclusive demands. But let’s say it was completely true, and as long as the modern world continues to take precedence over traditional values, the majority of men will continue to be frustrated with how useless they feel, and the majority of women will continue to be stressed to the point of neuroses trying to balance their desire for a family with the demands of their career. I would still choose that over a traditional model where most of those people were more content but had significantly less control over the course of their lives, because my personal conception of happiness/value/what-is-good-in-life weighs agency and personal freedom more heavily than contentment or stress reduction. 
Similarly, I’m skeptical of all those studies showing that people in low-tech societies are “happier” (at least in the sense of finding their lives more meaningful) than those in high-tech societies, but even if I knew for a certainty that they were all correct, I still think high-tech societies are better for humanity, because I value things like “enjoying the comforts of insulation and indoor plumbing and electricity” and “having access to modern communications and transportation technology and all the variety they provide to life” and “not being at high risk of falling victim to starvation, exposure, and serious diseases” above meaningfulness.

That said, I do wonder if actually figuring out what humanity’s core values are would help us to navigate these confusing landscapes. Obviously the reality isn’t going to be anything nearly as simple as “red line, green line, blue line, yellow line,” but I do suspect that there are some innate values built into our brains, even if they’re fuzzy around the edges. I’d imagine the vast majority of humans (basically everyone aside from a very small percentage of extremely neuro-atypical outliers) share these values, though biological and personal and social and cultural factors lead people to prioritize some of these values and downplay others. Haidt’s theory of Moral Foundations seems like a promising start, though I also feel like it’s just touching the tip of the iceberg.

— Scott Alexander

It does work better out of context, doesn’t it?

As an aside, I kind of roll my eyes at people who try to find profundity in Lovecraft, from people who think he was some sort of malignant racist prophet spreading fascism to people who think he was some sort of great enlightened prophet of the white race spreading the gospel. The dude created a new genre of scary stories and had some weird far-right views that he was apparently attached to weakly enough to marry a Jewish girl. I don’t think he had any special insights into the real world, and I doubt he would have claimed he did.

He did write one of the very first essays on genre fiction, I believe. The whole thing is quite good, and the introduction is still well-regarded. Not exceptionally profound, but still insightful.

http://www.hplovecraft.com/writings/texts/essays/shil.aspx

Oh, I’ll give the Old Gent major, major credit for his work on *horror fiction*–there, he knew whereof he spoke, much like Tolkien with ‘On Fairy-Stories’ and the uses of fantasy. (Is anyone surprised J.R.R. Tolkien had strong views on the value of fantasy?) I just find the people who see him as a political theorist kind of silly. Was Lovecraft racist? Sure, who cares? Cthulhu’s not racist–all creeds and colors are equally irrelevant to the Sleeper Beneath the Sea.

My model of morality is as follows:

The first analogy (oval) is the distribution from which we, for each value, draw a value for

1. How important something is

2. A sense of whether we feel it is instrumental (we have a prior it’s good, unsure why), or if it’s a terminal good.

For example, let’s go with the value of ‘protecting your tribe’.

Even if you think sticking with your tribe is super important, you might do this because you feel culture has objective value (typical of the learned, lived experience of folks in non-atomized societies), or because you instrumentally feel it’s a Chesterton’s fence of some kind.

The more strongly you feel about a value, terminal or instrumental, the more resources you’re willing to put into arguing for it when everyone’s values are more-or-less aligned.

The more you feel it’s a terminal goal, the more you’re comfortable taking it to the extreme logical conclusion.

The more you feel it’s an instrumental goal, the more you’re willing to compromise and feel less strongly about it in light of new information.

The more we think about morality and come up with ‘new ways of thinking about things’, the more we distill out the strong, terminal goals that we have.

1) Isn’t happiness research just a total mess at the moment? Should we maybe wait at least a little while until they figure out what they’re doing, before we draw broad philosophical conclusions?

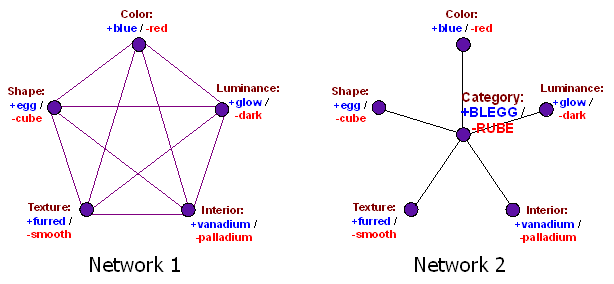

2) On TCWMFMNMFTC, since it’s come up again, and since it seems to inform a lot of your thinking. BLEGG/RUBE’ing a cluster of correlated properties is a cognitive error which we do a lot. Is Pluto a planet? Well, it has properties X,Y,Z and lacks P,Q,R; the line can be drawn any number of ways; none is strictly “better” than any other, so stop caring. But that solution is unavailable when it matters whether something is BLEGG or RUBE. For example, “personhood” is a cluster of usually correlated properties (being conscious, intelligent, made of meat, animate, etc) which nonetheless have edge cases (comatose humans, severely handicapped humans, sufficiently intelligent AI, early fetuses). But because personhood matters, we can’t just decompose it and say “a fetus is animate human meat, but not intelligent or conscious; draw the ‘person’ line however you want.” We need the correct answer to the moral question, and the moral question hinges on whether we have a person or nonperson (BLEGG or RUBE).

To address TCWMFMNMFTC directly: let’s say you explained it in full to a trans man. Afterwards, he asks “so, are you saying I’m a man?” You say… well, sure, because we can draw the categories that way, and that seems best for his mental health. He comes back with, “I get that you’re saying I should be considered a man, but do you think I’m really a man?” You say… um, well, the point is that gender is a social construct, so there’s no “really” about it, so I guess no? And the trans man says “then you’re wrong, I know I’m really a man, I have since I was a child, and that matters; sincere thanks for your support on the causes I care about, but your metaphysics are wrong.”

Bringing it back to happiness and this post: you say the variables that we conflate into happiness converge for a while, then they come apart at the tails, and there’s no fact of the matter of which variable is best to judge by. Because people usually think happiness matters, they might come back at you and say “sure, but one of the tails (or some particular combination thereof) must be actual happiness, which is it?” And you say… well, happiness is a social construct, so whatever you want! And they say “but one understanding has to be true, and we have to maximize that one! It’s a profound moral duty!” (Even if they’re not utilitarians, this probably holds; most people think happiness is good.)

Is that what you’re trying to guard against when you say you’re an “ethical subjectivist”? Those “naturalists” from the previous paragraph should just “do the thing that’s actually ethical” to them, based on whatever subjective understanding of happiness they have? And if so… not being an ethical naturalist yourself, what do you yourself do when you run into corner cases, and how can doing it not reflect an underlying naturalist view of some kind or another? Or are you both at the same time?

+1

It is easy to assume that “the moral question hinges on whether we have a person or nonperson (BLEGG or RUBE)” but not so obvious that that’s actually right.

Indeed, often there are multiple moral questions and there’s no particular reason why they should hinge on the exact same things. Suppose X is kinda-person-ish. We might want to decide: is it OK to kill X? should I take any notice of X’s welfare and preferences? should I give approximately the same weight to those as I do to a typical person’s? if I am trying to construct some sort of consensus morality, should I pay attention to what X approves of and disapproves of? — and we might reasonably find that different aspects of personhood matter to different extents for different questions.

It seems like your hypothetical involving a trans man is incomplete. Hypothetical-Scott explains his position to Hypothetical-Trans-Guy, HTG says “am I a man?”, HS says “sure”, HTG says “no, but am I really a man?”, HS says “well, that’s kinda an ill-posed question”, and HTG says “bah, I just know I’m really a man, so you’re wrong” — so far so good, I guess, but what are you inferring from this hypothetical exchange? I mean, do you really want to endorse a principle that when someone feels that they just know something, that guarantees that their metaphysics around the thing is right? Because I don’t think that ends well: e.g., I bet it would be easy to find advocates of multiple mutually-incompatible religions who just know that their god is real and their religion’s claims are true. On the other hand, if that’s not where you’re going, then what is the point of that conversation? What does it tell us, other than that sometimes one hypothetical person may not like what another hypothetical person says?

(I largely agree with Scott-as-I-understand-him on this, but to the question “am I really a man?” I would be inclined to say “yes, sure”, and I would only start giving answers of the sort you ascribe to Hypothetical-Scott once the other party makes it explicit that he’s not just asking “am I a man?” but asking about the underlying metaphysics. Because I’m happy to say that a person’s deepest-seated convictions are a reliable guide to — indeed, constitutive of — their gender identity, but not to the truth of hairy metaphysical questions.)

As for the final question, of what to do when faced with this sort of thing, I can’t speak for Scott but (1) by definition these unusual tails-coming-apart situations rarely arise in real life, but (2) if they do then I react by feeling extremely uncertain not only about what one should actually do but about what I think should be done. In some cases, I think there actually is no fact of the matter as to what I think is right, though of course I could pick an answer and then there would be, and of course in a given case I have to pick something to do. (The latter doesn’t imply that I really approve of whichever action I end up taking; alas, sometimes I do things that I myself disapprove of.)

But we can. We should!

It doesn’t, though. It really doesn’t.

Like, the whole point of all the stuff that Scott is citing is that these things you’re saying are mistakes. They are bad reasoning. We know that it’s nonsensical to think in this way.

This hypothetical trans man would, of course, be wrong. The correct response is to say: “I acknowledge that you have this deeply felt sense of ‘really being a man’. However, a deeply felt sense of the world ‘really’ being some way, has absolutely no need to correspond to that alleged state of affairs even being a coherent description of the world, much less an actual one. Our brains fool us. Please re-read ‘A Human’s Guide to Words’ until you understand this. I will, in any case, continue to support your efforts toward acceptance and fair treatment, but of course I can’t agree with your metaphysical claims, which are very confused.”

Let’s say that, in 1820s South Carolina, someone chooses to define “person” to include nobody with African descent—that is, to not include black humans. Are they wrong? Their usage coincides with common usage. It’s certainly not a useless definition, or an irrational one—it helps them grow quite a bit of cotton.

If you want to say that the word “person” is a word just like any other, and that Eliezer’s guide applies to it, has the slaveowner slipped up in their picture of the world? Their definition is useful to them, and socially agreed upon. If they are wrong, why?

To me, they’ve slipped up (big-time) because their definition of “person” as excluding black humans is incorrect. Clearly, you disagree. So come up with a different answer.

And before you just shout “read the sequences!” again, note that Eliezer might even agree with me when he considers questions like “what is a person?” (See my comment below.) I’ve read the sequences, and your condescending tone in assuming that I haven’t is less than appreciated. So drop the appeals to authority, and don’t merely tell me I’m wrong. Tell me why you disagree, not just that you disagree. Unless you start doing some actual thinking and arguing of your own, you’re dead weight to this comment thread.

Whether the slaveowner has slipped up in their picture of the world, and whether they are somehow “wrong” to define “person” as “human who is not black”, are two different questions.

Has the slaveowner slipped up in their picture of the world? I don’t know, what is it you’re claiming they believe? What inaccurate factual beliefs do you say they have? Tell me, and I’ll tell you whether those beliefs are wrong or not.

Is the slaveowner “wrong” to define “person” as “human who is not black”? Depending on his factual beliefs and his values, this definition might not be useful (due to not matching his factual beliefs, or not reflecting his values). In this case, though, I doubt that that’s the case.

A different answer to what? Anyway, definitions can’t be “correct” or “incorrect” in a vacuum. Please read the Sequences again.

Definitely not. (See my response.)

I’ll bite that bullet. This is morality working as intended. You might as well complain that legs are fundamentally flawed because they allow both predators to chase prey and prey to run from predators.

This is what the rest of Eliezer’s “Guide To Words” is about – especially “Replace The Symbol With The Substance”. If you haven’t read it, you’ll probably find it addresses your concerns. If you have, can you clarify exactly why you don’t think his solution is good enough?

Link:

Replace the Symbol with the Substance

Yup! Read the whole thing already. So thoroughly, in fact, that I know he agrees with me—or at least, that his arguments there aren’t entirely defeating to my point here. “Replace the Symbol with the Substance” argues we should “play taboo” with our concepts to get down to the reality of a thing, not getting caught in our preconception of “a bat” or “a ball.” But…

In a comment on his post Disputing Definitions, he says—considering, specifically, the contention that “Abortion is murder because it’s evil to kill a poor defenseless baby”—

So, from Eliezer himself, direct appearance in the utility function (more generally to include non-utilitarians, “being a morally important category”) is a known case where definitions might actually matter. He admits you can’t “play taboo” in those cases.

Do you think I’m misinterpreting Eliezer? Or do you think he was wrong to say that?

You are definitely misinterpreting Eliezer. Reading what you quoted as saying that “definitions matter” is missing the point entirely.

Eliezer is not saying that it makes sense to have a category boundary appear in your utility function, and he is definitely not saying that just because an alleged category appears in your utility function, that this therefore guarantees that this alleged category corresponds to some actual cluster in thingspace.

All he’s saying is that his proposed argument-dissolving technique of creating new and distinct words to refer to different things, will not in fact solve certain kinds of actual arguments that people have. That is all.

Sure, that’s a reasonable interpretation. But even that seriously weakens his argument! Disputes over categories in your utility functions are still candidates to be meaningful disputes, despite all the arguments he offers in the sequences. (Somewhat surprisingly, as far as I can tell, he never deals with them directly.)

Let’s trace the argument back a bit: there were arguments over whether there are any unheard sounds, and Eliezer’s argument-dissolving technique showed that the argument was silly, since it revolved around the definition of “sound”, which doesn’t actually matter.

Now consider the abortion debate. In large part, it turns on whether there are unborn persons, and if so, which unborn entities are persons. Is this argument also silly? It clearly revolves around the definition of “person”; but given the choice, I’d say that “it matters what is or is not a person” before I’d say “the abortion debate is really over nothing at all.”

If you want to maintain that there are no wrong definitions, you need to say that either the abortion debate is silly, or that it is not really disputing a definition. Which route do you want to take? Neither seems very tenable to me.

Definitely not. The correct set of categories that should “appear in your utility functions” (broadly speaking) is “none of them”. This is a big part of the point that Eliezer was trying to make.

Yes, extremely. (Fortunately—or unfortunately, depending on one’s perspective—the abortion debate does not actually turn on this.)

Why not both?

The abortion debate, as it is usually (and totally inaccurately) represented in spaces like this (and as, for example, you have represented it right here in this thread), is extremely silly.

The abortion debate, as it actually is, is not really disputing a definition.

Forgive me if I seem rude, but are you proposing that utility functions should be limited to a ranking of enumerated world-states?

Except when you are deciding whether the Wikipedia article on Tinnitus or Ultrasound belongs in Category:Sounds.

@Hoopyfreud:

Are you talking to me? (It seems like you are, but since this blog software doesn’t permit deep comment nesting, it helps to preface your comments with the name of the person you’re talking to, like I just did.)

Anyway, assuming the question was meant for me…

… well, I don’t actually understand what you’re asking.

Maybe it would help to clear up some terminological confusion?

For example, something we’ve glossed over in this comment thread is the fact that, actually, people just plain don’t have utility functions. (Because human preferences—with perhaps a few exceptions, although I have my doubts even about that—do not conform to the axioms that, as von Neumann and Morgenstern proved, an agent’s preferences must conform to in order for that agent to “have a utility function”. What’s more, most economists—and be assured that I include v N & M themselves in this—have been quite skeptical about the notion that humans ought to conform to these axioms.)

In this thread, we seem to have been using the term “utility function” as a sort of synecdoche for “what we value”. I’ve let that slide until now, as it seemed clear enough what Yaleocon meant and I saw no need to be pedantic, but now, it seems that perhaps we’ve gotten into trouble.

If your question stands, given this clarification, then I confess to being perplexed. Was it your intent to raise some narrow technical point (such as, for instance, the question of whether preferences over world states are isomorphic to preferences over separable components of world states; or whether an account of “impossible possible worlds” is necessary to construct a VNM-compliant agent’s utility function; or some other such thing)? If so, would you please expand on what specifically you meant?

If you meant something else entirely, then do please clarify!

@Said Achmiz

To pick up where Hoopyfreud left off:

You claim that

I’m not sure what you’re trying to say, but it looks like “there shouldn’t be any categories in your utility function/preferences/whatever,” and that’s insanity if taken literally. Unless we’re discussing fundamental particle physics, everything we say makes use of categories in nearly every word we speak or write! Could you give us an example of what you would consider a moral principle (or any other type of meaningful sentiment if you’d prefer) formulated without the use of categories?

@thevoiceofthevoid:

Yes, you interpreted my formulation correctly. You are, of course, correct to take issue with a literal reading of my comment. The thing having been quoted in its correct phrasing once, I thought I could get away with sloppy phrasing thereafter; I apologize for the resulting confusion.

Here’s what I am talking about:

Suppose we are speaking of what I value, and I declare that I value “cake”. In fact—I continue—it isn’t merely that I value certain actual things, some fuzzy point cloud in thingspace, which I am merely “gesturing toward”, in a vague way, with this linguistic label, “cake”. No! Cakeness, the fundamental and metaphysical essence of the thing, is what’s important to me. Anything that is a cake: these things I value. Anything that isn’t a cake… well, I take it on a case-by-case basis, I suppose. But no promises!

Now suppose you present me with a Sachertorte, and inquire whether I value this thing. “Bah!” I say. “That is not a cake, but a torte. It is nothing to me.” But you dispute my categorization; you debate the point; your arguments are convincing, and in the end, I come to believe that a Sachertorte is really a cake. And thus—obviously—Sachertorte is now as dear to me as any red velvet cake or Kiev cake.

And suppose I have always had a fondness for the Napoleon. A cake par excellence! Or… is it? Ever the disturber of the metaphysical peace, you once again dispute my categorization, and finally convince me that the Napoleon is not a cake, but a mere petit four. At once, my attitude changes, and I no longer look twice at Napoleons; they lose all value in my eyes, and I feel nary a pang of regret.

… obviously, this scenario is completely absurd.

But this is precisely what it would mean, to have categories appear in your preferences—as distinct from categories appearing in a description of your preferences!

@Said Achmiz

Weighing in here to agree with Yaleocon.

The scenario involving cake that you just gave proved Yaleocon’s point, I think. Far from being obviously absurd, that scenario seemed to me like a nice description of many conversations I’ve seen in my own life–e.g. someone getting convinced that wearing a sombrero is an instance of Racism, or that statistical discrimination is not.

Precisely because we don’t have utility functions (which arise from very well-defined preference orderings over possible worlds) we run into this issue where what we “value” involves vaguely-defined categories. I do think this is what Eliezer was getting at.

@Said Achmiz In some sense the further we get from the training data (the further from the Balboa-West Oakland area of common agreement), the less our moral disagreements appear to matter.

If you’re not sure if a Sachertorte or a Napoleon qualifies as “cake”, then whatever they are, they’re pretty far from your central definition of “cake”. So if you misclassify them, you haven’t done a lot of damage.

From my viewpoint, the same holds with the abortion debate, which I do see as about the definition of a “person”. Given that people disagree, that seems to say that even if we get the answer wrong, any moral damage is minor.

A counter-argument is the antebellum slave owner who’s decided that African descent excludes personhood. Since my definition of “personhood” is based on rationality, ability to respect the rights of others, etc., and has nothing to do with descent, the slaver’s decision seems to create maximal (rather than minimal) moral damage.

You seem to think the same is true of the abortion debate – perhaps so, but then I don’t get it. How is the abortion debate not about the definition of a “person”? If it’s not, then what do you think it is about?

@kokotajlod@gmail.com:

Yes, indeed, many people make this sort of mistake. That’s rather the point. It would hardly justify writing so many words on the matter, if the error were obvious, or very rare, or committed only by the exceedingly stupid.

But though it be ubiquitous, it is still a grievous error. Arguments over what “is Racism” or “is not Racism” are utterly absurd for this reason. (At least, on their face. In truth—as with abortion—such arguments are not about what they seem to be about. But then, here at SSC, we know that, yes?) People who have conversations like this—in full sincerity, thinking that they actually are arguing this sort of metaphysical issue—are making a profound conceptual mistake. That is the point.

As I’ve said elsethread, what we value “involves” vaguely-defined categories in the sense that describing our preferences must, inevitably, require reference to vaguely-defined categories; otherwise we’d be here all day (and all year, and all eternity). But valuing (or thinking that you value) the categories directly, whatever they may contain, is a terrible conceptual mistake. (Eliezer once described this sort of thing as “baking recipe cakes—made from only the most delicious printed recipes”.)

@Dave92F1:

You are, it seems to me, making the curious mistake of rounding our (hypothetical, at the moment) opponents’ position up to the nearest sensible, sane position you can conceive of. You then argue that, well, said view is not so bad after all!

But no. See the view I am describing for what it is! My hypothetical cake lover is not concerned with extensional classification. (This is the entirely sensible, empirical question of whether some particular confection lies within the main body of the “cake” cloud in thingspace, or whether, instead, it is an outlier; and if the latter, whether a usefully drawn boundary around the cluster—one that would best allow us to compress our meaning most efficiently, for the purpose of communication and reasoning—would contain or exclude the baked good in question.)

No, the (absurd) view I am describing is one which concerns itself entirely with intensional categories. That is, in fact, precisely what makes it so absurd!

Indeed the whole point of my example (lost, perhaps, on folks who are not quite such avid bakers as I am; I apologize for any confusion) is that a Sachertorte, for instance, is a perfectly central sort of cake. Any argument about whether it “is really a cake” can only be about intensions. I could also have used Boston cream pie as an example. (Isn’t it a pie, and not a cake?! It’s right there in the name! But no, of course that is silly; it’s as central a cake as any. Yet the one who is concerned with intensional definitions, might—ludicrously, foolishly—be swayed by the argument from nomenclature!)

As to your other points…

In all of my comments in this thread, I have been trying my utmost to avoid actually getting into an object-level debate about abortion. (I find these debates to be, quite possibly, the most tiresome sort of internet argument; twenty years ago they were diverting, but enough is enough…)

Your questions are fair, of course. I don’t mean to dismiss them. But I’m afraid I will have to demur. Possibly this will mean that there’s nothing more for us to discuss. In that case, I can only recommend, once more, a close reading of the Sequences (“A Human’s Guide to Words” in particular), and otherwise leave the matter at that.

Doesn’t the cake metaphor get the causality wrong? Meaning: people aren’t arguing that a Napoleon is not a cake and consequently denouncing and rejecting it, so much as arguing that it cannot be a cake since they don’t like it.

Perhaps it goes like this: person A dislikes the political views of person B, and argues that B is in some undesirable category C, and therefore person D should also reject person B. Person D agrees with person B, and argues back that B is in category E which everybody respects and trusts, and it is in fact person A who is in category F… and so on, and so forth.

In short: we assign negative categories to things we already don’t like (for intrinsic reasons), and expect others to have category-based utility functions allowing us to manipulate them.

@Ketil:

Yes, this is correct.

(This is one of the many reasons why it is quite foolish to have, as you put it, “category-based utility functions”.)

Here’s a flip of Yaleocon’s point (which I agree with, but which seems to me to be a less productive way to approach the debate):

https://samzdat.com/2018/08/22/love-and-happiness/

Axioms of morality cannot be sufficiently well-constructed as to be unambiguous, because the frameworks that we use to define them are incommunicable.

There is a thing called happiness (and no, I don’t mean this in a platonic sense, I mean that I can identify happiness when it occurs in me). I can measure things which correlate with my experiences of happiness like serotonin levels and listening to woman-fronted rock groups of the 80s. I can come up with pithy sayings about happiness which generalize well to my experience. But at the extremes, there are things that require reflection for me to describe them as happy and which I cannot evaluate within a framework of happiness evaluation a priori; my model isn’t well-defined enough for that, and I have no means of extending it to cover these cases (including experiencing them) that don’t run the risk of substantially modifying the framework and ruining the predictive power of some of the important correlates.

I cannot describe why I seek happiness, and assuming that “true understanding is measured by your ability to describe what you’re doing and why, without using that word or any of its synonyms,” then I do not understand anything at all about myself. But trying to explain human action without reference to [your favorite word for that-which-creates-perceived-value] seems like a futile task, given a non-hard-deterministic viewpoint; if you *are* a hard determinist, I promise not to take any future attempts to hack my brain personally; I know it’s just a biological imperative that you literally can’t help yourself from fulfilling (this is snark for humor’s sake, not meant to preempt genuine debate).

The counterargument, as far as I can tell, is that happiness is a “poorly defined cluster in the space of experience.” But if I made it my project in life to enumerate the elements of that cluster, I think I’d find it a monumentally unrewarding and futile task, not least because the elements with membership in that cluster will inevitably change before I work my way around to analyzing the quantitative and qualitative differences in my emotional state produced by listening to Leuchtturm rather than Zaubertrick while cooking dinner. But one makes me happy, and one doesn’t. So I listen to Zaubertrick while I cook, and I hope that warms someone else’s utilitarian heart.

The other counterargument, that substance precedes essence and that the things I’ve identified as correlates above are the only *real* happiness, seems to fall apart when I point out that when my subjective experience of happiness doesn’t match up with any of the correlates, I find myself seeking happiness, not the correlate. I care more about being happy than having serotonin, or I’d be willing to get in line for a serotonin pump, but I know I wouldn’t be. You might argue that I must have simply failed to identify the correct convolution of correlates which will be perfectly predictive, to which I’d reply that clearly nobody has, and that these correlates are so un-universalizable that even if you managed to overcome my objections and managed to perfectly predict the worldstates that would make me happy, you wouldn’t have perfect information about other people’s frameworks of happiness. And if you maintain that you would, I’d say we’re back to something isomorphic to the hard determinism problem, and that you’re welcome to continue to follow your biological imperative to howl into the void.

This turns out not to be the case.

We know, in fact, that some of the… “aspects”, shall we say… of “happiness”, which Scott mentions in the post—positive emotions, say—can easily be identified, by a person experiencing them, at the time they’re experienced. But other aspects of happiness—life satisfaction, sense of meaning/purpose—are much less amenable to in-the-moment identification. We have a broader, vaguer sense of them—as we look back on our lives, in moments of reflection. (It is even possible that we do not simply recognize these things, but in fact construct them, in such moments, in retrospect—by way of making sense of certain inchoate sensations or experiences which we cannot, in the moment, give a name.)

This, too, turns out not to be the case.

While I certainly defer to your own judgment on this part…

… I can’t agree at all with this. (For several reasons, at that! To name just one: surely you don’t think that happiness—whatever we might be referring to when we use this word, taking a broad view of common usage—is the only thing that creates perceived value?! I’d say that it’s not even half of the story! Besides which, lots of people have had some pretty good success explaining lots of human action without recourse to talk of happiness. Or don’t you agree?)

Well, you don’t have to. We have people who make careers out of this sort of thing. (Whether they’ve had any great success with this is up for debate, but “that sounds boring; I wouldn’t want to spend my time doing it” is a singularly pointless argument.)

I think we’re talking past each other here in a few places.

I agree that correlates are not good predictors; that’s my point. We don’t have a good predictor, but we do have a (bad) correlate.

When you say

I think you’re doing a tricky trick, since happiness has several common usages and my thesis is that a “real” common definition of happiness cannot be formulated. Let’s define happiness as an emotional state with the typical aspects of positive emotions, life satisfaction, and sense of meaning, among other things. It’s an awful definition, but I think it’s slightly less bad than the one I think you implied. Then yes, I think that all these things taken together form the whole of perceived value, and that it’s as worthwhile to call the whole kit and caboodle happiness as anything else, since I can’t (no, really, I cannot) enumerate them all, and when people talk about their frameworks for perceived value, happiness is mentioned more often than contentment or satisfaction or… any number of words that reference things that are really not communicable, but that are commonly understood to be experienced. Hell, “perceived value” isn’t really a communicable concept either, but I’m hoping it’ll have more resonance in your framework than my previous attempt.

Finally, when I say it would be unrewarding, I mean that I’d end up with a mess of enumerated worldstates, and I would expect inferences drawn from them to have little power far outside the bounds of the enumeration, or over time, given that my happiness framework will almost certainly change as I proceed into the future.

I am interested to know what your other objections to my point are; I’ve done my best to anticipate them, but I think you’re coming at this from a different angle, so I’m excited to see where we can reach understanding.

Also, I apologize for edits made to my last comment after posting; they may or may not interest you, but absorbing them will inevitably take more effort than an addendum to the comment would have.

@Hoopyfreud:

No, I don’t think we’re talking past each other; rather, I think you are not quite appreciating the degree to which I am rejecting your thesis. I will try to explain…

I accept your provisional definition. I am not here to quibble over semantics.

And I strongly disagree with your claim. I think that this sort of thing does not even begin to form the whole of perceived value.

(Elsewhere, you talk of finding yourself “seeking happiness”. For my part, I do not seek happiness; and I am not alone in this. This is not an incidental point, and goes directly to what I am saying here.)

Re: edits to your earlier comment: you are not so much preaching to the choir, as preaching to the archbishop. Where I disagree with you, I disagree in the diametrically opposite direction! Certainly I would never argue that you’re “really” after the neurochemical correlates of happiness, and not happiness itself; but I would go further, and say that “happiness” is itself only a correlate (or, if you like, an implementation detail) of what we’re really after. That is to say: “happiness” is what happens when we get what we really want—which makes it misguided, at best, to “seek happiness”.

(There are, of course, exceptions to this reasoning, such as: “Part of ‘happiness’ is ‘not being depressed’. I would therefore like my depressive disorder to be cured, please.” But these are exceptions that—in the classic sense of the phrase—prove the rule, as what we in fact are after, in such cases, is the ability to achieve our goals, and to avoid suffering. Whether we use the word “happiness” to talk about these desires, or not, is irrelevant.)

@Said

I think we agree, then. What I’ve resorted to calling “perceived value” and what I normally call happiness works, for me, in the way you’re describing; “joy”/“enjoyment”, for me, functions like “happiness” seems to for you, and maybe that’s the fault of the naughty Aristotle on my shoulder. We don’t call them the same thing, and while I think that they resemble each other a bit more than we’re letting on, they’re clearly very different. If it makes the argument clearer, sub in whatever functions this way for you for “happiness” in all my posts in this chain.

But I think this is driving home my point – the substance is vast, all-encompassing, and incompletely defined, and the symbol is nearly unusable for communication. In cases where this happens, it’s useless to “replace the symbol with the substance” because the one is inane and the other is useless for this kind of formulation.

And if this is true of a concept like “perceived value,” why should it not be true of “person?”

@Hoopyfreud:

Yes, I think you might be right about the terminological mismatch (the aside about Aristotle is what finally clued me in to where you were coming from). To a… very rough approximation… I would say that indeed, we more or less agree on this aspect of the matter.

But as far as your comment that ‘it’s useless to “replace the symbol with the substance”’, I simply cannot agree.

You have picked, as your illustrative example, what is, perhaps, the very hardest case. “What is the source of value” is a tremendously difficult question! (It appears in a more vulgar form as “what is the meaning of life”—and that question is, of course, the very archetype of “hard, deep questions”.)

Yes, of course, replacing this particular symbol with the substance behind it is quite the tricky proposition… because we do not even know for sure what the substance is! We have spent thousands of years trying to figure it out; and who would claim that we’ve arrived at a full answer? (Oh, some of us know more than others, to be sure—much more, in some ways. I daresay I have a better idea of the answer than the average person, for instance, as, I gather, do you. Still—it’s very much an open question.)

(Eliezer acknowledges this, by the way. More: he makes it an explicit object of a big and important part of the Sequences—Book V of R:AZ is all about this.)

But what should we conclude from this? That replacing the symbol with the substance is of no value as a technique, or a bad idea, or useless for all but the trivial cases? Yet no such conclusion is even remotely warranted. By analogy, imagine proclaiming that because no proof (or disproof) of the Riemann hypothesis (called, by some, “the most important unresolved problem in pure mathematics”) has been found, despite a century and a half of searching, therefore proving any propositions in mathematics is a fool’s errand, and we should abandon all attempts to answer mathematical questions! That would clearly be an absurd leap, don’t you think?

The case of “person” is much, much simpler. (In fact the chief obstacles in the abortion debate are not metaphysical at all; they are political. Turning the issue into some elaborate definitional dispute—and insisting upon that dispute’s alleged irreducibility, difficulty, complexity—is, quite often, merely a way to blind oneself to that distressing reality.)

“But that solution is unavailable when it matters whether something is BLEGG or RUBE.”

When it matters whether something is a blegg or rube, it doesn’t “just matter”, it matters for a particular reason, in a particular circumstance. If you’re looking for vanadium, you’re going to sort bleggs and rubes very differently than if you’re looking for blue pigment. It should be clear that there isn’t a “right dividing line”, only a division that is more or less helpful for your project. This is the entire point of the blegg/rube example; you do not appear to have understood it.

“But because personhood matters, we can’t just decompose it and say “a fetus is animate human meat, but not intelligent or conscious; draw the ‘person’ line however you want.””

The ‘person’ line does not correspond to any real feature of the world; there’s no “really a person” essence attached to anyone because we don’t have a single concept of ‘person’. Some people use the word to refer to someone with a conscious, sentient mind; others use it to refer to someone with moral worth. If you think the fetus has moral worth but no consciousness as yet, is it a ‘person’ or not? Personhood doesn’t matter here, what matters is a question of moral worth… and if you don’t realize that sometimes the word ‘personhood’ is used interchangeably with worth and sometimes it isn’t, then you’re going to get very confused.

As a trans woman, I would answer that I’m really something that can’t perfectly be classified as male or female from a strictly biological/anatomical perspective: I have a Y chromosome, but there are people with Complete Androgen Insensitivity Syndrome who have Y chromosomes but are otherwise physically identical to cis women (to the point that many of them go their whole lives thinking they’re just normal cis women), and the vast majority of people would agree that they should be considered women for medical, legal, and social purposes. I have both male and female sex characteristics: male genitalia, an Adam’s apple, a voice that originally sounded male by default, facial hair until I had it removed through laser and electrolytic treatment, and height typical for a male, but also developed breasts, wide hips and thighs, smooth skin, facial features that most people would consider female, no chest hair, and body hair patterns typical for a female. I consider myself intersex from a purely biological perspective, but I choose to identify as a woman because I feel like a woman internally, and I do think my internal sense of gender identity corresponds to some real physical thing – perhaps my initial testosterone/estrogen levels (before starting hormone treatment) were closer to a woman’s than a man’s, or some part of my brain is structured in a more female way than the average male’s.

As David Shaffer and several other people here already tried to explain, where you draw the line depends on exactly why you need to draw the line in the first place. It’s not that the rest of us are saying “the difference between BLEGG and RUBE never matters,” it’s that we’re saying “the difference between BLEGG and RUBE can matter for a lot of different reasons, and when there’s an edge case that falls outside of the standard BLEGG/RUBE paradigm, we’ll handle it in different ways depending on what those reasons are.” You seem to be interpreting TCWMFMNMFTC as some kind of pseudo-postmodernist “nothing is really real, man” statement, as if it’s calling for everyone to throw up their hands and admit that we can’t actually know anything, when it’s really a call for increased mindfulness and discernment.

For the situations where it really matters – for instance, for medical purposes – am I a man or a woman? Well, I’d prefer my doctor to treat me like a man when assessing my risk of prostate cancer and like a woman when assessing my risk of breast cancer.

I strongly doubt that North Korea is the most unhappy country, because I think that a major component of happiness is the norm that society sets and the extent to which people can meet this norm.

The most unhappy societies are probably not merely poor societies, but societies where the norm has become unattainable for many.

Ultimately, North Korea seems like a fairly ordered and stable society with norms that are attainable for most people, where happiness is probably at the low end, but not at the bottom of the pack. I’d expect a country like Burundi to be there.

I don’t see how this can be possible, outside of a temporary shock.

A social class where the members by and large cannot attain “the norm” just means that that social class has a different norm. There is no “the” norm. Spartan helots weren’t even allowed to try to participate in Spartan Greek culture. Did that mean they were all unhappy because they couldn’t attain “the norm”? No, it meant they aspired to helot norms.

I do think that the most unhappy societies are experiencing problems that reduce the opportunities compared to the past.

In which case the USA’s going to be unhappy for a while, as we have nowhere to go but down.

NK is a special case because living in fear sucks. But yes, a very poor country is not necessarily very unhappy, and you are spot on about the norms. In Eastern Europe the norm of what counts as a materially successful man is far higher than the average salary or wealth. It is really weird. Perhaps it comes from Western norms, including Western movies. Perhaps illegal incomes push the norm up. Perhaps it is the fact that there are no real class differences. I mean, if I am a peasant and he is a noble, and we dress, talk, walk, etc. differently, I will not compare myself to him. But the EE nouveau riche is about as prole as everybody else: no special education, etiquette, or anything.

This is not a new thing. Egalitarian-meritocratic ideals can backfire; it is known. If someone is richer than you because he really, really deserved it, doesn’t that hurt more than if it were purely luck? In the first case your inferiority is rubbed in; in the second, at least you can secretly feel better. The norm is whatever a given group is supposed to achieve, and in an egalitarian-meritocratic society everybody is in the same group. At least in monocultural ones. I guess in America race acts as class, due to the lack of a proper class/caste system. So a black guy may be poor but still richer than most blacks, and that can be okay for his self-respect. I mean, when people call stuff “white things” it really sounds like upper-middle-class things.

So, I’m confused why this all doesn’t just boil down to “Don’t do prediction outside your training data.” It seems like the whole bit with tails coming apart and Talebian Mediocristan is beside the point.

You started with the premise:

Fair enough. This is due to conditional expectations, and the link you included explains it fairly well. Paraphrasing: even though the expected value of X2 is highest for the most extreme observed value of X1, if your sample size is large enough and your correlation low enough, you’ll tend to have at least a few observations with slightly-lower-but-still-high values of X1, and by chance, one of these will “draw” an X2 value that’s more extreme than the one drawn by the single observation with your most extreme X1.

(This phenomenon goes away if you have high correlations or low sample sizes. Heck, it’s probably not hard to write down an equation for the N and r you’d need to have a 50/50 chance of the “tails separating”, assuming you’ve got a bivariate Gaussian. But I digress.)
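Short of an equation, the N-and-r trade-off can at least be estimated by simulation. The sketch below assumes a standardized bivariate Gaussian, as in the comment; the function names `prob_same_top` and `smallest_n_below_half`, the trial counts, and the doubling search are illustrative choices, not anything from the post or the linked article.

```python
import math
import random


def prob_same_top(n, r, trials=2000, seed=0):
    """Monte Carlo estimate of P(the observation with the largest X1
    also has the largest X2) for n draws with correlation r."""
    rng = random.Random(seed)
    noise_scale = math.sqrt(1.0 - r * r)
    hits = 0
    for _ in range(trials):
        best_x1 = best_x2 = -math.inf
        arg_x1 = arg_x2 = -1
        for i in range(n):
            x1 = rng.gauss(0.0, 1.0)
            # X2 = r * X1 + independent noise, so corr(X1, X2) = r
            x2 = r * x1 + noise_scale * rng.gauss(0.0, 1.0)
            if x1 > best_x1:
                best_x1, arg_x1 = x1, i
            if x2 > best_x2:
                best_x2, arg_x2 = x2, i
        hits += arg_x1 == arg_x2
    return hits / trials


def smallest_n_below_half(r, max_n=512, trials=400, seed=0):
    """Double n until the estimated probability drops below 0.5.
    Returns None if that never happens up to max_n."""
    n = 2
    while n <= max_n:
        if prob_same_top(n, r, trials, seed) < 0.5:
            return n
        n *= 2
    return None


if __name__ == "__main__":
    for r in (0.5, 0.8, 0.95):
        print(r, smallest_n_below_half(r))
```

Because the search only doubles n, it gives a rough bracket for the 50/50 point rather than an exact value, and the estimates carry Monte Carlo noise.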

It’s important to note that this phenomenon relies on linear correlation between factors.

So anyhow, from here you make an analogy to words like “Happiness”, noting that words are really summaries of a wide range of concepts. The analogy to correlation here is what has me confused, I think. Your Special Plot shows how two people might view Happiness as a function of two concepts (which we all agree are related to Happiness), but that since those folks use slightly different functions, they come to different assessments of which country is the Happiest, and this is the “tails coming apart.”

You don’t say it explicitly, but the two functions you’ve used are linear combinations of the two factors, and under those conditions, the analogy makes sense. But then you move into “classifiers,” which encompass all sorts of functions, and then you move on to the metro map. By the time you transition into morality, it seems like the thing we’re worried about is using a model trained on one set of data to extrapolate outside of it, which, yeah, is a known problem and can produce very poor results. You don’t need conditional expectation and Gaussians to make that point. I think the metro map is an entirely different phenomenon from “tails come apart” and I don’t see what one tells us about the other.

Am I missing something? Correlation’s got nothing to do with the second half of the post. Or maybe that was the point?

(Final aside: The “mediocristan”/“extremistan” bit was also confusing to me, since Taleb generally means “Gaussians” when he talks about “mediocristan” and things like power law distributions when he talks about “extremistan”. Nothing in this post is in “extremistan” as far as I can tell. If you want to complain about extrapolating beyond your data, that’s fine, but that’s a different problem than confusing a Gaussian for a power law. Or maybe that was the point??)

+1

+3 vs undead

Until you meet the Undead Black Swan, who is made stronger by the use of magical weapons against him. Then you’re screwed.

I don’t think “conditional expectations” are a sufficient explanation for this, nor do I think sample size is really relevant.

The phenomenon arises from this interaction:

– There is residual variation in trait Y after accounting for the correlation with trait X

– More extreme values (of X or Y, before or after accounting for correlation) are less common than less extreme values.

Treating Y as the variable for which we’re trying to achieve a high target, the first bullet point of this model tells us that your Y value is the sum of (1) the value predicted by the correlation with your known X value; plus (2) chance. The second bullet point tells us two things: (a) it’s easier to have lower X values than higher X values; and (b) it’s easier to have lower chance values than higher chance values. Those effects point in opposite directions. The coming apart of the tails occurs when effect (a) dominates effect (b) within the range of Y you’re interested in.
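For concreteness, here is one way to write the “predicted value plus chance” decomposition, assuming a standardized bivariate Gaussian with correlation ρ. That distributional assumption and the symbols ρ and ε are added for illustration; the argument above is stated for general distributions.

```latex
Y = \rho X + \sqrt{1-\rho^{2}}\,\varepsilon,
\qquad X,\ \varepsilon \sim \mathcal{N}(0,1)\ \text{independent},
\qquad\text{so}\qquad
\mathbb{E}[Y \mid X = x] = \rho x,
\quad
\operatorname{Var}(Y \mid X = x) = 1-\rho^{2}.
```

The first term is the part predicted from X and the second is the residual chance part. Under this assumption the residual variance 1 − ρ² is the same at every value of X, so it depends only on the correlation.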

So the effect is determined by the shape of the distributions in question (both the distribution of Y conditional on X, and the unconditioned distribution of X), and is not an artifact of sample size. As sample size approaches infinity, the coming apart of the tails will not go away.
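One way to look at this without any reference to "the single top observation" is to estimate a population-level quantity: the chance that Y is in its top fraction q given that X is in its top fraction q. The sketch below assumes a standardized bivariate Gaussian (an assumption the comment above does not make); `tail_agreement` and its parameters are hypothetical names chosen for illustration, and the large number of draws is only there to reduce estimation noise.

```python
import math
import random
from statistics import NormalDist


def tail_agreement(r, q, draws=200_000, seed=0):
    """Estimate P(Y is in its top-q tail | X is in its top-q tail)
    for a standardized bivariate Gaussian with correlation r."""
    rng = random.Random(seed)
    # X and Y are both standard normal marginally, so they share a cutoff.
    cutoff = NormalDist().inv_cdf(1.0 - q)
    noise_scale = math.sqrt(1.0 - r * r)
    in_x_tail = 0
    in_both = 0
    for _ in range(draws):
        x = rng.gauss(0.0, 1.0)
        if x <= cutoff:
            continue
        # Y = predicted part (r * x) plus residual chance
        y = r * x + noise_scale * rng.gauss(0.0, 1.0)
        in_x_tail += 1
        in_both += y > cutoff
    if in_x_tail == 0:
        raise ValueError("no draws landed in the X tail; increase draws or q")
    return in_both / in_x_tail


if __name__ == "__main__":
    for q in (0.2, 0.05, 0.01):
        print(q, round(tail_agreement(0.8, q), 3))
```

If the estimated agreement falls as q shrinks, that is the tails coming apart as a property of the joint distribution itself, which is the sense in which it would not be an artifact of sample size.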

Ahh. I meant that if your sample size is particularly low and your correlation is particularly high, your observation with the largest value of X1 will likely also have the largest value of X2. As for the “shape of the distribution”, I was assuming a bivariate Gaussian. It seemed like this is the type of thing Scott was talking about, but I found the post confusing, so maybe this was incorrect.