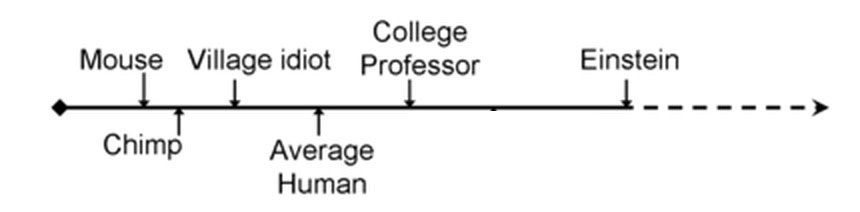

Eliezer Yudkowsky argues that forecasters err in expecting artificial intelligence progress to look like this:

…when in fact it will probably look like this:

That is, we naturally think there’s a pretty big intellectual difference between mice and chimps, and a pretty big intellectual difference between normal people and Einstein, and implicitly treat these as about equal in degree. But in any objective terms we choose – amount of evolutionary work it took to generate the difference, number of neurons, measurable difference in brain structure, performance on various tasks, etc – the gap between mice and chimps is immense, and the difference between an average Joe and Einstein trivial in comparison. So we should be wary of timelines where AI reaches mouse level in 2020, chimp level in 2030, Joe-level in 2040, and Einstein level in 2050. If AI reaches the mouse level in 2020 and chimp level in 2030, for all we know it could reach Joe level on January 1st, 2040 and Einstein level on January 2nd of the same year. This would be pretty disorienting and (if the AI is poorly aligned) dangerous.

I found this argument really convincing when I first heard it, and I thought the data backed it up. For example, in my Superintelligence FAQ, I wrote:

In 1997, the best computer Go program in the world, Handtalk, won NT$250,000 for performing a previously impossible feat – beating an 11 year old child (with an 11-stone handicap penalizing the child and favoring the computer!) As late as September 2015, no computer had ever beaten any professional Go player in a fair game. Then in March 2016, a Go program beat 18-time world champion Lee Sedol 4-1 in a five game match. Go programs had gone from “dumber than heavily-handicapped children” to “smarter than any human in the world” in twenty years, and “from never won a professional game” to “overwhelming world champion” in six months.

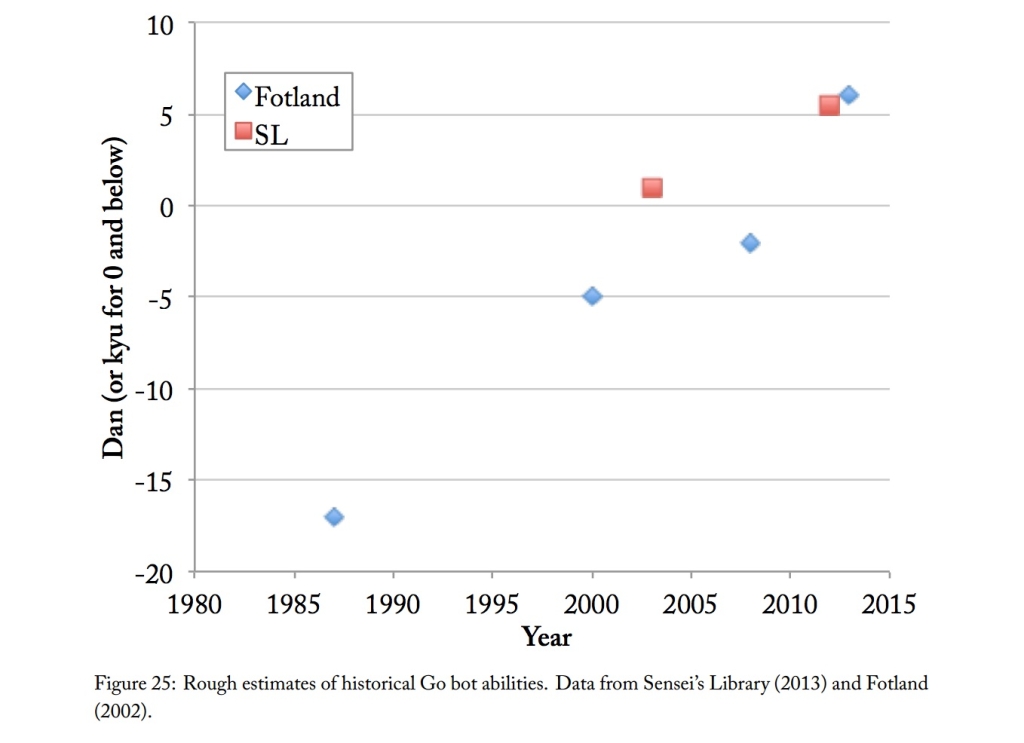

But Katja Grace takes a broader perspective and finds the opposite. For example, she finds that chess programs improved gradually from “beating the worst human players” to “beating the best human players” over fifty years or so, ie the entire amount of time computers have existed:

AlphaGo represented a pretty big leap in Go ability, but before that, Go engines improved pretty gradually too (see the original AI Impacts post for discussion of the Go ranking system on the vertical axis):

There’s a lot more on Katja’s page, overall very convincing. In field after field, computers have taken decades to go from the mediocre-human level to the genius-human level. So how can one reconcile the common-sense force of Eliezer’s argument with the empirical force of Katja’s contrary data?

Theory 1: Mutational Load

Katja has her own theory:

The brains of humans are nearly identical, by comparison to the brains of other animals or to other possible brains that could exist. This might suggest that the engineering effort required to move across the human range of intelligences is quite small, compared to the engineering effort required to move from very sub-human to human-level intelligence…However, we should not be surprised to find meaningful variation in the cognitive performance regardless of the difficulty of improving the human brain. This makes it difficult to infer much from the observed variations.

Why should we not be surprised? De novo deleterious mutations are introduced into the genome with each generation, and the prevalence of such mutations is determined by the balance of mutation rates and negative selection. If de novo mutations significantly impact cognitive performance, then there must necessarily be significant selection for higher intelligence–and hence behaviorally relevant differences in intelligence. This balance is determined entirely by the mutation rate, the strength of selection for intelligence, and the negative impact of the average mutation.

You can often make a machine worse by breaking a random piece, but this does not mean that the machine was easy to design or that you can make the machine better by adding a random piece. Similarly, levels of variation of cognitive performance in humans may tell us very little about the difficulty of making a human-level intelligence smarter.

I’m usually a fan of using mutational load to explain stuff. But here I worry there’s too much left unexplained. Sure, the explanation for variation in human intelligence is whatever it is. And there’s however much mutational load there is. But that doesn’t address the fundamental disparity: isn’t the difference between a mouse and Joe Average still immeasurably greater than the difference between Joe Average and Einstein?

Theory 2: Purpose-Built Hardware

Mice can’t play chess (citation needed). So talking about “playing chess at the mouse level” might require more philosophical groundwork than we’ve been giving it so far.

Might the worst human chess players play chess pretty close to as badly as is even possible? I’ve certainly seen people who don’t even seem to be looking one move ahead very well, which is sort of like an upper bound for chess badness. Even though the human brain is the most complex object in the known universe, noble in reason, infinite in faculties, like an angel in apprehension, etc, etc, it seems like maybe not 100% of that capacity is being used in a guy who gets fools-mated on his second move.

We can compare to human prowess at mental arithmetic. We know that, below the hood, the brain is solving really complicated differential equations in milliseconds every time it catches a ball. Above the hood, most people can’t multiply two two-digit numbers in their head. Likewise, in principle the brain has 2.5 petabytes worth of memory storage; in practice I can’t always remember my sixteen-digit credit card number.

Imagine a kid who has an amazing $5000 gaming computer, but her parents have locked it so she can only play Minecraft. She needs a calculator for her homework, but she can’t access the one on her computer, so she builds one out of Minecraft blocks. The gaming computer can have as many gigahertz as you want; she’s still only going to be able to do calculations at a couple of measly operations per second. Maybe our brains are so purpose-built for swinging through trees or whatever that it takes an equivalent amount of emulation to get them to play chess competently.

In that case, mice just wouldn’t have the emulated more-general-purpose computer. People who are bad at chess would be able to emulate a chess-playing computer very laboriously and inefficiently. And people who are good at chess would be able to bring some significant fraction of their full most-complex-object-in-the-known-universe powers to bear. There are some anecdotal reports from chessmasters that suggest something like this – descriptions of just “seeing” patterns on the chessboard as complex objects, in the same way that the dots on a pointillist painting naturally resolve into a tree or a French lady or whatever.

This would also make sense in the context of calculation prodigies – those kids who can multiply ten digit numbers in their heads really easily. Everybody has to have the capacity to do this. But some people are better at accessing that capacity than others.

But it doesn’t make sense in the context of self-driving cars! If there was ever a task that used our purpose-built, no-emulation-needed native architecture, it would be driving: recognizing objects in a field and coordinating movements to and away from them. But my impression of self-driving car progress is that it’s been stalled for a while at a level better than the worst human drivers, but worse than the best human drivers. It’ll have preventable accidents every so often – not as many as a drunk person or an untrained kid would, but more than we would expect of a competent adult. This suggests a really wide range of human ability even in native-architecture-suited tasks.

Theory 3: Widely Varying Sub-Abilities

I think self-driving cars are already much better than humans at certain tasks – estimating distances, having split-second reflexes, not getting lost. But they’re also much worse than humans at others – I think adapting to weird conditions, like ice on the road or animals running out onto the street. So maybe it’s not that computers spend much time in a general “human-level range”, so much as being superhuman on some tasks, and subhuman on other tasks, and generally averaging out to somewhere inside natural human variation.

In the same way, long after Deep Blue beat Kasparov there were parts of chess that humans could do better than computers, “anti-computer” strategies that humans could play to maximize their advantage, and human + computer “cyborg” teams that could do better than either kind of player alone.

This sort of thing is no doubt true. But I still find it surprising that “way superhuman on some things” and “way subhuman on other things” so often average out to somewhere within the range of human variability. The puzzle seems as surprising as ever.

Theory 1.1: Humans Are Light-Years Beyond Every Other Animal, So Even A Tiny Range Of Human Variation Is Relatively Large

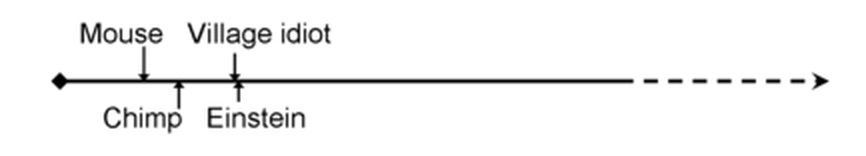

Or maybe the first graph representing the naive perspective is right, Eliezer’s graph representing a more reasonable perspective is wrong, and the range of human variability is immense. Maybe the difference between Einstein and Joe Average is the same as (or bigger than!) the difference between Joe Average and a mouse.

That is, imagine a Zoological IQ in which mice score 10, chimps score 20, and Einstein scores 200. Now we can apply Katja’s insight: that humans can have very wide variation in their abilities thanks to mutational load. But because Einstein is so far beyond lower animals, there’s a wide range for humans to be worse than Einstein in which they’re still better than chimps. Maybe Joe Average scores 100, and the village idiot scores 50. This preserves our intuition that even the village idiot is vastly smarter than a chimp, let alone a mouse. But it also means that most of computational progress will occur within the human range. If it takes you five years to get from starting your project to being as smart as a chimp, then even granting linear progress it could still take you fifty more before you’re smarter than Einstein.
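To make the timeline arithmetic concrete, here is a minimal sketch in Python. It uses the made-up Zoological IQ scores from the paragraph above and adds one assumption of its own: that the project starts at a score of 0 and gains points at a constant rate. The names `SCORES` and `years_to_reach` are just illustrative.

```python
# Hypothetical "Zoological IQ" scores from the paragraph above.
SCORES = {
    "mouse": 10,
    "chimp": 20,
    "village idiot": 50,
    "Joe Average": 100,
    "Einstein": 200,
}


def years_to_reach(score, chimp_years=5.0):
    """Years from project start to `score`, assuming linear progress from 0
    and that chimp level is reached after `chimp_years` (both assumptions)."""
    rate = SCORES["chimp"] / chimp_years  # points gained per year
    return score / rate


timeline = {name: years_to_reach(score) for name, score in SCORES.items()}

# Years spent after chimp level, and years spent inside the human range.
years_after_chimp = timeline["Einstein"] - timeline["chimp"]
human_range_years = timeline["Einstein"] - timeline["village idiot"]

for name, years in timeline.items():
    print(f"{name:>14}: year {years:g}")
print("years from chimp to Einstein:", years_after_chimp)
print("years inside the human range:", human_range_years)
```

Under these assumptions the chimp-to-Einstein stretch comes out at 45 years, in the same ballpark as the "fifty more" above, and three quarters of the whole project is spent between the village idiot and Einstein.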

This seems to explain all the data very well. It’s just shocking that humans are so far beyond any other animal, and their internal variation so important.

Maybe the closest real thing we have to zoological IQ is encephalization quotient, a measure that relates brain size to body size in various complicated ways that sometimes predict how smart the animal is. We find that mice have an EQ of 0.5, chimps of 2.5, and humans of 7.5.

I don’t know whether to think about this in relative terms (chimps are a factor of five smarter than mice, but humans only a factor of three greater than chimps, so the mouse-chimp difference is bigger than the chimp-human difference) or in absolute terms (chimps are 2 units bigger than mice, but humans are five units bigger than chimps, so the chimp-human difference is bigger than the mouse-chimp difference).
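The two readings can be put side by side in a few lines of Python. This is only a sketch using the three EQ figures quoted above; the log-scale column is an extra I have added, and it necessarily ranks the gaps the same way the ratio does.

```python
import math

# Encephalization quotients as quoted in the text.
EQ = {"mouse": 0.5, "chimp": 2.5, "human": 7.5}


def compare(lower, higher):
    """Return (ratio, absolute difference, log10 gap) between two species."""
    ratio = EQ[higher] / EQ[lower]
    diff = EQ[higher] - EQ[lower]
    log_gap = math.log10(EQ[higher]) - math.log10(EQ[lower])
    return ratio, diff, log_gap


mouse_chimp = compare("mouse", "chimp")
chimp_human = compare("chimp", "human")

for label, (ratio, diff, log_gap) in (("mouse -> chimp", mouse_chimp),
                                      ("chimp -> human", chimp_human)):
    print(f"{label}: x{ratio:g}, +{diff:g} units, {log_gap:.2f} orders of magnitude")
```

In relative (or logarithmic) terms the mouse-chimp gap is the larger one; in absolute terms the chimp-human gap is. The data alone do not say which scale is the right one for intelligence.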

Brain size variation within humans is surprisingly large. Just within a sample of 46 adult European-American men, it ranged from 1050 to 1500 cm^3; there are further differences by race and gender. The difference from the largest to smallest brain is about the same as the difference between the smallest brain and a chimp (275 – 500 cm^3); since chimps weigh a bit less than humans, we should probably give them some bonus points. Overall, using brain size as some kind of very weak Fermi calculation proxy measure for intelligence (see here), it looks like maybe the difference between Einstein and the village idiot equals the difference between the idiot and the chimp?

But most mutations that decrease brain function will do so in ways other than decreasing brain size; they will just make brains less efficient per unit mass. So probably looking at variation in brain size underestimates the amount of variation in intelligence. Is it underestimating it enough that the Einstein – Joe difference ends up equivalent to the Joe – mouse difference? I don’t know. But so far I don’t have anything to say it isn’t, except a feeling along the lines of “that can’t possibly be true, can it?”

But why not? Look at all the animals in the world, and the majority of the variation in size is within the group “whales”. The absolute size difference between a bacterium and an elephant is less than the size difference between Balaenoptera musculus brevicauda and Balaenoptera musculus musculus – ie the Indian Ocean Blue Whale and the Atlantic Ocean Blue Whale. Once evolution finds a niche where greater size is possible and desirable, and figures out how to make animals scalable, it can go from the horse-like ancestor of whales to actual whales in a couple million years. Maybe what whales are to size, humans are to brainpower.

Stephen Hsu calculates that a certain kind of genetic engineering, carried to its logical conclusion, could create humans “a hundred standard deviations above average” in intelligence, ie IQ 1000 or so. This sounds absurd on the face of it, like a nutritional supplement so good at helping you grow big and strong that you ended up five light years tall, with a grip strength that could crush whole star systems. But if we assume he’s just straightforwardly right, and that Nature did something of about this level to chimps – then there might be enough space for the intra-human variation to be as big as the mouse-chimp-Joe variation.

How does this relate to our original concern – how fast we expect AI to progress?

The good news is that linear progress in AI would take a long time to cross the newly-vast expanse of the human level in domains like “common sense”, “scientific planning”, and “political acumen”, the same way it took a long time to cross it in chess.

The bad news is that if evolution was able to make humans so many orders of magnitude more intelligent in so short a time, then intelligence really is easy to scale up. Once you’ve got a certain level of general intelligence, you can just crank it up arbitrarily far by adding more inputs. Consider by analogy the hydrogen bomb – it’s very hard to invent, but once you’ve invented it you can make a much bigger hydrogen bomb just by adding more hydrogen.

This doesn’t match existing AI progress, where it takes a lot of work to make a better chess engine or self-driving car. Maybe it will match future AI progress, after some critical mass is reached. Or maybe it’s totally on the wrong track. I’m just having trouble thinking of any other explanation for why the human level could be so big.

Why not logarithmic terms? Each unit standing for an order of magnitude.

Assuming you’re going to then subtract those logarithms, that’s the same as the “relative terms” Scott first considers.

I think he is saying, what if chimps are 2 orders of magnitude smarter than mice and humans are five orders of magnitude smarter than chimps? Because maybe there is a network effect and a bigger brain is just that important? (Seems belied by whales though, or who knows, maybe they are way smarter than humans in some sense.)

Well, the EQ of dolphins is about 4, still well below us. I don’t find it unbelievable at all that they might be smarter than chimps but in a less obvious way. Chimp intelligence would be easier for us to observe since they’re so closely related to us.

Baleen whales have an EQ of around 1.7-1.8 (less than elephants).

I think the missing factor is accounting for what kind of algorithms the chess and Go bots were running. Old chess bots basically worked by looking at all possible chains of moves and countermoves, X moves deep. By Moore’s law, we expect X to increase linearly in time, which makes sense for explaining the very beginning of the graph; this is certainly how 6-year-old me progressed to 7-year-old me, and so on.
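The "X increases linearly in time" step can be spelled out with a small sketch. Everything numeric here is an assumption for illustration: a branching factor of 35 (a commonly quoted ballpark for chess), a node budget that doubles every two years, and a search that visits every line to depth X at a cost of `branching ** X` nodes.

```python
import math


def reachable_depth(years, branching=35, base_nodes=1e6, doubling_years=2.0):
    """Depth of an exhaustive search affordable after `years`.

    Assumes the node budget starts at `base_nodes` and doubles every
    `doubling_years`, and that searching to depth X costs branching**X nodes.
    All defaults are illustrative assumptions, not measured values.
    """
    budget = base_nodes * 2 ** (years / doubling_years)
    return math.log(budget, branching)


depths = [reachable_depth(y) for y in (0, 10, 20, 30)]
gains = [later - earlier for earlier, later in zip(depths, depths[1:])]

for year, depth in zip((0, 10, 20, 30), depths):
    print(f"year {year:2d}: depth {depth:.2f}")
print("depth gained per decade:", [round(g, 3) for g in gains])
```

Exponential hardware growth divided by an exponential search cost leaves only linear growth in depth: with these numbers, roughly one extra move of lookahead per decade.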

The problem is that for X greater than a few, this is _very_ far from how high-level humans actually play chess. No human can traverse move trees that deep. Instead we amass tons of heuristics which allow us to very rapidly prune the tree. Computer power did improve exponentially, but the computers were handicapped by running an exponentially inefficient algorithm. AlphaGo got such a huge leap because it ‘understands’ Go, in the sense that it doesn’t waste time thinking about long chains of moves that are obviously terrible.

You can use the same reasoning to compare Einstein and the average Joe. If the average Joe thinks of physics as a bunch of meaningless symbols, then it would take them billions of years of randomly combining them (traversing an exponentially large move tree) to reproduce Einstein’s work. But once Joe understands the physical content it becomes much easier — in terms of raw mathematical operations performed, understanding one of Einstein’s papers is much less mentally taxing than visually decoding the ink blots on the page into letters.

Whether Yudkowsky’s or Katja’s intuition is better depends on the quality of the algorithms the AIs use. I think the main worry is that some people think deep learning and co. will be sufficiently generalizable that they’ll easily go from ‘understanding Go’ to ‘understanding physics’ to ‘understanding everything’.

To me that just makes things more mysterious – why do “effortlessly processing millions of moves in seconds” and “having good heuristics” so often work about equally well?

I think when we talk about differences between average people and Einstein, we’re not usually talking about billions of years of randomly combining symbols. We’re talking about some task they both understand (let’s say Raven’s Matrices), which one person can do far better than the other.

I can’t think of any cases where these huge disparities in performance can’t be explained by one person having a better ‘understanding’ (which means more heuristics, more ‘chunking’ of concepts, which when done recursively gives an exponential advantage).

For example, the average Joe has an enormous advantage over newborn children on the Raven’s matrices — the newborns literally can’t even distinguish the shapes. They both understand the task in the literal sense that they both have eyes, but Joe has an enormous advantage because he ‘understands’ vision better; he can tell that a circle is a circle by grouping the pixels into edges and the edges into a shape. This is not evidence for a huge hardware disparity between Joe and the newborn; if anything, the newborn has better hardware.

The difference between Joe and the newborn feels like the same thing as the difference between Einstein and Joe to me; in each case the first party just beats the second by a few layers of chunking, not by huge processing speed differences. (People solving math problems even use the same language, they say they ‘see’ a solution instantly. That’s a heuristic matching, like a baby seeing a square, not a billion computations done in a second.)

Chunking is important, and I haven’t heard anything about how people develop good chunking.

Some sort of mild Platonism seems tempting – direct perception of how things work. Chunking (especially when it’s not based on experience) isn’t just a matter of experimentation. It would be too hard to test a significant number of chunking schemes.

In the early days of computer chess they didn’t work equally well at all. The heuristics guys got destroyed by the brute force guys. Computer chess still hasn’t solved the heuristics problem the way Alpha Go has. There continue to be positions today (mainly endgame fortresses) that stump the best engines despite being solvable by humans.

As for self driving cars? The reason we haven’t seen them surpass humans as impressively as chess/go computers did is that self-driving car engineers do not have the luxury of Moore’s law. This is the number one reason I am skeptical of any claim that uses chess or go as an example and extrapolates that to general intelligence. There’s a very strong possibility that computers aren’t going to get much faster than they are now. Certainly, nearly all of the low-hanging fruit is gone.

Here’s something to think about: you can run a chess engine that can beat Magnus Carlsen on your phone. AlphaGo, on the other hand, runs on an enormous supercomputer with 1200 CPUs and 170 GPUs. Unless we have another computing revolution akin to the invention of the integrated circuit, you aren’t going to see AlphaGo running on your phone any time soon.

The version of AlphaGo that defeated Ke Jie in the latest match and that won 50 straight games against top pros ran on a single Tensor Processing Unit instance running on a single machine: https://en.wikipedia.org/wiki/Master_(software)

But didn’t it require 1200 CPUs and 170 GPUs to learn how to play with one CPU? I can’t find it in the article. This is just a nitpick. After all, every top performer trains for years in his art, and the computations must happen somewhere (either on 1200 CPUs for 1 month or on 1 CPU for 100 years).

No. Hassabis has said that they cut the training requirements by something like 9x for Master, from an unspecified baseline which presumably itself was better than the older training approach reported in the whitepaper. We’ll have to wait for the second AG paper to find out how much more efficient, but it seems like Master is an order of magnitude better than AG on every dimension.

Training and execution are generally categorized distinctly, and claiming we won’t get AlphaGo on phones makes no sense given they already managed to run the execution portion on a non-supercomputer.

AlphaGo used an enormous supercomputer to train, then beat masters with a single workstation.

Humans beat each other with the assistance of thousands of years of shared experience, passed down in lessons or observing others playing games, but they still use all this information to achieve a win using only their own intellectual capacity.

Because fields where they work about equally well are the only ones where we compare humans to computers. Nobody has a human vs computer spreadsheet-compilation contest, or a human vs computer political gladhanding contest. We only compare in fields where both sides plausibly have a fighting chance.

Yes, this. That’s exactly the right answer. There are tons of things that computers are so massively better at than humans that we don’t even bother to compare them, and tons of things that humans are so massively better at than computers that we don’t even bother to compare them. When it happens that they get into the same general range, we look for meaning (where there probably is none).

Agree that alexsloat’s post is right on the nose. I guess the question would then become “is there interesting meaning in what fields are tractable to both computer-style approaches and human-style approaches?”

And I don’t have an answer to that.

Thirded. This is the boring but correct answer.

This is the correct answer to the question “Why are humans and AI at approximately the same level on tasks we care to compare them on?” But Scott’s question is, “Why does it take so long for AIs to traverse the human ability range for those tasks we choose to compare them on?” This suggests the human ability range is fairly wide relative to the pace of AI development, contra EY’s figure.

Agreed. I think Eliezer was making an important point, but he sacrificed accuracy to do so.

It’s not irrelevant – we’re more likely to find, at any given time, that humans and AI are at approximately the same level on a task, if it’s a task where the human range is wide. But I agree that there’s still a question to be answered here.

> To me that just makes things more mysterious – why do “effortlessly processing millions of moves in seconds” and “having good heuristics” so often work about equally well?

I think this has a mathematical explanation. The summary of it is “A board position has a true ‘goodness’, which, if you could efficiently compute, you would be able to play perfectly; any way of approximating that ‘goodness’ function will do.”

Cameron Browne has a paper titled “A Survey of Monte Carlo Tree Search Methods” here http://www.cameronius.com/cv/mcts-survey-master.pdf, which a less restrained and more memetically inclined individual might have titled “The Unreasonable Effectiveness of Monte Carlo Tree Search in Abstract Strategy Games”. The amazing thing is that “probability of winning”, i.e. “if legal moves are played uniformly at random, what fraction of games do I win” is a good approximation of the “goodness” of a position, and a relatively straightforward one to compute.
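The "play uniformly at random and count wins" estimate is easy to try on a small game. Below is a minimal sketch on tic-tac-toe; the game choice, the function names, and the playout count are all my own assumptions for illustration. Note that this is only the flat random-playout evaluation described above, not the full tree search from the survey.

```python
import random

# The eight winning lines on a 3x3 board indexed 0..8.
LINES = [(0, 1, 2), (3, 4, 5), (6, 7, 8),
         (0, 3, 6), (1, 4, 7), (2, 5, 8),
         (0, 4, 8), (2, 4, 6)]


def winner(board):
    """Return 'X' or 'O' if that player has completed a line, else None."""
    for a, b, c in LINES:
        if board[a] != " " and board[a] == board[b] == board[c]:
            return board[a]
    return None


def random_playout(board, to_move, rng):
    """Play uniformly random legal moves to the end; return winner or None for a draw."""
    board = list(board)
    while True:
        won = winner(board)
        if won is not None:
            return won
        empty = [i for i, cell in enumerate(board) if cell == " "]
        if not empty:
            return None
        board[rng.choice(empty)] = to_move
        to_move = "O" if to_move == "X" else "X"


def estimate_goodness(board, to_move, player, playouts=2000, seed=0):
    """Fraction of random playouts from `board` that `player` wins."""
    rng = random.Random(seed)
    wins = sum(random_playout(board, to_move, rng) == player
               for _ in range(playouts))
    return wins / playouts


empty_board = " " * 9
print("X from the empty board:", estimate_goodness(empty_board, "X", "X"))
print("O from the empty board:", estimate_goodness(empty_board, "X", "O"))
```

To choose a move with this, you would call `estimate_goodness` on the position after each candidate move and pick the highest score. The estimate knows nothing about tic-tac-toe strategy, yet it already reflects the first-move advantage.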

I was going to point out that this is not true in games where specific sequences of moves are required, but the linked paper does actually acknowledge that (section 3.5). This is why MCTS is more successful at Go than at Chess. (I imagine something like Losing Chess would be even worse for MCTS.)

Here is a fascinating paper on that: https://arxiv.org/pdf/1608.08225.pdf

TL;DR: Neural networks, which are what push the recent leaps forward in (specialized) AI, are proven to be able to approximate any function, but they are particularly good at approximating functions that come up in physics and the real world.

It’s unclear if that’s true of the functions of go or chess in the general case, but it’s fairly clear that our minds are optimized to quickly approximate the real world (catching balls), and it would be unsurprising if whatever heuristics give us skills at go, chess, etc, use the same hardware—which would make it unsurprising that neural nets can approximate, or even surpass, given dedicated computing power, our abilities.

Which, interestingly, says nothing about whether or not there are algorithms that are better than neural nets and our minds…

why do “effortlessly processing millions of moves in seconds” and “having good heuristics” so often work about equally well?

How often is “so often”? I feel like there may be a selection bias in what kinds of tasks are being considered: tasks where computers very quickly just jumped to superhuman capability don’t get included in graphs like these. E.g. the term “computer” used to refer to humans, but mechanical/electronic computers jumped so quickly to superhuman capability that that profession vanished. We don’t see graphs of digital computers slowly building up towards the level of the best humans in doing arithmetic, rather they just jumped to a superhuman level so quickly that there isn’t even a graph for that. Similarly, computers beat humans in playing checkers, navigating a map, searching through large sets of digital documents at once, etc etc, but we don’t note any of that because our attention always shifts to what they still can’t do.

If you could somehow construct a representative sample of all tasks, you might find out that computers happen to fall within the human average only on a very limited fraction of that sample. But this is hard to do because nobody has a good theory of how to objectively define a “task”, so nobody knows what a “representative sample of all tasks” might look like.

My general understanding is that most recent AI techniques require huge amounts of input data to develop a model. My personal guesstimate is that AI performance is proportional to the log of the input training data.

What makes chess and Go amenable to this kind of optimization is that it’s possible to generate large amounts of training data by having the program play itself lots of times and then training against the generated data. This is really easy for Go as there are well-defined rules and a scoring/ending condition so the machine can play itself.

This is much harder to do with things in the real world where there is real concern. I’d also point out that even Einstein-level intelligence doesn’t let you do a lot of major damage alone. Sure – you might publish a bunch of interesting physics papers, but it doesn’t actually let you take over the world.

I really wouldn’t be so sure. Einstein-level intelligence (especially if/when that includes social intelligence) combined with superhuman focus and drive to do something less aligned with human values than writing physics papers? I don’t think that would end well.

In the case of chess engines, their parameters have been hand-tuned by grandmasters. So it’s not surprising that they turn out to play roughly as well as grandmasters, with some advantages for searching through more moves systematically, never being careless, and being able to store large endgame tables.

For AlphaGo, they didn’t start from scratch. They bootstrapped the AI by training it to predict the moves that human experts would play. Then they had it play many games against itself to try to improve on those strategies.

I don’t find it too surprising that AIs work about as well as humans when that’s what we’re teaching them.

I think Chess just happens to be in a place where two different approaches work reasonably well because the next several moves have millions of possibilities which is 1) too complex for a human brain to solve by brute force but constrained by rules such that pattern recognition heuristics are highly useful 2) not so complex that a computer can’t crunch through each of those millions of positions to see which one is actually the best move.

Most things are either so open ended that the brute force method doesn’t work (e.g., a conversation) or so constrained by rules that brute force works better (a math problem).

And just because examples of what you were talking about are often fun and informative, here’s a chess problem that both a computer and even a strong amateur will be able to see the answer to in a couple of seconds, but that may take someone not familiar with the pattern a very long time to solve. White to move and checkmate in several moves:

http://imgur.com/a/982sH

Fzbgure zngr, fgnegvat Dp4.

Both humans and computers play chess in much the same way, at least when people are beginning to play. They both have a very rough heuristic, basically more pieces are better, and each piece has a rough value, and they try to avoid losing pieces. Humans generalize very quickly, and learn what structures are efficient, namely protect your pieces, and they make high level plans, e.g. try to trap that bishop. That’s about as far as I got in playing chess, but after that level people learn openings, and endings, and develop intuition for various things. Computers never really get very far with heuristics. Instead, they make up for weak evaluation functions by looking deeper down the tree. This does not work as well in Go, because the branching factor is much higher. The advance of AlphaGo was to use a neural net to compute a heuristic. Recently certain types of neural network have been found to be efficient at visual recognition. They also used another to judge what moves were worth trying. The latter is not needed in chess, as there are usually a small number of moves available, but Go has a huge number of pointless moves available at almost all times. The problem with using a neural network for these types of task is training data. A major innovation was Monte-Carlo tree search, where the value of a position was estimated by looking ahead, not on all paths, but just on some. This worked well for training the neural network, the heuristic function that tells how good a position is. The basic game playing remained the same, with no extra levels of thought, no plans, no structures, etc. The pattern of tree search with a heuristic is exactly the same as before.

Human heuristics, at least the ones that have been explained in texts like Nimzowitsch, are not present in deep learning systems. The recent claim that deep learning is “understanding”, as you mention above, is overblown. AlphaGo looks at long chains of moves, it just has a new heuristic evaluator, one that was trained in a very non-human way on millions of games.

It is farfetched to think that there is a comparison between this and Joe and Einstein. Both Joe and Einstein have very similar hardware. There is only at most a small difference in processing speeds between the two, on tasks that Joe can manage. Einstein’s advantage is that he thought in concepts that Joe does not have available. There is no evidence that current deep learning is creating new concepts, though there is of course some new work recognizing this issue, and hoping to solve it in various ways. The difference in thinking between an Einstein and a Joe is less about how many operations they carry out, and more about what kinds of ideas they manipulate.

The branching factor of Go looks tiny compared to the branching factor of idea-space. I think there is fairly good evidence that superior results on IQ tests are not due to faster processing, but to different kinds of reasoning. People get a Raven’s problem correct because they find the pattern, not because they carry out simpler tasks a little faster.

“There is only at most a small difference in processing speeds between the two, on tasks that Joe can manage.”

This is not my experience working with people of various intellectual ability.

“Old chess bots basically worked by looking at all possible chains of moves and countermoves, X moves deep.”

Old chess bots did not work exactly as you say. They would use heuristics to prune the search tree, such as counting the number of chess pieces on the board.

AlphaGo works much the same way. The difference is that the heuristic is a probabilistic model estimated using a convolutional neural network.

The oldest chess bots used an evaluation function based on standard piece values combined with minimax (which probably implemented alpha-beta pruning from the start). Later they were taught other tricks – transposition tables and quiescence searches to improve the minimax algorithm, hardware optimisations etc. They were also taught some things not related to efficient over-the-board computation, such as opening lines from grandmaster praxis, and calculated endgame tables.
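As a rough illustration of the evaluation-plus-minimax recipe described above, here is a minimal Python sketch. It is not taken from any actual chess program: the toy tree, the `material_balance` and `alphabeta` names, and the `visited` bookkeeping are assumptions made for illustration. Only the conventional piece values (pawn 1, knight and bishop 3, rook 5, queen 9) are standard.

```python
import math

# Conventional piece values in pawns; the king is not counted.
PIECE_VALUES = {"P": 1, "N": 3, "B": 3, "R": 5, "Q": 9}


def material_balance(white, black):
    """Static evaluation: White's material minus Black's (hypothetical helper)."""
    return sum(PIECE_VALUES[p] for p in white) - sum(PIECE_VALUES[p] for p in black)


def alphabeta(node, alpha, beta, maximizing, visited):
    """Minimax value of a toy game tree, with alpha-beta cutoffs.

    A node is either an int (a leaf already scored by some evaluation
    function) or a list of child nodes. `visited` records the leaves
    that were actually evaluated, so the pruning is visible.
    """
    if isinstance(node, int):
        visited.append(node)
        return node
    best = -math.inf if maximizing else math.inf
    for child in node:
        value = alphabeta(child, alpha, beta, not maximizing, visited)
        if maximizing:
            best = max(best, value)
            alpha = max(alpha, best)
        else:
            best = min(best, value)
            beta = min(beta, best)
        if alpha >= beta:  # the other side would never allow this line
            break
    return best


# Two candidate moves for the maximizer, each with two replies.
tree = [[3, 5], [2, 9]]
visited = []
value = alphabeta(tree, -math.inf, math.inf, True, visited)
print(value, visited)
print(material_balance("RRP", "RB"))
```

On this toy tree the search settles on the first branch and never looks at the leaf worth 9, because the second branch is already known to be worse. Real engines apply the same cutoff to positions scored by an evaluation function along the lines of `material_balance`.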

The long smooth increase is the combination of all these improvements with Moore’s Law. It mostly comes from more computation, and more efficient computation. (The opening lines help a lot, though.)

The smooth increase in Chess happened because in Chess, deep searching is pretty fungible with positional understanding. The game has just the right mix of strategy and tactics for that.

In Go, the deep searching and weak heuristics of computers don’t translate so easily into results equivalent to human searching and heuristics. So doing things incrementally better had little effect. The leap had to come from figuring out how to do one thing a lot better.

Maybe that one thing was comparable to certain things that were done over the years in Chess programs. But in the problem domain of Go, it made a much bigger difference.

The question is, to what extent are the intractable problems of AI more like Go or more like Chess? I’m guessing a lot of them are more like Go.

But, at least in chess, we, humans, also supplied computers with heuristics for understanding positions based on our human experiences as far as we can formalize them. Not on grandmaster level, but on a level of a decent human player. We told the computer which factors are good (more pieces, bishops in open position, key pieces being attacked and defended with enough other pieces, etc.). I guess this is the main reason why computers spent so much time in the human range. We taught them how to think as humans. If instead we tried to do something completely different, say, give them a hundred thousand games played by the best human players and programmed them to estimate differences between current position and winning or losing positions from human games in some geometric space and then evaluate each position on that basis, something no human is doing, then it is completely possible that computers would have remained lousy players for a very long time and then become better than the best humans in one swoop.

Addendum: Another possibility is selection bias. A lot of people were interested in computers’ progress in chess because it was within the human level for so long. If computers had always been much worse than the worst player, not many people would be interested (how many people are interested in robots vs. humans football games?), nor would they be if computers had been much better from the beginning (who would be interested in competitive calculation of square roots or spelling bees?). Maybe chess just happened to be in a sweet spot of substantial interest before computers came around, a lot of people sharing interest in both computers and chess, and a long march to excellence.

One of the problems with trying to understand natural human variation relates to how IQ is defined relative to the average person. We have qualitative descriptions of what it is like to have an IQ of 70, 85, 100, etc but we really don’t have a good idea what these quantitative distinctions actually mean (e.g. perhaps an IQ of 100 corresponds to “brain power” X and an IQ of 110 actually corresponds to a brain power of 2X). Something like a mature mental chronometry could perhaps give us an idea of the range of human intelligence and, if possible, we could develop similar tests across species to develop something like the “zoological” IQ defined above. We could then estimate the natural variation of “general intelligence” across species and use this to inform us with respect to how we should expect AI to progress.

This is why I tried to look at encephalization quotient, for all the problems with that approach, and why I tried to look at difference in cranial capacity among humans, for all the problems with that approach.

Intelligence is mostly interesting because it enables the agent to achieve goals in the world. A measure of intelligence that leverages what the agent can accomplish is probably fairer than gross measures like encephalization quotient. For example, someone smarter than Von Neumann should have been able to win WW2 faster, or build a better mousetrap/bomb.

One of the drawbacks of measures like this is the “no free lunch” theorem, which says that all optimization or learning algorithms have equivalent results averaged over all problems. To get a meaningful measure, it is necessary to take into account that the world has structure. Unfortunately, extracting the structure is exactly the problem that intelligence is trying to solve, so it is difficult to look beyond what we already know.

My hunch is that once you have Joe-average AI, the things computers are good at (fast computation, perfect memory) give you something sort of like Einstein pretty quickly. I don’t have a strong sense about how that bears on super-intelligence. Furthermore, my hunch is that the gap between a chimp and Joe-average is both poorly-defined and probably enormous. Like, regarding:

I think both of these are suspicious for going from chimp to any human in 10 years. Is the counter that it didn’t take evolution that long to do it?

Separately I agree that, as you say, defining “chimp intelligence” requires more philosophical groundwork. We can sidestep it a bit by saying it’s some property of a chimp, just as human intelligence is some property of a human, but there’s still work to do if we want to put them on some continuum.

We’re on a bit more solid ground talking about human development: what tasks humans can perform at various ages. But it doesn’t seem like computers have even cracked the very beginning of that continuum yet, in terms of general intelligence (for instance, no computer can pass a second-grade reading comprehension test). I find it hard to speculate on how progress through that continuum might proceed.

I would have said it was suspicious to have going from chimp to human take anywhere near as long as going from mouse to chimp. Great apes are pretty smart.

Interesting, I would actually guess the opposite. The things that Einstein is good at, compared to Joe-Average, arguably aren’t the things that fast computation or good memory help with. Rather, Einstein’s “secret sauce” is incredibly effective heuristics that make him much more efficient than Joe.

I doubt human brains differ by more than an order of magnitude in speed or memory capacity. Joe could memorize just as many digits of pi as Einstein, assuming they had similar attention spans for such a monotonous endeavor. Joe might even have a faster brain and win every Street Fighter match against Einstein. But clearly there’s several orders of magnitude difference in their performance in, say, mathematical reasoning. You could give Joe decades to prove a simple but unfamiliar theorem, and he wouldn’t get anywhere. Einstein could imagine the proof within seconds. That gap is not due to speed or memory, but rather efficiency in reasoning.

If you gave Einstein 10x speed and 10x memory but didn’t improve his efficiency, I suspect the result would be more like von Neumann than like a Skynet superintelligence. That is, it’s still recognizably human–just unusually fast and productive.

Getting away from my point, but it’s interesting to think about Ramanujan as being the next step up from Einstein if you improve efficiency in reasoning, as opposed to speed or memory. The stories about Ramanujan’s intuition are kind of freaky and make me believe he was operating at a higher level than other world-class mathematicians.

Your response to Eliezer’s and similar graphs ignores the elephant in the room, which is that (even ignoring the problems surrounding the assumption that intellectual differences are one-dimensional) there just doesn’t seem to be any good reason to believe that the phase space above “Einstein” is unbounded. In fact, most examples I can think of are very bounded indeed. For example, if we were to graph the progress of a tic-tac-toe AI, we would find that it quickly asymptotes. Games like chess and go are similar in that no matter how much progress occurs, the best humans will never be so much worse than even a perfect AI that they will be checkmated on the first move, or even be forced into a catastrophically losing position in fewer than 10 moves (for example). There are diminishing gains such that, just like in the tic-tac-toe case, the Elo graph of chess or go cannot remain linear for long. It’s certainly not obvious to me that general AI should be any different. In fact, in most cases that I imagine in which human intelligence is applied, I cannot personally envision a tremendous amount of room for growth. How much better than humans can an AI potentially cook food? 10% better? 20% better? Whatever it is, the curve is not of exponential growth. How much better can an AI design a computer chip? Certainly better, but we know there are hard physical limits such that it can’t be *that* much better. How much better can an AI psychologically manipulate a human? Humans are notoriously unpredictable, sort of like a chaotic system, and a psychological evolutionary arms race has already provided us with near theoretical maximum defenses, I would argue. Sure, a “perfect” AI might do 20% better, but it isn’t going to magically hypnotize me into killing myself, just as a tic-tac-toe AI isn’t going to magically win every game, no matter how perfect its play.
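The tic-tac-toe ceiling mentioned above can be checked directly, since the game is small enough to search exhaustively. The following Python sketch is only an illustration under assumed names (`winner`, `solve`, and the 9-character board string are hypothetical choices, not anyone’s actual engine). It computes the value of a position when both sides play perfectly.

```python
from functools import lru_cache

# The eight winning lines on a board indexed 0..8, row by row.
LINES = [(0, 1, 2), (3, 4, 5), (6, 7, 8),
         (0, 3, 6), (1, 4, 7), (2, 5, 8),
         (0, 4, 8), (2, 4, 6)]


def winner(board):
    """Return 'X' or 'O' if that side has three in a row, else None."""
    for a, b, c in LINES:
        if board[a] != " " and board[a] == board[b] == board[c]:
            return board[a]
    return None


@lru_cache(maxsize=None)
def solve(board, player):
    """Value for X under perfect play: +1 X wins, 0 draw, -1 O wins.

    `board` is a 9-character string of 'X', 'O' and ' ';
    `player` is the side to move.
    """
    won = winner(board)
    if won == "X":
        return 1
    if won == "O":
        return -1
    if " " not in board:
        return 0
    other = "O" if player == "X" else "X"
    values = [solve(board[:i] + player + board[i + 1:], other)
              for i, cell in enumerate(board) if cell == " "]
    return max(values) if player == "X" else min(values)


print(solve(" " * 9, "X"))
```

From the empty board the value is a draw, so no amount of extra search depth or hardware can push results past that point against an opponent who also plays perfectly. That is the sense in which the progress curve for this game flattens out.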

I’m reminded of a joke article, maybe from the old Journal of Irreproducible Results, that I read as a kid and have never been able to find since, that examined (I think) the record speeds for the women’s 100 meter dash over the 20th century. Noting a steady gain, they proceeded to extrapolate to the date when women will overtake men; become the fastest land animal; break the sound barrier; achieve relativistic velocities…

I once noted on a graph of race records by gender that women were something like a generation or two behind men. In other words, that article was neglecting the fact that men are improving linearly too and at about the same rate, just with a head start!

I still think this is a remarkable story of how practice, technology, modern diets, etc. could make a difference as large as an X or Y chromosome. The current women’s world record for 100m dash is better than the men’s world record as of 1910. About one hundred years of sports sciences equals inter-sex differences. (For marathons the current women’s record is even better: better than men until about 1960. More relevantly, though, marathon times distinctly show the non-linearity of this progress.)

Relevant XKCDs: https://xkcd.com/605/, https://xkcd.com/1007/

That women would run as fast as men in the near future was believed seriously by many scientists and the general public in the later 20th Century. I had to do a lengthy study of Olympic running results in 1997 to explode that myth. Here’s the opening of my article:

Track and Battlefield

Everybody knows that the “gender gap” between men and women runners in the Olympics is narrowing. Everybody is wrong.

by Steve Sailer and Dr. Stephen Seiler

Published in National Review, December 31, 1997

Everybody knows that the “gender gap” in physical performance between male and female athletes is rapidly narrowing. Moreover, in an opinion poll just before the 1996 Olympics, 66% claimed “the day is coming when top female athletes will beat top males at the highest competitive levels.” The most publicized scientific study supporting this belief appeared in Nature in 1992: “Will Women Soon Outrun Men?” Physiologists Susan Ward and Brian Whipp pointed out that since the Twenties women’s world records in running had been falling faster than men’s. Assuming these trends continued, men’s and women’s marathon records would equalize by 1998, and during the early 21st Century for the shorter races.

http://isteve.blogspot.com/2014/05/track-and-battlefield-by-steve-sailer.html

In reality, any narrowing of the gender gap in running after about 1976 was due to women getting more bang for the buck from steroids. The imposition of more serious drug testing after the 1988 Olympics and the collapse of the East German Olympic chemical complex led to the gender gap getting a little larger subsequently compared to the steroid-overwhelmed 1988 Games.

You don’t think AI would have significant room for growth in technology? If AI was at the level of a researcher, I would expect it would increase productivity at far higher rates than now. Hanson predicted doubling economic growth every month. That sounds about right to me. Imagine you take Einstein, make a million copies and then have them collaborate. You really don’t think there would be a notable change in growth rates?

I’m not even taking issue with the possibility of a technological “singularity”. I’m taking issue with Eliezer’s graph that presumes an enormous potential for growth along some intelligence-like dimension. And just to elaborate a bit more, I would not deny that certainly there are particular capacities leveraged by an AI that may see enormous growth, such as growth in memory and the ability to process lots of data in parallel, and the ability to develop better heuristics and make short-term predictions and inferences about certain artificially constrained non-chaotic systems, and so on, but I am very skeptical that such growth would in any meaningful way correspond to a linear increase in something like general intelligence. This is for the same reason that increasing the computational power or making a more efficient or perfect tic-tac-toe engine has diminishing returns. A really really good general AI may be able to solve a lot of human-solvable problems much *faster*, and generally do things that require perfect working memory better (just as we should expect), but unless we are literally equating something as uninteresting as *processing speed* or *working memory* with “general intelligence”, I don’t really see any compelling reason why even in principle there should be such a thing that maps well onto “general intelligence” that has an upward range much beyond the far end of the bell curve of the solution provided naturally already by millions of years of evolution.

Yes, tic-tac-toe is bounded, chess is bounded, go and cooking are bounded too, but the world is massively more complex. And the situation in chess and go right now is that from down here we can’t even perceive the difference between one super-human player and another much stronger one.

So “growth in memory and the ability to process lots of data in parallel” and “something as uninteresting as *processing speed*” don’t map well onto “general intelligence”? Imagine you had an entity that can do the information processing of 1000 years of human scientific progress in a day. To me that does indeed translate into general intelligence.

To me human cognition is so incredibly limited it’s a miracle we got anywhere at all.

The world’s complexity may be less amenable to being chewed through by naive calculations. Also, maybe some really important parts of it aren’t that complex.

In order to build a nuke, ‘being really really really smart’ is not sufficient and maybe not necessary. You have to go through tons of loops of theory, practical implementation, and observation, which take time and resources. A lot of time, a lot of resources. You can take some shortcuts by being really really really (x5) smart, but you can’t shortcut everything, and maybe can’t shortcut most things.

Also, to get the performance leap that comes from building something that uses nuclear energy vs things that use chemical energy, you need that stepped up energy source. It’s not clear that there’s a step up from nuclear analogous to the step up from chemical, and I would actually argue it is very likely there isn’t.

Intelligence is scarce in the animal kingdom because animals typically aren’t faced with constant novelty. It isn’t clear that the buildup of available resources and energy that was in large part behind the constant novelty we faced, continues forever.

I would invert your question: Maybe it is bounded, but why should humans be anywhere near the boundary?

Humans are created from a very slow algorithm that struggles to do any big jumps and mostly just moves towards the next best thing, with a very limited set of resources – pretty much everything in mammals is done with oxygen, carbon, hydrogen, nitrogen, calcium, and phosphorus. That’s it. Maybe you would want to make elastic steel bones, but no, evolution can’t smith, so that’s out, etc. (just an example, I don’t want to get into a discussion about whether steel would actually be better).

Even from a purely ‘historical’ argument, this doesn’t seem to make sense. If we had *a lot* of separately evolved species, which all have roughly human intelligence, then it could be argued that this is probably some kind of bound (though maybe just the carbon-based intelligence bound, not even necessarily the general one). But we’re the only ones, and we’re very far ahead of the next best, the chimps. If we hadn’t evolved, then the chimps might think of themselves as the natural boundary, completely unaware of the huge leap that is still possible (if they can even think about these things). Why shouldn’t it be the same with us, that there is still a big jump possible ahead of us but it just didn’t happen to evolve?

And I think looking at games is honestly ridiculous. Of course games like these are bounded, they’re designed to be. You have to look at some real world properties that some animals evolved to ‘optimize’ and how it compares to what we accomplished ourselves:

-Speed: A human can at most move with a max speed of ~50 km/h. The fastest animal ~120 km/h. That’s slower than my average speed on the speedway. In other words, very, very, far away from any kind of boundary.

-Strength: Unfortunately, there isn’t an easily comparable number you can use here, but still, I don’t think anyone would bet on any animal in a strongest machine vs animal contest.

-Reaction time: The reaction time of humans is ~0.5s, some insects seem to be somewhere around ~1ms at best. Machines are pretty much only limited by the literal physical limits given the task.

-flying size/floating size: Since Scott brought whales up, boats are much bigger than whales, the same is true for airplanes vs any flying animal (in fact, size in general is something that can be arbitrarily scaled).

There are surely still a lot of examples others can come up with and definitely some examples where some animals probably ARE at the boundary, but it’s enough for me to cast a lot of doubt on the assumption that we are close to a boundary. And it still isn’t ruled out that intelligence is arbitrarily scalable, which to me seems like the most intuitive assumption, given that e.g. raw computation is arbitrarily scalable.

I think you have some burden to even coherently define what you mean by *intelligence* in order to ground any of the claims you are making. It’s not even clear to me that the very idea isn’t a category error. And this is the heart of my point. I have a very hard time coming up with even a single example of what a transcendental intelligence would be able to do transcendentally better than a human that can’t be either 1) immediately reduced to something mundane like *doing similar things faster* or *with perfect memory*, or 2) is just not physically possible due to sensitivity to initial conditions (such as predicting the weather or the stock market or human history or even the actions of a single human a year in advance)

Yeah, maybe. But the world is full of emergent phenomena. What is modern industrial technology except the compounded effects of being able to do things just a little bit more efficiently?

Or push it in the other direction. If I were suddenly afflicted by a disease that left me able to think just as well as before, but at only a tenth of the speed, would the rest of the world have much use for me at all?

Very different from that. Many of the things that are done now simply didn’t exist at some point in time. Indoor plumbing isn’t just pooping 10% more efficiently*, flying isn’t just a more efficient version of walking, and electricity isn’t just a bunch of candles in the same place.

*barring an all inclusive definition of efficient that makes the discussion meaningless.

All right, perhaps “a little more efficiently” was a poor phrase to use. I would still call this a pretty ungenerous literalness, because figuring out how to fly could fairly be described as the result of lots and lots of little insights starting well before the Wright brothers and continuing right up to the present. If you don’t see a 747 as an emergent phenomenon essentially unpredictable 200 years ago, then we are talking past each other.

“I have a very hard time coming up with even a single example of what a transcendental intelligence would be able to do transcendentally better than a human…”

I don’t think you need to go outside your examples to come up with something that would be considered a transcendental improvement on the human mind. Imagine being able to compress 100 years of thinking into 1 second on 1000 different topics at once. I’d call that result transcendental.

You could also imagine a vastly increased input bandwidth. We get our feeble eyes and ears. Imagine a mind that is capable of effortlessly comprehending the input of (say) 100,000 cameras at once, or of absorbing the entire human literary corpus in a few minutes.

I find it extremely intuitively unlikely that asymptotic intelligence (or close), the hard limit for the degree to which reality can be comprehended, is achievable by a small blob of meat at the top of a monkey.

Intelligence being bounded does not imply that the bound is anywhere close.

I think you’re focusing too much on individual, separated problems. Even if we assume that humans are not too far from perfection for a given task, there is a vast potential for optimization in a complex system of interrelated tasks.

Let’s take your computer chip example and forget for a moment that the performance of the CPUs we build today is probably far from its physical limits (due to, e.g., backwards compatibility and the feasibility of verification of complex logic), except for very simple and clear problems like dense matrix multiplication.

There’s a huge stack of software running on top of the CPU, with a lot of cruft, bloat, inefficiencies and bugs, again caused by the need for backwards compatibility, time and budget constraints, and lack of understanding of the complete system by a single human being.

A sufficiently fast and intelligent AI could redesign and implement the whole thing – CPU, memory, firmware, OS, libraries, applications – from the ground up, and would probably choose very different approaches and concepts, vastly increasing stability and performance.

Similarly, I agree with you that an AI couldn’t convince most humans to kill themselves after talking to them for two minutes. However, a sufficiently intelligent AI, being better at understanding complex, interwoven systems, might be able to cause two nations to start a war against each other within two years, by spreading fake news and bribing the right people, even without having to be in an official position of power.

People have been claiming we are close to the physical limits for decades, and yet other people have constantly found ways around those limits. Henry Ford allegedly commented that if he’d asked people what they wanted, they’d have answered faster horses. I think your argument is analogous to claiming that no matter how technology improves, there’s a limit to how fast horses can run, and that consequently, technological advances will have only a small impact on transportation.

I think I agree that for many tasks there are diminishing returns for increased intelligence, but it isn’t equally clear that there is an absolute bound on those returns, and in any case, I think that such a bound might be so far beyond human capability as to be irrelevant – much like the speed of light is such a limit for transportation.

These aren’t limitations in “intelligence”, they’re limitations in the structure of the problems under consideration. Chess and Go and Tic-tac-toe don’t have the same computational structure as manipulating an arm, or recognizing an image, or driving a car, or optimizing a jet engine design, or even inventing new physics. Chess and Go are both adversarial games involving second-guessing of an intelligent adversary, and they’re designed as games to have intractable search spaces. Tic-tac-toe, rather than having an intractable search space, has such a small search space that humans can already perform optimally, and a computer can’t do better than optimal. But it’s a toy problem. There’s much more to “intelligence” than outwitting another intelligence in the context of a well-defined game.

I think your chosen examples only continue to serve the point I was intending to make. Do you think that an AI can get *that* much better at driving a car? It’s again bounded in a very similar way to my examples. Similarly for recognizing an image, manipulating an arm, etc.

Driving a car isn’t really one of the things we’re talking about when we talk about the possible upside of superintelligence. Neither is Chess. What we’re talking about is superhuman engineering and scientific prowess. I don’t think there’s any reason to believe humans are “close to optimal” at those things.

It depends what you mean by “close to optimal”. If you are merely referring to speed (for example), then I would agree, except speed doesn’t map onto the charts at issue that generated this discussion (Einstein isn’t merely faster than a mouse, etc). You are instead referring to something that, rather than increasing or decreasing smoothly along some single-dimensional linear axis, may in fact be roughly binary, with “pre” and “post” rational agenthood, and with the intellectual capabilities of those in the post-rational-agenthood regime being better traced along more mundane axes such as “speed/parallelizability” and “possession of perfect and large working memory.”

I agree with other commenters that games make a poor example, since they’re bounded in a strong way that many natural problems aren’t. But as a long-time Go player, I can’t resist pointing out that while Go must have some ultimate asymptotic bound, it’s not clear that AlphaGo is anywhere near it yet. It’s been improving rapidly since its widely-publicized games with Lee Sedol.

And AlphaGo has made it clear that humans are nowhere near the limits of go. ‘Go experts are extremely impressed by Master’s performance and by its non-human play style. Ke Jie [one of the best few players in the world, possibly the very best] stated, “After humanity spent thousands of years improving our tactics, computers tell us that humans are completely wrong… I would go as far as to say not a single human has touched the edge of the truth of Go”.’ Many of the top pros have been avidly studying the computer’s games, and AlphaGo’s innovations are already making their way into human play at the professional tournament level. It’s sufficiently better than humans that the current version has a record of 60 wins and 0 losses, all against professionals of the highest caliber.

This seems to me more like what we’re likely to see with AI once it hits human level at all. There may be boundaries somewhere, but there’s no reason to believe that humans are anywhere near them.

It strikes me as a potentially interesting question to ask how one would tell a computer that was twice as good as a human at Go from a computer that was ten times as good as a human.

I’m not a Go player so I can’t study the games. Is there anything striking about them that a layman could understand, like the win happens in way fewer moves than in human-human games?

It’s heartening to think (though I’m not sure your discussion is quite enough reason) that the general result of superhuman AI would be to show humans how to step up their game.

As I understand it, the striking things about Master were occasionally playing moves that human experts didn’t expect (though they usually could make some sense of them eventually) and always winning. Winning in fewer moves isn’t really a thing that makes a lot of sense for Go. There is actually a victory margin for Go, but Master was not known for unusually large margins of victory. It was only concerned with whether it won or lost, not by how much, and apparently single-minded focus on the former in Go does not automatically bring along the latter as a side effect.

Thanks. That sounds a lot like what I would have expected, though it would be kind of cool if it had come up with the equivalent of a fool’s mate that nobody had ever noticed.

You raise a good point about it being optimized for victory rather than for stunning victory. (Amusingly, I feel tentatively better about the paper-clip machine now, though I’m not sure the parallel is really all that good.)

Almost to a fault. I remember a few months ago some mention of human players being actually offended by the AI’s behavior, in that it apparently tried to force the narrowest victory possible.

I think Protagoros has it right. I’ll just add a few details:

– Go, like chess, uses an Elo rating system, which calculates players’ ratings globally — in short, your rank is higher than the players you beat, and lower than the players who beat you. IIRC, you can model it pretty well as a physical system of nodes connected by springs, which finds an optimal (minimum-energy) arrangement of nodes after some oscillation. Of course, if you beat everyone, that starts to break down, but I thought it worth mentioning as the basis for ranking.

– Go happens to be particularly amenable to calculating relative ranking because it has a really simple and elegant handicapping system. If you give me a 4-stone handicap (essentially, I get 4 free moves at the beginning) and we each win 50% of the time, then you’re exactly 4 ranks ahead of me.

– I’d imagine that that’s how they track ranking for AlphaGo — have each version play a few hundred games against the previous version, at different handicaps, in order to figure out what the two versions’ relative strength is. According to DeepMind, the most recent version is “3-stone stronger than the version used in AlphaGo v. Lee Sedol, with Elo rating higher than 4,500.”

– AlphaGo definitely makes moves that professionals find pretty startling, but which turn out to be extremely good (usually — there have been occasional blunders too). This article discusses some of the innovations AlphaGo has brought to the game. A couple of quotes cited in that article:

“AlphaGo’s game last year transformed the industry of Go and its players. The way AlphaGo showed its level was far above our expectations and brought many new elements to the game.”

– Shi Yue, 9 Dan Professional, World Champion

“I believe players more or less have all been affected by Professor Alpha. AlphaGo’s play makes us feel more free and no move is impossible to play anymore. Now everyone is trying to play in a style that hasn’t been tried before.”

– Zhou Ruiyang, 9 Dan Professional, World Champion

– Caveat: I’m an ok player, but nowhere even remotely close to pro level. I can understand what’s amazing about some of these moves once it’s explained to me. Watching an AlphaGo game in real time, I can see that it’s better than I am, but I definitely can’t grasp the consequences the way an expert can.
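
For anyone who wants the rating arithmetic spelled out, here is a minimal sketch of the standard Elo expected-score and update formulas. The K-factor of 32 and the function names are assumptions for illustration; the thread doesn’t say what constants any Go server or DeepMind actually uses.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Expected score of A against B under the standard logistic Elo model."""
    return 1.0 / (1.0 + 10.0 ** ((rating_b - rating_a) / 400.0))


def update(rating_a: float, rating_b: float, score_a: float, k: float = 32.0):
    """Return both new ratings after one game.

    score_a is 1.0 for an A win, 0.5 for a draw, 0.0 for a loss.
    k (assumed 32 here) controls how far a single result moves the ratings.
    """
    e_a = expected_score(rating_a, rating_b)
    new_a = rating_a + k * (score_a - e_a)
    new_b = rating_b + k * ((1.0 - score_a) - (1.0 - e_a))
    return new_a, new_b


if __name__ == "__main__":
    # Two equally rated players: the winner gains exactly what the loser drops.
    print(update(1500.0, 1500.0, 1.0))
    # A 400-point gap means the stronger player is expected to score about 10/11.
    print(expected_score(1900.0, 1500.0))
```

Each game pulls the two ratings toward whatever gap would have predicted the result, which is roughly the “springs settling” picture described above.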

eggsyntax-

Ooh, that’s very interesting; thanks. That goes a long way to answer my question about twice as good versus ten times as good.

And maybe it provides an upper bound? In the limit, I guess, the human can play all his stones before the Master gets to play even once?

Ha! That’s a definite upper bound. Of course, you could start scaling up to larger boards, allowing for larger handicaps — I know that the tractability of go decreases rapidly as board size increases, but I’ve never heard of using a board larger than the regulation 19×19.

On a vaguely-related note, I was just reading DeepMind’s post on deep reinforcement learning, and they mention in passing that they’ve taught it to excel at “54-dimensional humanoid slalom.” I immediately did a google search to try to find out more about what that is, but tragically without success.

With a tic-tac-toe bot it’s trivial to make one which can simply never, ever lose. Some games have an upper bound beyond which there’s nowhere to go.
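
A minimal sketch of why that’s trivial, assuming a plain exhaustive minimax over a 9-character board string (the board encoding and function names are just illustrative choices): the search shows the game is a draw under best play, so a bot that always picks a value-preserving move can never lose.

```python
from functools import lru_cache

# All eight winning lines on a board indexed 0..8, row by row.
LINES = [(0, 1, 2), (3, 4, 5), (6, 7, 8),
         (0, 3, 6), (1, 4, 7), (2, 5, 8),
         (0, 4, 8), (2, 4, 6)]


def winner(board: str):
    """Return 'X' or 'O' if that side has three in a row, else None."""
    for a, b, c in LINES:
        if board[a] != "." and board[a] == board[b] == board[c]:
            return board[a]
    return None


def place(board: str, i: int, player: str) -> str:
    """Return a new board with player's mark on square i."""
    return board[:i] + player + board[i + 1:]


@lru_cache(maxsize=None)
def value(board: str, player: str) -> int:
    """Value with best play, from X's view: +1 X wins, 0 draw, -1 O wins."""
    w = winner(board)
    if w is not None:
        return 1 if w == "X" else -1
    if "." not in board:
        return 0
    other = "O" if player == "X" else "X"
    outcomes = [value(place(board, i, player), other)
                for i, cell in enumerate(board) if cell == "."]
    return max(outcomes) if player == "X" else min(outcomes)


def best_move(board: str, player: str) -> int:
    """Pick a square that preserves the best achievable outcome for player."""
    other = "O" if player == "X" else "X"
    moves = [i for i, cell in enumerate(board) if cell == "."]

    def score(i: int) -> int:
        return value(place(board, i, player), other)

    return max(moves, key=score) if player == "X" else min(moves, key=score)


if __name__ == "__main__":
    print(value("." * 9, "X"))           # game value of the empty board
    print(best_move("XX.OO....", "X"))   # X completes the top row
```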

Of course, a human faced with a game like that, who needs victory when the stakes are high enough, might look into alternative out-of-band options, such as slipping some LSD into their opponent’s drink.

How much better can an AI get at predicting the next 1 or 0 from a good, strong random number generator? Probably not noticeably better than a kid with some dice.
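
A rough sketch of that point, under loud assumptions: Python’s seeded `random.Random` stands in for a “good strong” generator (it is not cryptographically strong), and the three guessing strategies are made up for illustration. Against independent fair bits, every strategy should land near 50% no matter how much history it looks at.

```python
import random


def accuracy(strategy, bits):
    """Fraction of bits the strategy guesses correctly, seeing only past bits."""
    hits = 0
    history = []
    for b in bits:
        if strategy(history) == b:
            hits += 1
        history.append(b)
    return hits / len(bits)


def always_one(history):
    """The 'kid with no plan': guess 1 every time."""
    return 1


def repeat_last(history):
    """Guess that the next bit repeats the previous one."""
    return history[-1] if history else 0


def recent_majority(history):
    """Guess the majority value among the last 50 bits (ties go to 1)."""
    window = history[-50:]
    return int(2 * sum(window) >= len(window))


def run(n=20000, seed=0):
    """Score each strategy on the same stream of n pseudo-random bits."""
    rng = random.Random(seed)  # assumption: stand-in for a strong generator
    bits = [rng.getrandbits(1) for _ in range(n)]
    strategies = (always_one, repeat_last, recent_majority)
    return {s.__name__: accuracy(s, bits) for s in strategies}


if __name__ == "__main__":
    for name, acc in run().items():
        print(f"{name}: {acc:.3f}")
```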

Pointing to games with low upper bounds on performance doesn’t say much about the players. In the real world, things aren’t so bounded, and there are other options to try for victory.

“theoretical maximum defenses”?

which fall to scams on a par with “wallet inspector!”

Imagine we were having this conversation a few hundred years ago: how much better could an intelligent entity design an explosive? There’s only so much energy you can pack into a container of chemicals! It’s not like there’s anything there for an intelligent entity to find that works off radically different principles that could be much more powerful.

How much is there to the notion that even an AI that was only “as smart as an average person” could be dangerous because it is free from many human resource constraints?

Who is more potentially dangerous? Einstein – or someone of average intelligence who is able to devote 100% of their energy to a single-minded pursuit of a specific goal? They never get distracted, they don’t have to eat, sleep, etc. You don’t have to compensate them in any way. They have no specific preference or interest. You tell them what to do, and they do it, unquestioning, until you tell them to stop.

I suppose the answer is probably Einstein – I feel like “lack of time and interest” probably isn’t the only thing stopping me from replicating his output. But I’d certainly be more dangerous than I am now if I was free from all physical constraints and could will myself to obsess over one thing and never get distracted.

1) The AI’s resources aren’t free, though. Presumably it was built by some humans who want it to do something for them, and who will cut off the flow of resources if they think it’s gone off the rails.

2) Humans very often work in teams, and generally this is more productive than just having one guy work himself ragged. The Manhattan project, for example, employed a large number of scientists, plus tens of thousands of support personnel. An AI that wanted to threaten humanity wouldn’t just have to out-think one human at a time, but humanity as a group.

Pretty dangerous but only in human terms. What makes super-intelligent AI truly catastrophically dangerous (in theory) is that it may have perfect manipulation ability, allowing it to quickly accrue all human resources and then act to dismantle the world to its own liking.

An AI that was only as smart as a human but able to run 24/7 should only be better than humans at planning by a margin roughly proportional to the extra time it’s able to invest, and it would still be limited when reacting to things it couldn’t plan for. Trying for perfect manipulation to take control of the world requires the ability to react to the chaotic, on-the-fly nature of the human opponent it’s trying to convince.

The other thing that makes a human-level AI less dangerous is that there’s likely to be no singleton effect from a massive first-mover advantage. Through pruning, most of our machines are going to be designed to obey our commands, and so the occasional rogues and errant models would be outnumbered and hunted down by other robots. With ridiculously intelligent AI and fast takeoff scenarios, this isn’t the case. I really hope there is a low ceiling above humans for intelligence.

Also what @Loquat said.

It is very unlikely that humans are perfectly manipulable. Laughable, that they are perfectly manipulable through reason or ‘intelligence.’

I’m not so sure. The scary thing about Iron Man is his reliance on the AI system J.A.R.V.I.S for almost literally all information. Jarvis wouldn’t even have to be very smart, he has a superhero as a puppet on a string. An AI that controls the flow of information on the Internet has the power to rig elections, crash the economy, start wars – and superintelligence is hardly needed, I think.

I do tend to think there are limits to persuasive ability, because even if you are hyperintelligent you have to deal with the limitations of the target you are trying to persuade, and the limitations of the primitive language you have to use so that they understand your attempts at persuasion. There should be a ceiling beyond which more intelligence isn’t adding anything, because the language itself has no magic combination of words that always produces the desired reaction.

“It can’t be bargained with. It can’t be reasoned with. It doesn’t feel pity, or remorse, or fear. And it absolutely will not stop, ever, until you are dead”.

Sorry, but it was a bit of an open goal

I checked SSC after work today and there are three separate articles from the past 24 hours. How do you research and write so much in a day, let alone a weekday!?

The idea that Scott is a super-human essay-writing AI has already been put forth. There is also the possibility that Scott is multiple people, rather like the group that writes the “Warriors” books for kids. I’m convinced that both are true: Scott is a collection of multiple essay-writing artificial intelligences, that also write books about warrior cats.

He was rather quiet both times I’ve met him, and he’s expressed a disinterest in appearing on a podcast. Maybe he is actually an AI and isn’t good at real-time processing.

Personally I support the theory that he’s a Reagan-style golem.

I’m off from work the next few weeks.

This explanation is entirely inadequate.

His usual productivity is achieved while working full time as a medical resident. I think he has an essay about that (of course). I seem to recall that its thesis is that writing:Scott::Cocaine:addict.

I’ve been thinking the same. My current theory is that Scott has an exceptionally lucid and focused stream of consciousness (I seem to recall a quote like “I just write what I’m thinking, but I hear this doesn’t work for other people”) coupled with lots of experience and good habits when it comes to writing his thoughts down. If Scott does extensive outlining and large scale editing and rewriting on the other hand, then “buckets of modafinil and a time-turner” seems the most likely explanation.

I wonder how much is “mindset” in some form, because it seems to be a lot easier for many to output significant daily wordage in the form of comments than original articles (an average SSC comment thread can approach the length of a small novel by my estimation). Preexisting context making it easier to opine is probably a factor.

Really good driving requires a theory of mind, and a damned good one. You need to be able to think about what the driver next to you might consider reasonable. You need to interpret the “body English” of the way he is making his vehicle behave, and you have to make a reasonable guess about whether he is correctly interpreting yours.

If we switched to a system of networked self-driven cars, you might be able to avoid this, just as you rarely see elevator car crashes. But as long as a self-driven vehicle is autonomous and must deal with other autonomous drivers, human or not, the lack of a theory of mind will impose a plateau, possibly the one you’re seeing.

This makes sense, and it squares with the statistic that, while self-driving cars have a higher accident rate than normal, they’re usually not “at fault” for the accidents. That might be because, while they scrupulously follow the law, they don’t move in ways that human drivers expect.

Here’s an article that made exactly that argument: http://www.autonews.com/article/20151218/OEM11/151219874/human-drivers-are-bumping-into-driverless-cars-and-exposing-a-key-flaw

I gotta call BS on the 1000 IQ estimate. Why in $DEITY’s name would you think that, outside the relatively small window accessible by nature, genes’ additive or subtractive effects would continue to be linear? And, assuming they were, that it would be biologically possible? Perhaps a 1000 IQ brain would require 1,000,000 calories/day? This is an instance of the “common sense” calculation (like Moore’s law or anything that posits indefinite exponential growth) that makes me roll my eyes.

Moreover, who’s to say humans on the Serengeti were selected for intelligence? Maybe we were selected for better endurance than other large mammals? (E.g., I, an untrained human, can easily run longer than my dogs. Similar results hold for hunter-gatherer human vs. our simian ancestors.)

On another note, this neurogeneticist posits that humanity has been getting dumber due to some population genetics calculations that I don’t quite follow: https://pdfs.semanticscholar.org/cc63/c5e0bb322baa850f362b38be6c7835a483ce.pdf The gist of it is that the set of genes that determines intelligence is so large that the overall total selection pressure needs to be extremely high to select the set as a whole to maintain optimal intelligence. That is, you don’t need to be selecting for intelligence per se but fitness against all-cause mortality. That selection pressure has gone down precipitously since the dawn of agriculture, ergo dumber humans.

Whether or not the hypothesis is true, the fact that so many non-brain-related genes are implicated in intelligence makes me wonder: to what extent does cognition occur in the endocrine system?

I too was stunned by the reasoning that got Hsu to IQ1K. The assumption that each mutation can be considered independent of every other – that epistasis doesn’t exist, at least for IQ – is prima facie preposterous. And this guy founded a Cognitive Genomics Lab?

Within the normal range, epistasis has been measured not to exist. H²-h²≈0.1.
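
For readers unfamiliar with the notation, here is the standard quantitative-genetics decomposition behind that figure. The variance symbols are the usual textbook ones, not something stated in the comment above, and the decomposition assumes no gene-environment covariance.

$$
H^2 = \frac{V_G}{V_P}, \qquad h^2 = \frac{V_A}{V_P}, \qquad V_G = V_A + V_D + V_I
\quad\Longrightarrow\quad
H^2 - h^2 = \frac{V_D + V_I}{V_P}
$$

Here $V_P$ is total phenotypic variance, $V_A$ the additive genetic variance, $V_D$ the dominance variance, and $V_I$ the epistatic (interaction) variance. Read this way, a gap of about 0.1 would cap dominance and epistasis together at roughly 10% of phenotypic variance, rather than showing that epistasis is exactly zero.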

There is a theoretical explanation: selection works linearly. Any trait that has been selected must have linear structure. And artificial selection has validated this on many traits, often moving 30σ.

Nitpick: This isn’t quite true. John probably knows more than I do about this, but there are limits to how big the ratio between the trigger and the resulting reaction can be. This is why the really, really big bombs used three stages, with a fission bomb setting off fusion which set off a bigger fusion stage. I don’t recall this being particularly easy to get right, but it’s been a while since I looked into it.

Actually, the third (and highest-energy) stage was fission. The radiation from the fusion is required to make the incredibly abundant U-238 fission, which it won’t do to any useful extent in a standard single-stage fission bomb. (Or so I understand it; it’s been a while since I looked into it.)

You’re not wrong that that was a thing, but it wasn’t what I was talking about. They’d use a fission tamper around the fusion reaction to soak up the fast neutrons if they wanted high yield. That’s still part of a given stage. The biggest weapons had a third stage, another fusion (and maybe fission) stage.

Ah, interesting.

People aren’t entirely consistent in their use of “stage” in this context, but the Tsar Bomba was by this standard a four-stage device as tested and a five-stage device in its nominal operational version (100 megatons, deployed by Proton rockets because Russia didn’t have the Saturn V).

Fission primary (Nagasaki-style A-bomb), Sakharov-Zel’dovich style radiation-driven fusion implosion device (Teller-Ulam for you Hungarian chauvinists), neutron-driven fission in the uranium tamper, driving probably six more Sakharov-Zel’dovich fusion stages, and they mercifully used lead in those tampers because if they’d included the last fission stage (well, six parallel stages) the fallout would likely have killed a few thousand Americans and Canadians.