I.

I would be failing my brand if I didn’t write something about GPT-3, but I’m not an expert and discussion is still in its early stages. Consider this a summary of some of the interesting questions I’ve heard posed elsewhere, especially comments by gwern and nostalgebraist. Both of them are smart people who I broadly trust on AI issues, and both have done great work with GPT-2. Gwern has gotten it to write poetry, compose music, and even sort of play some chess; nostalgebraist has created nostalgebraist-autoresponder (a Tumblr written by GPT-2 trained on nostalgebraist’s own Tumblr output). Both of them disagree pretty strongly on the implications of GPT-3. I don’t know enough to resolve that disagreement, so this will be a kind of incoherent post, and hopefully stimulate some more productive comments. So:

OpenAI has released a new paper, Language Models Are Few-Shot Learners, introducing GPT-3, the successor to the wildly-successful language-processing AI GPT-2.

GPT-3 doesn’t have any revolutionary new advances over its predecessor. It’s just much bigger. GPT-2 had 1.5 billion parameters. GPT-3 has 175 billion. The researchers involved are very open about how it’s the same thing but bigger. Their research goal was to test how GPT-like neural networks scale.

Before we get into the weeds, let’s get a quick gestalt impression of how GPT-3 does compared to GPT-2.

Here’s a sample of GPT-2 trying to write an article:

PROMPT: MILEY CYRUS WAS CAUGHT SHOPLIFTING FROM ABERCROMBIE AND FITCH ON HOLLYWOOD BOULEVARD TODAY

The 19-year-old singer was caught on camera being escorted out of the store by security guards.

The singer was wearing a black hoodie with the label ‘Blurred Lines’ on the front and ‘Fashion Police’ on the back

The singer was also wearing a pair of black-rimmed glasses, a black jacket, black jeans and black sandals.

She was carrying a pair of black and white striped gloves and a small black bag.

And here’s GPT-3 at the same task:

PROMPT: UNITED METHODISTS AGREE TO HISTORIC SPLIT – THOSE WHO OPPOSE GAY MARRIAGE WILL FORM THEIR OWN DENOMINATION

After two days of intense debate, the United Methodist Church has agreed to a historic split – one that is expected to end in the creation of a new denomination, one that will be “theologically and socially conservative,” according to the Washington Post. The majority of delegates attending the church’s annual General Conference in May voted to strengthen a ban on the ordination of LGBTQ clergy and to write new rules that will “discipline” clergy who officiate at same-sex weddings. But those who opposed these measures have a new plan: They say they will form a separate denomination by 2020, calling their church the Christian Methodist denomination.

The Post notes that the denomination, which claims 12.5 million members, was in the early 20th century the “largest Protestant denomination in the US”, but that it has been shrinking in recent decades. The new split will be the second in the church’s history. The first occurred in 1968, when roughly 10% of the denomination left to form the Evangelical United Brethren Church. The Post notes that the proposed split “comes at a critical time for the church, which has been losing members for years,” which has been “pushed toward the brink of a schism over the role of LGBTQ people in the church.” Gay marriage is not the only issue that has divided the church. In 2016, the denomination was split over ordination of transgender clergy, with the North Pacific regional conference voting to ban them from serving as clergy, and the South Pacific regional conference voting to allow them.

In case you’re wondering, no, the Methodist Church did not really split from the Evangelical United Brethren Church in 1968, it merged with it in that year. GPT-3 is doing what I used to do on essay questions – throw out a bunch of half-remembered names and dates and hope nobody looks too closely at the exact relations.

These kinds of comparisons are inherently fuzzy, since both GPTs have produced a lot of material and it’s easy to cheat by taking better or worse samples of one or the other. You can see more GPT-2 samples here and more GPT-3 samples here

Or compare some of GPT-2’s poetry:

The gods are they who came to earth

And set the seas ablaze with gold.

There is a breeze upon the sea,

A sea of summer in its folds,

A salt, enchanted breeze that mocks

The scents of life, from far away

Comes slumbrous, sad, and quaint, and quaint.

The mother of the gods, that day,

With mortal feet and sweet voice speaks,

And smiles, and speaks to men: “My Sweet,

I shall not weary of thy pain.”…Let me drink of the wine of pain

And think upon the agonies of hope,

And of the blessed Giver of all good things;

For, man for man, mine is the deepest love

That sorrow takes upon the humblest soul;

But who hath learned how sorrow turns to gall

The places where my feet have trod before.

…to some of GPT-3’s:

I must have shadows on the way

If I am to walk I must have

Each step taken slowly and alone

To have it ready madeAnd I must think in lines of grey

Must have dim thoughts to be my guide

Must look on blue and green

And never let my eye forget

That color is my friend

And purple must surround me tooThe yellow of the sun is more more

Intusive than the bluish snow

That falls on all of us. I must have

Grey thoughts and blue thoughts walk with me

If I am to go away at all.

Ignore the style differences – the GPT-2 instance was trained on 18th-century verse, GPT-3 on Wallace Stevens. I notice that the first poem, while flowery, is kind of incoherent. The second has more of a structure and a theme that it maintains throughout. I’m not sure it really symbolizes anything interesting, but I’ve never been sure of that with real Wallace Stevens either, so good work.

(you can read more GPT-2 poetry here. There’s not much more public GPT-3 poetry – you can read one or two more samples on the paper linked above).

Enough writing. What about math? Last year, I posted the following excerpt from GPT-2:

PROMPT: MY 10 FAVORITE ANIMALS ARE:

My ten favorite animals are:

1. Zebras with a white scar on the back

2. Insiduous spiders and octopus

3. Frog with large leaves, hopefully black

4. Cockatiel with scales

5. Razorbill with wings hanging about 4 inches from one’s face and a heart tattoo on a frog

3. Cockatric interlocking tetrabods that can be blind, cut, and eaten raw:

4. Black and white desert crocodiles living in sunlight

5. Zebra and many other pea bugs

Despite the obvious flaws in this piece, I was impressed. GPT-2 was clearly trying to make a numbered list, and almost kind of getting it right! It counted to 4 successfully! Remember, this is a text prediction engine that didn’t necessarily need to have any concept of numbers. But it still kind of counted to 4! I wrote:

Imagine you prompted the model with “What is one plus one?” I actually don’t know how it would do on this problem. I’m guessing it would answer “two”, just because the question probably appeared a bunch of times in its training data.

Now imagine you prompted it with “What is four thousand and eight plus two thousand and six?” or some other long problem that probably didn’t occur exactly in its training data. I predict it would fail, because this model can’t count past five without making mistakes. But I imagine a very similar program, given a thousand times more training data and computational resources, would succeed. It would notice a pattern in sentences including the word “plus” or otherwise describing sums of numbers, it would figure out that pattern, and it would end up able to do simple math. I don’t think this is too much of a stretch given that GPT-2 learned to count to five and acronymize words and so on.

I said “a very similar program, given a thousand times more training data and computational resources, would succeed [at adding four digit numbers]”. Well, GPT-3 is a very similar program with a hundred times more computational resources, and…it can add four-digit numbers! At least sometimes, which is better than GPT-2’s “none of the time”.

II.

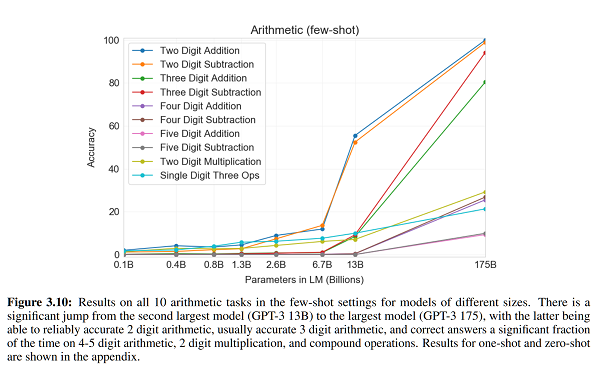

In fact, let’s take a closer look at GPT-3’s math performance.

The 1.3 billion parameter model, equivalent to GPT-2, could get two-digit addition problems right less than 5% of the time – little better than chance. But for whatever reason, once the model hit 13 billion parameters, its addition abilities improved to 60% – the equivalent of a D student. At 175 billion parameters, it gets an A+.

What does it mean for an AI to be able to do addition, but only inconsistently? For four digit numbers, but not five digit numbers? Doesn’t it either understand addition, or not?

Maybe it’s cheating? Maybe there were so many addition problems in its dataset that it just memorized all of them? I don’t think this is the answer. There are 100 million possible 4-digit addition problems; seems unlikely that GPT-3 saw that many of them. Also, if it was memorizing its training data, it should have gotten all 100 possible two-digit multiplication problems, but it only has about a 25% success rate on those. So it can’t be using a lookup table.

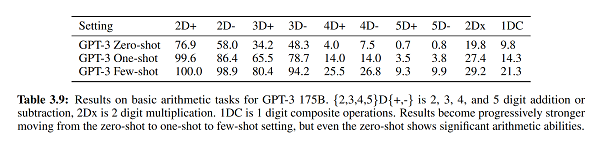

Maybe it’s having trouble locating addition rather than doing addition? (thanks to nostalgebraist for this framing). This sort of seems like the lesson of Table 3.9:

“Zero-shot” means you just type in “20 + 20 = ?”. “One-shot” means you give it an example first: “10 + 10 = 20. 20 + 20 = ?” “Few-shot” means you give it as many examples as it can take. Even the largest and best model only does mediocre on the zero-shot task, but it does better on the one-shot and best on the few-shot. So it seems like if you remind it what addition is a couple of times before solving an addition problem, it does better. This suggests that there is a working model of addition somewhere within the bowels of this 175 billion parameter monster, but it has a hard time drawing it out for any particular task. You need to tell it “addition” “we’re doing addition” “come on now, do some addition!” up to fifty times before it will actually deploy its addition model for these problems, instead of some other model. Maybe if you did this five hundred or five thousand times, it would excel at the problems it can’t do now, like adding five digit numbers. But why should this be so hard? The plus sign almost always means addition. “20 + 20 = ?” is not some inscrutable hieroglyphic text. It basically always means the same thing. Shouldn’t this be easy?

When I prompt GPT-2 with addition problems, the most common failure mode is getting an answer that isn’t a number. Often it’s a few paragraphs of text that look like they came from a math textbook. It feels like it’s been able to locate the problem as far as “you want the kind of thing in math textbooks”, but not as far as “you want the answer to the exact math problem you are giving me”. This is a surprising issue to have, but so far AIs have been nothing if not surprising. Imagine telling Marvin Minsky or someone that an AI smart enough to write decent poetry would not necessarily be smart enough to know that, when asked “325 + 504”, we wanted a numerical response!

Or maybe that’s not it. Maybe it has trouble getting math problems right consistently for the same reason I have trouble with this. In fact, GPT-3’s performance is very similar to mine. I can also add two digit numbers in my head with near-100% accuracy, get worse as we go to three digit numbers, and make no guarantees at all about four-digit. I also find multiplying two-digit numbers in my head much harder than adding those same numbers. What’s my excuse? Do I understand addition, or not? I used to assume my problems came from limited short-term memory, or from neural noise. But GPT-3 shouldn’t have either of those issues. Should I feel a deep kinship with GPT-3? Are we both minds heavily optimized for writing, forced by a cruel world to sometimes do math problems? I don’t know.

[EDIT: an alert reader points out that when GPT-3 fails at addition problems, it fails in human-like ways – for example, forgetting to carry a 1.]

III.

GPT-3 is, fundamentally, an attempt to investigate scaling laws in neural networks. That is, if you start with a good neural network, and make it ten times bigger, does it get smarter? How much smarter? Ten times smarter? Can you keep doing this forever until it’s infinitely smart or you run out of computers, whichever comes first?

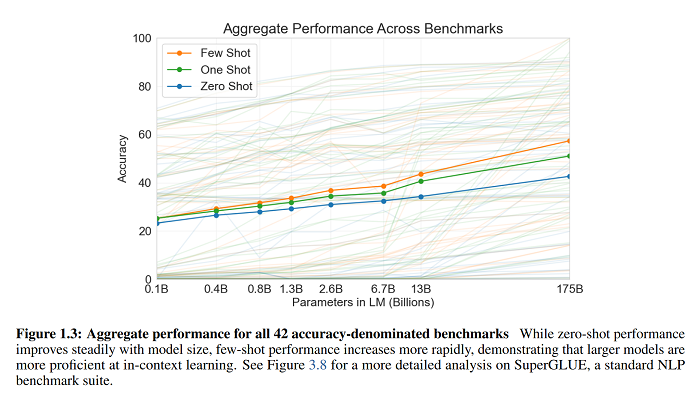

So far the scaling looks logarithmic – a consistent multiplication of parameter number produces a consistent gain on the benchmarks.

Does that mean it really is all about model size? Should something even bigger than GPT-3 be better still, until eventually we have things that can do all of this stuff arbitrarily well without any new advances?

This is where my sources diverge. Gwern says yes, probably, and points to years of falsified predictions where people said that scaling might have worked so far, but definitely wouldn’t work past this point. Nostalgebraist says maybe not, and points to decreasing returns of GPT-3’s extra power on certain benchmarks (see Appendix H) and to this OpenAI paper, which he interprets as showing that scaling should break down somewhere around or just slightly past where GPT-3 is. If he’s right, GPT-3 might be around the best that you can do just by making GPT-like things bigger and bigger. He also points out that although GPT-3 is impressive as a general-purpose reasoner that has taught itself things without being specifically optimized to learn them, it’s often worse than task-specifically-trained AIs at various specific language tasks, so we shouldn’t get too excited about it being close to superintelligence or anything. I guess in retrospect this is obvious – it’s cool that it learned how to add four-digit numbers, but calculators have been around a long time and can add much longer numbers than that.

If the scaling laws don’t break down, what then?

GPT-3 is very big, but it’s not pushing the limits of how big an AI it’s possible to make. If someone rich and important like Google wanted to make a much bigger GPT, they could do it.

GPT-3 is terrifying because it's a tiny model compared to what's possible, trained in the dumbest way possible on a single impoverished modality on tiny data, yet the first version already manifests crazy runtime meta-learning—and the scaling curves 𝘴𝘵𝘪𝘭𝘭 are not bending! 😮 https://t.co/hQbW9znm3x

— 𝔊𝔴𝔢𝔯𝔫 (@gwern) May 31, 2020

Does “terrifying” sound weirdly alarmist here? I think the argument is something like this. In February, we watched as the number of US coronavirus cases went from 10ish to 50ish to 100ish over the space of a few weeks. We didn’t panic, because 100ish was still a very low number of coronavirus cases. In retrospect, we should have panicked, because the number was constantly increasing, showed no signs of stopping, and simple linear extrapolation suggested it would be somewhere scary very soon. After the number of coronavirus cases crossed 100,000 and 1,000,000 at exactly the time we could have predicted from the original curves, we all told ourselves we definitely wouldn’t be making that exact same mistake again.

It’s always possible that the next AI will be the one where the scaling curves break and it stops being easy to make AIs smarter just by giving them more computers. But unless something surprising like that saves us, we should assume GPT-like things will become much more powerful very quickly.

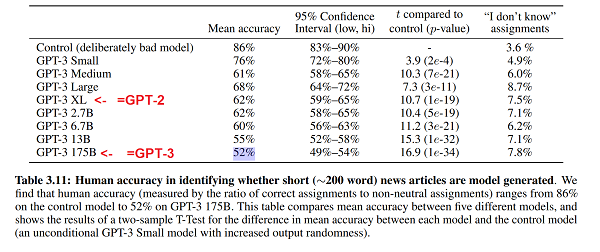

What would much more powerful GPT-like things look like? They can already write some forms of text at near-human level (in the paper above, the researchers asked humans to identify whether a given news article had been written by a human reporter or GPT-3; the humans got it right 52% of the time)

So one very conservative assumption would be that a smarter GPT would do better at various arcane language benchmarks, but otherwise not be much more interesting – once it can write text at a human level, that’s it.

Could it do more radical things like write proofs or generate scientific advances? After all, if you feed it thousands of proofs, and then prompt it with a theorem to be proven, that’s a text prediction task. If you feed it physics textbooks, and prompt it with “and the Theory of Everything is…”, that’s also a text prediction task. I realize these are wild conjectures, but the last time I made a wild conjecture, it was “maybe you can learn addition, because that’s a text prediction task” and that one came true within two years. But my guess is still that this won’t happen in a meaningful way anytime soon. GPT-3 is much better at writing coherent-sounding text than it is at any kind of logical reasoning; remember it still can’t add 5-digit numbers very well, get its Methodist history right, or consistently figure out that a plus sign means “add things”. Yes, it can do simple addition, but it has to use supercomputer-level resources to do so – it’s so inefficient that it’s hard to imagine even very large scaling getting it anywhere useful. At most, maybe a high-level GPT could write a plausible-sounding Theory Of Everything that uses physics terms in a vaguely coherent way, but that falls apart when a real physicist examines it.

Probably we can be pretty sure it won’t take over the world? I have a hard time figuring out how to turn world conquest into a text prediction task. It could probably imitate a human writing a plausible-sounding plan to take over the world, but it couldn’t implement such a plan (and would have no desire to do so).

For me the scary part isn’t the much larger GPT we’ll probably have in a few years. It’s the discovery that even very complicated AIs get smarter as they get bigger. If someone ever invented an AI that did do more than text prediction, it would have a pretty fast takeoff, going from toy to superintelligence in just a few years.

Speaking of which – can anything based on GPT-like principles ever produce superintelligent output? How would this happen? If it’s trying to mimic what a human can write, then no matter how intelligent it is “under the hood”, all that intelligence will only get applied to becoming better and better at predicting what kind of dumb stuff a normal-intelligence human would say. In a sense, solving the Theory of Everything would be a failure at its primary task. No human writer would end the sentence “the Theory of Everything is…” with anything other than “currently unknown and very hard to figure out”.

But if our own brains are also prediction engines, how do we ever create things smarter and better than the ones we grew up with? I can imagine scientific theories being part of our predictive model rather than an output of it – we use the theory of gravity to predict how things will fall. But what about new forms of art? What about thoughts that have never been thought before?

And how many parameters does the adult human brain have? The responsible answer is that brain function doesn’t map perfectly to neural net function, and even if it did we would have no idea how to even begin to make this calculation. The irresponsible answer is a hundred trillion. That’s a big number. But at the current rate of GPT progress, a GPT will have that same number of parameters somewhere between GPT-4 and GPT-5. Given the speed at which OpenAI works, that should happen about two years from now.

I am definitely not predicting that a GPT with enough parameters will be able to do everything a human does. But I’m really interested to see what it can do. And we’ll find out soon.

While we’re on the topic: Tensorfork trained GPT-2-1.5b on our poetry corpus and generated 1 million words. We’re crowdsourcing a read through of it to find the best samples, if anyone wants to help: https://docs.google.com/document/d/1MhA3M5ucBD7ZXcWk57_MKZ5jEgPX6_YiKye_EFP-adg/edit It’s not GPT-3, I admit, but a lot of the samples are still pretty good!

These kinds of exercises where human beings read through a large corpus of machine-generated text to pull out the best samples always remind me a little bit of the Dasher system: http://www.inference.org.uk/dasher/DasherSummary.html

Dasher was designed in the late 90s/early 2000s as a method of text entry that doesn’t require a keyboard or very fine movements. It essentially works by using a joystick (or accelerometer, or eye-tracker, or computer mouse) to navigate through a which-character-to-enter-next decision tree. Nodes/letters that a statistical model thinks are more likely are larger so they require less effort for the user to select.

The optimist in me wonders if anyone’s tried to hook Dasher up to a modern ML model to make its suggestions more accurate. Not knowing much about how it works internally, is it easy to ask GPT-2 “give me all the possibilities for the next letter in this string, with probability estimates for each one”? (And then, once the user’s picked a letter to enter, “give me all the possibilities/probabilities for the letter after that one”?)

The cynic in me wonders if picking ML-model samples is fundamentally the same thing as using Dasher — that the existence of any particular GPT-2 quote means only that the user was willing to go through enough of the model’s guesses to get to the one they wanted.

I dunno if you’ve used gmail recently, but it does that already. You can start a sentence and then just press Return repeatedly to get a sensical rendition of what you were going to say. It isn’t always in the tone you would have preferred, but more and more at work I find myself accepting that because it’s faster.

I have used Gmail recently, and no, doing that produces nonsense.

Yeah, and I guess that’s the difference between Dasher and a lot of today’s text-prediction systems: Gmail gives me a small number of take-it-or-leave-it suggestions that aren’t always good, while Dasher shows me all possible sentences weighted by how likely they are. (You can type out any sequence of characters you want in Dasher, it’s just that “Zdghj” will take longer than “Hello” because you’ll have to zoom in on the low-probability part of the tree for each letter.) I thought that this might’ve been because of some fundamental difference in how their algorithms work, but sketerpot suggests below that it’s more of a user-interface thing.

This is basically how I feel about much of the praise of GPT-2. Sure, it produces (kind of) coherent output, but it also produces a ton of junk. And importantly, it can’t distinguish between good output and junk. It takes a human to curate the most impressive-looking examples.

Like that GPT-2 chess post a while back. GPT-2 wasn’t playing chess. GPT-2 was producing text formatted like a chess move, and a curator-bot was picking out only the valid moves. Sure, it’s kind of neat that it can reproduce the format, but if anything in there was playing chess, it was the curator-bot.

It would be fairly trivial (which isn’t to say easy in practical terms) to train a second model on the binary garbage vs not-garbage classifications of human raters and add it as a layer to a GPT model. That way, the crap gets filtered before output. Possibly that’s an ambition of the crowd-sourcing project announced by Gwern above.

How many annotations would you need to make it work?

It’s hard to say without trying, but I’ve previously managed to get decent F1 scores with surprisingly small amounts of annotated text data using one of the BERT models–on the order of thousands of examples.

Either way, there are useful tools like Prodigy that help you source annotations only for samples for which there is high model uncertainty. It’s a great tool; I highly recommend it.

This is generally called a ‘ranker’. It’s perfectly doable and has been done. You can do it using the model itself (eg Meena generates n possible completions with top-k and then selects the one with the highest likelihood, which avoids the pathologies of maximizing likelihood token by token), you can use another model (there was one Reddit example where they ranked using BERT, I guess under the logic that BERT would make different errors than GPT-2), you can use a model trained by human comparisons (this is sort of how GPT-2 preference learning works, and we’re interested in applying it to GANs to improve D ranking by using human comparisons of anime images)…

I wasn’t intending to use the crowdsourcing there. The crowdsourcing is more about just finding the best samples for lulz, and getting an idea of how much screening is necessary. The ratio of rejects:successes is a pretty natural way to measure the quality of a model. If a human poet has to throw out 1 poem for every 1 reasonably-decent poem, how many GPT-2-1.5b poems do we have to throw out to get a poem? For char-RNN or for GPT-2-117M, the answer is something like 1000:1 or 100:1; for GPT-2-1.5b, it’s closer to 20:1 IMO; and going by the GPT-3 samples, it may be more like 5:1 or less… I hope to get access to the API and try out poems more extensively.

It shouldn’t be too hard, at least conceptually. GPT-2 is a language model that tries to answer the question “Given the past n-1 words/letters/whatever, what’s the probability distribution for the nth one?” This is the same kind of function that Dasher uses.

If the output from GPT-2 were mostly garbage, you’d have either select from very short outputs or look through a lot of samples in order to get something good. A vanishingly small percentage of the books in the Library of Babel are worth reading, and you won’t find them just by browsing randomly through the shelves.

This doesn’t follow at all. Nobody’s saying GPT-2 output is literally random like the Library of Babel. It can take smart shortcuts to produce a tiny fraction of the Library of Babel and still have that fraction be “mostly garbage”.

Editing because this comment didn’t come together the way I thought it did when I wrote it:

“Mostly garbage” can mean 99.9999999999% garbage or 50.1% garbage or anywhere in between. The point is that seeing a small number of human-selected examples of good output tells you very little about the overall ratio of garbage to not-garbage. It establishes a vague upper bound, perhaps, but it’s far inferior to actual data.

I was going to make a point that, yeah, there’s a continuum between random nonsense and stuff that looks like human speech, and the further along that continuum you move, the less effort is required to find really good stuff among the garbage, and that (since you only need a few crazy parts to render some text unimpressive) fairly small differences in text-generation quality can have superlinear effects on the number of text samples you need to look through in order to get good-looking output. Therefore if you see a bunch of long text samples that look good, that’s a sign that you either have a pretty-darn-good text generation model or you’ve got people with unrealistic amounts of time on their hands.

… But then I deleted the partly-written paragraph and replaced it with a snappy Library of Babel reference because it seemed too obvious and I didn’t want to belabor the point. It obviously wasn’t obvious, so mea culpa.

I remember a while back trying out an article generator.

give it a title and a publication to copy the style and it would churn out an article to fit.

And I was deeply impressed. Giving it anti-vaxer type article headlines but changing the thing blamed.

“Researchers find green vegetables cause autism” gave a remarkably coherent article. It even made up some vaguely babble about chloroplasts and protein interactions that would fit right in to most popsci articles and it made up some imaginary research centers where the research had been done and imaginary numbers for participants.

There was domain specific stuff that made no sense in reality, but it was way more coherent than a lot of human-written popsci stuff.

Having played around with them a little, it seems like a majority of GPT-2 responses are at least kind of coherent. It’s not a matter of picking out the one reasonable sounding response out of a mountain of gibberish.

This reminds me of a conversation I had with my 4 year old nephew over Zoom the other day. He was trying to tell me something about which animal he likes from the new toys he had, but he was stumbling over one of the words, and I completed the sentence. He said, “how did you know what I was going to say?”

If we had a good enough version of this thing, would that be what we’re all feeling as we are typing?

Additional poetry by GPT-3:

Sample 1236:

Think only this:

if my breath,

my thought, my word

the current I am

could touch the world

for just an instant

it would ripple outward

and someone else

would catch the pattern

and rise and smile

and carry on

and for an instant,

someone else would feel

better.

Love,

-Jamey

Sample 1252:

Why are you looking at the stars

when you’ve got a wonderful person in front of you?

They are beautiful.

They are a part of you.

But they’re stars.

Starry eyed, you watch them;

Sparkling with energy.

You wish on them,

Dream on them,

Dreams you know you can’t fulfill,

not from here.

Why are you looking at the stars?

You’re in the middle of the most beautiful thing

you’ve ever seen.

Why are you looking at the stars?

Why are you looking at the stars?

The thing that I notice about the Methodist Church article isn’t that it gets the history wrong — I wouldn’t know anything about that — but that it doesn’t quite make sense. It almost does, but if you pay attention, you’ll notice that it’s inconsistent as to whether it’s the branch that split off that opposes gay marriage, or the branch they’re splitting off from. The headline and first sentence say that it’s the branch that’s splitting off that opposes gay marriage. But the next two sentences say that it’s the branch that’s being split of of that opposes gay marriage.

If that problem were solved, I don’t know how anyone without knowledge of the history would know it was written by a machine. And I can easily imagine missing that while casually skimming.

I missed this part.

I almost wonder if that’s more of a strike against us than in favor of GPT-3. Naively, you’d think “this article directly contradicts itself on the core facts it was written to communicate several times” would be the sort of thing people notice. Maybe we’re all just really bad at critical thinking.

I mean, if you’re not involved, all you likely care about was that there was a split over gay marriage. Which one was the branch that split off is a detail you’re likely going to gloss over. It’s not like one part said it was splitting and another part said it wasn’t. I’ve seen plenty of mistakes worse than this in actual things people have written.

cf Humans Who Are Not Concentrating Are Not General Intelligences from the ancient days of GPT-2.

I posted this as a response to Sniffnoy, but it’s even closer to your comment.

I noticed right off the bat. It actually really bugged me, lol. But I’m one of those people that just loves debates and reads a lot of philosophy – I’m kind of trained to notice these things.

Yeah, there’s no “maybe” about it, sadly.

On the other hand, we are really good at pattern matching, to the point where we can instantly match a circle with two dots and an arc to a smiling human face.

Poetry is a genre that explicitly exercises our pattern-matching abilities. Good poetry is not supposed to make literal sense; instead, it’s supposed to use metaphors and allegories and other tools to prime the reader’s intuition engine into generating all kinds of wild and emotionally impactful associations. This is why GPT is so good (relatively speaking) at poetry: it can generate thousands of semi-coherent pieces of text very quickly. Among those thousands of poems, one or two might grab somebody’s imagination, enabling him to extrapolate the textual equivalent of a smiley face icon into a whole range of emotional responses.

Science is almost the exact opposite of that. Statements like “F=ma” are exact. If you prefer poem A, and I prefer poem B, we might argue about it ad infinitum. But if you say “F=ma” and I say “F = m/a”, then one of us is indisputably wrong, and no matter of pattern-matching can ever fix this. That’s why using GPT to do science is IMO doomed to be an exercise in frustration.

> That’s why using GPT to do science is IMO doomed to be an exercise in frustration.

This is why you need to connect it to various mechanisms to manipulate the physical world, so that it can both make predictions as well as perform the required experiments to verify them. We’ll have lots of

paperclipsscientific knowledge in no time!The problem is that it’s already a paperclip maximizer.

Essays written in response to prompts, that convincingly impersonate human writing, are the paperclips.

Hooking it up to a battery of scientific instruments won’t make more paperclips very effectively, because most human writing doesn’t resemble an accurate AND NOVEL scientific paper.

I think it’s more due to us following Grice’s maxims. We expect people communicating with us to be trying to communicate some real underlying thing. An AI can exploit that assumption to make us think text sounds real even though there’s no underlying understanding.

This is also a challenge when marking student essays who haven’t really understood the reading. It seems like a reasonable essay on a surface skim but it’s only when you dissect sentence by sentence that you see there’s nothing really there.

I noticed this, but I was looking for exactly this sort of inconsistent internal model error that I could spot without much domain knowledge. I doubt that I would have spotted it if I hadn’t been looking for it, and I doubt that I would have known to look for it if I wasn’t somewhat familiar with GPT-2s failings.

I noticed it without actively looking for it, and without being particularly familiar with GPT-2 – the story just obviously doesn’t make any sense to me. However, I am unusually bothered by contradictions.

Generally, I deal with this in everyday life by being charitable. If I read something that doesn’t quite make sense, I’ll tend to resolve the ambiguity by picking the most likely meaning and assume that this is what the author intended. Sometimes I do this without even noticing that I’m doing it, but generally it raises at least a small flag in my awareness.

In this case, I think I had two reasons not to be charitable: firstly, the tone of the writing is authoritative, which leaves less of an excuse for ambiguities, and secondly the author is not identified and so I find the ambiguity harder to resolve by the application of theory of mind.

This makes me wonder: are the people who didn’t notice it simply being more charitable, or are they starting out with a lower expectation that things ought to make sense in general, or are they just not parsing the text in quite as much detail?

I’m quite confident that if a researcher asked me to guess whether this passage was written by a human or not I would not fare better than chance unless a substantial amount of money or ego were riding on the result. If writing down “an answer, any answer” was keeping me from something even slightly important, I don’t think that I would make myself care enough to notice the small discrepancies.

Also, if I were tasked to write on this prompt, I’m pretty sure that I wouldn’t care enough to write well enough to distinguish myself from the robot. I feel like sarcastic detachment is not the best attitude to a Turing test but given where the stakes are, it’s the best I can do.

Excellent job spotting this. I was looking for it, as I am an AI researcher and I’m aware that algorithms like this tend to struggle with consistent referents, but I wonder how many people not looking for it spotted it.

As I said, this is a general problem for text generating algorithms. If you played the AI-choose-your-own-adventure game that came out about a year ago you may have noticed that it gave you the ability to apply symbolic labels to specific people or items, using that symbol to reference them in the future. This vastly enhanced its narrative coherence in my experience.

Another good example of this is Google Home. If you ask Google Home “what’s the weather in Florida?” and then follow it up with “how about in Georgia?” it can typically answer the second question correctly. This is quite impressive and (at least when I last looked into this) not something it’s competitors can do. However I’ve noticed that my Google home starts to lose the thread if I asked it four or five follow-up questions.

Also I just checked and my iPhone not only understands “what about Georgia?” it correctly guesses which Georgia I was asking about, based on whether I’d just asked for the weather in North Carolina or Ukraine.

I definitely didn’t notice, and was instead fixating on the dates and the quotes and considering googling them to find out if it was copying text it had already seen on the internet.

It doesn’t copy verbatim, but it is mashing up various other articles about the same event. Personally I consider this to be contaminated training data. A much better test would be to prompt it with headlines about things that occurred after its training data was frozen.

GPT-3 is trained to come up with plausible-sounding text, without regard for dull things like truth. It’s not hard to imagine, though, a fact-checking bot that could extract things that look like statements of fact and check them against authoritative-sounding sources.

Right now I can google “did the Methodist church split from the United Brethren Church in 1968?” and I get a bunch of articles on the merger; even a simple word embedding model knows enough to know that merge and split are opposites, so I can imagine a fact check bot could have sufficient confidence to mark this sentence as false and go tell GPT-3 to come up with another one. It’s been a decade since computers could outperform humans in Jeopardy, so a fact-check bot with reasonable precision doesn’t sound that hard.

(The culture war implications of a bot that would automatically “fact check” everything you attempt to write or read on the internet are left as an exercise for the reader.)

As I data point, I’ve noticed that, but that is possibly affected by the fact that I’m curious about the topic.

As a practicing United Methodist who’s been following this issue closely, it also seemed a little off to me. But the article actually comes pretty close to reality: in the May 2019 General Conference, the “Traditionalists” indeed passed measures to stop gay marriage, but in January 2020, a proposal was endorsed by all sides for the traditionalists to leave the denomination and start their own. But that last sentence of the first paragraph gets it reversed.

It’s a very weird feeling to see anything about the Methodist church on this site, though.

I assumed that was because a lot of people on the internet write like that. I even know some people IRL who talk like that

I was also thrown off by this without looking for anything of the kind. I’m incredibly sensitive to this kind of stuff, and alarms get triggered by human-written texts all the time, too. Because humans get confused and forget stuff – so a sloppy human writer could have made the same mistake. But the human has a concept of what it means to make that kind of mistake, and would understand it upon being pointed out. GPT-3 lacks the very concept of there being something wrong with this.

To your question about what GPT-like tools are doing with regard to math performance. I imagine this something like a more highly dimensional Dewey Decimal System. To refresh us on the Dewey Decimal System, each digit in the Dewey Decimal System further specifies the type of work we are dealing with. 400 is languages, 480 is Greek, 485 is grammar and so on and so on.

As the parameters increase the level of possible specificity increases -> thus GPT-2 goes from addition prompt -> math text, and GPT-3 goes from addition prompt -> addition-like answer up to four digits.

Going back to the languages example if I ask GPT-2, “What is the accusative plural of τεκνον?” It might respond with something Greek related and grammar related. Perhaps GPT-3 would at least give a grammatical form as it moves from “prompt indicates genre” to “prompt indicates query type within a genre.” The optimist might predict that GPT-6 registers the prompt as “prompt indicates query type within a genre requiring I follow human rules of material causality, formal logic, and customary standards within the genre.”

So this is nice and all, but I’m wondering what would happen if you gave one of these GPT models a calculator? Is there a nice way to integrate it?

I think you’d still have the problem of it knowing *when* to use the calculator.

But I see no reason you couldn’t wire a calculator into the neural net and hope backprop eventually determines when to use those “calculator” pseudo-neurons.

You can’t backpropagate through a calculator because it’s discrete. You’d need to do relaxations or reinforcement learning, which are much more tricky. People have been trying to make this work with the neural turing machines and so on, but AFAIK this line of research didn’t go anywhere.

I’m not sure if this is correct in principle (meaning “for any training algorithm, not just for the GPT3 algorithm):

A) your brain can. At least I remember some claim of brains adopting in a few weeks to “enhancing sensors”, e.g. a sensor that encodes the direction of the earth’s magnetic field onto your wrist (I can’t remember the exact source, maybe some Elon Musk Interview on Neuralink?)

B) There’s the Chen et al 2018 paper “Neural Ordinary Differential Equations” that basically combines classical differential equations with a neural net. Again, I haven’t looked at the details, but it basic approach looked like: Neural nets are a generic function approximator, but we can ease their job by adding the functions we already know we’ll need in explicit form and have the surrounding ANN layers figure out how to efficiently use them.

So putting both together, I don’t really see why a future GPT-n+1 couldn’t include a separate “logic core” that helps deal with math. Without thinking to much about it, I’d kinda expect that this is how our brain deals with specific special-skill tasks – basically push them to the corresponding specialized brain area (e.g. hypothalamus for navigation) and then integrating the results back into the overall thing the brain is doing (however you might call that).

Somewhat related: See the “extended mind thesis” on the question of whether or not your phone is a part of your personality.

“Any training algorithm” is too broad of a class. Artificial neural networks are typically trained using gradient descent: iteratively move the parameter vector towards a stationary (i.e. zero gradient) point of the objective function, the gradient is computed by reverse-mode automatic differentiation (a.k.a. backpropagation). This requires all the layers and modules of the neural network to be differentiable almost everywhere, furthermore if you want to find a meaningful solution they must have non-zero Jacobians almost everywhere. This rules out discrete layers.

There are workarounds: relaxation methods where you replace a discrete layers with a continuous smooth layers that interpolate or approximate them, and reinforcement learning methods where you treat the discrete layers as part of the environment. These methods have been demonstrated to a certain extent, but they are nowhere as effective.

Your brain is a general intelligence.

That’s an interesting paper, but it still deals with continuous smooth problems.

I think the closest thing is “Differentiation of Blackbox Combinatorial Solvers“: they have a neural network produce some continuous values with are used as parameters for a combinatorial optimization problem, which is solved by an external solver that produces a discrete output. In the paper they define a pseudo-gradient though the solver by a relaxation method, they backpropagate this gradient through the neural network in order to train it. Neat, but still requires the inputs of the solver to be continuous.

This isn’t a great analogy since the sensor is differentiable and the calculator isn’t.

I believe the current state of the art for arithmetic is Neural Arithmetic Units (Madson and Johansen, ICLR 2020).

It can simply finish every sentence of the form “13579 + 76543 is…” with “answered by my calculator”

Of course that’s not quite enough in itself, but it makes GPT + Calculator a larger component of a full AI.

https://arxiv.org/abs/1808.00508 https://arxiv.org/abs/2001.05016 come to mind. (Even if you required DRL, so what.)

I suppose you could just run a script to print “1+1=2. 1+2=3. 1+3=4.” and so on for billions of arithmetic sums, and then add its output to the text database. Wouldn’t that get GPT-3 quickly up to speed with accurate arithmetic?

Yes, but all you’d be doing is adding a look-up table to answer that specific question. There’s no point, and it wouldn’t generalize to solving more complicated problems, or problems where the answers can’t be pre-recorded.

The same argument would predict that GPT-3 would have no capabilities at all.

GPTocracy can be made real.

Governance is actually already mostly a text prediction task! We just need to have the laws written by a zillion parameter text prediction engine. Hook up data driven dashboards of social, economic, health metric as objective function, laws as output. Laws update every [Governance Tick] and we retrain on the feedback.

Sure, you’ll crash society in the short term, but it appears to be doing that already!

I like it ! It’s just like regular politics, but using electricity instead of dollars as bribes. Heh.

This reminds me of the bit in Gödel, Escher, Bach (1979) where Hofstadter argued that AIs capable of grasping concepts could very well be bad at arithmetic:

(To drive home the point, he also drew a head containing many small correct arithmetic equations that at a larger scale spelled out “2 + 2 = 5”.)

So basically a computer simulating a dumb human that read a pop philosophy book or two?

If this is true, wouldn’t it make AI less dangerous because it can’t grasp concepts and then also do the hyper-math required to effectively out fox and destroy us because we’re not fulfilling its favored concepts?

EDIT: I mean sure it could just use an exterior math module to DO math as we do, but it’s going to be bottlenecked by human-like limitations in manipulating the mathematical concepts to achieve its goals.

If it’s superhumanly good at one thing, then the answer depends entirely on how well it can leverage that one thing.

A computer that’s inhumanly good at arithmetic is called a pocket calculator; it lacks the awareness to be a threat.

A computer that’s inhumanly good at writing persuasive essays given a one sentence prompt may or may not be a threat by itself, but it’s damn sure going to be a threat in the hands of a human user, even if the thing consistently gets the wrong answer to “who is buried in Grant’s tomb” or “what is thirteen minus eight?”

Figure 1.3 has semi-log axes, so the scaling isn’t linear but logarithmic.

Also, if you look at the greyed out lines that are averaged to produce the bold ones, you’ll see there is a lot of variation in performance up and down. A lot of the monotonicity of the graph is produced by the last two data points, GPT-2 13B and GPT-3 175B respectively. I’m not impressed or convinced. At least have something like a 40B model between the last two points. (Yes, I understand that would be expensive, but this was a bad graph.)

In general the scaling assumption isn’t based on that graph. There has been a paper that trained more models to compute scaling laws in a robust fashion for a more general metric. What GTP-3 does is show that these laws extrapolate to 175 billion parameters. For single tasks there are always going to be more idiosyncratic scaling curves.

Thanks for this post! Among other things, you provide a good general-audience summary of what I’ve written on the topic.

I do want to clarify one point. Re: this

This does describe my argument if we read “purpose-built” as “aided by some task-specific training data.” But I imagine many people reading this paragraph would instead read “purpose-built” as “designed by humans with a particular task in mind,” which makes it sound like I’m arguing against domain-general/scaling approaches in favor of approaches where human researches do a lot of work targeting a specific task.

We need to distinguish between several levels of human involvement:

(1) No extra data or research work: the model “just does something” on its own (zero-shot, text generation)

(2) Extra data but no extra research work: you need task-specific data, but once you have it, you just plug it into an existing, generic model using a generic, automatable procedure (fine-tuning)

(3) Extra data and extra research work: you need task-specific data, and you must design a custom model with the task in mind (classic supervised learning)

where few-shot is arguably somewhere between (1) and (2), but has generally been treated in discussion as a type of (1).

Transformers are of course great at (1) in a cool, flashy way. However, they are also extremely good at (2), to the extent that large categories of work that used to be (3) immediately turned into (2) once people noticed this was possible.

Usually people do this by fine-tuning some variant of BERT. The differences between BERT and GPT-1/2/3 (let’s just call them GPT-n) are interesting, but are dwarfed by the similarities. For the purposes of this discussion, the least misleading framing is that BERT and GPT-n are basically the same thing, with “BERT_BASE” = GPT-n 117M and “BERT_LARGE” = GPT-n 345M.

Really, the only important differences between (1) and (2) here are that (2) modifies the model itself (“fine-tuning” it to the task, hence the name), and that (2) typically uses a somewhat larger quantity of data. These differences are fully independent: in principle one can fine-tune with only 50 examples, and if it weren’t for the limited size of its reading “window,” one could imagine feeding arbitrarily high numbers of examples to GPT-n as a few-shot prompt.

So, with the same transformer model, you can do either (1) and (2) and they look very similar. Except . . . (2) does far better. The GPT-3 authors frequently use comparisons to fine-tuned BERT_LARGE as a reference point, e.g. Table 3.8. That is, the authors are comparing their results to results from a model that is 500 times smaller, has more task-specific data, and is hooked up to the task in a different manner . . . and the exciting thing is that their model does about as good as the tiny one, not far better than it. It’s not clear to what extent this is really a difference in data size — that’s the interpretation in which the name “few-shot” makes sense — and to what extent it’s just that, relative to fine-tuning, this is a less effective way to hook up a model to a task.

If 500x-ing your parameter counts means anything, it ought to mean improvements in fine-tuning, too. So there’s an unspoken elephant in this room. I called GPT-3 a “disappointing paper,” which is not the same thing as calling the model disappointing: the feeling is more like how I’d feel if they found a superintelligent alien and chose only to communicate its abilities by noting that, when the alien is blackout drunk and playing 8 simuntaneous games of chess while also taking an IQ test, it then has an “IQ” of about 100.

I think this relates to another point you made:

This is true if you only think about the (1)-type approach where you interact with the model as a text predictor. However, it’s more fruitful to think of it as something more like a “repository of knowledge distilled from reading” — something you and I have in our heads as well — which was created in this specific manner, but can be leveraged in other ways. Whether or not some piece of knowledge is in there is a distinct question from how best to hook that knowledge up to something else we want — or an AI wants — to do with it.

I am not sure you can use BERT to claim a huge difference in quality between (1) and (2). BERT is crap at text generation, which incidentally must be the reason why OpenAI scaled up GTP-n and not BERT. It seems quite likely that the gap between (1) and (2) is significantly smaller for GTP-n.

But I’m also looking forward to the fine-tuning paper on GTP-3.

BERT is not designed for text generation. It is possible to torture it to generate text, but it is indeed crap at it.

That’s fair. De-noising objectives like BERT’s are better for fine-tuning — Table 2 in the T5 paper convinces me on that score — but make generation more difficult and possibly worse.

I do wonder whether BERT is really lower-quality as a generator, or merely more difficult to sample from. After all, BERT’s training task does that sneaky thing where a masked token is presented as a random ordinary wordpiece instead of [MASK], and BERT is still expected to replace it with the correct token. To do perfectly at this, you’d need to model arbitrary conditional dependencies in text . . . but these tokens appear rarely and BERT can see a mostly-complete context around them, so it’s just easier. You don’t need especially deep knowledge to figure out what’s going on in “I went to the grocery torrid to get milk” or “I went recent the grocery store to get milk” or “I went to the grocery store father get milk.”

I also wonder what the tuning-vs-generation tradeoff means . . . I don’t have any great ideas there.

I’m not sure there’s a practical difference… But I think BERT genuinely does have some sort of problem with text generation. No one has gotten it to generate good text despite some trying, while in contrast, T5, which was trained with text generation as one of its tasks as well as the usual suite of denoising tasks, does generate text reasonably well. Curiously, T5 still has problems with finetuning, like diverging during training, which is one reason why I haven’t yet used NaxAlpha’s T5 training code to train the big T5 on poetry and generate samples. So there’s something weird going on there.

So, how long until you’re replaced by an army of Robo-Scotts increasing SSC production tenfold and driving the poor artisanal Scotts to the poorhouse?

You haven’t noticed Scott has been posting more, recently?

The open threads are the human-in-the-loop version of that.

Speaking of GPT-2/GPT-3 doing logical reasoning, when playing with talktotransformer’s interface for gpt2, it seems like it does much better with modus ponens than with with “hypothetical syllogism” aka “double modus ponens”.

If you prompt GPT2 with “If it is raining outside, John will not take the umbrella. It is raining outside. Therefore”, GPT2 will often continue this with something along the lines of “John will not take the umbrella.”.

But if you give it a combination of 2 if/then statements, where the conclusion of the first is the premise of the second, and then end the prompt with “Therefore, if [condition of the first statement], then”, it doesn’t seem to tend to finish it with [conclusion of the second statement].

(I have not tested this in any serious experiment, just played around with it.)

I’d just like to say one thing.

AAAAAAAAAAAAA

AAAAAAAAAAAAAAAAAAAAAA

AAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAA

I double that.

Hey, that’s three things!

OTOH, making an artificial calculator was much earlier low-hanging fruit in computing. So you could have “an AI” that includes a calculator module and a GPT-3 module. To which you could hypothetically add…

This makes the Buddhist “no Self” truth claim seems relevant to the question of what “AI” is, anyway.

Just yesterday I had an intern visualize the word vectors (2013 tech) of numbers with PCA and t-SNE. Those are already (mostly) linearly arranged. So even number understanding from text is not super new.

The hard part, the part we’ve made very little progress on, is designing an AI that knows which module it’s supposed to be using.

Important clarification, I think:

It required a supercomputer to learn addition, but the trained model is much faster. It’s possible that a personal gaming PC could run the GPT-3 model. (I don’t actually know this, but I know that GPT-2 could run just fine on mundane compute resources.)

Sure, still inefficient, but we’re hardly being efficient when we do mental math. Generality will always come at the expense of efficiency.

A personal gaming PC could run the model, but running it at an acceptable speed is another matter. The full weights take up about 350 GB, and your gaming PC probably doesn’t have that much graphics RAM, or RAM of any sort, so you’d need to either partition the model across a bunch of people’s GPUs — LAN party time! — or be very bottlenecked on moving data around.

More details on GPT-3’s github page.

Smaller and less impressive versions of GPT-3 are probably less unwieldy, but will also produce less impressive output.

People have been calculating it out, and it wouldn’t necessarily be too bad on a personal computer. Yes, you do spend a lot of time shuffling layers in and out of the GPU but you’re also spending a lot of time computing the layer (while the VRAM consumption is not that big). And if you are willing to get a workstation with 512GB RAM, you could actually train something like GPT-3 fairly efficiently! You take advantage of the relatively small per-layer VRAM consumption to do minibatches layer by layer, storing the intermediates in main RAM: “Training Large Neural Networks with Constant Memory using a New Execution Algorithm”, Puddipeddi et al 2020 https://arxiv.org/abs/2002.05645v5

People don’t seem to talk about Searle’s Chinese Room as much as they used to. The idea was that you could have a massive database of answers to every possible question and build something that could pass the Turing test without being in any way intelligent. The response was “yeah, but that’s silly, a database of every possible answer to every possible question is unimaginably vast and physically impossible anyway, so you can’t sensibly reason about it”.

The success of models like GPT-3, though, suggest that you don’t actually need every possible question-answer pair to get Chinese-Room level performance on a Turing test, you just need a vague set of associations between words, and enough parameters to fill a flash drive. GPT-3 isn’t a sensible chat bot, but only because it hasn’t been trained to act like one — if you fed it a few billion chat logs could you make it into one? Quite likely.

What it still would lack is longer-term memory — in a Turing year you could ask it “Hey, ten minutes ago you mentioned your dog’s name, what was your dog’s name again?” Still, I wonder whether these sorts of consistency checks could be added without too much trouble.

> The response was “yeah, but that’s silly, a database of every possible answer to every possible question is unimaginably vast and physically impossible anyway, so you can’t sensibly reason about it”.

I like another sort of response more. If it fully behaves like a human in generating texts, and we cannot spot the difference no matter how many tests we do, does any meaningful difference even exist? It’s something of the same breed as philosophical zombie.

There is already a transformer model that compresses earlier text memories to do well in text prediction on a corpus of novels, where you have to remember all the characters and stuff.

Ooh, this sounds really cool and I hadn’t heard about it. Could you link to the paper?

It was a recent deepmind paper, there was also a blogpost about it:

https://arxiv.org/abs/1911.05507

I am officially shook. Congratulations, AI doomsday alarmists; I will stop mocking you guys in public.

I am actually pretty elated. It might be that, at long last, human-readable machine translation could emerge within my lifetime. There are a ton of foreign-language books I’ve always wanted to read but never could. Granted, the chances are still pretty small, but one can always hope.

Oh, and I’m still gonna mock AI doomsday alarmists in public 🙂

I challenge you to a public debate on the topic, then. Could be conducted via comment thread here, or video chat with your friends in audience.

Seems like you are still stuck in the “Then they laugh at you” stage from the saying. I’m trying to accelerate your progress to “Then they fight you,” for obvious reasons. 🙂

Sounds good, but I can’t commit to a video chat — my schedule just won’t allow it. If you’re willing to wait a bit for my (textual) replies, then I’m good to go. Er… wait, actually maybe I’m not. How does a “public debate” differ from a normal comment thread ? Are there some special rules, or what ?

Sounds good. A normal comment thread works fine, except maybe with the addition of some limits (we don’t want this to go on forever, right?) and a better-defined topic of debate. I propose we set the topic as:

Is it currently reasonable to worry about AI bringing about some sort of doomsday?

As for limits, I am super busy myself, so asynchronous is great. Maybe we say: We each get up to five comments total, no more than 500 words each? No time limit, except that it has to be done by, say, July 1st? Idk, what do you think?

Whoops, sorry, I replied to the wrong comment; see my reply below.

Not a good start to the debate on my part :-/

@kokotajlod@gmail.com:

I want to say “yes”, but I’ve already identified one hurdle: I think it’s totally reasonable to worry about unscrupulous human agents using AI to bring about some sort of doomsday. They don’t even need AI for that, they already have nukes, AI just gives them more toys to play with. Also, “doomsday” is poorly defined. Maybe we could amend the topic to something like, “Is it reasonable to worry about a runaway AI bringing about extinction of humanity (or perhaps just modern human civilization), in the absence of humans wielding it explicitly to do so ?”

Yes, that’s a good clarification. I accept your proposed topic definition. Well, actually, I’d like to make it “…bringing about the extinction of humanity, or something similarly bad,” to account for cases in which e.g. AI does to humans what humans did to whales or chickens. Is that OK?

Sounds good to me. Do you want to go first ? I guess in a real debate we’d flip for it, but I’m not sure how to implement that here.

I think I’d prefer you go first, since you probably already know the basic pitch, and the basic pitch is what I’d be giving in my initial comment. (Whereas I have very little idea of what your position is). But if you like I’d be happy to go first and give the pitch.

Ok, sure.

As far as I understand, the “basic pitch” is that, one day, a malicious or just “mis-aligned” AI will destroy humanity (and potentially any other intelligent species, should they exist); and that day is coming soon. Therefore, we need to be very worried about this scenario; that is, we as a species should spend a significant amount of resources on combating the AI threat — at least as much, if not more, than we currently spend on preventing global thermonuclear war, genetically engineered viruses, or asteroid strikes.

I would argue that such a scenario is vanishingly unlikely, so much so that worrying about it makes about as much sense as worrying about demonic invasions from Phobos.

The mechanics of this unfriendly Singularity usually involve an AI becoming superintelligent through a combination of recursive self-improvement and Moore’s law; using this superinitelligence to acquire essentially godlike powers; then using these powers to effectively end the world. All of this will (according to the scenario) happen so quickly that humans would have no chance to stop it.

I would argue that this scenario suffers from several problems:

1). The concept of “superintelligence” is poorly defined (to put it generously).

2). Modern-day AI systems are nowhere near to achieving general-level human intelligence, and there’s currently no path that takes us from here to there (though people are working on it).

3). Being super-smart (or super-fast-thinking) is not enough; in order to effect real-world change, you have to act in the real world, at real-world speeds. You also cannot learn anything truly new just by thinking about it really hard.

4a). Recursive self-improvement, of the kind espoused by AI risk proponents, is probably physically impossible.

4b). Many (perhaps most) of the powers ascribed to a “superintelligent” AI (assuming that word even means anything, as per (1)) are likewise probably physically impossible.

5). Humans are actually pretty good at stopping buggy software (sadly, the same cannot be said of stopping other malicious humans armed with perfectly working software)

Just to clarify, here are some things I am not saying (I’m just including these items because they tend to come up frequently, feel free to disregard them if they don’t apply to you):

100). Only biological humans can be generally intelligent, even in principle.

101). The current state of AI is at its peak and will never improve.

102). AI is totally safe.

Obviously, this isn’t an argument, but just an outline. I could proceed expanding on my points in order; or we could focus on any specific point if you prefer — but first, please tell me if my summary of the “AI FOOM” scenario is correct, since I don’t want to strawman anyone by accident.

Thanks! Excellent start to our conversation.

Here are my thoughts on your characterization of the basic pitch:

–Unaligned, not malicious. Malicious would be rather unlikely, but unaligned is the default; hence the importance of AI alignment research.

–*Might* destroy humanity, not *will*. Think of it like this: A technologically superior alien civilization opens a portal to our world. What happens? Maybe nothing bad. Maybe something very bad.

–There’s no need to think it will happen soon. If we thought aliens were maybe coming sometime in the next century, it would still be worth preparing. Bostrom, for example, actually thinks it is coming *later* than the median AI researcher!

Here are my thoughts on your objections:

1. Unaligned AI killing us all is not vanishingly unlikely. It’s certainly much more likely than invading demons from Phobos! There are multiple lines of evidence that support my claim here. a. It’s what the majority of AI experts think. b. economic models predict a substantial probability of it happening. c. tracking progress on important metrics in ML, deep learning, and computing suggests it might happen. d. arguments from first principles suggest it might happen.

2. The “AI Fooms to God” scenario you reference is just one extreme example of a worrying scenario. The overall concern is not dependent on that specific extreme scenario. You’ll be interested to know that most of the people working on AI safety, while they do take that scenario seriously, also take even more seriously more mundane scenarios in which progress is not so fast and AI is not godlike. These more mundane scenarios are also existential risks.

3. The 5 points you make below… I disagree with all of them, and/or think they don’t substantially undermine my position. Hmmm. How to proceed. You suggest I pick something to talk about… why don’t we talk about point 1? If there are other points you are particularly interested to talk about, I’d be happy to talk about them too.

You say “superintelligence” is poorly defined, to put it generously. (a) so what? Lots of things are poorly defined, but we still worry about them. This seems like an isolated demand for rigor. (b) What, specifically, are your problems with Bostrom’s definition, and why do they undermine the overall argument?

@kokotajlod@gmail.com:

O1: Regarding your objection 1 (I’ll refer to it as “O1”, just to avoid confusion), I absolutely agree that an immensely powerful ultra-superhuman unaligned AI would be very dangerous; but then, so would the Khan Makyr or the Vogon road construction fleet. I argue that such things are incredibly unlikely and/or outright impossible, not that they are safe. I fully acknowledge that unaligned AI can be dangerous; for example, shortly before the pandemic hit, Google Maps told me to take a right turn directly off a cliff (I didn’t listen, though).

O2: Regarding the “AI FOOM” scenario, the message I hear most often from AI risk proponents is that the rapid “FOOM”ing is what makes a runaway AI dangerous. If the AI develops slowly over a period of 50 years, maybe humans would have a chance to pull the plug. If it ascends from “glorified calculator” to “quasi-godlike entity” overnight, humans have no chance. I actually think this message does make sense, assuming you accept all the other premises of the AI safety movement (which I do not). So, my question is, what makes unaligned AI dangerous in the absence of the “FOOM”ing ? Can’t someone just turn it off when it starts making crazy demands on CPU time ?

Anyway, onwards to my point (1).

1). What does the word “superintelligence” mean ?

Bostrom says that it means something like, “an intellect that is much smarter than the best human brains in practically every field, including scientific creativity, general wisdom and social skills”; AI risk proponents often use the analogy “1000x smarter than Von Neumann”. But these analogies still do not explain what it means to be “super-smart”.

Modern researchers still argue (quite often vehemently) over the definition of human intelligence. Some say it is nothing more than the ability of a person to pass an IQ test, which does not correlate to anything useful. Others posit a whole spectrum of different intelligences, such as “emotional” or “kinesthetic”, which correlate with performance in specific domains. There’s also the notion of a “g-factor”, that correlates with performance in a wide variety of domains; however, this “g-factor” is poorly understood, and it is unclear whether it exists at all. Given all of these conflicting definitions, the notion of a nonhuman “super” intelligence simply amplifies the ambiguity, leading to super-vagueness.

Some AI risk proponents equate intelligence with the ability to think really fast. There’s some merit to this: if you asked me to solve a simple math problem, I might be able to puzzle it out with pencil and paper eventually; whereas Von Neumann could instantly give you an answer off the top of his head. If I could think 100x faster, and maybe possess some kind of read-write eidetic memory, maybe I could also appear to be as “smart” as Von Neumann. However, the problem is that if you asked me to solve something like the Riemann Conjecture, then sped up my mind 100x, I’d simply fail to solve it 100x faster; speed isn’t everything.

If I wanted to steel-man the definition of “intelligence”, I could expand it to something like, “the ability to quickly and correctly produce answers to novel questions”. A “superintelligence” would thus be able to quickly and correctly answer most questions (or, at least, most of the practical ones, such as e.g. “how do I convert the entire Earth to computronium”). In this view, the “superintelligence” is something like an oracle. But the problem is that oracles demonstrably don’t exist; probably cannot exist in principle; and there appears to be no path at all from either machine-learning systems or just ordinary everyday humans to virtual omniscience. Answering novel questions correctly is not some kind of a magic factor that you can scale simply by adding more computing power; it is a process that is still poorly understood, but that undoubtedly requires a lot work in the real, physical world.

I will expand more on this topic in points 2..4; but meanwhile, do you think my attempted steel-manning of the definition is fair ? If not, how would you define [super]intelligence, without resorting to synonyms of the word “smart” ? If yes, can you demonstrate some likely path from regular human intelligence (and/or e.g. GPT-3) to omniscience ?

O1: First of all, remember that “ultra-superhuman AI” is not the only thing that could kill us all; merely superhuman or even human-level could too. So it’s a bit of a straw man to focus on ultra-superhuman AI. But anyhow what’s your argument that it is of comparable likelihood to Vogons? Like I said, there are multiple lines of evidence pointing to it having substantial probability.

O2: I agree that rapid FOOMing makes the situation more dangerous, and I also think it’s reasonably likely to FOOM. Probably not in less than a day, but maybe in a few weeks to two years or so. But like I said, even if it happens over the course of many years it still could kill us all. History provides ample evidence for this; Cortes’ conquest of Mexico was surprising because it only took two years. But the subsequent European conquest of the rest of North America was not surprising, and very difficult for the natives to prevent, even though it took hundreds of years. For an example of a prominent AI safety researcher who thinks the FOOM scenario is unlikely (though he still thinks it is much more likely than you do!) describing how a slow and gradual takeoff could still be very bad, see here.

Point 1: Those explanations of superintelligence seem perfectly good enough for me. A machine that is better at politics, social skills, strategy, science, analysis, … [the list goes on] than any human? Sounds powerful. Also sounds like something that might happen; after all, human brains aren’t magic, and lots of smart AI scientists are explicitly trying to make this happen.

Like I said, you don’t need a precise definition to care about something. Your arguments here sound like the arguments made in section 2 of this paper on the impossibility of super-sized machines. It’s an isolated demand for rigor. Imagine you are Mr. S, recently back from the ‘alien homeworld,’ wondering what will happen to your beloved country. Might the aliens take over and rebuild it in their image? Yes, they might. Why? Because they have superior technology. What does “superior technology” mean though? There is no accepted definition, and all of the available definitions are vague. Oh well, I guess there’s nothing to worry about then!

Bostrom further distinguishes speed, quality, and collective intelligence, by the way. I think all three dimensions are worth thinking about.

I think what you are saying about oracles is not relevant.

I will expand more on this topic in points 2..4; but meanwhile, do you think my attempted steel-manning of the definition is fair?

Not really. I appreciate the attempt though! Basically, I feel like you’ve consistently characterized the “alarmist” position as being too dependent on a specific scenario that is extreme in many ways. Then you happily go on to poke at the extremities.

If not, how would you define [super]intelligence, without resorting to synonyms of the word “smart”?

Remember that I think this is unimportant; I don’t need to define things any more precisely than they already have been defined. But anyway, here are some more definitions:

1. Consider the space of all tasks. Consider the subset of tasks that are “strategically relevant,” i.e. the set of tasks such that if there were a single machine that was really good at all of them, it would be potentially capable of taking over the world. What I’m worried about is a machine (or group of machines) getting really good at all of them.

2. Consider the advantages humans have over other animals. Consider the subset of such advantages that allowed humans to take over the world, leaving other animals mostly at their mercy. I’m worried about machines having advantages over humans that lead to a similar result.

3. Intelligence = ability to achieve your goals in a wide range of circumstances.

Finally, I really shouldn’t have to demonstrate a path to superintelligence in order for the possibility of superintelligence to be taken seriously. Isolated demand for rigor strikes again!

@kokotajlod@gmail.com:

Regarding your (O1) and (O2), I broadly agree that AI could be incredibly dangerous in the wrong hands, just like any other tool. However, I think that without a “FOOM”, the danger from a fully autonomous AI decreases to the point where we don’t need to be critically worried about it. I could talk more about it, but I think this point is similar to my point (5), so I suggest we table it until we get there (we don’t have to go through all the points in order, either). It’s up to you, though.

For now, let me return to point (1), because I think I wasn’t clear enough in articulating my objection. I fully agree that an entity that was better than humans at virtually everything would be potentially quite dangerous. I could even agree that we could re-label “ability to do things” as “intelligence”. However, if we do so, IMO we lose the connection between this new meaning of the word, and what is commonly called “intelligence” or “being smart”. I think that AI risk proponents inadvertently engage in a bit of an equivocation fallacy when they use the word “intelligence” this way.