This is the semimonthly open thread. Post about anything you want, ask random questions, whatever. Also:

1. Comments of the week are Scott McGreal actually reading the supplement of that growth mindset study, and gwern responding to the cactus-person story in the most gwernish way possible.

2. Worthy members of the in-group who need financial help: CyborgButterflies (donate here) and as always the guy who runs CrazyMeds (donate by clicking the yellow DONATE button on the right side here)

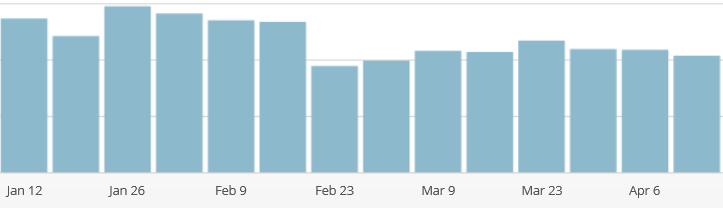

3. I offer you a statistical mystery a little closer to home than the ones we usually investigate around here: how come my blog readership has collapsed? The week-by-week chart looks like this:

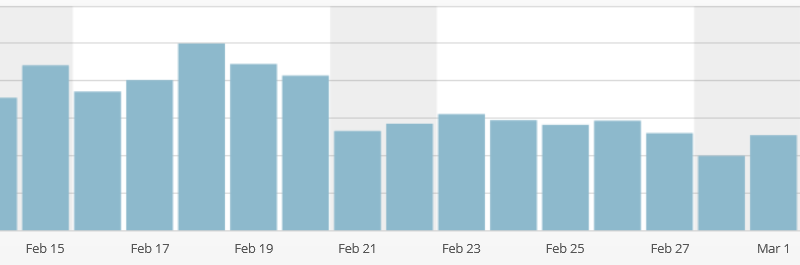

Notice that the week of February 23rd it falls and has never recovered. In fact, I can pinpoint the specific day:

Between February 20th and February 21st, I lost about a third of my blog readership, and they haven’t come back.

Now, I did go on vacation starting February 20 and make fewer posts than normal during that time, but usually when I don’t post for a while I get a very gradual drop-off, whereas here, the day after a relatively popular post, everyone departs all of a sudden. And I’ve been back from vacation for a month and a half without anything getting better.

I would assume maybe WordPress changed its method of calculating statistics around that time, but I can’t find any evidence of this on the WordPress webpage. That suggests it might be a real thing. Did any of you leave around February 20th for some reason and not check the blog again until today? Did anything happen February 20th that tempted you to leave and you only barely hung on? I get self-esteem and occasionally money from blog hits, so this is kind of bothering me.

4. I want to clarify that when I discuss growth mindset, the strongest conclusion I can come to is that it’s not on as firm ground as some people seem to think. I do not endorse claims that I have “debunked” growth mindset or that it is “stupid”. There are still lots of excellent studies in favor, they just have to be interpreted in the context of other things.

Didn’t you make a comment around that time that there would be fewer posts? Maybe people are just taking a break and then will come back when they think you are back to normal (I don’t find this too plausible).

Fewer posts means fewer people linking to your posts? (Maybe you can see these stats and this isn’t the issue.)

Maybe readership is actually back to normal and the earlier period was the unusual one?

Google has changed their algorithm for search a few times in the past few months. Maybe that has had an effect?

Just some really quick thoughts that could be tested empirically, possibly.

Also this may be a “Fooled by Randomness” kind of thing: starting Feb 18 and ending Mar 1 is a pretty linear drop-off, if you only look at the beginning and ending values. Only when you look at the points in between does it look like a big drop. The big drop may just be random and not indicative of anything. If the drop started Feb 18, maybe we should look there for clues.

We may also need to see a longer time period to identify a real break from long term trends.

“Didn’t you make a comment around that time that there would be fewer posts? Maybe people are just taking a break and then will come back when they think you are back to normal (I don’t find this too plausible).”

“Maybe readership is actually back to normal and the earlier period was the unusual one?”

My guess is that it’s a combination of these two. The few months before that graph starts, Scott posted a bunch of stuff that got a lot of outside attention. The untitled post garnered a lot of attention and was posted two weeks before that graph starts. These posts garnered a lot of new readers and, after Scott announced he was taking a couple weeks off, some of the new readers decided that they wouldn’t check while updates stopped and just forgot to come back.

I’m one of those crazy people who doesn’t use RSS, so when I want to know if there’s a new SSC post I’m reduced to actually visiting the site (like an animal). I probably check multiple times per day, more if it’s been a while since a post or if I’m particularly bored. If there are others like me, maybe we all started checking less frequently the day after Scott announced that there wouldn’t be any blogging for a while. That could explain the suddenness of the dropoff, and then its persistence could be part of some longer-term trend?

I also actually visit the site to see if there’s a new Scott post. When he says there will be fewer posts I still check just as often for that sweet sweet intermittent reward. And hey, this past week it has paid off, despite him saying that there would be fewer posts.

I would agree that it was probably a return to normal after a few unusually popular posts.

But I would also recommend not saying “expect fewer posts in the near future.” Your standard of “fewer posts” is still more posts than most bloggers. My general expectation of bloggers is for them to overpromise and underdeliver (not that I have any right to expect them to “deliver” since they are generally providing me with free entertainment/intellectual stimulation), so when I see “expect fewer posts for the near future,” I read it as “expect me to drop off the face of the Earth for an indefinite period.”

You tend to underpromise and overdeliver, but newer readers may not know that.

Oh, and post more about social justice, tribal politics, and similar things that make people angry.

He should, but not because it makes people angry. If anything, the way he does it probably helps people to be less angry and less stupid to each other about these things.

Yes, I also like Scott’s posts on these topics for the same reasons. But even when written about in an evenhanded, rational manner, these sorts of topics do excite people more. What sexy women and cute animals are to advertising, politics and social justice may be to blogging.

And considering that people like Ezra Klein definitely read Scott, it may not be too much to hope for that his writing actually affects national conversations in a positive way.

Yes, Google is the only plausible way that traffic could decline overnight. However, I see people saying 4 February, not 20 February. Also, the analytics software should report the proportion coming from Google. (Scott, don’t you have Google Analytics?)

I think it is pareidolia.

Or Facebook algorithms, or maybe Twitter algorithms. But the same applies there: people ought to track the change dates and Scott ought to be able to determine whether his traffic from these sources declined.

Thank you for using the word “pareidolia.” I learned a new word today thanks to you. I will now abuse this term by using it in every possible conversation for a week, driving my family nuts.

“We may also need to see a longer time period to identify a real break from long term trends.”

I know very little about time series analysis, but from what I understand there are ways to get at this question statistically.

Needs moar data – not enough to show changepoint, and differences by traffic source matter. My first thought was ‘if fewer search hits, then must be Mobilegeddon, but if referrals, it’s lack of political/economics blogging prompting traffic from other bloggers, and if direct or RSS, may be lack of quality’. All quite different causes & traffic patterns, all easily checked.
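For anyone who wants to try the changepoint idea raised above, here is a minimal sketch in Python. It assumes a single abrupt level shift and simply picks the split that minimizes squared error around each segment's mean. The function names and the example numbers are hypothetical, invented for illustration; they are not the blog's actual traffic figures, and a serious analysis would want more data, a breakdown by traffic source, and a proper time-series method.

```python
from statistics import mean


def sse(segment):
    """Sum of squared deviations of a segment from its own mean."""
    m = mean(segment)
    return sum((x - m) ** 2 for x in segment)


def best_single_changepoint(series):
    """Return (index, cost) for the best two-segment split.

    The split is series[:index] / series[index:], with at least two
    points on each side; cost is the combined squared error.
    """
    if len(series) < 4:
        raise ValueError("need at least 4 observations")
    best_index, best_cost = None, float("inf")
    for i in range(2, len(series) - 1):
        cost = sse(series[:i]) + sse(series[i:])
        if cost < best_cost:
            best_index, best_cost = i, cost
    return best_index, best_cost


def fraction_explained(series):
    """Share of total squared error removed by the best single split."""
    total = sse(series)
    if total == 0:
        return 0.0
    _, cost = best_single_changepoint(series)
    return 1 - cost / total


# Hypothetical weekly hit counts (in thousands), invented for illustration.
weekly_hits = [100, 102, 98, 101, 66, 68, 65, 67]
index, cost = best_single_changepoint(weekly_hits)
print(index, round(fraction_explained(weekly_hits), 3))
```

A split that removes most of the squared error on a short series is suggestive rather than conclusive. A steady linear decline also gets a "best" split, so it helps to compare against a straight-line fit before reading much into the result.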

I just read a book of first-aid advice for backpackers, published in 1910. How badly wrong would I go if I were to follow this advice?

My sense is that 21st-century medicine is much better when it comes to vaccines, treating serious illness, more impressive surgical techniques, antibiotics. But I wouldn’t think that basic first-aid techniques have changed much (thinking about things like broken or sprained bones, cuts, burns, colds, flu, drowning) especially in the backpacking context. With pain management, if anything we’ve probably gone downhill. (The advice for removing a foreign object from the eye begins with my new favorite phrase “First, cocainize the eye.”) Cocaine is also used for toothache and diarrhea, and calomel (mercury) for colds.

Here’s the list of basic drugs to carry on a backpacking trip: Calomel, dosimetric trinity, chlorodyne, intestinal antiseptic, quinine sulphate, elaterin, phenacetine, sun cholera, apomorphia hydrochlorate, digitalin, morphine, strychnia, cocaine (50 cents a tube).

EDIT: medicine used to be more poetic too. “If the patient is of strong physique and God smiles, he may not have septic fever.”

I know CPR has been modified a bunch of times since (did they even have CPR back then?)

I’d strongly advise against relying on that book. A lot of the medical procedures, as well as the available tools and materials, have changed a lot. Even nowadays things like CPR are regularly undergoing changes on a <10 year scale.

In my hypothermia book, essential for backpacking in New Zealand, there are a lot of techniques and data that only got invented/implemented after 1970. Nobody before then ran data analysis on success rates for different methods of reheating patients!

Interesting. It actually doesn’t discuss either CPR or hypothermia care.

I’m going to hazard a guess that the value of CPR on a backpacking trip is extremely small, in 1910 or today. Is there any discussion of straight artificial respiration, as might be used for a drowning victim whose heart is still beating? That would be more plausibly useful in that environment, and I think it was understood in 1910.

Hypothermia treatment, that’s definitely useful to backpackers. And it is an area where the state of the art has improved enormously over the past century. In part due to actual Nazi medical research, which obviously wasn’t available in 1910. For that matter, I understand there have been significant improvements even since ~1980, when I was both studying and receiving hypothermia treatment.

I’m not really sure how badly wrong you would go without a better idea of what the advice is.

From the specifics you’ve given, though… the drug list seems excessive. I include an antiseptic, maybe ibuprofen. I may have iodine pills if I can’t carry all the water I’ll need, but not with the first aid kit.

On the other hand, your drug list seems too short. I’d say the iodine pills are a must (always assume you might get stuck at least one day longer than you plan). I’d also add Imodium – diarrhea is no joke, and dehydration is a big hazard in a wilderness setting. If you’re hiking with a group, I’d also strongly recommend Benadryl. Anyone with known risk of anaphylaxis probably carries an epi-pen or two, but that’s a fast acting medication – obviously necessary if breathing is immediately endangered, but if you don’t simultaneously pop an oral antihistamine (which is slower but longer lasting) it’s possible that symptoms will return before you can reach help.

Other than that, you mostly need bandages etc. for wound and blister care, and ace bandages/athletic tape are seriously handy (and don’t weigh much). I’d also have a plan for turning your gear into a sling/splint for an arm/leg. Most injuries are going to be limb related, or cuts/burns/abrasions.

The biggest thing with wilderness first aid is wrapping your mind around the time scale – most of us spend most of our time in places where appropriate first aid is “plug any gushing wound and call 911”. Once you get outside of cell phone coverage (and even some places with it), 911 might be hours away at best. One outcome of this is that, as mentioned, CPR isn’t super useful – if your heart stops that far from a hospital, you’re probably dead. Same goes for a lot of other conditions that might be survivable, but only if medical care is reached immediately. If it will kill you in 30 minutes, you’re dead. Fortunately most stuff isn’t in this category.

Your biggest goals in wilderness first aid are 1) don’t get anybody else hurt trying to rescue 2) mitigate any immediate threats to life (airway, breathing, circulation (bleeding), “ABC”) 3) assess condition (what’s wrong, being particularly mindful of shock, head, and spinal injuries) 4) decide on treatment – evacuate, call for help, or carry on (for minor stuff) 5) stabilize/treat – wound care, pain mitigation, and mobility restoration.

Source: wilderness first aid class from NOLS a couple years ago, which was pretty fun and informative. My local REI offered it. Would recommend.

A 1910 guide obviously predates penicillin, and that’s a big deal. Unless you are certain of reaching a hospital within 24 hours, you probably do want a broad-spectrum antibiotic or two for an early start on dealing with potentially infected wounds or infectious diseases. Maybe specific antifungal or antiparasitics as well depending on where you are doing your backpacking. The good thing about being in the wild is that you’ll probably be dealing with wild strains of infection, rather than the resistant-to-everything versions that have evolved in and around modern hospitals, so basic antibiotics are still likely to work.

These are usually prescription meds, but if you’re serious and if you have any sort of formal training – for me, the Eagle Scout badge was enough – it’s usually not a problem to get your family GP to write out a prescription for some Zithromax every year. It should not need to be noted that “Scott Alexander” is not your family GP.

Pain medications beyond ibuprofen are a more sensitive matter these days, but still worth pursuing. There’s a world of difference between a day with a broken leg, and a day with a broken leg and no narcotics, and it goes beyond just the negative utils of experiencing pain. The victim’s ability to assist his own evacuation, or even just keep still when he needs to keep still, can be vital, and pain is very much Not Helpful when it comes to early healing. In the 1980s, our family had a standing prescription for Demerol, but doctors (and the FDA) are more sensitive about “gimme some narcotics, just in case” in the current political climate.

The antihistamines and antidiarrhoeals are, as Gbdub notes, potentially vital and fortunately nonprescription.

I’m an Eagle Scout and have taught the wilderness survival merit badge about 5 times. I’ve now lowered my confidence levels in everything I learned in BSA.

(Except knots; relevant experts tell me that BSA does a fine job with those (click ‘exit’).)

I am pretty sure that advice for treating snake bite has changed in recent decades, and that sounds like the sort of thing that would be good to know if you were backpacking in the countryside. For example, I have seen older books (I think from the ’70s) that advise making cuts on the bite and applying a tourniquet if the bite is on a limb. (In old movies people would try to suck out the poison, but I’m not sure if that was ever a seriously advised thing.) Nowadays, as I understand, the advice is to wrap the entire limb in a tight bandage, as tourniquets can be harmful, and cutting the wound apparently is useless. For all I know, there might be lots of old formerly accepted first aid advice that has been discredited in recent years.

Removing the venom one way or another is apparently still potentially effective, but it’s sort of like rescue breathing – it’s fallen out of favor because the average amateur is likely to muck it up and do more harm than good. And adding an extra wound is almost always a bad idea.

The reason tourniquets are a bad idea is because you can damage the limb by cutting off blood flow, and, even if it works as intended (keeping the venom in the limb), that’s bad – it increases the local tissue damage and makes it more likely you’ll lose the limb.

Do you mean rescue breathing as opposed to CPR? What is the risk of rescue breathing? CPR appears to me to be exactly opposite to your theory. It appears to me to be extremely dangerous, and yet extremely popular. Maybe people who are actually teaching about first aid give good advice about not doing it or extensive training in how to do it, but it is extremely fashionable to teach it to amateurs. For example, my high school made everyone take a 1 day course on CPR. I’m pretty sure that had negative value by producing people who would act beyond their competence, but even if not, compared to a 1 day course on basic first aid, it was a really terrible decision.

In the past decade or so there has been a push to remove rescue breathing from the CPR protocol for amateur rescuers, and recommend chest compressions only.

The logic is that in the case of cardiac arrest, chest compressions are critical for delivering already oxygenated blood to the brain, and interrupting this process to deliver rescue breaths is counterproductive (there is already enough blood oxygen for survival for a few minutes, the trick is getting it moving)

Also, the ideal ratio of compressions to rescue breaths is complicated, and depends on the number of rescuers and what exactly caused the arrest. So there’s a theory that it’s better to teach everybody simple chest compressions rather than expect non professionals to remember all the rules.

It looks like there have been a couple studies suggesting increased success with a chest compression only protocol, but I’m not familiar enough with the underlying issue, and in any case it doesn’t sound like the medical community has really reached a consensus.

As for chest compressions being dangerous, I understand that it can cause injury, but if you really are in cardiac arrest I don’t see how it could be more dangerous than not doing chest compressions.

As my CPR trainer explained:

Q: What do you do when you hear ribs crack?

A: Keep going. Ribs heal. Death doesn’t.

They also implied that people have been overselling the danger of chest compressions. But then, they also said that it usually doesn’t work.

If you assume away the problem, you’re fine.

EMT here.

The main focus of CPR is circulating blood, which still contains available oxygen, to the brain; that means chest compressions. Many people (even in EMS) involved with CPR will take too much time away from compressions to provide ventilations. Lay people are even worse, hence the focus on hands-only CPR.

The big problem with rescue breathing is that most people get hung up on the “mouth-to-mouth” aspect of it, and so they freeze, instead of starting the important part, the compressions.

Even in EMS we are starting to take the approach that ventilations shouldn’t be provided until 10 minutes into working a cardiac arrest.

I have a long standing issue with the removal of tourniquets as the recommendation for serious bleeding.

The new fad appears to be “apply pressure above the wound” which seems to have all the disadvantages of cutting off bloodflow while being far less effective.

If I accidentally open an artery in my arm or leg I’m quite happy to tell anyone who tries to stop me applying a tourniquet to fuck off: I’d rather lose some feeling in a limb than die.

I’m of the opinion that it’s a legal choice rather than a medical one: someone who loses a limb is pretty much certain to sue someone, anyone. Someone who dies in an accident isn’t going to sue and their next of kin are unlikely to be able to go after the first aiders for failing to keep the person alive.

Plus the numbers appear to be wrong in some recent first aid books, you can cut off bloodflow to a limb for more than 10 minutes and still have it work perfectly well later. Hands and feet are pretty resistant to reasonably short term loss of oxygen.

Just looked it up and the first first aid guide I found talking about it recommended getting signed written permission to apply the tourniquet from the person before doing so: this being the person who is currently bleeding to death. fucking 100% lawyer change to first aid rules rather than medical one.

http://www.wikihow.com/Decide-to-Use-a-Tourniquet-%28Home-Remedy%29

better they bleed to death than sue you i guess

Last I heard, tourniquets were coming back into favor based on results gleaned from Iraq and Afghanistan.

Tourniquets are definitely coming back. They’re a major reason, for instance, that only three people died from the Boston Bombing.

Late Wittgenstein. Thoughts?

Perhaps yours, first?

Better than early Wittgenstein. But unless you are interested in the history of philosophy, you are honestly better off just reading Eliezer Yudkowsky’s “A Human’s Guide to Words” instead.

I thought it was interesting that the writer of Ex Machina was so enamored with *early* Wittgenstein in particular.

Overrated. There are a few interesting ideas in PI, but they’re buried in a cryptic mess.

Interest in late Wittgenstein among philosophers has almost completely collapsed. My own diagnosis is that this is deserved, and the story is roughly this: early Wittgenstein was associated with Logical Positivism. Late Wittgenstein is anti-LPist, so when LP went spectacularly out of fashion, criticisms by someone who had been associated with the movement seemed particularly appealing (in an “even one of their own heroes figured out it couldn’t work” sort of way). Hardly anybody bothers to hate on LP any more, because it’s been dead for too long (there are now even substantial numbers who have come around to the view that it was unfairly maligned), so the main source of late Wittgenstein’s fashionability has disappeared.

“interest in late Wittgenstein among philosophers has almost completely collapsed.”

That’s not my impression of the field at all. Maybe it is more true wherever you are. Here in the UK though, that statement couldn’t be further from the truth. In one of the departments I’ve been in over the past few years, it was explicitly but unofficially said that you had to “like Wittgenstein” to get into the department; we had around 4 staff working explicitly on Wittgenstein and a couple of others who work with Wittgenstein but on other areas. In another department, the head of department was explicitly a late-Wittgenstein/Ryle scholar and we had about 4 (out of 13) primary Wittgenstein scholars on staff (again, these weren’t ‘just early’ Witt scholars). At Cambridge, where I did my undergrad, Wittgenstein was the only named philosopher to have a whole paper devoted to him, a number of the scholars there routinely cited Wittgenstein approvingly as the most important philosopher of the 20th century, and so on. I get a similar impression just from speaking to other philosophers generally: *a lot* explicitly cite Wittgenstein’s work as a heavy influence on their work, and it shows. And there’s plenty of activity on Wittgenstein directly as far as I can see: I could easily go to a couple of large Wittgenstein-specific conferences in the UK alone every year if I wanted to.

Fair enough, I only know the U.S. scene. I know that there are some big differences between that and the UK scene, and I’ll take your word for it that this is one of them.

My impression is more along the lines of severe evaporative cooling: the remaining Witters really, really think he has The Answer,

Wittgensteinians (and I count myself among them) do tend to be pretty… intense, but I think ’twas ever thus (e.g. with a number of his students being life-long disciples). But I think Wittgenstein has had a fairly undiminished influence in philosophy more broadly: there are plenty of big names who’re clearly and explicitly influenced by him (Dennett, Hilary Putnam, Simon Blackburn, Michael Williams, Robert Brandom). Actually, it’s probably more revealing to try to list people who are definitely not influenced by Wittgenstein, because his influence seems to be felt a lot more broadly. If anything I would use a metaphor of how Wittgenstein has so permeated the atmosphere/changed the landscape or whatever, that it’s hard to discern clearly where he’s influential, because his influence is just everywhere (apart from a couple of outposts that are clearly anti-Wittgenstein, like Fodorians or hyper-formalists or naturalists).

‘Naturalists’ are a rather large group in contemporary philosophy though, at least in the US. (For non-philosophers, a naturalist here isn’t just someone who doesn’t believe in God/the paranormal/mind-body dualism, but rather someone with a particular (very vaguely defined) view on the relationship between philosophy and science.)

Philosophical Investigations helps me sleep at night. That is to say, my bedframe is broken, and I’m propping it up with a pile of books, PI included.

I’ve seen ideas from PI pop up in various places. From my own inexpert viewpoint: the family resemblances idea has been picked up by psychologists and linguists (e.g. Rosch, Lakoff) quite successfully IMO, and all that has found its way into EY’s guide as mentioned by jamieastorga2000 above. The business with language games doesn’t seem to have been picked up as such – there’s been a fair bit of development of pragmatics but not along Wittgensteinian lines.

I’ve seen ideas from PI crop up in cultural anthropology: well, “seen” in the sense of someone on Language Log pointing to cultural anthropology and saying “the horror! the horror!”. Also in other areas which seem influenced by the whole postmodernism thing.

I was reading a Daniel Dennett book, and he said he read PI and was very influenced by it… but got confused when he encountered lots of Wittgensteinians and found that he didn’t think like them at all. Perhaps the cryptic nature of PI makes it very ambiguous in practice, making it so easy to read your own personal ideas into it. Sort-of like a Rorschach test.

My personal thoughts: there are some interesting ideas there; PI is fit for raiding, not so fit for fixing up.

Agreeing with Peter. Kripkenstein is a good guide for a raid, IMHO.

“I’m all in favor of googaboogabloo,” Tom probably said.

“Gather up the boys. We’re going after that no-good rotten scoundrel,” Tom possibly said.

“The waiting is the hardest part,” said Tom pettily.

“Fortunately, the knife only grazed my spleen,” Tom said organically.

“I’m… urk… having a seizure,” Tom said twitchily.

“I’m taking her flying in a hot air balloon,” Tom updated.

“The trajectory of the stack of paper was a perfect parabola,” Tom remarked.

“I’m conducting a performance review of our company’s telecommuters,” Tom elaborated.

What is this tomfoolery?

In an attempt to understand the distribution of story lengths and the empirical clusters they form, I copied the text of several short stories, novellas, and novels into word count tools (then rounded the count to two significant digits, since different tools gave slightly different word counts), and tried to sort them into categories as well as I could carve reality at the joints. Here’s what I got.

I relied a lot on third-party and first-party descriptions to help me classify the boundary cases (for example, Iceman called Friendship is Optimal a novella and Wikipedia calls “Second Variety” a short story), and also on some other characteristics about the pieces themselves (like the fact that True Names has always been published as part of a collection but never by itself, or the fact that “The Star” won the Hugo award for best short story). Children’s novels were particularly frustrating, since it appears they can be novella length and still get published as standalone books. But the shortest adult novel I found was Fahrenheit 451, which is at least somewhat above the cutoff.

Anyway, the whole point of this was to help me organize my fanfic reading list, which it has done. I can now sort fanfics into one of the three categories, and will be able to guesstimate how long it will take me to read them based on their length and the amount of time it has taken me to read similar pieces in the past.

What was the point? If you want to look for clusters, why bother with labels? If you want to know how long it will take to read, why bother with clusters and not just stick with raw word counts? (Maybe the answer is that you don’t encounter word counts, but do encounter the labels “short story” and “novella”? Now you know that they are consistent, but cover broad ranges.)

Are you aware of the Hugo categories? Half of your “short stories” count as Hugo novelettes, splitting non-novels into three equally common categories.

I have never seen the label “novelette” used outside of the Hugo and Nebula award categories, but I have seen several people call works novellas. There also doesn’t seem to be a break around the 7,500 word mark in the stories I sampled, but there does appear to be a several thousand word gap around the 17,500 and 40,000 word marks (though the latter only appears if one ignores the children’s novels and Friendship is Optimal).

The three remaining SF magazines (Analog, Asimov’s, and F&SF) all break down their contents by novella/novelette/short story. Back when magazines were a major outlet for original genre fiction, this was an important distinction for the publishers. It may be useful for the readers now that internet publication is a thing, but I haven’t seen it come into common use there.

Huh, so they do. I never noticed that. Thanks!

Historically, novellas were more popular among genre fiction authors, and there’s a number of award groups that retain the technical category, typically at <40,000 words. Now, this category is near-extinct, even compared to the already-sparse short story market. The cutoff between novella and short story was more arbitrary and generally relied more on structure or publishing method than word-count, though award groups usually put the cutoff point around 7,500 words.

Part of this reflects a drift in the size of the community and its expectations. Big-name publishers would regularly take in 40k-60k word books during the Asimov days, while after the early '90s most publishers will prefer 80k+ word-counts for any unsolicited manuscript. There's been the start of a revival for the novella category, between ePublishing and smaller boutique publishers, but it remains a pretty small category for general scifi and fantasy — even well-recognized authors usually can only get it released as part of an anthology or for children's lit.

There probably is an underlying group of Natural Categories, but I don't think they strongly tie with any real-world usage. As a writer, it's hard to push the "Single Scene and Event" popularized in short stories beyond 15k words. Novellas and novelettes can stretch or compress to a greater extent than that, but <10k words rushes very heavily and much more than 30k tends to drag with a two-act structure, and two-act structures start fraying after 55k words. There's another grouping for Very Very Long works that doesn't really have a name (for online works, usually well-defined by the 500k+ words or the various online 1,000,000+ word monsters; for mainstream publishers, think Stephen King's longer novels or George RR Martin's oeuvre), that tend to use yet another different group of narrative structures that don't really resemble conventional act-based structures.

As a reader, and for works of normal complexity, the average adult can go around 16,000 WPH, while people who read more regularly can average 20,000 to 30,000 WPH (with higher sustain and comprehension). This is only a rough estimate, though, and you’ll usually go through works with simpler structures (one-scene, one-act, two-act) faster than more complicated ones (three-act, four-act, or saga) if reading for full comprehension even if they could somehow have the exact same word count.
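The arithmetic in this sub-thread is easy to put into a few lines of Python. This is only a sketch under stated assumptions: the category boundaries are the 7,500 / 17,500 / 40,000 word marks mentioned above (the award-style cutoffs), a count that lands exactly on a mark is assumed to fall in the longer category, and the default reading speed is the 16,000 words-per-hour figure quoted above. The function and constant names are made up for illustration.

```python
# Word-count marks mentioned in the thread; each limit is the first
# count that no longer belongs to the named category (an assumption
# about how exact boundary values are treated).
CATEGORY_LIMITS = [
    (7_500, "short story"),
    (17_500, "novelette"),
    (40_000, "novella"),
]


def classify(word_count):
    """Label a work by length using the thresholds above."""
    if word_count < 0:
        raise ValueError("word count cannot be negative")
    for limit, label in CATEGORY_LIMITS:
        if word_count < limit:
            return label
    return "novel"


def reading_hours(word_count, words_per_hour=16_000):
    """Rough reading time in hours at a given words-per-hour rate."""
    if words_per_hour <= 0:
        raise ValueError("words_per_hour must be positive")
    return word_count / words_per_hour


# Example: a hypothetical 32,000-word fanfic at two reading speeds.
for speed in (16_000, 30_000):
    print(classify(32_000), speed, reading_hours(32_000, speed))
```

As noted above, the speed figures are rough, and structure matters as much as raw length, so the result is a guesstimate rather than a schedule.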

Between about 25,000 words and about 80,000 words, there is the Unpublishable Void: no markets.

‘cept nowadays you can go indie.

While I applaud your industry, I don’t really get the point of it. That seems to me that it would only work for complete fanfic works, not works-in-progress (which could be any length from Chapter 68 to not-updated-since-2011).

And if you’ve already read the story/novella, you have a good idea how long it took you, so if you’re looking at (say) a five-chapter new work, can’t you guesstimate “Yeah, I can get through that in two hours” or however long it takes, without needing to go to such arrangements?

That being said, I actually have no idea how long it takes me to read anything – I generally tend to keep reading straight through until finished, and I also generally only stop reading straight through if I’ve either started reading late at night (so I do need to get at least five hours’ sleep) or the book is very, very long.

Most recent book I read was 400 pages in length and I split that over two nights, because (a) I could only start reading it about 9 at night and (b) I have to get up for work in the mornings. If I’d started reading it on, say, Friday night I’d have read straight through until finished.

I have pretty much committed to only reading finished fanfics (with one or two exceptions grandfathered in from before I adopted this policy). The little copy of gwern that lives in my head tells me that there is no reason why ongoing fanfiction should be any better quality-wise than finished fanfiction, and since there are so many good finished fanfics for me to read, why should I go through the trouble and uncertainty of following unfinished fanfiction? Incidentally, he has also convinced me that there is no point buying the latest science-fiction novels and stories and that I was better off just reading whatever award-winning decades-old science-fiction I happened to find at libraries and thrift-stores. Good ol’ gwern.

You’re wise to stick to completed works.

I think most of us have probably suffered the pain of getting really involved in an excellent story, reading the last instalment, and then looking at the date (to find out when the next chapter is likely) and finding out that the author hasn’t updated in one/two/the time the Y2K flap years 🙂

I hope this doesn’t sound too critical, but: You misspelled “word”. I don’t think that reality has any joints to cut at; at best the publishing industry has joints to cut at. Your sample size isn’t very large, and it’s not clear what the inclusion criteria are; are they just works you’ve read? The presentation isn’t particularly reader friendly; could you at least right-justify the numbers?

My favorite posts of yours are the critical analyses of social justice stuff. With the Ferguson/Garner/Trayvon, etc. stuff slowing down the last few months, perhaps people like me aren’t checking in as often? I’ve seen several Patheos bloggers asking similar questions lately, so maybe it’s something else. Could be blog fatigue, outrage fatigue, etc. There were so many social justice-y catastrophes this past fall, I wonder if a lot of people are just tired of the internet. I know I’ve quit Facebook and pared down my blog reading a lot in the past few months.

I recall a post awhile back where you compared your page views to their topics, and “things i’ll regret” was the runaway winner. Haven’t seen many of those lately. So…regret more things?

Any links to the Patheos people talking about this?

Here’s the most recent one I saw, with replies by Hemant Mehta and James McGrath. This particular blogger blames Facebook.

http://www.patheos.com/blogs/mercynotsacrifice/2015/04/22/is-facebook-screwing-bloggers-or-have-readers-sharing-habits-changed/

He may have a point. I saw a webcomic that was complaining about how facebook hides his posts from fans.

http://www.sheldoncomics.com/archive/150128.html

I think this is one of those “be careful what you optimize for” things. The blog has seemed more technically-oriented and less controversial lately, which could explain a decline in hits, but it’s not clear that’s a bad thing. Maybe the answer is to cultivate a smug sense of superiority over your low traffic compared to the Gawker Media Empire, and then you won’t feel so bad about it.

What you say makes sense except for the suddenness with which it happened.

The weather is getting better; perhaps people are getting out more? There were a lot of snowed-in people this winter.

Spring break?

This was my thought too because feb 20th was the day the weather turned for the better.

How did it look last year? Lots of people were snowed in last winter too.

February 20th is famous to me because a family crisis began that day, which kept me occupied and offline for at least a week or so. It was a big deal to us, but I wouldn’t have thought it would cause such a disturbance in the Force. Perhaps that was vice versa.

In enough of the world at once? This is a pretty geographically diverse readership, I expect.

I also do want to say that your medical/psychological analyses are some of my favorite posts on this blog. Maybe it’s not needed, but I figured positive feedback for doing social justice stuff should be counterbalanced with positive feedback for technical medical/psychological stuff.

I enjoy the fiction, ethics, and the more philosophically based posts.

My favorite is probably the steelman arguments for positions he opposes; generally they’re far more thought-provoking than the actual arguments made by many of the sides he’s steelmanning.

Broken website theory: For some reason, coding is getting a lot better. There are far fewer broken websites, which means there are less hooligans around that might happen upon a SSC post.

Abortion: a sharp drop in 1990 means that there are fewer people starting to read it at around age 25, and nobody is replacing those who “age out” of reading SSC.

Or maybe it’s an increased NSA presence online — people sense that and choose to just stay offline.

Come to think of it, the decrease in numbers is probably due to a multi-factorial trend.

🙂

This made me smile.

That’s the same as my guess, around February or so the political posts become fewer and further between. Then it takes until late in the month for people to notice.

It could just be, as suggested, people paring down their feeds. I’ve done a bit of housekeeping myself about the blogs I follow, and the ones I’ve culled have been because:

(a) this only makes me angry and if the only reason I’m following this is to be OUTRAGED and start typing angry responses, that’s not a good enough reason for either me or them

(b) it’s not that they’re bad or worse than any of the others I follow, but they’re new on the list and I’m loading too much on my feeds and I need to drop someone and sorry, new person, it’s going to be you because I’m sticking with my old reliables.

People may stop following not because you’re not a good writer but simply because they need to prune their reading lists and you’re the new guy so last in, first out 🙂

Yeah, but what are the chances of so many people deciding to cut SSC at the same time? If it really were people just paring down their reading list, we would expect a gradual decline rather than 30% of the readership going *poof*.

I suppose another important question is whether that 30% loss is among established, regular readers or with one-off readers who get linked here from other sites (usually in response to posts that SA regrets writing).

RE #3, have you tried comparing the drop in traffic with the trends in incoming traffic from the Financial Times, which has included you in its “Further Reading” link dumps several times in the past few months?

Your crazymeds donation link is not working.

Does anybody else associate rationalism/Less Wrong with disliking spicy food, or am I projecting?

I associate it with the opposite, assuming both are partially caused by the “openness to experience” personality trait. But I admit I haven’t had the chance to evaluate many LWers’ culinary tastes.

I associate LW/rationalists (and nerds in general) with sensory issues, and sensory issues with a dislike of intensely flavored foods, including spicy food. I don’t think that it’s a majority, though: just a slightly higher than baseline dislike for spicy foods.

Not me, though. I love spicy foods to death.

I think that nerds in general are pretty enthusiastic about spicy food. Maybe that’s another sensory issue.

From what I’ve seen, it’s either one extreme or the other – either no time for spicy food, or the hotter the better. I have watched dozens of nerds (as a participant) basically form ranks over the likes of Christmas and anniversary parties. It definitely seems like a sensory issue.

One data point against your impression: I consider myself a nerd and I like moderately spicy food, but not very spicy food.

Well I think spicy food is just kinda OK!

I hang out with a lot of young Australian computer-science/videogame nerds. Food groups are:

– Pizza (Often covered-in-meat varieties)

– Various Asian – once a week one of the groups visits a food court in what is roughly the Asian district in the city. Chinese is probably the most popular.

– Indian. A different group gets Indian takeout on a weekly basis.

– Pasta. It’s a fallback for when people don’t want to get takeout – probably mostly a convenience thing.

There’s a Mongolian BBQ place that’s pretty popular with my friendship group as well, but that’s a pretty customisable taste.

Out of that set, really only the Indian food is particularly spicy, and it’s not usually extremely-spicy curry that gets ordered (Vindaloo is about the limit).

The closest thing I have to an association between these is that

1. Razib Khan is kind of LW-adjacent

2. Razib Khan likes absurdly spicy food.

So, weakly the opposite.

The most recent Saturday LW party in Palo Alto had, among many other things, a spicy Korean dish, and it seemed popular enough.

White People.

I assumed we were correcting for broad demographic categories like race.

I mostly associate rationalists with the following passage from Harry Potter and the Methods of Rationality:

Nerds in general I tend to associate with this entry from ESR’s jargon file:

Note that I am relying on other people’s accounts because my taste in food is highly atypical. I am a very picky eater who tends to stick to the same few dishes, prefers plain, lightly seasoned food, and has a strong dislike of spicy food. I also eat my steaks well-done, which I am given to understand is considered a hanging offense in foodie circles.

It’s funny, I felt kind of insulted by that passage when I first read it, because I’m the kind of person who orders the same thing over and over again at restaurants – and just for that, I’m written off as a curiosity-lacking mutant!? I eventually realized that it’s probably just a typical mind thing, though – I’m a picky enough eater that most items on the menu at a restaurant are going to be of negative value to me, so when I find something I like I usually stick with it. I can imagine, though, that if most menu items were likely to taste good to me, then yeah, I would be frustrated by not being able to try all of them.

Apropos of this discussion, my strategy for ordering at restaurants is to find the spiciest thing on the menu and then order it every time, except that first I check for any new/interesting spicy-sounding things. So I’m more varied at, say, a Thai place, than I am at a generic family restaurant.

You may have just reached the saturation point in Feynman’s Restaurant Problem earlier than most.

In the other direction: Last time I was in Italy (Note: I speak perhaps five words of Italian) I deliberately did not even attempt to find out what the dishes were, I just ordered whatever was first on the menu unless I recognized the name, in which case I ordered something else.

This conquered Choice Paralysis utterly and also gave me a number of truly excellent surprises – and one dish that was absolutely terrible.* As such, I recommend the method to everybody who is not a picky eater.

*I’m sure the chef had prepared it right and the issue was entirely predicated on my taste buds

The point of trying things is to find out what you like. Which is hot and sour soup, and Singapore needles.

*screeching ethical roadblock*

No, no whale for me thanks. The swallowing live octopus thing also seems unnecessarily cruel and unusual.

Might give the fugu a try once, though I believe the farmed variety has no neurotoxin.

CONTENT WARNING – Animal cruelty

I believe fugu is also not great from an animal suffering perspective, because the fish has to be alive until unusually late in the preparation process, i.e. it’s still alive when it’s being cut up.

[This video shows a living pufferfish being cut up]

https://www.youtube.com/watch?v=JmCzfeiqjj4

Mammalian chauvinism is in effect, though the more I learn about fish the more sentient they seem.

Would not consider fugu anything but a once off experience in any case.

The Jargon File often strikes me as somewhat dated. This is one of those times, at least as regards the details, although I think the top-level trend (ethnic, vaguely health-foody) is still pretty accurate. I suspect that comes partly out of openness to experience and partly out of nerds’ wariness-to-hostility toward the cultural mainstream, and I’d expect both to be pretty stable.

But those details? Americanized Chinese is pretty thoroughly mainstream now, and modal nerd tastes seem to be shifting towards the likes of Vietnamese, Korean barbecue, Indian, or Ethiopian. (I expect tech’s sizeable population of immigrants and children-of-immigrants also has something to do with this.) Modern nerds also seem to be overrepresented in pursuing home cooking and preservation methods that have largely fallen out of favor in the mainstream; I make my own pickles and kimchi, and I know a lot of nerds that maintain their own sourdough cultures.

The Jargon File was under criticism even many years ago for Eric Raymond writing as though he is typical of hackers. This may be one of the parts that is not so much dated as it was a case of one person typical-minding.

But surely the food entry predates him.

He worked on it for a *long* time. The last pre-ESR version was 1983. He maintained it from 1991-2003. The food section was added between 2.2.1 and 2.3.1 and that was after he had started maintaining it.

Edit: The main entries came from other people, but the end matter was written by him.

I definitely remember thinking that the section on hacker politics seemed like ESR drastically self-projecting.

Aside: he occasionally comments here; I wonder if he’ll see this discussion.

To me its datedness is part of its charm, though. It paints a picture of a certain bygone era very evocatively, I think. Probably best regarded as more of a historical document than a contemporary reference, though.

The sourdough and kimchi connection rings a bell to me. Some of my arguably-geekish-though-not-quite-hacker friends do the same.

Oddly, I’d have thought rationalists of that stripe would be very enthusiastic for spicy or ‘foreign’ (depending on what your native cuisine and therefore what ‘foreign’ means to you) foods because of the whole idea of being open to new experiences, examining your biases, and – sorry – a bit of showing off about being sophisticated in their tastes 🙂

My somewhat caricatured, straw-rationalist vision of a LW type hates spicy food. I guess I get this partly from the enthusiasm for soylent/mealsquares, which suggests a view of food as more of an inconvenience than a source of sensory pleasure. (Actually, I suspect a lot of people have this attitude towards food; it’s just that rationalists, as is their wont, take it to an extreme.) I also remember reading a post or comment in which the poster was baffled by how anyone could enjoy spicy food, since the sensation of hotness is, strictly speaking, a form of pain.

Then again, there is also the fabled hacker penchant for spicy food. As well as ESR’s mention of it in the jargon file, cited below (or is that above?), I seem to recall Steven Levy mentioning in his book Hackers that the original group of MIT hackers would competitively eat the spiciest thing on the menu at east Asian restaurants.

Do I contradict myself? Very well, then I contradict myself.

I don’t think this is so much a contradiction as it is time-differing preferences. Sometimes I want an interesting sensory experience, in which case I’ll go get Thai or make something out of Fuchsia Dunlop’s Sichuan cookbook, but sometimes I just need to replenish nutrients, in which case I’ll drink some Soylent or make myself a peanut butter sandwich or, rather too often, just eat some fast food.

(uh, if the Thai/Sichuan wasn’t a clue, I greatly enjoy spicy foods)

I like spicy food, though I’m not sure whether I’m a rationalist.

There’s been some talk about flavors on LW last week. There’s even a poll that goes against this hypothesis.

(I don’t have that association at all)

I weakly associate LessWrong with kink/BDSM, and associate liking spicy food with masochism, so transitivity yields a (weak) association opposite from yours.

data point against:

I’m dominant/sadist in the bedroom, but I like very spicy food.

I think pain-inflicted-by-others and pain-inflicted-on-oneself are very different things, psychologically.

For example, people perform feats of athletic endurance that are quite physically unpleasant, but this is to demonstrate to themselves and others how tough they are, not to show submission. If eating spicy food was about the pain (which I think is wrong anyway, since most people build up a tolerance to capsaicin), it would likely fall into the proving-your-mettle category, not the almost polar opposite showing-you’re-at-the-mercy-of-the-sadist category.

I find it interesting how much the responses treat food as a philosophical issue. While psychology does affect sensory perception to some extent, how one experiences food is, I believe, determined primarily by culture and physiology. People who like spicy food aren’t more adventurous or braver or anything like that. They simply have taste receptors that produce pleasurable signals when presented with spicy foods.

You’re projecting. I love spicy foods. In fact, prior to living with my fiancee, I basically put z’hug in everything I cooked except for sweets.

What probability would you assign to the idea that there has been, on net, more pain than pleasure in our world so far? I’ve been thinking about this lately and I’m honestly not sure what I think.

Seems like a toughie that contains lots of hidden definitional/preference issues about pleasure and pain, in addition to the empirical question of what happens to an average organism.

Say a grass plant grows happily for a month but in its second month suffers a drought that floods it with stress signals. Was the grass pleased at all by its normal growth? Was the pain of drought more intense? (Pretend you agree with me that plants’ pain is measurable on a similar scale to animals’.)

But if you held a gun to my head, I’d say 70% there’s been more pain than pleasure overall. This is with stress and strong disliking grouped into pain, but fulfilled wants not grouped with liking.

This seems like a weird example. Plants are pretty clearly not conscious, so grass can’t experience pleasure or pain.

If you have to use the word “clearly”, it often isn’t clear.

Do you want to tell me that plants are conscious? How is that supposed to work? They don’t have a brain or a nervous system, or anything comparable to it.

As is usual in biology, we might not know as much as we think we do. Mimosas appear to have memories.

Pain isn’t just a representation, and it isn’t just an aversive representation. (That would include itches, for one thing.) It’s an internal state that typically but not always signals damage. We learn to refer to it before we learn any neurology, but particular neural processes are what it is.

To play devil’s advocate: plants sense damage and release poison into their own tissue to defend themselves, plants warn other plants about herbivores by releasing chemicals into the air, and some plants will curl leaves away from touches.

Indeed Plant Neurobiology is a real area of study:

http://www.sciencedirect.com/science/article/pii/S136013850500018X

Now I wouldn’t bet money on any particular plant having notable information processing ability but I’m not so certain that it’s safe to say that there are definitely no plants anywhere on earth with an information processing ability similar to that of an insect or crustacean.

By pain, I have included not just representations in neurons, but representations in general. A human who has stubbed their toe might represent pain by certain patterns of neurons firing, and certain changes in body chemistry. A tree getting eaten by beetles might represent pain by activating signalling pathways related to stress and injury, and attendant changes in tree chemistry. Both of these representations are correlated with similar sorts of insults to the organism in question, and both evolved to manage reactions to those insults in a conceptually similar way (though the reactions themselves are quite different).

Now, I care much more about the human’s pain than the tree’s pain. But the question was about pain in general, not about pain weighted by how much I care about the organism in question.

Looking at it evolutionarily, you’d have to ask whether pleasure-seeking or pain-avoidance is *generally* more effective in promoting fitness-increasing behaviors. That’s a hard question.

What probability would you assign to the idea that there has been, on net, more pain than pleasure in our world so far?

In the human world, mostly pain. In the animal world, mostly pleasure. (Plants more pain perhaps.)

In the wild, an unhealthy animal doesn’t live long enough to experience long-term pain. Plants can live on indefinitely in an unhealthy state, as do civilized humans.

What fraction of all animals that ever lived, lived in feed lots? I’d guess not that tiny.

I would guess very tiny. You may be forgetting just how small a fraction of Earth’s history contained feed lots.

I’d guess that it might be significant for large animals, but for every one of those in a factory farm there are many more of the six-legged majority, often living on that large animal.

It can’t be significant for large animals. The claim was about animals that ever lived. There have been large animals for several hundred millions of years. There have been significant numbers of animals in feed lots for (I’m guessing) less than a century. So unless the modern population of feedlot animals is more than a million times as large as the average number of large animals over the past several hundred million years, the claim cannot be true.
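A back-of-envelope version of that argument in Python, as a sketch only. The 300 million years and the 100 years are stand-ins I picked for "several hundred millions of years" and "less than a century", and the calculation assumes feedlot and wild animals have comparable lifespans, so that animal-years track animal counts.

```python
def required_population_ratio(wild_years, feedlot_years):
    """How many times larger than the long-run average wild population the
    feedlot population must be to match it in total animal-years."""
    return wild_years / feedlot_years


def feedlot_fraction(population_ratio, wild_years, feedlot_years):
    """Fraction of all animal-years spent in feedlots, with the average wild
    population normalized to 1 and the feedlot population set to population_ratio."""
    feedlot_animal_years = population_ratio * feedlot_years
    return feedlot_animal_years / (feedlot_animal_years + wild_years)


# Assumed stand-ins for the vague figures in the comment above.
WILD_YEARS = 300_000_000
FEEDLOT_YEARS = 100

print(required_population_ratio(WILD_YEARS, FEEDLOT_YEARS))
# Hypothetical case: feedlot population 1,000 times the average wild one.
print(feedlot_fraction(1_000, WILD_YEARS, FEEDLOT_YEARS))
```

Under these assumptions the feedlot population would need to be millions of times the historical average just to reach half of the total, which is the "more than a million times" threshold in the comment.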

This is a very good point. I was generalizing from humans (seems to intuitively make sense that the number of wild cows 10,000 years ago was give or take the order of magnitude equal to the number of humans then, and the current number of farmed cows is similarly close to the number of humans) but forgot that the wild animals existed for a much longer time than humans.

“I was generalizing from humans”

Do you think the number of humans now alive is a large fraction of the number that have ever lived? It isn’t–not even close. That’s a popular myth.

The Atlas of World Population History has some estimates of world population c. 1000 A.D.: roughly 200 to 300 million. Figure a generation of 20 years (high infant mortality). That’s a billion people a century. So even if there had been no increase since then, it would give you more people dying just in the past millennium than are currently alive.
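Spelled out as a quick Python sketch, assuming a steady population that turns over completely once per generation. The function name is made up for illustration, and the roughly 7 billion figure for people alive today is my own assumption rather than a number from the Atlas.

```python
def deaths_over_period(population, generation_years, period_years):
    """Approximate deaths in a steady-state population that turns over
    completely once per generation."""
    return population * period_years / generation_years


GENERATION_YEARS = 20            # figure used in the comment above
CURRENTLY_ALIVE = 7_000_000_000  # assumed rough present-day world population

for population in (200_000_000, 300_000_000):
    per_century = deaths_over_period(population, GENERATION_YEARS, 100)
    per_millennium = deaths_over_period(population, GENERATION_YEARS, 1_000)
    print(f"{population:,} people: {per_century:,.0f} deaths per century, "
          f"{per_millennium:,.0f} per millennium, "
          f"exceeds currently alive: {per_millennium > CURRENTLY_ALIVE}")
```

Even at the low end of the estimate, a flat population gives about a billion deaths per century, so a millennium of that already outnumbers the living.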

A figure I’ve seen as an estimate for total number who have ever lived is around a hundred billion, but that’s very uncertain. The early period has low population but an enormous number of generations.

Even healthy animals experience distress. A predator experiences distress every time it fails in a hunt. Prey experiences distress every time it’s hunted, even if it gets away. Social animals experience distress whenever they fail to reach the top of the status pyramid (and even then, they experience distress every time that status is threatened) and every social group can only have one top individual. Males experience distress when they fail to mate.

@ RCF

Even healthy animals experience distress. A predator experiences distress every time it fails in a hunt. Prey experiences distress every time it’s hunted, even if it gets away.

The prey, yes, during the hunt. But if prey lives in a place so dangerous that distress would be constant — then pretty soon it would be caught and eaten. If a predator lives in a place where food is so scarce that failing a hunt often causes actual distress, it is unlikely to live long and reproduce successfully.

Social animals experience distress whenever they fail to reach the top of the status pyramid (and even then, they experience distress every time that status is threatened) and every social group can only have one top individual. Males experience distress when they fail to mate.

I think this sort of thing is more likely to cause long-term distress in humans than in non-human animals.

I’d go further and say that pain and pleasure units are not directly comparable. Kind of like asking if a car is faster or bluer.

I can’t help rounding it off to emotion. Lewis (quoted from memory) says “Coming out of the snow and warming one’s hands at a fire, no one minds the sequence, ‘Um, that’s warm, warmer, nice, very warm, that stings’. ” Different people will move their hands away at different degrees of objective heat — but usually with the happy emotion, ‘Ah, that’s better, that’s enough!’ Sometimes physical pleasure can cause emotional pain (the smile that brings the tear). Either one, below some threshold, can cause ‘irritation’ enough to blink, without being strong or long enough to interrupt whatever happy or unhappy thought was already going on.

I think that in general animals take small physical pains less seriously than most humans do, but appreciate small pleasures more.

concur on this point. pain is something we usually want to avoid, pleasure is something we try to maximize, but that doesn’t mean that the two are poles on a single axis. Especially for the more abstract forms of pain and pleasure.

I don’t have an answer for that, but I do have a question:

When I suggested that a factory farm chicken life could be net negative, most people disagreed that a life could ever be net negative. It went mostly along the lines of “everything is better than being dead”.

By that reasoning, is it not necessary that there’s always less pain than pleasure, since being alive is always at least one iota more pleasure than any pain you experience?

The trouble with this view is that it doesn’t disentangle pleasure and pain from preferences. A preference for living is the thing that leads to you choosing to live. But we usually use the words pleasure and pain to refer to subjective experiences that aren’t directly preferences. People routinely make the choice to not take drugs that would increase their amount of pleasure, because they prefer not to.

Yeah, shortly after I wrote that comment, I realized this will all be about words and how we define them in the end.

I think I might define them in other ways than others do, which not only makes this discussion hard, but could also mean, I got the last discussion wrong 🙁

I sort of agree with Alex above – I don’t think pain and pleasure are actually comparable like two opposite sides of a single hedonic factor (see for example how reward and punishment influence behaviour in strongly asymmetrical ways, according to this pretty interesting paper). But I guess you can investigate preferences to try to measure how you might value these two factors compared to each other in behavioural situations. Disregarding curiosity, and if it could all be translated to equivalent qualia comprehensible to humans, would you choose to experience everything that has been experienced in the world so far? Or replay the history of life with you as a randomly picked sentient being in it?

To me, it seems that biological life here on Earth mostly works with suffering-based motivational systems where states of satisfaction and happiness are rare, pain and milder discomfort very frequent in comparison (and a stronger motivating factor, so also more intensely experienced) – the latter type of signal just is more useful in most situations.

Since you asked for probabilities, I’m almost certain (>97%) that suffering has been “greater” in the sense described above. But I’m also very curious about the roots of your uncertainty on the matter, because it seems so suspiciously obvious to me and I’m probably missing something (and I would certainly like to be wrong about this.)

Thanks for that pretty interesting paper: it was. Also, your reformulations of the question are brilliant. Technically incoherent (because personal identity), but I think the spirit of the questions still works.

@ Kaura

From the study:

Surprisingly, the effects of the reward magnitude and the penalty magnitude revealed a pronounced asymmetry. The choice repetition effect of a reward scaled with the magnitude of the reward. In a marked contrast, the avoidance effect of a penalty was flat, not influenced by the magnitude of the penalty.

This kind of fits with my notion that animals attend more closely to pleasure than to pain, and better remember the information learned. Food is necessary for survival, but absence of pain is not.

I’m not sure how you could quantify this for comparison, even in theory. I guess the only way would be to reduce pain/pleasure to some specific neural firing and then estimate based on that. Still, I can think of certain times where I’ve been physically uncomfortable but also happy, or vice versa, so I don’t know if that sort of thing would be an appropriate measure even for a non-selfish hedonist or eudaemonist (of which I am neither so I could be wrong).

I tend to think pain and pleasure are valid considerations but maybe an imperfect proxy for something more significant and real, like survival / thriving / something else.

I usually read you via RSS feed – if WordPress even counts that, it counts it once. I come to the www page only to comment, and if I’m proud of that comment I will return to the article several times to check for answers because this comments system doesn’t notify me of answers automatically. So from me, you’re getting way more traffic for a post where I comment something substantial than for one where I don’t.

Your recent posts are focused on topics where I’m glad to learn but don’t have interesting information or reasoning to contribute, so I wouldn’t be surprised if I heard the number of pageviews here that I generate had declined a bit. I would expect it to go back up if you make far-ranging analyses or meditations again, or if you ask interesting questions that I feel qualified and invited to try and answer, like the discussion about who lacks which specific experiences.

I’m a variation on this – I read through RSS and used to click through to read comments, but started to find it too time consuming to try to keep up. So I just gave up on reading comments, and so stopped clicking through to the website. Also the really annoying girl in my dept went on maternity leave on Feb 20, so I contribute to office chat a bit more, and read internet a bit less.

Where’s my question? Are we only allowed to ask PC questions?

no race or gender in the open threads is the global rule, I believe. I think I saw your post, and I’ve likewise had comments touching on the subject in question deleted on the one occasion I made them. It was somewhat disconcerting, but I’d hardly call the general environment here “PC”.

Are we only allowed to ask PC questions?

I’m sure if you’re using a Mac, no-one here will judge you (though I do have An Opinion, based on work experience, of the kinds of people who choose Macs over PCs).

Ooooh, do tell!

Does this opinion include those who find it the most user-friendly flavor of UNIX?

EDIT: response to question that was deleted, I guess.

I think you’re missing the point. She’s not a “pre-pubescent girl” she’s a thousand year old cyborg construct.

Wat?

Boy oh boy am I curious about the deleted post now.

Likewise.

I posted a thread that argued that some paedophilic attraction would have been adaptive for men in ancestral times.

It’s in /r/evopsych

(1) That’s a tangled mess

(2) Refine your definitions: paedophilia, hebephilia, ephebophilia, what? Also, if you’re talking about notional “people back in X period only lived to be Y years of age”, then yes, something like e.g. “Romeo and Juliet” where Juliet is somewhere around 14 and her mother was married off at 12, and women tended to be married when they started their menses which can be anywhere from 11-14, and the age of marriage for women in Rome was 14, and men tend to be older when marrying and – given rates of death in childbed – could have multiple successive spouses, then all right, men being sexually attracted to much younger women could have been evolutionarily adaptive.

But I don’t think it’s much of a useful argument to make, since women are not heifers, even if Teagasc is arguing you should bull 15 month old heifers to increase lifetime output and for better calving practice.

That objection sounds like an isolated demand for rigor, since it would apply to any evopsych explanation of anything involving women.

It’s not a mess, you just don’t understand the elegant mathematical argument.

I think the deletion means our host would rather we didn’t discuss the topic here?

Y’know, now I’m curious. What do we really know of relative life span for humans in the Neolithic (say?)

Okay, any complete skeletal remains can be broadly classified for age, but can we really say that men tended to be older than women? Maybe all the brash young hunters getting killed when going out to hunt large fierce animals, or getting killed in wars, meant that there were lots more older women around than older men to get their pick of the newly sexually mature – the cougar effect, if you will. A thirty year old woman might have been (the equivalent of) a toothless crone, but she could still bear children.

So can we really start speculating about evolutionary adaptiveness for men preferring younger sexual partners, when it might well have gone the other way (older women, younger men)?

Nah. Life expectancies in the Neolithic through the Renaissance were a lot lower than modern ones, but most of that comes out of (extremely) high mortality rates in infancy and early childhood; if you made it to five in most places, you stood a good chance of making it to fifty. Thirty would not have been seen as old in most places; it may have been seen as pushing middle age, but no more.

And life expectancies were actually lower in the Neolithic than they were in the Paleo-, if skeletal proxies are anything to go by — probably thanks to cramped living conditions and close proximity to livestock making it easier for disease to spread.
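
To make the arithmetic behind that concrete, here is a minimal sketch with made-up numbers. The cohort below is purely hypothetical (it is not taken from any real life table or skeletal dataset), and the function name is just for illustration. It only shows how heavy early-childhood mortality can pull life expectancy at birth far below the age most adults actually reach.

```python
# Toy illustration: how early-childhood mortality drags down life
# expectancy at birth. All numbers are hypothetical, not real data.

def life_expectancy(deaths_by_age, from_age=0):
    """Mean age at death among people who survive to `from_age`.

    deaths_by_age maps age at death -> fraction of a birth cohort
    dying at that age (the fractions sum to 1).
    """
    survivors = {a: f for a, f in deaths_by_age.items() if a >= from_age}
    total = sum(survivors.values())
    return sum(a * f for a, f in survivors.items()) / total

# Hypothetical cohort: 40% die around age 1, the rest die at 50, 60 or 70.
toy_cohort = {1: 0.40, 50: 0.20, 60: 0.20, 70: 0.20}

at_birth = life_expectancy(toy_cohort)              # averaged over everyone
at_five = life_expectancy(toy_cohort, from_age=5)   # only those who reached 5

print(f"expected age at death, from birth: {at_birth:.1f}")
print(f"expected age at death, given survival to 5: {at_five:.1f}")
```

With these invented figures the at-birth number comes out in the mid-thirties while the figure for anyone who reaches five is about sixty, which is the shape of the claim above: a low life expectancy at birth does not by itself mean that thirty counted as old.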

On a slightly related note: An Afghani refugee I know tells me that in Afghanistan, 45 is considered old.

That’s not an impression that would be caused by high child mortality if everybody who reached 5 could be expected to reach 45. I don’t know (and, frankly, have not ever asked) whether this is due to the war or due to other causes.

@Shieldfoss

I think he might have been exaggerating. According to the site below the life expectancy at birth in Afghanistan is about 60 and at 5 it’s about 66.

http://www.worldlifeexpectancy.com/country-health-profile/afghanistan

UK for comparison:

http://www.worldlifeexpectancy.com/country-health-profile/united-kingdom

Even in Sierra Leone the L.E. at birth is the mid forties and at 5 it’s the mid fifties.

http://www.worldlifeexpectancy.com/country-health-profile/sierra-leone

Cavemen didn’t have short lives.

I’m not saying that cavemen had adult life expectancies close to ours. They didn’t. I’m saying that the difference wasn’t dramatic enough for 30 to have been counted as old, despite life expectancies at birth in the mid-to-high thirties during the Upper Paleolithic.

Equally importantly, we can’t simply assume that life expectancy increased monotonically as civilization grew more complex. Paleolithic adult skeletons are taller (a proxy for nutrition and general health) than those of sedentary populations until the Renaissance at the earliest; some populations don’t make up the gap until industrialization. Data is deficient for very early civilizations, but Roman life expectancy at birth was about twenty, again driven mostly by infant mortality.

(There are some caveats. Infectious disease is the main killer among modern forager populations, but it’s not clear how well that generalizes to the Paleolithic.)

@Nornagest

What do you mean? The evidence is that they did.

Define “close”? The sources I’ve seen vary, but I’ve seen estimates as low as 40 to 45ish (e.g. here or here). The error bars are quite wide, and considering some of the sampling issues involved (and in view of modern forager populations, e.g.), I’m inclined to shoot a bit higher; but first-world adult life expectancy is higher still, and by more than a little.

Interestingly, once you start digging into the actuarial tables, you find that forager life expectancy as late as age 45 is quite high: as much as 20 more years (compare ~30 expected years at age 20). We’re not looking at a population where everyone would get to 45 or 50 and then keel over; we’re looking at a population where large portions of every age cohort would die, probably thanks mostly to infectious disease, violence, or predation.

@Nornagest

In Sierra Leone, which has the worst living conditions and lowest LE in the world, LE at birth is still about 45 and at 5 it’s about 55.

The fossil evidence shows that prehistoric people generally lived in productive habitats and were well fed, so their LE must surely have been (much?) higher than that.

Extrapolating from modern sedentary populations, even marginal ones, is an extremely bad idea in this context. Even the most marginal wouldn’t be dealing with many of the issues that foragers would have — and, to be fair, would be dealing with a lot of new ones. The lower infant mortality rates should make that clear.

Modern foragers rarely die of malnutrition, either — nor, famously, any of the so-called diseases of affluence that tend to kill us. That doesn’t mean they’re long-lived.

This is interesting:

https://www.youtube.com/watch?v=XNeEVv_aDC8

Yeah, I understand. But my point was that prehistoric people seem to have been healthy and robust.

With regard to the Afghani refugee comment, I wonder if it came from the perspective not that 45 is old because the average life expectancy is less than that, but rather that 45-year-old Afghanis look old.

That they are much more worn down than a Westerner of the same age.

What’s the easiest way to get a naltrexone prescription if you want to try the Sinclair method? Would most GPs just give you a prescription if you ask? Should you try a psychiatrist?

Just a month or so ago, an alcoholic in my family ordered some online from a pharmacy in India, which apparently had a reliable method of shipping it into the US without getting caught. He received it fairly promptly and it seems to be working as intended with no unexpected side effects.

Your GP may or may not be willing to prescribe it for you – there apparently are increasing numbers of doctors becoming aware of the Sinclair method, and others may be open to considering it if you explain the theory to them. There is apparently also at least one online support community with a section to post about doctors who will prescribe it, so you may be able to find help there.

Thanks!

If there was a Patreon link, I’d certainly chip in a couple of bucks a month. I’ll grant that it’s not great from an effective altruism point of view, but it’s certainly something that I’d like to support, and that I’d be proud to be seen signalling support.

Think of it this way; if donating 100 dollars to SSC’s continued operation means that at some point down the line, SA writes a cracking post that persuades someone to donate 1000 dollars to charity, you will have donated your money very effectively indeed.

Yes, if money were a bottleneck to its continued existence, that money would be well-spent. But blogs are cheap, so it isn’t. Moreover, Scott thinks that money would make him more stressed and less productive.

This is a compelling statement of which I was not aware.

I 100% agree with this statement. Several of my friends and I joined the EA movement after reading SA’s posts, and I presume we are not the only ones.

Some of SA’s posts here and on LW are among the most effective rationalist evangelism I’ve ever encountered, and even from a purely consequentialist perspective it would be perfectly rational to support Scott in his work.

http://www.wired.com/2015/04/geeks-guide-kazuo-ishiguro/

Kazuo Ishiguro is the author of Never Let Me Go, and more recently, The Buried Giant, an Arthurian fantasy. He loves and respects sf, but his background is mostly literary, so I found the interview interesting (I recommend the whole thing if you have an hour– the transcript is very incomplete) because it’s quite an alien take on my home subculture.

the drop in readership could just be noise. gonna need more data

I’m sure this has been asked in the past, but I can’t find an answer – what’s the favourite rss reader around here these days? I’ve been using newsblur since the shuttering of Google Reader, but there has to be something better.

Feedly. Works fine, though I don’t quite love it.

I used to be a Google Reader devotee, after the shutdown I switched to feedly. It has essentially the features I used Google Reader for and a very similar web-interface, and it can sync across devices.

Problems: I use my RSS reader by going through everything that’s popped up and opening it in a new tab. Feedly (and to an extent, approximately every RSS reader I’ve ever tried) makes that a pain – I have to hold CTRL, left click on the title of each entry in the RSS thing (and not the bit that says where it’s from, that just goes to the main page for the thing), and then mark-all-as-read. Mostly works out, unless it’s been a few days and I’ve got enough unread RSS things in a category that I have to scroll, because then I have to spend mental effort remembering my position.

I also use feedly and I also find it annoying to open many links in new tabs. So I wrote a user script that opens all the links for me when I hit the “Q” key on my keyboard. Maybe others will find it useful.

http://pastebin.com/XhgXh8Hq

I wrote it for Firefox’s Greasemonkey, but I suppose it would work for other browsers and user script addons.

Feedly may be the favorite but digg is my favorite

The Old Reader seems to work for me, though the ads have gotten a bit more annoying recently.

I don’t remember what, but something about Feedly annoyed me so much that I switched to The Old Reader. That works great for me (I use Adblock)

I use Firefox Live Bookmarks.

I’ve been quite happy with https://www.inoreader.com/

New reader to the blog. Very much enjoying it, Scott. Thanks.

As for #3, this does not explain recent traffic decline, but it’s something to keep in mind moving forward.

On Tuesday, April 21, 2015 Google updated its algorithms to favor mobile friendly sites (aka ‘mobilegeddon’). The change boils down to this: from now on, if a website is mobile friendly it will appear higher in searches performed on mobile devices. I’m just guessing but I bet a significant slice of your traffic (maybe 10-15%) is coming from mobile organic search.

Slate Star Codex is not mobile friendly. You can run a test with Google’s Mobile Friendly Test here: http://goo.gl/lTm3de

It’s always a pain to make changes, but you should be able to switch to another WP theme without too much trouble.

Google will roll out their update over the course of a few weeks, so the changes might not be noticeable immediately. If you’re not mobile friendly today, you can take action and Google will notice.

Happy to lend a hand or answer any questions if you have any.

Keep up the good work.

It’s funny that it might not be “mobile friendly” but being almost entirely text based, it is quite easy to browse on my (not exactly top of the line) phone.

Yeah, I’m not sure I’ve ever actually come here on my pc – maybe once or twice when I first started commenting.

Mobilegeddon was my first guess as well. Even though your drop off occurred prior to this, it is possible that Google was testing the algo prior to the official release date. There are monthly changes in any case and there was an earlier February update that caused some fluctuations: https://www.seroundtable.com/google-algorithm-update-19820.html

You should check your Google Analytics reports and see where the traffic drop off is occurring. If you see a large decline in Acquisition : Organic Search then Mobilegeddon, or other update, is a reasonable bet — you should try and pass the Mobile Friendly Test that @William links to regardless.

If you see a big drop in Direct Traffic I would look at RSS / syndication issues. Check your own WordPress admin settings, and if you get a lot of traffic from other services (Feedly, Twitter), check those sites and see if something has changed about how your content is getting displayed.

For the record, I’ve found it awkward to read SSC posts on my phone.

Of course, that just leads to me going back later on my PC, so it’s actually increasing your traffic…

Cool tabletop game based around inductive reasoning: Zendo.