[EDIT: The author of this paper has responded; I list his response here.]

Jacques Derrida proposed a form of philosophical literary criticism called deconstruction. I’ll be the first to admit I don’t really understand it, but it seems to have something to do with assuming all texts secretly contradict their stated premise and apparent narrative, then hunting down and exposing the plastered-over areas where the author tries to hide this.

I have no idea whether this works for literature or not, but it’s a useful way to read scientific papers.

Consider a popular field – or, at least, a field where a certain position is popular. For example, we’ve been talking a lot about growth mindset recently. There seem to be a lot of researchers working to prove growth mindset and not a lot working to disprove it. Journals are pretty interested in studies showing growth mindset interventions work, and maybe not so interested in studies showing they don’t. I’ll admit that my strong suspicions of publication bias don’t seem to be borne out by the facts here – see this meta-analysis – but I bet its more sinister cousin “all experimenters believe the same thing and have the same experimenter effects” bias is alive and well.

In a field like that, you’re not going to get the contrarian studies you want, but one way to find the other side of the issue is to look a little more closely at the studies that do get published, the ones that say they’re in support of the thesis, and see if you can find anything incriminating.

Here’s a perfect example: Mindset Interventions Are A Scalable Treatment For Academic Underachievement, by a team of six researchers including Carol Dweck.

The abstract reads:

The efficacy of academic-mind-set interventions has been demonstrated by small-scale, proof-of-concept interventions, generally delivered in person in one school at a time. Whether this approach could be a practical way to raise school achievement on a large scale remains unknown. We therefore delivered brief growth-mind-set and sense-of-purpose interventions through online modules to 1,594 students in 13 geographically diverse high schools. Both interventions were intended to help students persist when they experienced academic difficulty; thus, both were predicted to be most beneficial for poorly performing students. This was the case. Among students at risk of dropping out of high school (one third of the sample), each intervention raised students’ semester grade point averages in core academic courses and increased the rate at which students performed satisfactorily in core courses by 6.4 percentage points. We discuss implications for the pipeline from theory to practice and for education reform.

This sounds really, really impressive! It’s hard to imagine any stronger evidence in growth mindset’s favor.

And then you make the mistake of reading the actual paper.

The paper asked 1,594 students from a bunch of different high schools to take a 45-minute online course.

A quarter of the students took a placebo course that just presented some science about how different parts of the brain do different stuff.

Another quarter took a course that was supposed to teach growth mindset.

Still another quarter took a course about “sense of purpose” which talked about how schoolwork was meaningful and would help them accomplish lots of goals and they should be happy to do it. This was also classified as a “mindset intervention”, though it seems pretty different.

And the final quarter took both the growth mindset course and the “sense of purpose” course.

Then they let all students continue taking their classes for the rest of the semester and saw what happened, which was this:

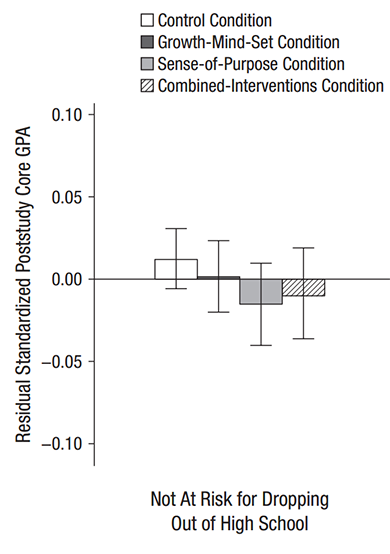

Among ordinary students, the effect on the growth mindset group was completely indistinguishable from zero, and in fact they did nonsignificantly worse than the control group. This was the most basic test they performed, and it should have been the headline of the study. The study should have been titled “Growth Mindset Intervention Totally Fails To Affect GPA In Any Way”.

Instead they went to subgroup analysis. Subgroup analysis can be useful to find more specific patterns in the data, but if it’s done post hoc it can lead to what I previously called the Elderly Hispanic Woman Effect, after medical papers that can’t find their drug has any effect on people at large, so they keep checking different subgroups – young white men…nothing. Old black men…nothing. Middle-aged Asian transgender people…nothing. Newborn Australian aboriginal butch lesbians…nothing. Elderly Hispanic women…p = 0.049…aha! And the study gets billed as “Scientists Find Exciting New Drug That Treats Diabetes In Elderly Hispanic Women.”
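To put rough numbers on the Elderly Hispanic Woman Effect, here is a minimal sketch. It assumes the treatment truly does nothing, that each subgroup test is independent, and that each uses the usual 0.05 significance threshold. The function name and the subgroup counts are illustrative choices of mine, not anything taken from the paper.

```python
def familywise_false_positive_rate(num_subgroups: int, alpha: float = 0.05) -> float:
    """Chance that at least one of `num_subgroups` independent tests of a
    true null hypothesis comes out "significant" at level `alpha`."""
    # Each test avoids a false positive with probability (1 - alpha);
    # all of them avoid it with probability (1 - alpha) ** num_subgroups.
    return 1.0 - (1.0 - alpha) ** num_subgroups


if __name__ == "__main__":
    # Hypothetical numbers of subgroups a researcher might check post hoc.
    for k in (1, 5, 10, 20):
        rate = familywise_false_positive_rate(k)
        print(f"{k:>2} subgroups checked: {rate:.3f} chance of at least one p < 0.05")
```

Under those assumptions, checking twenty subgroups gives roughly a 64% chance of finding at least one "significant" result in a drug that does nothing. Real subgroups overlap and are correlated, so the exact figure will differ, but the direction of the problem is the same.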

As per the abstract, the researchers decided to focus on an “at risk” subgroup because they had principled reasons to believe mindset interventions would work better on them. In their subgroup of 519 students who had a GPA of 2.0 or less last semester, or who failed one or more academic courses last semester:

But the control group mysteriously started doing much worse in all their classes right after the study started, so growth mindset is significantly better than the control group. Hooray!

Why would the control group’s GPA suddenly decline? The simplest answer would be that by coincidence the class got harder right after the study started, and only the intervention kids were resilient enough to deal with it – but that can’t be right, because this was done at thirteen different schools, and they wouldn’t have all had their coursework get harder at the same time.

Another possibility is that sufficiently low-functioning kids are always declining – that is, as time goes on they get more and more behind in their coursework, so their grades at time t+1 are always less than at time t, and maybe growth mindset has arrested this decline. This is plausible and I’d be interested in seeing if other studies have found this.

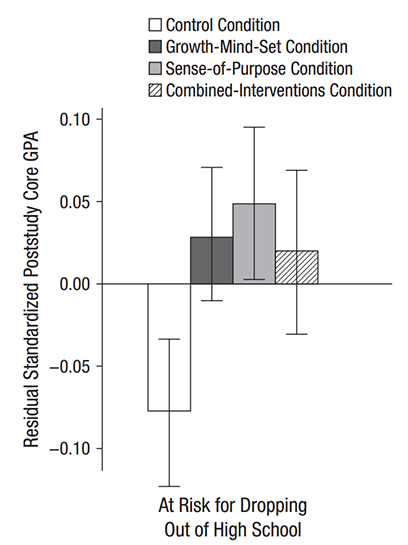

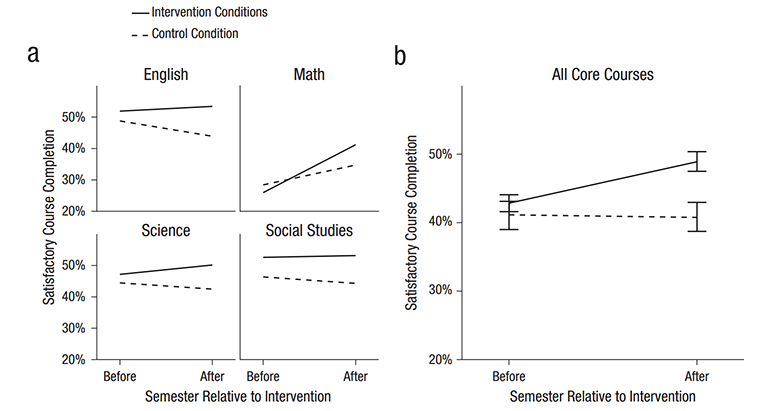

Perhaps aware that this is not very convincing, the authors go on to do another analysis, this one of percent of students passing their classes.

This is better, but one part still concerns me.

Did you catch that phrase “intervention conditions”? The authors of the study write: “Because our primary research question concerned the efficacy of academic mindset interventions in general when delivered via online modules, we then collapsed the intervention conditions into a single intervention dummy code (0 = control, 1 = intervention).”

We don’t know whether growth mindset did anything for even these students in this little subgroup, because it was collapsed together with the (more effective) “sense of purpose” intervention before any of these tests were done. I don’t know if this is just for convenience, or if it is to obfuscate that it didn’t work on its own.
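Here is a toy illustration of why collapsing the conditions matters. The pass rates below are made up for the example and are NOT the paper's numbers; the arm names, the equal arm sizes, and both helper functions are my own assumptions. The point is only that a pooled "intervention" dummy code reports an average that can look respectable even if one arm contributes almost nothing.

```python
# Hypothetical pass rates per arm (invented for illustration, not from the paper).
# Arms are assumed to be equal in size, so a simple mean is the pooled rate.
hypothetical_pass_rate = {
    "control": 0.40,
    "growth_mindset": 0.41,
    "sense_of_purpose": 0.49,
    "both": 0.47,
}


def pooled_effect(rates: dict, control: str = "control") -> float:
    """Effect seen after collapsing every non-control arm into one dummy code."""
    arms = [name for name in rates if name != control]
    pooled_rate = sum(rates[name] for name in arms) / len(arms)
    return pooled_rate - rates[control]


def per_arm_effects(rates: dict, control: str = "control") -> dict:
    """Effect of each arm taken separately against the control group."""
    return {name: rates[name] - rates[control] for name in rates if name != control}


if __name__ == "__main__":
    print(f"pooled effect: {pooled_effect(hypothetical_pass_rate):+.3f}")
    for arm, effect in per_arm_effects(hypothetical_pass_rate).items():
        print(f"{arm:>16}: {effect:+.3f}")
```

With these invented numbers the pooled effect is a bit under six percentage points, while the growth mindset arm on its own moves the pass rate by about one. Only the per-arm breakdown would tell you that.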

[EDIT: Scott McGreal looks further and finds in the supplementary material that growth mindset alone did NOT significantly improve pass rates!]

The abstract of this study tells you none of this. It just says: “Mindset Interventions Are A Scalable Treatment For Academic Underachievement…Among students at risk of dropping out of high school (one third of the sample), each intervention raised students’ semester grade point averages in core academic courses and increased the rate at which students performed satisfactorily in core courses by 6.4 percentage points.” From the abstract, this study is a triumph.

But my own summary of these results, as relevant to growth mindset, is as follows:

For students with above a 2.0 GPA, a growth mindset intervention did nothing.

For students with below a 2.0 GPA, the growth mindset interventions may not have improved GPA, but may have prevented GPA from falling, which for some reason it was otherwise going to do.

Even in those students, it didn’t do any better than a “sense-of-purpose” intervention where children were told platitudes about how doing well in school will “make their families proud” and “make a positive impact”.

In no group of students did it significantly increase chance of passing any classes.

Haishan writes:

If ye read only the headlines, what reward have ye? Do not even the policymakers the same? And if ye take the abstract at its face, what do ye more than others? Do not even the science journalists so?

Titles, abstracts, and media presentations are where authors can decide how to report a bunch of different, often contradictory results in a way that makes it look like they have completely proven their point. A careful look at the study may find that their emphasis is misplaced, and give you more than enough ammunition against a theory even where the stated results are glowingly positive.

The only reason we were told these results is that they were in the same place as a “sense of purpose mindset” intervention that looked a little better, so it was possible to publish the study and claim it as a victory for mindsets in general. How many studies that show similar results for growth mindset lack a similar way of spinning the data, and so never get seen at all?

This study is shockingly bad. How did this even get published?

Do you think getting abstracts to be written independently of the paper itself as part of the peer review process would improve science communication?

Btw first time commenting, and I’ve gotta say I love your blog. If I ever get to meet you personally I’m gonna give you a big hug lol.

You know, in my field (computational linguistics) as a reviewer, you typically *do* write an abstract of the paper!

The first line or two of a good review summarises your understanding of it, and instructs the area chair/editor whether you buy the claims etc. So it probably wouldn’t be so much work to make this official.

Same.

Same in non-computational linguistics also.

I imagine it would improve the quality of reviews, by forcing the reviewers to actually read the paper. But maybe I’m just bitter. (And maybe actually reading the paper would prove too burdensome and this would bring the peer-review edifice crashing down on our heads.)

You can’t blame reviewers, though, you have to improve the incentive structure for peer review. People always follow incentives, you can’t yell at them for that. People doing that is the problem statement.

The disposition of bystanders to yell is one source of incentives, sometimes important and sometimes not.

But in this case it is a “tragedy of the commons” scenario:

Continuous public bitching about the shortcomings of peer reviews erodes the status of academic scientists as a group. Each academic scientist probably doesn’t like that, but individually they can do nothing about it; after all, reviewers remain anonymous and can’t gain personal status by doing a good job. Therefore, the individual incentive to slack remains unbalanced.

Baseball statistics analysis has improved radically over the last 30 years, while most social science has not. Why?

Baseball statistics was pretty much of a free for all among ambitious amateurs criticizing the old guard and each other.

Plus, baseball was an ideologically Safe Space where bright white males would have a hard time getting in trouble over gender, sexual orientation, or even race if they practiced a little crimestop. In contrast, social sciences are sedated by the need for “protective stupidity” to avoid mentioning, except in approved fashions, the big, recurrent factors that drive so many results: race, sex, etc.

Of course, the sabermetricians still did a terrible job on steroids, sticking their heads deep in the sand.

“You can’t blame reviewers, though, you have to improve the incentive structure for peer review. People always follow incentives, you can’t yell at them for that. People doing that is the problem statement.”

I’m all for changing incentives, but I sure can yell at people for following incentives, when doing so is morally wrong. It is inexcusable to recommend a paper for publication that you have not read. (It is much more excusable to recommend a paper for rejection that you have only read part of and have found to be incomprehensible.)

Ok, but that’s not productive. A sizable portion of humanity follows incentives, morality be damned. You may not like it, you may yell at them for it, but that does not seem like a productive response.

Do you think yelling will enact change? There is no Bolshevik “new man.” There is just “man.”

I can’t change everyone, but I may be able to change some of those I am in direct contact with in academia, e.g., my students.

You say this like it’s a bad thing.

You know, it seems like many things in the world are some sort of priesthood or another.

What I mean is, we attempt to give added credibility to a field by grouping together people who are *supposed to*, through moral force or something, review each other and police each other’s actions, but this typically NEVER happens.

So the people in these fields get a ‘status rent’ through their inclusion in the priesthood, but this is typically undeserved. Let’s call this a ‘status priesthood’. I think humanities academia, Congress, peer reviewers, medicine, law etc. are all places where this occurs. ‘Religious thinking’, in both the reactionary sense and in the Sailerian ‘megaphone’ sense, probably runs amok in these places due to such social mechanisms.

But this immediately raises the question: what happens in a society with no ‘status priests’ at all? Something like Twitter or tumblr lynchings, I suspect. The worst aspects of egalitarianism or devolving to a common denominator will occur, and everything will degenerate into popularity contests. Which then raises the question of how a true meritocracy can ever transpire in real life. A ‘Raven’s matrices meritocracy’ probably lol.

In general I’m all in favor of having abstracts written by reviewers instead of authors. But if the situation is like Scott proposed, that all researchers in this field believe growth mindset interventions work and are excited about new research proving how well it works, then probably it would not help that much?

Like, the paper presumably has an intro section putting the same spin on the result as the abstract does, and presumably the reason the reviewers accepted it is that they bought that spin. Then I would expect that they would just repeat that point of view in their own reviews. (A typical accepting review starts something like “I recommend this paper for acceptance because it makes the following important contributions: …”.) If they were as jaded as Scott, they’d just reject it outright…

Wow. This is the first time I’ve ever seen anyone make something useful out of Deconstruction.

Heh heh.

I hate that I have this sense. The quote was perfectly innocent.

It would have been better to say “groups that become decreasingly numerous” — but worded better. It’s actually hard to find a good way to word that. But yeah, “weird” is a less-than-perfect word for this.

especially because in this sense, “weird” is actually the opposite of WEIRD.

Smaller subgroups, or more arbitrary subgroups, etc. would have been better phrasing, I suppose.

I think your “arbitrary subgroups” is the best term for that.

I skimmed this post.

Based off the first paragraph, I believe he’s saying that deconstructing growth mindset causes chicken pox or something.

On the topic of deconstruction, I recommend How to Deconstruct Almost Anything: My Postmodern Adventure.

The thing about Deconstruction is that it works, I use it often and it gives me accurate results that allow me to make successful future predictions. I hesitate to claim I know the true and final reason why people disparage it, but my guesses are:

1) It is commonly used by the Greens, forcing the Blues to deny its efficacy (See: All other subjects)

2) For the untrained, it is hard to distinguish good and bad deconstruction, enhanced by the fact that there is so much bad deconstruction.

1+2=3) A common attack against good deconstruction is that it is nonsense. A common counter is that the speaker must be unsophisticated if he thinks it is nonsense. Since deconstruction is a skill that must be learned, this makes it hard for outsiders to see whether any given exchange of nonsense/unsophistication is based in fact or partisan bias. Since arguments are soldiers, even people with the skill will tend to judge along partisan lines.

I’m sure a lot of people around here would be interested in an elaboration of that. The community kind of dismisses that stuff, for lack of the time to properly investigate it. What does it allow you to predict? Can you demonstrate?

I am under NDAs on basically every project I work on so not with a real-world example, but much like Scott, I can get away with a composite story made up from individual pieces.

So:

Say you’re on a project for a company. The company creates a position for, and hires, a Chief Diversity and Sustainability Officer. Shortly after, it is announced that there will be a course on Business Ethics and a subsequent test. The test is taken online. The course is not mandatory, but the test is.

So here’s a real question: Do you need to take the course (a significant investment of time during crunch on a big project) to avoid failing the test? If you are skilled at deconstruction, you take the Text (Here: the announcements of the new CDSO, course and test) and find out the real intentions behind the authors – are we going to commit real resources to this or is it just a low-effort signalling game? Will the course have actual new practices we put into effect or just a lot of nice-sounding yes words?

The cynical answer is “It is always a signalling game.” The idealist answer is that of course they care about X.

I have worked in places where the cynical assumption would get you (a) dead wrong and (b) no re-hire for future projects and I have also worked places where the idealist answer would be a waste of everybody’s time.

Really, deconstruction is just an academically formalized version of HPMOR’s Ch. 20: “The import of an act lies not in what that act resembles on the surface, Mr. Potter, but in the states of mind which make that act more or less probable” – deconstruction is the rationalist skill of figuring out the possible mind-states of the Author of the Text instead of just taking the Text at face value, which will often be wrong, or just assuming the Text is a lie, which will also often be wrong.

Incidentally, the deconstruction of “How to Deconstruct Almost Anything: My Postmodern Adventure” is fairly simple: From the fact that the author spends so much time talking about how silly Deconstructionism is, you can easily infer that the author cares deeply about it – possibly because it has been annoying him for quite some time.

Expanding on this: A signalling Text is different from an idealist text written in a signalling company is different from an idealist text written in an idealist company. With experience, you learn to tell the difference.

Not to say Heidegger and Derrida were not, in other ways, absolutely full of it – I do not recommend wasting any time reading them, you can get their actual insights much better in other ways.

(Bonus homework for the class: Deconstruct this post. There are three paragraphs here. There is at least one and at most two paragraphs for purposes that are half signalling and half an attempt to convey information without stating it outright. What information might that be?)

Sounds like if it’s improperly used it can quickly become Bulverism.

You’re being a little obtuse here. Deconstruction methods (like game theory methods) are just tools that can improve decision-making if implemented properly. The formalisms of procedure, structure, organization, and mathematics are simply techniques that can be learned and applied. And there is no guarantee of success, just an improved probability.

This is not even wrong. Deconstruction is not the revealing of some hidden message in the text whether construed as the intention of the author, the social subtext, the historical context etc. In fact this is one of the forms of textual interpretation that Derrida explicitly argued against.

Perhaps you were being ironic-sarcastic?

How does “deconstruction” in the sense you give here differ from good ol’ “reading between the lines”?

I agree with MagicMan. Deconstructionists don’t claim that everyone is signalling, they claim that truth-claims have certain inconsistencies or limitations, and that looking at these is often useful.

Some deconstructionists might say that inconsistencies like this exist because truth doesn’t exist. But another way of looking at deconstruction would be to say that humans aren’t perfectly objective, so our vocabulary for describing the world is fundamentally broken – the map is not the territory, but also the map is more like an ugly patchwork quilt than a solid sheet of paper. If all the breaks in a sentence or essay line up in a certain way, then that suggests something about what the mistakes of certain ideas are.

One problem in deconstruction is that what one deconstructionist reads as an assumption of the work or considers to be “inconsistent” can be pretty different than what a reasonable person might. The worst argument in the world runs rampant in the field, for example. Also, like a lot of criticism, it’s in practice more about destroying bad ideas than introducing new good ideas as alternatives – it’s not good at building useful things, and often it’s too quick to sneer at ideas or reject them on a flimsy basis. But deconstructionists are often good at poking holes in mainstream thought-cached ideas despite the method’s weaknesses.

It’s really more an art than a science. I use deconstruction, personally, in a “Random Noise is Our Most Valuable Resource” kind of way. I don’t take it seriously, but it’s sometimes nice for brainstorming.

My understanding is that within the field of literary criticism (where the technique of deconstruction originated), the intent of the author is *not* considered relevant to the interpretation of the text. Motive analysis is a useful skill to have, but the academic practice is very distinct from motive analysis.

Is there a test that a particular example of deconstruction can pass or fail? How do you know it works?

Do the “trained” agree among themselves on which ones are good and which are bad? How does one recognize who is sufficiently trained?

Serious question, isn’t deconstruction basically “Debate 101”? You find the weak points in the opponent’s arguments and destroy them.

If not, how is it different? How (from the outside) can we tell deconstruction from a mere desire to defeat the author’s/speaker’s argument?

I’ve read the link before. I’ve also read and reread the Wikipedia article. I still have no idea what Deconstruction entails. If I steelman this, it sort of resembles the rationalist game “Consider the Opposite”. Maybe.

You could just email Dweck and ask about the chronology of the study design, i.e. when they decided to collapse all the interventions together and break out subgroups, etc. And her general practices regarding creation/change of analysis plan after seeing the data.

Gelman’s “Garden of Forking Paths” is relevant here:

http://www.stat.columbia.edu/~gelman/research/unpublished/p_hacking.pdf

The frustrating thing is, I don’t know how to put a stop to weaselly studies like this. I’m a grad student and I can see myself doing this kind of thing all the time when I write papers. I try to at least be aware of it so that I’m not fooling myself (there’s a mental feeling that goes along with spin, like trying to stretch something into a state it shouldn’t be in, and I’ve learned to recognize and hate it). But it’s hard to avoid altogether – I’m under pressure from my supervisor to produce high-impact results and present them in the best light possible. And sure, I could try to make a stand and stop spinning my results, but what would that do? Probably my papers would just be published in slightly lower-quality journals (Ha! The better I try to make my papers the worse places they end up), or in some cases the paper wouldn’t get published at all, and my supervisor would be annoyed with me, and I might be delayed in finishing my PhD.

Something something incentives something something Moloch.

(Option 1) If you are under pressure to publish in an ideologically unbalanced field (like social psychology), there is a lot of low-hanging fruit in terms of results that the ideologues of the field don’t want to find (see, e.g., Lee Jussim’s work picking some of this fruit in social psychology). You can pick some of this fruit. Downside: you publish ideologically unpopular results.

(Option 2) Once you get your Ph.D., get a job that focuses more on teaching than research. Obviously there are still bad incentives in teaching, but if you’re a good teacher you have a lot of freedom here.

(Option 3) Contribute to what in most fields is a growing literature on methodology. If you do it well, you can get widely cited and recognized. See, for example, this very clever experiment in psychology. You might think this has the same downsides as option 1, but in fact methodological naysayers are often popular, largely because everyone agrees there’s a problem but is convinced it’s not their problem.

Actually the downside of (1) is that you don’t get your stuff accepted for publication.

I think it’s more accurate to say that it’s harder to get your stuff accepted for publication. (There are, after all, people like Jussim who get published.) On the other hand, it’s too easy to get published right now — witness the topic of this post. So perhaps the extra scrutiny is not entirely a bad thing.

What kind of life do you want?

Do you want an academic research position so you can have an academic research position? Or do you want an academic research position so that you can do good research?

That may make it sound like an “easy” decision, or that I am priming you for one answer over the other. I assure you I am not, but rather echoing back to you the essence of what you said. There are legitimate arguments either way.

If you don’t keep an eye on your ultimate goals, then you end up somewhere you did not want to be.

Well actually, I don’t want an academic research position at all. But the same question applies to my PhD, so it’s a fair point. And at this point I would say that, yeah, I am pretty much getting my PhD just to get my PhD. I think I mostly just drifted into going to grad school by default, because it seemed like the thing to do at the time (to use the LW-favoured terms, which I think are unfairly maligned, I was acting more NPC-ish than PC-ish). Now that I’m here, though…well, I get paid a livable wage, I’m not overworked at all, I have a very flexible schedule. So it’s not a bad gig, really. Of course, I’m quite cynical about the value of my research (not that it’s worthless, per se – more overhyped). But if you want to get published you have to play the spin game, and so I play the spin game.

So, get your PhD, but don’t sell your soul to do so.

But of course, there is also the “what then” question…

A lot of academics want a piece of what you might call the Motivational Writing and Speaking industry. Malcolm Gladwell, who repackages social science studies for employees, largely, in sales and marketing, really opened a lot of eyes among academics to how much money you can make speaking at corporate events. Gladwell’s fee for addressing, say, a corporate sales conference is routinely in the $50,000 and up range.

A large part of the appeal of Gladwell’s speeches is his assertion that what he’s telling you is Science with a capital S. So, if you are a social scientist, why not try to cut out the Gladwell-like middlemen and get into the greater Motivational industry yourself?

For example, here’s Dweck’s page on the website of her agent, the All American Speakers Bureau:

Carol Dweck

Leading Researcher in the Field of Motivation; Professor of Psychology at Stanford University; Author of “Mindset”

Categories: Authors, Mental Health, Motivation, Empowerment,

Booking Fee Range: $10,001 – $20,000

Speaker Travels From: California – CA

Carol S. Dweck, Ph.D., is one of the world’s leading researchers in the field of motivation and is the Lewis and Virginia Eaton Professor of Psychology at Stanford. Her research focuses on why people succeed and how to foster their success. More specifically, her work has highlighted the critical role of mindsets in business, sports, and education, and for self-regulation and persistence on difficult tasks in general. In addition, she has shown how praise for ability or talent can undermine motivation and learning.

http://www.allamericanspeakers.com/speakers/Carol-Dweck/9233

Dweck’s 10k to 20k speaker’s fee range is pretty low, by the way. I only recognize the names of about 1/4th of the celebs in that range, and most of them are over the hill, like Alan Bean, Alan Keyes, Alice Rivlin, Amber Rose, Andrew Fastow (I guess he’s out of prison for Enron by now), Andy Dick, Angela Davis, Bart Starr, and Bela Karolyi.

In contrast, in the $30,000 to $50,000 range, the bureau features such luminaries as Adam Carolla, Art Laffer, Boomer Esiason, Carmen Electra, Clayton Christensen (of Harvard BS), etc.

So, there’s a lot of upside if you can become a little more famous than Dr. Dweck is right now.

Here are some academics listed on the All American Speakers Bureau:

Cornel West

Dr. West’s Writing, Speaking, and Teaching Weaves Together the American Traditions of the Black Baptist Church, Progressive Politics, and Jazz

Fee Range: $30,001 – $50,000

Travels From: New Jersey – NJ

Richard Florida

Urbanist and Commentator on Creativity and Innovation; Author of “The Rise of the Creative Class”

Fee Range: $30,001 – $50,000

Travels From: District of Columbia – DC

Laura J. Snyder

Science Historian, Philosopher and Author of “The Philosophical Breakfast Club”; TED Talker

Fee Range: $5,001 – $10,000

Travels From: New York – NY

Michael Beschloss

Michael Beschloss is an award-winning historian of the Presidency and the author of eight books, including his most recent work, the acclaimed New York Times bestseller The Conquerors: Roosevelt, Truman and the Destruction of Hitler’s Germany, 1941-1945.

Fee Range: Please Contact

Travels From: District of Columbia – DC

Ray Kurzweil

Inventor, Entrepreneur, Author and Futurist

Fee Range: $50,001 and above

Travels From: Boston – MA

Adam Grant

Professor, The Wharton School of Business at the University of Pennsylvania and Author, “Give and Take: A Revolutionary Approach to Success”

Fee Range: $30,001 – $50,000

Travels From: Pennsylvania – PA

Alice M. Rivlin

Founding director of the Congressional Budget Office, served at the Department of Health

Fee Range: $10,001 – $20,000

Travels From: Washington – DC

Steve: perhaps I’m just not in tune with all of the professional incentives in social science right now, but in most fields — certainly my own — popular publishing is (I think wrongly) very much frowned upon, and contributes almost nothing towards tenure. So you’re balancing financial incentives in terms of speaker’s fees etc. against what strike me as much larger academic disincentives (not to mention the fact that most academics are not great public speakers).

Someone is making $30,000 for one or two hours of speechifying, and you think saying “…and so now we will not let you be a Tenured Professor of Somethingology” is a large disincentive?

Academics aren’t choosing between making $30,000 an hour on speaking or becoming tenured. They’re choosing between focusing their energies on making $30,000 an hour with a 1% chance of succeeding vs. focusing their energies on getting tenure with an 80% chance of succeeding.

Perhaps those percentages are off, but even if it’s, say, 5% vs. 50%, working towards tenure still seems the rational course of action.

There is essentially zero chance that 1% is too low an estimate. There are too many professors and not enough high-profile speaking engagements.

It was projected that the increase in number of professors in the US between 2004 and 2014 would be 524,000.

I’d say achieving tenure is also significantly below 50%. Wasn’t this discussed extensively in a former post?

Could well be. It of course depends also on your field and personal skills/qualifications.

Tenured academics remain tenured, and pretty much all influential academics are tenured. Practically no one can go the academic route to becoming a public speaker without first making it through the tenure track. So what actually happens in terms of incentives is that prior to receiving tenure, people have a strong incentive to do whatever maximizes their chances of progressing through the next bottleneck towards tenure, if that’s what they are aspiring to do. College students maximize their chances of getting into a PhD program. PhD candidates maximize their chances of getting a good post-doc fellowship. Post-docs maximize their chances of becoming associate professors. Associate professors maximize their chances of getting tenured. And then tenured professors maximize their chances of becoming influential, either within their field or by writing for more mainstream audiences. (Becoming prominent in one’s field before writing for a popular audience seems like a more-winning strategy than first aiming to write for a popular audience. Chomsky, Levitt, Christensen, Ariely, Pinker, Hawking, and most of the other big-name authors who are also academics were already tenured professors who were well-known within their field before they wrote a book that gained more mainstream popularity. The only noteworthy exception to this rule I can think of is Dawkins, who fell off the tenure track and was merely a lecturer/reader by the time The Selfish Gene made him famous. Even Gould, who is pretty much the iconic example of a pop-science scientist who gives pop-science a bad name among people who take scholarship seriously, became one of the biggest names within paleontology long before he wrote anything for a mainstream audience.)

I don’t know what your cutoffs are for picking your numbers… but if you are counting PhD candidates who hope to someday become professors as academics (which thepenforests’ comment that you replied to was), they seem pretty far-fetched to me.

After getting into a PhD program, academia becomes much more selective… I wouldn’t be terribly surprised if 50% of people who manage to become associate professors eventually get tenure, but that’s already pretty far along the path to becoming an academic. But that number does seem pretty high to me.

By my estimations, fewer than 5% of the people who go to grad-school hoping to eventually become tenured professors end up becoming tenured professors, and less than 1% of the tenured professors hoping to become celebrity researchers end up making it to the level that allows them to get enough speaking gigs that the speaking brings in more income a year than their day-job as a professor.

In math and physics at my university (which are the only departments I interacted with enough to have any data), there were many more PhD candidates and post-docs hoping to eventually become professors than there were total professors (associate+tenured), and there were more associate professors than there were tenured professors, and the average amount of time people spent tenured once tenured was at least three times as long as the average PhD candidate/post-doc spent in those two phases of their careers, so that gives a 1/(2*2*3) = 8.3% likelihood of someone who had managed to become a PhD candidate eventually becoming a tenured professor… that’s back of the envelope from a limited sample, but all of my rounding is generous to the prospect of getting tenure and all of the included facts would apply to most universities.
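As a quick check of that back-of-the-envelope figure, here is a minimal sketch that just multiplies the three factors from the comment (2, 2 and 3) and inverts the product. The function name `tenure_odds` is made up for illustration, and the reading of each factor as a "winnowing" step is an assumption about what the commenter meant, not something stated in the thread.

```python
def tenure_odds(*winnowing_factors):
    """Return 1 / (product of the factors), i.e. the implied chance
    of getting through every winnowing step.

    The factors themselves are the commenter's rough guesses,
    not measured data.
    """
    product = 1
    for factor in winnowing_factors:
        product *= factor
    return 1 / product


# The comment's factors: 2 (candidates per professor slot),
# 2 (associate vs. tenured), 3 (time tenured vs. time in training).
odds = tenure_odds(2, 2, 3)
print(f"{odds:.1%}")  # prints 8.3%
```

Changing any one factor shows how sensitive the estimate is: the result moves in direct proportion to each guess.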

It might not be fair to your comment to count all PhD candidates who hope to become professors as academics, even though most of them would describe themselves as such. My general impression of the weed-out process in academia is that it is practically non-existent until post-doc fellowships. 10/11 of my friends from undergrad that applied to get into a PhD in physics got into a program (all 10 of them hope to eventually become professors). I don’t think this is non-representative of people applying to PhD programs in hard sciences from top-tier universities. (I can’t compare to other universities, but the same heuristic holds for people interested in other hard sciences that I knew from my university. My friend group was drawn disproportionately from the hard sciences, but it was not drawn disproportionately from the people in the top of the class within those disciplines, I don’t think.)

Thanks for the thoughtful comment. I think you (and Steve) are right that the incentives change after tenure, and that the overall incentive structure is more like you described: work towards tenure until you get it, then work towards influence, which may come in the form of being a public intellectual but usually even then goes through prestige in one’s field first. And as I reflect on my own field, it does seem right that the big “public intellectuals” became so post-tenure.

I don’t know what your cutoffs are for picking your numbers… but if you are counting PhD candidates who hope to someday become professors as academics (which thepenforests’ comment that you replied to was), they seem pretty far-fetched to me.

I was thinking mainly of pre-tenure professors with those numbers. I suppose I was assuming that Ph.D. candidates aren’t going to be at a place in their career where they would pursue the popular media option. But upon reflection it seems to me to actually be anecdotally more likely for Ph.D. candidates than pre-tenure professors to go the popular media route – albeit on a much smaller scale than Carol Dweck et al. – perhaps partly because it looks like a better gig than actually finishing their Ph.D.

In math and physics at my university (which are the only departments I interacted with enough to have any data), there were many more PhD candidates and post-docs hoping to eventually become professors than there were total professors (associate+tenured), and there were more associate professors than there were tenured professors, and the average amount of time people spent tenured once tenured was at least three times as long as the average PhD candidate/post-doc spent in those two phases of their careers, so that gives a 1/(2*2*3) = 8.3% likelihood of someone who had managed to become a PhD candidate eventually becoming a tenured professor… that’s back of the envelope from a limited sample, but all of my rounding is generous to the prospect of getting tenure and all of the included facts would apply to most universities.

I think your numbers are probably more accurate than my earlier numbers, but doesn’t this presume a fixed size, i.e., that academia is not growing?

One small quibble. It isn’t $30,000 an hour, it’s $30,000 a day, because you have to allow for the time spent getting to and from an event that is probably a fair distance from where you live. Still pretty good pay, however.

I don’t know if Other is basing his picture of the academic hierarchy on a country other than the U.S., but he seems to have left out one step—assistant professor. That’s the position the post-doc is trying for. The next important step is tenure, which may come either with promotion to associate professor or, later, to full professor.

“In math and physics at my university … there were many more PhD candidates and post-docs hoping to eventually become professors than there were total professors”

But many institutions have no Ph. D program at all, and all of their professors need to come from somewhere. Your numbers are reasonable for the odds of becoming a professor at an institution that pursues graduate research, but should be raised significantly when you include all the undergraduate-only institutions. (That said, thanks for trying to get some numbers in the conversation! Such numbers are very hard to find.)

No. It was projected, back in 2007, that there would be an increase of 524,000 ‘postsecondary teachers’, which at an average salary of $64k in 2006, is not 524,000 professors. As the BLS comments of the ‘postsecondary teacher’ category involved in that projection, “Many jobs are expected to be for part-time or adjunct faculty.” (http://www.bls.gov/ooh/education-training-and-library/postsecondary-teachers.htm) Good luck making a speaking career on the strength of being an adjunct.

@gwern

The question proposed by Troy was what percentage of those pursuing an academic career focus on pursuing a speaking career, rather than optimizing their chance at tenure.

524,000 is the right number to use. That is the overall increase in the number of people pursuing an academic career. I assure you, all of those adjunct faculty members would love to have tenure, and are far more likely to get tenure than get on the speaking circuit.

The speaking circuit rewards people who are impressively credentialed in some way. Either they have an impressive array of academic honors, or an impressive number of sales of books, an impressive number of op-eds published in impressive news outlets, etc.

None of those sets of impressive credentials has a readily available path for most academics. Tenure, on the other hand, while hard to get, has a readily available path: apply for a tenure track job, be chosen for the tenure track job, do work that your university peers find to be worthy of tenure.

What is your point?

Regardless of whether Dweck made a reasonable gamble, the fact that she has a speaking agent shows that she is interested in speaking. Leaving aside the public speaking and the money, popular books can generate a lot of press coverage, which professors and deans also want. Writing a popular book may be a bad gamble, but it doesn’t take a lot of academics making that gamble for the people we hear about to be dominated by them.

It is very strange for you to emphasize tenure. Once you have tenure, popular writing is still bad for promotion, but who cares?

Dweck is almost 70. She spent decades working her way up through the system, moving from school to school, obtaining press coverage, until finally, at age 55, she moved to Stanford, wrote a popular book, had her press coverage explode, and finally had a shot as a speaker. Stanford may well have recruited her for this new phase in her career.

(I’m not sure what Steve’s point was, either.)

My point was that Steve seemed to me to be overemphasizing the extent to which “[a] lot of academics want a piece of what you might call the Motivational Writing and Speaking industry.” Obviously I don’t deny that some academics (like Dweck) are interested in that. I was just observing that academic incentives still favor not getting very involved in “popular” writing/speaking. I mentioned tenure because it is one of the biggest carrots the academic world offers, but obviously there are other incentives too.

There are a lot of tenured professors out there. Even ones battling for tenure have career incentives to publish papers announcing results that Malcolm Gladwell and Company would find comforting and usable.

Carol Dweck is 68. She came to Stanford when she was about 57, presumably with tenure. Public speaking allows her to sock away a nice nest egg. It also allows her to have more influence on her time by getting her ideas (and face) out there in front of more people.

I’m a little creeped out by this morphing into a discussion on Dweck’s personal qualities. Let’s keep this about the study.

Scott, that shouldn’t be the only thing you are creeped out by…

Dweck’s career got a nice little push in 2002 from Gladwell’s article in The New Yorker: “The Talent Myth: Are Smart People Overrated.”

http://www.newyorker.com/magazine/2002/07/22/the-talent-myth

Good Guy Scott Alexander:

“You can continue to expect less blogging.” Posts a new blog post every day for five days in a row.

I think the problem is he expects to have less writing time while he plans on working more, but on the job his mind is working on ideas that he feels compelled to type up. And research, footnote, annotate, etc.

He’s making a meta-point about expectations, obviously.

Just so long as he takes this into account when calibration time comes ’round again.

I really am really busy. For some reason I become more productive when I have less time. Either it has to do with me being so stimulated by working hard all day that I can’t turn off, or with time management being easier when you have so little of it – ie I don’t have to choose when to do the thing, I just do it in the only slot of free time that I have.

While we’re discussing statistics, I’ve had an idea on how to help alleviate the replication crisis floating around in my head for the last few days:

Rather than forcing journals to publish a truckload of negative results alongside the positive ones, just have researchers record each time they test a p-value. Then whenever they submit a paper, they get scrutinized based on how many p-value tests they did. Did the last 19 come up negative? Then no publication for you. This seems like it would perfectly eliminate the Elderly Hispanic Woman Effect. Obviously you need to drill this into people’s heads as a norm, such that not recording your p-value tests is as big a sin as outright falsifying data, but other than that it seems like an extremely cheap and easy way of improving things.

It also seems so simple that I have a bad feeling someone else has thought of it before and found a reason why it won’t work.

What Gelman describes as the forking path problem is that researchers don’t run that many tests: they ‘eyeball’ the data and make only a few tests they can see are likely to pass, then convince themselves they didn’t really engage in multiple testing that has to be corrected for (or just knowingly engage in the practice knowing that it misleads).

Personally, I don’t think the specific practices that Gelman complains about are the heart of the problem. In the Big Picture, what’s really missing is a critical attitude and a culture of doing quick reality checks on assertions in papers.

And a lot of that is due to a culture of what Orwell called “crimestop” or “protective stupidity.” People murkily grasp that asking a series of hard questions might lead you to thoughts that could get you in big career trouble. So, why bother?

I think people do badly enough on politically-non-radioactive scientific questions that political considerations can’t be the main source of the problem.

I agree. What Sailer says is just a small part of the problem. See Greg Francis’s work, the P-curve approach, or the Replication Index, for example. Social science research on topics with no clear political implications is as biased as any other.

Steve’s got an axe, and by gum he will grind it…

When 1) professors/researchers are expected to self fund via grants, 2) grant money is far more likely to go towards “successful” research because 3) the broad populace doesn’t want to hear about money being “wasted” on unsuccessful research, and 4) money available for research is under downward stress, what outcome should we expect?

But look how much better amateurs have done in the politically safe field of baseball statistics than professionals in the social sciences. You don’t need to cultivate Crimestop in baseball stats (except on steroids), but it is deleterious to science: “Crimestop refers to the ability to stop short of any thought that might be heretical or unorthodox before it is even thought, as if by instinct. It is the ability to misunderstand analogies, fail to perceive logical errors, and be repelled or bored by any train of thought or conversation that might be inimical to Ingsoc.”

@ Steve Sailer: I’m not sure how I feel about that analogy, because I think you’re overestimating how well amateurs have actually done in baseball statistics.

I think baseball stats look pretty good from the outside, in large part, because the state of the field was so dismal for so long – when you were in a situation where the conventional wisdom was totally and fundamentally wrong about the basic nature of the game of baseball, it’s not that hard to look good. And amateur work has been pretty decent at finding good measures for one specific part of baseball (offense).

On the other hand, offense is perhaps the easiest thing to measure in baseball, and amateur work has been much worse at measuring the harder things – and this has become more and more true as there has developed more and more of an actual professional field of baseball statistics. At this point, if you’re talking about things like defense or a catcher’s ability to frame pitches, the statistics that are good at measuring that are almost certainly going to be professional and non-public. We don’t actually have good public ways of measuring that.

And what’s more, I think that there are actually a lot of similar dynamics in the field that we’re talking about here – when you look at (for instance) Fangraphs and UZR, there’s certainly a narrative you can tell there about things with shitty outcomes being promoted anyway. UZR is frankly a crummy stat for measuring defense, and yet it gets a lot of play because it’s publicly available, and specifically associated with a well-known blog. It’s not ‘crimestop’ maybe but it’s not a great situation either.

Or…. maybe I just want to talk about baseball a bunch. Could be.

I think baseball stats look pretty good from the outside, in large part, because the state of the field was so dismal for so long – when you were in a situation where the conventional wisdom was totally and fundamentally wrong about the basic nature of the game, it’s not that hard to look good

Certainly. Might not the social sciences be another field which has long been in a dismal state because conventional wisdom was totally and fundamentally wrong about the basic nature of the game?

@ john schilling

Uh? Maybe. I don’t really know and I’m kind of confused about where you’re going with the line of argumentation.

I want to clarify the point of my comment, which was not to make a judgment on social science as it exists one way or the other. It was in the context of the argument over why the social sciences often seem to do badly, and the argument as to whether that was because of political bias in social sciences experimentation and reporting. Now, it may or may not be because of political bias – but my point was, I don’t think a comparison to baseball statistics proves anything either way, because (publicly available) baseball statistics aren’t as good as you might think and seem to have many of the same issues despite being apolitical. And the point I was making in passing was that one of the reasons, in my view, that baseball statistics seem to be so successful is because they were starting from such a low point. Whereas we’re able to see the flaws of social sciences much more easily, I think, because they’ve not had as easy a time of it.

Your point seems (and correct me if I’m misreading here) to be making an argument about the merits of the social sciences as against their critics – and whatever opinion I have about that issue, it’s not really all that connected to the point that I was originally making, which was pretty specifically about whether baseball statistics were more successful because apolitical. Which is fine – it just kind of confused me for a second, so lmk if I am misreading you here.

All of those caveats said… it’s certainly possible that there are basic and fundamental errors in the social sciences comparable to the thing. I don’t think I’m qualified to say either way. It does seem to me unlikely that there’s going to be anything comparable in the study of people, because I think human society is fundamentally substantially more complex than baseball. But I would certainly not say anything definite either way.

Baseball has better data and is a simpler game.

(oooh a chance to argue about baseball statistics on SSC)

I think baseball statistics are very good! Take the correlation between team WAR and wins. It’s not perfect, but r = .86, and when you factor in how much of a team’s win-loss record is pure luck (sequencing of hits, winning and losing close games, etc.), there’s not a whole lot of room to improve. Defense in particular lags behind, and catcher framing is sort of included in WAR but is misattributed to pitchers… but these are relative nitpicks compared to the clusterfuck that is much of social science.

That said, I will respectfully disagree with Steve here; the salient difference is that there is a vast trove of public data about baseball; to a certain granularity we know everything that happened on a major league field in the past four decades. Furthermore baseball’s structure makes it unusually amenable to statistical analysis; compare basketball and American football. Social science has vastly more variables, interacting in vastly more complex ways.

I do wish there were something analogous to the sabermetric community — a community of statistically-savvy amateurs, interested in social science but not concerned with whether their work has The Wrong Implications, ideologically. Inasmuch as Steve does this kind of thing, he’s doing the Lord’s work, but it’s not clear whether this approach can scale in the current political environment.

Or, rather than forcing them to ignore one result for every 19 failed tests, just correct for the number of failed tests by making the p-value necessary to establish significance harder and harder for each one.

Do you lose much by insisting on a p-value of 0.000001? It only roughly doubles the size of the study group you need, because the normal distribution drops off so fast; you can sort of argue that this is unethical if the study group is made of mice and you have to blend their brains to figure out what’s happening, but not if your cost is giving $25 amazon vouchers to undergraduates.

I remember that the particle physicists like their p-values nice and small. Let’s see… ah yes, 0.003 = “evidence of”, 0.0000003 = “discovery”. I played around with some random Gaussians and t-tests, and I think “roughly doubles” is a bit of an understatement – I think it’s more like a factor of four or five.

Standard error scales with one over the square root of the sample size – I think that’s it.
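For anyone who wants to check the "doubles" versus "four or five" disagreement without simulating, here is a rough sketch using the textbook normal-approximation rule that the required sample size is proportional to (z_alpha + z_beta)^2 for a two-sided test. The 80% power figure and the function name `relative_sample_size` are assumptions chosen for illustration; nobody in the thread specified a power level, and a different choice shifts the answer somewhat.

```python
from statistics import NormalDist


def relative_sample_size(alpha, power=0.8):
    """Quantity proportional to the sample size needed to detect a
    fixed effect with a two-sided z-test at significance level alpha.

    Uses the normal approximation n ∝ (z_alpha + z_beta)^2.
    The default power of 0.8 is an assumption, not from the thread.
    """
    std_normal = NormalDist()
    z_alpha = std_normal.inv_cdf(1 - alpha / 2)  # critical value
    z_beta = std_normal.inv_cdf(power)           # power requirement
    return (z_alpha + z_beta) ** 2


baseline = relative_sample_size(0.05)
strict = relative_sample_size(0.000001)
ratio = strict / baseline
print(f"sample size multiplier: {ratio:.1f}")
```

Under these assumptions the multiplier comes out at a bit over four, which is closer to the "factor of four or five" estimate than to "roughly doubles".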

Quibbles aside, I like the suggestion.

If you think people in the social sciences are hostile to attempted replications now, just imagine how bad it would get if they didn’t have the 1/20 fluke rate to fall back on when their effects failed to replicate!

Well, if you use a familywise error rate and only one of your hypothesis tests is significant, you could still say that there was a 1/20 fluke rate that one of them wouldn’t fail overall and it just so happened that the significant result is whatever the other person tried to replicate.

Yes; this was specifically in response to the idea of just insisting on a p-value of 0.000001.

One study will show an effect for only low GPA, another for only high GPA, one for minority students, one for non-minority, one for women, one for men etc. Sooner or later someone will come along and say hey, it works for everybody.

I’m imagining something like this xkcd, only with ~20-50 extra research teams, each finding that a different random color causes acne and only getting published in that case, followed by “review shows acne caused by all colors of jelly beans.”

https://xkcd.com/882/
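The jelly bean scenario is easy to put numbers on. This is a minimal sketch assuming the tests are independent and every null hypothesis is true (a simplification; real subgroup analyses are usually correlated). The function names are made up for illustration. The second function is the standard Bonferroni correction, which is one common way of making the threshold harder as the number of tests grows.

```python
def prob_at_least_one_fluke(n_tests, alpha=0.05):
    """Chance that at least one of n independent tests of a true
    null hypothesis comes out 'significant' at level alpha."""
    return 1 - (1 - alpha) ** n_tests


def bonferroni_threshold(n_tests, alpha=0.05):
    """Per-test p-value threshold that keeps the family-wise
    error rate at or below alpha (Bonferroni correction)."""
    return alpha / n_tests


fluke = prob_at_least_one_fluke(20)
corrected = bonferroni_threshold(20)
print(f"P(at least one fluke in 20 tests) = {fluke:.2f}")
print(f"Bonferroni per-test threshold     = {corrected:.4f}")
```

With 20 colors of jelly bean, a spurious "significant" result somewhere is more likely than not, which is the comic's point.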

Judith Harris, in The Nurture Assumption, provides a real world example of the phenomenon—birth order research.

As much as I appreciate JRH, I think she’s wrong about that. I’ve seen some really good looking studies, and the Less Wrong Survey, which I did myself and so I know isn’t full of trickery, shows very strong birth order effects.

I was thinking of stereotype threat.

If the interventions helped students with low GPA’s, but had a neutral effect overall, does that mean they made good students do worse?

It wasn’t neutral overall. It was neutral on “students not at risk for dropping out of high school” (or “ordinary students”), the complement of the population on which it was positive.

The overall effect was not statistically significant. Doesn’t mean it didn’t exist, just that if there was one, it was small enough to be lost in the noise.

Statistical significance is weird and defies a lot of standard arithmetic. There can be no statistically significant difference between groups A and B, no statistically significant differences between groups B and C, and yet there can be a statistically significant difference between groups A and C.
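A toy example of that non-transitivity, as a sketch: the three group means and standard errors below are invented purely for illustration, and the comparison is a plain two-sided z-test on the difference of means rather than anything from the paper.

```python
from math import sqrt
from statistics import NormalDist


def two_sided_p(mean_x, se_x, mean_y, se_y):
    """Two-sided p-value for the difference between two independent
    group means, using a normal approximation."""
    z = (mean_x - mean_y) / sqrt(se_x ** 2 + se_y ** 2)
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Hypothetical groups: (mean, standard error). Made-up numbers.
groups = {"A": (0.0, 0.45), "B": (1.0, 0.45), "C": (2.0, 0.45)}

p_ab = two_sided_p(*groups["A"], *groups["B"])
p_bc = two_sided_p(*groups["B"], *groups["C"])
p_ac = two_sided_p(*groups["A"], *groups["C"])

print(f"A vs B: p = {p_ab:.3f}")
print(f"B vs C: p = {p_bc:.3f}")
print(f"A vs C: p = {p_ac:.4f}")
```

Each adjacent pair is one unit apart and falls short of p < 0.05, while A and C are two units apart with the same noise and clear the bar comfortably.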

I think you are ignoring the elephant in the room. This study title (and the revealed manipulation of the analysis) are deliberate and intentional acts of deception as opposed to inadvertent incompetence. Being misled about the efficacy of growth mindset is a minor level of harm compared to the damage done to the integrity of the scientific method. The former is malfeasance, the latter is criminal.

How can any 15-minute intervention have an effect, when they tell us that what a parent does over the course of 18 years hardly makes a difference?

(Irony alert: I’m sitting at the breakfast table with my daughter, ignoring her while I write this. Just think how great an effect I could have if I stopped reading SSC to give her a 15-minute pep talk on growth mindset!)

It’s usually different people who say those things, though. And while it’s easy to cheat and squint and peek at the data to find “evidence” by e.g. looking at cherry picked subgroups, it’s even easier to NOT find evidence if that’s your goal.

This subtlety tends to get lost, but when people say parents can’t affect their kids, what they mean is “can’t affect their kids’ personalities in a way that lasts past their contact with their children and carries over into different domains of life.”

So there might be some wiggle room to say that an intervention at school affects performance at school, at least in the short-term and at the same school where it was given.

You’re right that this is a big contradiction, and I am getting increasingly frustrated with twin studies showing completely opposite results to everything else, but I still don’t have a good solution.

I was rather puzzled when I saw that the title of the paper was about “academic overachievement” – like over-achievement is a bad thing?? But then I read the paper and noticed the title uses the word “underachievement”, which makes more sense.

On to more serious matters. The whole “collapse the interventions into one analysis” thing for satisfactory course completion seems incredibly dodgy, especially as they report results for each of the three interventions when GPA was the dependent variable. However, the results for each intervention regarding satisfactory course completion are reported in the supplementary materials. The truth is not pretty. I’ll just quote a chunk of text describing the results for the benefit of the statistically inclined:

We focused on the “collapsed treatment” regression because this analysis is less statistically powerful than the analysis of semester GPA due to the smaller sample (only at-risk students) and the less sensitive binary outcome. However, we also conducted a “by condition” regression; this was equivalent except that it used individual treatment contrasts in place of collapsed treatment effects. The model revealed a significant Time x Purpose interaction, OR=1.58, Z=1.97, p=0.048, 95% CI [1.00, 2.48], a trending Time x Mindset interaction, OR=1.38, Z=1.52, p=0.13, 95% CI [0.91, 2.10], and a marginal Time x Combined interaction, OR=1.52, Z=1.78, p=0.075, 95% CI [0.95, 2.41].

The takeaway message: the sense of purpose intervention produced a statistically significant result; the growth mindset and the combined interventions each did not. The result for the growth mindset was not even close to significance, yet they describe p = .13 as trending. Come on, really! The combined intervention was closer to a conventional level of significance with p = .075.
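For the statistically inclined who want to sanity-check the quoted figures, here is a sketch that backs out Z and p from each reported odds ratio and 95% confidence interval. It assumes the intervals are symmetric on the log-odds scale (the usual construction for logistic regression, but an assumption about the paper's method), and the function name is made up. Because the inputs are rounded to two decimals, the recomputed values only match the supplement approximately.

```python
from math import log
from statistics import NormalDist


def z_and_p_from_ci(odds_ratio, ci_low, ci_high, level=0.95):
    """Recover the Z statistic and two-sided p-value from an odds
    ratio and its confidence interval, assuming the interval is
    symmetric on the log-odds scale."""
    std_normal = NormalDist()
    crit = std_normal.inv_cdf(0.5 + level / 2)          # about 1.96
    se = (log(ci_high) - log(ci_low)) / (2 * crit)      # SE of log OR
    z = log(odds_ratio) / se
    p = 2 * (1 - std_normal.cdf(abs(z)))
    return z, p


# (OR, CI low, CI high) as quoted from the supplementary materials.
reported = {
    "Purpose": (1.58, 1.00, 2.48),
    "Mindset": (1.38, 0.91, 2.10),
    "Combined": (1.52, 0.95, 2.41),
}

results = {name: z_and_p_from_ci(*vals) for name, vals in reported.items()}
for name, (z, p) in results.items():
    print(f"{name:9s} Z = {z:.2f}, p = {p:.3f}")
```

The recomputed values land within rounding distance of the quoted Z and p figures, so the numbers in the supplement are at least internally consistent.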

So what can be seen in this paper is that a result for growth mindset intervention specifically is reported in the body of the paper when it is statistically significant, yet shunted off into the supplementary material (which fewer people will be inclined to read as it usually contains the more boring stuff) when it is not significant. I suppose this is not technically dishonest, yet it does present a distorted and misleading picture of what was actually found.

Thanks for that. It was just what I was looking for and I should have known there’d be a supplement somewhere.

People calling everything a “deconstruction” is the only thing in the modern world more annoying than MLP fans.

At this point the reflexive snarky backlash is worse than the original overuse IMO.

Will I be ahead of the curve if I complain here about the reflexive backlash against the reflexive backlash embodied by, say, ShardPhoenix’s comment?

Derrida is actually better than his fanboys; the tiny, tiny exposure to his work that I had* showed that there is real mental activity, real thinking, going on.

You don’t need to agree with it, but he does show evidence of having an intellect and using it 🙂

*Through a book on the uncanny that I had the misfortune of purchasing. Written by an obviously third-rate** English professor of English at an English red-brick university, who fanboyed all over the place about Derrida and Derridaism until I was ready to burn Derrida – about whom I knew nothing but his name and what this prat was telling me he and his chums understood Derrida to be doing – at the stake from sheer annoyance, and whose version of what Derrida was on about struck me as incoherent and trite.

He then made the error of quoting some actual Derrida, rather than “Here’s my second-hand version”, and the difference was astounding. Derrida may be a typical French intellectual, but it’s undeniable that he is an intellectual. His fanboys? That’s a different matter.

** It may be that I am very, very stupid; the reviews laud this to the skies, but I think it’s dreadful and not half as clever as it thinks it is.

> there is real mental activity, real thinking, going on

Which is, crucially, different from being correct. I’ve no doubt that Derrida is a genius of some kind, but it’s possible to be a genius and for almost all of your claims to still be wrong. Compare theologians making brilliantly reasoned, impeccably-cited arguments about how many angels can dance on the head of a pin. Or, for a more concrete and more SSC-familiar example, Chesterton.

I’m going to have to be pedantic here and point out that there’s no evidence people actually seriously debated angels dancing on pins.

As your link points out, Aquinas did seriously consider whether multiple angels could occupy the same point in space. It’s pretty clear to me that “Scholars debated how many angels may dance on the head of a pin” is a colorful or pop-theology way of saying “Scholars studied and debated questions like whether multiple angels can occupy the same point in space”. Am I the only one who thinks this?

It’s kind of like how cosmologists today can be said to debate whether an astronaut falling into a black hole would turn into spaghetti or encounter a wall of fire. They aren’t really talking about what happens to astronauts, they’re talking about the properties of black holes. But as a layperson I’m happy to use the more colorful language.

@anonymous: “Philosophers of yesteryear debated such bizarre arcana as whether pushing a fat man from a bridge would stop a trolley and whether this was moral…”

TIL: angels are bosons.

For the record, I’m aware that the angels-on-the-head-of-a-pin story lies somewhere between ‘egregious oversimplification’ and ‘made up’. But I went for it anyway because it expressed what I wanted.

(Believing you might get away with using a pop simplification as a figure of speech on a forum full of rationalists? Talk about naive…)

@Nornagest, on the contrary, Aquinas concludes that two angels can’t occupy the same place and therefore are fermions.

Honestly, after reading that passage from Aquinas this seems like a perfectly fair thing to mock.

But if angels existed, that would be one of the first things that rationalists would investigate – is there some resource of “space” that angels inhabit, or is it a completely different plane.

The actual question there is valid and relevant.

Right. But my point is exactly that it’s all wasted effort because the underlying axiom is false; angels don’t exist. (Fleshing out the rest of the analogy to the current case is left as an exercise for the reader.)

I’ve tried tracking down the “angels on pinhead” quote, and the nearest I’ve come to an answer is that it may originate with, or at least was popularised by, Benjamin Disraeli’s father, Isaac Disraeli, as one of the Victorian progressive jibes about the musty old Middle Ages – though it may date as far back as the 17th century.

Alas, it is less a “pop culture version” of a real question and more one of those “everybody knows” type things (you know: everybody knows Columbus set sail to prove the world was round, etc.)

As to Chesterton, I can but answer by getting Hilaire Belloc to speak for me, in the verse he wrote on this very topic – but before we start, we do all know what a don is, don’t we? (Have patience with me; I often see a lack of knowledge about things I’d consider commonplace, but then I’m one of the dinosaurs) :

Some day, people will make jibes about how Rationalists used to debate whether or not cactus people can factor enormous numbers.

” but it’s possible to be a genius and for almost all of your claims to still be wrong. … Or, for a more concrete and more SSC-familiar example, Chesterton.”

At least one of GKC’s claims was wrong—his refutation of evolution depended on his not understanding it. But I don’t think almost all of them were. Compared to his more in fashion opponents, Shaw and Wells, he looks pretty good.

Clearly the best mindset interventions are universal love and transcendent joy.

But they only work if you can convince people to GET OUT OF THE CAR.

Mostly thanks to this blog I’ve largely stopped believing in “social science” altogether. The field seems riddled with intellectual dishonesty, conscious or not.

The preregistered audits that show most social science studies are false also show a large fraction are true, enough that you should still update significantly off the existence of social science claiming X, even though you shouldn’t yet think it likely.

And for old well-established findings most are true (at least in that the original experiments replicate). See the Reproducibility Project and Many Labs Project.

I’ve been a social science aficionado since 1972. I love the social sciences.

You just have to read social science papers very carefully, just like while watching POW Jeremiah Denton explain to the TV camera how nicely his North Vietnamese captors were treating him, you had to notice he was blinking in an odd way.

I sometimes compare a research paper to a sandwich; the introduction and conclusion are the bread, the methods and results are the filling. There seem to be quite a few papers which resemble prime steak between two bits of stale Chorleywood-process “bread”.

I remember when I realized how distorted things could get talking to a grad student in my old uni when we were out drinking.

She was complaining about her supervisor being a dick and doing things like sharing her data with others before she could publish it herself under her own name. Her thesis was on risk taking behavior in extreme sports and she’d spent many months collecting data. I remember a conversation along the lines of

“So are you thinking of switching supervisors?”

“I would in a heartbeat, except the only other lecturer who could be my supervisor in the department is [academic’s name] and the only way I’d *ever* be able to get anything whatsoever published with her would be to make it all about how women are discriminated against in extreme sport”

“Oh, so your data showed women were discriminated against?”

*snort of laughter* “Nope, but if I want to get away from [dickhead academic’s name] that’s what it’s going to have to show”

For example, Raj Chetty’s big Harvard project on “Where are the lands of opportunity” has gotten tons of national publicity. Hillary Clinton is begging Raj for his insights.

But the more I’ve looked at Chetty’s famous map and its supporting data, the more apparent it is that practically nobody has thought hard about what Chetty has found.

For example, a big effect on his map is that being a blue collar teenager on the northern Great Plains in 1996 correlated with making a lot more money in 2011 than your parents made in 1996. Well, when I dug into Chetty’s online databases, it became obvious that large parts of his map are dominated by sparsely populated sections of the northern part of the center of the country, from which a lot of early-30s blue collar guys who can stand working outdoors in North Dakota winters have recently been recruited to make big bucks in the fracking boom.

In contrast, their equivalents in the Southeast, who typically had their best years working construction in the exurbs, got killed by the slowdown in construction after 2008. (Another factor is that the Southeast is filling up with illegal aliens who will work construction cheap, so white blue collar guys in the Southeast are getting their wages pounded down by Latin Americans who find the Southeastern climate acceptable, while North Dakota’s fracking fields are mostly worked by white guys used to frigid weather.)

So, Chetty’s attempt to come up with some enduring explanations for some of his patterns (the Legacy of Segregation? Sprawl?) that don’t mention race is largely pointless, because many of the effects he observed are temporary: North Dakota isn’t quite as booming today as in 2011, and North Carolina home construction isn’t as dead in the water anymore either.

It mostly just takes some critical reading skills to turn the current morass of social science papers into something useful and informative.

“Mostly thanks to a site that deliberately points out bad examples, I have come to believe that there are only bad examples.”

…

I’m not sure that’ll work out for you. What are you going to go on instead of peer reviewed science? Your gut feeling?

If I had the impression that this kind of thing was a rare exception obviously I’d feel differently, but I don’t. And most reasonably intelligent people seem to get on fine mostly ignoring all of social science and relying on tradition, anecdotal evidence, and instinct. It’s not that absolutely all social science studies are wrong, but that they’re wrong enough often enough that there’s low-to-negative value in paying attention to them, especially at the pop-science headline level.

“especially at the pop-science headline level.”

This is key. Read the papers. Read the papers. Read the papers. Not the articles about the papers, not the abstracts of the papers, read the whole thing. Or at least the Methods section and the most important numbers and tables in the Results section.

Even our esteemed host fails this sometimes; a couple months back he linked to a parenting-doesn’t-matter study which had the problem that its authors apparently forgot that power was a thing.

Does it matter if we read the papers? Policy is made based on headlines and executive summaries, if we’re lucky. The real skill in social science is abstract writing, to get a conclusion which connects to the data if you squint a bit. The research is nearly busy work.

“For if ye read only the headlines, what reward have ye? Do not even the policymakers the same?

“And if ye take the abstract at its face, what do ye more than others? do not even the science journalists so?”

Well, the point was that knowing the truth is nice, but only really matters if it is actionable. Some of it might be, especially for Scott or someone in a similar profession, but mostly would only matter in terms of wide scale policy, and few have much impact on such.

Perhaps one could push for more or fewer growth mindset mantras in the local school district, and more power to you, but these are increasingly centralized anyway.

I think avoiding social science altogether is the wrong reaction. There are important, robust results in social science that people should know about. Some, such as Milgram’s obedience experiments, are widely (and rightly) trumpeted. Others, especially those offending progressive “sacred values,” are not.

So long as you have a critical eye, I think a good introductory textbook in, say, social psychology, can be very informative — you just have to be able to test claims against prior plausibility and where you would expect researchers’ bias to lie. Also informative can be reading the writing of contrarian social scientists, such as Lee Jussim or Jonathan Haidt.

Milgram’s experiments and the way he portrayed and interpreted them have been strongly criticized, and Milgram’s work may suffer from the same kind of problems as many other social science experiments.

Fair enough. I’ve read some of the criticisms, though not the book being discussed here. I am confident that Milgram’s findings are by and large correct because of repeated replications of the experiment. But I’m quite willing to grant that some of his particular claims were ill-supported, based on bad experimental design, or both.

https://slatestarcodex.com/2014/12/17/the-toxoplasma-of-rage/.

I mostly just write about social science findings I’m skeptical of – the good ones get a links post if they get mentioned at all. I think overall there’s a lot of good there, especially if you read between the lines as is mentioned elsewhere on this thread.

Note that this is only the fuckery that you see. There’s probably more fuckery going on that you don’t see. So update in the opposite direction of these people’s claims.

Personally, I don’t think the Motivational Speaking/Writing Industry is wholly a hoax.

For example, there are a lot of high-earning salesmen out there who will spend their own money to hear motivational speakers. (Granted, a lot of salesmen want to get into the motivational speaking business themselves because it beats what they’re currently doing for a living, so a lot of salesmen are at motivational seminars to study the pros so they can get cushy careers giving motivational seminars … But, still …)

But if we think of what Dweck is trying to do as being part of the venerable American tradition of motivational speaking/writing, we can get a more realistic perspective on its potential even when well executed. I have no idea if Dweck herself is good at motivating students, but I also don’t doubt that some people out there are, on the whole, pretty good and some are pretty bad.

The problem with Dweckism is that people are hoping she’s going to discover some eternal scientific truth that can be used to make students more motivated forever and ever, like Newton’s law of gravity keeps working and working.

But if we look at the motivational speaker industry, we see constant churn and little agreement. Sure, there are classics like Dale Carnegie and Napoleon Hill, but there is a huge amount of effort put into generating some level of novelty. Motivational speakers don’t want to be too original, but they also don’t want to be too repetitious of what everybody else is doing.

Another thing we see is that different motivational speakers work best for different people. For example, my first roommate in college was devoted to playing Zig Ziglar tapes at all hours. I got a different roommate. On the other hand, I find Paul Graham’s essays on how to get rich in Silicon Valley admirable. Granted, Graham hasn’t inspired me to get rich in Silicon Valley yet, but, still …

So, what we see is that motivating people isn’t like coming up with Maxwell’s equations; motivating people is like the motivational speaker business: a constant tumult of small successes dogged by declining impact as boredom and skepticism set in, offset by new fads and new personalities. In fact, that sounds a lot like the educational research business, as well.

Yeah, the problem is that various bodies are going to seize on this as “Let the classes do two online sessions and Bob’s your uncle – no need for teachers, support or intervention, the kids can monitor themselves and do it all online!”

Aside from what Scott said, it’s also suspicious that they report all their effect sizes after adjustment for various covariates. “Zero-order” effects are not reported. They also use data only from “core courses”, and admit in a footnote that including data from all courses would have weakened the results. It’s not clear how they decided which courses were “core.”

It certainly seems that way. There are red flags all over that paper.