[Disclaimer: I did odd jobs for MIRI once or twice several years ago, but I am not currently affiliated with them in any way and do not speak for them.]

I.

A recent Tumblr conversation on the Machine Intelligence Research Institute has gotten interesting and I thought I’d see what people here have to say.

If you’re just joining us and don’t know about the Machine Intelligence Research Institute (“MIRI” to its friends), they’re a nonprofit organization dedicated to navigating the risks surrounding “intelligence explosion”. In this scenario, a few key insights around artificial intelligence can very quickly lead to computers so much smarter than humans that the future is almost entirely determined by their decisions. This would be especially dangerous since most AIs use very primitive goal systems inappropriate for and untested on intelligent entities; such a goal system would be “unstable” and from a human perspective the resulting artificial intelligence could have apparently arbitrary or insane goals. If such a superintelligence were much more powerful than we are, it would present an existential threat to the human race.

This has almost nothing to do with the classic “Skynet” scenario – but if it helps to imagine Skynet, then fine, just imagine Skynet. Everyone else does.

MIRI tries to raise awareness of this possibility among AI researchers, scientists, and the general public, and to start foundational research in more stable goal systems that might allow AIs to become intelligent or superintelligent while still acting in predictable and human-friendly ways.

This is not a 101 space and I don’t want the comments here to all be about whether or not this scenario is likely. If you really want to discuss that, go read at least Facing The Intelligence Explosion and then post your comments in the Less Wrong Open Thread or something. This is about MIRI as an organization.

(If you’re really just joining us and you don’t know about Tumblr, run away)

II.

Tumblr user su3su2u1 writes:

Saw some tumblr people talking about [effective altruism]. My biggest problem with this movement is that most everyone I know who identifies themselves as an effective altruist donates money to MIRI (it’s possible this is more a comment on the people I know than the effective altruism movement, I guess). Based on their output over the last decade, MIRI is primarily a fanfic and blog-post producing organization. That seems like spending money on personal entertainment.

Part of this is obviously mean-spirited potshots, in that MIRI itself doesn’t produce fanfic and what their employees choose to do with their own time is none of your damn business.

(well, slightly more complicated. I think MIRI gave Eliezer a couple weeks vacation to work on it as an “outreach” thing once. But that’s a little different from it being their main priority.)

But more serious is the claim that MIRI doesn’t do much else of value. I challenged Su3 with the following evidence of MIRI doing good work:

A1. MIRI has been very successful with outreach and networking – basically getting their cause noticed and endorsed by the scientific establishment and popular press. They’ve gotten positive attention, sometimes even endorsements, from people like Stephen Hawking, Elon Musk, Gary Drescher, Max Tegmark, Stuart Russell, and Peter Thiel. Even Bill Gates is talking about AI risk, though I don’t think he’s mentioned MIRI by name. Multiple popular books have been written about their ideas, such as James Miller’s Singularity Rising and Stuart Armstrong’s Smarter Than Us. Most recently Nick Bostrom’s book Superintelligence, based at least in part on MIRI’s research and ideas, is a New York Times best-seller and has been reviewed positively in the Guardian, the Telegraph, Salon, the Financial Times, and the Economist. Oxford has opened up the AI-risk-focused Future of Humanity Institute; MIT has opened up the similar Future of Life Institute. In about a decade, the idea of an intelligence explosion has gone from Time Cube level crackpottery to something taken seriously by public intellectuals and widely discussed in the tech community.

A2. MIRI has many publications, conference presentations, book chapters and other things usually associated with normal academic research, which interested parties can find on their website. They have conducted seven past research workshops which have produced interesting results like Christiano et al’s claimed proof of a way around the logical undefinability of truth, which was praised as potentially interesting by respected mathematics blogger John Baez.

A3. Many former MIRI employees, and many more unofficial fans, supporters, and associates of MIRI, are widely distributed across the tech community in industries that are likely to be on the cutting edge of artificial intelligence. For example, there are a bunch of people influenced by MIRI in Google’s AI department. Shane Legg, who writes about how his early work was funded by a MIRI grant and who once called MIRI “the best hope that we have”, was pivotal in convincing Google to set up an AI ethics board to monitor the risks of the company’s cutting-edge AI research. The same article mentions Peter Thiel and Jaan Tallinn as leading voices who will make Google comply with the board’s recommendations; they also happen to be MIRI supporters and the organization’s first and third largest donors.

There’s a certain level of faith required for (A1) and (A3) here, in that I’m attributing anything good that happens in the field of AI risk to some sort of shady behind-the-scenes influence from MIRI. Maybe Legg, Tallinn, and Thiel would have pushed for the exact same Google AI Ethics Board if none of them had ever heard of MIRI at all. I am forced to plead ignorance on the finer points of networking and soft influence. Heck, for all I know, maybe the exact same number of people would vote Democrat if there were no Democratic National Committee or liberal PACs. I just assume that, given a really weird idea that very few people held in 2000, an organization dedicated to spreading that idea, and the observation that the idea has indeed spread very far, the organization is probably doing something right.

III.

Our discussion on point (A3) degenerated into Dueling Anecdotal Evidence. But Su3 responded to my point (A1) like so:

[I agree that MIRI has gotten shoutouts from various thought leaders like Stephen Hawking and Elon Musk. Bostrom’s book is commercially successful, but that’s just] more advertising. Popular books aren’t the way to get researchers to notice you. I’ve never denied that MIRI/SIAI was good at fundraising, which is primarily what you are describing.

How many of those thought leaders have any publications in CS or pure mathematics, let alone AI? Tegmark might have a math paper or two, but he is primarily a cosmologist. The FLI’s list of scientists is (for some reason) mostly again cosmologists. The active researchers appear to be a few (non-CS, non-math) grad students. Not exactly the team you’d put together if you were actually serious about imminent AI risk.

I would also point out “successfully attracted big venture capital names” isn’t always a mark of a sound organization. Black Light Power is run by a crackpot who thinks he can make energy by burning water, and has attracted nearly 100 million in funding over the last two decades, with several big names in energy production behind him.

And to my point (A2) like so:

I have a PhD in physics and work in machine learning. I’ve read some of the technical documents on MIRI’s site, back when it was SIAI and I was unimpressed. I also note that this critique is not unique to me, as far as I know the GiveWell position on MIRI is that it is not an effective institute.

The series of papers on Lob’s theorem are actually interesting, though I notice that none of the results have been peer reviewed, and the papers aren’t listed as being submitted to journals yet. Their result looks right to me, but I wouldn’t trust myself to catch any subtlety that might be involved.

[But that just means] one result has gotten some small positive attention, and even those results haven’t been vetted by the wider math community yet (no peer review). Let’s take a closer look at the list of publications on MIRI’s website- I count 6 peer reviewed papers in their existence, and 13 conference presentations. That’s horribly unproductive! Most of the grad students who finish a physics PhD will publish that many papers individually, in about half that time. You claim part of their goal is to get academics to pay attention, but none of their papers are highly cited, despite all this networking they are doing.

Citations are the standard way to measure who in academia is paying attention. Apart from the FHI/MIRI echo chamber (citations bouncing around between the two organizations), no one in academia seems to be paying attention to MIRI’s output. MIRI is failing to make academic inroads, and it has produced very little in the way of actual research.

My interpretation, in the form of a TL;DR:

B1. Sure, MIRI is good at getting attention, press coverage, and interest from smart people not in the field. But that’s public relations and fundraising. An organization being good at fundraising and PR doesn’t mean it’s good at anything else, and in fact “so good at PR they can cover up not having substance” is a dangerous failure mode.

B2. What MIRI needs, but doesn’t have, is the attention and support of smart people within the fields of math, AI, and computer science, whereas now it mostly has grad students not in these fields.

B3. While having a couple of published papers might look impressive to a non-academic, people more familiar with the culture would know that their output is woefully low. They seem to have gotten about five to ten solid publications in during their decade-long history as a multi-person organization; one good grad student can get a couple solid publications a year. Their output is less than expected by like an order of magnitude. And although they do get citations, this is all from a mutual back-scratching club of them and Bostrom/FHI citing each other.

IV.

At this point Tarn and Robby joined the conversation and it became kind of confusing, but I’ll try to summarize our responses.

Our response to Su3’s point (B1) was that this is fundamentally misunderstanding outreach. From its inception until about last year, MIRI was in large part an outreach and awareness-raising organization. Its 2008 website describes its mission like so:

In the coming decades, humanity will likely create a powerful AI. SIAI exists to confront this urgent challenge, both the opportunity and the risk. SIAI is fostering research, education, and outreach to increase the likelihood that the promise of AI is realized for the benefit of everyone.

Outreach is one of its three main goals, and “education”, which sounds a lot like outreach, is a second.

In a small field where you’re the only game in town, it’s hard to distinguish between outreach and self-promotion. If MIRI successfully gets Stephen Hawking to say “We need to be more concerned about AI risks, as described by organizations like MIRI”, is that them being very good at self-promotion and fundraising, or is that them accomplishing their core mission of getting information about AI risks to the masses?

Once again, compare to a political organization, maybe Al Gore’s anti-global-warming nonprofit. If they get the media to talk about global warming a lot, and get lots of public intellectuals to come out against global warming, and change behavior in the relevant industries, then mission accomplished. The popularity of An Inconvenient Truth can’t just be dismissed as “self-promotion” or “fundraising” for Gore; it was exactly the sort of thing he was gathering money and personal prestige in order to do, and should be considered a victory in its own right. Even though eventually the anti-global-warming cause cares about politicians, industry leaders, and climatologists a lot more than they care about the average citizen, convincing millions of average citizens to help was a necessary first step.

And what is true of An Inconvenient Truth is true of Superintelligence and other AI risk publicity efforts, albeit on a much smaller scale.

Our response to Su3’s point (B2) was that it was just plain factually false. MIRI hasn’t reached big names from the AI/math/compsci field? Sure it has. Doesn’t have mathy PhD students willing to research for them? Sure it does.

Peter Norvig and Stuart Russell are among the biggest names in AI. Norvig is currently the Director of Research at Google; Russell is Professor of Computer Science at Berkeley and a winner of various impressive sounding awards. The two wrote a widely-used textbook on artificial intelligence in which they devote three pages to the proposition that “The success of AI might mean the end of the human race”; parts are taken right out of the MIRI playbook and they cite MIRI research fellow Eliezer Yudkowsky’s paper on the subject. This is unlikely to be a coincidence; Russell’s site links to MIRI and he is scheduled to participate in MIRI’s next research workshop.

Their “team” of “research advisors” includes Gary Drescher (PhD in CompSci from MIT), Steve Omohundro (PhD in physics from Berkeley but also considered a pioneer of machine learning), Roman Yampolskiy (PhD in CompSci from Buffalo), and Moshe Looks (PhD in CompSci from Washington).

Su3 brought up the good point that none of these people, respected as they are, are MIRI employees or researchers (although Drescher has been to a research workshop). At best, they are people who were willing to let MIRI use them as figureheads (in the case of the research advisors); at worst, they are merely people who have acknowledged MIRI’s existence in a not-entirely-unlike-positive way (Norvig and Russell). Even if we agree they are geniuses, this does not mean that MIRI has access to geniuses or can produce genius-level research.

Fine. All these people are, no more and no less, is evidence that MIRI is succeeding at outreach within the academic field of AI, as well as in the general public. It also seems to me to be some evidence that smart people who know more about AI than any of us think MIRI is on the right track.

Su3 brought up the example of BlackLight Power, a crackpot energy company that was able to get lots of popular press and venture capital funding despite being powered entirely by pseudoscience. I agree this is the sort of thing we should be worried about. Nonscientists outside of specialized fields have limited ability to evaluate their claims. But when smart researchers in the field are willing to vouch for MIRI, that gives me a lot more confidence they’re not just a fly-by-night group trying to profit off of pseudoscience. Their research might be more impressive or less impressive, but they’re not rotten to the core the same way BlackLight was.

And though MIRI’s own researchers may be far from those lofty heights, I find Su3’s claim that they are “a few non-CS, non-math grad students” a serious underestimate.

MIRI has fourteen employees/associates with the word “research” in their name, but of those, a couple (in the words of MIRI’s team page) “focus on social and historical questions related to artificial intelligence outcomes.” These people should not be expected to have PhDs in mathematical/compsci subjects.

Of the rest, Bill is a PhD in CompSci, Patrick is a PhD in math, Nisan is a PhD in math, Benja is a PhD student in math, and Paul is a PhD student in math. The others mostly have masters or bachelors in those fields, published journal articles, and/or won prizes in mathematical competitions. Eliezer writes of some of the remaining members of his team:

Mihaly Barasz is an International Mathematical Olympiad gold medalist perfect scorer. From what I’ve seen personally, I’d guess that Paul Christiano is better than him at math. I forget what Marcello’s prodigy points were in but I think it was some sort of Computing Olympiad [editor’s note: USACO finalist and 2x honorable mention in the Putnam mathematics competition]. All should have some sort of verified performance feat far in excess of the listed educational attainment.

That pretty much leaves Eliezer Yudkowsky, who needs no introduction, and Nate Soares, whose introduction exists and is pretty interesting.

Add to that the many, many PhDs and talented people who aren’t officially employed by them but attend their workshops and help out their research when they get the chance, and you have to ask how many brilliant PhDs from some of the top universities in the world we should expect a small organization like MIRI to have. MIRI competes for the same sorts of people as Google, and offers half as much. Google paid $400 million to get Shane Legg and his people on board; MIRI’s yearly budget hovers at about $1 million. Given that they probably spend a big chunk of that on office space, setting up conferences, and other incidentals, I think the amount of talent they have right now is pretty good.

That leaves Su3’s point (B3) – the lack of published research.

One retort might be that, until recently, MIRI’s research focused on strategic planning and evaluation of AI risks. This is important, and it resulted in a lot of internal technical papers you can find on their website, but there’s not really a field for it. You can’t just publish it in the Journal Of What Would Happen If There Was An Intelligence Explosion, because no such journal. The best they can do is publish the parts of their research that connect to other fields in appropriate journals, which they sometimes did.

I feel like this also frees them from the critique of citation-incest between them and Bostrom. When I look at a typical list of MIRI paper citations, I do see a lot of Bostrom, but also some other names that keep coming up – Hutter, Yampolskiy, Goertzel. So okay, it’s an incest circle of four or five rather than two.

But to some degree that’s what I expect from academia. Right now I’m doing my own research on a psychiatric screening tool called the MDQ. There are three or four research teams in three or four institutions who are really into this and publish papers on it a lot. Occasionally someone from another part of psychiatry wanders in, but usually it’s just the subsubsubspeciality of MDQ researchers talking to each other. That’s fine. They’re our repository of specialized knowledge on this one screening tool.

You would hope the future of the human race would get a little bit more attention than one lousy psychiatric screening tool, but blah blah civilizational inadequacy, turns out not so much, they’re of about equal size. If there are only a couple of groups working on this problem, they’re going to look incestuous but that’s fine.

On the other hand, math is math, and if MIRI is trying to produce real mathematical results they ought to be sharing them with the broader mathematical community.

Robby protests that until very recently, MIRI hasn’t really been focusing on math. This is a very recent pivot. In April 2013, Luke wrote in his mini strategic plan:

We were once doing three things — research, rationality training, and the Singularity Summit. Now we’re doing one thing: research. Rationality training was spun out to a separate organization, CFAR, and the Summit was acquired by Singularity University. We still co-produce the Singularity Summit with Singularity University, but this requires limited effort on our part.

After dozens of hours of strategic planning in January–March 2013, and with input from 20+ external advisors, we’ve decided to (1) put less effort into public outreach, and to (2) shift our research priorities to Friendly AI math research.

In the full strategic plan for 2014, he repeated:

Events since MIRI’s April 2013 strategic plan have increased my confidence that we are “headed in the right direction.” During the rest of 2014 we will continue to:

– Decrease our public outreach efforts, leaving most of that work to FHI at Oxford, CSER at Cambridge, FLI at MIT, Stuart Russell at UC Berkeley, and others (e.g. James Barrat).

– Finish a few pending “strategic research” projects, then decrease our efforts on that front, again leaving most of that work to FHI, plus CSER and FLI if they hire researchers, plus some others.

– Increase our investment in our Friendly AI (FAI) technical research agenda.

– We’ve heard that as a result of…outreach success, and also because of Stuart Russell’s discussions with researchers at AI conferences, AI researchers are beginning to ask, “Okay, this looks important, but what is the technical research agenda? What could my students and I do about it?” Basically, they want to see an FAI technical agenda, and MIRI is developing that technical agenda already.

In other words, there is a recent pivot from outreach, rationality and strategic research to pure math research, and the pivot is only recently finished or still going on.

TL;DR, again in three points:

C1. Until recently, MIRI focused on outreach and did a truly excellent job on this. They deserve credit here.

C2. MIRI has a number of prestigious computer scientists and AI experts willing to endorse or affiliate with it in some way. While their own researchers are not quite at the same lofty heights, they include many people who have or are working on math or compsci PhDs.

C3. MIRI hasn’t published much math because they were previously focusing on outreach and strategic research; they’ve only shifted to math work in the past year or so.

V.

The discussion just kept going. We reached about the limit of our disagreement on (C1), the point about outreach – yes, they’ve done it, but does it count when it doesn’t bear fruit in published papers? About (C2) and the credentials of MIRI’s team, Su3 kind of blended it into the next point about published papers, saying:

Fundamental disconnect – I consider “working with MIRI” to mean “publishing results with them.” As an outside observer, I have no indication that most of these people are working with them. I’ve been to workshops and conferences with Nobel prize winning physicists, but I’ve never “worked with them” in the academic sense of having a paper with them. If [someone like Stuart Russell] is interested in helping MIRI, the best thing he could do is publish a well received technical result in a good journal with Yudkowsky. That would help get researchers to pay actual attention (and give them one well received published result, in their operating history).

Tangential aside- you overestimate the difficulty of getting top grad students to work for you. I recently got four CS grad students at a top program to help me with some contract work for a few days at the cost of some pizza and beer.

So it looks like it all comes down to the papers. Su3 had this to say:

What I was specifically thinking was “MIRI has produced a much larger volume of well-received fan fiction and blog posts than research.” That was what I intended to communicate, if somewhat snarkily. MIRI bills itself as a research institute, so I judge them on their produced research. The accountability measure of a research institute is academic citations.

Editorials by famous people have some impact with the general public, so that’s fine for fundraising, but at some point you have to get researchers interested. You can measure how much influence they have on researchers by seeing who those researchers cite and what they work on. You could have every famous cosmologist in the world writing op-eds about AI risk, but it’s worthless if AI researchers don’t pay attention, and judging by citations, they aren’t.

As a comparison for publication/citation counts, I know individual physicists who have published more peer reviewed papers since 2005 than all of MIRI has self-published to their website. My single most highly cited physics paper (and I left the field after graduate school) has more citations than everything MIRI has ever published in peer reviewed journals combined. This isn’t because I’m amazing, it’s because no one in academia is paying attention to MIRI.

[Christiano et al’s result about Lob] has been self-published on their website. It has NOT been peer reviewed. So it’s published in the sense of “you can go look at the paper.” But it’s not published in the sense of “mathematicians in the same field have verified the result.” I agree this one result looks interesting, but most mathematicians won’t pay attention to it unless they get it reviewed (or at the bare minimum, clean it up and put it on Arxiv). They have lots of these self-published documents on their web page.

If they are making a “strategic decision” to not submit their self-published findings to peer review, they are making a terrible strategic decision, and they aren’t going to get most academics to pay attention that way. The result of Christiano, et al. is potentially interesting, but it’s languishing as a rough unpublished draft on the MIRI site, so it’s not picking up citations.

I’d go further and say the lack of citations is my main point. Citations are the important measurement of “are researchers paying attention.” If everything self-published to MIRI’s website were sparking interest in academia, citations would be flying around, even if the papers weren’t peer reviewed, and I’d say “yeah, these guys are producing important stuff.”

My subpoint might be that MIRI doesn’t even seem to be trying to get citations/develop academic interest, as measured by how little effort seems to be put into publication.

And Su3’s not buying the pivot explanation either:

That seems to be a reframing of the past history though. I saw talks by the SIAI well before 2013 where they described their primary purpose as friendly AI research, and insisted they were in a unique position (due to being uniquely brilliant/rational) to develop technical friendly AI (as compared to academic AI researchers).

[Tarn] and [Robby] have suggested the organization is undergoing a pivot, but they’ve always billed themselves as a research institute. But donating money to an organization that has been ineffective in the past, because it looks like they might be changing seems like a bad proposition.

My initial impression (reading Muelhauser’s post you linked to and a few others) is that Muelhauser noticed the house was out of order when he became director and is working to fix things. Maybe he’ll succeed and in the future, then, I’ll be able to judge MIRI as effective- certainly a disproportionate number of their successes have come in the last few years. However, right now all I have is their past history, which has been very unproductive.

VI.

After that, discussion stayed focused on the issue of citations. This seemed like progress to me. Not only had we gotten it down to a core objection, but it was sort of a factual problem. It wasn’t an issue of praising or condemning. Here’s an organization with a lot of smart people. We know they work very hard – no one’s ever called Luke a slacker, and another MIRI staffer (who will not be named, for his own protection) achieved some level of infamy for mixing together a bunch of the strongest chemicals from my nootropics survey into little pills which he kept on his desk in the MIRI offices for anyone who wanted to work twenty hours straight and then probably die young of conditions previously unknown to science. IQ-point*hours is a weird metric, but MIRI is putting a lot of IQ-point*hours into whatever it’s doing. So if Su3’s right that there are missing citations, where are they?

Among the three of us, Robby and Tarn and I generated a couple of hypotheses (well, Robby’s were more like facts than hypotheses, since he’s the only one in this conversation who actually works there).

D1: MIRI has always been doing research, but until now it’s been strategic research (ie “How worried should we be about AI?”, “How far in the future should we expect AI to be developed?”) which hasn’t fit neatly into an academic field or been of much interest to anyone except MIRI allies like Bostrom. They have dutifully published this in the few journals that are interested, and it has dutifully been cited by the few people who are interested (ie Bostrom). It’s unreasonable to expect Stuart Russell to cite their estimates of time course for superintelligence when he’s writing his papers on technical details of machine learning algorithms or whatever it is he writes papers on. And we can generalize from Stuart Russell to the rest of the AI field, who are also writing on things like technical details of machine learning algorithms that can’t plausibly be connected to when machines will become superintelligent.

D2: As above, but continuing to apply even in some of their math-ier research. MIRI does have lots of internal technical papers on their website. People tend to cite other researchers working in the same field as themselves. I could write the best psychiatry paper in human history, and I’m probably not going to get any citations from astrophysicists. But “machine ethics” is an entirely new field that’s not super relevant to anyone else’s work. Although a couple key machine ethics problems, like the Lobian obstacle and decision theory, touch on bigger and better-populated subfields of mathematics, they’re always going to be outsiders who happen to wander in. It’s unfair to compare them to a physics grad student writing about quarks or something, because she has the benefit of decades of previous work on quarks and a large and very interested research community. MIRI’s first job is to create that field and community, which until they succeed looks a lot like “outreach”.

D3: Lack of staffing and constant distraction by other important problems. This is Robby’s description of what he notices from the inside. He writes:

We’re short on staff, especially since Louie left. Lots of people are willing to volunteer for MIRI, but it’s hard to find the right people to recruit for the long haul. Most relevantly, we have two new researchers (Nate and Benja), but we’d love a full-time Science Writer to specialize in taking our researchers’ results and turning them into publishable papers. Then we don’t have to split as much researcher time between cutting-edge work and explaining/writing-down.

A lot of the best people who are willing to help us are very busy. I’m mainly thinking of Paul Christiano. He’s working actively on creating a publishable version of the probabilistic Tarski stuff, but it’s a really big endeavor. Eliezer is by far our best FAI researcher, and he’s very slow at writing formal, technical stuff. He’s generally low-stamina and lacks experience in writing in academic style / optimizing for publishability, though I believe we’ve been having a math professor tutor him to get over that particular hump. Nate and Benja are new, and it will take time to train them and get them publishing their own stuff. At the moment, Nate/Benja/Eliezer are spending the rest of 2014 working on material for the FLI AI conference, and on introductory FAI material to send to Stuart Russell and other bigwigs.

D4: Some of the old New York rationalist group takes a more combative approach. I’m not sure I can summarize their argument well enough to do it justice, so I would suggest reading Alyssa’s post on her own blog.

But if I have to take a stab: everyone knows mainstream academia is way too focused on the “publish or perish” ethic of measuring productivity in papers or citations rather than real progress. Yeah, a similar-sized research institute in physics could probably get ten times more papers/citations than MIRI. That’s because they’re optimizing for papers/citations rather than advancing the field, and Goodhart’s Law is in effect here as much as everywhere else. Those other institutes probably got geniuses who should be discovering the cure for cancer spending half their time typing, formatting, submitting, resubmitting, writing whatever the editors want to see, et cetera. MIRI is blessed with enough outside support that it doesn’t have to do that. The only reason to try is to get prestige and attention, and anyone who’s not paying attention now is more likely to be a constitutional skeptic using lack of citations as an excuse, than a person who would genuinely change their mind if there were more citations.

I am more sympathetic than usual to this argument because I’m in the middle of my own research on psychiatric screening tools and quickly learning that official, published research is the worst thing in the world. I could do my study in about two hours if the only work involved were doing the study; instead it’s week after week of forms, IRB submissions, IRB revisions, required online courses where I learn the Nazis did unethical research and this was bad so I should try not to be a Nazi, selecting exactly which journals I’m aiming for, and figuring out which of my bosses and co-workers academic politics requires me to make co-authors. It is a crappy game, and if you’ve been blessed with enough independence to avoid playing it, why wouldn’t you take advantage? Forget the overhyped and tortured “measure” of progress you use to impress other people, and just make the progress.

VII.

Or not. I’ll let Su3 have the last word:

I think something fundamental about my argument has been missed, perhaps I’ve communicated it poorly.

It seems like you think the argument is that increasing publications increases prestige/status which would make researchers pay attention. i.e. publications -> citations -> prestige -> people pay attention. This is not my argument.

My argument is essentially that the way to judge if MIRI’s outreach has been successful is through citations, not through famous people name dropping them, or allowing them to be figure heads.

This is because I believe the goal of outreach is to get AI researchers focused on MIRI’s ideas. Op eds from famous people are useful only if they get AI researchers focused on these ideas. Citations aren’t about prestige in this case: citations tell you which researchers are paying attention to you. The number of active researchers paying attention to MIRI is very small. We know this because citations are an easy to find, direct measure.

Not all important papers have tremendous numbers of citations, but a paper can’t become important if it only has 1 or 2, because the ultimate measure of importance is “are people using these ideas?”

So again, to reiterate, if the goal of outreach is to get active AI researchers paying attention, then the direct measure for who is paying attention is citations. [But] the citation count on MIRI’s work is very low. Not only is the citation count low (i.e. no researchers are paying attention), MIRI doesn’t seem to be trying to boost it – it isn’t trying to publish which would help get its ideas attention. I’m not necessarily dismissive of celebrity endorsements or popular books, my point is why should I measure the means when I can directly measure the ends?

The same idea undercuts your point that “lots of impressive PhD students work and have worked with MIRI,” because it’s impossible to tell if you don’t personally know the researchers. This is because they don’t create much output while at MIRI, and they don’t seem to be citing MIRI in their work outside of MIRI.

[Even people within the rationalist/EA community] agree with me somewhat. Here is a relevant quote from Holden Karnofsky [of GiveWell]:

SI seeks to build FAI and/or to develop and promote “Friendliness theory” that can be useful to others in building FAI. Yet it seems that most of its time goes to activities other than developing AI or theory. Its per-person output in terms of publications seems low. Its core staff seem more focused on Less Wrong posts, “rationality training” and other activities that don’t seem connected to the core goals; Eliezer Yudkowsky, in particular, appears (from the strategic plan) to be focused on writing books for popular consumption. These activities seem neither to be advancing the state of FAI-related theory nor to be engaging the sort of people most likely to be crucial for building AGI.

And here is a statement from Paul Christiano disagreeing with MIRI’s core ideas:

But I should clarify that many of MIRI’s activities are motivated by views with which I disagree strongly and that I should categorically not be read as endorsing the views associated with MIRI in general or of Eliezer in particular. For example, I think it is very unlikely that there will be rapid, discontinuous, and unanticipated developments in AI that catapult it to superhuman levels, and I don’t think that MIRI is substantially better prepared to address potential technical difficulties than the mainstream AI researchers of the future.

This time Su3 helpfully provides their own summary:

E1. If the goal of outreach is to get active AI researchers paying attention, then the direct measure for who is paying attention is citations. [But] the citation count on MIRI’s work is very low.

E2. Not only is the citation count low (i.e. no researchers are paying attention), MIRI doesn’t seem to be trying to boost it – it isn’t trying to publish which would help get its ideas attention. I’m not necessarily dismissive of celebrity endorsements or popular books, my point is why should I measure the means when I can directly measure the ends?

E3. The same idea undercuts your point that “lots of impressive PhD students work and have worked with MIRI,” because it’s impossible to tell if you don’t personally know the researchers. This is because they don’t create much output while at MIRI, and they don’t seem to be citing MIRI in their work outside of MIRI.

E4. Holden Karnofsky and Paul Christiano do not believe that MIRI is better prepared to address the friendly AI problem than mainstream AI researchers of the future. Karnofsky explicitly for some of the reasons I have brought up, Christiano for reasons unmentioned.

VIII.

Didn’t actually read all that and just skipped down to the last subheading to see if there’s going to be a summary and conclusion and maybe some pictures? Good.

There seems to be some agreement MIRI has done a good job bringing issues of AI risk into the public eye and getting them media attention and the attention of various public intellectuals. There is disagreement over whether they should be credited for their success in this area, or whether this is a first step they failed to follow up on.

There also seems to be some agreement MIRI has done a poor job getting published and cited results in journals. There is disagreement over whether this is an understandable consequence of being a small organization in a new field that wasn’t even focusing on this until recently, or whether it represents a failure at exactly the sort of task by which their success should be judged.

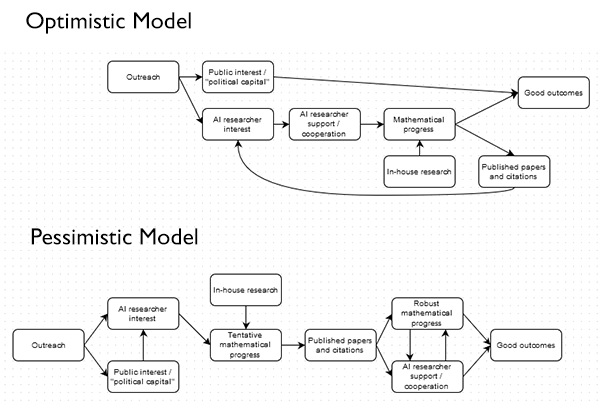

This is probably among the 100% of issues that could be improved with flowcharts:

In the Optimistic Model, MIRI’s successfully built up Public Interest, and for all we know they might have Mathematical Progress as well even though they haven’t published it in journals yet. While they could feed back their advantages by turning their progress into Published Papers and Citations to get even more Mathematical Progress, overall they’re in pretty good shape for producing Good Outcomes, at least insofar as this is possible in their chosen field.

In the Pessimistic Model, MIRI may or may not have garnered Public Interest, Researcher Interest, and Tentative Mathematical Progress, but they failed to turn that into Published Papers and Citations, which is the only way they’re going to get to Robust Mathematical Progress, Researcher Support, and eventually Good Outcomes. The best that can be said about them is that they set some very preliminary groundwork that they totally failed to follow up on.

A higher level point – if we accept the Pessimistic Model, do we accuse MIRI of being hopelessly incompetent, in which case they deserve less support? Or do we accept them as inexperienced amateurs who are the only people willing to try something difficult but necessary, in which case they deserve more support, and maybe some guidance, and perhaps some gentle or not-so-gentle prodding? Maybe if you’re a qualified science writer you could apply for the job opening they’re advertising and help them get those papers they need?

An even higher-level point – what do people worried about AI risk do with this information? I don’t see much that changes my opinion of the organization one way or the other. But Robby points out that people who are more concerned – but still worried about AI risk – have other good options. The Future of Humanity Institute at Oxford does research that is less technical and more philosophical, wears their strategic planning emphasis openly on their sleeve, and has oodles of papers and citations and prestige. They also accept donations.

Best of all, their founder doesn’t write any fanfic at all. Just perfectly respectable stories about evil dragon kings.

Incidentally, MIRI’s site says “As featured in (prestigious journals)” but doesn’t give links to these. I tried searching Business Week and got nothing (Forbes and the Independent have old articles referencing SIAI President Michael Vassar). Does anyone have those links? And does anyone know why MIRI doesn’t link to the articles?

http://www.independent.co.uk/news/science/revenge-of-the-nerds-should-we-listen-to-futurists-or-are-they-leading-us-towards-lsquonerdocalypsersquo-2073910.html is an example. I feel describing this as “MIRI is featured in the Independent” without linking to the article is misleading at best. Maybe there’s another article I’m not aware of.

Stupid question time:

“This is probably among the 100% of issues that could be improved with flowcharts” – what does this mean? I mean, what does this add to “This is probably an issue that could be improved with a flowchart”? Is it saying that any issue could be improved with a flowchart?

Several years ago I read the opinion that the hardest thing for a group of smart people to do is actually do work. A group of smart people will spend lots of time talking about really interesting and challenging topics and will learn a lot from each other but they’ll probably not get much actual work done.

Someone else in the comments suggested MIRI hire a Research Director, and I second that suggestion. Guiding the overall direction of research is a secondary job for a Research Director. Their primary job is simply getting researchers to produce discrete output.

I think it’s unfair to hold MIRI to normal standards of scholarly productivity. Most mathematicians choose topics (at least largely or at least partially) based on where they think they can get good results, not the topics they think are the most important. Unless you have tenure you cannot survive a dry period, and even if you have tenure a dry period will sink your status among your peers (and humans do not enjoy this very much). If you do not need to get rigorous results you can almost always make some progress by doing more simulations or doing better statistics, making assumptions etc. But if you need to actually prove something there might be no clear way forward.

Let me talk about something many SSC readers know about: the study of algorithms in CS. People have proved a huge number of upper bounds for the number of operations. This is useful, but what is also useful is to compute the actual average case running time (over some set of cases). People get results that say quicksort is on average ~ C*n*log(n), but I have never seen anyone (except Knuth in AoCP) make progress on actually finding the C. Algorithm analysis also frequently just ignores memory issues even though they are pretty fundamental. Despite it being well known from tests that quicksort runs significantly faster than mergesort on most machines (at least by a factor of 2 but often more), I don’t think anyone has been able to show this except by running the algorithms and seeing which was faster. People would love to prove results about which algorithms are faster on average when both are asymptotically of the same order. But no one seems to know a good way of doing this. If you want to respond that this depends on the hardware, then the problem just shifts to the fact that rigorous results in CS almost never actually abstract hardware differences.

Another good example is Gaussian elimination with partial pivoting. After decades of use the method has proven to give accurate solutions to Ax = b. However, the method can totally fail for certain matrices. People have tried for years to show that the set of matrices where Gaussian elimination with partial pivoting fails is small, but no one has done this (for any formalization of the problem and any reasonable definition of small). Examples like this abound in all fields of math, but I assumed the readership of this blog was semi-likely to have heard of sorting algorithms and maybe taken linear algebra.
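To make the failure mode concrete, here is a minimal sketch (not from the comment itself) using the classic worst-case matrix usually attributed to Wilkinson: ones on the diagonal, minus ones below it, and ones in the last column. On this matrix, partial pivoting never swaps a row and the last column doubles at every elimination step. The function names `wilkinson_matrix` and `growth_factor` are illustrative choices, and the growth factor is taken here as the largest entry seen during elimination divided by the largest entry of the input.

```python
def wilkinson_matrix(n):
    """Build the n x n worst-case matrix: 1 on the diagonal,
    -1 below the diagonal, 1 in the last column, 0 elsewhere."""
    a = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            if j == n - 1 or i == j:
                a[i][j] = 1.0
            elif i > j:
                a[i][j] = -1.0
    return a


def growth_factor(a):
    """Run Gaussian elimination with partial pivoting on a copy of `a`
    and return (largest |entry| seen during elimination) / (largest |entry| of a)."""
    n = len(a)
    u = [row[:] for row in a]
    max_a = max(abs(x) for row in u for x in row)
    max_seen = max_a
    for k in range(n - 1):
        # Partial pivoting: pick the row with the largest entry in column k.
        p = max(range(k, n), key=lambda i: abs(u[i][k]))
        if u[p][k] == 0.0:
            continue  # column already eliminated
        u[k], u[p] = u[p], u[k]
        for i in range(k + 1, n):
            m = u[i][k] / u[k][k]
            for j in range(k, n):
                u[i][j] -= m * u[k][j]
        max_seen = max(max_seen, max(abs(x) for row in u for x in row))
    return max_seen / max_a


if __name__ == "__main__":
    for n in (5, 10, 20):
        print(n, growth_factor(wilkinson_matrix(n)))
```

The growth on this family is exponential in n, which is why a handful of matrices can wreck accuracy even though the method behaves well on the inputs people meet in practice.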

Progress can be very difficult if you don’t get to pick what to work on.

I agree with the gist of the comment, but the average analysis of quicksort and lots of nice results on why it is better in practice do exist. Check out Sedgewick’s book, An Introduction to the Analysis of Algorithms, especially Chapter 1 and the references therein.
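As a rough illustration of the kind of result being referred to, the sketch below computes the exact expected number of key comparisons for a textbook quicksort on n distinct keys in random order. It assumes a simplified cost model (n - 1 comparisons per partitioning step, uniformly random pivot rank), which differs slightly from the convention in some textbooks; the function names are illustrative. Under that model the recurrence C_n = (n - 1) + (2/n) * (C_0 + ... + C_{n-1}) has the closed form 2(n + 1)H_n - 4n, where H_n is the n-th harmonic number, so the leading constant in front of n*log2(n) is 2*ln(2), about 1.39.

```python
from fractions import Fraction
from math import log, log2


def avg_comparisons(n_max):
    """Exact expected comparisons C_0..C_{n_max} from the recurrence
    C_n = (n - 1) + (2/n) * sum(C_k for k < n), with C_0 = 0."""
    c = [Fraction(0)]
    running_sum = Fraction(0)  # holds C_0 + ... + C_{n-1}
    for n in range(1, n_max + 1):
        running_sum += c[n - 1]
        c.append(Fraction(n - 1) + Fraction(2, n) * running_sum)
    return c


def closed_form(n):
    """Closed form 2(n + 1)H_n - 4n for the same cost model."""
    h = sum(Fraction(1, k) for k in range(1, n + 1))
    return 2 * (n + 1) * h - 4 * n


def leading_ratio(n):
    """C_n / (n * log2(n)); tends to 2*ln(2) as n grows, but slowly."""
    return float(closed_form(n)) / (n * log2(n))


if __name__ == "__main__":
    print("limit constant 2*ln(2) =", 2 * log(2))
    for n in (10, 1000, 100000):
        print(n, leading_ratio(n))
```

The ratio approaches the limit slowly because of the linear lower-order term, which is part of why asymptotic constants alone say little about wall-clock speed.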

Thanks I will take a look!

Ah, the Medawar Zone! This is actually a reasonable argument.

However, if progress in this field is so unlikely, do people actually improve the world by donating, or do they only waste their own money and years of someone else’s life?

All depends what you think the consequences of failure are, doesn’t it? If no progress -> we all die, then we just have to solve it, period, no matter the cost. Otherwise, well… not.

Actually, from this angle, it seems like popularizing the superintelligence / friendliness problem (along with the many ideas and arguments required to support it) might be a better use of resources. With enough people interested, perhaps someone will stumble upon a productive approach.

Please let’s not conflate the effective altruism movement with MIRI. If you live in California you might know a lot of people who belong to both camps, but elsewhere they’re not so connected and most EA types are interested in Peter Singer-style poverty and animal causes. (Which may have their problems, but that’s a different conversation.)

The first problem is that while we have effective and well meaning altruists, we have passive allies and passive (in)effective altruists.

No one in this community is willing to be aggressive enough to actually do something relevant. This is why I changed my mind about Anissimov, because at least he’s not a coward and will just “Put it down” and get it over with.

When someone who does research with you says something like this that negatively affects credibility without giving any reasons, then he should “just say it” no matter if it hurts a few feelings or not, and everyone should get ready to fight a little. The point is *not* about FOOM, the point is about why he said the latter part.

Every mode of organization is not a tea party. Our camp wonders why people love Heartiste so much when we just won’t stop holding hands. One day I will write a rant about how the platonic “effective altruists” we have are not really so, but passive altruists who should learn to run a campaign for once. But what we have is such an utter improvement that I cannot bring myself to do so.

This is why Taleb is great because he’s not fucking around and will just start killing people left and right. I see nothing wrong with Eliezer. I see plenty wrong with the camp of so called “supporters” who can’t do shit.

I find this comment very hard to parse. Can you clarify what you mean? I feel like I’ve wandered into your head and been hit with a stream of thought that only makes sense in the context of previous thoughts I wasn’t privy to.

The supporters in our vague network cluster are too passive to defend the side they ‘should’ (according to them) be on. If someone like Christiano says that MIRI is not more capable, he should be pushed to say what he wants to.

This is up to my observation that all so called ‘effective altruists’ and almost all ‘intellectuals’ have a barrier to their effectiveness roughly corresponding to their horribly passive personality. LW won’t stop getting killed by journalists/vague detractors if everyone doesn’t step up the fight inside them. I sometimes think an alternate movement that does not prize passivity and cowardice as the main virtue will be better suited for the future.

You say “passive” I say “literally the best point of our community.”

We don’t kick people out for believing differently if they are neither stupid nor assholes. Niceness, community, and civilization!

False. We do not have to kick people out. We just stop having to get bullied/passive cowards.

An important feature of all passive people is that they generate sophisticated obscurant obfuscation that is almost always recursive to “passive” “coward” “afraid to take personal risk” “not willing to go out on a limb”. We have people running arguments about science fiction, basilisks and stuff, and journalists writing about forum drama on Less Wrong that has nothing to do with anything. Why don’t we give them the fight they’re looking for? Why don’t we write all our posts using their first and last names and try to embarrass them for things they legitimately do until they at least “fight fair”?

Does that mean we might actually stand a fighting chance? Will passive altruists ever be effective and not merely pay for it? Nothing that was a part of my post meant “Kicking people out”, but when this great community is largely bullied successfully and we have roughly 200+ people idling on IRC, what does that say?

Why can’t anyone do anything? Is it because all the original population of people are nerds, soft individuals, and just too gentle? I am utterly gentle. However it might just happen that everyone might have to “learn to play”. Why can people join A) gangs B) scientology cults C) organizations that blow themselves up for false causes, but “Rationalists” can’t do shit to get anyone dedicated/motivated?

as a reference point see:

http://www.overcomingbias.com/2014/09/do-economists-care.html

&

http://www.overcomingbias.com/2014/09/tarot-counselors.html

“Do economists care?” Does any one care?

Can we get atheists/rationalists/NRx/passive altruists/economists to do some thing that might be at a superficial level not “rationality” such as aggressiveness, tarot card counseling etc for a larger good? Are we all doomed to be impotent? Why won’t intellectuals ever succeed?

@SanguineEmpricist: I am confused by your response.

Other than the stupid potshot at fan fiction, this tumblr criticism doesn’t seem to be “bullying” in the way you suggest. Nothing was brought up about the quirks of the rationalist community.

If anything, the fact that critics are judging MIRI as a research institute means that MIRI is winning the credibility war.

The original post is all about kicking people out for believing differently. Specifically, kicking MIRI supporters out of the EA club.

@Anon (I hate the depth limit of replies)

I think a steelman would be something like “donations to MIRI don’t constitute effective altruism, because MIRI isn’t effective because… ”

This sort of criticism seems like the sort of criticism MIRI should want, because it’s implicitly taking MIRI seriously as a charity, unlike the idiotic journalism about the basilisk.

One should defend one’s community from aggression but one should also distinguish between attack and critique. Given the substantive nature of this comment thread, I think this is the territory of the latter.

Will, if you want better arguments, perhaps “steelmanning” can produce them. If you want a better community, “steelmanning” it is just a fantasy. Maybe that fantasy will help you design a future community, but the question is does this community today kick people out? Descending into a fantasy world does not help answer that question.

Not sure I understand you correctly, but I’ll try to reply anyway (and thus perhaps join you on a meta-level of overcoming passivity / cowardice).

I am also often frustrated by passivity of smart people. Yes, I understand their arguments. Idiots are often the first to start screaming and kicking, and then they usually do more harm than good, and of course ruin their image in the eyes of the smarter folk. Therefore, we should try to be silent, careful, talk a lot, but avoid even using too strong or too active words while talking… well, I’m not literally saying we should do nothing, oh no — saying that would be too definite, which is exactly what I want to avoid — but perhaps we should approximate doing nothing, wait some more time, play impartial observers, etc., until we become old and die. Then perhaps someone will remember us as those smart guys who were right, and did nothing wrong (because they pretty much did nothing at all). That’s what smart folks do, right?

Yeah: Reversing stupidity is not intelligence. But not-reversing stupidity ain’t not-not-intelligence either. Being active is good, but only if it is being active in the right way. However, maybe for a human psychologically it is easier to switch from a wrong way to a “less wrong” way, than from inaction to action. At least, the wrong way gives you some data and experience you can later reuse.

When we admire people, we should realize (in “near mode”) that we admire them because they did something. And that we are invited to do our own work, too.

On the other hand, there are people around LW doing awesome things. (Unrelated to MIRI research, but the Solstice celebration comes to mind.) Maybe we should expose these people and their actions more, as role models. So that when people think about LW, they (also) think about them, not only about just another web debate site. And maybe we could also once in a time have a “What are you doing to increase the sanity waterline?” thread. Actually… in the spirit of the debate, I am just going to create one right now. (Done.)

Is it actually your intuition as a medical professional that the nootropics stack is dangerous?

I only take modafinil but I am also very concerned. I thought most nootropics were safe, with the exception of most amphetamine-based stimulants (including Adderall etc.). I only take modafinil and have read some research on it, leading me to believe it is reasonably OK to use long term (there is a good amount of research, as modafinil is prescribed for narcolepsy).

However many friends of mine take many forms of nootropics. And even in cases where I have looked at the research I trust Scott. LW is full of nootropics users. I imagine if Scott knew anything we needed to hear about nootropics safety he would have posted it somewhere.

But if he is considering such a post I would be deeply interested.

edit: To be clear by “safe” I don’t mean 100% free of side effects. Safe is not well defined but I mean the long term risks are either tolerable or very uncommon.

For what it’s worth, I suspect that Scott was being flippant, exaggerating for effect the uncertainty involved in taking little-studied substances. It’s also possible that the stack in question had components much dicier than regular ol modafinil.

I think it’s part of a running joke where the Responsible Doctor part of Scott has to say experimenting with random pills might result in an array of “exotic cancers”. Throwing the giant nootropics stack down your mouth on a daily basis probably isn’t /great/ for your liver, but the available information suggests that it’s not worse than aspirin. *finil specifically seems to still have Gwern’s research.

((And in fairness, some drugs actually are like that, including a fun set where we see deadly and exotic cancers popping up only in post-marketing.))

I feel wary about criticizing MIRI given that from what I can tell, the organization consists of highly intelligent people who are extremely passionate about their goals and austere about ensuring that they behave maximally rationally. I sort of feel like anything that some random person on Tumblr can come up with as a criticism, the people of MIRI have most likely already considered. I’m sure that there are flaws in the organization, but I don’t expect someone not highly involved in the field and/or privy to the inner workings of MIRI to actually be able to pick them out.

s/MIRI/Society of Jesus

IDK I would also predict that the Society of Jesus has already considered every criticism some random person on Tumblr could come up with.

Considered, yes, properly addressed, no, otherwise they would have a pretty strong case for theism.

This is supposed to be criticism? The Jesuits are really good at what they do.

Except for the part about believing in a most likely false religion.

If you’ve got a general way of debugging religious thinking that doesn’t break anything more important, I’d like to hear it.

The absurdity heuristic isn’t good enough; that’s how you get (e.g.) creationists talking about the religion of evolution.

Occam’s razor.

…is a heuristic, not a reliable principle, and any halfway intelligent theist can give you a half-dozen responses to it.

Nornagest, here’s my tip from personal experience. It’s not an argument, but a collection of persuasion tactics and framing devices that improve receptivity to argument.

I realized that someone who believed in God wouldn’t be afraid of evaluating the evidence impartially. Every time I refused to be impartial, I told myself that meant I didn’t really believe in God.

I also would ask myself, “if I were talking to a Muslim and they made such an argument, would I count it as valid proof?” This is what allowed me to get rid of ideas like justification by faith. I believed justification by faith existed, but also believed that relying on it shouldn’t be necessary.

My approach could probably be taken further. Even giving atheist ideas the benefit of the doubt occasionally or often shouldn’t lead someone to the wrong conclusion, if there are strong reasons to believe in God they should overcome such mistakes.

The science debate was muddled and confusing to me at the time. I wouldn’t recommend trying to convince someone with it. At least for me, I had to lose almost all of my religion before I could understand that the natural world was truly made up of nothing but patterns.

So I focused on the ethical debate, and realized that the moral ideas in the Bible were usually justified only if you believed God was inherently good by definition. I thought it was possible he could be inherently good, but forced myself to admit that the balance of probability seemed to be against him. Asking myself questions such as “what do I mean by good” was helpful to me, since it revealed to me that divine-command morality was unworkable.

Even that wasn’t enough to tip me over the edge. But it was enough to get me interested in learning some biology and geology. Then I realized that even if some scientific explanations were partial or had slight flaws they still did better than I’d expect them to if God actually existed. Much better than the Bible did.

At that point, I decided that because it would be bad for Muslims to believe in their false religion, it would also be bad for me to believe in mine. This wasn’t the actual reasoning I used, of course, but it was the motivation deep underneath it.

This isn’t the kind of pattern that can be imposed onto someone else. But if they will hold themselves to it honestly, they have a good chance of saving themselves from false faith. So based on personal experience, I’d say turning bad belief against itself is the best way to help religious people find truth. Using phrases like “bad belief” and “false faith” is likely to help. The idea isn’t that belief itself is bad, it’s that beliefs are very important so we need to be sure to choose the right ones.

Someone might claim that it’s perfectly fine if believers fear evidence. But most people who make that kind of claim are fibbing, and if you gently say you don’t believe they really think that they will likely be left begrudgingly impressed and slightly persuaded. I don’t think anyone really believes this, personally, having lived with Fundamentalists for many years.

For other tools, there’s a useful post on LessWrong about how justification by faith is a modern idea, and in the Bible people try to prove things by appealing to eyewitnesses. Eliezer’s retelling of Sagan’s invisible dragon was also good. The Conservation of Expected Evidence isn’t something I used myself, but it would probably be helpful for many.

@Nornagest

Name one case where it unequivocally fails.

I’ve never heard a convincing one.

In fact, William of Ockham, the intelligent theist who the principle is named after, had to specifically include an exception for religious dogma in the original formulation: “Nothing ought to be posited without a reason given, unless it is self-evident or known by experience or proved by the authority of Sacred Scripture.”

There are plenty of places in science where algorithmically simpler theories have been superseded by more complicated ones after the former have been shown not to explain all the facts. Circular vs. elliptical orbits of the planets, for example.

One might object that Occam’s razor shouldn’t apply to future discoveries but rather to models of existing data, but that throws out any predictive value it may have had; it essentially becomes a statement about what’s easiest to work with, which makes it nearly tautological.

Which is consistent with Occam’s razor: you have to pick the simplest theory that explains the observations, not anything simpler.

“Simplest” doesn’t even have a simple definition. “God says so” is about the simplest explanation there is, and it explains everything from physics to metaphysics to ethics to “why?”.

““Simplest” doesn’t even have a simple definition. “God says so” is about the simplest explanation there is, and it explains everything from physics to metaphysics to ethics to “why?”.”

Running joke or not, “have you read the sequences” is often adequate:

http://lesswrong.com/lw/jp/occams_razor/

The problem with Ockham’s Razor is that in its general form it is true insofar as it’s useless. “Don’t make your theory more complex if you don’t need to” is generally good advice, but specifically because all the difficulty is in figuring out how difficult you need to make it.

Theism becomes less and less plausible the better the alternative explanation is. Which is another way of saying that we found a way of making our theory simpler without losing anything. There’s a reason Kolmogorov complexity isn’t actually computable.

So yeah, as stated Ockham’s Razor never “fails,” because the rule includes “unless this doesn’t work, then do something else” as part of its text.

@Jadagul

I think this is a good point.

Still, I think that humans tend to overdetect agency not necessarily because it is the most rational explanation to think of with bounded resources, but because erring on the side of overdetection was useful in the adaptation environment.

@Cauê,

“Read the sequences” can be valid when there’s a link to a specific page, instead of vague handwaving at several novels’ worth of mostly unrelated material. In that vein, your comment was indeed helpful, and I both found the link interesting and deserved it for being snide, but… (you knew there had to be a “but”)

LessWrongers tend to treat The Sequences as scripture; I don’t think they realize the extent to which people can disagree with them. I find the dissenting comments there have the better of it; Vikings weren’t wrong about lightning because Thor is complex, they were just wrong because it happened not to work that way. Defining Occam’s Razor in terms of Turing machines not only makes the Razor inaccessible to Occam and most of the scientific patriarchs, it kills it as a heuristic. Occam’s Razor is, after all, only a rough rule of thumb which has been wrong many times, and once you remove the ability to compute a heuristic quickly, it’s no longer even useful as a rule of thumb. (Does this mean that anything non-computable also fails Occam?)

The rule of thumb thing matters a lot, especially since I usually see it deployed in the manner of the OP; an atheist wants to show there is no God, and it all ends up hinging on the Razor. I have seen atheists passionately argue that “infinite universes in infinite combinations” are less complex than “infinite God,” therefore No God. Which of these is really simpler seems to boil down to very motivated reasoning, and I think both would fail the test given, since both involve infinite computer programs (assuming God is computable at all). And in the end, it doesn’t even matter; Occam’s Razor is still just a quicker way of guessing a little better, not solid evidence.

To be fair, you can generate arbitrarily varied structure with a finite set of generation rules, and Kolmogorov rules state that you go by the complexity of the generating program rather than of the output. You need an infinity somewhere to get infinitely varied output, but that can be in parameters like run time or memory, not necessarily the instruction set.
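To make the point about finite rules concrete, here is a minimal sketch in Python. It assumes the Rule 30 cellular automaton as the "generating program"; that choice and the function name `rule30_centre_column` are illustrative and not something anyone in the thread proposed. The rule itself is one fixed line, while the length of the irregular-looking output is controlled only by the `steps` parameter, which is where the run time and memory grow.

```python
def rule30_centre_column(steps: int) -> str:
    """Return the first `steps` bits of the centre column of Rule 30.

    The update rule is fixed and tiny; only `steps` (run time) and the
    derived grid width (memory) grow with the amount of output requested.
    """
    # Wide enough that wrap-around at the edges never reaches the centre.
    width = 2 * steps + 1
    cells = [0] * width
    cells[steps] = 1  # start from a single live cell in the middle

    bits = []
    for _ in range(steps):
        bits.append(str(cells[steps]))
        # Rule 30: new cell = left XOR (centre OR right)
        cells = [
            cells[(i - 1) % width] ^ (cells[i] | cells[(i + 1) % width])
            for i in range(width)
        ]
    return "".join(bits)


if __name__ == "__main__":
    # Same program text, arbitrarily long output: only the parameter changes.
    for n in (8, 64, 512):
        print(n, rule30_centre_column(n)[:64])
```

Under a Kolmogorov-style measure, what counts is the length of this function plus the bits needed to write down `steps`, not the length of the string it emits. Nothing here computes Kolmogorov complexity itself, which is not computable.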

By my lights this applies to gods as well as to physics, though, with the caveat that we know a lot about physics and gods tend to be seen as famously ineffable.

The Vikings were wrong about thunder because it happened not to work that way, but you could have predicted that in advance of gathering the evidence, from the degree to which their explanation involved totally made-up details.

You can generate infinite sequences with infinite variation with a finite program for sequences with certain structures. This may or may not apply to all possible universes, of course.

By the same token, a program describing a whole bunch of people isn’t really much simpler than a program which instantiates one additional person with all the same basic personality subroutines plus an ability to cast Lightning Bolt. I don’t really see much point in going into the finer details of holes in Norse mythology, given that our sources aren’t even that close to the originals.

(Parting thought: what of Divine Simplicity? Does Occam compel us to accept this? I feel like this is another variant of the ontological argument.)

“God says so” explains everything, thus it explains nothing. It is unfalsifiable: it does not decrease the cross-entropy of your predictions. Therefore, it is not a useful epistemic belief.
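A small sketch of what "does not decrease the cross-entropy" means, in Python. The weather outcomes, the two hypotheses, and the function name `cross_entropy_bits` are all made up for illustration. A belief that is compatible with every outcome can be modelled as spreading probability evenly, and it then scores no better than knowing nothing.

```python
import math


def cross_entropy_bits(outcomes, predicted):
    """Average surprise, in bits, of `predicted` probabilities on `outcomes`."""
    return -sum(math.log2(predicted[o]) for o in outcomes) / len(outcomes)


# Hypothetical observations, purely for illustration.
observed = ["rain", "rain", "sun", "rain"]

# "Explains everything": equally happy with any outcome.
explains_everything = {"rain": 0.5, "sun": 0.5}

# A hypothesis that sticks its neck out and could have been wrong.
commits_to_rain = {"rain": 0.75, "sun": 0.25}

if __name__ == "__main__":
    print(cross_entropy_bits(observed, explains_everything))
    print(cross_entropy_bits(observed, commits_to_rain))
```

The second hypothesis earns its lower score only because it would have been penalised had the weather turned out differently. The first takes no such risk and so gains nothing.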

I think smart, passionate rationalists can fall victim to inside-view thinking, same as any other smart, passionate group. Refusing to look at outside-view criticism on the basis of “it’s not inside-view” seems like a dangerous failure mode.

I generally agree with su3su2u1 and I’ve made similar criticism in the past.

I just want to add a point that seems not to have been raised in this discussion so far: doing outreach through public media and endorsements by famous non-expert public figures rather than going through standard academic publishing channels is a risky strategy that may backfire:

Public media, and public opinion in general, feed on hype: memes quickly become popular, especially when they are linked to other popular memes (and AI is certainly a hot topic at the moment), and just as quickly fade into obscurity as the collective attention shifts to something else.

Consumers of popular media always want fresh topics to be interested in, and media organizations compete to supply their customers with the most novel content they can find. Also, social elites are constantly looking for new, unusual and hip ideas to endorse in order to signal their status, and these ideas trickle down to the general population yielding fashion waves.

If the AI safety discussion is primarily conducted in the arena of public opinion, there is a risk that, when human-level AI fails to materialize in a few years (as is plausible), AI safety concerns will be relegated to a 2010s fashion and fall outside public awareness.

In fact, there is even a risk of hype backlash: if you cry wolf too many times then AI safety may become a low-status topic that nobody wishes to discuss in public to avoid being associated with the weird fearmongers of the past.

AI research, as a whole, has already suffered from hype backlash in the past, when the grandiose promises of imminent breakthroughs made in the 60s were met with disappointing results, leading to massive criticism and defunding of the field for much of the 70s and 80s, known as the “AI winter”.

A second AI winter is unlikely now, at least as long as AI continues to produce practically useful applications, but academic research and industrial interest in AI safety, machine ethics, and related topics may be hampered by a hype backlash, which would, ironically, actually increase the risk that high-level AI is produced without sufficient safety guarantees and then something goes wrong.

The academic community, while not immune to fashions and perverse incentives (e.g. rewarding papers “by the kilogram”), seems better suited to maintaining an active discussion on technically difficult and controversial issues that may only become practically relevant in the non-immediate future. Seismology, volcanology, astronomy (for asteroid tracking), and to some extent climate science and ecology seem relevant examples.

The academic community is awful at doing AI safety. Truly awful.

Uhm, evidence?

No one outside the MIRI/FHI circle publishes anything about the risks of superintelligence.

People who work on AI safety focus on safety and ethics issues around dumber-than-human AI and robots, which is a totally different – and much less important – problem.

This is a questionable claim. But even if it is true, then it is MIRI/FHI’s responsibility, as part of their core mission, to reach out to these people and persuade them to refocus their research, or, if they can’t be persuaded, to reach out at least to experts in AI, compsci, safety engineering and ethics.

You genuinely think that ethics of driverless cars/drone attacks might be more important than how to control superintelligence?

Clearly one of us has a very severe problem with our understanding of the world.

Reaching out and persuading people to refocus on FAI is very hard. Most people don’t think the problem is real.

I feel quite frustrated that people in this thread don’t seem to understand the realities of the situation and yet still want to snipe at MIRI. Most people in positions of power – such as respected academics – think the whole superintelligence thing is mumbo jumbo. So when you try to persuade them to work on it, of course they are not going to do as you ask. On top of that, academics are governed by what research is fashionable because that is what gets funded easily. Creating a field of research around safety of superintelligence is extremely hard.

At the moment yes, since driverless cars and military drones already exist, while superintelligent AI is still “15-20 years in the future” just like the past 50 years.

In the long term, if superintelligence is really possible, then controlling it will certainly become more important than the ethics of driverless cars, but research in the ethics of driverless cars might turn out to be a better stepping stone in that direction than, say, speculation on Löb’s theorem.

Or maybe not, but in that case, if your core mission is to reduce AI risk and you are convinced that mainstream research in the field is insufficient and unproductive, then it is your responsibility to try and steer mainstream research in a more productive direction.

No, that’s not how it works. If your house is about to burn down you don’t carry on doing the gardening until it’s actually on fire. If superintelligence is a real and grave risk for the future, then it’s an important problem right now.

Research into the ethics/safety of dumber-than-human AI is not a good stepping stone for research into safety/ethics of superintelligence. I think there’s an SIAI paper saying why but I’m on a mobile device so can’t easily find it.

> I feel quite frustrated that people in this thread don’t seem to understand the realities of the situation…

Or, as they would put it, “aren’t persuaded by the arguments”.

It depends on how far in the future superintelligence is and how well we currently understand the issues relevant to superintelligence safety to make significant progress right now.

Air traffic safety is an important issue now, but think of a group of Renaissance people trying to discuss air traffic safety based on Da Vinci’s flying-machine sketches. Do they have a realistic chance of making progress? Is this the most productive use of their time (and of the money of the people who fund them)?

As far as we know, research in superintelligence safety may be at the same stage.

Has this paper been published in peer-reviewed academic journals or conferences? How has it been received by the community?

If it has been published in a peer-reviewed channel and the research community paid attention to it, then this is an example of MIRI doing what critics claim it is supposed to do and did not do enough of in the past.

WELP. *rolls up sleeves*

I’m planning on finishing an MSc in computer science and have studied mechanized theorem-proving and formal verification of program correctness. I also possess some actual skills in LaTeX.

Plainly the correct solution to this problem is for me to go apply to one of those job openings, since I’m one of those people who cares about doing the most radical work possible instead of making heaps of money… and it STILL pays better than more grad-school!

Since you brought up Time Cube: Thyme Cube

I’d like to see a lot more emphasis on the concept of, “Outreach is not what MIRI ever thought its primary job was. Solving the actual damn technical problem is what we always thought our primary job was.” We did outreach for a while, but then it became clear that FHI had comparative advantage so we started directing most journalist inquiries to FHI instead.

Until the end of 2014, all our effort is going into writing up the technical progress so far into sufficiently coherent form to be presented to some top people who want to see it at the beginning of 2015. This couldn’t have been done earlier because sufficient technical progress did not exist earlier.

Dr. Stuart Armstrong at FHI is someone we count as a fellow technical FAI researcher, but it does not appear that FHI as a whole has comparative or absolute advantage here over MIRI, and I doubt they’d say otherwise. One may also note that all of Armstrong’s key ideas have been put forth in the form of whitepapers, so far as I know; I don’t recall him trying to shepherd e.g. http://www.fhi.ox.ac.uk/utility-indifference.pdf through a journal review process either, despite FHI being a far more academic institution. I would chalk this up to the fact that there aren’t journals that actually know or care about our premises, nor people interested in the subject who read them, nor reviewers who could actually spot a problem—there would be literally no purpose to the huge opportunity cost of the publication process, except for placating people who want things to be in journals. When Dr. Armstrong writes up http://lesswrong.com/lw/jxa/proper_value_learning_through_indifference/ on LW and pings MIRI and maybe half a dozen other people, his actual work of peer review is done. The rest is a matter of image, and MIRI has been historically reluctant to pay large opportunity costs on actual research progress to try to purchase image—though as we’ve gotten more researchers, we have been able to do a little more of that. But I would start that timeline from Nate and Benja being hired. It did not make sense when it was just me.

I don’t think you’ve really responded to the criticism that multiple math people in this thread have stated, see e.g. this comment; you’ve talked about why you don’t bother publishing in journals, but that’s not what people are actually complaining about.

We have tons (well, kilograms) of online-accessible whitepapers now of the type Ilya is talking about. Most papers tagged CSR or DT qualify in http://intelligence.org/all-publications/. They’re not well-organized and certainly not inclusive of everything the core people know, but they sure are there in considerable quantity. The work of showing these to top CS people is the work of boiling these into central coherent introductions. That is the next leap forward in accessibility, not taking an out-of-context result and trying to shepherd it through a process (though that might reflect a prestige gain).

Actually, everyone stop talking and go look at the papers tagged CSR and DT in http://intelligence.org/all-publications/. Scott, please add a link there in your main post? I don’t think most of the commenters realize that page exists.

OK, this replies to most of the criticism. It does leave that little bit of “If you’ve done this much, why not put it on arXiv, which will both make things easier for everyone else and gain some of the image without all the extra work?”

Looking at all those papers, only 1 or 2 are at the level of something you could put on arxiv, and those were in the last year or two.

The one that is most formally written up does appear to be on arxiv.

Will:

What paper?

That page had already been linked on the post under the title “MIRI has many publications, conference presentations, book chapters and other things usually associated with normal academic research, which interested parties can find on their website,” but I guess this sort of decreases my probability that most people looked at it.

I’ll make it more prominent.

This makes it possible for mathy people with the spare time to read/digest MIRI papers to determine whether MIRI are cranks. Is there a good way for people who use normal metrics to gauge mathematicians’ work to determine MIRI’s competence?