In the past week I’ve written about antibiotics and the rate of technological progress. So when a graph about using antibiotics to measure the rate of scientific progress starts going around the Internet, I take note.

Unfortunately, it’s inexcusably terrible. Whoever wrote it either had zero knowledge of medicine, or was pursuing some weird agenda I can’t figure out.

For example, several of the drugs listed are not antibiotics at all. Tacrolimus and cyclosporin are both immunosuppressants (please don’t take tacrolimus because you have a bacterial infection). Lovastatin lowers cholesterol. Bialafos is a herbicide with as far as I can tell no medical uses.

“Cephalosporin” is not the name of a drug. It is the name of a class of drugs, of which there are over sixty. In other words, the number of antibiotics covered by that one word “cephalosporin” is greater than the total number of antibiotics listed on the chart.

If I wanted to be charitable, I would say maybe they are counting similar medications together in order to avoid giving decades credit for producing a bunch of “me-too” drugs. But that doesn’t seem to be it at all. They triple-count tetracycline, oxytetracycline, and chlortetracycline, even though they are chemically very similar and even though the latter are almost never used (the only indication Wikipedia gives for the last of these is that it is “commonly used to treat conjunctivitis in cats”).

They also leave out some very important antibiotics. For example, levofloxacin is a mainstay of modern pneumonia treatment and the eighth most commonly used antibiotic in the modern market. That’s a whole lot more relevant than cats with pinkeye, but it is conspicuously missing while chlortetracycline is conspicuously present. Maybe it has something to do with levofloxacin being approved in 1993?

(also missing from the 90s and 00s: piperacillin, tazobactam, daptomycin, linezolid, and several new cephalosporins)

I can’t find a real table of antibiotic discovery per decade, so I decided to make some.

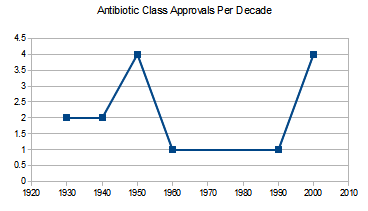

Antibiotic classes approved per decade. An antibiotic class is a large group of drugs sharing a single mechanism. For example, penicillin and methylpenicillin are in the same class, because one is just a slight variation on the chemical structure of the other. There are some arguments over which new drugs count as a “new class”. Source is this source.

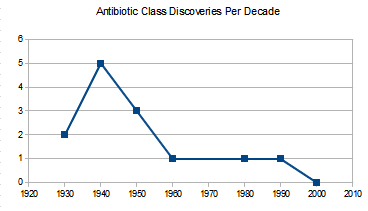

Antibiotic classes discovered per decade. The first graph lists when classes got FDA approval; this one lists when they were first discovered. Each has its own pluses and minuses. This one is likely to undercount recent progress because the chemicals being discovered now haven’t yet been tested, found to be valuable antibiotics, and approved (and so don’t make it onto the graph). But the last one might overcount recent progress, because a drug being “approved” in 2010 might represent the output of 1980s science that just took forever to get through the FDA. On the other hand, it might not – a lot of times people find a drug class in 1980, decide it’s too dangerous, and only find a safer usable drug from the same class in 2010.

Individual antibiotics discovered per decade. Individual antibiotics can still be vast improvements upon previous drugs in the same class. Source is here. I have doubled the number for the 2010s to represent the decade only being half over and so make comparison easier. About half of that spike around 1980 is twenty different cephalosporins coming on the market around the same time.
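The doubling for the 2010s is just a linear extrapolation from a partial decade. A minimal sketch, assuming discoveries arrive at a steady rate across the decade; the function name and the example count are hypothetical illustrations, not figures taken from the graph:

```python
def extrapolate_to_full_decade(count_so_far, years_elapsed, decade_length=10):
    """Scale a partial-decade count up to a full decade, assuming a constant rate."""
    if years_elapsed <= 0:
        raise ValueError("years_elapsed must be positive")
    return count_so_far * decade_length / years_elapsed


# Hypothetical count for illustration only (not read off the graph).
example_count = 6
full_decade_estimate = extrapolate_to_full_decade(example_count, years_elapsed=5)
print(full_decade_estimate)
```

With five of ten years elapsed the scaling factor is exactly two, which is the doubling described above. The steady-rate assumption is the weak point: if approvals cluster late in the decade, this undercounts.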

I conclude that antibiotic discovery has indeed declined, though not as much as the first graph tried to suggest, and it may or may not be starting to pick back up again.

Two very intelligent opinions on the cause of the decline. First, from a professor at UCLA School of Medicine:

There are three principal causes of the antibiotic market failure. The first is scientific: the low-hanging fruit have been plucked. Drug screens for new antibiotics tend to re-discover the same lead compounds over and over again. There have been more than 100 antibacterial agents developed for use in humans in the U.S. since sulfonamides. Each new generation that has come to us has raised the bar for what is necessary to discover and develop the next generation. Thus, discovery and development of antibiotics has become scientifically more complex, more expensive, and more time consuming over time. The second cause is economic: antibiotics represent a poor return on investment relative to other classes of drugs. The third cause is regulatory: the pathways to antibiotic approval through the U.S. FDA have become confusing, generally infeasible, and questionably relevant to patients and providers over the past decade.

A particularly good example of poor regulation from the same source:

When anti-hypertensive drugs are approved, they are not approved to treat hypertension of the lung, or hypertension of the kidney. They are approved to treat hypertension. When antifungals are approved, they are approved to treat “invasive aspergillosis,” or “invasive candidiasis.” Not so for antibacterials, which the FDA continues to approve based on disease state one at a time (pneumonia, urinary tract infection, etc.) rather than based on the organisms the antibiotic is designed to kill. Thus, companies spend $100 million for a phase III program and as a result capture as an indication only one slice of the pie.

And one more voice, which I think of as a call for moderation. This is Nature Reviews Drug Discovery:

Most antibiotics were originally isolated by screening soil-derived actinomycetes during the golden era of antibiotic discovery in the 1940s to 1960s. However, diminishing returns from this discovery platform led to its collapse, and efforts to create a new platform based on target-focused screening of large libraries of synthetic compounds failed, in part owing to the lack of penetration of such compounds through the bacterial envelope.

Sometimes stagnant science means your civilization is collapsing. Other times it just means you’ve run out of soil bacteria.

In a way, this points out the unfairness of using antibiotics as a civilizational barometer. This is a drug class first invented in the 1930s and mostly developed by investigating soil bacteria, which have since been mostly exhausted. Of course progress will be faster the closer to the discovery of this technique you get.

If instead you use antidiabetic drugs – a comparatively new field – this is an age of miracles and wonders, with new classes coming out faster than anyone except specialists can keep up with. If antidiabetic drugs were used as a civilizational barometer, we would be having the Singularity next week.

1. This isn’t the first time I’ve seen someone mistake tacrolimus for an antibiotic. I wonder if this is some kind of urban legend?

2. The soil bacteria story is actually consistent with a *sort* of civilizational decline narrative.

Let’s consider the argument that broad “screening” as a method of doing science (“check ALL the compounds!” “check ALL the genes!” etc) doesn’t actually work, or at least, doesn’t work for reasonable costs and at reasonable timescales. In the old days, perhaps, people used to have an actual hypothesis. “Hm, these microbes kill other microbes, let’s see if there’s a drug here.” Today the prevailing paradigm is “check ALL the things!” and that doesn’t work, so we haven’t found the 2010’s equivalent of soil bacteria. [I’m not confident that this is true, but it’s worth considering.]

Yes, I think it’s shifting the goalposts to argue against stagnation based on “We only ever had the one paradigm; we’re just picking increasingly high-hanging fruit.” In disciplines that are actually advancing, new paradigms arise as a matter of course.

If we’d had stagnation in artillery, you’d hear “Well, smoothbore cannons are only accurate to so far. You hear amateurs asking why artillery ranges aren’t increasing but what they don’t realize is that there’s a fundamental tradeoff between range and accuracy. Cannon technology is already pretty awesome, we’re just not in the early days of figuring out how gunpowder works anymore.” If we never innovated beyond the mainframe…that might not even register as stagnation; routine developments in 50s computer science were plenty for science fiction writers to have fun with. Advancing disciplines don’t claim the excuse of having picked all the low-hanging fruit, partly because fools on the internet aren’t challenging them for stagnating, but partly because a procession of disruptive paradigms is part and parcel of being an advancing discipline.

Put it another way. If the combinatorial chemistry approach to drug development had paid off, we’d be seeing a bumper crop of new medicines, far above what we have today, and this would correctly be seen as an anti-drug-stagnation argument. Now that it’s a clear failure, we should treat that as a data point in favor of stagnation. The fact that we’re executing perfectly on the “check ALL the bacteria!” paradigm doesn’t change that.

I also want to take the opportunity to link the inestimable Moldbug on Cancer thread, which takes a similar tack: “All our smart people are executing perfectly on a crappy paradigm.”

It was a little odd for me to read the Lensman books, where space dreadnaughts of frankly unreasonable power were implied to contain, somewhere deep in their substructure, cavernous, oily bays stuffed full of nerds with slide rules.

*new paradigms arise as a matter of course*

Or it could be that they don’t, because they don’t always exist, and when we run out of new paradigms we call that stagnation.

I feel like there’s a difference here, which is that “searching soil bacteria” wasn’t a paradigm, it was stealing the hard work of a billion years of evolution. That’s hard to replicate.

Suppose that in 2050, humans discovered an alien library on Mars that contained the secret to superadvanced technology. By reading the books, they are able to advance a hundred years in the decade from 2050 to 2060. Between 2060 and 2070, progress is comparatively slow.

It would be unfair to tell them “Well, it’s your own fault for not finding some other incredibly fortuitous discovery jumping you ahead a century.”

Scott: But all new paradigms feel like cheating at first. For artillery, the step where you figured out how to calculate parabolic flight trajectories is hacking a universe that runs on math. If combinatorial chemistry had worked, it would suddenly be doing drug discovery millions of times faster than we’d done it before. With mainframes it was: for the first time, we can use mass production to create vast arrays of logic machines.

New paradigms really are awesome. And so if they stop coming, it’s tempting to look back and say “Welp, it’s like we discovered a library on Mars, picked all the low hanging fruit, and now we’re back to grinding.” In disciplines that are advancing, we keep finding new cheat codes, so it never occurs to us to frame prior achievements as one-offs.

In a universe where computer progress stagnated with vacuum-tube powered mainframes, the “library on Mars” argument would sound perfectly plausible. And we (an amateur audience) would assume that the only plausible path of development was that of incrementally faster ENIACs.

Keep in mind also that the early antibiotic revolution would have been pretty impressive even if we hadn’t come up with the idea of screening soil bacteria. The famous “sulfa drugs” of the WWII era were the product of German experimentation with aniline dyes. They were the result of standard-issue grinding with chemistry, not playing Indiana Jones with bacteria.

Which goes back to the point that functional disciplines regularly produce multiple paradigms and are mostly not as chance-driven as the Martian archaeology notion would suggest. Even here in antibiotics, we had multiple irons in the fire and were not, in fact, totally dependent on a one-off discovery.

@Athrelon

Would you agree that the correct way of measuring technological progress is to count the number and impact of new paradigms rather than looking at a single one? Because that is totally compatible with everything Scott has written – in particular, one can make no claims about stagnation on the basis of how many new antibiotics there have been.

The idea of new paradigms as a measure of progress is an interesting one, I’ve never seen it crystallized so clearly even if it is sort of intuitive. One argument for stagnation might be that our current political and corporate structures create a strong incentive for optimization rather than creating new paradigms.

Except all the antidiabetic drugs are pretty useless; nothing is better than metformin (scratch that: diet/exercise). And none of these new agents are known to be better than insulin – this is under active investigation in the GRADE study.

All this is to say that what we really care about isn’t number of discoveries or classes or drugs. What we care about is DALYs, and YLL/morbidity left unaddressed, and successful development of interventions that meet these DALY needs.

A quick googling of graphs of life expectancy doesn’t seem to suggest much stagnation in the rate of improvement in life expectancy (except possibly life expectancy at birth, which has the obvious diminishing returns problem when you shift regimes from infant mortality having a significant life expectancy cost to one where infant mortality is <1%).

Could you link one of those graphs of change in life expectancy at 40 (say) since 1900? Cursory googling didn’t turn one up, but improvement in overall life expectancy in the US has definitely stagnated.

There’s one here.

By default it shows birth, but you can click to see other ages (by sex). It shows a fairly clear diminishing returns (not exactly stagnation) for life expectancy at birth, but not so much for the others.

The issue with life expectancy at birth is that the low hanging fruit of reducing infant mortality has almost all been eaten. Not because reduction in infant mortality has stagnated, but because infant mortality has fallen so low that changes no longer have a significant effect on life expectancy.
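The diminishing-returns point can be checked with a toy calculation. This is a sketch under loudly simplified assumptions: a newborn either dies in infancy or lives a fixed survivor lifespan, and the 75-year lifespan, the 0.5-year infant death age, and the mortality rates are all hypothetical round numbers, not data from the linked graph:

```python
def life_expectancy_at_birth(infant_mortality, survivor_lifespan=75.0, infant_death_age=0.5):
    """Toy two-outcome model: die in infancy, or live the full survivor lifespan."""
    return (infant_mortality * infant_death_age
            + (1.0 - infant_mortality) * survivor_lifespan)


def gain_from_halving(infant_mortality):
    """Years of life expectancy at birth gained by halving infant mortality."""
    return (life_expectancy_at_birth(infant_mortality / 2)
            - life_expectancy_at_birth(infant_mortality))


# Hypothetical regimes: 10% infant mortality vs. 1% infant mortality.
gain_high = gain_from_halving(0.10)
gain_low = gain_from_halving(0.01)
print(f"halving 10% infant mortality adds {gain_high:.2f} years")
print(f"halving 1% infant mortality adds {gain_low:.2f} years")
```

In this model the same proportional improvement is worth ten times less once infant mortality is already at 1%, even though the reduction itself has not stagnated at all.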

Can we just stop with the idea that number of skyscrapers, or antibiotics, or AAA video games, or rap stars with insufficient vowels in their names or anything else is a straightforward measure of civilizational decline? These arguments are incredibly tedious and prove next to nothing.

If we admit to not having the information to make huge world-changing decisions for society, we might as well admit to not having the capabilities or right to!

Blah blah inaction is a choice.

Yes, and it’s usually the correct choice.

Regular link to In Praise of Passivity.

(it’s the one using bloodletting physicianry as an analogy for possibly having no idea that we have no idea what we’re doing.)

That is one heck of an article. My attitude towards political attempts to solve social and economic problems boils down to two principles: 1) you can’t fix it, and 2) if you try to fix it, you will make it worse. It’s nice to see somebody else making the same argument.

If I can’t bemoan some generation or another by comparing the merits of different D&D editions, I don’t want to live on this planet anymore.

4th Edition is a sure sign that this generation’s brains have been taken over by the superstimulus of video games. Stagnation and then extinction are inevitable.

Scott: I’m getting a ‘click to edit’ link on the comment above (with a timer counting down from, I think, one hour), although it’s not one of mine. Clicking the link gives me an edit box, but I didn’t try actually submitting any changes.

edit: Since posting this, I no longer have the option to edit Anonymous’s comment, but only my own.

Ah, but 4e’s days are done. The new edition, which is called D&D Next, is coming, and it’s more like the old editions (variously 1e through 3e) than it is like 4e. One might say that it is…

*puts on sunglasses*

… reactionary.

Sigh… I struggle with the shame of my D&D preferences not being in line with my political philosophy.

Then again, that is only really going chronologically. I wonder if anyone of a particular nerdy type would want to deconstruct the last 5ish versions of D&D along political lines. Or would that be the mother of all thread wars?

The thing about antibiotic stagnation AIUI is that antibiotics are A) really important, they make whole classes of nasty things that would otherwise kill us pretty trivial and B) something that gets used up, because eventually bacteria evolve resistance to whatever drug you were using.

So it’s not so much that antibiotic stagnation is an indicator of impending civilizational doom, it’s actually a cause.

Much of the reason for the age of miraculous diabetes drugs is that the current standard of care in Europe, sulfonylurea, is worse than nothing.

And the reason Europe uses sulfonylurea is not because they’re fooled by the short term effects, but because it’s slightly cheaper than metformin.

I didn’t know sulfonylurea was standard of care in Europe. Everyone I know here is very good about using metformin first and rarely goes to sulfonylurea at all.

NICE CG87 recommends Sulfonylurea as a first-line diabetes treatment if the patient cannot tolerate Metformin, and Metformin if the patient is overweight. In patients of an ordinary weight and tolerance to both drugs they suggest clinicians ‘consider’ both Metformin and Sulfonylurea, which is their weakest recommendation and basically means clinicians can do what they want.

Hospitals cannot prevent doctors from prescribing either Metformin or Sulfonylurea (because they are recommended by NICE, which means hospitals MUST pay for the drugs if clinicians recommend them (which incidentally is about to get very exciting with the new £800 NOACs replacing £11 Warfarin for the management of Atrial Fibrillation) ), so if Sulfonylurea is standard of care in Europe it can only be because of different clinical judgement on the issue. Maybe Europe is less overweight than America, or has more Metformin intolerance because of some genetic quirk?

NICE only have the legal mandate to look at the NHS in the UK, but they are influential enough that other European systems often copy their guidance. Nevertheless, it is possible that if the first poster in this chain works or lives somewhere other than the UK, the guidance I link below isn’t relevant.

(Source is Section 1.5 of the ‘Recommendations’ section of this guideline: http://www.nice.org.uk/guidance/CG87/chapter/1-Guidance)

Where did you see this graph? Was it really used to talk about scientific progress, and not just to say that antibiotic resistance is a problem? Because that’s all I find the graph being used for.

It was cited on twitter.

To be fair to the naysayers of progress, the fact that many low-hanging fruit have been picked and eaten is a major mechanism that stalls progress. And since bacteria evolve permanent resistance to antibiotics, it is really the number of viable antibiotics remaining that is a true measure of how far we have come. By that measure we are probably going backwards at the moment.

From Scott’s own analysis that is what is happening here.

Yeah, but on the other hand, we no longer can legally use bacteriophages in lieu of antibiotics in the United States. (And in fact, they’re illegal most anywhere outside Russia, where they are used to impressive effect.) We could use them in the 1930s, though, and they had few side effects.

And I would guess (though I am not certain, I’m no expert) that if we did use bacteriophages, we would also develop fewer resistant strains of bacteria, on account of the phage evolving along with the bacteria it infects.

I’m pretty sure this counts as significant stagnation due to artificial regulatory barriers.

http://en.wikipedia.org/wiki/Bacteriophage

http://en.wikipedia.org/wiki/Phage_therapy

It does sound pretty promising, though I would understand reticence on account of other notable let’s-introduce-this-species-to-eat-this-other-species efforts… which tended to fail spectacularly.

Then again, I think we know more about bacteriophage ecology in particular than temperate woodland ecology in general, so.

That Wikipedia article doesn’t read like it’s written by someone with Western standards for evidence-based medicine. How good is the evidence that phage therapy works?

I find it really funny that all the references to drug research and drug stagnation have caused your ad provider to decide that the appropriate ads to show relate to addiction recovery centers. Sometimes machine learning and big data are really impressive, other times, not so much.

You’ll know they’ve really gone off the rails when they start advertising anti-mosquito sprays and pool chlorine.

A couple hours ago it was chemoluminescent molecular probes, which I consider impressive in that they apparently figured out that this post was about drug discovery aka biotechnology.

But I’m trying to figure out their target demographic. Laboratory managers who are out of chemoluminescent molecular probes, and just forlornly browse the Internet hoping they will run into some?

I may be showcasing my ignorance here, but is there a reason we should expect there to be many more classes of antibiotics to be discovered? It seems to me that there must be some limit to the number of compounds which, when administered as a drug, will selectively harm bacterial pathogens while causing minimal harm to human tissue. If we were to reach this limit, how would we know? Is there reason to suspect that this limit is much higher than the number of known antibiotics?

Put another way, it may simply be that we are nearing the limits of the paradigm of simple chemical antibiotic agents.

The naturally occurring antibiotics are staggeringly far from simple. They’re incredible bits of chemistry, the result of 1500-million-year arms races between fungus and bacteria. They’re a lot more complicated than the reasonably flat concatenated heterocycles that modern drug discovery produces as specific inhibitors for single proteins. A total synthesis of a newly-characterised natural antibiotic will often still produce several PhDs and a paper in a good journal.

Of course a natural antibiotic has evolved to kill bacteria while leaving some specific fungus alive; whilst fungi have spectacular degradation enzymes, they don’t have livers to keep them in.

I think that graph is using the definition of ‘antibiotic’ as a compound secreted by some organism that kills some other organism; they’re sometimes effective anti-cancer drugs because the mechanism of action involves some subsystem which is more active in cancer cells than in normal ones.

As an abstract thing, I dunno. But there may be more antibiotics to find in fungi and parasitic wasp larvae.

http://phenomena.nationalgeographic.com/2013/01/07/if-youre-going-to-live-inside-a-zombie-keep-it-clean/

Though penicillin came from a fungus itself, of course.

My wife is Type 1 diabetic. What are the new drugs in the pipeline? I try to keep up with experimental treatments, and they tend to make a big media splash and then disappear.

Most of the new drugs are for type 2.

Thanks, I suspected as much. A few years ago there was a big splash about temporarily curing Type 1 by injecting capsaicin into the pancreas to kill off the cells which were attacking the insulin-producing cells. Then nothing.

Sometime I’d be interested in hearing your thoughts on the antibiotic resistance crisis. If indeed there is one. Are we really nearing the “post antibiotic era”?

If there’s an existing summary that you like and you think is sensible, I’d check it out.