Peter Gerdes says:

As the example of the Nicaraguan deaf children left on their own to develop their own language demonstrates (as do other examples), we do create languages very, very quickly in a social environment.

Creating conlangs is hard not because creating language is fundamentally hard but because we are bad at top-down modelling of processes that are the result of a bunch of tiny modifications over time. The distinctive features of language require both that it be used frequently for practical purposes (this makes sure that the language has efficient shortcuts, jettisons clunky overengineered rules, etc.) and that it be buffeted by the whims of many individuals with varying interests and focuses.

This is a good point, though it kind of equivocates on the meaning of “hard” (if we can’t consciously do something, does that make it “hard” even if in some situations it would happen naturally?).

I don’t know how much of this to credit to a “language instinct” that puts all the difficulty of language “under the hood”, vs. inventing language not really being that hard once you have general-purpose reasoning. I’m sure real linguists have an answer to this. See also Tracy Canfield’s comments (1, 2) on the specifics of sign languages and creoles.

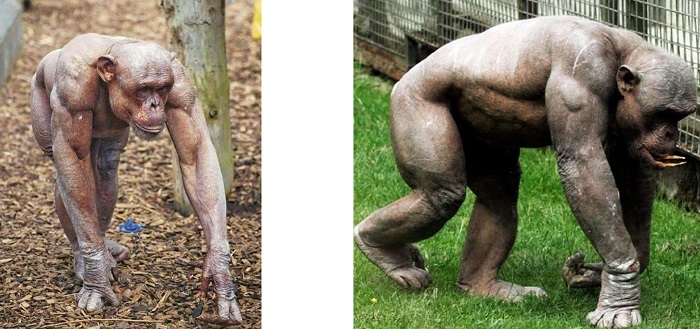

The Secret Of Our Success described how human culture, especially tool-making ability, allowed us to lose some adaptations we no longer needed. One of those was strength; we are much weaker than the other great apes. Hackworth provides an intuitive demonstration of this: hairless chimpanzees are buff:

Reasoner defines “Chesterton’s meta-fence” as:

in our current system (democratic market economies with large governments) the common practice of taking down Chesterton fences is a process which seems well established and has a decent track record, and should not be unduly interfered with (unless you fully understand it)

And citizencokane adds:

Indeed: if there is a takeaway from Scott’s post, it is that one way to ensure survival is high-fidelity adherence to traditions + ensuring that the inherited ancestral environment/context is more or less maintained. Adhering to ancient traditions when the context is rapidly changing is a recipe for disaster. No point in mastering seal-hunting if there ain’t no more seals. No point in mastering the manners of being a courtier if there ain’t no more royal court. Etc.

And the problem is that, in the modern world, we can’t simply all mutually agree to stop changing our context so that our traditions will continue to function as before because it is no longer under our control. I’m not just talking about climate change; I’m talking even moreso about the power of capital, an incentive structure that escapes all conscious human manipulation or control, and which more and more takes the appearance of an exogenous force, remaking the world “in its own image,” turning “all that is solid into air,” and compelling all societies, upon pain of extinction, to keep up with its rapid changes in context. This is why every true traditionalist must be, at heart, an anti-capitalist…if they truly understand capitalism.

Which societies had more success in the 18th and 19th centuries in the context of this new force, capital? Those who held rigidly to traditions (like Qing China), or those who tolerated or even encouraged experimentation? Enlightenment ideas would not have been nearly so persuasive if they hadn’t had the prestige of giving countries like the Netherlands, England, France, and America an edge. Even countries that were not on the leading edge of the Enlightenment, and who only grudgingly and half-heartedly compromised with it like Germany, Austria, and (to some extent) Japan, did better than those who held onto traditions even longer, like the Ottoman Empire or Russia, or China.

In particular, you can’t fault Russia or China for being even more experimental in the 20th century (Marxism, communism, etc.) if you realize that this was an understandable reaction to being visibly not experimental enough in the 19th century.

And Furslid continues:

I think an important piece of this, which I hope Scott will get to in later points, is to be less confident in our new culture. It makes sense to doubt if our old culture applies. However, it is also incredibly unlikely that we have an optimized new culture yet.

We should be less confident that our new culture is right for new situations than that the old culture was right for old situations. This means we should be more accepting of people tweaking the new culture. We should also enforce it less strongly.

Quixote describes a transitional step in the evolution of manioc/cassava cultivation:

Also, based on a recent conversation (unrelated to this post actually) that I had with one of my coworkers from central east Africa, I’m not sure that he would agree with the book’s characterization of African adaptation to Cassava. He would probably point out that

– Everyone in [African country] knows cassava can make you sick, that’s why you don’t plant it anywhere that children or the goats will eat it.

– In general you want to plant cassava in swampy areas that you were going to fence off anyway.

– You mostly let the cassava do its thing and only harvest it to use as your main food during times of famine/drought when your better crops aren’t producing.

It seems like those cultural adaptations probably cover most/much of the problem with cassava.

There is a very nice experimental demonstration in this article (just saw the work presented at a workshop), where they get people to come as successive “generations” and improve on a simple physical system.

Causal understanding is not necessary for the improvement of culturally evolving technology

The design does improve over generations, no thanks to anyone’s intelligence. They get both physics/engineering students and other students, with no difference at all. In one variant, they allow people to leave a small message to the next generation to transmit their theory on what works/doesn’t, and that doesn’t help, or makes things worse (by limiting the dimensions along which next generations will explore).
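The basic dynamic is easy to reproduce in a toy model. Here is a minimal sketch, not the paper's actual setup: the "physical system" is a made-up scoring function (`hidden_performance`), and each participant just makes a few blind tweaks to the design they inherit and passes on whichever version scored best. Every name and number here is an assumption chosen for illustration.

```python
import random

def hidden_performance(design):
    # Hypothetical stand-in for the physical system: the score peaks at a
    # target that participants never see or reason about.
    target = (0.7, 0.2, 0.9)
    return -sum((d - t) ** 2 for d, t in zip(design, target))

def one_generation(inherited, rng, trials=5, step=0.1):
    # A participant tries a few blind tweaks and keeps whichever version
    # scored best so far, including the design they inherited.
    best = inherited
    for _ in range(trials):
        candidate = tuple(x + rng.uniform(-step, step) for x in best)
        if hidden_performance(candidate) > hidden_performance(best):
            best = candidate
    return best

def run_chain(generations=30, seed=0):
    # Pass the design down a chain of participants and record each score.
    rng = random.Random(seed)
    design = (0.0, 0.0, 0.0)
    scores = [hidden_performance(design)]
    for _ in range(generations):
        design = one_generation(design, rng)
        scores.append(hidden_performance(design))
    return scores

scores = run_chain()
print(f"first generation: {scores[0]:.3f}, last generation: {scores[-1]:.3f}")
```

Nothing in the loop models why a tweak helps. The score can only go up or stay flat because each participant keeps the best thing they have seen, which is all the improvement needs.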

A few people including snmlp question the claim that aboriginal Tasmanians lost fire. See this and this paper for the status of the archaeological evidence.

Five hundred years hence, is someone going to analyze the college education system and point out that the wasted effort and time that we all can see produced some benefit akin to preventing chronic cyanide poisoning? Are they going to be able to do the same with other complex wasteful rituals, like primary elections and medical billing? Or do humans create lots of random wasteful rituals and occasionally hit upon one that removes poison from food, and then every group that doesn’t follow the one that removes poison from food dies while the harmless ones that just paint doors with blood continue?

I actually seriously worry about the college one. Like, say what you want about our college system, but it has some surprising advantages: somehow billions of dollars go to basic scientific research (not all of them from the government), it’s relatively hard for even the most powerful special interests to completely hijack a scientific field (eg there’s no easy way for Exxon to take over climatology), and some scientists can consistently resist social pressure (for example, all the scientists who keep showing things like that genetics matters, or IQ tests work, or stereotype threat research doesn’t replicate). While obviously there’s still a lot of social desirability bias, it’s amazing that researchers can stand up to it at all. I don’t know how much of this depends on the academic status ladder being so perverse and illegible that nobody can really hack it, or whether that would survive apparently-reasonable college reform.

Likewise, a lot of doctors just have no incentives. They don’t have an incentive to overtreat you, or to undertreat you, or to see you more often than you need to be seen, or to see you less often than you need to be seen (this isn’t denying some doctors in some parts of the health system do have these pressures). I actually don’t know whether my clinic would make more or less money if I fudged things to see my patients more often, and nobody has bothered to tell me. This is really impressive. Exposing the health system to market pressures would solve a lot of inefficiencies, but I don’t know if it would make medical care too vulnerable to doctors’ self-interest and destroy some necessary doctor-patient trust.

I’ve got two young kids of my own. One puts everything in his mouth, the other less so, and neither evinced anything resembling what I’m reading in Section III. We spent this past Sunday trying to teach my youngest not to eat the lawn, and my oldest liked to shove ant hills and ants into his mouth around that age. Yeah, sure, anecdotal, but a “natural aversion among infants to eating plants until they see mommy eating them, and after that they can and do identify that particular plant themselves and will eat it” seems like a remarkable ability that SOMEONE would have noticed before this study. I’ve never heard anyone mention it.

I don’t think I’m weakmanning the book, it’s just that this is the only aspect discussed in Scott’s review that I have direct experience with, and my direct experience conflicts with the author’s conclusions. It’s a Gell-Mann amnesia thing, and makes me suspicious of the otherwise exciting ideas here. Like: does anyone here have any direct knowledge of manioc harvesting and processing, or Tukanoan culture? How accurate is the book?

I checked with the mother of the local two-year-old; she says he also put random plants in his mouth from a young age. Suspicious!

I think this one greatly overstates its thesis. Inventiveness without the ability to transmit inventions to future generations is of small value; you can’t invent the full set of survival techniques necessary for e.g. the high arctic in a single generation of extreme cleverness. At best you can make yourself a slightly more effective ape. But cultural transmission of inventions without the ability to invent is of exactly zero value. It takes both. And since being a slightly more effective ape is still better than being an ordinary ape, culture is slightly less than 50% of the secret of our success.

That said, the useful insight is that the knowledge we need to thrive is vastly greater than the knowledge we can reasonably deduce from first principles and observation. And what is really critical, this holds true even if you are in a library. You need to accept “X is true because a trusted authority told me so; now I need to go on and learn Y and Z and I don’t have time to understand why X is true”. You need to accept that this is just as true of the authority who told you X, and so he may not be able to tell you why X is true even if you do decide to ask him in your spare time. There may be an authority who could track that down, but it’s probably more trouble than it’s worth to track him down. Mostly, you’re going to use the traditions of your culture as a guide and just believe X because a trusted authority told you to, and that’s the right thing to do.

“Rationality” doesn’t work as an antonym to “Tradition”, because rationality needs tradition as an input. Not bothering to measure Avogadro’s number because it’s right there in your ~~CRC handbook~~ Wikipedia is every bit as much a tradition as not boning your sister because the Tribal Elders say so; we just don’t call it that when it’s a tradition we like. Proper rationality requires being cold-bloodedly rational about evaluating the high-but-not-perfect reliability of tradition as a source of fact.

Unfortunately, and I think this may be a relic of the 18th and early 19th century when some really smart polymathic scientists could almost imagine that they really could wrap their minds around all relevant knowledge from first principles on down, our culture teaches ‘Science!’ in a way that suggests that you really should understand how everything is derived from first principles and firsthand observation or experiment even if at the object level you’re just going to look up Avogadro’s number in Wikipedia and memorize it for the test.

nkurz isn’t buying it:

I’m not sure where Scott is going with this series, but I seem to have a different reaction to the excerpts from Henrich than most (but not all) of the commenters before me: rather than coming across as persuasive, I wouldn’t trust him as far as I could throw him.

For simplicity let’s concentrate on the seal hunting description. I don’t know enough about Inuit techniques to critique the details, but instead of aiming for a fair description, it’s clear that Henrich’s goal is to make the process sound as difficult to achieve as possible. But this is just sleight of hand: the goal of the stranded explorer isn’t to reproduce the exact technique of the Inuit, but to kill seals and eat them. The explorer isn’t going to use caribou antler probes or polar bear harpoon tips; they are going to use some modern wood or metal that they stripped from their ice-bound ship.

Then we hit “Now you have a seal, but you have to cook it.” What? The Inuit didn’t cook their seal meat using a soapstone lamp fueled with whale oil, they ate it raw! At this point, Henrich is not just being misleading, he’s making it up as he goes along. At this point I start to wonder if the part about the antler probe and bone harpoon head is equally fictional. I might be wrong, but beyond this my instinct is to doubt everything that Henrich argues for, even if (especially if) it’s not an area where I have familiarity.

Going back to the previous post on “Epistemic Learned Helplessness”, I’m surprised that many people seem to have the instinct to continue to trust the parts of a story that they cannot confirm even after they discover that some parts are false. I’m at the opposite extreme. As soon as I can confirm a flaw, I have trouble trusting anything else the author has to say. I don’t care about the baby, this bathwater has to go! And if the “flaw” is that the author is being intentionally misleading, I’m unlikely to ever again trust them (or anyone else who recommends them).

Probably I accidentally misrepresented a lot in the parts that were my own summary. But this is from a direct quote, and so not my fault.

roystgnr adds:

Wikipedia seems to suggest that they ate freshly killed meat raw, but cooked some of the meat brought back to camp using a Kudlik, a soapstone lamp fueled with seal oil or whale blubber. Is that not correct? That would still flatly contradict “but you have to cook it”, but it’s close enough that the mistake doesn’t reach “making it up as he goes along” levels of falsehood. You’re correct that even the true bits seem to be used for argument in a misleading fashion, though.

This seems within the level of simplifying-to-make-a-point that I have sometimes been guilty of myself, so I’ll let it pass.

A funny point about the random number generators: Rituals which require more effort are more likely to produce truly random results, because a ritual which required less effort would be more tempting to re-do if you didn’t like the result.

Followed by David Friedman:

This reminds me of my father’s argument that cheap computers resulted in less reliable statistical results. If running one multiple regression takes hundreds of man hours and thousands of dollars, running a hundred of them and picking the one that, by chance, gives you the significant result you are looking for, isn’t a practical option.

Yikes.
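The arithmetic behind this argument is easy to sketch. Assuming each regression is an independent test of an effect that does not really exist, judged at the conventional 0.05 threshold (both assumptions are mine, not Friedman's), the chance that at least one of n runs looks significant is 1 − (1 − 0.05)^n. The function names below are made up for illustration.

```python
import random

def chance_of_false_positive(n_tests, alpha=0.05):
    # Probability that at least one of n independent tests of a true null
    # hypothesis comes out "significant" at level alpha.
    return 1 - (1 - alpha) ** n_tests

def simulate(n_tests, alpha=0.05, n_researchers=10_000, seed=0):
    # Under a true null, each p-value is uniform on [0, 1], so we can draw
    # p-values directly instead of fitting real regressions.
    rng = random.Random(seed)
    lucky = sum(
        any(rng.random() < alpha for _ in range(n_tests))
        for _ in range(n_researchers)
    )
    return lucky / n_researchers

for n in (1, 10, 100):
    print(n, round(chance_of_false_positive(n), 3), simulate(n))
```

Under these assumptions, one regression misleads you 5% of the time, ten about 40% of the time, and a hundred about 99% of the time. When each run cost thousands of dollars, almost nobody could afford to get to the right-hand end of that range.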

The quote on quadruped running seems inaccurate in several important ways compared to the primary references Henrich cites, which are short and very interesting in their own right: Bramble and Carrier (1983) and Carrier (1984). In particular, humans still typically lock their breathing rate with their strides, it’s just that animals nearly always lock them 1:1, while humans are able to switch to other ratios, like 1:3, 2:3, 1:4 etc. and this is thought to allow us to maintain efficiency at varying speeds. Henrich also doesn’t mention that humans are at the outset metabolically disadvantaged for running in that we spend twice as much energy (!) per unit mass to run the same distance as quadrupeds. That we are still able to run down prey by endurance running is called the “energetic paradox” by Carrier. Liebenberg (2006) provides a vivid description of what endurance hunting looks like in the Kalahari.

And b_jonas:

I doubt the claim that humans don’t have quantized speeds of running. I for one definitely have two different gaits of walking, and find walking at an intermediate speed between the two more difficult than either of them. This is the most noticeable if I want to chat with someone while walking, because then I have to walk at such an intermediate speed to not get too far from them. The effect is somewhat less pronounced now that I’ve gained weight, but it’s still present. I’m not good at running, so I can’t say anything certain about it, but I suspect that at least some humans have different running gaits, even if the cause is not the specific one that Joseph Henrich mentions about quadrupeds.

I’ve never noticed this. And I used to use treadmills relatively regularly, and play with the speed dial, so I feel like I would have noticed if this had been true. Anyone have thoughts on this?

I read somewhere that the languages with the most distinctive sounds are in Africa, among them the ones including the !click! ones. Since humanity originates from Africa, these are also the oldest language families.

As you move away from Africa, you can trace how languages lose sound after sound, until you get to Hawaiian, which is the language with the fewest sounds, almost all vowels.

I’ve half-heartedly tried to find any mention of this perhaps overly cute theory again, but failed. The “sonority” theory here reminded me. Anyone know anything, one way or the other?

Secret Of Our Success actually mentions this theory; you can find the details within.

Some people reasonably bring up that no language can be older than any other, for the same reason it doesn’t make sense to call any (currently existing) evolved animal older than any other – every animal lineage from 100 million BC has experienced 100 million years of evolution.

I think I’ve heard some people try to get around this by focusing on schisms. Everyone starts out in Africa, but a small group of people move off to Sinai or somewhere like that. Because most of the people are back home in Africa, they can maintain their linguistic complexity; because the Sinaites only have a single small band talking to each other, they lose some linguistic complexity. This seems kind of forced, and some people in the comments say linguistic complexity actually works the opposite direction from this, but I too find the richness of Bushman languages pretty suggestive.

What about rules that really do seem pointless? Catherio writes:

My basic understanding is that if some of the rules (like “don’t wear hats in church”) are totally inconsequential to break, these provide more opportunities to signal that your community punishes rule violation, without an increase in actually-costly rule violations.

I’d heard this before, but she manages, impressively, to link it to AI: see Legible Normativity for AI Alignment: The Value of Silly Rules.

With regard to accepting other people’s illegible preferences…I wish I could show this essay to, like, two-thirds of all the people I’ve ever lived with. Seriously, a common core of my issues with roommates has been that they refuse to accept or understand my illegible preferences (I often refer to these as “irrational aversions”) while refusing to admit that their own illegible preferences are just as difficult to ground rationally. Just establishing an understanding that illegible preferences should be respected by default or at least treated on an even playing field, and that having immediate objective logical explanations for preferences should not be a requirement for validation, would have immediately improved my relationships with people I’ve lived with 100%.

I’ve had the same experience – a good test for my compatibility with someone will be whether they’ll accept “for illegible reasons” as an excuse. Despite the stereotypes, rationalists have been a hundred times better at this than any other group I’ve been in close contact with.

Nav on Lacan and Zizek (is everything cursed to end in Zizek eventually, sort of like with entropy?):

Time to beat my dead horse; the topics you’re discussing here have a lot of deep parallels in the psychoanalytic literature. First, Scott writes:

“If you force people to legibly interpret everything they do, or else stop doing it under threat of being called lazy or evil, you make their life harder”

This idea is treated by Lacan as the central ethical problem of psychoanalysis: under what circumstances is it acceptable to cast conscious light upon a person’s unconsciously-motivated behavior? The answer is usually “only if they seek it out, and only then if it would help them reduce their level of suffering”.

Turn the psychoanalytic, phenomenology-oriented frame onto social issues, as you’ve partly done, and suddenly we’re in Zizek-land (his main thrust is connecting social critique with psychoanalytic concepts). The problem is that (a) Zizek is jargon-heavy and difficult to understand, and (b) I’m not nearly as familiar with Zizek’s work as with more traditional psychoanalytic concepts. But I’ll try anyway. From a quick encyclopedia skim, he actually uses a similar analogy with fetishes (all quotes from IEP):

“Žižek argues that the attitude of subjects towards authority revealed by today’s ideological cynicism resembles the fetishist’s attitude towards his fetish. The fetishist’s attitude towards his fetish has the peculiar form of a disavowal: “I know well that (for example) the shoe is only a shoe, but nevertheless, I still need my partner to wear the shoe in order to enjoy.” According to Žižek, the attitude of political subjects towards political authority evinces the same logical form: “I know well that (for example) Bob Hawke / Bill Clinton / the Party / the market does not always act justly, but I still act as though I did not know that this is the case.””

As for how beliefs manifest, Zizek clarifies the experience of following a tradition and why we might actually feel like these traditions are aligned with “Reason” from the inside, and also the crux of why “Reason” can fail so hard in terms of social change:

According to Žižek, all successful political ideologies necessarily refer to and turn around sublime objects posited by political ideologies. These sublime objects are what political subjects take it that their regime’s ideologies’ central words mean or name, extraordinary Things like God, the Fuhrer, the King, in whose name they will (if necessary) transgress ordinary moral laws and lay down their lives… Kant’s subject resignifies its failure to grasp the sublime object as indirect testimony to a wholly “supersensible” faculty within herself (Reason), so Žižek argues that the inability of subjects to explain the nature of what they believe in politically does not indicate any disloyalty or abnormality. What political ideologies do, precisely, is provide subjects with a way of seeing the world according to which such an inability can appear as testimony to how Transcendent or Great their Nation, God, Freedom, and so forth is: surely far above the ordinary or profane things of the world.

Lastly and somewhat related, going back to an older SSC post, Scott argues that he doesn’t know why his patients react well to him, but Zizek can explain that, and it has a lot of relevance for politics (transference is a complex topic, but the simple definition is a transfer of affect or mind from the therapist to the patient, which is often a desirable outcome of therapy, contrasted with counter-transference, in which the patient affects the therapist):

“The belief or “supposition” of the analysand in psychoanalysis is that the Other (his analyst) knows the meaning of his symptoms. This is obviously a false belief, at the start of the analytic process. But it is only through holding this false belief about the analyst that the work of analysis can proceed, and the transferential belief can become true (when the analyst does become able to interpret the symptoms). Žižek argues that this strange intersubjective or dialectical logic of belief in clinical psychoanalysis is also what characterizes peoples’ political beliefs…. the key political function of holders of public office is to occupy the place of what he calls, after Lacan, “the Other supposed to know.” Žižek cites the example of priests reciting mass in Latin before an uncomprehending laity, who believe that the priests know the meaning of the words, and for whom this is sufficient to keep the faith. Far from presenting an exception to the way political authority works, for Žižek this scenario reveals the universal rule of how political consensus is formed.”

Scott probably comes across as having a stable and highly knowledgeable affect, which gives his patients a sense of being in the presence of authority (as we likely also feel in these comment threads), which makes him better able to perform transference and thus help his patients (or readers) reshape their beliefs.

Hopefully this shallow dive was interesting and opens up new areas of potential study, and also a parallel frame: working from the top-down ethnography (as tends to be popular in this community; the Archimedean standpoint) gives us a broad understanding, but working from the bottom-up gives us a more personal and intimate sense of why the top-down view is correct.

This helped me understand Zizek and Lacan a lot better than reading a book on them did, so thanks for that.

Stucchio doesn’t like me dissing Dubai:

I’m just going to raise a discussion of one piece here:

“Dubai, whose position in the United Arab Emirates makes it a lot closer to this model than most places, seems to invest a lot in its citizens’ happiness, but also has an underclass of near-slave laborers without exit rights (their employers tend to seize their passports).”

I have probably read the same western articles Scott has about all the labor the UAE and other middle eastern countries import. But unlike them, I live in India (one of the major sources of labor) and mostly have heard about this from people who choose to make the trip.

To me the biggest thing missing from these western reporters’ accounts is the fact that the people shifting to the gulf are ordinary humans, smarter than most journalists, and fully capable of making their own choices.

Here are things I’ve heard about it, roughly paraphrased:

“I knew they’d take my passport for 9 months while I paid for the trip over. After that I stuck around for 3 years because the money was good, particularly after I shifted jobs. It was sad only seeing my family over Skype, but I brought home so much money it was worth it.”

“I took my family over and we stayed for 5 years; the money was good, we all finished the Hajj while we were there, but it was boring and I missed Maharashtrian food.”

“It sucked because the women are all locked up. You can’t talk to them at the mall. It’s as boring as everyone says and you can’t even watch internet porn. But the money is good.”

When I hear about this first hand, the stories don’t sound remotely like slave labor. It doesn’t even sound like “we were stuck in the GDR/Romania/etc” stories I’ve heard from professors born on the wrong side of the Iron Curtain. I hear stories of people making life choices to be bored and far from family in return for good money. Islam is a major secondary theme. So I don’t think the UAE is necessarily the exception Scott thinks it is.

Moridinamael on the StarCraft perspective:

In StarCraft 2, wild, unsound strategies may defeat poor opponents, but will be crushed by decent players who simply hew to strategies that fall within a valley of optimality. If there is a true optimal strategy, we don’t know what it is, but we do know what good, solid play looks like, and what it doesn’t look like. Tradition, that is to say, iterative competition, has carved a groove into the universe of playstyles, and it is almost impossible to outperform tradition.

Then you watch the highest-end professional players and see them sometimes doing absolutely hare-brained things that would only be contemplated by the rank novice, and you see those hare-brained things winning games. The best players are so good that they can leave behind the dogma of tradition. They simply understand the game in a way that you don’t. Sometimes a single innovative tactic debuted in a professional game will completely shift how the game is played for months, essentially carving a new path into what is considered the valley of optimality. Players can discover paths that are just better than tradition. And then, sometimes, somebody else figures out that the innovative strategy has an easily exploited Achilles’ heel, and the new tactic goes extinct as quickly as it became mainstream.

StarCraft 2 is fun to think about in this context because it is relatively open-ended, closer to reality than to chess. There are no equivalents to disruptor drops or mass infestor pushes or planetary fortress rushes in chess. StarCraft 2 is also fun to think about because we’ve now seen that machine learning can beat us at it by doing things outside of what we would call the valley of optimality.

But in this context it’s crucial to point out that the way AlphaStar developed its strategy looked more like gradually accrued “tradition” than like “rationalism”. A population of different agents played each other for a hundred subjective years. The winners replicated. This is memetic evolution through the Chestertonian tradition concept. The technique wouldn’t have worked without the powerful new learning algorithms, but the learning algorithm didn’t come up with the strategy of mass-producing probes and building mass blink-stalkers purely out of its fevered imagination. Rather, the learning algorithms were smart enough to notice what was working and what wasn’t, and to have some proximal conception as to why.
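The "winners replicated" loop can be shown in miniature. This is a toy sketch and emphatically not AlphaStar's training procedure: a strategy is a single number, the game rule in `play` is invented, and the population size, mutation size, and win probability are arbitrary assumptions. The only point is that selection among competing agents can find good play without any agent knowing the rule.

```python
import random

def play(a, b, rng):
    # Hypothetical game: strategies nearer 0.6 win 80% of the time.
    # Agents never see this rule; they only win or lose.
    closer, farther = sorted((a, b), key=lambda s: abs(s - 0.6))
    return closer if rng.random() < 0.8 else farther

def mean_distance(population):
    # Average distance of the population from the (hidden) best strategy.
    return sum(abs(s - 0.6) for s in population) / len(population)

def evolve(population, rounds, rng):
    population = list(population)  # leave the caller's list untouched
    for _ in range(rounds):
        rng.shuffle(population)
        winners = [
            play(a, b, rng)
            for a, b in zip(population[::2], population[1::2])
        ]
        # Each winner survives and leaves one slightly mutated copy.
        children = [
            min(1.0, max(0.0, w + rng.gauss(0, 0.02))) for w in winners
        ]
        population = winners + children
    return population

rng = random.Random(0)
start = [rng.random() for _ in range(40)]
end = evolve(start, rounds=60, rng=rng)
print(f"mean distance from best play: {mean_distance(start):.2f} -> {mean_distance(end):.2f}")
```

The mutation step stands in, very crudely, for whatever the learning algorithm contributes: a source of nearby variations for the competition to sort through.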

Someone (maybe Robin Hanson) treats all of history as just evolution evolving better evolutions. The worst evolution of all (random chance) created the first replicator and kicked off biological evolution. Biological evolution created brains, which use a sort of hill-climbing memetic evolution for good ideas. People with brains created cultures (cultural evolution) including free market economies (an evolutionary system that selects for successful technologies). AIs like AlphaStar are the next (final?) step in this process.

The thing that most helped me understand Lacan was that he was talking about second order mimesis. You are not just the average of the five people you most closely interact with but an unconscious reification of that average extended far beyond the sparse data that actually generated it. To feel this vividly, think about your model of good and bad relationships between other people, and notice what mental moves you perform to generate these thoughts.

I still have absolutely no idea what that means, sorry. What is the average of a group of people? What moves am I supposed to be performing when I think about my model of good and bad relationships? Insofar as I have such a model, it’s too complex to look at all in one go, and I don’t spend much time thinking about it at all.

It sounds like you are many steps farther down the stack than what I am talking about. I mean when you read the words ‘think about good relationships’ several things happen very rapidly composed of mental images, maybe mental talk, probably some felt sense. I mean noticing these.

Still utterly lost. What do the qualia associated with thinking about… whatever I’m supposed to be thinking about here have to do with whether I’m the average of five other people, or the reification of that average? And more to the point, what the hell does either of those latter terms mean?

This is easier with graphs. In fact a lot of philosophy would be less absurd if they had just inserted a graph.

Imagine the line graph that represents the connection between you and the people you spend time with, and their relationships with each other. Now do the same for those people. The relationships between *those* entities are second order mimesis.

That’s just some special cases of the general relationship graph, with the same imperfect knowledge.

This confuses me, because the challenge of psychoanalysis is to look under the hood at the pieces of whatever constitutes “you”. The phenomenon you’re describing is known as “introjection”–the mental internalization of important others in your life–and goes back to Freud’s idea of the super-ego (literally, “over-I”, your introjected parents stand above your sense of ego, literally your “I”, and appear to issue you directives).

The most useful aspect of introjection involves recognizing what psychologist Eric Berne called “injunctions”, where an internalized parental figure “issues” you a negative command, like “don’t jump in that puddle” or “talking to strangers is wrong”. You immediately obey, but you can’t quite say why, other than that you feel like you should. This is how you learn the rules of society, but also what creates neuroses and anxiety, so it can be useful to consciously reflect upon these injunctions (this is aligned with what Lacan describes as the goal of psychoanalysis, which is to cast conscious light upon previously dark areas of your mind, a conscious revelation of your deepest “self” to your thinking self, in which the analyst is to act as a mirror).

Lacan’s “big Other” is, as OP describes, a reification of this sense of “rules of society” into a Subject (something that has an “I”), like a God or like some leftists treat “Capitalism” (this is to say, the Other occupies a similar psychological position as a God might have, but more idiosyncratic, without associated culture-derived symbolism, and similarly you can believe or not believe in it). In a sense, the big Other can “talk to you” but it is not “like you” in that it’s not a person, but “the rules of society” or “of nature” of whatever you see as having the key to your desires. From here I’d need to dive a lot deeper into other concepts to explain more fully why/how the Other operates, but hopefully you’re starting to get the idea… I wrote an essay/blogpost a while back that uses the concept in relation to fetishism, if you’re interested.

The challenge with Lacan is that he had a large space of ideas (objet petit a, mathemes, four discourses, RSI model, the sinthome/symptom, etc), some of which are very difficult to wrap your head around. He saw many as a part of a topographical space, in which the various structures (the Subject, the Other, the symptom, etc) relate to each other and overlap and intersect. Explaining any one idea in isolation is possible, but wouldn’t really be able to do it justice. Lots of people have made attempts to translate these ideas into more comprehensible forms (I got most of my knowledge through a book, “Lacan, A Beginner’s Guide”, although I’ve read a little of his primary texts too), but it’s never easy. Another hurdle is that many of the ideas concern our understanding of the mind through our use of language itself, so learning requires becoming sensitized to a new way of looking at texts and language, always interrogating and asking “says who?” or “for whom?”

Injunctions sound simple. They are preferences people actually had. By second order mimesis I mean inferred preferences of the relationships between people. Romance, justice, etc. Things where, were they available to you to question, an inquiry would probably change your conception of what was happening in the situation.

Where do those inferences come from? It’s more than just injunctions for sure. But injunctions illustrate the basic principle of introjection, which is a primary source of “inferred preferences”.

Wild conjecture, same as psychoanalysis :p

Replying to:

https://slatestarcodex.com/2019/06/04/book-review-the-secret-of-our-success/#comment-759449

In my family the question of atheism and theism was simply unintelligible. Grandparents went to church because it was the social custom to go. The idea of them being entitled to decide if there is a god or not would have struck them as weird. It is simply not a well-formed question to decide if they had faith in god or not, or even if they had faith in the church or not. Maybe they had “faith” in that one should follow social customs. They did not care about theoretical ideas like whether there is a god or not at all.

My parents grew up without much religion but again that does not mean atheism. They did not think about that question at all. And interestingly they did not adopt politics as a substitute religion. They were liberal roughly in the sense of tolerant, but would not campaign for saving the Earth or something. They had no problems with gays but would not campaign for equal rights for them. They just did not really care about anything else but making money, having fun and so on.

They didn’t really raise me in any kinds of moral principles. It was just practical. Study, work hard, that’s how you get money. Don’t break the law, that is an overly risky way to get money. Be polite and nice, because if people like you, that can come in useful, in networking, getting good jobs. The basic economic parable that if the shopkeeper treats customers well, they will come back, if he treats them badly, they won’t. That was all.

I guess maybe because Europe is a more cynical place than the US. It seems in the US everybody is judged by their morality, be that religious or political. My parents or me never ever donated a cent to any charity or anything. They have mostly judged people by professional success i.e. money and that very basic morality that they should not lie and commit fraud and generally break their words.

Yes, I totally mean Europe is a more cynical place. Sweden not but most are. Our welfare systems and universal healthcare and all that do not come from our hearts going out to the poor. Rather it comes from the fear that we ourselves might become poor. That’s what war does to you. Germans were dirt poor at the end of WWI and also at the end of WWII. Oh and in between as well, hyperinflation. One could be poor without any personal fault. Just war. Decent middle class people wore pants made of sacks at the end of WWI. In that situation the poor is not the Other you feel charitable to. It is potentially you. It is in your selfish interest to have welfare and universal healthcare. So we can be cynical, not very moralistic and “socialist” at the same time. It makes perfect sense.

FWIW your statement about people in Europe not making moral judgements day to day completely contradicts my own experience living here. I’d guess it’s more a matter of particular localities and social groups, but without knowing your background I can’t say specifically where it differs from mine. (Obvious example, moralistic old ladies who judge the young for being promiscuous, taking drugs, etc)

I think it’s true that the US uses more explicitly moral language in its public discourse, but that seems to be more of a difference in terminology. (e.g. the same attack by one politician on another might use “evil” in the US, but “elitist” in a European country).

As for human running speeds, I have also noticed that there is a small region (somewhere in the neighborhood of 4-5mph) that is between my comfortable walking speeds and my comfortable running speeds. However, if I escape this range on either end, I can walk comfortably at basically any lower speed and run comfortably at any higher (but not beyond my maximum) speed.

I will second this.

I can walk comfortably at 4mph, but by 5mph it takes noticeably more effort to walk at that speed than to simply shift posture and adopt a running stance. 4.5 is roughly the break-even mark, where it doesn’t matter what stance I choose, it subjectively feels equally efficient. There’s also a point at the very high end of speed where there feels like there might be a slight posture discontinuity between jog/run and outright sprint, but I’m much less confident in that.

To add another datum, I find that there is a range of walking speeds I am uncomfortable going at, but I’m yet to find a running speed that isn’t easy enough.

Even at the high end?

Running as fast as you can is much less uncomfortable than walking at a speed just below a jog. It takes more energy, sure, but you fall into it more easily: it doesn’t require the motor-control-effort of maintaining an inefficient walking speed. You just tell your motor system to max out, and the neural patterns drilled into you by millions of years of evolution reduce the cognitive effort of moving to virtually zero.

If humans were physically capable of running fast enough that it becomes energetically unfavourable or physically damaging, then that would be uncomfortable, but with the way that our bodies work, it seems we can keep up the same gait as fast as we are capable without negative effects.

The theory is in fact too cute. However, there is a real reason the Bushman languages are called “older”, despite the logical problems — the click consonants that they use have never been observed as the result of a sound change. If you take that (lack of) observation at face value, it can only mean that the click consonants were given to the original humans by God, and only the Bushmen preserved them appropriately.

(Background — the sounds a language uses change all the time, as when the Latin word centum, beginning with a /k/, turned into French cent, beginning with /s/ (and, more generally, all Latin /k/ before front vowels also became French /s/). With the exception of clicks, for every sound known to have been lost to a historical change there is another known change that produced the same sound from something else. If there is no known process that could introduce clicks into a language, you’re kind of forced to conclude that, if clicks are there now, they’ve always been there.

Also, there are Bantu languages in South Africa that use click consonants. Those are reasonably felt to have gotten their clicks from exposure to the click languages rather than through a sound change.)

This doesn’t work at all; to the extent that there is a relationship between community size and linguistic complexity, the smaller communities have more complexity, not less. Linguistic complexity doesn’t need to be maintained; it is constantly growing fresh.

In biology, we’d say a feature is “more conserved” if it hasn’t changed a lot over time – the bone structure of mammal limbs (one bone attached to a shoulder, two attached to that bone, many attached to those bones – see the human Humerus>Radius&Ulna>whole mess of hand bones) is highly conserved, while walking upright certainly is not. Talking about “older” languages doesn’t really make sense, but talking about languages with highly conserved features might – it’s not that the language is older, but that (certain features of) it haven’t changed for a very long time.

First of all, the Wikipedia article:

https://en.wikipedia.org/wiki/Click_consonant

has some interesting things to say under Click genesis and click loss.

Anyway, replying to Scott’s post, I would say that, since complexity in any language subsystem (phonology, morphology etc.) can both increase and decrease, it is possible, in theory, to go from an 80 consonant language to an 8 consonant language AND VICE VERSA, within, say, 2000-3000 thousand years. Now compare that to the time depth of humans leaving Africa:

Within Africa, Homo sapiens dispersed around the time of its speciation, roughly 300,000 years ago. The “recent African origin” paradigm suggests that the anatomically modern humans outside of Africa descend from a population of Homo sapiens migrating from East Africa roughly 70,000 years ago and spreading along the southern coast of Asia and to Oceania before 50,000 years ago. Modern humans spread across Europe about 40,000 years ago.

Language is thought to have come about 200k-50k years ago.

Argh, obviously I meant 2000-3000 years, not *thousand* years.

One thing I have wondered about is how complexity in language arises. From observation, it looks like almost all changes go from complex to simpler – e.g., losing objective/subjunctive/genitive forms, verb conjugations (thou hast/shalt -> you have/shall) or replacing difficult sounds (German ‘ch’ with ‘sh’). If languages always flow downhill towards simplicity, how did we get the Latin or German grammars with all their declensions? Are they derived from even more complex languages, and thus turtles all the way down?

In genetics, there is a relationship – linear, IIRC – between (effective) population size and the number of polymorphisms supported in the population. This seems opposite to the case for languages, where small, isolated communities develop linguistic intricacies and larger communities simplify to some sort of pidgin the intellectuals and parents sneer at. The correct comparison is likely to be interoperability rather than polymorphisms, and genetic incompatibilities (a.k.a. speciation) usually or perhaps necessarily occur when populations are separated, while in a large population, variants that make you incompatible reproduction-wise are at a severe disadvantage. Genetic polymorphisms are perhaps more akin to the number of different words – it would be neat if we could show that as grammars get simpler, dictionaries grow.

So the contours of a theory of linguistic complexigenesis are that languages accrue complexity in small isolated groups, and that large communities simplify. This fits with the observations that all languages seem to drift towards simplicity, since going back in time, communities were smaller and more isolated. But is there any more solid evidence of this?

The usual theory is that a language which is learned by large numbers of adults becomes simpler (because the adults have trouble learning it). The standard examples are English, Latin, Mandarin Chinese, and Swahili.

If you compare classical Latin to classical Greek, and then compare modern Latin to modern Greek, it’s difficult not to conclude that a lot more of the explicit grammatical structure was lost in Latin than in Greek. That’s the kind of thing people are thinking of. (On the other hand, Greek lost most of its vowels, which isn’t the kind of thing people are thinking of for this theory.)

The problem here is the words “from observation”. Not all languages are equally likely to be observed by you. Easily observed languages are almost precisely the same set as languages with a lot of exposure to foreign adults.

I think there’s something to be said for the idea that large communities, even if they’re all native speakers, might hold the complexity level down. Coordinating language across large groups is hard. In the past, it wouldn’t be possible; those large communities would split into smaller communities and their languages would diverge. If you’re not going to have that happen, you need innovations to be either quickly adopted across the whole community or ruthlessly squelched before they can catch on anywhere.

This is conditioned on all else being equal, so we need a fixed mutation rate as well as a stable, homogeneous population.
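For reference, the linear relationship mentioned above is what the standard neutral model predicts: with a constant population and a fixed mutation rate, the expected number of polymorphic (segregating) sites in a sample scales with 4·N_e·μ. A minimal sketch, where the function name and the numbers plugged in are illustrative assumptions rather than real data:

```python
def expected_segregating_sites(n_e, mu, sample_size):
    """Expected polymorphic sites under the neutral infinite-sites model (diploids).

    E[S] = 4 * N_e * mu * sum_{i=1}^{n-1} 1/i
    n_e: effective population size; mu: mutations per sequence per generation;
    sample_size: number of sequences sampled (n).
    """
    harmonic = sum(1.0 / i for i in range(1, sample_size))
    return 4 * n_e * mu * harmonic

# Illustrative values only: same mutation rate and sample size, doubled N_e.
small = expected_segregating_sites(n_e=10_000, mu=1e-4, sample_size=10)
large = expected_segregating_sites(n_e=20_000, mu=1e-4, sample_size=10)
print(large / small)  # doubling N_e doubles the expected polymorphism
```

The "all else being equal" caveat is visible in the formula: the linearity in N_e only holds while the mutation rate and sample size stay fixed.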

The short but incomplete answer is that languages don’t always flow towards simplicity. Proto-Uralic had 6 noun cases, but its descendant Finnish has 15, for instance. The most common examples you’ll hear of languages getting simpler, including the ones you just gave, are actually one single giant example: the Indo-European languages. The morphology of Proto-Indo-European was about as strongly inflectional as you can get, and so its daughter languages have naturally gotten simpler, but there are plenty of non-IE languages that are becoming more inflectional over time. It’s not a universal trend.

A second, slightly better answer is to point out that inflectional complexity is not the only kind of complexity. Modern English may lack the tables of noun declensions and verb conjugations found in Old English, but it makes up for it (as analytical languages often do) with a complex system of periphrasis. Compare elaborate catenae like “is going to steal”, with a simple “stele”, for example. Likewise, Proto-Indo-European may have been more complicated than its descendants in some ways, but it also had at most 5 different vowels, and probably more like 2. Modern European languages however include some of the most complex vowel systems in the world, such as Standard Swedish with its 17 vowel qualities. The common view that inflection is the measure of complexity is due in large part to the prestige associated with learning languages like Latin, Ancient Greek and Sanskrit – which you’ll notice just happen to be PIE’s oldest attested daughters, making this the exact same example as before.

But the best and most interesting answer is this: the erosion of complex forms into simple ones is the very process that creates complex forms.

For instance, imagine you have a language with fusional declensions, like Old English. These might wear away over time, like they did in Modern English. Well now you have to bring in extra words to take over those lost meanings via periphrasis. But as time goes on those phrases will begin to erode as well, until the boundaries between words are lost and you have a new system of agglutinative inflection – long strings of suffixes, say, where once you had strings of individual words. But then these too will meld into one another, until your system only uses one suffix at a time to convey information that used to take several, but now there is a different version of that suffix for each possible combination that got merged. And hey presto, now you have a fusional inflection again!

You can get even more involved than this. I don’t think anyone would deny that the triconsonantal root systems found in the Semitic languages like Arabic and Hebrew are seriously complex. And yet their evolution can be explained largely through repeated assimilation and analogy, processes that involve removing distinctions, and are thus part of the “drift towards simplicity”. It’s a false distinction.

Japanese appears to have gone through multiple cycles of analytical constructions “melting into” the words they modify and becoming inflectional/synthetic grammar, with another cycle currently underway. Many things that are analysed as grammatical forms in the modern language (passives, causatives, transitive-intransitive pairs of verbs, different classes of adjectives) can be traced to auxiliary verbs (think English “I did eat it”) that were attached to a much more regular grammatical core in various periods of the classical language, with some of them having already become “grammar” that more auxiliary verbs could be applied to by the time of the 8th/9th century high court literature period. I’d argue we are currently observing the same process with the “continuous forms” (言っている etc.), whose abbreviated casual instances (言ってる etc.) look and feel no different from most of the other inflectional grammar around.

That being said, I was trying to think of similar patterns in other languages I’m familiar with but came up mostly empty-handed except for a few much less persuasive examples in Russian (-ся). The problem may be that few modern languages are sufficiently isolated to have any particular need for new grammar created ex nihilo when there is a continuous supply of adjacent languages to calque constructions from (cf. German adopting the ubiquitous English continuous form with am, US English eating AAVE constructions like the habitual be which may be originally from other languages). (I’m not sure what to make of the arguably different connotations of the “AAVE participle” (“hatin”) as opposed to the fully enunciated one (“hating”).)

Also, of course, descriptive grammar + education + media freezing grammatical development to a much greater degree than invention of new words within the existing scheme. Languages that lack some or all of these factors seem grammatically much more innovative; I’m under the impression that most of the verbs you will hear in a Korean conversation on the streets nowadays will be in some inflection that may not have existed 100 years ago.

Speaking from a position of ignorance, I would think phonetic complexity could be pretty distinct from linguistic complexity?

>What political ideologies do, precisely, is provide subjects with a way of seeing the world according to which such an inability can appear as testimony to how Transcendent or Great their Nation, God, Freedom, and so forth is—surely far above the ordinary or profane things of the world.

All that jargon for such a very simple thing? This is religion. To be fair, one should also add Equality, the Environment, Fairness etc. Religion being non-falsifiable is a feature, not a bug, it means it will not be falsified and hence it is a safe tool for building a community around it. Ideas about ordinary or profane things are necessarily falsifiable.

We want to solve coordination problems. Meaning, form groups within which we trust each other. The obvious thing is blood relatives, kin selection and I’d die for 2 brothers or 8 cousins and all that. Selfish genes. That is how animals work. But humans are speaking animals. You don’t have to be my son, I can just SAY you are my son, adoption existed already in the Code of Hammurabi and Rig-Veda. You are my relative now because we made a blood-brotherhood ritual or you married my sister. Being of my tribe does not necessarily mean you are descended by blood, it just means you SAY “I am a member of tribe X”, and possibly add a costly signal.
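The "2 brothers or 8 cousins" line is the usual shorthand for Hamilton's rule: a sacrifice breaks even when relatedness times benefit, summed over the relatives saved, equals the cost. A small sketch of the arithmetic, using the textbook relatedness values for full siblings (1/2) and first cousins (1/8); the function names and the one-life-each cost and benefit are assumptions for illustration:

```python
from fractions import Fraction

# Textbook coefficients of relatedness.
RELATEDNESS = {"brother": Fraction(1, 2), "cousin": Fraction(1, 8)}

def inclusive_benefit(kind, count, benefit_each=1):
    """Sum of r * B over `count` relatives of the given kind."""
    return RELATEDNESS[kind] * count * benefit_each

def breaks_even(kind, count, cost=1):
    """True when the relatedness-weighted benefit at least matches the cost."""
    return inclusive_benefit(kind, count) >= cost

for kind, count in [("brother", 1), ("brother", 2), ("cousin", 7), ("cousin", 8)]:
    print(kind, count, inclusive_benefit(kind, count), breaks_even(kind, count))
```

Two brothers or eight cousins are exactly the break-even points, which is why those particular numbers show up in the saying.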

So we coordinate around words. But it sucks when those words are falsified. So you pick something unfalsifiable. Which means far removed from ordinary experience. That is all, really.

Creating unfalsifiability is a really interesting idea.

Even recently two young people couldn’t be considered to really be dating unless their facebook status said “In a Relationship”.

(This was the case not that long ago, I’m not sure if it’s still true today since so many parents are on facebook.)

Perhaps ironically, it’s easier to slip off a wedding ring to pretend that you aren’t married than to alter your “In a Relationship” status.

“Religion” is a big word and it’s hard to disentangle which aspect exactly is referred to when invoked. We want the essential characteristics without the cruft. The goal of all the jargon is to point to something small and simple, yes, which you’ve identified as an aspect of religion (so it looks like it worked!). It might be more apt to say that all religion is ideology in some form, rather than that all ideology is religion.

As for your explanation of why ideology works, I think it’s a plausible evolutionary or historical explanation, but it doesn’t get at the phenomenological or “inside one’s head” reasons why it works. A nationalist ideologue doesn’t think “I am nationalist because the people of my nation are my replacement for blood relations,” they instead have some vague sense which is likely not manifest as language, and they act based on that. Answering the question of “how exactly does it feel?” requires building a concept space for expressing such “inside” things, and without familiarity, we might understand that space of language as unnecessary jargon.

But we know how it feels. This is why I mentioned the more leftish sounding ones like Equality or Fairness. Hardly anyone is immune to one or the other kind.

I will not disclose which kinds work on me, I would rather people not put me into political categories, but the ones that do work feel like elevation for me. Literally. As if being lighter in my body and flying up. Catharsis. Purification. And sometimes a bittersweet urge to cry.

Your use of the word “catharsis” demonstrates the value of jargon.

We can add memetic evolution too. Humans don’t just care about spreading their genes, they also care about spreading their ideas. An adoptive child will carry on the parents’ ideas as well as a biological child.

If I get an unrelated stranger to accept my/my tribe’s ideas, that is also reproductive success. It’s also an ally who will protect those relatives who now share my tribe.

I think at the end of the article you are referring to this article by Eliezer: https://www.lesswrong.com/posts/XQirei3crsLxsCQoi/surprised-by-brains

(By the way I think the term “cumulative optimization” from that article might be better than “tradition” in some circumstances.)

I also say something similar in my recent https://www.gwern.net/Backstop essay – evolution is the worst and least efficient of all optimization strategies, but because it exists at all, it can evolve better ones…

“The Sinaites only have a single small band talking to each other, they lose some linguistic complexity.”

The opposite seems to be true – small isolated groups develop more complex languages, then as the language spreads to a larger and larger group and acquires more non-native speakers and demands more clarity of communication with less cultural context it becomes more analytical and “simpler”. Compare the classical Latin spoken by a few hundred thousand native speakers in Latium in 200 BC to modern Spanish, or the Classical Arabic of a few Bedouin tribes to any of the far simpler modern urban Arabic dialects. Mandarin is an extreme example of a language being pared down to analytic simplicity, spoken by hundreds of millions of people, as is English.

This seems true to me. I commented in a previous thread how just a marriage, a family is enough to make up dozens of new words. But others will not understand it. The need for a lot of people to understand each other means they can only come up with new words when truly necessary. They rather tend to lose words, simplify concepts, and so on. Classical or Church Latin is really complex. E.g. the problem with “love your enemies” was that they had three different words for three different kinds of love and two different words for two different kinds of enemies.

Regarding human running speeds, I do long-distance running up to and including marathon distance and I haven’t noticed quantised speeds, despite using a run-tracking app while training. In fact one of the skills I developed while training was to maintain a steady pace over many kilometres, whatever that pace happened to be. If my running speeds were quantised, you would expect this skill to be pretty much impossible because I’d find myself gravitating to the nearest “natural” pace over time.

I do have quantised breathing patterns, however. If I’m going at a light jog then I’ll breathe once every eight steps (four in, four out). Slightly faster and I’ll do three steps on the in-breath and four on the out-breath, and so on all the way up to two in/two out when I’m going at my fastest aerobic pace. (Sprinting is a different ball game, and one that I’m not an expert in, but my understanding is that as an anaerobic exercise, it causes the oxygen in your body to run out faster than your breath can restore it anyway, so it doesn’t necessarily follow that a sprinter will breathe more often than once every two steps, although they may do.)
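One way to see why breathing can be quantised while pace is not: if each breath is locked to a whole number of steps, then at a given step rate only a handful of breathing rates are available. A rough sketch, where the cadence of 168 steps per minute and the intermediate patterns (3-3, 2-3) are assumed for illustration and not taken from anyone's actual data:

```python
def breaths_per_minute(steps_in, steps_out, cadence_spm):
    """Breathing rate when one full breath spans steps_in + steps_out steps."""
    return cadence_spm / (steps_in + steps_out)

# (steps on the in-breath, steps on the out-breath), slowest to fastest breathing.
PATTERNS = [(4, 4), (3, 4), (3, 3), (2, 3), (2, 2)]
CADENCE = 168  # assumed steps per minute

rates = {p: breaths_per_minute(p[0], p[1], CADENCE) for p in PATTERNS}
for (steps_in, steps_out), rate in rates.items():
    print(f"{steps_in}-{steps_out}: {rate:.1f} breaths/min")
```

At a fixed cadence the breathing rate can only jump between these few values, whereas speed can still vary continuously through stride length and step frequency.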

Interesting notes on the breathing patterns. I do Nordic Walking occasionally (I’m not in shape at all, no regular exercise, no theoretical background), and my usual pattern is 3 in, 2 out, or 2-2 when I have to breathe more frequently.

You write that in certain situations you breathe in 3-4, so fewer steps per intake, and equal otherwise, while I do more steps on the intake and equal otherwise. Is there an objective reason for preferring one over the other, or is it all personal preference?

My comment was a bit unclear. I do also do 3-2. Basically I do all steps from 4-4 to 2-2.

Whether to do more steps on the in-breath or the out-breath is something I don’t actually have a strong preference about. I think I mix it up a bit depending on things like temperature and whether there are flying insects in the air but I’ve not paid too much attention to it. So my (not very confident) answer to your question would be that there’s no objective reason for preferring one over the other.

I’m now very curious how typical this is, and whether different people have different preferences and whether, as you say, there is an objectively better way around…

I got curious too, so I googled around. The top result advocates for 3-2 on grounds of far-eastern philosophy, getting in the zone, and something something core stability.

The next link already suggests that there is no clear consensus among experts and that there is scant scientific evidence either way.

So my best guess would have to be personal preference as well. But it certainly seems to be something to keep in mind and consciously try out to actually develop a preference in.

Some people commenting that quantized speeds of running is definitely a thing for them and others posting that it’s definitely not a thing for them is really interesting.

I think quantized breathing is what makes the most sense in terms of how this plays out for humans. I use 4-4, 3-3, and 2-2 breathing as well. By running this way I have very consistent pacing. My speed is controlled by my stride length, which is an artefact of my leg length. If I have a stride length that is not comfortable for me I will soon feel it and adjust accordingly.

I don’t quite understand how this is sufficient to give you quantised running speeds. (Is that even what you’re saying?)

Presumably for a given 4-4 breathing pattern you can still speed up or slow down a little, not by altering your stride length but by altering the frequency of your steps (and hence your breaths)?

As a distance runner throughout high school and college, my experience has been the same. In particular, my college cross country team used to do mile repeats in which we would be instructed to hit a particular pace which varied five or ten seconds between groups. I never noticed any pace that people had particular difficulty hitting.

I wonder if speeds are not quantized but if there are certain “forbidden” speeds that are particularly inefficient, e.g. near the transition between “walk” and “run”.

This is exactly it. That transition is marked by your calf muscles maintaining tension between steps, which stores tensile energy that lets you run more efficiently. Walking normally relaxes your calf muscles between steps, which takes less energy. It’s possible to tense your calves at a slower speed but it’s highly uncomfortable, as well as incredibly wasteful if you don’t put a spring in your step. Trying to maintain a speed in between a walk and a run has none of the benefits and all of the drawbacks of the two methods.

Stupid question:

From my EMS training, you can’t increase oxygenation by increasing ventilation rate (I assume dropping levels down to 0 isn’t considered). If that’s the case, does increasing the ventilation rate only improve the rate of CO2 off-gassing? If so, is it merely a comfort thing or does not increasing your ventilation rate work just fine?

(Things that bother me in continuing education classes)

> you can’t increase oxygenation by increasing ventilation rate

Is this true when exercising, or only at rest?

It’s plausible that if you just hyperventilate, the bottleneck becomes circulation or something.

But if you’re jogging, your heart is pumping more, faster, and you can get more oxygen into you.

Med student, so not an authority, but as far as I’m aware:

-ventilation refers just to the physical movement of air in and out.

-oxygenation (in this context) refers to the efficiency of gas diffusing from the air to the bloodstream.

Ventilation is thus necessary for oxygenation, but more ventilation will not increase oxygenation (assuming ventilation is adequate to begin with).

The EMS training is probably telling you that if a patient’s lungs are shot, just increasing the rate of ventilation won’t get oxygen into the bloodstream any better, and you need to consider PEEP or something else. This is different to the exercise scenario, wherein the lungs are working just fine, but the physiological strain is requiring more frequent refreshing of the gas in the lungs.

On further thought, it’s probably driven by the need to offgas CO2, as exhaled oxygen concentration is relatively similar to inhaled (oxygen diffusion is terrible), and one’s sensation of breathlessness is driven by CO2 concentrations in any case.

I think it’s a bit of both here. For me, running speed is smoothly variable over quite a wide range between ‘running on the spot’ and ‘quick jog’, all using roughly the same fairly vertical gait. Then there’s a step up to ‘run’ which takes me above my aerobic threshold and lengthens my stride compared to jogging, but I’m still landing on my heels or the sides of my feet. There’s a bit of a range of running speeds but much smaller than jogging. Above that is a flat-out sprint, with maxed out stride length and running on the balls of my feet.

Concerning colleges: Anglo-American colleges are very good at securing large amounts of money for research through donations and good investments of their assets. This is why the most prestigious researchers are at colleges in the USA or England.

However, Anglo-American colleges are quite bad at giving students what they need. All my friends who are doing their maths PhD in the anglosphere keep telling me bizarre stories: that in the Linear Algebra II lecture they are assisting with, computing the determinant of a 3×3 matrix is a hard math problem, or that in the ODE lecture they assisted with, the students were unable to check whether a function is a solution to an ODE. At my mediocre German college, even the most lowly students can solve such tasks.

Those would not have been considered hard problems at my undergrad (which was a good university but not super elite), but it may depend on the university.

The difference may be in how able the least able students are, so to speak. My understanding is that in Europe (and Asia and basically everywhere but the U.S.), almost every country uses tests almost entirely to determine college admissions. Sometimes an ethnic quota system modifies the test cutoff scores or sets quotas, as in some places in Africa or Asia, although I haven’t heard of that being used in Europe. The U.S. does not have a system like that. There are a variety of bizarro factors of no relation to academic ability that can get you into a U.S. college.

I would expect the U.S. to therefore have more students in college who can’t do mathematics past a middle school to early high school level. And because U.S. colleges also don’t force people to take courses in a very specific order (or even specify most of your courses at all), some of them will go way over their heads for a bit.

At German universities, there are no barriers to admission into most majors other than having obtained “Hochschulreife” (fitness for university) when finishing school, which about 50% of all students do. The true limiting factor is that professors and universities owe their students nothing, so in a subject like math, 50% of the students fail their exams in the first semesters, and only 30% or so ever obtain a Bachelor’s degree in math.

Nicaraguan, not Ecuadorian.

Sorry if someone’s already posted this, but here is a video of a pursuit hunt narrated by David Attenborough. The first time I watched it, goosebumps.

Regarding humans having a quantized running speed: I sometimes run after my oldest son when he’s riding his bike. He often picks a pace that is too fast for me to walk after him and too slow for me to run after him. I can’t pick either a “slow run” or “fast walk” to match him, and have to tell him to either speed up or slow down. That’s not exactly “quantized”, but there’s definitely an awkward/uncomfortable speed. Once we’re up at a running speed, I haven’t noticed that there’s any quantization.

Thanks for sharing this! I hadn’t seen it yet.

Robert Pirsig’s Lila (1991) posits a metaphysics of “quality” that has this exact shape. First he carves reality into “static” and “dynamic” quality. Then he says: here is static material. A dynamic quality (i.e. change) called random chance gives us the emergence of biologicals. Static qualities are rungs on the ladder; biologicals don’t replace nonbiological materials, and in fact rely on them for their continued existence. For Pirsig, the next step up is “social” quality. You note that brains create “cultures” but it seems like one point of this series of essays is that a brain doesn’t create culture: evolution creates culture. Culture supervenes on biology. Then the next level up Pirsig calls intellectual quality. Basically, cultures create ideas. Pirsig’s contribution is this flip; people tend to think that individuals and ideas come together to form cultures, but he thinks cultures are what create individuals and ideas.

Anyway, this last paragraph put me very much in mind of Pirsig, who discusses cultural evolution via anthropology in Lila; it would not, I think, be inaccurate to describe him as treating “all of history as just evolution evolving better evolutions.”

Lila has not been especially influential, though it is a narrative sequel to one of the biggest pop-philosophy books ever published (Zen and the Art of Motorcycle Maintenance). But many of its zanier aspects occasionally crop up in other guises among the professional metaphysics-and-epistemology crowd; emergence and supervenience being two of the more controversial versions. I was surprised, but I suppose not very surprised, to see echoes show up here as well.

On the “languages with more consonants in South Africa, lose consonants and become more vowel-y the further you go away”.

I’m only judging this based on a second-hand description, but it seems to me that this confuses consonant inventory (how many different consonants there are in the phoneme inventory of the language) with consonant-to-vowel ratio (how many actual consonants the language can cram in a single syllable).

Hawaiian doesn’t have a lot of distinct consonants, but as it turns out it doesn’t have that many distinct vowels either — it has 10, basically the five vowels of Spanish in short and long variations (so it’s on par with Latin or Czech). What makes Hawaiian be perceived as a “vocalic” language is that its syllables adhere to a strict Consonant-Vowel structure; but as it turns out, most sub-Saharan African languages also adhere to that structure!

It turns out that not only can you find languages with quite large consonant inventories pretty far from South Africa, including in the Caucasus, Europe, Northern India, Southeast Asia, Australia or the Pacific Northwest, but when it comes to languages with large consonant-to-vowel ratios per word, most of the formidable examples are found outside of Africa, witness:

Georgian (Caucasus) – mts’vrtneli – trainer

Nivkh (Russian Far East) – amspvengx – seal soup

Nuxalk (Northwest Plateau) – tsktskwts – he arrived

Even Russian (vzdrognut’ to flinch) or for that matter English (abstract, explode) admit consonant collisions that are far beyond what most people in Africa or the Pacific Islands would instinctively consider pronounceable.
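The difference between the two measures can be made concrete with a rough sketch. Everything here is an assumption for illustration: it counts romanized letters rather than phonemes (so an affricate spelled "ts" counts as two consonants), treats only a, e, i, o, u as vowels, and the function names are hypothetical. It is a crude proxy, not a phonological analysis.

```python
VOWEL_LETTERS = set("aeiou")  # assumption: crude romanized vowel set


def longest_consonant_run(word: str) -> int:
    """Length of the longest run of consecutive consonant letters in a word."""
    longest = current = 0
    for ch in word.lower():
        if not ch.isalpha():
            continue  # skip apostrophes and other non-letter marks
        if ch in VOWEL_LETTERS:
            current = 0
        else:
            current += 1
            longest = max(longest, current)
    return longest


def distinct_consonant_letters(words) -> set:
    """Set of distinct consonant letters used across a list of words."""
    return {
        ch
        for word in words
        for ch in word.lower()
        if ch.isalpha() and ch not in VOWEL_LETTERS
    }


if __name__ == "__main__":
    for word in ("aloha", "abstract", "amspvengx", "mts'vrtneli", "tsktskwts"):
        print(word, longest_consonant_run(word))
```

On this crude count, "aloha" never stacks more than one consonant letter while "tsktskwts" stacks nine, and that says nothing about how many distinct consonants either language has, which is what `distinct_consonant_letters` would approximate over a larger word list.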

Perhaps because languages don’t even agree on what a consonant is. Serbo-Croatian considers “r” a “half-vowel”, leading to sentences like “Brz trk na trg s hrt!”

Latin has more than just 10 vowels. In addition to the ones you mentioned, it also has /y/ and /y:/, as well as nasal variants of the long vowels.

Well we can argue, but /y y:/ were only found in Greek loanwords and likely pronounced as /i i:/ by most speakers, with only the few well educated Patricians fluent in Greek pronouncing them distinctly (the same way that English could be argued to have /χ/ on the basis of some speakers pronouncing that sound correctly in Spanish or Yiddish loanwords, but most just substitute it for /h/ or /k/ depending on word position).

As for the nasal vowels, they were not phonemic (that is, not contrastive, it didn’t matter if you pronounced versum with a final in [ũ] or [um], it would be perceived as the same word). English too has non-phonemic nasalisation. A word like “long” is phonemically /ˈlɔŋ/, but is really pronounced more like [ˈlɔ̃ŋ], but nobody will misunderstand it as a different word if you somehow miss that nasalisation.

You’re right of course that /y/ was a rather marginal phenomenon that only occurred in Greek loan words, but then Latin had quite a few of those. And yes, uneducated people probably didn’t bother with this phoneme, but if we’re talking about classical Latin, we’re typically not interested in the way common people talked but in the language of Cicero et al.

As for nasal vowels not being distinctive, surely the question is not whether they are distinct from non-nasal vowel + [m], but whether they are distinct from the ordinary long vowel. For a minimal pair, take the accusative and ablative of any first declension noun. E.g. filiam vs filiā.

Well I’d argue it does matter? At least some varieties of French have a multiple-way contrast between:

short vowel + nothing – la /la/ the (feminine singular)

short vowel + oral consonant – lac /lak/ lake

short vowel + nasal consonant – lame /lam/ blade

long vowel + nothing – las /lɑ:/ weary (masculine)

long vowel + oral consonant – lasse /lɑ:s/ weary (feminine)

long vowel + nasal consonant – âme /ɑ:m/ soul

nasal vowel + nothing – lent /lɑ̃/ slow (masculine)

nasal vowel + oral consonant – lente /lɑ̃t/ slow (feminine)

nasal vowel + nasal consonant – ennui /ɑ̃nyi/ boredom

By comparison, the Latin contrast is really not that robust, and my inclination as someone who studied linguistics at least seriously enough to get a bachelor’s degree is to say that it was not contrastive and that nasal vowels are not part of Latin’s inventory of phonemes, and as far as I can tell this is the consensus view among Latinists.

That said, phonemic analysis of a given language is rarely a settled issue and it’s always possible to propose an alternative analysis (indeed some linguists propose that “phonemes” are not in fact encoded in the brain but are a pure construct of linguistic analysis, and that the primary blocks the brain works with are not phonemes but syllables on the one hand, and individual features (nasal, voiced, labial, etc) on the other).

Although I’ve had some training in linguistics, I’m not a linguist, least of all a Latin linguist, so I don’t know how the professionals classify nasal vowels in Latin. But if, as you say, most of the experts don’t recognise nasal vowels as distinctive phonemes, I submit, at the risk of sounding presumptuous, that the experts are wrong.

Most classicists have rather barbarian accents and very few even bother pronouncing the nasal vowels. The example I provided above is hardly marginal. It’s a whole class of examples that come up all the time. Compare that to the voiced and unvoiced dental fricatives in English, where you have to resort to fairly obscure minimal pairs such as thigh – thy and ether – either, but I’ve never heard anyone suggest that these two sounds are actually the same phoneme in English.

Well, the knockout argument for most Latinists over nasal vowels is, as I’ve mentioned, that the pairs “oral vowel + nasal consonant” and “nasal vowel” do not contrast, which is usually what you would be first looking for in order to establish that a language has distinct, phonemic nasal vowels (a contrast that can be shown to exist in French, Portuguese or Polish for instance).

That the correct pronunciation of Classical Latin requires nasal vowels is a different matter, one of accents and allophones. This is like how, in English, L is velarized when in coda position (at the end of a syllable). The L in “male” is pronounced quite distinctly from the one in “lame”, and mastering that distinction is part of getting a natural sounding English accent. However, these two sounds do not form a minimal contrast: you won’t find a pair of words that differ only by one using “clear L” and the other “dark L”.

But as I said, it kind of also depends on how reductionist you want to be. Under some analyses, English doesn’t have a distinct “sh” phoneme (since it doesn’t contrast with “s” + “y”), and standard Chinese has only two distinct vowels.

I’m genuinely baffled by this response. If we want to find out whether a nasal vowel is distinct from an oral vowel, why not just look at that question instead of the different question of whether the nasal vowel is distinct from the oral vowel + a nasal consonant?

If you want to parse spoken classical Latin, you have to be able to distinguish between the accusative “filiam” (pronounced with long nasal a and silent m) and the ablative “filiā”, which you cannot do if you don’t distinguish between nasal and oral a. Surely that is what it means for two sounds to belong to two different phonemes rather than being allophones.

Well this is the crux of the distinction between phonemes and sounds. Sounds are how it’s actually pronounced. Phonemes are units of meaningful semantic distinction.

The problem is not that “-am” contrasts with “-ā”; the problem is that it contrasts just as well whether you pronounce “-am” as [ã:] or [am]. So all that this tells us is that “-am” is not a long oral vowel.

The point is that the pronunciations [ã:] and [am] are in fact in predictable complementary distribution: you get the [ã:] pronunciation word-finally (rosam [rosã:]) and the [am] one in other positions (amare [ama:re], ambulare [ambula:re]).

Likewise, “en” is [ẽ:] before a fricative but [en] in other positions (gens [gẽ:s] but gentis [gentis]).
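The complementary distribution described above can be written as a small rewrite rule. This is a minimal sketch under stated assumptions: the function name `realize` is hypothetical, extending the two examples to all five vowels is an assumption, and the fricative set is limited to "s" and "f" purely for illustration. Input is a broad phonemic spelling; output marks nasal vowels with a combining tilde plus ":" for length.

```python
VOWELS = set("aeiou")
FRICATIVES = set("sf")   # assumption: just enough to cover the example
NASAL_MARK = "\u0303"    # combining tilde


def realize(phonemic: str) -> str:
    """Turn a phonemic spelling into a surface form with nasal allophones.

    Rule 1: vowel + m at the very end of the word -> long nasal vowel.
    Rule 2: vowel + n before a fricative          -> long nasal vowel.
    Everywhere else the vowel + nasal sequence is left as it is.
    """
    out = []
    i, n = 0, len(phonemic)
    while i < n:
        ch = phonemic[i]
        nxt = phonemic[i + 1] if i + 1 < n else ""
        after = phonemic[i + 2] if i + 2 < n else ""
        word_final_m = nxt == "m" and i + 2 == n
        n_before_fricative = nxt == "n" and after in FRICATIVES
        if ch in VOWELS and (word_final_m or n_before_fricative):
            out.append(ch + NASAL_MARK + ":")
            i += 2  # the nasal consonant is absorbed into the vowel
        else:
            out.append(ch)
            i += 1
    return "".join(out)


if __name__ == "__main__":
    for word in ("rosam", "ama:re", "ambula:re", "gens", "gentis"):
        print(word, "->", realize(word))
```

Because the environment alone decides which variant appears, the nasal vowel never has to be stored separately from "vowel + nasal consonant" in this sketch, which is the sense in which the two are allophones rather than separate phonemes.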