[Previously in sequence: Epistemic Learned Helplessness, Book Review: The Secret Of Our Success, List Of Passages I Highlighted In My Copy Of The Secret Of Our Success. Deleted a controversial section which I still think was probably correct, but which, given the number of objections, wasn’t provably correct enough to be worth including. I might write another post giving my evidence for it later, but it probably shouldn’t be dropped in here without justification.]

I.

Years ago, I wrote about symmetric vs. asymmetric weapons.

A symmetric weapon is one that works just as well for the bad guys as for the good guys. For example, violence – your morality doesn’t determine how hard you can punch; they can buy guns from the same places we can.

An asymmetric weapon is one that works better for the good guys than the bad guys. The example I gave was Reason. If everyone tries to solve their problems by figuring out what the right thing to do is, the good guys (who are right) will have an easier time proving themselves to be right than the bad guys (who are wrong). Finding and using asymmetric weapons is the only non-coincidental way to make sustained moral progress.

The parts of The Secret Of Our Success that deal with reason vs. cultural evolution raise a disturbing prospect: what if sometimes, the asymmetry is in the wrong direction? What if there are some issues where rational debate inherently leads you astray?

II.

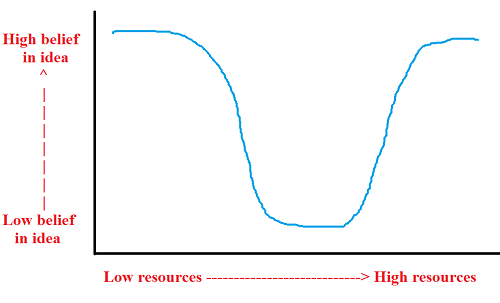

Maybe with an unlimited amount of resources, our investigations would naturally converge onto the truth. Given infinite intelligence, wisdom, impartiality, education, domain knowledge, evidence to study, experiments to perform, and time to think it over, we would figure everything out.

But just because infinite resources will produce truth doesn’t mean that truth as a function of resources has to be monotonic. Maybe there are some parts of the resources-vs-truth curve where increasing effort leads you in the wrong direction.

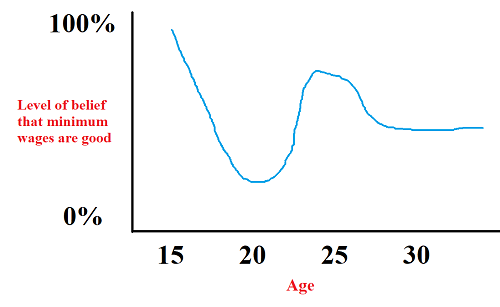

When I was fifteen, I thought minimum wages obviously helped poor people. They needed money; minimum wages gave them money, case closed.

When I was twenty, and a little wiser, I thought minimum wages were obviously bad for the poor. Econ 101 tells us minimum wages kill jobs and cause deadweight loss, with poor people most affected. Case closed.

When I was twenty-five, and wiser still, I thought minimum wages were probably good again. I’d read a couple of studies showing that maybe they didn’t cause job loss, in which case they’re back to just giving poor people more money.

When I was thirty, I was hopelessly confused. I knew there was a meta-analysis of 64 studies that showed no negative effects from minimum wages, and a systematic review of 100+ studies that showed strong negative effects from minimum wages. I knew a survey of economists found almost 80% thought minimum wages were good, but that a different survey of economists found 73% thought minimum wages were bad.

We can graph my life progress like this:

This partly reflects my own personal life course, which arguments I heard first, and how I personally process evidence.

But another part of it might just be inherent to the territory. That is, there are some arguments that are easy to understand, and other arguments that are harder to understand. If the easy arguments lean predominantly one way, and the hard arguments lean predominantly the other way, then it will be natural for any well-intentioned person studying a topic to follow a certain pattern of switching their opinion a few times before getting to the truth.

Some hard questions might be epistemic traps – problems where the more you study them, the wronger you get, up to some inflection point that might be further than anybody has ever studied them before.
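One way to make the epistemic-trap idea concrete is a toy model. This is a sketch under loudly stated assumptions, not anything from the post: each argument gets a hypothetical difficulty and a signed weight (positive pushes toward the truth, negative pushes away from it), and a reasoner with a given level of resources is assumed to understand exactly the arguments at or below that difficulty. When the easy arguments lean the wrong way and the hard ones lean the right way, the net lean gets worse before it gets better.

```python
from dataclasses import dataclass

@dataclass
class Argument:
    difficulty: float  # hypothetical: resources needed to understand the argument
    weight: float      # hypothetical: >0 pushes toward the truth, <0 pushes away from it

def net_lean(arguments, resources):
    """Sum the weights of every argument the reasoner can understand.

    Toy assumption: a reasoner with a given level of resources grasps exactly
    the arguments whose difficulty is at or below that level.
    Positive means leaning toward the truth, negative means leaning away.
    """
    return sum(a.weight for a in arguments if a.difficulty <= resources)

# Hypothetical topic: the easy arguments point the wrong way,
# the hard arguments point the right way.
topic = [
    Argument(difficulty=1, weight=-1.0),
    Argument(difficulty=2, weight=-2.0),
    Argument(difficulty=6, weight=+2.0),
    Argument(difficulty=8, weight=+3.0),
]

for r in [0, 1, 2, 4, 6, 8]:
    print(f"resources={r}: net lean {net_lean(topic, r):+.1f}")
```

With these made-up numbers the printed leans run +0.0, -1.0, -3.0, -3.0, -1.0, +2.0: a reasoner who stops anywhere between 1 and 6 units of effort ends up further from the truth than one who never looked at the question at all.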

III.

We’ll get to vast social conflicts eventually, but I want to start with boring things in everyday life.

I hate calling people on phones. I can’t really explain this. I’m okay with emailing them. I’m okay talking to them in person. But I hate calling them on phones.

When I was younger, I would go to great lengths to avoid calling people on phones. My parents would point out that this was dumb, and ask me to justify it. I couldn’t. They would tell me I was being silly. So I would call people on phones and hate it. Now I don’t live with my parents, nobody can make me do things, and so I am back to avoiding phone calls.

My parents weren’t authoritarian. They weren’t demanding I make phone calls because That Is The Way We Do Things In This House. They were doing the supposedly-correct thing, using rational argument to make me admit my aversion to phone calls was totally unjustified, and that making phone calls had many tangible benefits, and then telling me I should probably make the call, shouldn’t I? Yet somehow this ended up making my life worse.

Or: I can’t do complicated intellectual work with another person in the room. I just can’t. You can give me good reasons why I’m wrong about this: maybe the other person won’t make any noise. Maybe I can just turn the other way and focus on my computer and I won’t ever have to notice the other person’s presence at all. Argue this with me enough, and I will lose the argument, and work in the same room as you. I won’t get any good work done, and I’ll end up spending most of the time hating you and wishing you would go away.

I try to be very careful with my patients, so that I don’t make their lives worse in the same way. It’s often easy to get patients to admit they don’t have a good reason for what they’re doing; for example, autistic people usually can’t explain why they “stim”, i.e. make unusual flapping movements. These movements are distracting and probably creep out the people around them. It’s very easy to argue an autistic person into admitting their stimming is a net negative for them. Yet somehow autistic people always end up hating the psychiatrists who win this argument, and going somewhere far away from them so they can stim in peace.

Every day we do things that we can’t easily justify. If someone were to argue that we shouldn’t do the thing, they would win easily. We would respond by cutting that person out of our life, and continuing to do the thing.

I hope at least one of the examples above rang true for most readers. If not – if you don’t hate phones, or have trouble working near others, or stim – and if you’re thinking “All of those things really do seem irrational, you’re probably just wrong if you want to protect them against Reason” – here are some potential alternative intuition pumps:

1. Guys – do you have trouble asking girls out? Why? The worst that can happen is they’ll say no, right?

2. Girls – do you sometimes get upset and flustered when a guy you don’t like asks you out, even in a situation where you don’t fear any violence or coercion from the other person? Do you sometimes agree to things you don’t want because you feel pressured? Why? All you have to do is say “I’m flattered, but no thanks”.

3. Do you diet and exercise as much as you should? Why not? Obviously this will make you healthier and feel better! Why don’t you buy a gym membership right now? Are you just being lazy?

I don’t mean to say these questions are Profound Mysteries that nobody can possibly answer. I think there are good answers to all of them – for example, there are some neurological theories that offer a pretty good explanation of how stimming helps autistic people feel better. But I do want to claim that most of the people in these situations don’t know the explanations, and that it’s unreasonable to expect them to. All of these actions and concerns are “illegible” in the Seeing Like A State sense.

Illegibility is complicated and context-dependent. Fetishes are pretty illegible, but because we have a shared idea of a fetish, because most people have fetishes, and because even the people who don’t have fetishes have the weird-if-you-think-about-it habit of being sexually attracted to other human beings – people can just say “That’s my fetish” and it becomes kind of legible. We don’t question it. And there are all sorts of phrases like “I don’t like it”, or “It’s a free country” or “Because it makes me happy” that sort of relieve us of the difficult work of maintaining legibility for all of our decisions.

This system works so well that it only breaks down when very different people try to communicate across a fundamental gap. For example, since allistic people may not feel any urge to stim or do anything like stimming, its illegibility suddenly becomes a problem, and they try to argue autistic people out of it. The worst failure mode is where illegible actions by an outgroup are naturally rounded off to “they are evil and just hiding it”. I remember feeling pretty bad once after hearing a feminist explain that the only reason men stared at attractive women was to intimidate them, make them feel like their body existed for other people’s pleasure, and cement male privilege. I myself sometimes stared at attractive women, and I couldn’t verbalize a coherent reason – was I just trying to hurt and intimidate them? I think a real answer to this question would involve the way we process salience – we naturally stare at the most salient part of a scene, and an attractive person will naturally be salient to us. But this was beyond teenaged me’s ability to come up with, so I ended up feeling bad and guilty.

If you force people to legibly interpret everything they do, or else stop doing it under threat of being called lazy or evil, you make their life harder and probably just end up with them avoiding you.

IV.

Different problems come up when we talk about societies trying to reason collectively. We would like to think that the more investigation and debate our society sinks into a question, the more likely we are to get the right answer. But there are also times when we do 450 studies on something and end up more wrong than when we started.

A very boring, trivial example of this: I think we should increase salaries for Congress, Cabinet Secretaries, and other high officials. There are so few of them that it would be very cheap: quintupling every Representative, Senator, and Cabinet Secretary’s salary to $1 million/year would involve raising taxes by only $2 per person. And if it attracted even a slightly better caliber of candidate – the type who made even 1% better decisions on the trillion-dollar questions such leaders face – it would pay for itself hundreds of times over. Or if it prevented just a tiny bit of corruption – an already rich Defense Secretary deciding from his gold-plated mansion that there was no point in going for a “consulting job” with a substandard defense contractor – again, hundreds of times over. This isn’t just me being an elitist shill: even Alexandria Ocasio-Cortez agrees with me here. This is as close to a no-brainer as policies come.
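A rough back-of-envelope check of the cost claim, under assumptions that are mine rather than the post’s: 535 members of Congress plus 15 Cabinet Secretaries, a current salary of about $170,000 (the rounded figure cited in the next paragraph), a new salary of $1 million, and a US population of roughly 330 million. All of these inputs are illustrative.

```python
# Back-of-envelope cost of the raise. Every input below is an assumption.
NUM_MEMBERS_OF_CONGRESS = 535   # 435 Representatives + 100 Senators
NUM_CABINET_SECRETARIES = 15    # assumed: heads of the executive departments
CURRENT_SALARY = 170_000        # dollars/year, rounded
PROPOSED_SALARY = 1_000_000     # dollars/year
US_POPULATION = 330_000_000     # rough assumption

officials = NUM_MEMBERS_OF_CONGRESS + NUM_CABINET_SECRETARIES

# Extra annual spending: each official's raise times the number of officials.
extra = officials * (PROPOSED_SALARY - CURRENT_SALARY)

# Spread that extra spending evenly over every resident.
per_person = extra / US_POPULATION

print(f"officials: {officials}")
print(f"extra cost: ${extra:,.0f}/year")
print(f"per person: ${per_person:.2f}/year")
```

Under these assumptions the raise costs about $456.5 million a year, or roughly $1.38 per resident, which is in the same ballpark as the $2 figure; dividing by taxpayers instead of residents would push the per-person number higher.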

But I think I would be demolished if I tried to argue for this on Twitter, or on daytime TV, or anywhere else that promotes a cutthroat culture of “dunking” on people with the wrong opinions. It’s so much faster, easier, and punchier to say “poor single mothers are starving on minimum wage, and you think the most important problem is taking money away from them to make our millionaires even richer?” and just drown me out with cries of “elitist shill, elitist shill” every time I try to give the explanation above. Sure enough, the AOC article above notes that although Americans underestimate the amount Congressmen get paid (they think only $120,000, way less than the real number of $170,000), most of them believe they should be paid less, with only 17% saying they should keep getting what they already have, and only 9% agreeing they should get more.

This is a different problem than the one above – the policy isn’t illegible to the people trying to defend it, but the communication methods are low-bandwidth enough that the most legible side naturally wins. That Congressmen are even able to maintain their current salary is partly due to them being insulated from debate: the issue never really comes up, so the consensus in favor of cutting their pay doesn’t really matter.

And yeah, I know, Popular Opinion Sometimes Wrong, More At 11. But this seems like a trivial but real society-wide case of the epistemic traps above, where if you increase one resource (amount an issue is debated) without increasing other resources (intelligence and rationality of the participants, the amount of time and careful thought they are willing to put in) you get further away from truth.

V.

Are there any less trivial examples? What about turn-of-the-20th-century socialism?

I was shocked to learn how strong a pro-socialism consensus existed during this period among top intellectuals. Socialist leader Edward Pease described the landscape pretty well:

Socialism succeeds because it is common sense. The anarchy of individual production is already an anachronism. The control of the community over itself extends every day. We demand order, method, regularity, design; the accidents of sickness and misfortune, of old age and bereavement, must be prevented if possible, and if not, mitigated. Of this principle the public is already convinced: it is merely a question of working out the details. But order and forethought is wanted for industry as well as for human life. Competition is bad, and in most respects private monopoly is worse. No one now seriously defends the system of rival traders with their crowds of commercial travellers: of rival tradesmen with their innumerable deliveries in each street; and yet no one advocates the capitalist alternative, the great trust, often concealed and insidious, which monopolises oil or tobacco or diamonds, and makes huge profits for a fortunate few out of the helplessness of the unorganised consumers.

Why shouldn’t people have thought this? The period featured sweatshop-like working conditions alongside criminally rich nobility with no sign that this state of affairs could ever change under capitalism. Top economists, up until the 1950s, almost unanimously agreed that socialism would help the economy, since central planners could coordinate ways to become more efficient. The first good arguments against this proposition, those of Hayek and von Mises, were a quarter-century in the future. Communism seemed perfectly straightforward and unlikely to go wrong; the first hint that it “might not work in real life” would have to wait for the Bolshevik Revolution. Pease writes that the main pro-capitalism argument during his own time was the Malthusian position that if the poor got more money, they would keep breeding until the Earth was overwhelmed by overpopulation; even in his own time, demographers knew this wasn’t true. The imbalance in favor of pro-communist arguments over pro-capitalist ones was overwhelming.

Don’t trust me on this. Trust all the turn-of-the-20th-century intellectuals who flocked towards socialism. In the Britain of the time, the smarter you were, and the more social science and economics you knew, the more likely you were to be a socialist, with only a few exceptions.

But turn-of-the-century Britain never went communist. Why not?

One school of thought says it’s because rich people had too much power. Even though the intellectuals all supported communism, nobody wanted to start a violent revolution, because they expected the rich to win and punish them.

But another school of thought says that cultural evolution created both capitalism, and an immune system to defend capitalism. This is more complicated, and requires a lot of the previous discussion here before it makes sense. But it seems to match some of what was going on. Society didn’t look like everyone wanting to revolt but being afraid of the rich. It looked like large parts of the poor and middle class being very anti-communist for kind of illegible reasons like “king” and “country” and “God” and “tradition” or “just because”.

In retrospect, these illegible reasons were right. It’s hard to tell if they were right by coincidence, or because cultural evolution is smarter than we are, drags us into whatever decision it makes, and then creates illegible reasons to prop itself up.

Empirically, as people started devoting more intellectual resources to the problem of whether Britain should be communist or not – as very intelligent and well-educated people started thinking about the problem using the most modern ideas of science and rationality, and challenged all of their preconceived notions to see which ones would stand up to Reason and which ones wouldn’t – they got further from the truth.

(I’m assuming that you, the reader, aren’t communist. If you are, think up another example, I guess.)

There is a level of understanding that lets you realize communism is a bad idea. But you need a lot of economic theory and a lot of retrospective historical knowledge the early-20th-century British didn’t have. There’s some part in the resources-vs-truth graph, where you’re smart enough to know what communism is but not smart enough to have good arguments against it – where the more intellect you apply the further from truth it takes you.

VI.

Obviously this ends with everyone agreeing to think very hard about things, carefully notice which traditions have illegible justifications, and then only throw out the traditions that are legitimately stupid and exist for no reason. What other position could we come to? You wouldn’t say “Don’t bother being careful, nothing is ever illegible”. But you also can’t say “Okay, we will never change anything ever again”. You just give the maximally-weaselly answer of “We’ll be sure to think about it first.”

But somebody made a good point on the last comments thread. We are the heirs to a five-hundred-year-old tradition of questioning traditions and demanding rational justifications for things. Armed with this tradition, western civilization has conquered the world and landed on the moon. If there were ever any tradition that has received cultural evolution’s stamp of approval, it would be this one.

So is there anything at all we should learn from all of this? If I had to cash out “think very hard about things” more carefully, maybe it would look like this:

1. The original Chesterton’s Fence: try to understand traditions before jettisoning them.

2. If someone does something weird but can’t explain why, accept them as long as they’re not hurting anyone else (and don’t make up stupid excuses for why their actions really hurt all of us). Be less quick to jump to “actually they are doing it out of Inherent Evil” as an explanation.

3. As per the last Henrich quote here, make use of the “laboratories of democracy” idea. Try things on a small scale in limited areas before trying them at larger scale; let different polities compete and see what happens.

4. Have less intense competitive pressure in the marketplace of ideas. Kuhn touches on how heliocentric theory had less explanatory power than geocentric theory for a while, but was tolerated anyway long enough that it was eventually able to sort itself out and become better. If good ideas are sometimes at a disadvantage in defending themselves, leave unpopular opinions alone for a while to see if they eventually become more legible. I think this might look like just being kinder and more tolerant of weirdness.

5. If someone defends a tradition that seems completely wrong and repulsive to you, try to be understanding of them even if you are right and the tradition is wrong. Traditions spent a long time evolving to be as sticky as possible in the face of contrary evidence, humans spent a long time evolving to stick to traditions as much as possible in the face of contrary evidence, and this evolution was beneficial through most of history. This sort of pressure is as hard to break (and probably as genetically-loaded) as other now-obsolete evolutionary urges like the one to binge on as much calorie-dense food as possible when it’s available (related).

6. Having done all that, and working as gingerly and gradually as you can, you should still try to improve on traditions that seem obsolete or improvable.

7. Cultural evolution does not provide evidence that traditions are ethical. Like biological evolution, cultural evolution didn’t even try to create ethical systems. It tried to create systems that were good at spreading. Plausibly many cultures converged on eating meat because it was a good source of calories and nutrients. But if you think it violates animals’ rights, cultural evolution shouldn’t convince you otherwise – there’s no reason cultural evolution should price animal suffering into its calculations. (related).

Finally: some people have interpreted this series of posts as a renunciation of rationality, or an admission that rationality is bad. It isn’t. Rationality isn’t (or shouldn’t be) the demand that every opinion be legible and we throw out cultural evolution. Rationality is the art of reasoning correctly. I don’t know what the optimal balance between what-seems-right-to-us vs. tradition should be. But whatever balance we decide on, better correlating “what seems right to us” with “what is actually true” will lead to better results. If we’re currently abysmal at this task, that only adds urgency to figuring out where we keep going wrong and how we might go less wrong, both as individuals and as a community.

I feel like this series of posts was the underlying theme of your writing all along.

It really was!

Check out the first post on this blog:

Charity over absurdity!

Same. This is probably some kind of semi-common issue, I figure.

I know you’re discussing meta-levels, but the concentration camp school of healthy lifestyle isn’t healthy. It feels horrible, is unsustainable for the vast majority, and doesn’t even reliably work for health outcomes.

Yeah, but we are frickin’ fat. I do work out, don’t look very fat (big belly but no folds) and am still hovering at the edge of diabetes. I hate all the shaming and virtue-signalling about diets and all, but at the end of the day I would rather not hover at the edge of diabetes. I look at 16-year-old girls, a third of them overweight, and I notice they walk visibly differently than their classmates. Assuming human joints were “meant” for the thin kind of walking, I think they are facing a lifetime of joint issues… this isn’t just beauty standards and crap like that, it is that I look out the window and I see thin 65-year-old tourists sight-seeing, walking all day, and I am not sure I will be able to do that at 65, and I am fairly sure these young people will not be able to do it at 65. We are missing out on so much of life because of weight… I am no longer throwing myself onto hotel beds. Because I don’t want to buy them a new one.

Right. Sure, most people probably should not be spending two hours a day in the gym or whatever and eating only lean protein and fresh vegetables, but most people (in the West) would be happier if they moved significantly in that direction from where they are now. Source: am fat, 36, already have a shit knee that makes it difficult to do things I like doing, am generally much happier in periods where I eat properly and exercise, still usually don’t.

Dieting etc. is hard. You have to do all your own cooking, because the food industry peddles almost entirely unhealthy food, however much it conforms to the fad of the week. (If salt is bad, they add extra sugar; etc. ad infinitum. Because folks prefer the bad stuff – especially when they don’t consciously know it’s there. And there’s money to be made…) Gyms are wretched unpleasant places – and exercise for its own sake, rather than in daily life/as part of doing something you want done is just about the worst option. But cities are unwalkable, children are kept with adults (= taught to move at slower pace and go everywhere by car), and there isn’t even generally good public transit (which usually involves lots of walking to and from stops).

Meanwhile people generally have a built in short term bias – adaptively so, in that if you don’t survive in the short term, there is no long term to worry about. Planning and preparing for the distant future is something done by those feeling secure and optimistic. And “when I’m 80” is very distant to a 20 year old, or even a 40 year old.

You can eat very healthy without having to cook, you just have to pay a lot of money relative to eating out/and unhealthy. Domino’s will charge you $5.99 for a 210 calorie salad or a 2320 calorie pizza, and bring either to your house.

@Spookykou

Raw meat isn’t that expensive.

@HarmlessFrog

I was responding to,

Which I saw more as a claim that it is ‘harder’ to eat healthy because you can’t eat out/order food, which I think is not true. Plenty of restaurants will sell you healthy food (in the US), they just charge the same as for their unhealthy food, so you might be getting 1/10th the calories per dollar. You won’t have the exact same selection, since there is obviously more unhealthy food than healthy food out there, but cooking healthy food for yourself will similarly limit your options.

Like many activities eating healthily has a learning curve, you can’t expect to be expert on day 1. So use the approach of thinking you are trying to learn about healthy eating rather than being a healthy eater. This means trying different foods that are more healthy, learning to cook healthy food, researching healthy eating and so on. What you will find is that you will unconsciously be more able to eat healthy without that mental drain of feeling like you are being forced to do something you don’t want to do.

You can usually find convenience shops almost anywhere that sell ready-to-eat cheap healthy food.

E.g. a bowl of mixed salad + two servings of cooked or canned fish or meat with no added sugar or preservatives is a 400-500 kcal high-protein low-carb meal. Add a banana for ~110 kcals of satiating carbs. Is this difficult to find anywhere?

>Gyms are wretched unpleasant places

I love gyms. As soon as I walk in the door, I relax a little bit because I know I’m in a welcoming place where I can be successful. I’m sorry you feel differently.

Also, deadlifts feel good.

Look into lowcarb, intermittent fasting, or both. Or even into the ultralow fat, if you like sugar and vitamin pills.

To me the phone thing is not only directly relatable but an example of an aversion that can be more easily explained than most, thus is not that illegible. When speaking “in real time” to another person, one is forced to think on one’s feet, understand the nuances of what the other party is trying to communicate, and form responses on the fly. This creates challenges not present in the medium of writing messages. Then, it’s not hard to see why this is way easier to do when the other party’s body language and hand gestures are visible and the sound quality is maximized by actually being a few feet away from each other.

Yeah. It’s a medium that greatly reduces information transfer compared to face-to-face interactions, but still has the same time pressure.

I suspect that people who dislike calling are those who have more difficulty interpreting fairly subtle signals (like speech inflections). There is an expected rhythm to phone calls, where you can’t really be (far) too late. So for some people it might be similar to walking next to a person with a higher natural walking pace and having to constantly push themselves out of their comfort zone to keep up.

I think I’m better than average at interpretation, but still dislike phone calls. I think it’s worry about getting just the right response, which translates in text to editing a few times, but in speech to mumbling and interrupting myself and so on.

Also, silence on the phone is awkward in a way that silence in person is not.

And for that matter, it can be hard to know when it’s a bad idea to begin speaking because the other person is starting to speak or hasn’t finished talking — it’s much easier to pick up on this when you can see what their face is doing. (I actually have issues with getting this right even in person, but on the phone it’s much worse.)

In addition to those weaknesses, it also lacks the advantage that most other current long-distance communication methods have of leaving an easily-referenced record of the conversation afterwards.

Not only do I hate calling people on the phone, unless the person is intractable in responding to other options, it generally doesn’t even seem like a comparably useful method.

Depends on how fast you text and if you have your hands and eyes free. Phones are quite useful for organizing things requiring mutual input or confirmation in a timely manner.

Along similar lines …

I have no particular problem with phone calls, but I like being alone a good deal of the time. Back when I had a WoW character, I would explain my disinterest in joining a guild by describing myself as solitary by nature.

I think it’s related to what you describe. If others are present, some part of my attention has to be on them, even if we are not interacting. It’s more comfortable and relaxing to be by myself.

I have always fucking loved phonecalls, and I think a lot of it is that speech inflections were highly legible to me, while visual social data is something I only began to competently process five years or so ago.

I’ve never understood why people focus so heavily on gyms for exercise. If you’re a powerlifter, or you’re looking for serious aesthetics, then sure, you need machines and weights. But our hunter-gatherer ancestors didn’t need to pump iron, they just ran around and did things. What’s the point of paying money for a treadmill when you can just go for a run outside? A lot of exercises only require a reasonably flat surface; I take a 2 minute break every so often to do various kinds of push-ups.

I suspect the commercialization of exercise has a lot to do with the malaise in the West right now. Signing up for something (and paying real money) is something best to be done in the foggy future, never in the present. Going somewhere else, putting on gym attire, all of that is difficult and unpleasant, which exercise shouldn’t actually be.

I think it’s not just gyms, it’s the idea that exercise should be drab: simple repetitive movements.

This kind of attitude falls into the exact kind of legibility issues that Scott is discussing in this post. Most people don’t want to do 2 minutes of pushups every 30 minutes, even if you can argue that it’s good for them.

I agree that it isn’t particularly intuitive. However, I think there are several direct and indirect reasons why people go to gyms.

Direct:

– As you alluded to, some people want to use equipment that is impractical to have at home (not only weights, but a pool, racquetball courts, etc.)

– In many parts of the country working out outside is unpleasant much of the year (e.g. below freezing with icy sidewalks, hot and humid). Aside from the weather, running in a city can be annoying if you’re always dodging pedestrians or waiting at intersections. In that case, getting to a gym might be easier than getting to a park with a good running path.

– Childcare: The YMCA we go to will watch your kids (for free with the standard membership!) for two hours while you work out. This is a huge deal for my wife who makes use of this several days a week.

– Space considerations: Even if buying a treadmill and some weights might be more economical than a monthly gym membership, if you live in a small apartment you might not have room and getting a bigger place is much more expensive than the gym.

Indirect:

– Gyms can work as a nice Schelling point if you want to work out with other people. Your friend might not want to drive over to your place, go for a run, drive home to shower, and then drive to work. Meeting at a gym, showering there, and going to work might be more convenient.

– Some people just prefer having separate places for separate things. It can work as a self-enforcement strategy: if you go through all the effort to get yourself to the gym, you might as well work out. You could think of this as exploiting the sunk cost fallacy (and the same is true for paying for the membership, of course). If you dislike working out, walking or driving to the gym delays starting to work out (more than just running once you step out of your house), but once you’re there you’d feel silly if you just turned back around.

I used to run outside, but I got tired of the cold, the rain, the inattentive drivers, and the hard pavement. Now I walk 1/2 mile to the gym (US$10/month) and run on a treadmill in a climate-controlled environment where I am in no danger of being run over.

1) Treadmills and other equipment that lets you run in place or the equivalent so you don’t have to be outside.

2) Some people like group classes for motivation/support.

3) While bodyweight exercise is great for getting into or staying in shape, at some point you need progressive overload to get into better shape. Progressive overload = add more weight. Agree with OP though that you don’t need a gym to get into shape.

Competitive 10k (37:12) and marathoner here (2:58!). I do try to do most of my exercise outside, even in the winter, but weather and time pressures mean that I frequently have to use a treadmill to meet my weekly mileage goals. Plus, swimming is a great way to add some extra aerobic time while working different muscles from running, and it is easier on your joints. Although I try to do a lot of open water swimming in the summer, unless you have your own large pool and live in a warm climate, swimming isn’t feasible for much of the year without a gym membership.

When I am at home there is always something more fun to do than exercise. At the gym there is nothing else to do.

“Hit the gym” is a synecdoche. I did a lot of exercising at home until I worked my way up to the gym, but I knew exactly what was meant by going to the gym.

Do what works for you.

I go to gyms as it is the most time-efficient way to stay healthy. Two or three one-hour sessions a week are all I need. Plus, once you have a routine worked out, it is great for experiencing flow. If I try little bits of different types of exercise, the mental effort of planning that exercise means I am much less likely to do it, and I don’t get into flow while doing it.

Aren’t these two potentially in conflict with one another? Say, if one person’s seemingly wrong and repulsive tradition also involves shunning people who are weird but ultimately harmless?

The conflict between them is much like the conflict in telling someone “don’t let what other people say bother you” and “don’t say mean things to people”.

The proper advice varies depending on whether you are talking about sending or receiving, acting on others or being acted upon, etc. It’s a bit like the robustness principle applied to people. Similarly, there are issues with taking such compact advice too far, so to speak (although the robustness principle may be a good or bad idea for software, I think it’s a good idea for humans). Sometimes you probably should be bothered by what others are saying, and sometimes you should say mean things. But humans tend to err in the opposite direction of the advice, so it’s still usually good advice.

“Don’t say mean things to people” has a connotation of “People who say mean things are or should be low status.” “Don’t let what other people say bother you” has a connotation of “Not saying anything about the status of people who are saying mean things, and perhaps implying overly sensitive people should have low status.”

Then more or less everybody correctly infers that it is the people who say mean things who deserve low status, not the people who are sensitive, so they get angry when they hear the second.

Same story as telling women to be cautious about, you know what. They got angry, because it is perpetrators who deserve low status, not incautious victims.

Why do people take advice as an implication of low status? I don’t know. But perhaps advice generally flows from the top down? Sure, our friends give us advice, but the doctor’s advice, delivered from the top down, feels like the more central kind of advice.

Advice can be interpreted as an order directly or indirectly*.

* Where the person tells you what the norm is, but it is actually a norm that benefits them, rather than an unbiased norm.

> Why do people take an advice as an implication of low status? I don’t know.

I believe that in the modern world, where it is seen as wrong to judge people by their immutable characteristics and factors outside of their control, agency is used as shorthand for judgement. Good and bad work the same way: you can only be judged (positively or negatively) by your choices, not by your circumstances.

Giving advice on how to prevent bad things happening to you shifts judgment onto you: you chose poorly and are now paying the price. By the same token, telling people that good things happened to them due to factors beyond their control will also anger them. They’ll likely tell you angrily that they are simple everymen who just chose the right thing, which would work for everyone if only others chose to be as virtuous.

Possibly related, I believe there is a general reluctance to think of the world in terms of incentives and environments that influence human behavior. I regularly think this way myself, and explain to people ways in which I try to restructure my environment to help encourage the kind of behavior I want to have, and the consistent response is a combination of confusion and admonishment.

Clearly people who do wrong things deserve lowered status, including injudicious/incautious people and overly sensitive people, as well as harassers and abusers. The purpose of status is to reward/punish people for doing right/wrong things and thereby encourage prosocial behavior, right? If complaining about every slight makes you high status, our culture is doomed. It’s high-status people who can afford to be gracious and forbearing.

That makes sense, although I still see a bit of an inherent contradiction if one is expected to be, for instance, both accepting of gay people and accepting of homophobes.

As the Democratic Party learned back in the 60s, you can’t keep the support of both the Black community and White supremacists. Accepting one necessarily entails rejecting the other, directly or indirectly. And if you try to appeal to both of them, neither will support you.

I think this summation, while accurate in some ways, is a little misleading about the path that the civil rights/segregation split followed.

Partly because, before FDR, there wasn’t really any Black support for Democrats. FDR managed to gain Black support without losing segregationists, for reasons having nothing to do with segregation.

1948, when civil rights for Black service members were being supported, is the first crucial point. But in 1964, Johnson knew, and was right, that segregationists would withhold national support from the party over the issue.

Doesn’t contradict the other point if the bold part is taken into account.

Re homophobes: one can be relatively tolerant of gay people but also of homophobes who are not in a position to force their views on others. There has been a lot of discussion about how progressives (but also other ideologies that are ascendant at a given time) often prefer not only to get their way in terms of policy, but to stomp over the remnants of their opposition; one may prefer to discourage this.

I have trouble parsing this in a way that isn’t either trivial or weird, because I’m not sure what you mean by “accept”.

Obviously, two contradictory beliefs can’t both be right, but when I think of accepting people I’m not thinking of accepting their beliefs as correct. I doubt I’ve ever met a single person who didn’t hold some significant beliefs I thought were deeply incorrect. And vice versa, even more so: my political and personal beliefs are well outside the norm. Some subset of my beliefs will be horrifying to any major segment of Americans. Lots of people have worked with me, some of whom knew I disagreed with them. I can’t remember anyone cutting me off over religion or politics (although people sometimes get mad for a little while). The groups that would cut me off are fringe.

There are specific situations where you can’t have two people with different beliefs on an issue in the same room together cooperating on something, but I think it’s the exception. Not the rule. In any given situation, most of a person’s beliefs just don’t matter.

I think the premise violates the experiment. By homophobe, I assume you include people like Ben Shapiro who oppose gay marriage on the illegible, irrational ground of ‘God says so’. But generally, these people don’t hate gay people and think they should be entitled to civil rights; they just disagree that marriage falls under the purview of the state rather than of the church (remember, even Obama was a civil union supporter, not a gay marriage supporter, until SCOTUS got involved). So by labeling them ‘homophobic’ you’re doing that ‘assume they’re just evil’ thing.

If instead, you mean people who want to use the force of state to castrate or imprison gay people, or people who want to do non-state violence to gay people, then their weirdness is clearly harmful.

You can accept someone without appealing to them.

I’ve really been enjoying this whole sequence. I agree with what you’re saying and with the way you’re saying it, and you’re putting it all more eloquently and clearly than I could have. Thank you!

Just FYI, you should call people more. It’s very effective.

I dunno man, it seems like everyone just lets you go to voice mail and texts you back.

There are times when I’ve wished I could just call folks, but it’s only been because they have infinitesimal attention spans and zero consideration, so trying to clarify things like “Where and when are we meeting?” gets me no response or an immediate, totally not thought out, totally unhelpful three-word text. If you’re communicating with someone who spends more than a nanosecond considering whether what he’s bothering to write is going to be even remotely useful to the person he’s sending it to, texting is better.

In my experience, short-horizon logistical coordination is almost always better done over the phone. This is because it’s a synchronous communication method which can resolve all contingent questions in a single block. This is as opposed to asynchronous text communication, which is much slower (even very fast phone typers are much slower than speaking), spread out over a series of interrupts for each contingent question, and prone to petering out in the middle as someone gets distracted. In the best case, it’s SYN/SYN ACK/ACK, and in the worst case it’s Byzantine Generals.

Yeah. I hate, hate, hate people who will only text. Solving a thing that would take a 5 minute conversation where we can communicate quickly by voice and have a rapid back-and-forth instead requires interminable pecking on those ridiculous software keyboards to sort out even the most basic questions.

E-mail is a middle ground, where you can at least generally use a better keyboard, but still often requires more back and forth to sort out things that are generally quickly handled in person or with a quick phone call.

From the other side, I have a similar antipathy for people who are too quick to call.

At work, most of my team’s coordination happens over chat. I have a certain coworker. Nice guy, good at his job. But whenever he has an issue, he’ll spend maybe two lines trying to explain it, I’ll ask a couple clarifying questions, and he’ll immediately jump to “can we discuss this on a video call?”.

Then, on the call, the actual thing he wants bears no resemblance at all to his attempts to explain it over email/chat. Like, I don’t understand how he got from Issue X to Textual Description Y. I’d think it was just me, but I don’t have this problem with anyone else on the team.

I hate this. Absolutely hate it. A call is far more disruptive, leaves no log, can’t be proofread (i.e. invites misunderstandings), and must be answered synchronously. It should be a last resort, not a first.

(that said, I share your hatred for texting too — but only in comparison to email/chat. Chat gives me the option of answering from my real computer, with a real keyboard. I’d rather type with broken fingers than try to type on a phone. It couldn’t be much slower.)

It sounds like the two of you have incompatible problems, so to speak. His problem is that for some reason he can’t communicate his problems without a call (which, I notice, is a video call, which is a whole other kettle of fish). Your problem is that you hate calls. But he’s correct that he needs the call, because of his incompetence at textual communication.

That is more or less the conclusion I came to, yes. It’s double frustrating because I know that on some level, every time I accommodate him, I’m reinforcing the idea that the right way to get problems solved is “bug me for a call” instead of “work on his written communications skills”.

I’m a lot more skeptical of the “gender is bad” counterexample, for unclear reasons. In the previous post, you said

If we assume we still have some blind spots, I’d guess they’re on cultural/social issues – we can probably catch most science/nutrition/accidental poisoning issues with modern science, but the more social-sciency things get the harder it is to judge (both because it’s complicated, and because our incentives are screwy). So I’d be incredibly careful of taking cultural things – especially basic building blocks like gender – and throwing them out because hey, it was probably just for dealing with Mongol invaders and can’t possibly be useful anymore.

I’m not sure it’s just for dealing with Mongol invaders either. I would not force anyone into traditional gender roles, but I still think they are useful.

1. Traditional gender roles provide an easier to use framework for relationships. Many people will have better results using traditional roles than trying to develop their own frameworks.

2. There is evidence that children do better in ways that are still relevant now if they are raised in a traditional family. Abandoning traditional gender roles has led to a rise in one-parent households.

3. One tradition is avoiding poly relationships. Poly done wrong can be very bad. Some people can do poly right, but most people would do it wrong. I’d be more comfortable with poly on the fringes until traditions develop that make doing it right easier.

On that point about cultural things, we’ve been doing this weird experiment for the past 200 years, where people live in nuclear families and get their subsistence from an employer for whom they do contract work. It’s a major change from basically every human society before, where people lived with their extended family, and the vast majority of them provided for their own subsistence with trade around the edges. It’s produced a lot of major cultural dislocation. Getting rid of gender roles might be more or less extreme than that shift.

The medieval family, in both Eastern and Western (Christian) Europe, was largely nuclear. Don’t know about economic matters.

I agree that it’s probably a comparable shift. The shift to the current employment/nuclear family model is roughly the same as the industrial revolution (changing our model from isolated farmers to massive supply chains). That was probably a good thing overall, but it definitely had some rocky bits (including two world wars and several famines with eight-figure death tolls). So it might be good overall, but expect some serious disruption before we hit any sort of stability.

While I don’t think it’s easy to reason about social science things, I also don’t think that traditions are likely to get it right: traditions developed at a time when many of the relevant circumstances were very different than today.

About gender, IMO not strongly pressuring people into traditional roles, but also not pressuring them to abandon them, works well: as an approximation, people tend to keep those (and only those) elements of traditional roles that are either still important or match their personal preferences. I think this describes today’s society.

Actually, the HIV thing shows illegibility is even worse than you think.

You saw your friend as pointing to the taboo “don’t be homosexual.” But HIV in Africa spread heterosexually, along highway routes, through prostitutes and truckers. “Don’t be homosexual” wasn’t the right taboo to save the 15 million Africans dead of AIDS.

And conversely, the (non-anal, non-promiscuous, socially regulated) homosexuality of Athenian Greece wouldn’t have transmitted HIV. “Don’t be homosexual” wasn’t relevant to their risk, even though they were doing it.

Yet in both Africa and San Francisco, the larger idea of “obey all traditional sex taboos” would reduce the risk, even if in each case it included things that didn’t individually pay off.

This ironically just strengthens your point about “illegibly” valuable custom. You and your friend thought the custom evolved in response to the risks from homosexuality. So what would have happened if we’d given you two a time machine and a djinni, to change people’s sex behavior in the past?

If you and your friend had time-machined the 1990s to erase homosexuality, but not erased African prostitute/trucker culture, we would still have millions dead in Africa of AIDS.

But a properly conservative community that obeyed the sex taboos without caring about the “reason”, and that used their djinni-wish to time-machine a period of obedience to *all* the traditional sex taboos, would have saved the people in both San Francisco and Africa.

So it’s even worse than you thought – you can’t properly make the useful part of the tradition legible, even when you’ve already seen how it’s useful!

This is a good point. “Don’t be homosexual” is just a small part of a conservative worldview that also includes taboos like “don’t be promiscuous”, and used to include “no sex before marriage”.

Oh, it still includes “no sex before marriage” – it’s just harder to get away with saying that outright nowadays, because of how absurd it is to request that of someone. Instead, they’ll deliver a post-mortem scolding (“If you hadn’t had sex before marriage, this wouldn’t have happened.”) every time something even moderately bad comes out of premarital sex, which makes the same point but is less socially costly. You see the same thing with “don’t be homosexual”. My father is a moderate homophobe. He would never say that phrase to someone’s face. But if one of my gay friends got AIDS in some terrible situation, my dad would say something along the lines of, “If he didn’t have gay sex, he wouldn’t have gotten AIDS.”

This is part of how traditions keep their stickiness – you can reinforce an association by saying something entirely factual about whatever anecdote you’ve brought up, without actually taking the leap into generalization.

Two things you might consider:

I’m old enough in the church to know many people who credibly didn’t have sex until marriage. Remember that there’s a not insignificant number of people who go into their mid-twenties or thirties without having sex despite trying to; it’s difficult for them to make it work.

This implies a cohort (which my experience shows me exists) of people for whom it would be kind of hard to have sex at all, or frequently, and whom the social norm discourages from trying just enough that they skate through.

Imagining that everyone the no-premarital-sex affects is uber-hot and socially skilled and would be covered in genitals at all times with no effort over-simplifies. I think to be honest about this you have to think about the people for whom the rule has enough effect to delay sexual activity another year or so, and for whom this pushes sex back to marriage or commitment to their eventual marriage partner, which has nearly the same effect.

The second thing is that this still has the desired effect to some extent if it only diminishes the frequency of sex; a no-guilt teen who has sex every weekend is more at risk than my high-school friend Mark, who was considered hot and could have had sex every weekend, but only had sex occasionally because of the guilt or influence of the norm.

People in my church (I am Mormon) still talk about not having premarital sex, and they emphasized this *a lot* when I was a teenager.

Mainline Protestant, still here as well.

Evangelical, still here as well. Oddly enough, the kids tend to marry young.

Mormon kids marry young too, typically.

Unfortunately, the way sexual taboos are enforced and promulgated tends not to limit the behaviour of medium- to high-status heterosexual men.* If you had a policy of generally enforcing sexual taboos, you’d end up doing a lot of unnecessary harm to gay people, but not meaningfully limiting the behaviour of men seeing prostitutes or having multiple female sexual partners in other ways.

My guess would be this is because it’s heterosexual men in positions of power who enforce the taboos, and they have no interest in punishing their own group. So a theoretically useful norm against risky sex ends up reinforcing existing power structures and not fixing the problem.

The same taboos can be used to bring down the powerful (not always successfully – see the allegations against Trump). Enforcing taboos is a choice, and like all such things this means it can be deployed for social/political gain.

And taboos are classically enforced by grannies, not middle-aged high-status individuals, since acquiring status is often not simply a matter of following taboos but of actually manoeuvring around the edges of them. This is why taboos are strongly held in say rural Pakistan whilst high-status individuals actually live in less taboo-dominated cities.

I don’t think this is true, at least not in the strong form. There’s more hypocrisy than norm breaking. Pastors in churches will readily condemn porn or adultery, rather than try to preserve their own sexual freedom at the expense of women.

Does it? From what I’ve seen in people’s behaviour, the proper conservative worldview is – females had better be pure, but boys will spread wild oats (they just better not do it with our daughters – and if they do, we’ll force the pair to marry) and high status males can fuck any female they want, provided they keep a veneer of respectability (i.e. deny the relationship in public).

This is of course a revealed preference description. Lip service to purity is commonly part of the package for everyone. A display of contrition may be required to ‘excuse’ one’s acceptable-in-practice behaviour if it comes to public notice.

@DS

The actual traditional Christian taboo is sodomy, not homosexuality. Arguably, the shift among conservative Christians to be against homosexuality, evolved from the sodomy taboo.

There is evidence that anal sex is widespread among heterosexuals in Africa.

I’ve said this before, but I doubt it has much at all to do with VD; homoeroticism just reduces stable het pair bonding, stable het pair bonding is important to marriage, marriage produces families, and families are the foundation-stone of all societies. Though I have not studied the history of sexuality in any depth, and that’s mostly based on a few striking examples: Athens, for one, had rampant pederasty combined with teen brides who spent their lives imprisoned in the back of the house. Probably there are abundant counterexamples, though.

Our society is really weird in the way it has almost completely removed family formation from discussions of sexuality, and IMO it doesn’t make sense to discuss these historical taboos as though people at these time periods perceived anything like a sexual marketplace.

But this viewpoint needs to justify the importance of family defined as parents and children. Historical records are pretty clear that the sexual marketplace is nothing new, whilst the conception of family as a married couple is very modern: it doesn’t work as a useful definition in societies with larger multi-generation households (and family is a word for household as much as biological kin) or with high mortality of childbearing-age females.

More to the point, for men at least sex and procreation are separate (if obviously compatible) activities. Accepting responsibility for offspring has always been a choice, and in recent history (when the nuclear family ideal was evolving and dominant) social pressures were not to do so, making the consequences of extra-marital sex less significant.

If you want to rephrase your argument around parental commitment, then it would likely be more convincing. What benefits the culture is well-supported and therefore culturally literate children, not a stable heterosexual partnership, which is simply one way of achieving this, albeit seemingly the most effective for western society in recent years (our data for this will always be hindcasting, since for childhood it’s outcomes that matter).

I said nothing about the nuclear family, and in any case it doesn’t matter because the extended family is more or less a cluster of nuclear families (and it doesn’t matter if the household includes slaves or servants, they all had parents who took care of them at some point and the bulk of households in most societies will not be able to afford large numbers of either).

A kid has two parents, and it’s more helpful to have both around when rearing it, for reasons of financial and emotional support as well as physical security and role modeling. Even if you allow for polygamy and the like–which are (in practice, in developed societies) rare for a reason–same-sex pairings are still a net drain because they’re not where the kids come from; you’ve reduced the number of options on the “market.”

To clarify what I mean by that, I have in mind the idea of sex primarily as a means of pleasure pursued by free agents–the modern urban American norm, more or less. Historically, a sexual partner was a fellow-parent, and you got married because that’s how you formed a household. It was a critical life stage bypassed by few and generally with some stigma. Now, people did cheat, and always will, but we’re talking about social norms here.

Anyway, the nuclear family isn’t that rare in history. I can’t recall the exact title, but the book is something like “Marriage and the Family in the Middle Ages.” It makes clear that a couple with children was considered both desirable and the norm, with exceptions only for cases such as poverty.

>extended family is more or less a cluster of nuclear families

An extended family is very different from a cluster of nuclear families. Within an extended family, food, economy, justice, possessions, and leisure activity, could all be assigned or controlled by members of the extended family. Your clan was essential to your survival; without it, you would often die, and sometimes you wouldn’t even meet anyone from outside it for most of your life.

That’s very different from modern nuclear families, with a mere two parents and most other services provided by the state or corporations. Essentially, the nuclear family is much closer to radical individualism than it is to the extended family.

Irrelevant to the point I intended to make, which is that human beings do better when raised by two parents and families are either couples or clusters of related couples, with rare exceptions for polygamous societies–and in these, for obvious reasons, polygamy is generally not the norm. A few high-status men accumulate wives, while some others do without to compensate.

To clarify: kids come from parents. That requires heterosexual sex. People get married for one reason or another. Regular sex between couples is desirable both because it increases fertility (important in premodern societies) and because it promotes pair bonding (household is less tense when parents don’t despise each other, arguably gives woman some leverage as well). If either partner is also having sex with a same-sex partner, it is likely to have a negative effect on their relationship with the spouse. This is a Bad Thing.

However, since somebody brought it up, I looked it up, and the Oxford Dictionary of Byzantium confirms that Byzantine society was centered on nuclear families as well. So both European Christendoms, where anti-gay feeling was strong, were nuclear-oriented. Islam was more tribal in its origins, and has some animus towards homosexuals. I don’t know how deep its theological roots are, or how long they continued to have more extended families in the Middle Ages etc., but I know the stigma against homoeroticism could have been stronger. As I recall, five percent of surviving Abbasid poetry is related to pederasty. This doesn’t sound impressive until you realize that the share dedicated to praising women is also five percent, the remainder being patron-praise poems (roughly equivalent to paid advertising). Eventually the whole caliphate was hijacked by Turkish slave soldiers/catamites.

Pre-Christian Rome was extended, fine with homoeroticism. China and its Confucian satellites were more extended-oriented, and had no stigma against homosexuality that I know of. Don’t know about India. All this doesn’t add up to much, but it’s an interesting set of correlations.

Don’t know enough about ancient Israelite family structure to speculate. Anybody know anything about the size of ancient Jewish families? Googling seems to bring up a lot of jibber-jabber and untrustworthy sources (e.g. Creationists).

I’m extremely skeptical that the risks of chlamydia, gonorrhea, and herpes can explain pre-Columbian homosexuality taboos. Those diseases aren’t nearly as dangerous as syphilis or HIV – though they sometimes cause fertility-related complications in women – and unlike HIV in particular, they aren’t notably more transmissible between men and other men than they are between men and female prostitutes.

I agree, I think it’s really dubious that it’s a VD thing. I’d suspect/speculate (suspectulate?) that it’s a consequence of other taboos:

1. Being a passive/receiving sex partner is inherently feminine/submissive (these two get equated because gender roles)

2. Higher status men thus shouldn’t be receptive homosexuals, and they and their families lose status for doing so (the Romans and the Pashtuns both seem to have got stuck at this point)

3. Families strongly discourage their children from being receptive homosexuals (partly status retention, and partly something related to the Pashtun “if you won’t stop me f*cking your daughter, you won’t stop me stealing your goats” principle)

4. All homosexuality gets tarnished by association, or as abetting sin, or as hypocrisy by a more egalitarian moral framework (Christianity in the Roman case)

All of this is probably boosted by the fact that if you live in a society with hierarchical gender roles, your default assumption will be that romantic relationships are inherently hierarchical, and will impute this onto homosexuality. I suspect this is why tolerance of homosexuality has gone from everyone utterly abhorring it to everyone (secular) now struggling to come up with any convincing legible argument against it – we now officially view relationships as inherently egalitarian (whatever they’re like in practice).

It’s my understanding that anal sex in particular makes the transmission of basically every STD more likely.

Here’s an argument that African AIDS is distinctive due to their medical system using lots of tainted needles:

http://www.overcomingbias.com/2010/02/africa-hiv-perverts-or-bad-med.html

It seems that HIV is less common in the more Muslim and northerly parts of Africa, though whether that’s due to sexual taboos or fewer needles is hard to say.

Also, HIV isn’t the only thing to be worried about. Our current advances in antibiotics appear to be encouraging a certain subset of people to be highly promiscuous without using protection, on the assumption that a doctor can fix whatever ails them later, which we would expect to breed more virulent and antibiotic-resistant strains of those diseases.

For what it’s worth, I think this tends to oversimplify the history of “capitalist” vs “socialist” discourse. For one thing, one of the primary strains of socialist thought in Britain and the United States was based upon the work of Henry George (this recent article lays out good background), and George was quite outspoken about his doubts regarding the efficiency and workability of central planning. (This led George’s ideology to be adopted both by generations of socialists and by early libertarians like Albert Jay Nock.) His arguments may not have been laid out in exactly the allocation framework Hayek used, but they were certainly more sophisticated than Malthus’s.

To some extent, the George-flavored brand of socialism was put into effect, as the “New Liberal” platform ran heavily on land reform, and elevated “[their] first, and only, radical Prime Minister”.

Concessions were made with the People’s Budget of 1909 that tended to erode the radicalism of the movement, but it at least partially explains where the energy went.

And August Bebel foresaw exactly why communism wouldn’t work and what horrors it would lead to in the 1880s. It’s like the housing crash: the answer to “why didn’t anyone see this coming?” is “some people did, and anyone who observed and reasoned as well as they did could have.”

Dostoevsky does a wonderful call-out of the worst impulses of progressivism in Notes from Underground.

Of course I don’t mean August Bebel, I mean Eugen Richter. August Bebel was a convinced socialist, Eugen Richter a prescient critic of socialism. *facepalm*

The whole AIDS thing seems like a pretty bad example of a tradition turning out to have an illegible justification. It seems more like an instance of a stopped clock being right twice a day. HIV had not spread to humans at the time anti-homosexuality taboos developed. The STDs that did exist at the time lacked the freakish properties of HIV that were tailor-made to spread invisibly through sexually active communities. Cultural evolution is not that much more forward-looking than biological evolution; it seems unlikely that hundreds of years ago society preemptively evolved an anti-homosexuality taboo just in case a mutant super-STD evolved a few centuries hence.

The issue is muddied further by the gay rights movement’s massive lobbying for AIDS treatment. I’m not sure what would have happened in a world without a gay rights movement, but where HIV had evolved at about the same time. Obviously it might have spread to gay people more slowly. But I’m not sure it would have been discovered as quickly if gay activist groups hadn’t drawn so much attention to it; it might well have had more time to spread. I don’t think that Haitian immigrants, another group in the US that suffered a lot of early infection, were nearly as well organized. And I don’t know how much the gay community’s lobbying accelerated the development of antiretroviral drugs. That hypothetical world might well have seen a greater number of deaths from AIDS, which would have been discovered and treated years later than in our timeline.

“The STDs that did exist at the time lacked the freakish properties of HIV that were tailor-made to spread invisibly through sexually active communities.”

Is that true? I think the most salient factor was that the others were all curable by that point. I think syphilis spread in a pretty similar way back when it was first around. See the study speculating that about 10% of people in London in 1900 had syphilis.

Independently, I disagree with the “stopped clock” formulation. Suppose your mother says “Don’t drive drunk”, and you drive drunk and hit a tanker truck and start a giant fire that causes a bridge to collapse and kills a hundred people. Obviously your mother didn’t predict that in particular, but is it “stopped clock is right twice a day”, or “useful warnings can deal with a wide range of scales of disaster”?

As in, disproportionately through homosexual sex? If so, when was the taboo actually working?

“I think syphilis spread in a pretty similar way back when it was first around.”

Which isn’t nearly as long ago as when the homosexuality taboo first appeared in Europe. I have the impression that the homosexuality taboo intensified in Victorian England, and I can buy that syphilis might have played a role in that – although now we’re talking about a time frame of a few hundred years; I’m not sure if that’s long enough for the types of traditions we’re talking about to develop.

In the case of syphilis specifically, I think that taboos against homosexuality in Europe developed centuries before syphilis arrived there, especially if we accept the dominant theory that syphilis is a New World disease brought to Europe by Columbus. As far as we can extrapolate from scant records, pre-Columbian Native Americans didn’t have the same sort of anti-gay taboos that Europeans did. This makes me question how useful such taboos were in preventing disease. It seems strange that Europeans would develop such taboos and Native Americans wouldn’t, considering that Native Americans had far more incentive to do so since they had syphilis and pre-Columbian Europeans likely didn’t.

The point I am trying to make is that AIDS is a bad example of the dangers of questioning evolved tradition and conventional wisdom, because the evolution of a new type of lethal disease that is resistant to all existing STD treatments is not the kind of thing a cultural reformer could reasonably be expected to predict.

I think a closer, if somewhat more convoluted, analogy would be someone’s mother warning them not to get drunk when they are out because drunk driving is unsafe and they are too poor to call a cab or an Uber. They follow their mother’s warning until they get a better job and can afford an Uber when they are too drunk to drive. Then one night a flying saucer lands on the street they are being driven down, and their driver hits it, causing a giant explosion.

AIDS didn’t just differ in scale from other STDs. It also differed in predictability. The evolution of a new and horrible STD within decades of our learning how to treat all the other ones isn’t quite as fantastic as an Uber hitting a flying saucer. But the odds are still pretty fantastic. AIDS seems to me to be, if anything, the cultural-evolutionary version of a Gettier problem.

To make another analogy, imagine there’s a crackpot who protests against the surgical removal of wisdom teeth. They insist that evolution put them there for a reason, so we shouldn’t remove them. I think we’d be right to dismiss them as a crackpot. I don’t think they’d be any less a crackpot if a new disease evolved that only killed people who had their wisdom teeth removed. (To clarify, the crackpot isn’t a scientist who discovers the new disease before anyone else; they’re a hippie environmentalist who thinks wisdom teeth must be good because all “natural” things are good. Their being right in this instance is a Gettier case.)

The whole point is that reason itself may not be a great tool for changing tradition. It’s not an excuse to say “they couldn’t have predicted that using reason,” because that’s the entire point.

“As far as we can extrapolate from scant records, pre-Columbian Native Americans didn’t have the same sort of anti-gay taboos that Europeans did.”

There were many different Native American societies with differing attitudes toward homosexuality, some of which were very harsh, the Aztecs for instance. Syphilis is only one example of the pre-AIDS STDs; there were many, and the phenomenon is older than humanity itself:

“Human papillomavirus type 58 causes cervical cancer in 10–20% of cases in East Asia. It is rarely found elsewhere. An estimate of the date of evolution of the most recent common ancestor places it at 478,600 years ago (95% HPD 391,000–569,600). As this date is before the generally accepted date of the evolution of modern humans, this suggests that this virus was transmitted to humans from a now extinct hominin. As this virus is usually transmitted sexually this furthermore suggests that mating occurred in this area between modern humans and a now extinct hominin species”

https://en.wikipedia.org/w/index.php?title=Interbreeding_between_archaic_and_modern_humans&oldid=839043854#Indirect_evidence

Here in Europe AIDS was not even associated with gays. It was associated with unprotected sex, and there were huge condom campaigns aimed at all young people, regardless of sexual orientation. The most famous person with HIV we heard about was Magic Johnson, who is straight. I think the authorities simply thought straight people should be using condoms anyway to prevent unplanned pregnancies, so they used AIDS as another argument for it. There was this joke: a reporter asks some average guy, “Have you heard about HIV? Are you using condoms?” And he says, “Yes, I don’t want to catch it. I wear one all the time, and only take it off for peeing and for having sex.”

My favourite variant involves three SAS soldiers – an Englishman, an Irishman and a Scotsman – captured on a black ops mission by the forces of a nefarious dictator (at the time it was generally Saddam Hussein). Allowed to choose the manner of their own deaths, the Englishman is shot, the Scotsman hanged, and the Irishman injected with the HIV virus. When liberated by friendly forces, he’s asked why he chose such a horrible, lingering death, and explains that he had them all fooled: he was wearing a condom.

But he WAS liberated and the other two were dead?

Do you mean associated psychologically or statistically? The safe sex messaging in the states was broadly targeted as well, rarely pointing out how much riskier homosexual sex is or why.

According to my elder son’s description of his high school sex-ed class in the Philadelphia suburbs, HIV was described as if it was an ordinary venereal disease, with no suggestion of a link to homosexuality or anal intercourse. My conjecture was that that represented a left-right alliance. The left didn’t want homosexuals to be blamed for the AIDS epidemic, the right wanted to scare kids out of premarital sex.

That would have been about thirty years ago.

That was my experience as well (around the same time), to the point where I did not even realize that AIDS was more common among gay men, or had any connection to gay men at all, until my late twenties.

Partly I’m still embarrassed by my degree of naivete. Partly, it feels like evidence of systemic deceit that has left me extremely distrustful of all pro-homosexuality messaging and, by (possibly unreasonable) extension, pro-trans messaging.

This is probably unfair, and I know that most groups who manage to get any cultural power will do the same thing to achieve their goals, but it’s had a big influence on how I view all of that. “People will lie very effectively to cover up the downsides,” is hardly shocking, but it makes me pretty unlikely to believe them when they say, “Okay, but there aren’t any other downsides; trust me. Also, we should be in charge of designing your child’s sex-ed curriculum.”

To me this is a bizarre perspective. For a long time most gay art (in my community) was about HIV. It’s still a very common theme. Queer people do not in general think about “covering up the downsides” because queerness is generally seen as not a choice, and therefore not to be defended on some kind of cost-benefit analysis. If you mean the downsides of sexual activity, ie STI risk, loads of queer community organizations exist specifically to educate queers and MSM about these things. It’s quite a stretch to go from some curriculum designers feeling uncomfortable talking about gays and STIs in a sex ed class to “systemic deceit”. It must be changing, but for a long time coming to learn about the way AIDS ravaged “the gay community” was a sort of initiation rite for young gay men. Sex ed curricula are generally not great on homosex ed, the innocent explanation being that there are not that many homos, and getting more information useful to non-heteronormative experiences into curricula is an ongoing project for activists.

@Jack

It seems to me that it’s the outsiders with great sympathy for a group who will more often tell falsehoods, because it’s much easier for them to adulate the group, assuming that negative claims are just lies by those who hate the group.

For example, surveys show that white liberals are less likely to have negative beliefs about blacks than black people themselves.

@Aapje, intuitively true, but note that white liberals are a group selected to not have negative beliefs about blacks.

@Aapje Even if your claims are true (I do not mean to express skepticism nor agreement) we’d still have a problem with “groups who manage to get any cultural power will do the same thing [systemic deceit]” as an explanation of these curricula? “Them”? The implication of “all pro-homosexuality messaging”? Like, the “mainstream” could be a giant pro-fag conspiracy of deceit but please go listen to some actual fags before indicting them?

@Jack

I appreciate your initial comment, because it does point out an obvious distinction I hadn’t been making. I’m completely willing to believe there was a big difference between what LGBT individuals and communities were saying among and about themselves, and what mainstream, non-queer advocates of homosexuality preferred everyone believe for ends-justifies-the-means reasons. That’s certainly borne out by what I’ve seen and heard since then, but it wasn’t part of my mental model when I was younger. I agree that no one was asking any LGBT people what to include in our particular sex ed classes.