[Epistemic status: I am quite confident I am not making a *small* mistake here. Either I am right, or I have completely misunderstood the entire premise of the debate.]

I.

One of the more interesting responses I got to yesterday’s discussion of IQ:

Let me ask you this, though, what would lead you to believe “g” is not a real thing? What would lead you to be more in favor of “g” being a real thing (e.g. out there in the real causal model of the world). For example, I don’t think this is really evidence for “g” as a real thing.

I can invent an abstraction called “hit points” that is a function of your vital life signs you measure in a modern hospital. Then I point out that it varies in a dose dependent way with being hit with a hammer. In what sense is this evidence that hit points are real?

“Hit points” is me applying the modeling philosophy behind “g” to healthcare (something Scott knows quite a bit about). It is safe to replace “hp” by “g” in any sentence.

Many fields of medicine depend heavily upon abstractions of exactly this sort. If I were being really mean, I’d make you learn about APACHE III, but for the sake of simplicity let’s look at the Glasgow Coma Scale instead.

Every medical student knows about the Glasgow Coma Scale and it is used in every hospital in the country. It is exactly what you probably think it is – a scale measuring the degree to which someone is in a coma. You can think of it as a hit point system covering those last few hit points – the ones between -1 and -9, where you’re dying but not quite dead.

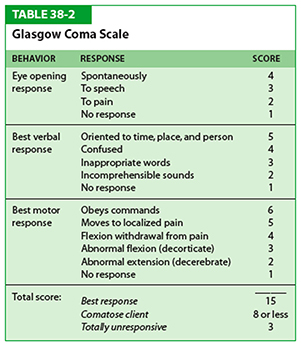

Instead of going from -1 to -9, the GCS goes from 15 to 3. It adds together three subscales, Eyes, Verbal, and Motor. Eyes for example offers 4 points to people with their eyes open, 3 points to people with closed eyes who open them when you talk to them, 2 points to people who open their eyes in response to painful stimuli, and 1 point to people who never open their eyes at all.

Add it all up and you get a pretty good idea how deep a coma someone is in.
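For the programmers in the audience, the arithmetic is about as simple as a clinical score gets. Here is a minimal sketch; the function and variable names are mine, and the Verbal and Motor ranges (1 to 5 and 1 to 6) are the standard ones rather than anything spelled out above.

```python
# Subscale ranges of the Glasgow Coma Scale: (minimum, maximum) points.
SUBSCALE_RANGES = {"eyes": (1, 4), "verbal": (1, 5), "motor": (1, 6)}


def glasgow_coma_score(eyes: int, verbal: int, motor: int) -> int:
    """Add the three subscale scores, rejecting out-of-range values."""
    scores = {"eyes": eyes, "verbal": verbal, "motor": motor}
    for name, value in scores.items():
        low, high = SUBSCALE_RANGES[name]
        if not low <= value <= high:
            raise ValueError(f"{name} must be between {low} and {high}, got {value}")
    return sum(scores.values())


print(glasgow_coma_score(eyes=4, verbal=5, motor=6))  # fully alert: 15
print(glasgow_coma_score(eyes=1, verbal=1, motor=1))  # deepest coma: 3
```

Note that nothing in the function knows why the patient scored what they did. It only adds.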

This is useful for a lot of reasons. If you give a comatose person a medication, you might want to check whether they’re getting worse or better by watching if their Glasgow Coma score goes up or down. Or you might want to know when to perform certain drastic interventions (like intubating a patient or transferring them to ICU) and you can study outcomes for different interventions in patients with different Glasgow Coma scores. A patient with a score of 14 is still pretty okay and intubating them would usually be silly; a patient with a score of 4 is very ill and probably needs intubation right away. Various people did lots of studies to discover which Glasgow Coma scores do and don’t need intubation, eventually leading to the ancient medical proverb: “Score of eight, intubate.”

(also: “Score of nine, nah he’s fine”. Given that I work in a Catholic hospital, you can guess what we rhyme “seven” with)

Although it is not an official use, a lot of people use GCS as a quick and dirty way of estimating someone’s chances. In a particular ICU, it was discovered that mortality rates ranged from about 10% at GCS 11 to about 66% at GCS 3. Other indicators like the aforementioned APACHE are better for this, but GCS will do in a pinch.

II.

So that’s what Glasgow Coma Scale is. What would it mean to say “comas do not exist” or “the Glasgow Coma Scale does not exist”?

It’s important to distinguish between a couple of different interpretations of the question.

First, are comas a real thing? I would say that there is a common-sense concept of being-in-a-coma which is valuable in predicting various things we want to predict, like whether someone is able to talk and able to walk and able to solve math problems and so on. I would say that different people differ in the degree to which they are in comas. I feel very strongly about this.

Second, is the GCS an okay proxy for our common-sense concept of being-in-a-coma? I’m not asking for some perfect Platonic identity here. I’m just saying that, for example, counting the number of letters in a person’s name, then multiplying by their birth date is a terrible proxy for whether or not that person is in a coma. Glasgow Coma Scale seems to probably be very well correlated with asking for people’s subjective opinions about whether someone is in a coma or not and if so how deep the coma is. It conveys well beyond zero information about this. I feel pretty strongly about this one too.

Third, does the GCS correctly predict the things we want it to predict? That is, does an increasing Glasgow Coma score really mean the patient is improving (as measured in things like not dying)? Does a lower Glasgow Coma score really mean the patient is more likely to need intubation? Are we sure that people don’t have exactly the same outcomes at every Glasgow Coma Score, or that it doesn’t go up and down like a roller coaster and someone with a score of 10 does better than 9 but worse than 8? As far as I know, all the research suggests that it predicts outcomes just fine.

Fourth, is the Glasgow Coma Scale measuring a single General Factor Of Comatoseness which is the sole cause of comas in the entire world? I don’t think anybody thinks that it does or expects it to.

It’s possible I don’t understand what it would mean for there to be a General Factor Of Comatoseness, so let me try to sketch two scenarios, one of which I would interpret as having such a GFOC and one of which I wouldn’t.

Scenario One: suppose that it were discovered that comas are not, in fact, caused by organ failure. Cells in failing organs secrete a chemical that activates an immune response – let’s call this comaleukin. When comaleukin gets too high, the cells that produce normal conscious behavior shut down and the patient falls into a coma. It is proven that in people genetically unable to produce comaleukin, no coma ever occurs – the patient is completely conscious until they suddenly drop dead. On the other hand, injecting comaleukin into a healthy patient causes them to fall into a coma. The more you inject, the deeper the coma is. It is discovered that there is a perfect linear relationship between amount of comaleukin in the blood and score on the Glasgow Coma Scale. In this case, comaleukin is a General Factor Of Comatoseness.

Scenario Two: you need working heart, brain, and liver to survive. If each of these three organs is working at 100% efficiency, you have a total of 300 Health Points. If you ever drop below 150 Health Points, you go into a coma. It is discovered that score on the Glasgow Coma Scale is exactly equivalent to (Number Of Health Points/10). In this case there is no General Factor Of Comatoseness; instead, there are three different factors representing the health of the heart, brain, and liver.
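Scenario Two is easy to write down as a toy program. This is only a sketch of the made-up rule in the scenario (score = Health Points / 10, coma below 150 points), not of the real 3-to-15 scale, and the two patients are hypothetical.

```python
COMA_THRESHOLD = 150  # from the scenario: below this many Health Points, coma


def health_points(heart: float, brain: float, liver: float) -> float:
    """Each organ contributes 0 to 100 points, as in the scenario."""
    return heart + brain + liver


def toy_coma_score(heart: float, brain: float, liver: float) -> float:
    """The scenario's made-up rule: score = Health Points / 10."""
    return health_points(heart, brain, liver) / 10


# Two hypothetical patients whose comas have different causes.
cardiac_patient = {"heart": 20, "brain": 60, "liver": 60}
hepatic_patient = {"heart": 60, "brain": 60, "liver": 20}

for label, organs in [("cardiac", cardiac_patient), ("hepatic", hepatic_patient)]:
    score = toy_coma_score(**organs)
    in_coma = health_points(**organs) < COMA_THRESHOLD
    print(label, score, in_coma)  # both patients: 14.0 True
```

The two patients get the same score for different reasons, which is exactly the sense in which this scenario has three factors and no single general one.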

III.

If this is what is meant by a General Factor, I conclude I really couldn’t care less if I have one. Like, it seems very clear to me that comas exist, and are important, and are accurately measured by the Glasgow Coma Scale – whether or not there is any such Factor.

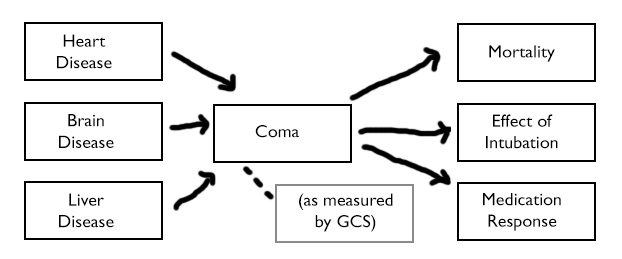

Like, here’s how I imagine the causal graph. If I change it so that “Heart Disease”, “Brain Disease” etc all point to a node marked “Comaleukin Levels”, and then that node points to “Coma”, so what?

If some experiment had previously established that people with Heart Disease had a 40% chance of ending up with a GCS less than 5, learning about comaleukin doesn’t change that result at all. If another experiment showed that right-handed people went into deeper comas than left-handed people, it doesn’t change that result either.

And if some experiment had previously established that people with GCS 10 had a 15% mortality rate, learning about comaleukin doesn’t change that either. If another experiment showed that it was extremely important to intubate people below GCS 8, well, that also doesn’t change.

What seems to be happening is that all of the causative factors – heart disease, brain disease, liver disease – are going into a giant pot called “COMA”. Then we are drawing predictions out of that giant pot.

Learning about the existence (or non-existence) of comaleukin helps describe the internal structure of that pot and gives us more predictive power, but it doesn’t tell us that the predictions we made using the giant pot were wrong. It just (potentially) gives us an option to improve those predictions.

For example, suppose comas caused by heart disease have much higher mortality rates than comas caused by any other kind of disease. In that case, instead of drawing predictions from the giant pot, we might want to make a more sophisticated prediction algorithm that took heart disease, liver disease, and brain disease as separate variables and weighted the heart disease variable more strongly when trying to determine mortality.

Or maybe the contribution of liver disease to a coma is independent of its contribution to any interesting outcome like death. Then we might want to remove liver disease from the pot.

But if we decided not to do this, and we’d previously found the giant pot to be 90% accurate in making our mortality calculations, well, the giant pot would remain 90% accurate at doing that.
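A toy calculation makes the same point. Everything here is an assumption for illustration: three disease severities from 0 to 4, a hypothetical mortality risk in which heart disease counts double, and correlation with that risk standing in for "accuracy".

```python
from itertools import product
from math import sqrt


def pearson(xs, ys):
    """Plain Pearson correlation between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# Every combination of severity 0..4 for (heart, brain, liver): a full grid,
# so the three severities are uncorrelated with each other.
patients = list(product(range(5), repeat=3))

# Hypothetical outcome: heart disease counts double toward mortality risk.
risk = [2 * heart + brain + liver for heart, brain, liver in patients]

# The giant pot lumps everything together with equal weight.
giant_pot = [heart + brain + liver for heart, brain, liver in patients]
# The more sophisticated model keeps the diseases separate and reweights them.
split_model = [2 * heart + brain + liver for heart, brain, liver in patients]

r_pot = pearson(giant_pot, risk)
r_split = pearson(split_model, risk)
print(round(r_pot, 3), round(r_split, 3))  # roughly 0.943 and 1.0
```

Opening up the pot lets the split model do better, but the pot's own number is whatever it was before anyone looked inside.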

In contrast, if we learned that all comas were caused by comaleukin and nothing else, we would know for sure that we would never be able to beat the giant pot. But that discovery would not in itself make the giant pot more valuable to us.

IV.

Compare the Glasgow Coma Scale to blood pressure.

Almost everyone would say blood pressure is “real”. For goodness sakes, it’s measured directly! Using machines! Called sphygmomanometers, which are clearly very important given the number of complicated Greek-sounding letter combinations in their name!

On the other hand, causally it’s not obvious to me that blood pressure works any different from the Glasgow Coma Scale.

We do not measure blood pressure directly. We have a good proxy for blood pressure in the form of certain sounds made by blood when cuffs are contracted to certain levels. This is only moderately accurate – everyone in health care knows that the doctor is always going to get a different blood pressure reading than the nurse and neither of them is going to get anywhere near what the automated machine detects. I will bet money that two doctors using the Glasgow Coma Scale will have better inter-rater agreement than two doctors using a blood pressure cuff.

Blood pressure, like comas, is caused by multiple different factors. The two most important are heart rate and vascular caliber. A blood pressure reading of 50/30 could mean your heart isn’t beating much. Or it could just mean your vessels are super dilated for some reason. Or it could mean a lot of other things.

Blood pressure, like comas, is used to predict outcomes of interest. There are calculators that will tell you your likelihood of getting a heart attack or a stroke at certain blood pressure levels.

And blood pressure, like comas, is kind of fuzzy. Your blood pressure in your legs and lower body may be completely different from your blood pressure in your arms (medical students reading this blog to procrastinate studying for your USMLEs: what condition does this classically imply?) In fact, your blood pressure in your right arm may be completely different from your blood pressure in your left arm. Your blood pressure may vary wildly over the course of the day, and it may vary between sitting and standing.

And, as commenters in the last post pointed out, pressure is an abstraction over millions of different blood cells doing their own thing – a multifactorial process if there ever was one.

When your doctor tells you “Your blood pressure is 146, eat less salt”, it’s hard to tell what exactly makes this number more “real” than your doctor telling you that you have a coma score of 12 – except that sphygmomanometers look a lot more impressive than a medical student sitting around with a questionnaire trying to see how hard she has to poke you before you can open your eyes.

I feel like Glasgow Coma Score and blood pressure are on pretty much the same ontological ground. Various factors go into them. Then you use them to predict various other factors. Whether they are “real” or “abstractions” seems to me to be beyond the scope of medicine and more into the realm of mysticism.

“By doing certain things certain results will follow; students are most earnestly warned against attributing objective reality or philosophic validity to any of them.”

V.

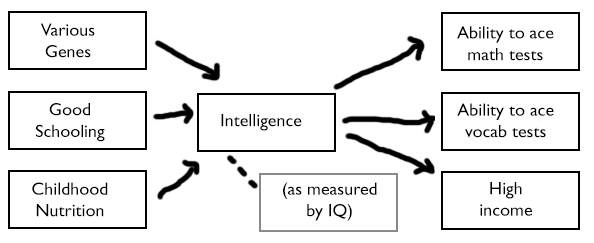

IQ seems to me to work much the same way.

I realize the most controversial part of this graph should be the word “INTELLIGENCE” in the center. But if you’re complaining about that, did you complain about “COMA” before? Comas are made of various subfactors, like inability-to-speak, inability-to-move, et cetera. These subfactors are mostly correlated, so it’s fair to lump them together into a single factor-category called “COMA” if we want. Likewise, whatever multiple intelligences there may be are correlated and so lumping them into a single factor-category called “INTELLIGENCE” seems both fun and profitable. If you didn’t object to the one, I hope you feel at least a little bad objecting to the other.

I think most researchers agree there are lots of different factors affecting intelligence. Even if we limit ourselves to genes, most geneticists agree there are thousands of different ones that can make you a little smarter or a little dumber. Into the big pot they all go.

Heart disease, brain disease, and liver disease all have a common result: you lie motionless in a hospital bed with your eyes closed and don’t talk much. The degree to which this common end result has been realized gets measured by the Glasgow Coma Scale and used to predict mortality, intubation response, et cetera.

Bad genes, bad nutrition, and bad schooling all have a common result: you’re not very good at various correlated cognitive tasks. The degree to which this common end result has been realized gets measured by IQ tests and used to predict income, likelihood of criminal offending, and how well you do on other correlated cognitive tasks that you haven’t been tested on.

Just as it would be neat to discover that comas were caused by the chemical comaleukin, an easily detected physical intermediary corresponding to the observed common result of “being in a coma” – so it would be neat to discover that intelligence was caused by (let’s say) number of neurons in the brain, an easily detected physical intermediary corresponding to the observed common result of “being good at solving these sorts of cognitive tasks”.

The important part is that a lot of different causes all get mixed together into one pot, the contents of the pot can be observed with a test, and then the results of that test can be used to make important predictions.

VI.

I worry that people who say “Intelligence doesn’t exist” or “IQ isn’t a real science” are using a motte and bailey.

Consider the actual controversial claims about intelligence that people would like to refute. For example, “Intelligence is at least 50% heritable” or “Ashkenazi Jews have higher intelligence than Gentiles”.

Neither of these things really has any relationship to whether there’s a General Factor Of Intelligence or a thousand different factors.

(one might ask: if there are a thousand different factors, isn’t it unlikely that Ashkenazi Jews are better at all of them, or enough of them to make a difference? Not necessarily. For example, in our heart-brain-liver model there are three factors of comatoseness, but someone who just drank five bottles of vodka all at once will have worse brain function, heart function, and liver function than someone who didn’t. Likewise, even if there are a thousand genes that affect intelligence, someone with high mutational load may have less functional versions of all one thousand of them)

All you need to do to show that intelligence is at least 50% heritable is do some twin studies using some measurement of intelligence which everybody agrees corresponds to our intuitive use of the term.

All you need to do to show that Ashkenazi Jews are more intelligent than Gentiles is get some Jews and some Gentiles to take IQ tests and see what the average result is.

(this may be slightly more clear if we replace the loaded term “intelligence” with the mostly-synonymous term “problem-solving ability”. If we want to know if Ashkenazi Jews have higher problem-solving ability, the obvious experiment is to ask them to solve some problems!)

The bailey for “intelligence doesn’t exist” is that statements like ‘intelligence is heritable’ or ‘Ashkenazi Jews have higher intelligence’ can’t possibly have meaning and so we don’t have to think about them.

The motte is “We don’t know if there’s a single general factor that shapes intelligence.”

But the motte is so broad that it can apply to comas or to virtually anything else, things that nobody seriously questions the existence of.

Intelligence exists enough that all statements about intelligence except those being made in a very specific neuroscience-y sort of context can still be true and interesting.

Knowing the coma score shouldn’t screen off everything you know about specific diseases. If anything, I’d guess (not actually knowing anything about medicine) it should be the other way around! Arrows would go straight from disease to mortality and response to intubation. If there were such a thing as comaleukin, then, yes, you would have arrows pointing to comaleukin, then coma, then outcomes. You’re making major assumptions about causation just by drawing the diagram that way in the first place, and that will affect both how you interpret new evidence and what decisions you make. Same thing goes for the intelligence diagram.

> Intelligence exists enough that all statements about intelligence except those being made in a very specific neuroscience-y sort of context can still be true and interesting.

Causal statements aren’t just about neuroscience. They are the ones, after all, with implications for what you should actually do.

Agreed. I’m assuming things like heart disease and brain disease are hard-to-observe hidden factors, in the same way genes might be a hard-to-observe hidden factor in the IQ example.

Causal statements might be less important than you think. If I have correlated “patient’s disease gives him a Glasgow Coma score of 5” to “the correct response in this case is always to give penicillin”, then ferreting out cause becomes of purely academic interest.

How did you figure out that penicillin worked? Why did you decide to look at penicillin in the first place?

If I want to improve life outcomes, how do I decide whether to put money into education or genetics research or deworming? If I want to make the best possible hiring decision, do I just give an IQ-like test or is it worth getting a work sample? Will one screen off the other? (It seems it’s best to use them together, although I haven’t gone deep enough into that research to figure out whether the causal conclusions are justified.)

Obviously the IQ test is easier to administer (ignoring legal questions), so there’s a tradeoff, as with all heuristics. Seems a bit like a perverse case of un-dissolving a question, though, to put so much emphasis on it when we know quite a bit about other causal pathways to outcomes and IQ doesn’t explain that much variance. But I guess that’s a question of degree on which we might reasonably disagree.

“the correct response in this case is always to give penicillin” is a causal statement about the effect of giving penicillin.

In your scenario, the Glasgow Coma score is an effect modifier.

You are correct that it may not be necessary to figure out whether effect modifiers are the true cause of anything.

However, the information you need is causal, and the only way you can truly answer it is by running a randomized controlled trial on the effect of penicillin, in which you pay particular attention to whether the effect differs for subgroups with different coma scores.

You should contrast your model of “various causes feed into intelligence, which feeds into outcomes and test results” to one that pays attention to sub factors. E.g. people have persistent sub-score splits on IQ tests, some do better on verbal subtests, others on spatial, etc. So learning IQ+subscore split tells you more and lets you make better predictions about performance on different future tasks than IQ alone.

In your coma example this would correspond to things like liver impairment having especially strong effects on response to medicine. Basically, situations where conditioning on your intermediate measure of intelligence or coma does not render data about the contributing factors irrelevant: they’re not interchangeable, and there’s a lot more work to be done and gains to be had by going beyond ‘general coma/intelligence’ to look at factors.

My sense is that in intelligence research adding some high-level factors (verbal, math, spatial) gives you noticeable improvements in task prediction, but less than the gains from just using the common g factor.

I don’t disagree with this at all.

Note that this is a contrast with the comaleukin case though. If comaleukin were a real thing, there could be interventions like “filter comaleukin from the blood” that would work equally well regardless of the contributing factors that caused the comaleukin increase in the first place.

E.g. people have persistent sub-score splits on IQ tests, some do better on verbal subtests, others on spatial, etc. So learning IQ+subscore split tells you more and lets you make better predictions about performance on different future tasks than IQ alone.

Not really true unless you’re testing a sample with a restricted range of g (e.g., college students). When there’s a wide range of g in the sample, IQ batteries have very little predictive validity independently of g.

I agree with the main argument here.

I think there is an additional question you can ask of these constructs, which is: are the “pots” we’re using the best ones for understanding and/or prediction, given the kind of data we have, and the kind of understanding and/or prediction we’re interested in? Could we do better?

If we go back to the “pseudo-speedometers” that measure “Speed Quotients” (note for people other than Scott: introduced here), we could imagine a post like this about whether “Speed Quotients” were “real,” and whether it mattered. All the points would carry over: we would conclude that speed is a real thing, that pseudo-speedometers can be used to make predictions, and these predictions clearly have something to do with speed, and that no amount of worrying over the actual way in which pseudo-speedometers obtain their output can diminish the predictive utility of this output.

But it would still be the case that pseudo-speedometers make poor predictions relative to real speedometers.

A point I’ve seen made a few times (e.g. here) in this debate is that the amount of variance actually explained by IQ in many cases (e.g. in college performance) is really very low. If IQ is the best predictor, this is because our other options are even worse. The question is whether there are steps by which we could devise much more predictive metrics by getting closer to the way nature actually does things, like people moving from pseudo-speedometers to real speedometers.

I think when people say things like “IQ doesn’t capture that there are many factors involved in intelligence,” they’re not implying we should never talk about things that aren’t in our lowest-level causal model of the world, but making the point that getting closer to the lowest-level causal model may reap us predictive gains. I would be less worried about this issue if the predictions made with IQ were so good that they couldn’t get much better, but this doesn’t seem to be the case.

I almost never see a debate between “people who want to talk about IQ” and “people who want to talk about an exciting new measure that is better than IQ”. The debates I see usually look more like “people who want to talk about IQ, including various more complex subcomponent analyses” and “people who want to avoid the entire subject”.

If there are people working on something better than IQ, of course I am on their side – but I think it tends to look a lot like orthodox IQ research and just splitting up verbal and mathematical IQ and seeing how much that helps.

Edit: Just realized I misread the post I am responding to so this is much less relevant than I thought. Leaving it up anyway.

Possibly relevant Wikipedia links:

https://en.wikipedia.org/wiki/Fluid_and_crystallized_intelligence

https://en.wikipedia.org/wiki/Three-stratum_theory

https://en.wikipedia.org/wiki/Cattell%E2%80%93Horn%E2%80%93Carroll_theory

Skimming, it looks like, of these, G_f and G_c theory came first, omitting the g factor; but then the (empirically-based) three-stratum theory refined it and added the g factor back in; and then CHC theory (also empirically based) refined the theory further and still includes the g factor. So it looks like this fits the “Things are more complicated than g but it’s certainly important and we can’t seem to get rid of it even if we can’t currently explain it” picture. But yes when people are talking about if IQ is a real thing, they do not seem to be talking about this sort of thing usually.

(In case it is not clear, I am not any sort of psychologist at all and don’t actually know anything about this topic.)

IIUC part of nostalgebraist’s point is “for cases that affect me [nostalgebraist] in my actual life, IQ is a weak/confusing/far enough measure that anecdotal common-sense data is ‘something better than IQ'”. Which may or may not be true, but he doesn’t seem to be saying “let’s avoid talking about intelligence at all”.

“not implying we should never talk about things that aren’t in our lowest-level causal model of the world, but making the point that getting closer to the lowest-level causal model may reap us predictive gains. ”

I feel like I would want to more explicitly make a distinction between predictive gains from a more fine-grained model of a cause you have an imperfect measure of, and incorporating other causes.

I wouldn’t expect even a very, very sophisticated account of intelligence to explain all of the squared deviations from the mean in income or GPA. That would leave no room for things like “not working full-time and so unable to study as much,” “picked an easy/hard major,” “had a hard-grading professor,” “wants to get high grades,” and so on. That extra information would improve prediction and interpretation of GPA/income results, but we wouldn’t necessarily want to build it into an account of intelligence, since such an account wouldn’t be very reusable in other contexts.

Likewise, you could expand the coma scale to include things like whether someone was feeding and caring for the patient, or the budget available for treatment, to better predict patient mortality and life expectancy. But I’m not sure I would take that as a serious criticism of the existing scale, which could be an input into that even more accurate predictive model.

A point I’ve seen made a few times (e.g. here) in this debate is that the amount of variance actually explained by IQ in many cases (e.g. in college performance) is really very low.

What’s your standard for “very low”? The SAT-college GPA correlation is 0.40 or so. This is without correcting for range restriction (stupid people tend to not take the SAT or attend college), different course selection, grade inflation, idiosyncratic grading practices, etc. There might not be any “real speedometers” to be discovered because you cannot predict noise. Do away with statistical artifacts, and the variance in educational achievement explained by IQ is > 50 percent. See this study.

public link
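For readers unfamiliar with the range-restriction adjustment mentioned above, here is a rough sketch of the standard correction for direct range restriction (often called Thorndike's Case II). The ratio of standard deviations used below is a made-up illustrative number, not a figure from the linked study, and the function name is mine.

```python
from math import sqrt


def correct_for_range_restriction(r_restricted: float, sd_ratio: float) -> float:
    """Estimate the correlation in the unrestricted population.

    r_restricted: correlation observed in the selected (restricted) sample.
    sd_ratio: predictor SD in the full population divided by its SD in the
              restricted sample (greater than 1 when the sample is selected).
    """
    r, u = r_restricted, sd_ratio
    return (r * u) / sqrt(1 + r * r * (u * u - 1))


# Observed SAT-GPA correlation of 0.40 from the comment above, with a
# hypothetical SD ratio of 1.5 chosen purely for illustration.
corrected = correct_for_range_restriction(0.40, 1.5)
print(round(corrected, 3))        # about 0.548
print(round(corrected ** 2, 3))   # variance explained, about 0.3
```

With no restriction (a ratio of 1) the formula returns the observed correlation unchanged; how large the correction should be in practice depends entirely on the real ratio, which this sketch does not supply.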

flowchart protip: http://www.draw.io/

I liked the flowchart.

I don’t know much about this, but I’ve heard that IQ tends to be stable throughout life. If so, how can it be true that schooling affects IQ?

It’s always been known that more years in school correlates with an increased IQ. The “IQ is fixed” crowd explained that as people with higher IQs choosing to stay in school longer; the “IQ isn’t fixed” crowd suggests that schooling is improving IQ.

It’s stable throughout adulthood. It fluctuates more in childhood and adolescence.

How does schooling affect IQ?

I think it’s rather “how does schooling affect scores on IQ tests”. What I mean is, after learning how to take tests, what the kinds of questions are, how you should answer, and having been tripped up by a few ‘trick’ questions, the older you are (and have been exposed to more standardised tests), then the better you are likely to do, simply because you know how to ‘take a test’.

It needn’t affect more than a couple of questions, but it can mean bumping scores up a few extra points. I’ve sat aptitude/IQ tests where I had no idea what Question 99 was all about. Get a few years’ experience under my belt, the next time I see Question 99 on a test, I recognise “Ah, that’s the one where, in the sequence of colours/numbers/wavy lines, THIS one is the next one” and there you go – correct answer.

Not because I got smarter in the interval and figured it out on my own, merely because I’d learned a few tricks.

The distinction you’re making is between “How does schooling affect intelligence?” and “How does schooling affect IQ?” IQ is the result of how well one scores on an IQ test; intelligence is what the IQ test purports to measure (and I’m not knocking its ability to do so by pointing this out).

If you believe this nicely-coloured selection of maps, then we in Ireland are thick as the wall and for really smart people you should go to Finland.

How that correlates with the stereotype of Finns as violent, morose, suicidal alcoholics, you tell me!

All the scores cluster very tightly around an average near 100. (And, I’m not familiar with that stereotype of Finns, so you’d have to tell me?) I suspect, though, that we are actually agreeing.

Well, I’m beginning to get the impression that being a violent, morose, suicidal alcoholic is pretty much a prerequisite to being a successful writer…

There’s a cool mathy thing you can say here about these “single pot” predictive models reflecting the existence of a matrix describing the relationship between the inputs and the outputs of a predictive model with one very large principal component (in the sense of principal component analysis). Once you’ve approximated this matrix with the matrix you get by throwing out all of the other principal components, it’s an empirical question how accurate the resulting predictions are on data you care about, and the math doesn’t require that you give the principal component a name, and the question about whether the component “exists” has been completely dissolved.

A nice example where you expect more than one principal component is in recommendation engines, e.g. when Netflix predicts whether you’ll like a show or not based on user ratings of shows. Here you might expect to get principal components that, if you stared at how they corresponded to movies hard enough, might correspond to things like “amount of comedy” or “chick-flickness” or “amount of action,” but making predictions with these kinds of models again doesn’t require that you give the principal component a name, and again the question of whether “amount of comedy,” etc. exist has been dissolved.

There’s another cool thing you can do where you measure how much of the variation is explained by that one component, and how big the next-biggest component is. So you can see in which cases the one-factor model is adequate and which cases need a more complex explanation.
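As a rough illustration of that measurement, here is a sketch that finds the largest eigenvalue of a correlation matrix by power iteration and reports the share of total variance it accounts for. Both 4-test correlation matrices are invented for the example, and a real analysis would use a linear algebra library rather than this hand-rolled loop.

```python
from math import sqrt


def top_eigenvalue(matrix, iterations=200):
    """Largest eigenvalue of a symmetric positive semi-definite matrix,
    found by power iteration from a uniform starting vector."""
    n = len(matrix)
    vector = [1.0 / sqrt(n)] * n
    eigenvalue = 0.0
    for _ in range(iterations):
        image = [sum(row[j] * vector[j] for j in range(n)) for row in matrix]
        eigenvalue = sqrt(sum(x * x for x in image))  # norm of A v, with |v| = 1
        vector = [x / eigenvalue for x in image]
    return eigenvalue


def first_component_share(correlations):
    """Fraction of total variance explained by the first principal component.
    For a correlation matrix the total variance is the number of variables."""
    return top_eigenvalue(correlations) / len(correlations)


# Invented example 1: every pair of tests correlates 0.5 (one big pot).
one_pot = [[1.0 if i == j else 0.5 for j in range(4)] for i in range(4)]

# Invented example 2: two clusters of tests, strongly correlated within a
# cluster (0.6) and only weakly across clusters (0.1).
two_clusters = [
    [1.0, 0.6, 0.1, 0.1],
    [0.6, 1.0, 0.1, 0.1],
    [0.1, 0.1, 1.0, 0.6],
    [0.1, 0.1, 0.6, 1.0],
]

print(round(first_component_share(one_pot), 3))       # 0.625
print(round(first_component_share(two_clusters), 3))  # 0.45
```

In the first matrix a single component carries most of the variance; in the second it carries less than half, which is the kind of case where a one-factor summary starts to look inadequate.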

As I understand it (from the perspective of a PhD mathematician who likes thinking about stats but doesn’t have much formal background), the point of Glymour’s original critique is that a lot of the IQ research assumes a particular causal model. Which you’re also implicitly doing here.

The Netflix problem is basically simple in concept. You have one set of variables you’re trying to predict: user ratings of movies. And you know exactly what all the causal inputs (that you can control) are–“properties of the movies you’re recommending.” So you can plug it all into a statistical analysis which asks “what aspects of movies predict high ratings for this user?” At that point you can do a principal components analysis and get out these primary causal dimensions that you may or may not try to give English names to.

But before you can run that analysis you need the model–the model that says that movies cause scores. But in a hypothetical world where user ratings changed the content of movies, this wouldn’t work. It doesn’t matter if you do the component analysis correctly, the entire edifice of statistical tools doesn’t work because you’re using tools that assume movies cause ratings and ratings don’t cause movies.

And, to be clear, if you’re Netflix that’s a perfectly reasonable model and you should keep on assuming that. You have to have some model to do anything, and that seems like quite a good one.

So let’s look at the case of IQ. In an idealized world, we have a list of outcomes we care about: life success, reported happiness, lifetime income, number of children, skill base, whatever. We know that none of these factors have any effect on each other–people with more skills don’t make more money, people with more children aren’t more or less happy, and so on.

Then we take a bunch of factors that we hypothesize to be partial causes for our “outcome” measures–years of education, genetic load, parental involvement, childhood nutrition. And factors that we assume aren’t possibly affected by our outcome measures, so people who do better on tests and have more skills are no more likely to stay in school, for instance. Then we plug it into statistical machinery, and run the Netflix algorithm. And the biggest component we call “g”.

This doesn’t work for two reasons. The first is that “life success” is hard to measure, so we measure it by proxy. The second is that my causal model above is obviously crazy and various aspects of life success all have direct effects on each other, and also on the factors that we called “causal.” So principal component analysis doesn’t make any sense–it has a crazy model built into it–and you can call the biggest component it gives you “g” but you’d get a different component if you built a different crazy causal model where the amount your parents read to you causes your genetic load. You can define “g” this way but there’s no reason to consider it special.

Now, Scott does basically get around this critique. Rather than “g” being “The thing that pops out of principal component analysis, therefore it’s special!”, “g” is just “some number we can compute that tends to correlate usefully with a bunch of stuff.” He’s not actually depending on the dumb statistical definition, so pointing out how dumb it is isn’t a big problem for him.

i wasn’t aware that there are people who genuinely don’t think there’s any merit to the idea of general intelligence (as a rough guideline, that is). i am very surprised by this, and slightly disappointed; it feels like basically any fact, no matter how basic or undeniable, can be safely ignored for the sake of a belief system.

also those mouse-arrows and slightly-uncentered-headings are getting on my nerves far more than they should.

There are tons of these people, I’m amazed that you managed to avoid them. Honestly I feel like “IQ is meaningless” is a more popular view than the reverse.

Agreed. Feels kind of taboo to talk about IQ (offline).

I on the other hand disagree. It would not feel taboo to talk about IQ, I have never encountered someone who claims IQ is meaningless (to be fair, it hardly ever comes up as a topic, but:) and I would be very surprised if in my circle of friends or colleagues someone held such a view.

So, presumably, whether this topic is taboo and which side everybody is on depends on your social surroundings.

Now it would be somewhat interesting to know who holds this topic taboo and who finds IQ a useless measure.

I would strongly suspect that the “topic taboo” crowd and “IQ useless” crowd have a huge overlap. And I would suspect them to be on the left side of the political spectrum, with the more militant political correctness enthusiasts. (I’m saying that as someone who would describe himself as a progressive)

Sounds about right for my social group.

Yeah, I got to admit I started off trying to convince people to agree with me, and now that most people do I’m questioning myself: “Wait, was there ever any actual disagreement? Maybe everyone reasonable was talking about general factors the whole time and I just misinterpreted them.”

The replies of a person and caryatis above suggest otherwise.

(Depending on how many people you exclude by putting in the qualifier “everyone reasonable”)

Everyone *here* may agree that IQ is real, but that doesn’t mean that everyone in the outside world agrees that IQ is real.

Also, “everyone reasonable was doing X” may just mean that the real world includes a lot of people who aren’t reasonable.

I think the actual argument against IQ is this:

1. Intelligence is a measure of your value as a person in a wide range of situations.

2. IQ supposedly measures intelligence.

3. IQ may not be significantly changeable.

4. Therefore, this test lets you measure the innate aptitude, and thus the value, of a person.

5. Therefore, this could be used to prove I am inherently less valuable than other people.

6. This makes me REALLY UNCOMFORTABLE.

7. Therefore, IQ is wrong.

I’m pretty sure this is the real argument against IQ, and most arguments against it are simply attempts to find arguments that fit this conclusion.

I also don’t believe in IQ as a valuable measure, but I’m pretty sure that this has nothing to do with data; I’m just falling prey to this very bias.

i’m not sure i understand the conceptual link between “aptitude” and “value” — or, well, i do, but you seem to be switching between “value” as in “usefulness” and “value” as “moral worth”. it’s obviously true that some people are less useful in the majority of situations (“intelligence”), but not obvious that this makes them significantly less morally relevant.

One might suspect that it requires mental effort for humans to consider useless (or rather, low-status) people morally valuable. Thus, while not conceptually correct, the conflation is probably psychologically relevant.

One occasionally attempted hack is to limit how steeply a person’s political power scales with “aptitude”. Remember, the word “meritocracy” was intended as a satirical pejorative.

This whole political paradox around meritocracy was less of a problem when the ideas of modern leftism were crystallizing, but it will surely get far worse in the coming decades.

What happens if we bite that bullet, and just go ahead and consider someone’s moral worth directly proportional to their IQ, with a cutoff (say, at 125) below which they are no longer considered a ‘person’ at all?

@Ialdabaoth:

Reasonably optimistic scenario: sympathetic abolitionists design and disseminate schematics for 3d-printing coil guns and EMP bombs, and make people equal through popular ownership of the means of destruction.

@Ialdabaoth so…I’m hoping that was a joke!

@creut: true. however, i don’t think this becomes any less of a problem if we pretend that intelligence doesn’t exist, so it’s kind of a moot point.

@ialda: “personhood” is only a useful concept because humans are strata above even the most intelligent of other animals, so anything below the very top apes or octopi or whatever don’t really come close. if there were a more smooth progression it’d be a lot more obvious that personhood is an artificial concept, as it’d be less useful a heuristic for moral relevance. as it is, though, there’s no point distinguishing moral worth between human beings (unless you’re into deontology *spits*), except at the very tails. as for “directly proportional to iq” iq isn’t nearly correlated well enough to g to even remotely justify determining worth through it, let alone proportionality.

Reasonably optimistic scenario: sympathetic abolitionists design and disseminate schematics for 3d-printing coil guns and EMP bombs, and make people equal through popular ownership of the means of destruction.

Optimistic? That’s optimism?

Check out what Homs looks like right now. There’s your optimism.

You know, everyone has a vote right now. How responsible are they with those?

I agree it’s a problem in any case, but I think IQ exacerbates it by giving the impression that it has been scientifically established and codified that certain people really aren’t good for anything. For example, without it, you can always resort to “well, maybe we haven’t yet found the thing that he’s good at”.

@peppermint:

1) Current technology doesn’t let numbers and morale beat Assad’s heavy weapons on the offensive. 2) More importantly, the motherfucking Gulf states are the real problem, they fuck up everything around them; I think the whole Middle East would be better off if their subjects destroyed their geopolitical power. 3) And then, of course, you have US imperialism acting in various unsavoury roles behind the scenes.

Some utilitarians, such as Peter Singer, do however believe that a person with sufficiently low intelligence is worth less than a “normal” person. I don’t recall if he would consider the relationship smooth and monotonic, or if it’s more of a step function.

well, yes, and i believe that too. but the operative word in the above statement is “significantly” — it is not obvious to me that at the scales of difference humans usually talk about, moral worth changes by any appreciable amount. a case can be made that an iq-50 person is worth some amount “less” than an iq-200 person, but i’m not sure there’s any worthwhile moral distinction between iq-100 and iq-115 people.

No, Singer does not believe in a threshold to count as a human. Indeed, most of his shtick is about including animals, not about excluding humans.

This is what I think is going on, or something close to it:

Pretty much everyone has a strong subjective and objective understanding of intelligence. We experience ease of certain intellectual tasks, difficulty with others. We observe the same in other people. It’s also blindingly obvious that smarter people are more successful in many aspects of life due to their greater intelligence.

In that context, a statement like “the Average IQ of Africans is 89 while the average IQ of East Asians is 110” translates to “Africans are inferior to East Asians.”

Now say the observation is about something which doesn’t really matter. “Africans are more resistant to malaria than East Asians.” That doesn’t translate to “East Asians are inferior to Africans” because no one around here ever gets malaria.

I think it’s less about the person themselves than just the idea that people can be ranked. If you take the idea that all people are equal as an axiom (we hold these truths to be self-evident…) then sheer logic pushes you into increasingly weird conclusions.

1) In theory, not necessarily. Especially if your morality is not so tribal and infantile (I’m talking about you again, NERDS!!!) as to uncritically accept point 1 above.

2) This is likely the implicit goal of many scientific racists, etc, who push so hard for using IQ in everything.

3) Even if they would deny this to be the case directly, they still advocate for a world where the results would be indistinguishable from judging someone’s “value” through IQ. If you’re aggressively rejected from employment, social activities, breeding… if you’re denied an equal say in collective affairs, have to endure countless humiliating, paternalistic interventions into your lifestyle, watched more closely for any potential wrongdoing… then the IQ-worshipping world would seem like hell to you, whatever its elites think of its morality.

I identify much more strongly with the ordinary <100 IQ folk, however uncouth/unenlightened/etc they might seem, than with the haughty and dangerously arrogant IQ supremacists, and so I have a… slightly different perspective on the issue.

“I identify much more strongly with the ordinary <100 IQ folk, however uncouth/unenlightened/etc they might seem, than with the haughty and dangerously arrogant IQ supremacists"

Countersignal harder lol

If I countersignal hard enough to take pleasure in the company of the former but not the latter in my everyday life, does that still need to be called signalling?

I identify much more strongly with the ordinary <100 IQ folk, however uncouth/unenlightened/etc they might seem, than with the haughty and dangerously arrogant IQ supremacists, and so I have a… slightly different perspective on the issue.

Multi, some of us who think that race and IQ is an important issue with significant public policy implications think so largely because we think that current progressive orthodoxy is harmful to people with low IQs. I am all for high IQ folks in general getting out of their bubbles and spending time with the lower classes (who, as I have remarked before, are often more race realist than the American intelligentsia). I think that we ought to build non-coercive social communities, such as churches, in which people of all races and classes can interact on friendly terms. In these ways I “identify” with < 100 IQ folk. But I am convinced that current laws and social mores hurt these members of society. For example, competent and well-meaning schoolteachers are prevented from separating lower IQ children out to teach differently in ways better suited to their needs because this leads to "disparate impact" on on average lower IQ racial groups. On the level of social mores, progressive sexual norms tend to work okay (at least in terms of more easily measurable life outcomes) for the middle and upper classes, who are generally smart enough and have enough impulse control to adopt them and yet not live self-destructive lifestyles; but these same norms have been an utter disaster for the African American community in the past 50 years.

This seems like a good defense of operational definitions and psychometrics, but I think the initial analogy is flawed because it conflates vital signs with hit points, a term that frames the issue in D&D metaphors. Hit points don’t compare well to intelligence, going steadily up through experience, whereas intelligence does so slowly at best (in newer editions). Damage to hit points is non-intuitive at higher levels, requiring clever narration to explain why it doesn’t correspond to simple physical integrity. At low levels characters can be injured and killed by weapon impacts, and at higher levels they must be grazed a lot. But this is a problem of game design.

More generally, what concepts do not fall to this argument? Are not all the objects of experience multi-factorial? Quoting Liber O was a nice touch.

You misunderstood the analogy. Forget everything you know about “hit points” from something like D&D. This “hit points” is different.

I think you misunderstood my point. I understand perfectly well that the analogy was using “hit points” outside of a game context, but the term itself carries connotations that imply such a context. My interest in game design and beer led me to go on about it, but that wasn’t really what I was getting at. rich, below, comes close to my point that the measurements work in different ways – vital signs (HP) and the GCS measure wounds linearly, while IQ is normalized (as is INT in D&D). But those differences don’t invalidate the concepts as models, which, as Imm notes, are always simplifications.

My real point was that I agree with Scott and didn’t know people were still debating the reality of IQ due to its being a construct. All things are constructs in a similar manner, perhaps not with questionnaires or other measuring devices, but it is generally true that for any concept at all we can draw a diagram with it in the center and a bunch of inputs and outputs. This applies not just to comas, blood pressure, and IQ, but to things like “Derek”, “Scott”, “coffee cups”, “weather”, “politics”, “trees”, etc. Understanding that something results from many factors doesn’t suggest that it’s unreal in any sense. There are no un-fuzzy things. So while it’s reasonable to debate making a better IQ test with stronger correlations to outcomes, dismissing the idea as a whole simply because it’s constructed makes no sense.

“I will bet money that two doctors using the Glasgow Coma Scale will have better inter-rater agreement than two doctors using a blood pressure cuff.”

That’s not a strong point. I could devise a test that two doctors could get better inter-rater agreement than with a blood pressure cuff. E.g. “does the patient still have all their fingers?”

I think that’s a pretty strong argument that finger count is real.

When you have a scale and a checklist, you can put several unrelated things with strong inter-rater agreement together, and get something with stronger inter-rater agreement than blood pressure, but that is still less meaningful than blood pressure.

E.g. the JJ scale. One point for each question you answer “yes” to. Possible scores: 0, 1, 2, 3.

Does the patient have blue hair?

Does the patient have all their fingers?

Does the patient’s name begin with a letter in the first half of the alphabet?

Better inter-rater agreement but less useful than blood-pressure. Inter-rater agreement is not a strong indicator of usefulness. Actually I think this is Scott’s point.
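A minimal sketch of that contrast, with invented data: the checklist answers and blood pressure readings below are hypothetical, the two raters are assumed to read the checklist identically, and `agreement_rate` is a deliberately crude stand-in for a proper agreement statistic.

```python
def jj_score(blue_hair, all_fingers, name_in_first_half):
    """One point per 'yes' on the three checklist questions."""
    return int(blue_hair) + int(all_fingers) + int(name_in_first_half)

def agreement_rate(ratings_a, ratings_b, tolerance=0):
    """Fraction of patients on whom two raters agree within `tolerance`."""
    matches = sum(1 for a, b in zip(ratings_a, ratings_b) if abs(a - b) <= tolerance)
    return matches / len(ratings_a)

# Hypothetical patients: (blue_hair, all_fingers, name_in_first_half).
checklists = [
    (False, True, True),
    (False, True, False),
    (True, True, True),
    (False, False, True),
]
jj_a = [jj_score(*answers) for answers in checklists]
jj_b = list(jj_a)  # assumption: both raters tick the same boxes

# Hypothetical systolic readings (mmHg) taken by two raters on the same patients.
bp_a = [118, 131, 142, 125]
bp_b = [124, 130, 150, 121]

print("JJ agreement:", agreement_rate(jj_a, jj_b))
print("BP agreement within 2 mmHg:", agreement_rate(bp_a, bp_b, tolerance=2))
```

The JJ scale wins on agreement here, yet nothing in the code ties it to any outcome a clinician cares about; agreement and usefulness have to be checked separately.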

Usefulness is independent, but the point there is that the JJ scale is a real, physical quantity, not something entirely mythical like aura hue, and not just a blank slate into which the assessor projects their biases. I took Scott to mean simply that even though the Glasgow coma scale looks “woolly” and subjective because it’s a questionnaire and blood pressure looks “scientific” because there’s a machine for it, the former is actually more objective than the latter.

the doctor is always going to get a different blood pressure reading than the nurse

Go with the nurse, they generally know their arses from their elbows 🙂

I’ve had a hospital doctor try and fail THREE times to take my blood pressure, even with one of those automated cuffs.

So the coma scale is useful because it makes predictions, and these predictions are reasonably accurate. It’s a model of the world, essentially. So IQ is a model of intelligence; I guess my question would be what it is useful for predicting. Success at one’s job? At education? If I have research that tells me that a person of race A has a lower IQ than a person of race B on average, what does that predict? Poorer education outcomes? But if I’m interested in education outcomes, why don’t I just directly investigate race A and race B’s education outcomes?

The problem as well is that when you start measuring things, people start paying attention. Let’s suppose I am an academic who invents a new intelligence test. I find that it predicts how well people do at their jobs with about a 70% accuracy. I publish my results, and my test gets popular. Employers catch on to it and start using it to discriminate between potential employees, even when better measures exist. We know this sort of thing already happens with personality tests, for instance.

A hit points model for health is harmful when better models exist, if people start using that.

The question is which is causally prior – race or IQ? When you have someone whose IQ is unusual for their race, does their performance on whatever metric more closely track their IQ or their race? This is an empirical question, not something we can determine a priori. The use of race over IQ would be supported by evidence that IQ is mostly only predictive due to its correlation with race; the opposite would support the opposite.

Of course there are ethical and legal concerns over using race; but then, there are ethical and legal concerns over using anything correlated with race. Which is most things, including IQ. So whatever.

All models are simplifications. If I want to measure “how harmful is exposure to lead paint”, it would be nice to follow up subjects through their whole life, but I may not have the funding for that. If I want to decide “do I hire this person?” then it would be nice to have more detailed information, but there’s a limit to what previous employers will tell you and to how much a sample predicts performance on novel problems. In fact, if you integrated all available information on performance on novel problems, DW-NOMINATE style, I bet you’d get a predictive model that looked like IQ.

My problem with IQ is that IQ tests seem to define, implicitly, intelligence as pattern-matching ability, but we know that there is nothing intelligent about pattern-matching; it’s known in philosophy as Wittgenstein’s paradox and it’s been studied extensively in mathematics as statistical learning theory.

What this tells me is that to be successful at IQ tests you have to possess the same models that the test designer had, not many more (because it would lead to indecision) and not a different set (because it would lead to impossibility to answer).

Therefore there can only be two explanations for the success of IQ tests in correlating with academic and business performance:

1. IQ test designers manage to systematically stumble upon fundamental brain circuitry that works the same for every human on earth despite the fact that no one really knows what it is and how it works

2. IQ test designers have found something that correlates with academic performance because they are testing for models that are indirectly learned in school. Then academic performance correlates to everything else.

My impression is that 2 is much more likely than 1: it’s simpler and it also explains why the correlation of IQ with success ends around 120~130 and why very high IQ people aren’t all multimillionaire Tony Stark types.

I’m not very interested in the IQ of Ashkenazi Jews, primarily because I don’t know what Ashkenazi means, but I know that I have seen IQ mentioned by stormfront users a lot, so pretending there is no ethical risk in reifying ‘g’ is disingenuous, considering its epistemological basis consists solely of statistical correlations.

IQ tests don’t just measure patternmatching ability. There are tests and subtests that don’t have it at all. It just happens that patternmatching ability is highly g-loaded and gives a decent approximation in a pinch. There are diminishing returns on accuracy to adding more and more subtests by the very fact that they all end up measuring a single large common factor.

Hypothesis #1 is actually reasonable if you think of assortative mating and mutational load, and Hypothesis #2 is ruled out because IQ is often a better predictor than academic ability (academic ability is confounded by Conscientiousness).

I remember reading that the correlation of IQ with success might actually continue into higher ranges, but it’s difficult to find out by how much because a) there aren’t many people with very high IQs and b) “success” becomes harder to define at high levels (in particular, using money as a proxy breaks down because many genius thinkers are content to do their work for modest salaries relative to what they could do if they started a hedge fund).

(I can’t find the source for the preceding part so don’t take it on faith)

IQ tests don’t just measure patternmatching ability. There are tests and subtests that don’t have it at all

I have never seen them. I would be curious to see them.

It just happens that patternmatching ability is highly g-loaded and gives a decent approximation in a pinch

How do you know this? There is no other measure of ‘g’ beyond IQ tests, isn’t this circular reasoning?

If I measure ‘something’ with patternmatching ability and find that the thing that correlates with ‘something’ the most is patternmatching ability maybe ‘something’ is patternmatching ability?

Hypothesis #2 is ruled out because IQ is often a better predictor than academic ability (academic ability is confounded by Conscientiousness).

There is a plethora of alternative interpretations; I would like to see those studies. I’m still skeptical that we have managed to guess how the brain works on the first attempt.

I remember reading that the correlation of IQ with success might actually continue into higher ranges, but it’s difficult to find out by how much because

And that’s part of the problem, how do you know a test is measuring something if you have no other measure for that something? A lot of things correlate with success, most of which are not intelligence.

“There is no other measure of ‘g’ beyond IQ tests, isn’t this circular reasoning?”

What? g-factor is, pretty much definitionally, the common factor in ability to do well at a variety of cognitive tasks, with the original cognitive tasks being subjects in school: students who did well in one subject tended to do better in others. Pattern-matching ability is highly g-loaded if and only if it is correlated with performance at a variety of other cognitive tasks. Which it is.
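The "did well in one subject, tended to do better in others" observation is just a claim about pairwise correlations, which can be sketched as follows. The scores are invented for illustration, and `pearson` is a hand-rolled helper rather than a library call.

```python
import math

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length score lists."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

# Hypothetical scores for six students in three school subjects (not real data).
scores = {
    "math": [55, 62, 70, 74, 81, 90],
    "latin": [50, 65, 63, 78, 80, 88],
    "music": [60, 58, 72, 70, 85, 83],
}

subjects = list(scores)
pairwise = {
    (a, b): pearson(scores[a], scores[b])
    for i, a in enumerate(subjects)
    for b in subjects[i + 1:]
}
for (a, b), r in pairwise.items():
    print(f"{a} vs {b}: r = {r:.2f}")

# A common factor is only worth talking about if every pair correlates positively.
positive_manifold = all(r > 0 for r in pairwise.values())
print("all pairwise correlations positive:", positive_manifold)
```

A task counts as highly g-loaded, in this sense, when it correlates strongly with whatever the other tasks have in common, and that can be checked without appealing to the IQ test itself.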

Yup. Anonymous, I might suggest reading Is Psychometric g a Myth?, particularly section II, to get a bit of the flavor of just what people are talking about here — people have tried to find other ways of measuring intelligence that end up being truly different from existing IQ tests; they have failed. Things really are that correlated. (The Piagetian tasks example is particularly interesting.)

(And before anyone points it out, whether or not the essay is actually responding to Shalizi or strawmanning him is not relevant to this particular point.)

Did you perhaps mean this?

http://infoproc.blogspot.com/2009/11/if-youre-so-smart-why-arent-you-rich.html

http://infoproc.blogspot.com/2014/07/success-ability-and-all-that.html

The author is a physicist with a side interest in genetics, and IMO does a good job of explaining why there are probably *not* diminishing returns to IQ at high levels.

You overstate the extent to which we “know” that there’s “nothing” intelligent about pattern-matching. A paradox named after a philosopher is a bad proof of anything; thanks to Xeno we know that there’s no such thing as motion.

If you don’t like philosophers that’s fine (neither do I, by the way). You can read about statistical learning theory.

The name however is Zeno, not Xeno (that’s the scientology deity, I believe).

The scientologists’ chap is Xenu. Xeno is the name of a kinda-Zeno-like philosopher in Terry Pratchett.

Feel free to replace ‘Ashkenazi Jews’ with ‘European Jews’ in this context; the former is a bit more precise, but basically they refer to the same grouping. Think guys like Disraeli, Einstein and Woody Allen rather than other less recognizably “Jewish” groups like Spanish, Baghdadi or Ethiopian Jews. The Jewish diaspora is a really fascinating phenomenon; it’s worth studying a bit.

Anyway, on a more salient point; if you oppose people on the far-right, taking steps to ensure they’re the only ones with semi-accurate views on an important issue should worry you on a strategic level. I know I personally was a pretty decent liberal until I found out that Gould had blown smoke up my ass about IQ and anthropology in ‘The Mismeasure of Man’, and researching the topic myself started a chain reaction which ended with me having multiple books by Evola sitting next to ‘A New Kind of Science’ (excellent and apolitical book on modeling complex systems with cellular automata; a must read for everyone) on my bookshelf.

It’s a bit cliche but putting the genie back in the bottle is virtually impossible, not without a societal cataclysm anyway, and that’s very much what it would take to bury psychometrics at this point. It seems like a much less uphill battle to adapt your ideology to the science than vice versa, and as Scott has shown you can still have a quasi-Progressive viewpoint while acknowledging the reality of IQ.

Why doesn’t your side consider the opposite? For example, in theory there’s nothing that would force the far right to worship the actually existing global capitalism (which self-evidently rewards corruption and rent-seeking, not virtue and excellence). And still the “IQ” shibboleth often seems to drive your people to reject or, at best, downplay and ignore every other explanation for existing inequality.

And therefore you have it both ways: the existing elites might be competent but are mostly there because they are best at exploiting the corrupt system… while the lower orders are only there due to biological inferiority plus some nebulous, freakishly moralizing suggestions of “culture”, and there’s no reason to be concerned with other factors. Isn’t this an empirically flawed worldview? Doesn’t fetishizing “IQ” for your own ends lead to confusion?

One of the great things about the far right is that you get to stop tying yourself into knots worrying about “inequality.” The confusion you describe is mainly a libertarian phenomenon which melts away once you take egalitarianism out of the mix.

Obviously high IQ doesn’t imply correspondingly high wisdom; after all, research suggests the primary difference between ‘unsuccessful’ sociopaths riding the revolving door of prison and ‘successful’ sociopaths who make up an alarming proportion of corporate executives is intelligence, i.e. cunning. But by that same token, to quote Plato, “a small man was never the doer of any great thing either to individuals or to states.” The capacity for greatness is in itself valuable, whether used for good, evil, or purposes which are beyond good and evil.

I’m not going to waste my time defending modernity, if you want that kind of argument find a conservative. But the problem of modern elites has historically been a lack of wisdom rather than of cleverness; even today’s Harvard Zombies are certainly smart, whatever else you can say about them.

“Wisdom” as you seem to be invoking it is two thirds mystical woo, one third an attempt to reify some highly diverse, culturally contingent and contextually sensitive things. Calling some woo-peddler like Evola “wise” casts serious doubt on the practical utility of such “wisdom”. You need some factors not measured by “IQ”, but replacing everything with “wisdom” just seems silly.

But surely ameliorating the direct symptoms of what we know as “inequality” – poverty, humiliation, insecurity, blatant arbitrarily disparate treatment, being defenseless before the whim of one’s “betters” – is what the far right promises to do – often by creating a hyper-exploited permanent and sanctioned underclass outside the actual polity, like the Confederates.

If you just flat out said that all of the above is here to stay, that would be a pretty awful selling point! (Nietzsche did say this exact thing; luckily, nobody bothers to read him and/or take him at his word.)

raises hand umm…

actually existing global capitalism (which self-evidently rewards corruption and rent-seeking, not virtue and excellence)

Hey, as long as you say “self-evidently,” it means you don’t need any evidence for your outrageous claims!

Is it possible to get and stay very rich in a modern Western country through corruption and rent-seeking – or even blind luck? Yes. Is it the norm? No. Is it far more rare than in non-capitalist societies, such as Russia and China? Yes. Is competition by-and-large fair and on a level playing field? Yes, at least in the Anglosphere. Do the best by-and-large rise to the top, and are those at the top by-and-large the best? Yes.

As for “virtue” and “excellence” – these are very much in the eye of the beholder.

@Multiheaded

Examples of wisdom vs. intelligence in the modern world are easy to find, absent any ‘woo’, mystical or otherwise. Clever people are eager to invent complex schemes to avoid simple truths. Even an idiot should be able to realize that fantasies of immortality, utopia, and limitless plenty are just that, but quite a number of geniuses have thrown their lives away arguing that you can make something from nothing. The essence of practical wisdom is knowing your own limits, and that is sorely lacking in modern elites.

As for the ‘signs of inequality,’ while fables about Primae Noctis are good for getting the public riled up (ask the Kings of Rome), it’s funny how it’s always a crowd banging on Lot’s door, isn’t it? The rapaciousness of the mob and their would-be “protectors” has always been much more of a threat to life and property than any corrupt noble class or bad king. Some suffering is inevitable in any human society, but the amount certainly won’t decrease while opportunists are running around blaming any handy ‘oppressor’ for every bad harvest or test gap.

@Ialdabaoth

I’d like to see a thread which didn’t devolve into talking about your personal life. I’m sure you’re an interesting person but let’s try to practice a little restraint ok?

Just because the right makes some incorrect assumptions doesn’t mean it’s ok for the left to make some different incorrect assumptions (unless you assume that all incorrect assumptions have equal cost).

I’ve found that assuming that IQ is real and that e.g. successful people will be good at general problem-solving has caused me less confusion than assuming that successful people were more corrupt than non-successful people.

@Salem:

Systemic corruption.[1] Warren Buffett is an honest person, but it is, in some sense, criminal that he has an ultimately rather unproductive way to apply his talents, and that nothing else could make him the wealthiest in the world. If we wanted an actual meritocracy, we could probably all agree that the creation of real value should be more rewarded than the clever use of arbitrage. So e.g. nobody in finance deserves so much wealth, the state should support open-source talents, etc.

[1] I am now remembering that, once, Lenin ran into this exact problem of definitions in some interview when he casually said that the bourgeois state is corrupt in this sense, and the interviewer took offense at the seeming implication that Western politicians are exceptionally dishonest.

@lmm:

How about this: the way that the corrupt system gives many successful people the opportunity to make use of their IQ is by shutting out non-successful people en masse just because they have the misfortune to be caught in some filters, which some can semi-randomly escape through drive/intelligence/charisma/whatever – and the system also offers modest to great rewards to some people who help reproduce this state of affairs.

@AFP:

Bullshit, laced with very decontextualized platitudes.

@Multiheaded

You seem to drastically underappreciate Buffett’s function and value. People like Buffett *are* the Invisible Hand. Without people like him constantly working to spot inefficiencies and reallocating capital – ironing out the inefficiencies – the system as a whole would suffer enormously.

Whether or not the percentage of rents he extracts for doing this job is fair is a separate issue, I suppose, but society keeps on paying him to do it even when he tries to give it away.

Okay. Why can’t we, as a small reformist measure, have people like Buffett doing exactly what Buffett does, but under public control and called ‘Gosfin’ and seeing only a tiny percentage of the arbitrage they extract?

And part of the problem, of course, is that for every Buffett there’s like a hundred thousand complete fucking assholes who snatch desperately at the money that he would give away, out of all proportion to any incidentally performed regulatory function – and we can’t even know, or really talk about the depths of this “out of all proportion”. Even liberals feel queasy about this!

@Multiheaded:

If a system is corrupt in the forest and allocates resources efficiently, is it still corrupt?

I mean, usually if you’re claiming a system is corrupt you’re talking about it rewarding dishonesty, or making decisions based on irrelevant factors, or something of the sort. Corruption in its usual sense is visible at the output level: we notice that contracts are being awarded to incompetent bidders or the like. That’s not, on the whole, what I see; what I see is a pretty good correlation between intelligence and success, which suggests that, whatever the reason and the process, it’s operating correctly.

Spinoza was Sephardic (specifically Portuguese.) You got Einstein and Woody Allen right, though.

Woops, thanks for the catch.

Nor Disraeli.

“Ashkenaz” simply means “Germany”; “Germany and Eastern Europe” is better than “Europe.”

Spinoza was a Sephardic Jew (but why is Spain not Europe?)

Because Sephardic (‘Spanish’) Jews generally don’t live in Spain and haven’t for centuries, not to mention that the modern grouping mostly consists of peoples who have never lived in Spain but just follow the traditions that originated there.

I do admit I cocked up the Spinoza example. That was embarrassing.

Cosma Shalizi wrote a very good article on Wolfram and A New Kind of Science ( http://vserver1.cscs.lsa.umich.edu/~crshalizi/reviews/wolfram/ ), and in an amusing coincidence, the end links to a short math paper rebutting E. T. Jaynes.

Am I the only person here that doesn’t really give a shit about race:IQ correlations, nor consider them apocalyptically important to fundamental social policy?

Nope.

Nope, especially when you look at the paltry number of IQ points everybody is arguing about, which seem to be on the order of measurement error on an individual test.

In fact, one could just say, “Sure, fine, IQ and race are perfectly correlated, so what?” Then let them start listing their horrible social policy proposals, and then we can get to the actual point of the discussion.

It is not AFP who thinks the correlations “apocalyptically important,” but Stephen Jay Gould. Race-blind IQ tests work great. Test firefighters on what they’ll need to know to do the job. But they’re illegal because people lie about IQ and the correlations.

Explicit quotas would be much, much better than the deadlock we have now, but they too are illegal. Two popular solutions are to stop hiring and promoting firefighters and to hire by lottery.

They don’t seem terribly important in and of themselves but they seem like a place where our policymaking is broken, in a way that might have deeper implications.

Then let them start listing their horrible social policy proposals, and then we can get to the actual point of the discussion.

Okay. Here are three. (1) Allow companies to use IQ tests in hiring. Do not worry if this leads to different racial groups being hired at different rates. (2) Allow teachers to group children by ability. Do not worry if this leads to different racial groups being put into different groups, on average. (3) Work to promote — through non-coercive means (e.g., tax breaks, public awareness campaigns) — social values that tend to help people with lower IQ, higher time preference, and lower impulse control function better in modern society, such as marriage and religiosity.

It’s not that race:IQ correlations are important to social policy, it’s that ignoring them can lead to harmful policies. So take this story for example:

http://www.newyorker.com/magazine/2014/07/21/wrong-answer

A bunch of hard working teachers and administrators were going to lose their jobs and were forced to cheat because people refuse to believe that the kids they’re teaching are at the left end of the bell curve.

Willful misunderstanding of reality is basically never helpful, often harmful, and should thus be avoided.

it also explains why the correlation of IQ with success ends around 120~130

That’s a myth unsupported by evidence: http://neoacademic.com/2011/10/05/gladwell-was-wrong-high-and-very-high-ability-employees-perform-differently/ See also: http://infoproc.blogspot.com/2009/01/horsepower-matters-psychometrics-works.html

Both those studies seem to not be about IQ.

They are both about IQ, or general intelligence. The SAT, the ASVAB, the WAIS, etc. are all highly correlated with each other even though they consist of different tests. That’s the point about g.

I don’t doubt the data presented by Landers, but it doesn’t seem to either support or refute Gladwell…they didn’t measure IQ’s correlation with success, they measured its correlations with various other test scores and GPA.

Are GPA and job performance not measures of success? The second link shows that there are no diminishing returns on IQ with respect to patents, STEM publications, and doctorates earned.

Here’s another graph showing the association of measured ability with subsequent outcomes among the four quartiles of the top one percent of scorers at age 13: http://languagelog.ldc.upenn.edu/myl/SMPY_Fig1.gif

In the first pair of links, the only kind of performance measured was the technical performance of soldiers. This is fairly linearly correlated with ASVAB score. The third link (the graph) shows a similar linear correlation between 95th %ile income and SAT-math scores at age 13. (Correlations with “nerdier” measures do show an increased slope at the high end.) But, they also didn’t measure IQ.

I don’t have access to Gladwell’s book, but the point he argues is that there are diminishing returns on IQ in terms of workplace success, yes? (Of course, even if true, you could take this as an indictment of the workplace rather than of IQ.)

Two more links refuting the assertion that there are diminishing returns to IQ at high levels:

http://infoproc.blogspot.com/2009/11/if-youre-so-smart-why-arent-you-rich.html

http://infoproc.blogspot.com/2014/07/success-ability-and-all-that.html

I don’t see the relevance of Wittgenstein’s paradox.

It doesn’t need to be fundamental. We can imagine a proxy for performance that’s quite tangential to anything we actually care about, and yet accurate and well conserved across brains.

The creators of optical illusions have done quite well at this task.

To all the other procrastinators – coarctation of the aorta.

And going one level meta, interstudy variability for interobserver variability in GCS –

http://www.ncbi.nlm.nih.gov/pubmed/20398274

http://www.ncbi.nlm.nih.gov/pubmed/14747811

http://www.ncbi.nlm.nih.gov/pubmed/16842308

http://www.ncbi.nlm.nih.gov/pubmed/17993947

[Wrote this before I saw there were other comments, probably redundant but posting anyway.]

There’s another diagram you could draw. It could have things like “verbal intelligence”, “quick thinking”, “spatial ability”, etc. on the left, and “ability to ace vocabulary tests”, “ability to ace math tests”, and income on the right. Arrows of varying strength could connect left to right.

This diagram would necessarily be more informative than one in the middle. The question is how much we lose by simplifying all of the different factors down to “intelligence”. If all of the left-hand-side factors are highly correlated, we don’t lose much: someone with a high IQ is probably good at math and therefore is probably good at math tests. If they’re not correlated we lose a lot: You shouldn’t use IQ for predicting how someone will do on a math test if you can measure their math ability.
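A rough way to see how much gets lost is to simulate it. The sketch below is a toy model, not anything from the psychometric literature: it assumes three equally weighted sub-abilities that share a single common factor, a "composite" that is just their average, and a math test that is math ability plus noise. All names and numbers (`simulate`, `rho`, the noise level of 0.5) are made up for illustration.

```python
import math
import random


def pearson(xs, ys):
    """Plain Pearson correlation between two equal-length lists."""
    n = len(xs)
    mx = sum(xs) / n
    my = sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / math.sqrt(vx * vy)


def simulate(rho, n=20000, seed=0):
    """Return (r_direct, r_composite) for a toy population.

    rho is the assumed correlation between any two sub-abilities (0..1).
    r_direct:    correlation of the math test with math ability itself.
    r_composite: correlation of the math test with the averaged composite.
    """
    rng = random.Random(seed)
    math_ability, composite, math_test = [], [], []
    for _ in range(n):
        shared = rng.gauss(0, 1)  # hypothetical common factor

        def sub_ability():
            # Unit-variance ability with pairwise correlation rho.
            return math.sqrt(rho) * shared + math.sqrt(1 - rho) * rng.gauss(0, 1)

        m, v, s = sub_ability(), sub_ability(), sub_ability()
        math_ability.append(m)
        composite.append((m + v + s) / 3)
        math_test.append(m + 0.5 * rng.gauss(0, 1))  # noisy math test
    return pearson(math_ability, math_test), pearson(composite, math_test)


if __name__ == "__main__":
    for rho in (0.9, 0.1):
        direct, comp = simulate(rho)
        print(f"rho={rho}: direct r={direct:.2f}, composite r={comp:.2f}")
```

Under these assumptions the composite predicts the math test almost as well as math ability does when the sub-abilities are highly correlated, and noticeably worse when they are not, which is the trade-off described above.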

To move back to the coma analogy, if the different components of comatoseness aren’t highly correlated, you wouldn’t want to use the GCS to decide whether to intubate someone. You’d want to measure their breathing directly. If you’re in a rush and someone’s ability to move their eyes and to know who they are is highly predictive of breathing ability, the GCS is probably fine.

From everything I’ve read [citations not provided], at higher levels of IQ, the differences between the subfactors matter more. Richard Feynman had a reported IQ that’s pretty low considering his achievements in physics; but apparently he was much smarter in some parts of math ability than in most of the other subfactors. That sort of thing is much less common with people who are below average, and when it does happen, the differences aren’t as big. Idiot savants are exceptional – most people who are really really really bright in one thing are still well above average in everything else.

Also, “success” as measured by anything which isn’t mostly just an IQ proxy is going to be partially conditioned on conscientiousness (and possibly other personality traits) – the answer, in the U.S. and other developed societies, to “if you’re so smart why ain’t you rich” is usually “low conscientiousness”.

This is known as Spearman’s law of diminishing returns (doesn’t strike me as a great name, but, whatever).

Note that (offhand, not a psychologist or statistician) this appears to be something different from (or more than) the generic phenomenon of “the tails coming apart”, since it appears to apply specifically at the upper end and not the lower end. Also — and I may be quite wrong about this — it looks like it just relies on picking people scoring high on IQ tests, rather than the highest, and as I understand it the generic “the tails coming apart” relies on the latter? I may be confused here. Nonetheless the upper-end-only part seems to indicate there’s something more going on here.
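For what it’s worth, here is a small sketch of why the upper-end-only part looks like the interesting bit. It assumes the simplest possible model, two test scores that are jointly normal with a fixed correlation, and checks what happens to that correlation inside the top and bottom slices of one score. The function name, the correlation of 0.6, and the 10% cutoff are all arbitrary choices for illustration, and this is not a model of Spearman’s actual data.

```python
import math
import random


def pearson(xs, ys):
    """Plain Pearson correlation between two equal-length lists."""
    n = len(xs)
    mx = sum(xs) / n
    my = sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / math.sqrt(vx * vy)


def tail_correlations(rho=0.6, n=50000, frac=0.1, seed=1):
    """Return (full, bottom, top) correlations between two toy test scores.

    Scores a and b are bivariate normal with correlation rho.
    'bottom' and 'top' are computed within the lowest and highest
    `frac` of the population as ranked by score a.
    """
    rng = random.Random(seed)
    pairs = []
    for _ in range(n):
        a = rng.gauss(0, 1)
        b = rho * a + math.sqrt(1 - rho ** 2) * rng.gauss(0, 1)
        pairs.append((a, b))
    pairs.sort(key=lambda p: p[0])  # rank everyone by score a
    k = int(n * frac)

    def corr(group):
        return pearson([p[0] for p in group], [p[1] for p in group])

    return corr(pairs), corr(pairs[:k]), corr(pairs[-k:])


if __name__ == "__main__":
    full, bottom, top = tail_correlations()
    print(f"full={full:.2f}  bottom 10%={bottom:.2f}  top 10%={top:.2f}")
```

In this symmetric toy model the correlation drops by about the same amount in both tails, simply because each slice has a restricted range. So if real data show the weakening only at the high end, that asymmetry is not something plain range restriction under a normal model would produce.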

(I seem to recall someone — I think Sark Julian — remarking on Twitter that disbelieving in IQ is a way of signalling high intelligence, since IQ is a less useful measure for those of high intelligence…)

(Regardless of anything else, might I just remark that Sark is an unpleasant and unduly arrogant person. In fact, she displays a lot of what I loathe so much about IQ supremacists.)