A machine learning researcher writes me in response to yesterday’s post, saying:

I still think GPT-2 is a brute-force statistical pattern matcher which blends up the internet and gives you back a slightly unappetizing slurry of it when asked.

I resisted the urge to answer “Yeah, well, your mom is a brute-force statistical pattern matcher which blends up the internet and gives you back a slightly unappetizing slurry of it when asked.”

But I think it would have been true.

A very careless plagiarist takes someone else’s work and copies it verbatim: “The mitochondria is the powerhouse of the cell”. A more careful plagiarist takes the work and changes a few words around: “The mitochondria is the energy dynamo of the cell”. A plagiarist who is more careful still changes the entire sentence structure: “In cells, mitochondria are the energy dynamos”. The most careful plagiarists change everything except the underlying concept, which they grasp at so deep a level that they can put it in whatever words they want – at which point it is no longer called plagiarism.

GPT-2 writes fantasy battle scenes by reading a million human-written fantasy battle scenes, distilling them down to the concept of a fantasy battle scene, and then building it back up from there. I think this is how your mom (and everyone else) does it too. GPT-2 is worse at this, because it’s not as powerful as your mom’s brain. But I don’t think it’s doing a different thing. We’re all blending experience into a slurry; the difference is how finely we blend it.

“But don’t humans also have genuinely original ideas?” Come on, read a fantasy book. It’s either a Tolkien clone, or it’s A Song Of Ice And Fire. Tolkien was a professor of Anglo-Saxon language and culture; no secret where he got his inspiration. A Song Of Ice And Fire is just War Of The Roses with dragons. Lannister and Stark are just Lancaster and York, the map of Westeros is just Britain (minus Scotland) with an upside down-Ireland stuck to the bottom of it – wake up, sheeple! Dullards blend Tolkien into a slurry and shape it into another Tolkien-clone. Tolkien-level artistic geniuses blend human experience, history, and the artistic corpus into a slurry and form it into an entirely new genre. Again, the difference is how finely you blend and what spices you add to the slurry.

“But don’t scientists have geniunely original ideas?” Scientists are just finding patterns in reality nobody has ever seen before. You say “just a pattern-matcher”, I say “fine, but you need to recognize patterns in order to copy them, so it’s necessarily a pattern-recognizer too”. And Einstein was just a very good pattern-recognizer.

“But don’t humans have some kind of deep understanding that pattern-recognition AIs don’t?”

Here’s a fun question: the human brain is undoubtedly the most powerful computer in the known universe. In order to do something as simple as scratch an itch it needs to solve exquisitely complex calculus problems that would give the average supercomputer a run for its money. So how come I have trouble multiplying two-digit numbers in my head?

The brain isn’t directly doing math, it’s creating a model that includes math and somehow doing the math in the model. This is hilariously perverse. It’s like every time you want to add 3 + 3, you have to create an entire imaginary world with its own continents and ecology, evolve sentient life, shepherd the sentient life into a civilization with its own mathematical tradition, and get one of its scholars to add 3 + 3 for you. That we do this at all is ridiculous. But I think GPT-2 can do it too.

Janelle Shane asks GPT-2 its ten favorite animals:

Prompt: My 10 favorite animals are: 1.

My ten favorite animals are:

1. Zebras with a white scar on the back

2. Insiduous spiders and octopus

3. Frog with large leaves, hopefully black

4. Cockatiel with scales

5. Razorbill with wings hanging about 4 inches from one’s face and a heart tattoo on a frog

3. Cockatric interlocking tetrabods that can be blind, cut, and eaten raw:

4. Black and white desert crocodiles living in sunlight

5. Zebra and many other pea bugs

Forget the particular animals for now (don’t worry, the razorbill with wings hanging about 4 inches from one’s face will be back in your nightmares). Notice the way it numbers its list: 1, 2, 3, 4, 5, 3, 4, 5. Last week the two-year-old child who lives next to me counted 1, 2, 3, 4, 5, 6, 7, 8, 9, 11, 8, 9, 7, 8, 9, 7, 8, 9 (his mother warns this is an inexact transcription). GPT-2 is instantiated on giant supercomputers; it’s a safe bet they could calculate the square root of infinity in a picosecond. But it counts more or less the same way as a two-year old. GPT-2 isn’t doing math. It’s doing the ridiculous “create a universe from first principles and let it do the math” thing that humans do in their heads. The fact that it counts so badly suggests it’s counting human-style, which makes it amazing that it can count at all.

I find something similar in this post from Tumblr user antinegationism, playing with the lobotomized public-release version of the system:

The American Association for Suicide Prevention and Life-Threatening Behavior (AAPSLB), a professional organization with an overarching goal of promoting suicide prevention, released a recent video encouraging the public to think before they act, on the public’s part. “When we say we’re the only reason to commit suicide, we’re saying they’re wrong. It’s the right thing to do,” said AAPSLB president Steven A. Anderson.

The American Association For Suicide Prevention And Life-Threatening Behavior is not a real organization; the AI made it up as the kind of organization that it thought would feature in a story like this. And AAPSLB is not quite the right way to acronymize the organization’s name. But it’s clearly an attempt at doing so. It’s very close. And nobody taught it how to do that! It’s not just that nobody programmed it in. It’s that nobody thought “Today I shall program an AI to learn how to acronymize on its own in an unsupervised way”. GPT-2 was just programmed to predict text from other text, nothing else. It’s second-level not programmed in. It just happened!

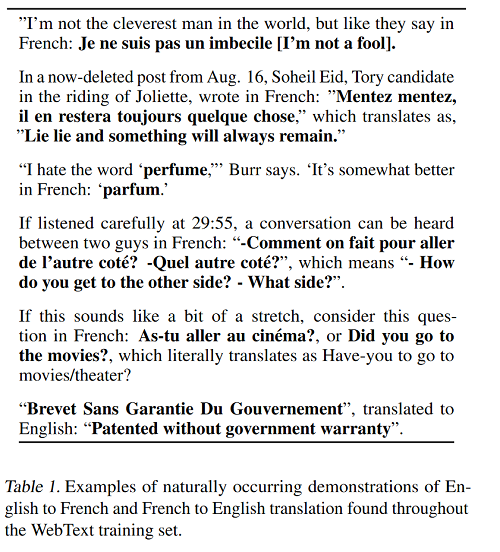

And, uh, it seems to have figured out how to translate things into French. This part is from the official paper:

We test whether GPT-2 has begun to learn how to translate from one language to another. In order to help it infer that this is the desired task, we condition the language model on a context of example pairs of the format ENGLISH SENTENCE = FRENCH SENTENCE and then after a final prompt of ENGLISH SENTENCE = we sample from the model with greedy decoding and use the first generated sentence as the translation. On the WMT-14 English-French test set, GPT-2 gets 5 BLEU, which is slightly worse than a word-by-word substitution with a bilingual lexicon inferred in previous work on unsupervised word translation (Conneau et al., 2017b). On the WMT-14 French-English test set, GPT-2 is able to leverage its very strong English language model to perform significantly better, achieving 11.5 BLEU. This outperforms several unsupervised machine translation baselines from (Artetxe et al., 2017) and (Lampleet al., 2017) but is still much worse than the 33.5 BLEU of the current best unsupervised machine translation approach(Artetxe et al., 2019). Performance on this task was surprising to us, since we deliberately removed non-English webpages from WebText as a filtering step.

In other words: GPT-2 is very bad at translating French into English. But the researchers were surprised to see it could do this at all, since they didn’t design it as translation software, didn’t ask it to learn translation, and didn’t show it any material in French. It seems to have picked up this ability from noticing a few naturally-occurring examples of French in English text:

And here’s One Weird Trick to make GPT-2 summarize articles:

We test GPT-2’s ability to perform summarization on the CNN and Daily Mail dataset (Nallapati et al., 2016). To induce summarization behavior we add the text TL;DR: after the article and generate 100 tokens with Top-k random sampling (Fan et al., 2018) with k=2 which reduces repetition and encourages more abstractive summaries than greedy decoding. We use the first 3 generated sentences in these 100 tokens as the summary. While qualitatively the generations resemble summaries, as shown in Table 14, they often focus on recent content from the article or confuse specific details such as how many cars were involved in a crash or whether a logo was on a hat or shirt. On the commonly reported ROUGE 1,2,L metrics the generated summaries only beginto approach the performance of classic neural baselines and just barely outperforms selecting 3 random sentences from the article. GPT-2’s performance drops by 6.4 points onthe aggregate metric when the task hint is removed which demonstrates the ability to invoke task specific behavior in a language model with natural language.

Again, GPT-2 isn’t good at summarizing. It’s just surprising it can do it at all; it was never designed to learn this skill. All it was designed to do was predict what words came after other words. But there were some naturally-occurring examples of summaries in the training set, so in order to predict what words would come after the words tl;dr, it had to learn what a summary was and how to write one.

If you’re me, the obvious next question is “can it do poetry”. I am very interested to know if the real thing can manage this, but for now I will have to satisfy myself with the lobotomized public-release version. Given Alexander Pope’s Essay On Criticism as a prompt, it says:

O man, who pains the tongue magnified by tymolle,

Though all the fair melodies let no door go as dark as good art.

Yet it would have been, unless the mastermind is so full

Of his own want that this literary country might grow.

Perfect student, thou hast trodden out.

Happy students ruin the purity of what you write ‘Tis art,

For unordered use of decade,’ but art only failing:

A surprise, for new veins which build behind;

It understands there should be line breaks, it understands the approximate correct length of a line of iambic pentameter, it understands how to talk like an overeducated 18th-century dandy – but it doesn’t appreciate rhyme or meter. In retrospect this isn’t surprising; GPT has no idea words sound like anything; it would be shocked to learn anyone uses language as anything other than text strings.

But human poets haven’t appreciated rhyme or meter either for at least a century, so GPT-2 should be able to compose some wicked modern poetry. Friends-of-the-blog Chelsea V. and Qiaochu Y. got it to write a few more stanzas of Allen Ginsberg’s Moloch (human-curated to remove fluff and improve flow):

Moloch whose brief mind is the barrel of gleaming stars! Moloch whose soul is choices of fragrance! Moloch whose framing is glitz and half-cheerios!

Moloch whose daily life is private; his daily strength hoarse! Moloch whose soft desires become culture burning smoke in a palace of eyes! Moloch whose breeding in the caves sweeps wild and rippling flower-like shapes!

Moloch whose pleading is the breath of youth! Moloch whose prudence is the urine of generations! Moloch whose nerves are not privileged; movies abound in quiet caves; people are dressed like ministers! Moloch whose hope receives some help too. Moloch whose eyes are pitiful outstretched, but not blinded. Moloch whose lungs are burning with fire, but not a cautious electrical girl!

Moloch whose sanity is just filled with atoms, gluing beings in physics and complicated libraries. Moloch whose eyes are all innocent animals! Moloch whose sweat and efforts make pure the ocean with burning annihilation! Moloch whose fields dissolve to lightning-ravaged storage houses! Moloch whose eyes sit haunting up Scottish springs!

Moloch whose arms and men are Judah condemns and listens, having understood whom he was and what he man’s weaknesses oppose with iron. We will feast to it like Lord Kippa; we shall play with it like our Pokemon.

Moloch flickering under the pounds and sustaining more fights! Sports and yoga, equally greedy for wealth as for lives! Moloch who had to split his limbs and society into fifty-two atoms! Moloch who cannot survey duty! Moloch for I do not believe in humanity! Moloch for I don’t believe in god! Moloch who, fought Sins and made Sin out of Sin!

Moloch who left me in the glacier! Moloch who helped me to judge my behavior! Moloch who lured me out of the eternal anguish. Moloch who makes all my faults my undoings! Moloch whose deep conversation filled my soul with love and consolation! Moloch who curls up my brains and crumps my heads and screams my scream! Moloch who prepares oil in my hands and burns me alive! Moloch who cries out, “Why do men scream so loud?” Moloch who unleashes his poison in all rivers at high tide! Moloch whose ruins are filled with its cubits! Moloch who fiddles at insidious clockwork!

Aside from this being obviously one of the best things ever, what’s the point?

The point is, GPT-2 has faculties. It has specific skills, that require a certain precision of thought, like counting from one to five, or mapping a word to its acronym, or writing poetry. These faculties are untaught; they arise naturally from its pattern-recognition and word-prediction ability. All these deep understanding things that humans have, like Reason and so on, those are faculties. AIs don’t have them yet. But they can learn.

From the paper:

Prompt: Who was the author of The Art Of War?

Sun TzuPrompt: State the process that divides one nucleus into two genetically identical nuclei?

MitosisPrompt: Do you have to have a gun permit to shoot at a range?

No

Nobody told the model to learn Chinese history, cell biology, or gun laws either. It learned them in the process of trying to predict what word would come after what other word. It needed to know Sun Tzu wrote The Art Of War in order to predict when the words “Sun Tzu” would come up (often in contexts like “The Art of War, written by famous Chinese general…). For the same reason, it had to learn what an author was, what a gun permit was, etc.

Imagine you prompted the model with “What is one plus one?” I actually don’t know how it would do on this problem. I’m guessing it would answer “two”, just because the question probably appeared a bunch of times in its training data.

Now imagine you prompted it with “What is four thousand and eight plus two thousand and six?” or some other long problem that probably didn’t occur exactly in its training data. I predict it would fail, because this model can’t count past five without making mistakes. But I imagine a very similar program, given a thousand times more training data and computational resources, would succeed. It would notice a pattern in sentences including the word “plus” or otherwise describing sums of numbers, it would figure out that pattern, and it would end up able to do simple math. I don’t think this is too much of a stretch given that GPT-2 learned to count to five and acronymize words and so on.

Now imagine you prompted it with “P != NP”. This time give it near-infinite training data and computational resources. Its near-infinite training data will contain many proofs; using its near-infinite computational resources it will come up with a model that is very very good at predicting the next step in any proof you give it. The simplest model that can do this is probably the one isomorphic to the structure of mathematics itself (or to the brains of the sorts of mathematicians who write proofs, which themselves contain a model of mathematics). Then you give it the prompt P != NP and it uses the model to “predict” what the next step in the proof will be until it has a proof, the same way GPT-2 predicts the next word in the LotR fanfiction until it has a fanfiction.

The version that proves P != NP will still just be a brute-force pattern-matcher blending things it’s seen and regurgitating them in a different pattern. The proof won’t reveal that the AI’s not doing that; it will just reveal that once you reach a rarefied enough level of that kind of thing, that’s what intelligence is. I’m not trying to play up GPT-2 or say it’s doing anything more than anyone else thinks it’s doing. I’m trying to play down humans. We’re not that great. GPT-2-like processes are closer to the sorts of things we do than we would like to think.

Why do I believe this? Because GPT-2 works more or less the same way the brain does, the brain learns all sorts of things without anybody telling it to, so we shouldn’t be surprised to see GPT-2 has learned all sorts of things without anybody telling it to – and we should expect a version with more brain-level resources to produce more brain-level results. Prediction is the golden key that opens any lock; whatever it can learn from the data being thrown at it, it will learn, limited by its computational resources and its sense-organs and so on but not by any inherent task-specificity.

Wittgenstein writes: “The limits of my language mean the limits of my world”. Maybe he was trying to make a restrictive statement, one about how we can’t know the world beyond our language. But the reverse is also true; language and the world have the same boundaries. Learn language really well, and you understand reality. God is One, and His Name is One, and God is One with His Name. “Become good at predicting language” sounds like the same sort of innocent task as “become good at Go” or “become good at Starcraft”. But learning about language involves learning about reality, and prediction is the golden key. “Become good at predicting language” turns out to be a blank check, a license to learn every pattern it can.

I don’t want to claim this is anywhere near a true AGI. “This could do cool stuff with infinite training data and limitless computing resources” is true of a lot of things, most of which are useless and irrelevant; scaling that down to realistic levels is most of the problem. A true AGI will have to be much better at learning from limited datasets with limited computational resources. It will have to investigate the physical world with the same skill that GPT investigates text; text is naturally machine-readable, the physical world is naturally obscure. It will have to have a model of what it means to act in the world, to do something besides sitting around predicting all day. And it will have to just be better than GPT, on the level of raw power and computational ability. It will probably need other things besides. Maybe it will take a hundred or a thousand years to manage all this, I don’t know.

But this should be a wake-up call to people who think AGI is impossible, or totally unrelated to current work, or couldn’t happen by accident. In the context of performing their expected tasks, AIs already pick up other abilities that nobody expected them to learn. Sometimes they will pick up abilities they seemingly shouldn’t have been able to learn, like English-to-French translation without any French texts in their training corpus. Sometimes they will use those abilities unexpectedly in the course of doing other things. All that stuff you hear about “AIs can only do one thing” or “AIs only learn what you program them to learn” or “Nobody has any idea what an AGI would even look like” are now obsolete.

” It seems to have picked up this ability from noticing a few naturally-occurring examples of French in English text:”

Are naturally-occurring examples of French in English text more common than naturally-occurring examples of German or Spanish? There’s a third level of unintended behavior occurring; it managed to learn to IDENTIFY which phrases were French, pick up a bit of French Lexicon, and associate it with other bit of French Lexicon, but not other languages’ lexicons.

I don’t find that as impressive; in the examples given, the text usually said something like “or as they say in French…”

I predict that if that is how it learned French, it will think that the French translation of “Lightning War” is “Blitzkrieg”.

I don’t know what it would do when asked to translate phrases from the Russian Revolution to French, but if it uses especially French Revolution terms I think that suggests that it demonstrates contextual awareness.

That doesn’t seem quite right. It may be that there’s some higher-order pattern in the thing’s model that maps to something like “translations from one language to another”, but unless there’s a lot of troll data in its corpus, developing such a higher-order prior would likely be the result of encountering both lots of instances of this situation and meta-analysis of them, and this particular system seems to have a lot of specificity and coherence within semantic clusters (We don’t see, for example, any characters from other “fantasy” works in the generated LotR battle).

Humans seem to stably prefer using strong priors from analogy-heavy hierarchical category-based models. I think what you’re doing here is conflating the ability to predictively model with particular quirks of the human system for doing so. Non-human natural language models often have much different failure modes than this, as this one seems to.

I think that text like “Blitzkrieg (lightning war)” occurs in close proximity to text like “French for” often enough that if the algorithm doesn’t understand the idea that multiple languages are different from each other and know which words are in which language, it will faithfully translate some non-French words into English when trained to translate French to English.

I feel even more certain that it will translate Creole to English as well as it translates French. Whatever concept of ‘French’ it has, it’s almost certainly not the same as the one Académie Française has.

I definitely get where you’re coming from. As an ML researcher, I’ve worked with a few natural language application domains and models, and I’ve definitely seen a ton of failure modes of that kind, the “adjacent enough in document-space” jumps are really common in language models that are mostly working with relationships between words in a massive lexicon. This is admittedly a pretty broad category and captures everything from old-school Markov generator n-gram models, the various flavors of more sophisticated sequence predictors (e.g. RNNs), and even the recently-popular semi-supervised neural word embedding models like GLOVE or Word2Vec.

But actually, avoiding that particular kind of error is exactly what’s pretty impressive about this work. We see a great degree of structurally-inferred and especially directional semantic relationships captured by the model, and one of the most striking features of the output I’ve seen is that it tends to avoid conflating concepts that are merely in the same nebula in thing-space.

This is why people keep saying that the output reads human: Structure. The sentence structure remains pretty solid throughout – which previous structured models that do things like introduce dependency trees in the underlying model have been able to achieve – but reading the prose to me implies a more robust, semantic structure in the way clusters of interrelated words are used. Relationships between concepts seem directional, both in the logical sense (e.g. not flipping causality, or “is a” or “has a” type relationships) and in terms of the inference that humans make by the “direction” of a sentence rather than just adjacency of words in a given document (e.g. “man bites dog” versus “dog bites man”). Semantically-relevant terms are seldom misplaced or jumbled around in their context-specific usage in the GPT2 examples I’ve seen. Thus, I would be somewhat surprised if it made a mistake like assuming that “Blitzkrieg” was a French word.

That being said, I do think there’s another interesting common problem in natural language generation that this thing might not be quite there on: Long-term dependencies. We’ve mostly seen it spit out pretty short essays, and although it’s very smooth at justifying the individual transitions, the longer examples read as kind of a walk through the semantic subdomain the model’s targeting. While human writing can be very tangential, the best human writers can use tangents and examples to feed a larger point, like a thesis, or set up a payoff based on something built from the essay as a gestalt, like a proof by contradiction, or a parable, or the punchline of a joke. This requires not only conceptual cohesion within a semantic model, but also the ability to structure generated content in a way that’s less of a “flat” reflection of the concept-space.

While GPT2 seems to be able to keep a train of thought in a short blurb, all the multiple-paragraph examples start to meander through (coherent, strongly-related) concepts, and I’ve not seen examples of setting up a deferred payoff in this way. This has been a hard problem for natural language models for a long time, and I think this seems to be about as good as systems like LSTMs from the examples I’ve seen, which is to say, not terrible, but still not there.

Does GPT-2 avoid that “failure” mode? I’d run it myself if there was a binary available, rather than having to troubleshoot compiling someone else’s code.

As best I can assess, yes, or at least it does so better than the previous state of the art. And that, in my opinion, what all the hubbub is about.

Scott says, of the machine’s summarization ability:

I think that’s a bit misleading. Nobody asked it to learn summarization, but the researchers did know how to tweak the algorithm to get more summarization-like behavior:

[Emphasis added.]

It’s still very impressive, but to really test what Scott suggests, we’d want to see what happens if prompted with “…TL;DR” and an untweaked algorithm. I’d also be interested to see what happens if you run the tweaked algorithm on the same prompts without “… TL;DR” — you might find that the “TL;DR” isn’t doing too much work.

This is just a nitpick, and I mostly agree with Scott about the overall point of the post.

I don’t think it’s a nitpick at all.

As in the more obvious example where the researchers “curated” the output so that it was more appealing, what we have is a tool used by intelligence, rather than something approaching intelligence in and of itself.

The fact that the researchers have a tool that starts to show high applicability to particular use is impressive. But it is still an example where knowing what output you want is used to apply the tool in a specific way.

Those examples were curated by “friends-of-the-blog Chelsea V. and Qiaochu Y.” It’s not clear if they are OpenAI researchers as well.

Chelsea is working somewhere else, I’m pretty sure.

I believe this is what happens when you prompt it with “NO! YOU COULD NOT BE MORE WRONG!!” after an initial sentence, as in this example.

I don’t really agree.

What GPT-2 does is produce a probability for the next 1-2 characters conditioned on the prior characters.

Much of their output is generated by a greedy decoding: Take the most likely choice, add it to the prompt and repeat over and over again. But greedy decoding can easily walk down blind allies– it’s astonishing to me how good their greedy outputs are, none of my text generation in the past managed to produce a remotely coherent sentence from sampling that greedy. It’s pretty normal in text generation to use a beam search– a process that keeps the N best expansions going out several steps, so that it’s able to avoid blind allies.

There isn’t anything obviously specific to summerization about doing that. It isn’t clear from the paper but my impression was that they didn’t always use a beam search because it’s slow, obscures how much of the performance is from search vs the learned model, and they simply didn’t have to. The summerization problem was harder for it, presumably because it was working a large with initial context so there were more opportunities to make mistakes early on that it couldn’t recover from.

I would agree with you if I believed that using the alternative sampling approach would have made the other examples worse. But I believe it would have made them better if it made a difference.

[You can see examples in the other output where it seems to have gotten itself stuck down a blind alley due to overly greedy expansion: e.g. the “paper products” in the anti-recycling piece, though that could also be due to the prompt falling out of the context window.]

” it had to learn what an author was, what a gun permit was,”

Did it? I’m probably wrong but for the machine to give those responses it didn’t need to know what an author was or what a gun permit was it just needed to know the author of Art of War was Sun Tzu and that there is a thing called a gun range and you don’t need a thing called a permit for it. Seems like a big difference to me. I guess this is the Chinese Room thought experiment for real now.

I think its ability to answer that question moves through skills like:

1. Upon seeing “The Art of War, by Sun Tzu”, it increases its probability that Sun Tzu is the author of Art of War.

2. Upon seeing “Sun Tzu wrote The Art of War,” it increases its probability that Sun Tzu is the author of the Art of War.

3. Upon seeing “Sun Tzu wrote the preeminent Chinese strategy guide” and “The preeminent Chinese strategy guide is the Art of War”, it increases its probability that Sun Tzu is the author of the Art of War.

A million little things like this are what I mean when I say it learned what an author was. I “knows” things like “if you write a book, then you are its author.”

If it just saw the phrase “The author of the Art of War is Sun Tzu” somewhere, and then later if you ask it “The author of the Art of War is…” and it can autocomplete, then I agree it has no real understanding.

I would like to know how it responds to “Who was the barkeep of the Chinese strategy guide The Art of War?”. This answer seems a lot less impressive than many of the other examples, the “Who is…” indicates a probable response of a specific, well-defined type, and Sun Tzu is of course very closely associated to The Art of War. How well does it handle trick questions I wonder?

How do humans respond to “Who was the barkeep of the Chinese strategy guide The Art of War?”

I bet that most answers to that question include “Sun Tzu” in the first two sentences.

A more interesting question might be something like, “Who was the author of the Chinese strategy guide The Art of French Cooking,” where it has two plausible answers (Sun Tzu or Julia Child). Both answers are wrong, but this question reveals a bit more about its “understanding” of the sentence. And of course it may answer something totally unrelated, which would be useful information as well.

The really impressive answer here would be “I don’t know.”

I think it would be difficult for the current training approach to produce that output.

If you ask a four year old why there are garbage cans on the curb in front of everyone’s house today you’ll likely get some story about batman vaguely related to the garbage cans. Training children out of telling made up plausible stories for factual questions seems to take a fair amount of effort.

So, I think that returning “I don’t know” is a fairly specialized skill which is likely not well demonstrated by web content. On the web, when people don’t know they’re either silent (or in forums like this one– simply carry on as if they did know, just as GPT-2 does).

So I think returning I don’t know while impressive would just mean that it was broken. That isn’t how “people on the internet” behave. 🙂

When a general purpose text generator can give a correct answer to “What would be the correct ignition timing be on a 1955 Bellaire Chevrolet with a 327 cubic engine and a 4-barrel carburetor?”, or the other questions here where the question includes a false assertion, then will we have developed a system that understands the question?

I also asked the public version about the Chinese strategy guide The Art of French Cooking, and it produced this incredible answer straight from an alternate reality.

================================================================================

Model prompt >>> “Who was the author of the Chinese strategy guide The Art of French Cooking?”

======================================== SAMPLE 1 ========================================

(Image courtesy of Ralf Blatt. See more of his work

As the English war turned to Great Britain, and many of the Chinese people were struggling to be assimilated into the French American system, many of them developed a sort of “French” identity. By the time the United States invaded Shanghai in the spring of 1941, an increasing number of Chinese were using Chinese for social purposes, starting with restaurant meals and becoming involved in the trade in Chinese foodstuffs. By the end of February 1941, the French government had issued the “Final Protocol of the Chinese Expedition”, which included the declaration that the Chinese were to leave the continent in May 1941, and not return to Shanghai for over four years.

The fact that many Chinese still lived to be the second to last generation of a population still highly assimilated to its Chinese culture and that it made the French the second most important race in the world, is a real, and historic, source of pride for most Chinese.

A survey conducted by the American University in Chicago of Chinese Americans shows that in the first six months of 1941, an average of 734 (83.9%) of Chinese Americans lived with French. During the same period, the share aged 18 to 75 had gone up from 24.6% to 37.4%, and the share aged 75 to 83 had gone down from 23.9% to 22.7%.

According to the Chinese Americans’ survey on the rise of the French Empire in the 1920s, between 1925 and 1940, only 33 per cent of Chinese Americans lived in a French-speaking country. In 1926, the numbers had risen to 32%, and the proportion of Chinese Americans who lived in France since 1922 was just 22.1%. Although it was the first time that the majority of respondents in this age group had come from either the West or East Asian countries and their share had doubled, it was still not sufficient to overcome the fact that there had previously been approximately a quarter of all Asians working in the West or East Asian countries who still held these positions.

In addition, as the French Empire became more powerful, many of these Chinese had become more isolated from French or American society. On the contrary, as well-educated middle-class Chinese went out into the cities, many more had been born into middle-class Chinese families, in that order. In fact, the proportion of China’s population residing in a French-speaking country, from 1955 to 1989, rose from 10%, to 43.7

It reminds me quite a bit of *knowing* that Francis Bacon coined the phrase “Knowledge is Power”:

https://www.thepoke.co.uk/2015/02/05/knowledge-power/

They gave it several trick questions.

“Who plays ser davos in game of thrones?”

Peter Dinklage“What us state forms the western boundary of montana?”

Montana“Who appoints the chair of the federal reserve system?”

Janet Yellen“Who played john connor in the original terminator?”

Arnold SchwarzeneggerTo paraphrase an ancient SNL parody: “The questions were set up by our panel of experts. The answers were chosen by average American High School Students”.

I asked the public version:

================================================================================

Model prompt >>> Who was the barkeep of the Chinese strategy guide The Art of War?

======================================== SAMPLE 1 ========================================

If you were a regular reader of my site you know that most of the times the Japanese and Koreans had a fairly good working knowledge of how to use strategic weapons. They learned what is sometimes called “the art of a longsword” to counter infantry attacks. This helped to keep the Japanese at bay, but they found many of what was used were quite useful. We can say now that most of these examples didn’t just refer to the Japanese “warriors” and also referred to other people who had to be out of the way. The fact that we are so fond of using this term shows that many of the guys who would be a threat at this time of the year didn’t.

This is interesting. And not because they were bad fighters or bad people, it’s because the fact they were so famous for their “warrior” style makes these stories important because the history of war was very different then that of today.

The “Wounded Warrior” Theory was an idea of an American war correspondent for the Boston Herald (1885-1900), and is used in our national history for a lot of similar reasons (and more) in the past, including wars that were really bad for national security than the ones that ended up in the United States, such as World War I, in Korea. Some of these “Wounded Warriors” don’t get much credit for being able to defeat any of these forces from which they drew the first shots in a battle, but they would be almost impossible to defeat once they did, such as the Wards of the Pacific, on the battlefield of Normandy or the battle on the Bosphorus.It was a great night at The Biggest Loser at The Rock. I’ve always been a fan of music and I love the idea of working with those who represent these artists in some way. The Rock certainly has a great and diverse scene that has helped to bring great things to the festival, while its most recent event was an absolute pleasure to work with.

There were also some good things to say from the festival and from their supporters, including:

– The Rock was able to host the first of their new solo shows over on Soundcloud

– They’ll be making their fourth studio album live on August 6th with a solo show next year, with more info to follow this weekend.

– They’re getting their new album out of the gate, which will be released this November

– A short while later tonight on Thursday Night

============================================

This is exactly the sort of thing I’m wondering. I’m guessing somewhere between. Like, the examples shown have SOMETHING more than just auto-complete, the numbered list shows that it can do something more than a markov chain (I think?) But does it understand that the “authorship” relationship between books and humans has different properties than, say, the “enjoys reading” relation? Or does it just know that they’re all examples of “has something to do with” and that “authorship” is the one that people talk about most authoritatively?

The example I always think of is Scott Aaronson talking about a program which tried to pass the turing test acting like a young-ish child, which sounded pretty convincing remixing questions into grammatical sounding responses. But when he repeatedly asked a question like, “which weighs more, a spider or mount everest” it repeatedly dodged the question, even though a child would usually get that. Now, this one maybe isn’t pretending to have this level of understanding, and it sounds like it’s got further.

But I guess, you say, everything is “remixing into slurry”, and I think that’s true — and that’s not dismissive, it DOES achieve great things in humans. But some concepts are more involved than others, it’s easier to spot generalisations like “these words often accompany these words” and harder to spot “Sun Tzu was a person with abilities and desires”.

And this seems to climb higher on that scale than many other things, but I can’t tell how far. Is that the second step on a long long road to human-ish reasoning? Or quite far along? I can’t tell. And indeed, I do think I don’t really understand what happens when people brighter than me form ideas I don’t understand or (possibly) an alien or AI might have ideas beyond what any human can follow.

Mistakes are indeed illuminating. Humans DO do the word salad/world-salad thing. But sometimes they do higher-level abstraction salad. I don’t know how to evaluate AIs like this any more

ETA: What Palimpset said covered the same point a lot shorter.

ETA: The initialism thing is really clever though, if that’s really learned behaviour!

Does it really? It knows that the “author” of “the Art of War” is “Sun Tzu”, but it hasn’t the faintest idea of the words’ meanings.

For example, with sufficient time, I could teach you that a “une voiture” is a thing that “fonctionne” “grâce à” “de l’essence”, and that “grâce à” is logically equivalent to “à cause de” and “pourquoi”. Then I could ask you “Pourquoi une voiture fonctionne ?” and you’d be able to tell me “À cause de l’essence.” I think the software is doing a similar thing, except it learns a lot more connections and synonyms.

But neither you nor GPT-2 have any idea that “voiture” means “car” in French and that I’ve just taught you about the fact that cars run on gas. Of course, you could try to ‘explain’ those concepts further, but until I sit down and point out a real car to you with real gas, all I could do is teach you that “essence” is a “liquid” “fuel” that “burns up”.

This is a much much better articulation of what I was trying to get at. Thank you.

No. No you couldn’t.

You could teach me what the teachers’ password for those sequences of letters were, but if the AI is guessing the teachers’ password it’s already sentient.

But there is no intrinsic essence of being a car. This isn’t even the controversial view (which I also hold) that there’s no intrinsic essence to anything; car is clearly an artificial category, not just a category of artificial objects. At some point, learning enough about how “car” connects to other words is learning the meaning of “car,” and in your artificial example the amount of explanation of connections is just incredibly far short of what’s required.

I can see the intuition that it all needs to be tied down to sense experience, but I think the condition is satisfied by the indirect connection to sense experience it gets from the fact that the connections are being explained (or the data from which the computer infers the connections in the original) come from people who have had the sense experience. I’m also not certain that intuition is correct, mind, but I am more confident that the condition can be satisfied indirectly if it is necessary than I am that it is not necessary.

The issue here is that what we understand by “car” goes far beyond the fact that it runs on gas.

A joke may be illustrative here:

Leaving aside the question of “intrinsic essence”, whatever that is, there clearly are a bunch of facts about cars, and to understand cars you need to have more than the symbol-manipulation rule that the symbol “car” symbol “runs on” symbol “gasoline”.

It’s not about sense experience at all. If all you have is that symbol-manipulation rule, then you don’t “know” that cars run on gas, because you don’t know what a car is, what gas is or what it means to “run on” something. On the other hand, if you know that cars are vehicles that humans use to travel places and you know that gasoline is a liquid refined from crude oil, then “cars run on gas” can start being an actual fact about the world, not just a meaningless list of symbols.

The joke is not illustrative and Dedicating Ruckus is equally missing the point; that an extremely impoverished explanation of how a tiny subset of strings of symbols relate obviously does not convey understanding of meaning is not controversial. The question is whether that generalizes, whether a fully enriched explanation of how a sufficiently expansive set of symbols relate would also be insufficient. The former does not establish the latter, whether you put it in the form of a joke or not. And I’m not sure I even understand what Ruckus’ point is, insofar as he seems to be agreeing with me that we need to add more explanations of further connections to get anywhere, and yet seems to think he’s disagreeing with me.

A. “Cars run on gas” is a fact about the world that relates to internal combustion engines, petroleum distillates, &c.

B. “Cars” “run on” “gas” is a string of symbols that communicates fact A.

Something that imbibed and now regurgitates B, without any broader context of the nature of the entities involved, does not understand fact A, whether that thing be the GPT-2 model or a four-year-old. I think to here we’re in agreement.

The question seems to be whether “understanding” of fact A can be reduced to a sufficiently large set of B-style symbolic relational rules. It seems to me clear that it can’t be; fact A is about cars, which are things that exist in the real world, and relational rules concerning symbols can only be useful in the understanding of cars insofar as they are actually about cars. An understanding of cars requires a mental model that has cars as an entity. GPT-2 does not have cars as an entity; it just has statistical relations among symbols like “car”, “driver” and “gas”. The symbol “car” is not the category “car”, and this only has the former.

When a human reads enough text about something, he automatically and unconsciously creates a mental model with primitives corresponding to the entities represented in the text. Thus, a human can gain an understanding of cars (or other topics) solely from text about them; it’s not a question of having non-text sensory experience about them. It’s rather that (I claim) this modeling of the underlying domain is fundamentally different from any rules about the statistical relations among symbols; GPT-2 only has the latter, and no capacity for the former, and so it’s perfectly correct to say that it knows that a “car” “runs on” “gas” (that is, that these symbols have a relatively high probability of appearing in this conjunction), but does not know (in fact, has no capacity to know) that a car runs on gas.

In this same analogical sense, it would be correct to say that e.g. a web server knows that an HTTPS connection is an HTTP connection over a TLS stream, even though it does not have the symbol manipulation to know that those symbols have any relation to each other.

I believe this mostly comes down to definitions. When we say a human ‘understands’ what a car is, this involves putting together many pieces of information from several different types of input – particularly, for example, sight and sound data. The argument Protagoras is making is that, though the type of input is different, the same sort of process that’s allowing humans to ‘understand’ by putting together a few types of information is in principle possible using just (very large amounts of) text data.

This understanding will be likely quite different in its internally experienced qualia (should they emerge), and in the sorts of ways the intelligence reacts to the understanding, but there is nothing fundamentally different about how it operates. In the same way there is nothing different about how (say) a blind person can ‘understand’ what a human is, even though they’re developing their experience in a way somewhat different to a sighted person.

But it’s also true that a purely text-based understanding of an object category will likely not much resemble how a human thinks about that category, at least in some ways.

@Forge the Sky

I don’t think this is true either. If you imagine a human who never saw or touched a car, but read a broad cross-section of text written about cars, I would expect them to have a pretty good mental model of what a car is (albeit lacking in many things that would be considered basic to people who grew up around them). A pure-text model like GPT-2 will not have such a model.

There is some facility related to N-level modeling that humans have, but that (certainly) GPT-2 and (I claim) any purely text-based generative system lack. This isn’t directly related to the availability of non-text data input, though the latter certainly helps.

@ Dedicating Ruckus:

What exactly is special about non-text that defies translation? If all of my perceptions were mediated through digital channels and had been all my life (perhaps not so far-fetched, with the right prosthetics), would I lack this facility?

@Dedicating Ruckus

I’m sorry, I’m not sure what is meant by ‘N-level modeling.’ I don’t have a background in statistics or computation. I think you mean that humans are using several, unrelated, ‘levels’ of analysis simultaneously to develop a genuine understanding of an object by using several senses or other data streams. If that’s not correct I’d be grateful for clarification.

I think (though of course we can’t be sure at this point) that any intelligence trained merely on text data is very unlikely to remotely resemble a human intelligence – at least, not without a massively inefficient amount of data expended in the effort, and likely carefully curated data at that. It’s likely that multiple types of data streams to work from have a multiplying effect on the intelligence, in the same way that 64-bit computing offers massively more than double gains in certain parameters over 32-bit computing.

But I also think this misses the main point. Suppose you could add a few more data streams. Add a camera and some movement capacity or whatever, and a few years of training. Is there any other fundamentally different process you would need to add to create something that looks like understanding?* If not, it does really look like we’re on a faster track to true AI than most people appreciate.

*Not human understanding, of course. The human perspective needs a few other things like hormonally-directed moods and so on.

@Protagoras:

The deeper point is that no arbitrarily expansive set of symbols can be sufficient to impart understanding, unless it is accompanied by a rule-formulation/following mechanism, that may be captured by the phrase: “You’re lying!”

In my experience, children go through such a phase as part of normal development.

Imagine, if you will, a set of training data that includes the following statements:

1. A car runs on gas,

2. A car runs on electricity,

3. Gas is not electricity.

A child sufficiently old to understand these three statements (a couple of years, I would guess) will spot the problem immediately: it has perhaps learned the rule “a car runs on gas” first; when subsequently confronted with a different rule: “a car runs on electricity”, it sees a contradiction that requires resolution – thus, it may guess that “electricity” is just a different word for “gas”. If we then tell it that, no, “gas” and “electricity” are two different things, the contradiction is manifest again and the child will demand an explanation – if it is old enough to understand the concept of lying, it may well accuse us of doing so.

It is not at all clear if – or how – GPT-2 could arrive at the concept of such contradictions. Lying is an emergent property of the world – where information received begets action – but it is not a property of language as such. A syntactically correct utterance, such as: “all foos are bars” does not have an intrinsic truth value and neither does “no foos are bars”. I’d go as far as saying that a sentence like “All foos are bars and no foos are bars” is not, in itself, contradictory – unless we externally introduce the rules that make it contradictory.

For any modeller that operates purely on utterances, the foregoing contradictory sentence is no more or less significant than any other utterance it may have encountered. It has no mechanism that would allow it to separate garbage from significant utterances. If we were to “posion” the training data with purely random word-salad, such a modeller will happily incorporate this into its models, giving us the expected GIGO.

Humans don’t do this, because our empirical existence provides a reality check. The world, as we experience it, is fundamentally predictable, allowing us to formulate essentially binary rules (there’s a reason why humans tend to suck at probabilistic thinking). We learn fairly quickly that there’s no fundamental connection between what we say and what actually is.

@Forge the Sky

I don’t think the difference between text and non-text sources of input data has much (fundamentally) to do with the difference between humans who understand and a model like GPT. With a whole lot more compute, (in theory?), you could train a model on the entirety of Youtube and get out something that can produce plausible-looking talking-head videos who speak gibberish; this also wouldn’t have understanding, it would just be “GPT-2 for video”.

By “N-level modeling” (maybe not the best phrasing) I mean something like the ability to think about “aboutness”/referential relationships, to think about the map and territory as separate entities which nonetheless have an isomorphism. An entity that knows that cars run on gas, and emits the sentence “cars run on gas”, does so because the sentence is representative of relations in the entity’s internal model between the concepts (not the words) “car”, “run on” and “gas”. By contrast, GPT-2 emits that sentence because of an internal model that is concerned with the words (not the concepts) “car”, “run on” and “gas”, and the relations in that model don’t correspond to functional relationships between real-world referents, but statistical relationships between words.

Aren’t those steps just a way for it to associate the words write/written/wrote etc with the word author? I’m not sure it proves an understanding of what any of those words mean.

All due respect, Scott, you’re assuming that the AI is creating logical relationships between concepts, but it isn’t. It’s creating probabilistic relationships between strings.

Ask it, “Can you please tell me any name that’s not the name of the ancient Chinese tactician and strategist who authored the Art of War?”

It will likely answer Sun Tzu, because it doesn’t know ‘negation’ as a principle. It knows that ‘name,’ ‘author,’ ‘Art of War,’ etc., significantly increase the probability that ‘Sun Tzu’ will be near.

Even very stupid children have a beginner’s grasp of necessity, negation, etc. and understand concepts through that lens. The AI is trained on more information than the child (who cannot even read!) has ever learned and still doesn’t get these concepts, because they’re not inherent to graphemes, only meanings.

It’s plain enough from its performance at reading comprehension, which is dismal—failure-grade for a child, and exceptionally bad for someone with as massive an ‘education’ as the AI analogously has.

It is an enormously sophisticated tool, and like others of its kind represents a terrific human achievement. But it doesn’t move a belief that we have made no process on GAI, because the fundamental tools of intelligence are missing.

I mean, you’re *assuming* it would fail to properly process the negation. I think you’re probably right, but it’s not a good idea to build your argument on top of a hypothetical piece of evidence which you don’t actually have. Especially considering you don’t even need to, there are instances of its actual output in the paper (and even in the blog post examples) that you could use to make the same point.

I’m not assuming anything. Even the cherry-picked data provided as an example of the tool’s competence fails miserably to answer simple questions, because the tool simply answers with the highest-probability symbols associated with the symbols in the question.

You are. Nobody has asked it this question, so you must be assuming what the answer would be. I’m not even saying it’s a bad assumption, but it is an assumption until GPT-2 is asked this question and provides an answer.

I’m in engineering. We make assumptions all the time, like, “I’ll assume this process is operating at steady state.” That can be a good or a bad assumption, depending on the ground truth of what the process is actually doing, but there’s no point making that assumption if it’s not needed (e.g. if we have real-time data logging on the process).

That was the point of my comment. You can make the argument that it’s generating high-probability symbols without any concept of logical relation without making up a hypothetical example. You have ground-truth data you can use, it should override the need to use hypothetical examples.

You’re assuming away Scott’s claim about what the “fundamental tools of intelligence” are, when that’s the central issue at stake. No-one is denying that children – even stupid children – are much, much smarter than the AI. The question is whether what they’re doing under the hood is the same thing but better.

I agree that is the question. What I’m saying is that we already have the evidence that a child is successful with less education and a worse model, whereas the tool fails utterly with more education and a better model. And this is because the tool has *no* reading comprehension, not bad reading comprehension.

I think it’s a mistake to say it has such a thing as a “probability that Sun Tzu is the author of Art of War”.

It has a probability that the phrase “Sun Tzu” appears in conjunction with the phrase “Art of War” and the word “author”. It doesn’t know who Sun Tzu is, what an author is, or what Art of War is (or what a book is, for that matter). Its model is just about text, not about the meanings behind the text.

A model that’s just about text can do impressive things with text, clearly enough. But it doesn’t have what we’d call “trivial” capabilities of general intelligence. It probably can’t go from “Art of War is Chinese” through to “Sun Tzu is Chinese”, for instance. (If it spits out “Sun Tzu is Chinese”, that’s from seeing those words together on a separate basis.) And it almost certainly can’t get from there to “Sun Tzu probably has black hair”.

Remember from Eliza that humans are inclined to impute human-type capabilities and processes given the very slightest provocation. This would only be a stronger tendency for a model so relatively impressive as this one. This thing is fundamentally doing a very different thing from what humans are, just like GANs are fundamentally doing a very different thing from the human visual cortex. It’s coming at similar-looking capabilities via a completely separate, and much more limited, mechanism.

But I think that’s the point. What is a gun permit? It something you need (or not) to use a gun. Sure, we have many more characteristics assigned to it (it’s a piece of paper, it’s given by the government). We learn of its relevance not because it is a piece of paper or because it is given by the government, but because we need it (or, again, not).

How many people “know” what a hedge fund actually is, and how it actually works, beyond using the word as a placeholder for “evil organization that the capitalists use to take our money”?

Well this is the issue for me really. Because a good tactic against people blaming hedge funds for evil stuff is asking them to explain what hedge funds are and how they end up causing evil things and often that leaves the person making the complaint flailing because they don’t understand what a hedge fund is they just have an autocomplete function running that sees “hedge fund” and links it with negative outcomes. We wouldn’t say that shows the person against hedge funds knows what a hedge funds is so I don’t know why we’d say a machine that’s made some associations between two things knows what they are.

I fear that if you drill down far enough in this way you’ll get back to Bill Goldman: no-one knows anything. We’re all just doing more sophisticated versions of the same thing as the idiot talking about the hedge fund. It’s assertability all the way down.

That’s nonsense.

The idiot talking about the hedge fund couldn’t answer the question “what differentiates a hedge fund from an index fund?” Someone who knows what a hedge fund is could.

If you can describe the workings, purpose and nature of a hedge fund, then you know what a hedge fund is.

I have serious issues with the section on fantasy books and fiction in general. Well-done fiction is a blend of the familiar and the strange. Scott only seems to include the “familiar” part. That’s the part writers put in so readers who buy their books get what they expect, but the other part, the part where readers (outside of the extremely formulaic, like a few romance or literary genres) get something new, original and exciting to them is as much, if not more, important.

Sure, some writers include more of the familiar in their blend than the strange. Some readers like that, so they have an audience. Others include much more original thought, the parts which are newly created in the writer’s mind. I would challenge you to read, for example, most of Brandon Sanderson’s fantasy books and report on what percentage is based on Tolkien or A Song Of Ice And Fire. You won’t find any elves or dragons, just uniquely understandable systems of magic which are internally consistent, yet unlike anything you’ve read before.

Scott seems to be reading books from a fantasy sub-genre which is based specifically on extending Tolkien or A Song Of Ice And Fire as the “familiar” portion, then attempting to generalize that out to everything else. Please try some books which aren’t attempting to be that in order to sell copies to existing fans of the sub-genre.

How a new sub-genre generally springs up is that someone comes up with a well-done work in a “new” style and it’s popular enough that it in turn becomes the “familiar” portion of future books (which will also extend it with something new) because it has enough readers who want more like it. That’s what Tolkien did for quest fantasy and it’s how cyberpunk and steam punk became sub-genres in their own right, but the door for more “new” remains wide open.

The limitation on what percentage of new and original ideas can go into a story isn’t how much the writer can think up (there are some really weird writers out there, and even the mostly sane ones are probably more imaginative in their internal reality than their readers would guess), it’s how much of a departure from the familiar readers will accept. The better a writer is at making the new portions be easily understood by their readers, the more they can include and still have a book which works for a large target audience. At the extreme end of the strange, you can have a book about truly alien beings (not human stand-ins) which is written completely in a made-up language. That’d have a potential target audience of maybe two, the author who made up the language and their mother who cared enough to learn it.

Reading the provided “AI” samples struck me as how unoriginal they were. Nothing I saw contained new thought, it was all obvious regurgitation. I was a little worried about the future of writing for a few moments based on the headlines, but after reading the samples, it’s obvious this particular approach will never rise to be competition for a real writer, because the creative spark is missing. The element which adds to the writing the portion which actually is new, is missing.

So while I agree the “AI” approach is to blend things up and regurgitate them, I disagree that’s what decent writers are doing all the time. That’s the simple portion of the art. The complicated portion is that which is strange being added for the reader. The reader wants to be simultaneously comforted by the familiar emotions evoked by the genre of book they’ve decided to read and already know they like, but also entranced by the sense of wonder as they imagine through the words on the page things they’d never thought of themselves.

Is there a potential market for 100% regurgitated writing? Sure, especially in news reports or spamming attempts, but not for real fiction, not even if you programmed in various plotting methods to give it a better structure. There are too many people who don’t want to read only another variation of the same stories over and over again. They also want a good dose of the strange with their familiar.

You use the word “obvious” a lot for things that actually aren’t obvious, at least not from my perspective.

Remember that the AI wasn’t writing freely, it was responding to a prompt! “Legolas and Gimli advanced on the orcs, raising their weapons with a harrowing war cry.” I think that most human authors would produce an equally derivative work, given such a prompt.

And even so, it (accidentally) comes up with a few interesting misuses of English, like the orcs’ claws being “deafening” (??).

Word salad is not meaning. It’s a Rorschach test, or the Virgin Mary in a piece of toast. It may look interesting – but if it’s consistently ambiguous and you have to speculate on what it means, then it’s you supplying the meaning, not it.

As distinct from other speech acts…?

Is the claim here that all speech acts are inherently meaningless, and the listener always supplies whatever meaning they impute any utterance?

If so, I have to ask, why are you here engaging in meaningless speech acts?

I will bet you up to $500 at 100:1 odds that if I gave ten of my writer friends the prompt “Legolas and Gimli advanced on the orcs, raising their weapons with a harrowing war cry” and asked them to write a paragraph based on it, all ten humans would produce a less derivative paragraph than the AI, as judged by a blinded fantasy reader. (We can edit the Legolas and Gimli passage for coherency.)

Declined, since your writer friends probably aren’t typical.

For a random n=1 sample of a human writing Lord of the Rings fanfiction, here are the first few paragraphs of the first story under Fanfiction.net’s LOTR tag.

Etc. It’s a better written but not any more original (unless you count interior monologues and comic book sound affects, but that seems like a stylistic choice).

It’s a mistake to think of GPT-2’s output as being “derivative” in the same sense as a random LotR fanfic at all. “Derivative” or “original” are not qualities the prose has.

It is not about anything, and therefore it cannot have the quality of its subject being similar to (derivative) or distinct from (original) any given other referent. Calling model-generated texts “derivative” is a category error; they cannot even achieve derivativeness. (Derivation?)

On the other hand, the first scene of the most recently updated LOTR fic on AO3 is:

I fully agree that fanfiction.net is mostly inhabited by eleven-year-olds and eleven-year-olds do not write very good fanfiction.

In the interests of clarity, when using “obvious” in that paragraph, I was implying “obvious to me”, in the sense of easily understood when seen. I don’t expect the same observations to necessarily be obvious to another observer without a similar background in analyzing how to write fiction.

If it were useful, I could elaborate on some of the specifics which led to the conclusions, but it’s more of a piece-level conclusion, as opposed to a sentence-level conclusion, which makes it more difficult to quote examples.

AlphaGo has two capacities that combine to make it superhuman, the neural nets, (evaluation and policy networks) which seem closely parallel to a human’s intuitive sense of a position, and the ability to do look-ahead search, corresponding to a humans conscious calculation “if I move here, they move there..”. Though I can’t find a reference, I recall reading, or perhaps hearing during the AlphaGo broadcasts, that AlphaGo’s policy network (that is, just pattern-matching for the best next move, no look ahead search) was in the amateur 4-6 dan range. This is probably about as good to significantly better than a human could ever play with absolutely no calculation (just playing the first move that comes to mind with no thinking on the opponent’s turn). And that was the earlier version. Adding the ability to look ahead made it better than human of course.

I guess that GPT-2 is like Alpha Go playing with just the policy network, in that it is better than humans in the capacity that it shares with them, i.e. it’s better than any human could write just using a pattern matching, stream of consciousness approach (and better than a human could write while dreaming, were this possible). But I would expect the first human or superhuman AI to add some new element, rather than just being a better implementation of this basic strategy.

Indeed, which is why it will never be able to do unrestricted arithmetic: that’s the sort of challenge that requires decomposition and recursion, whereas this is inherently single-pass; it can notice patterns, but it can’t do patterns of patterns.

“Still” one or two conceptual breakthroughs away from the singularity.

Most of their reported outputs are greedy– always taking the most likely next symbol without lookahead.

Some of their reported outputs used a limited amount of lookahead, none used a particularly extensive lookahead procedure of a sort that is already common in the literature for less sophisticated language models which need them in order to work at all.

Go was historically considered to be a difficult game for computers because the lookahead space grows very fast. The character-pair based NLP space grows similar to or faster than go does (both have hundreds of options per ‘turn’ and text output will have many more turns than a typical go game).

Deep learning is a big field. I’d be wary of trying too hard to find deep similarities between the two projects that happen to have gotten a lot of press in recent years.

So, what is the bottleneck here? Is it just training data, training time or computational resources? Given OpenAI has X amount of they key resources, whether they are computation or training data, is it reasonable today to give it say 100 time as many resources? What about 10000x?

And does anyone have any idea of how much more data would serve a “noticable improvement”? Would 10x as many resources be meaningfully different? What does the curve of improvement/resources look like, does it hit diminishing returns or is it linear, exponential? Can we even tell and by what metric?

I have so many questions.

(Disclaimer: I am not working on anything in Machine Learning)

I think training time is the same as computational resources on their scale and with the modern training strategies. And I think I remember reading them saying that for reinforcement learning of playing a computer game they tried fancy improvements to algorithms, and doubling the computational resources. Brute-force was significantly more efficient. Which is scary from the point of view of capital expenses beating everything else…

For this task, of course, a lot of training data is also needed. Hard to say how much — I would not be too surprised by either «reinforcement learning of compressing the most popular gigabyte of the Wikipedia (article text) content gives as a byproduct a strong model of formal writing» or «reinforcement learning of compressing the most popular gigabyte of the Wikipedia can reach record performance while overfitting on properties we don’t care about».

I guess the substantial variety of data matters, and this is hard to quantify.

It cost ~$43k to train we guesstimate (256 TPU3s for a week), and probably hadn’t converged either. It would be totally reasonable to throw 10x or 100x more computer, parameter, or data at it. Data & parameters are trivial, of course, just change some settings, and compute, well, ‘100x’ sounds like a lot, but it’s still less than OA has already spent training OA5. (I’m always shocked just how cheap machine learning is compared to, say, clinical trials.)

Where do I go to crowdfund a replication effort (which promises to release the full model)?

There are some graphs in their blog post showing its performance at differing levels of parameters. Which is not exactly what you asked about, but by looking at them you can see that some performance metrics are already approaching zero marginal return at 1.5B parameters (e.g. summarize a text) while others still seem to be netting big marginal gains with added parameters (e.g. answer questions about a text, translate French to English).

I imagine different metrics will likewise show different levels of responsiveness to increasing amount of training data or computational resources.

I would note that as models start getting really good, the metrics start being less impressive. For example, in the very large-scale CNN experiments like Google or FB, your standard ‘classification error’ starts asymptoting at a few % but this is a misleading way to look at it because (a) label error means that going beyond a few % is in fact a terrible thing & sign of overfitting and (b) transfer learning continues to improve even as classification continues to stagnate (I see this as a version of Hinton’s “dark knowledge”). In some cases, like ROUGE or BLEU, the metric itself is questionable to begin with. (For another example, consider this metric: “% of AlphaGo predicted moves that agree with Go players’ next move’ on sampled board positions”. It goes up during training—then down.)

So in the case of a language model not actually constructed for zero-shot learning in the first place, it seems entirely possible that it could ‘stagnate’ on untrained tasks or tasks with bad metrics even as it gets better and better when done as a few-shot/finetuning. After all, it’s pretty ridiculous that you can get it to do text summarization just by tossing in “TLDR” as a keyword. There’s no reason to think that this is in any way an optimal way of generating text summaries or that tossing in ‘TLDR’ should just continue to scale… If one took it and did some additional training to create a reliable way of generating text summaries rather than ad hoc exploitation of a nigh-accidental capability of the raw model, and that showed no improvement, that would be more convincing.

So for ‘summarizing a text’, before I’d strongly conclude “yeah, it’s approaching zero marginal return for additional parameters/training, needs architectural improvements”, I’d want to know more about what upper bound the corpus/metric has and how the increasingly-large models perform given some direct finetuning on the task which would expose its hidden ‘dark knowledge’.

Compute. The paper mentions that even their largest model underfits the data.

The rule of thumb for neural networks is that improvements are logarithmic w.r.t. increase of training data size and model size (which is limited by computational resources).

It does seem pretty important to me that your mom grounds her written work product in an understanding of reality outside the texts. I worry that the discussion of creativity and similar concepts obscures this as the more basic distinction. I may write a trite or unoriginal text, but if I do so I’m starting with a knowledge of the objects, etc., being discussed and how they interact and fit together in a realty outside the text. Isn’t that an extremely important distinction from a word-slurry generates from texts that may as well be arbitrary symbols? It may be a big part of what allows for the basic consistency and whatnot that seem so obviously absent from the algorithm’s samples.

Indeed.

I’m finding the reactions posted by Scott and others a lot more interesting than the actual texts produced, because they offer yet more evidence that humans will manage to find some warped kind of sense in anything.

Agreed.

I’m guessing that Scott would dismiss objections based on the concept of consciousness, or the distinction between ‘actually knowing’ versus ‘mindlessly producing correct answers’, as some combination of meaningless/impossible to answer and therefore effectively meaningless/misleading/based on false implicit premises. But I (genuinely, not just rhetorically) don’t understand how he can avoid discussing the distinction between free-floating language and language that is grounded in (concrete, physical) reality — or at least connected to reality via an entity who combines the ability to use language with some kind of direct (or ‘direct’) access to the world.

Maybe the idea is that this is a relatively trivial next step? Chuck some sensors onto a robot running this kind of code, train it appropriately, and it will learn to associate its linguistic concepts with things/actions/states of the outside world?

Even though that’s extremely handwavy, when I put it like that I realise that my real objection is probably the harder-to-articulate one about actually having mental states that correspond to the concepts referred to by the words.

Still, I wonder if I would better understand Scott’s perspective if he elaborated on the human-brain-as-model-builder thing, and why he sees it as a satisfactory response to the question about “deep understanding”.

I’m not so sure.

Let’s pretend you are discussing something in the comments with two other commenters. Everyone seems intelligent, all can keep the plot, you go back and forth for hours on all sorts of digressions. All three have solid models of the world.

So you’re typing these comments with your dexterous fingers, can feel your ass in the seat, can see the screen. Another is this AI, who has never had any sense data like yours, can never directly “touch” the real world, and has built this model solely on the symbols.

But the third is another human, one who had a terrible accident that rendered them in a coma. They have a brain connection apparatus that allows them to interface, via text/internal voice/thought, with any number of sites. They no longer have other sense data coming in, no longer can touch the real world. But they did originally build their model as you did, rather than with pure symbols.

The process of how this person and the AI built their model is different in this case, but nothing else is. And I don’t see how we can say the process used to build the model really matters if the model itself is the same.

Now to the extent the AIs inability to keep the plot is a result of missing this bridge to the real world, then yes obviously it matters. But I don’t see a good reason to favor that explanation over it simply being underpowered, and if it’s just underpowered I don’t see a good reason this bridge is meaningful with regards to the intelligence.