During my recent meetings with effective altruist groups here, I kept hearing the theory that effective altruism selects for people with mental disorders. The theory is that people with a lot of depression, anxiety, and self-hatred turn to effective altruism as (optimistically) a way to prove that they are good and valuable or (pessimistically) a form of self-harm in which they enact their belief that they deserve nothing and other people are more worthy.

And whenever this got brought up at meetings, people giggled, probably because they were thinking of good examples. I can’t deny there’s a lot of anecdotal evidence here (hi Ozy!). But when I look into it, it seems totally false.

My source was the 2014 Less Wrong survey data, which asked respondents whether they self-identified as effective altruists and whether they participated in effective altruist groups and meetups. Using that question, I separated the respondents into 758 non-effective-altruists and 422 effective altruists. The survey had also asked people whether they had been diagnosed with various mental illnesses, so I checked the rates in both groups. Including self-diagnosis there were no particular results; when I limited it to professionally diagnosed illnesses things got a little more interesting.

Effective altruists had about the same levels of anxiety disorders and obsessive-compulsive disorder as non-EA Less Wrongers. However, they had slightly higher levels of depression (22% vs. 17%) which was barely significant (p = 0.04) due to a large sample size. They also had more autism (8.5% vs. 5%) which was also significant (p = 0.02).
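For readers who want to sanity-check figures like these, here is a minimal sketch of a two-proportion z-test. The post does not say which test was actually used, and the counts below are reconstructed by multiplying the reported percentages by the group sizes (422 and 758), which assumes every respondent answered the diagnosis questions. Treat both as assumptions rather than the original analysis.

```python
import math


def two_proportion_z_test(hits_a, n_a, hits_b, n_b):
    """Two-sided z-test for a difference between two independent proportions."""
    p_a = hits_a / n_a
    p_b = hits_b / n_b
    # Pooled proportion under the null hypothesis that both groups share one rate.
    pooled = (hits_a + hits_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / se
    # Two-sided tail probability of a standard normal variable.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


N_EA, N_NON_EA = 422, 758  # group sizes reported in the post

# ASSUMPTION: counts are back-calculated from the rounded percentages.
depression = two_proportion_z_test(
    round(0.22 * N_EA), N_EA, round(0.17 * N_NON_EA), N_NON_EA
)
autism = two_proportion_z_test(
    round(0.085 * N_EA), N_EA, round(0.05 * N_NON_EA), N_NON_EA
)

print(f"depression: z = {depression[0]:.2f}, p = {depression[1]:.3f}")
print(f"autism:     z = {autism[0]:.2f}, p = {autism[1]:.3f}")
```

Under these assumed counts, both p-values land in the neighborhood of the reported ones, which suggests the quoted percentages and significance levels are at least mutually consistent.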

I expected this to be mediated by a tendency for autistic people to be more consequentialist and consequentialists to be more EA, and both these things were true to some degree, but even when I limited the analysis to all consequentialists, effective altruists still had more autism. Further, autistic people seemed to donate a higher percent of their income to charity than neurotypical people or people with other mental illnesses even separated from effective altruist status – that is, even among people none of whom were effective altruists, the autistic people seemed to donate more (effect not always significant) even though they generally had lower incomes.

I conclude that effective altruists are not unusually self-hating or scrupulous, but that they may be a little more autistic, and the reason why isn’t the obvious one.

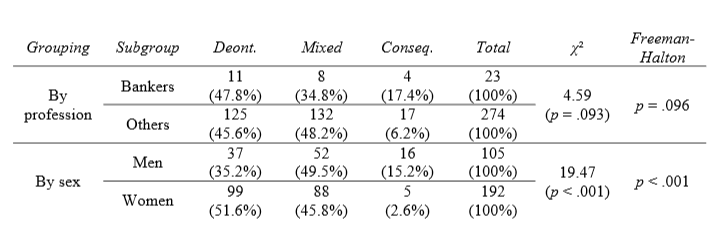

A caveat, by way of presenting another interesting result. Rusch (2015) (h/t @bechhof) studied whether bankers were more consequentialist (in this case, more likely to give consequentialist answers to the Trolley Problem and Fat Man Problem) than nonbankers. He found that they were. But then he checked for confounders and found the result was entirely an artifact of men being more consequentialist than women and bankers being predominantly male.

This is pretty astounding – men are almost six times as consequentialist as women!
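The kind of confound Rusch describes, where a difference between professions disappears once gender is held fixed, can be illustrated with a toy calculation. All numbers here are hypothetical and chosen only to mirror the rough shape of the story (men about six times as likely as women to answer consequentially, bankers mostly male). None of them are taken from the paper.

```python
import math

# HYPOTHETICAL rates, not Rusch's data: the chance of giving the
# consequentialist answer depends on gender only, never on profession.
P_CONSEQUENTIALIST = {"male": 0.60, "female": 0.10}

# HYPOTHETICAL gender mix of each group.
SHARE_MALE = {"banker": 0.90, "nonbanker": 0.50}


def overall_rate(group):
    """Consequentialist share of a group, averaging over its gender mix."""
    male = SHARE_MALE[group]
    return male * P_CONSEQUENTIALIST["male"] + (1 - male) * P_CONSEQUENTIALIST["female"]


def stratum_rate(group, gender):
    """Consequentialist share within one gender; profession is irrelevant by construction."""
    return P_CONSEQUENTIALIST[gender]


for group in ("banker", "nonbanker"):
    print(f"{group}: overall {overall_rate(group):.2f}")
```

In this sketch bankers look markedly more consequentialist overall, yet within each gender the banker and nonbanker rates are identical, so the raw gap is produced entirely by the difference in gender mix.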

On the other hand, in both my Less Wrong data in general and the effective altruist subgroup, men and women don’t vary much in consequentialismishness. Either Rusch’s data is wrong, or there’s a strong filter that acts to get only consequentialists into Less Wrong regardless of gender, or LW converts women to consequentialism (without further converting men).

Interestingly, effective altruists were much more consequentialist than non-effective-altruist LWers – 80% versus 50%. They also had more women than the non-effective-altruists. So it looks like LW filters for consequentialists so strongly it gets an even balance of consequentialist men and consequentialist women, and past that stage, filtering further for consequentialism doesn’t change gender balance much.

This points out a limitation of my statistics above. All it shows is that effective altruists don’t differ from other rationalists in levels of mental illness. It’s possible and indeed likely that both effective altruists and rationalists differ from the general population in all kinds of ways. It’s even possible that self-hate and scrupulosity drive people into the rationality movement in general, although I can’t imagine why that would be. It’s just that they don’t seem to have any extra power to make people effective altruists once they’re there.

It has long been known that vegetarianism is correlated with mental illness, though what gloating omnivores tend to omit is the fact that the diet tends to follow the onset of the disorder. I suspect that self-hate and scrupulosity are also correlated with rationalism and for the same reason – it appeals to people with unusual introspective tendencies.

Is it really an answer to the gloating omnivores that the vegetarianism seems to be a result, rather than a cause, of mental illness?

Yes, because it is used as an argument for meat consumption and people who are considering vegetarianism are likelier to be scared of becoming mad than being mad.

That sounds like a smoking lesion problem. If people who smoke are likely to die because there’s a lesion that both makes you smoke, and kills you, and you want to smoke, should you choose to do so?

The same decision theory which leads people to say “no” to that should lead them to not go vegetarian if it is associated with madness, even if the madness causes the vegetarianism rather than the other way around.

As far as I understand it, all decision theories agree that you should smoke in a realistic, plausible-in-real-life example of a Smoking Lesion problem. The philosophically interesting problem where “no” might be a reasonable answer arises when actually deciding to smoke is correlated with having the lesion even after setting all other things equal (including things like wanting to smoke, deriving pleasure from smoking, etc). It is unlikely that any real-world health problem has this Newcomblike structure.

In the case being discussed, if you already find yourself considering vegetarianism, finding the arguments for it plausible, etc, then it is very likely that actually deciding to be a vegetarian provides no additional evidence of mental illness.

Will smoking plus lesion kill you faster than the lesion alone? And having watched a close family member die due to lung cancer from smoking, is dying from smoking plus lesion as, or less, unpleasant than dying from lesion alone?

Because dying from lung cancer is fucking miserable, painful and degrading, I can tell you that, and if you want to kill yourself that badly, throw yourself off a footbridge under an out-of-control train first.

The correct answer is to smoke, in the smoking lesion problem. (EDT says you shouldn’t smoke, but that’s because EDT is retarded.)

Deiseach: In the smoking lesion problem it is assumed that smoking doesn’t cause cancer, ceteris paribus. The causal graph is assumed to be: cancer (- lesion -) smoking.

Aleph: I looked that up because I thought you were saying idiotic things, and it turns out LW-type rationalists thought imagining that smoking doesn’t cause lung cancer was a great idea to discuss some narrow distinction. Yeesh. Sometimes I think you all are more affective elitists than effective altruists.

@Harald: Blame that on decision theorists and philosophers, the Smoking Lesion problem was discussed by them decades before LW existed.

Alejandro, not all decision theories say not to smoke, as Aleph says. People generally agree that the answer is to smoke, and this is a strike against decision theories that say not to. You might label EDT as “not a decision theory” (or “retarded”), but it is still valuable to have basic tests like the smoking lesion to apply to new decision theories.

Also, use unicode arrows (copy & paste):

cancer ← lesion → smoking

Pardon me, gentlebeings, going to swear like a trooper here.

Fuck’s sake. “Imagine smoking does not cause cancer”.

Well, how about a thought experiment on the lines of: “Imagine swigging down a bottle of cyanide doesn’t cause you to go blue in the face and die choking horribly in pain? But instead it’s as tasty as tiramisu and banoffee pie in one! Should you therefore swig down a bottle of cyanide?”

Yes, IF YOU’RE A FUCKING IDIOT THAT CAN’T TELL YOUR ARSE FROM YOUR ELBOW EVEN WITH A MAP AND FULL DIRECTIONS WRITTEN IN WORDS OF ONE SYLLABLE.

I’m not going to be calm about this. Dying from lung cancer is HORRIBLE. Dying from lung cancer caused by smoking is STUPID AND AVOIDABLE. Anything that encourages, even as a thought experiment in a non-real world, people to start smoking/take up smoking after quitting/continue smoking will not evoke a wry smile of amusement from me, it will evoke “Where did I put that old billhook and can I get it sharpened because there are some heads need cutting off”.

Now – if we’re discussing the thought experiment of “Imagine doing X will kill you. This turns out to be down to a lesion which causes a desire in you to do X, and will kill you. Doing X will not kill you, the lesion will. Should you do X?”

If X is neither harmful nor pleasurable to you or others, it’s up to you to do X. You can if you want – and if the lesion makes you want to do it, and it’s painful or inconvenient to resist the urge, go ahead.

If X is pleasurable and does not cause harm to you or others, do X.

If X is harmful to you or others, don’t do X.

The only reason I can see to link the lesion to smoking is because there was an idea that smoking was in some way harmful even back when the Smoking Lesion experiment was created. Otherwise, where is the problem? Replace “smoking” with “eating icecream” (where the lesion does not cause you to over-eat ice-cream; one portion a day is sufficient) and who is going to be bothered by it?

The Smoking Lesion Problem only works as a problem where there are TWO harms and you’re being asked to choose the lesser of two evils (lesion will kill you anyway; smoking is minorly harmful but majorly pleasurable; should you do it or not, if it will cause you a small amount of extra harm but will not kill you faster, and will give you pleasure in the run-up to dying?).

And thus I finish as I began: FUCKING IDIOTS WITH SMIRKS ON THEIR FACES TRYING TO “GOTCHA!” THE RUBES WHO ARE OH SO STUPIDER THAN THEM BY CATCHING THEM OUT AS INCONSISTENT IN THEIR PHILOSOPHY.

GO DIE ON A CANCER WARD, YOU TOSSERS.

The answer of EDT to the smoking lesion problem and the “correct” answer depend on implicit assumptions.

EDT says you should condition your action on all the available evidence. It has often been argued that if the lesion affects your probability of smoking only through your preferences, then, since you can observe your own preferences through introspection, EDT decides “smoke”, which is arguably the “correct” answer under these assumptions. (This is known as the “tickle defense” of EDT.)

If the assumption that the lesion affects your probability of smoking through an observable variable does not hold, then EDT decides “don’t smoke”.

This answer may appear intuitively “wrong”, but I would argue that is indeed the correct one.

This can be shown by making the problem more explicitly Newcomb-like:

“Before you are born, Omega predicts with high accuracy whether you will ever smoke in your life, and in that case it introduces in your DNA a lesion which increases your probability of developing cancer. The lesion doesn’t have any other detectable effect, Omega’s action is not observable, and there is no known genetic test to detect the lesion.”

This scenario is fully consistent with the original smoking lesion problem (it just fills in some missing detail). EDT decides “don’t smoke” in this scenario.

Arguably, by the same reason why “one-boxing” is the “correct” answer to Newcomb’s problem, “don’t smoke” is the “correct” answer to this formulation of the smoking lesion problem.
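The tickle-defense version of the argument can be checked on a toy model. What follows is a minimal sketch with made-up probabilities, assuming the causal structure cancer ← lesion → desire → smoking, so that the lesion influences smoking only through an introspectable desire. It does not model the Omega variant, where no such observable intermediate exists.

```python
import math
from itertools import product

# ASSUMED, purely illustrative probabilities.
P_LESION = 0.2
P_DESIRE_GIVEN_LESION = {True: 0.9, False: 0.2}
P_SMOKE_GIVEN_DESIRE = {True: 0.8, False: 0.1}    # smoking depends on desire only
P_CANCER_GIVEN_LESION = {True: 0.8, False: 0.05}  # cancer depends on lesion only


def bernoulli(p, outcome):
    """Probability of `outcome` for an event that is true with probability p."""
    return p if outcome else 1.0 - p


def worlds():
    """Yield every (lesion, desire, smoke, cancer) assignment with its probability."""
    for lesion, desire, smoke, cancer in product([True, False], repeat=4):
        weight = (
            bernoulli(P_LESION, lesion)
            * bernoulli(P_DESIRE_GIVEN_LESION[lesion], desire)
            * bernoulli(P_SMOKE_GIVEN_DESIRE[desire], smoke)
            * bernoulli(P_CANCER_GIVEN_LESION[lesion], cancer)
        )
        yield (lesion, desire, smoke, cancer), weight


def p_cancer(given):
    """P(cancer | given), where `given` is a predicate over a world tuple."""
    total = sum(w for world, w in worlds() if given(world))
    hits = sum(w for world, w in worlds() if given(world) and world[3])
    return hits / total


# Naive conditioning: smoking is evidence of the lesion.
naive_smoke = p_cancer(lambda w: w[2])
naive_abstain = p_cancer(lambda w: not w[2])

# Tickle defense: once the desire is observed, the act adds no evidence.
tickle_smoke = p_cancer(lambda w: w[1] and w[2])
tickle_abstain = p_cancer(lambda w: w[1] and not w[2])

# Causal view: intervening on smoking leaves the lesion distribution untouched.
causal = sum(
    bernoulli(P_LESION, lesion) * P_CANCER_GIVEN_LESION[lesion]
    for lesion in (True, False)
)

print(f"P(cancer | smoke)          = {naive_smoke:.3f}")
print(f"P(cancer | abstain)        = {naive_abstain:.3f}")
print(f"P(cancer | desire, smoke)  = {tickle_smoke:.3f}")
print(f"P(cancer | desire, abstain)= {tickle_abstain:.3f}")
print(f"P(cancer | do(anything))   = {causal:.3f}")
```

In this model, conditioning on the act alone makes smoking look dangerous, while conditioning on the observed desire as well makes the two acts evidentially equivalent, which is the sense in which EDT with full evidence can agree with the causal answer.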

I’m just being silly about this, because obviously I don’t really think that any vegetarianism/depression correlation really bears on the merits for it. But just as an abstract matter, the “mental-illness-first” order could arguably compromise the case for vegetarianism at least as much as if it were the other way around. Imagine a hypothetical with grossly exaggerated facts. Only people who have been diagnosed with schizophrenia ever find the rationale for some policy proposal x to be plausible. Even though it would be strictly ad hominem, I think it might well be rational to consider whether there is no valid case for x if the only people who can be persuaded of the fact are mentally ill. It seems like a fair inductive inference that I might be an undiagnosed schizophrenic if I were to buy the argument under those circumstances, which is some reason to think that the argument may be invalid. Returning to reality, I don’t think that depression compromises rationality like schizophrenia does, and there are all sorts of other reasons why I don’t view that hypothetical as a real issue for vegetarianism, even though I remain a meat eater.

I see your point. The mind projection fallacy is something that intelligent and unconventional souls have to watch out for.

Sort of a tangent from mental illness-vegetarians and smoking-lesions but what’s up with the fact that by statistics and anecdotal evidence, mentally ill people smoke way more than everyone else?

Someone Has To Ask It, so it might as well be me: could the effects here be mediated by IQ? That is, higher IQ people tend to be consequentialist, and to donate more to charity. Since they also are more likely to be autistic, this explains the latter correlation. It helps explain the former correlation because standard deviation in IQ is greater for males than females, so that the (presumably upper class, educated) men tested by Rusch and other researchers would be on average smarter than (upper class, educated) women. Inasmuch as LessWrong selects people by IQ, then maybe these sex differences would disappear.

For the record, I doubt these hypotheses can explain the sex differences especially, which are quite large. And as a non-consequentialist, this is a non-self serving explanation. But it seems worth considering.

It would make sense that higher IQ people are more likely to be effective altruists, pragmatists, and consequentialists. They understand that sometimes the best policy may not be the one everyone wants, but the one that has the best long-term ROI in terms of maximizing utility.

[Reply deleted]

IIRC, they’ve done fMRI of people making decisions in the trolley problem, and when people make a deontological decision, an emotional part of the brain is highly active, but when they make a consequentialist decision, a different, more abstract part of the brain is active. So if IQ is involved, I think it’s more likely that having stronger abstract reasoning leads both to IQ and consequentialism, than that high IQ leads to consequentialism.

Josh Greene has reported these kinds of results, but from what I remember when I read up on this several years ago, his studies seemed dubious (e.g., in their measures of consequentialism-ishness, something other posters have noted is a problem in the studies Scott discusses in the post), and I think others have failed to replicate Greene’s findings.

While they all might correlate with intelligence, does autism correlate with scrupulosity and depression? If we’re asking questions about different illnesses or disorders, we can’t ask very specific questions about both autism/Asperger’s and depression/scrupulosity if we put them in no smaller categories than “brain disorders”. That’s as useful as classing ADHD and epilepsy together with no further distinctions.

Also, while they both might correlate with scrupulosity, how much does consequentialism correlate with vegetarianism?

I think the same kind of analytic mindset that lends itself to thinking deeply about thinking and computation also lends itself pretty well to self-hate. There are lots of paragons of virtue that are as close to objectively better than you as makes no difference. I think Rationalists are one of the groups that are very vulnerable to putting themselves in a Total Perspective Vortex, and I think EA is probably an outgrowth of that.

This reminded me of something of Eliezer’s I read a long time ago. From Algernon’s Law: A Practical Guide to Neurosurgical Intelligence Enhancement Using Current Technology:

I remember thinking “Huh, that sounds plausible. But I’m not entirely convinced.” Then later on he mentioned being “ten steps ahead of the rest of science again”, which made me definitively suspicious. I admit I haven’t gone through all his links, but I was wondering if anyone here knew what the current state of psychology is (regarding his hypothesis, or just in general). Scott pls.

Anecdotally, it seems to fit my personality well enough since I’m pretty phlegmatic and never grew out of the constantly-asking-“why” phase. On the other hand, the Forer Effect makes me suspicious-by-default of anything which elicits my self-identification.

Last week I got a fortune cookie that said, “You are unusually unsusceptible to the Forer Effect.” Seems accurate.

(Sorry, Scott. I know you hate that kind of humor, but it had to be said, for OCD values of “had”.)

That Rusch paper amounts to a hit piece.

They’re using consequentialism as a (very weak) proxy for psychopathy, which is… wow. I wonder how well “terrible experimental design” correlates with psychopathy. There are also well-known issues with using the trolley/bystander problems as consequentialism/deontology tests, which they blithely dismiss as “not completely unproblematic”, perhaps in a bid for Understatement of the Year. Those tests are inconsistent, highly subject to situational & participant variables, and most people who take them report that they didn’t employ any formal reasoning whatsoever. That’s kind of like asking people what gravity is, and then labeling some of them “string theorists”.

Alternative hypothesis: psychopaths think that pushing people in front of trolleys sounds fun.

Self-hate, scrupulosity, and interest in the rationalist community could all be related to a common factor of something like moral perfectionism or an anxiety about getting things morally wrong. But thinking along the same lines, I would also expect self-hate and scrupulosity to continue to correlate with effective altruism among LWers, so it’s a bit surprising to me that you didn’t find that to be the case.

Maybe I’m missing something but why would it be surprising to find that EAs were more consequentialist than everyone else? EA is quite literally a method of deciding the priority of charities by attempting to quantify the good they do per dollar. It’s hard to see that appealing to anyone who doesn’t implicitly buy into the premises of consequentialism.

On the autism point that makes quite a lot of sense. My little brother and father are both high functioning autistic / aspergers and one thing it was impossible not to notice growing up is that they are both extraordinarily strict when it comes to ethics (although they’re deontologists rather than consequentialists). I have no trouble at all believing that EAs were disproportionately on the spectrum given the way they explain their perspective on morality: very straightforward a-to-b with disturbingly little room for nuance.

> EA is quite literally a method of deciding the priority of charities by attempting to quantify the good they do per dollar. It’s hard to see that appealing to anyone who doesn’t implicitly buy into the premises of consequentialism.

Non-consequentialists need not be unconcerned with consequences; they just think other things are important. Take, for instance, a deontologist who thinks there’s an important doing/allowing distinction, that he ought not use other persons merely as means, etc. Donating to charity Effective rather than charity Ineffective is unlikely to involve any differences along those lines, and so inasmuch as our deontologist also thinks that consequences are important, he can prefer to donate to Effective.

There are some non-consequentialist reasons one might have for preferring less effective charities — e.g., one might think one has a greater obligation to those with whom one has some kind of morally significant connection (e.g., poor people in one’s community) — but not all non-consequentialists need think this, and even those who do can still be interested in overall effectiveness as one among other considerations to factor into their decision-making process.

A true consequentialist wouldn’t give their money to EA; they’d steal other people’s money and give that to EA.

I’m starting to see the GiveWell founders’ background in a whole new light.

It isn’t hard; EA should appeal to anyone who values consequences, not just to consequentialists. You appear to think that consequentialism differs from nonconsequentialism in that only the former assigns moral relevance to consequences. But this is a misconception: what distinguishes consequentialists, rather, is the belief that the value of consequences exhausts all relevant moral considerations. As John Rawls, a prominent non-consequentialist, remarked: “All ethical doctrines worth our attention take consequences into account in judging rightness. One which did not would simply be irrational, crazy.”

Not true, EA doesn’t presuppose consequentialism – see http://effective-altruism.com/ea/eg/effective_altruism_and_consequentialism/

Wow that gender gap is insane.

Also, I notice that virtue ethics isn’t mentioned at all in the paper. A quick read-through doesn’t seem to turn up any explanation as to why.

selection bias?

This only covers the intersection of EA and rationalism. There’s lots of people in my local EA group that are either completely uninterested in rationalism or are familiar with it but not engaged enough to fill out a LW survey.

Anecdote: I had moderate scrupulosity issues for quite a while (though I hadn’t heard the term) and have a mild, atypical OCD diagnosis, and I have found that rationality feels the most central to my identity when I am sad, depressed, excessively tired or hungry, or whatever. The worse I feel about myself, the more “Here is a set of tools and a goal, all connected to the core part of your self-concept you feel most secure in” feels appealing and important.

This may suggest a general basis for the effect.

Beware of generalising from one example. I’m the opposite: rationality feels less central to my identity when I’m miserable. (Not an effective altruist though.)

And for me my rationalist identification varies little by emotional state. So all possibilities occur. Though we don’t know anything about distribution yet.

I think that EA doesn’t really select for mental disorders, rather Less Wrong which is explicitly a self-help website selects for mental disorders and is also a gateway to EA.

Or: especially sensitive and vulnerable people (wimps) care more about the universe being a happier place. Like artists are reputed to see color more vividly, they feel pain and sadness more intensely, so they’re more motivated to do something about it. More stoic people will not worry so much, and not entirely understand why EA people do.

Obviously, preferring EA to ineffective altruism is going to have something to do with IQ and culture.

This was my guess while reading this post. Some rationalists self-medicate for their disorders with EA, others prefer FAI research.

Me, I troll rationalist blogs. It works surprisingly well. I think I’m making real progress.

You can’t? I don’t believe you. Have you tried? Which explanations did you rule out?

Can you imagine why self-hate and scrupulosity would drive somebody to martial arts? Does that help?

> especially sensitive and vulnerable people (wimps) care more about the universe being a happier place.

Which raises the question of why the excessively tough-minded don’t have mental illness. The answer suggests itself: they do, but in a way that involves taking it out on other people.

I read the article, but I don’t understand your point.

It’d be interesting to look at the personality characteristics of people on the leading edge of moral progress in general (e.g. abolitionists). Increased rates of autism seem like a decent bet; autists seem more likely to take their ideals seriously rather than just going along with what everyone else seems to be doing. (I’ve solved this problem by being more neurotypical than average but honing my nonconformism through years of practice.)

This is not to say that psychologically unsustainable EA constitutes moral progress–if it’s not working, the consequentialist thing to do is to stop doing it. Relatedly, anyone who takes the position that consequentialism should be avoided due to its unwanted consequences puts themself in an odd position philosophically. The steelman of this position, if anyone actually holds it, would probably be something like “you’ll get the best consequences if you compute everyday moral decisions using a nonconsequentialist framework”.

I think achieving consequentialist results through a nonconsequentialist framework is usually called rule consequentialism.

I think it’s more like two level utilitarianism. Two level utilitarianism holds that plain utilitarianism is right, but that in most ordinary cases you would be well advised to act on simple heuristics, like commonsense morality “don’t lie, keep promises” etc. and only in high stakes, atypical, long term etc. cases take the time to actually try to work out what will maximise utility, since if you try to do that in everyday cases you’ll end up unable to function and/or getting your calculations wrong all the time.

Rule consequentialism conversely seems to actually hold that the right thing to do is to follow the rules, even where you know more good would come from breaking them – which is just stupid rule fetishism imo.

I agree with David here. “Rule Utilitarianism” misses the point. Hybrid Utilitarianism (my term) isn’t the belief that being moral means deriving a set of rules that have the best possible expected outcomes and following those all the time, it’s the belief that for a human brain, punting every decision to explicit analysis is undesirable.

In other words, Act Utilitarianism as a backup system to some “natural” form of deontology.

Stupid rule fetishism – this is something I used to think. Reading Parfit changed that, or helped crystallize thoughts I was already having – the exact history isn’t relevant. Anyway, the question is “which rules?” If you say, “those rules that make things go for the best if universally followed” then you get something equivalent to act utilitarianism, or a clunky approximation thereof. If you say “those rules that make things go for the best if universally accepted” then it gets more interesting – for example a rule may make you feel secure, there’s utility to be had there independent of the rule being followed. I forget the reasons why but I remember being convinced that this fixes a lot of the issues to do with act utilitarianism.

Parfit seems to stop with universal acceptance, whereas I think there’s more analysis to do. I sometimes like to think of the moralist’s dilemma – which moral rules should I promote? Which criteria should I use for deciding which moral rules I should promote? If the two conflict, is this a problem?

I disagree that it’s philosophically odd. “Fucking stupid” perhaps, but not “philosophically odd.”

Consequentialism is fundamentally an observation that a diversity of tools are best-suited for different tasks. Consequentialism is itself a tool. It would be supremely unconsequentialist to argue that in the moral domain there was no advantage to using a diversity of tools. By contrast, even the furthest exaggeration of the position you’re describing –that is, the claim that consequentialism is a tool best-suited to precisely one purpose: telling us that we can make use of a diversity of moral tools– is consistent.

> Relatedly, anyone who takes the position that consequentialism should be avoided due to its unwanted consequences puts themself in an odd position philosophically.

Let’s say I have a certain set of preferences and values. I investigate consequentialism, carefully reasoning through the consequences of various actions or rules. Then I turn consequentialism on itself (because I’ve read Gödel, Escher, Bach) by analyzing the consequences of adopting consequentialism. I conclude that adopting consequentialism has a bunch of consequences that are (measured by my preferences and values) very, very bad. So I conclude that (in the context of my preferences and values) consequentialism contradicts (or at least undermines) itself, and I reject it.

I don’t see what’s so philosophically strange* about the above line of reasoning: I’m not saying, “I accept consequentialism, and thereby reject consequentialism,” I’m saying, “Assume consequentialism; here’s a refutation of consequentialism that results from that assumption; therefore consequentialism can’t be right (or there’s something wrong with my values and preferences).”

(It seems obvious to me that consequentialism can’t undermine itself unaided—some actual values and preferences have to be involved. But by the same token, consequentialism is of no use unaided: you have to have values or preferences to appeal to to judge various consequences.)

(* “Fucking stupid” is barely worth responding to, and may be a signal akin to xkcd 1475’s “Technically”.)

It sounds like you’re trying to import the idea of proof by contradiction from the “is” domain of propositions having truth values to the “ought” domain of statements about what you value. I’m not convinced. If applied fully generally, it seems like this sort of reasoning would lead you to adopt a set of values that caused you to be maximally satisfied with the current moment (e.g. *waves hands* “I value having a job, but I also value having values that I don’t need to work to achieve, and getting a job would mean work, so by moral proof by contradiction I no longer value having a job”).

I think the analogue to proof by contradiction in the “ought” domain is playing your moral intuitions off of each other, e.g. if your intuitions about the trolley problem with the lever and the trolley problem with the fat man contradict, which wins? How do you compromise?

Changing your values in response to what’s possible in the world seems unnecessary to me… your brain is a biological computer and you’re welcome to program your System 1 with whatever preferences and behaviors you want, but why corrupt abstract theories about what’s valuable by limiting them to what’s possible? It’d be like disallowing numbers above 10^100 when doing math on the grounds that numbers that high never occur within the natural world… it adds complexity and special cases and doesn’t really seem to buy you anything, and it backfires if you do happen to run into a number that high. Related: http://www.yudkowsky.net/singularity/simplified/

Another analogy might be not ignoring numbers above 10^100 when doing math, but ignoring numbers above 10^100 when doing math intended to make certain kinds of real-world conclusions.

In other words, if you want to calculate the lifespan of a very large black hole, it’s fine if you get a number greater than 10^100, but if you’re faced by a Pascal mugger, do not accept unboundedly high numbers.

Disallowing numbers this way would be a good thing.

> It sounds like you’re trying to import the idea of proof by contradiction from the “is” domain of propositions having truth values to the “ought” domain of statements about what you value.

If that’s what it sounds like, then I’m not being as clear as I thought. I only brought up “or there’s something wrong with my values and preferences” to concede that the anti-consequentialism result holds only in the context (or under the assumption) of a particular set of preferences and values.

Let me try again:

1) If you want to implement consequentialism, you have to supplement it with some values and preferences, otherwise you don’t have any way of determining which consequences are better than which other ones.

2) You can apply consequentialist reasoning to the question of whether to use consequentialist reasoning.

3) It is possible to find yourself in a situation where, using consequentialist reasoning with your values and preferences, the consequentialist outcome is that you shouldn’t use consequentialist reasoning.

4) In that situation, using consequentialism would be inconsistent.

5) I think the argument in 1-4 counts as a “position that consequentialism should be avoided due to its unwanted consequences”.

6) I do not think the argument in 1-4 is philosophically weird.

I concede that the argument in 1-4 is not an indictment of consequentialism in general, but it is a demonstration that consequentialism is incompatible with your values and preferences.

You don’t have to apply consequentialist reasoning to decide when to use consequentialist reasoning.

I can’t see how that would follow unless all forms of consequentialism are so badly broken that they would make things worse. I don’t think it follows just from recursing consequentialism, like a barber paradox.

“xkcd, which covers a lot of different topics but has essentially only one main idea, which is that the guy who writes it and the people who share it are better than everyone else.”

“How dare this person say something I disagree with like they think they’re better than me” is less worthy of serious consideration than either “technically” or “fucking stupid”.

Randall Munroe is culturally uninsightful and insufferably smarmy, and “fucking stupid” and “technically, …” both serve significant useful functions as brain-clearers for the speaker.

“Gender Differences in Response to Moral Dilemmas”

https://t.co/Xi07DFKM9h

“Beyond Kohlberg vs. Gilligan: Empathy and Disgust Sensitivity Mediate Gender Differences in Moral Judgments”

http://papers.ssrn.com/sol3/papers.cfm?abstract_id=2486030

I honestly can’t tell whether or not you’re being sarcastic there at the end when you can’t think of a reason high scrupulosity would drive someone to join Less Wrong.

Or self-haters. The hypotheses come very naturally to me.

Have you considered the possibility that bankers being mostly male may be an artifact of bankers being more consequentialist, rather than vice versa?

You can settle this easily by looking at whether female bankers are more consequentialist than the average woman. It’s safe to assume they did this.

I tend to think the gender gap is a difference in signalling rather than a difference in reasoning. Men choose to signal “I am tough-minded and hard-headed.” Women choose to signal “I am sensitive and abhor violence.”

But I’m actually quite surprised that female bankers don’t also choose to send the “tough-minded” signal. I’d like to know more about the context of the survey. Were people surveyed at work or at home?

There were only 7 female bankers in this sample. That’s not enough to tell.

PS – there’s an example of Simpson’s paradox. Both Bankers and Others are more consequentialist than both men and women.
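On the sample-size point, here is a rough sketch of why 7 respondents can’t settle much. It computes a standard Wilson score interval for a proportion. The counts (4 of 7, and 400 of 700) are made up purely for illustration and are not taken from the study; the function name is my own.

```python
import math

def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a binomial proportion (z=1.96 is roughly 95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p_hat = successes / n
    denom = 1 + z * z / n
    centre = (p_hat + z * z / (2 * n)) / denom
    half = z * math.sqrt(p_hat * (1 - p_hat) / n + z * z / (4 * n * n)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)

# Hypothetical counts, NOT from the survey: the same observed rate at two sample sizes.
low_small, high_small = wilson_interval(4, 7)
low_large, high_large = wilson_interval(400, 700)

print(f"n=7:   {low_small:.2f} to {high_small:.2f}")
print(f"n=700: {low_large:.2f} to {high_large:.2f}")
```

With seven people the interval covers more than half of the whole 0-to-1 range, so almost any underlying rate is compatible with the data.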

There is not a strong distinction between signaling choices and actual, true reasoning in humans.

As a psychopath with a well-constructed mask of sanity, I find this sort of erasure extremely offensive.

Apologies, I should have said “most humans”

Sounds like your reasoning process is motivated by your self-image as a psychopath. So it’s a kind of self-signal (to future versions of you, to tell them what kind of person you are and keep their behaviour consistent with how you desire to think of yourself.)

People do that shit all the time and it still counts as signalling as far as I’m concerned. I only just now realised that other people might not count self-signals as signalling.

Yes, that is obvious. But they are different frameworks of analysis. Analysing responses to trolley problems as signalling rather than as “moral reasoning” is more interesting (trolley problems are abstract in a way human minds are simply not), and I meta-predict it would also be a framework one could use to generate better predictions.

> It’s safe to assume they did this.

If SSC taught me anything, it’s that it’s not.

A good topic for the next Open Thread might be a fill-in-the-blank question:

If SSC taught me anything, it’s that ________.

Effective altruists aren’t insane, but they do have an unusual value system.

As several people noted in the other thread, most people have concentric concerns, from themselves out to their family, then community, then nation, then the rest of the world. Steve Sailer makes a distinction between “concentric loyalties” (a concept that seems to come from Gordon Allport) and “leapfrogging loyalties”:

Effective altruism looks like leapfrogging loyalties (similar to Peter Singer’s expanding moral circles), because it so strongly emphasizes aiding strangers outside one’s kin and community. Animal rights and environmentalism are the extreme examples of leapfrogging loyalties: leaping over one’s entire species.

From an evolutionary standpoint, altruistic behavior usually derives from kin selection or reciprocal altruism, neither of which is present in cases of leapfrogging loyalties. This makes that behavior look rather strange. So what could be the payoff? Sailer argues that the payoff of “leapfrogging” loyalties is signaling and status-seeking within one’s own circle. (Of course, there could be other factors, also, such as various moral arguments that people find convincing, but we should still suspect signaling and status as possible influences. Even scrupulosity could be seen as a type of status-anxiety.)

Leapfrogging loyalties are not weird in a modern, educated, Western, liberal context, but they are weird in a global and historical context. Whether concentric altruism or leapfrogging altruism is more “effective” depends on your values, your time preference, and also on a bunch of empirical questions that we don’t know the answer to.

Personally, leapfrogging altruism is not effective for my values. Maximizing strangers’ lives saved in 2015 is nice but seems to have a really low time preference. I am more interested in aiding people who are productive, altruistic, and share my values. This means investing in people and communities that I know.

In short, I am an eigenaltruist because I believe in being altruistic mainly towards other altruistic people who can pay it forward. I suspect that eigenaltruism is game-theoretically superior to merit-blind altruism and also more sustainable and psychologically palatable. I would like to see a version of effective altruism that emphasizes concentric eigenaltruism instead of leapfrogging altruism.

I think that in the case of EA, marginalism has a lot to do with it. Let’s say that both Alice and Bob have concentric “altruism gradients” but (coin flip) Bob’s is steeper than Alice’s. So Bob spends some money on people moderately close to him and less money on people further from him. Alice comes along, sees that the people close to Bob are already well catered for by her standards, and that her money would be best spent on the people further away (whereas if Alice had gone first the people closer to her would have got more of her money). Some time passes, and Bob notes that the people far from him are already well catered for by his standards but the closer people are neglected by his standards and spends more on them. Alice calls Bob narrow and parochial and Bob calls Alice a leapfrogger.

Of course, in this scenario, if Alice hadn’t been around but Chris had, Chris having a steeper gradient even than Bob, then Bob would have ended up being the leapfrogger.

I’m sort-of in two minds about EA’s marginalism, which is one reason why some of my charity budget goes to the charities that EA likes and some of it goes closer to home. Also, I like the movement but I can’t bring myself to be a full part of it.

Also, the vibe I get from EA really doesn’t say “loyalty” at all – more like “hot money” looking for good investment opportunities.

Also, the vibe I get from EA really doesn’t say “loyalty” at all – more like “hot money” looking for good investment opportunities

That’s why it doesn’t appeal to me, though in theory it should; it is stated to be about deciding where charitable intervention makes the most difference/does the most good on the most efficient basis with the least wastage, and then you give your 10% donation or whatever to the highest-ranked charity – which seems to be, at least for now, mosquito netting?

But the thing is, it seems to inculcate or encourage a rigidity or inflexibility of attitude; everyone is giving to the number one ranked charity. You should give to the number one ranked ‘most bang for the buck’ charity, and never mind if you think that mosquito netting is pretty well covered, but something lower down the list needs more attention, or if there’s something that affects fewer people but in a more profound way.

And you apparently can’t (or shouldn’t) try “Well, I’ll give so much to mosquito netting and then make another donation to a lower ranked/unranked charity”. No. To be most effective, if you can afford to make two donations then you should make one large donation to the number one charity and forget the second donation to the research effort for the 60,000 people globally who suffer excruciating agony every waking moment due to Whatever Syndrome – after all, there are only 60,000 of them versus the millions affected by malaria, the netting is a proven intervention while the research will go on for years and may lead nowhere, etc.

Secondly, if the first ranked charity switches to something like, oh, providing solid-gold bath taps (instead of plain old gold-plated) for millionaires (who, after the property crash, are reduced to living in hovels worth peanuts, the poor mites) then you should give to that, because by objectively-determined-standards-of-most-bang-per-buck, there is 100% conversion of donations into solid-gold bath fittings, there are no misuses of the fittings as there may be with mosquito netting used for fishing and being toxic, there is 100% delivery of the bath taps to intended recipients, the recipients report vastly improved quality of life and satisfaction, etc.

It’s no use arguing that millionaires don’t actually need solid-gold bath taps while people suffering from beri-beri could use vitamin supplements; that’s a decision based on emotion and subjective preference, not cool clear reasoning. You can’t argue with the figures!

The thing about the number one charity… The first person to invent EA is going to run the numbers and is likely to find a charity that’s clear top-of-the list. Once you get a movement going, you get GiveWell and the like saying “OK, our previous number one charity is awash with cash, how about this one?” – you sort-of end up with an equilibrium where the marginal utilities for two charities are near enough the same. Basically, if mosquito netting is pretty well covered, the extra-bang-for-extra-buck is likely to be low, and I think GiveWell etc. recognise that. My inner optimist says that as the EA movement grows, more and more charities will end up being drawn into that equilibrium, and every cause can get its share. My inner pessimist says that there will be wild oscillations and people getting annoyed and disillusioned and all sorts of chaos and doom – and “traditional” charity donors feeling they’re being taken for granted. This last bit is one of the reasons I make sure I’m doing some of the old school stuff as well as the EA approach.
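The equilibrium idea can be sketched as a toy model. Everything here is an assumption for illustration: each charity’s total good is taken to be `scale * log(1 + funding)` (so returns diminish), the `scales` values are invented, and every donor is assumed to give the next unit of money to whichever charity currently has the highest marginal return.

```python
def marginal_utility(scale, funded):
    """Derivative of scale * log(1 + funded): the good done by the next unit of money."""
    return scale / (1.0 + funded)

def allocate(scales, budget, step=1.0):
    """Greedy allocation: each unit of money goes to the charity with the best marginal return."""
    funded = [0.0] * len(scales)
    for _ in range(int(budget / step)):
        best = max(range(len(scales)),
                   key=lambda i: marginal_utility(scales[i], funded[i]))
        funded[best] += step
    return funded

# Hypothetical effectiveness scales for three charities (not real figures).
scales = [10.0, 6.0, 3.0]
funded = allocate(scales, budget=1000)
marginals = [marginal_utility(s, f) for s, f in zip(scales, funded)]

for s, f, m in zip(scales, funded, marginals):
    print(f"scale={s:4.1f}  funded={f:6.1f}  marginal={m:.5f}")
```

In this toy version the top charity still gets the most money, but every charity ends up funded and the marginal returns come out nearly equal, which is the optimist’s picture. It says nothing about the pessimist’s oscillations, since it assumes donors react instantly and with perfect information.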

I think the gold-plated bath taps thing is a red herring – I’ve seen or at least heard of some sites who are very interested in overheads and things like that, but I don’t really think of them as the EA movement – as far as I can tell, the EA movement is more obsessed with QALYs. Whatever else is wrong with QALYs (lots of disability rights people don’t like them – I’m seeing a theme here), I don’t think they have that problem.

That would need people to update lists, and besides, as you know yourself – if the popular perception of “most efficient charity” is mosquito netting, people will bung a tenner in the post to the mosquito netting charity and not bother finding out if it’s awash with cash or what the number two charity is.

Red Nose Day for Comic Relief is coming up on the neighbouring island, and I’m never entirely sure what the money raised goes to; a lot of various projects, but it seems more like (excuse my cynicism) a way of everyone getting vaguely involved in ‘doing something good’ with a payoff for participants of fun, feeling the glow of virtue, and being part of a mass social movement, and less about ‘where is the money going? is it working? do I agree with the aims of the particular charity?’

Oh, excuse me, the bath taps are solid gold – the poor millionaires had to down-grade to merely gold-plated, due to the horrible downturn in their standard of living after the economic slump.

It’s so hard for them to adjust to this lesser standard of living, don’t you know? Poor people are used to being poor and miserable, but someone who deserves the better things in life because they’re worth it suffers so much more! 🙂

That’s the QALY problem right there: the ‘children frolicking on the beach’ example Peter Singer used when lunching with Harriet McBryde Johnson, according to her article. If your measure of ‘quality of life’ downgrades the experiences of the actual people in preference for the ideal ‘most healthy and happy a person could be’, then naturally you’re going to say “Oh, it would be so terrible to lose the use of my eyes/legs/sense of taste, I’d rather be dead!” and ignore that lots of blind, crippled or otherwise disabled people manage to lead satisfactory lives. I mean, by the ‘frolicking on the beach’ example, my parents should have had the right to euthanize me, since although I have the use of my legs, I preferred to sit and read as a child rather than run up and down being physically and strenuously active.

Deiseach: If the utility of being crippled is determined by what people would do after they are crippled, rather than what they would do to avoid being crippled, you’re saying that changes in people’s happiness which are caused by psychological accommodation to bad circumstances count towards utility.

Couldn’t that reasoning lead to the conclusion that the greatest increase in utility might be done by teaching the poor to be happy being poor? Perhaps instead of giving away malaria nets, you should start a religion which teaches that people who die of malaria get an unusually good afterlife.

Jiro, someone with the use of both legs might well say “Oh, I’d rather be dead than crippled!”

Okay, so they’re in a horrible car crash and need both legs amputated. You run up to them with a gun and say “You want to be dead rather than crippled? I can do that for you!”

Now, some people may well say “Damn straight, blow my brains out, mate!” But other people might say “You know what? I’ve changed my mind, I think I can learn how to use a wheelchair”.

That’s an odd thing to say, given that AMF was removed from Givewell’s list of recommended charities for a year precisely because Givewell thought they didn’t have enough useful things to do with the money. “Room for funding” is explicitly a thing EAs think about.

(AMF’s situation changed and they’re back on the list again. Mosquito netting, apparently, is not so well covered at the moment.)

Do you, seriously, think it is in any way credible that the sort of calculation EAs do will lead to the conclusion that solid-gold bath taps for millionaires do more good per unit money given than (say) deworming or anti-malaria nets or just giving money to very very poor people? Seriously?

OK, then. Here’s why you’re wrong. Helping rich people is much, much less effective per unit of money than helping poor people. This isn’t some kind of coincidence that might happen to go the other way next week and oblige us to devote all our charitable efforts to providing millionaires with gold taps, it’s practically what being rich means.

Why? There are all sorts of things you can do to improve a person’s welfare. Most of them cost money, directly or indirectly. Not being entirely stupid, people will tend to do the most cost-effective first. That means that by the time you’re a millionaire, you already have the opportunity to do all the cheap things that would make you happier/healthier/…, and you have probably done them. Or, if not, there’s probably a reason why not and it’s unlikely to be effective for someone else to try to do them for you.

On the other hand, if you are starving in the poorest parts of Africa, there are any number of cheap things that would improve your lot — but you can’t afford them.

It’s possible to imagine some weird hypothetical world in which buying gold taps for millionaires is the most effective way to improve total happiness. Perhaps for some reason 99.9% of everything that goes to poor parts of the world gets stolen. Perhaps those millionaires are not ordinary human beings but alien lizardmen with a hugely greater capacity for joy and sorrow than we human beings have. (You may notice that these are crazy and improbable hypotheses. This is not unrelated to the way that “buy gold taps for millionaires” feels like a very unpromising goal for charitable action.) If we lived in that sort of weird world then EAs who care about total happiness might indeed choose to buy gold taps for millionaires. I suspect, however, that even here on earth most EAs don’t have “maximize total happiness” as their exact goal. They might, e.g., prefer something more like “maximize minimum happiness” or “maximize some measure of overall happiness that gives priority to the welfare of people at the bottom of the happiness scale”.

(I don’t think I really believe that you think buying gold taps for millionaires would ever be a utility-maximizing move. Which raises the question: what the hell are you trying to do with that hypothetical, and why?)

I’m saying “cost-effective” need not be the best way to support a charity. If one million euro will buy solid gold bath taps for 100% of the applicants for solid gold bath taps and only buy mosquito nets for 75% of the applicants for mosquito netting, then on the figures the bath tap charity is more cost-effective.

I’m not saying we should ignore wastage and inefficiency. I’m not saying we should disregard ‘most bang for buck’ in outcome. I am saying that Effective Altruism sounds (and this is a subjective, emotional reaction rather than a reasoned one, I fully admit it) like it can or could devolve into ‘plug in the numbers and go blindly by them’ rather than a person using their own discretion and weighing up of the good done.

I have money to donate. I’m not sure where to give it. Oh look, here’s a site that will tell me what to do!

Well, great, and I mean that sincerely. But does my donation to Number One Most Effective Charity – when everyone is donating to Number One Most Effective Charity according to what Charity Ranking Site tells them is Number One – really make more of a difference than giving it to Number Sixty-Eight on the list? Or to a local charity? Or handing it to a beggar on the corner?

Indeed, the removal and re-addition of AMF from GiveWell’s recommendations is to me a sign that the equilibration thing is happening, but it’s also what makes me worry about oscillations, and partly what prompted the “hot money” thing in the first place.

If some people are slow about switching, that will damp the oscillations, if too many are slow about switching, things won’t equilibrate, but it looks like that’s not a problem at the moment.

Deiseach, you are radically misunderstanding what constitutes cost-effectiveness if you think that buying gold taps for 100% of the people who want them is necessarily (or even likely) more cost-effective than buying mosquito nets for 75% of the people who want them, because mosquito nets are really cheap and save lives, whereas gold taps are really expensive and do more or less exactly the same as not-gold taps.

Are you maybe mixing up the sort of QALYs-per-dollar figure EAs like to use with the (largely useless and irrelevant) “percent overhead” figures one can find on some websites?
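To make the distinction concrete, here is a small sketch comparing “share of applicants served” with “benefit per euro”. All of the numbers (unit costs, applicant counts, and especially the welfare units per item) are invented for illustration; only the 100% vs. 75% coverage figures come from the example being discussed.

```python
from dataclasses import dataclass

@dataclass
class Programme:
    name: str
    unit_cost: float         # euro per item (hypothetical)
    applicants: int          # people asking for an item (hypothetical)
    benefit_per_item: float  # arbitrary welfare units per item (hypothetical)

def items_bought(p, budget):
    """Items the budget can buy, capped at the number of applicants."""
    return min(p.applicants, int(budget // p.unit_cost))

def coverage(p, budget):
    """Fraction of applicants who receive an item."""
    return items_bought(p, budget) / p.applicants

def benefit_per_euro(p, budget):
    """Welfare units produced per euro actually spent."""
    items = items_bought(p, budget)
    spent = items * p.unit_cost
    return items * p.benefit_per_item / spent if spent else 0.0

budget = 1_000_000
taps = Programme("solid-gold taps", unit_cost=10_000, applicants=100, benefit_per_item=0.01)
nets = Programme("mosquito nets", unit_cost=5, applicants=266_667, benefit_per_item=1.0)

for p in (taps, nets):
    print(f"{p.name}: coverage={coverage(p, budget):.0%}, "
          f"benefit per euro={benefit_per_euro(p, budget):.6f}")
```

Under these assumed figures the taps win on coverage and lose badly on benefit per euro, and it is the second kind of number that the QALYs-per-dollar style of ranking is about.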

If in fact everyone were donating to Givewell’s top charity and to nothing else then there would be a big problem. But they aren’t, not by a long way, and if they were then Givewell and other such organizations would start making recommendations more like “pick one of these four at random and give to it”.

Deiseach, I can see why GiveWell’s model of “we’ll tell you where your money belongs!” makes you uncomfortable–it makes me a little uncomfortable, too. But I don’t think your thought experiment of the buying bath taps for millionaires bears out, for a couple reasons. The first is that people who do things through GiveWell presumably sanity-check GiveWell’s recommendations; if GiveWell suggested people donate to millionaires (or something similarly silly or potentially self-serving) people would notice, and not listen to GiveWell. The second is that GiveWell has no incentive to lie about what the best charity is, as long as the charity is remotely plausible and not, say, “GiveWell’s founder.”

Importantly, there’s not a button that you press that makes you give all of your money to whatever charity GiveWell tells you to forever–only now. Like, GiveWell’s not gonna tell you to donate to AMF to reel you in, and then once you’ve committed to giving all your money to GiveWell’s top charity forever, switch to gold bath taps for millionaires.

I hope you can also see why GiveWell’s model of “we’ll tell you where your money belongs!”, despite its mild squickiness, is in fact the optimal model from an efficiency standpoint. There is a best charity, and although figuring out the actual best charity is really really hard and it’s likely that neither GiveWell nor I will find it, GiveWell is much likelier to get close than I am. GiveWell is an organization devoted entirely to figuring it out; I’m one dude, with not much of an inclination to spend that much time on the problem. And,

I think it might well.

My objection is not so much to “This site will recommend charities that do the best with the money they receive”, it’s – quite frankly – the proselytisation involved. If you’re not an Effective Altruist, you might as well be using $100 bills to light cigars as what you’re doing!

Well, maybe I prefer to give to [Charity of My Choice] because it is personally meaningful to me, or addresses a problem I think needs addressing urgently, or it is where I want to give?

I don’t mind people wanting to maximise efficiency; I do mind the zeal of the convert attitude on show that if you’re not EA and if you’re not doing it to these exact limits and if you’re not using a site like GiveWell, you may as well be playing ducks and drakes with your donations and you are in fact making the world worse.

@Deiseach:

Hey, no one’s saying that! Of course your charitable donations make a difference even if they’re not going where GiveWell told you to send them. You already know that! You can see the good they do.

It’s possible they won’t do as much as they would have had they gone to GiveWell’s top charity–but look, if you’re gonna give away your money for free, you’re already being a Really Nice Person, and so you should cut yourself quite a lot of slack. Maybe you can give a bit to GiveWell’s top charity, and the rest where you wanted to give–or maybe you can just give everything where you want to and ignore GiveWell. You’re already way nicer than the 95% of people (totally made that up, by the way) who don’t give anything away at all!

It’s possible that the rationalist community lends itself more easily than others to having really zealous converts to things, if only because pure logic doesn’t understand the concept of “slack-cutting.” I think it’s important to reintroduce that concept, one way or another, or you’ll go crazy.

I have a different issue with GiveWell– it specializes in charities whose effect is easy to prove.

However, while it might be harder to prove that charities which, for example, are trying to prevent and/or stop wars are effective, their work still might be very important.

Peter,

I think your thought experiment does show that you can often get more bang-for-your buck in terms of numbers of lives saved by aiding faraway people.

Yet my critique of standard EA is more fundamental. Who says that maximizing the number of lives saved in the present is the most ethical use of your money? This is a question of values, and GiveWell’s calculations cannot help us answer it.

Let’s say in your example that people further away were less productive, while people close by were responsible for most technological and medical development. Let’s also say that people further away from Bob and Alice had increasingly different values from Bob and Alice, and were more likely to do things that Bob and Alice considered destructive or abhorrent. Or maybe they were even hostile to Bob and Alice’s shared culture.

If Alice factors in the productivity and behaviors of faraway populations into her utilitarian analysis, then she would be less inclined to aid them. It’s not guaranteed that the recipients of Alice’s aid will do something abhorrent or antisocial, but it’s a possibility that serious consequentialists should be considering.

The problem with standard EA is that it is purely need-based and merit-blind, while focusing only on lives saved in the present. It is not clear that this approach will promote human welfare over the long term, due to negative externalities, opportunity cost, free riding, tragedy of the commons, and other unintended consequences.

Effective altruism side-steps the ethical question of why we should value maximum number of lives saved in the present regardless of opportunity cost or negative externalities. And even if we grant that our goal is to maximize the number of lives saved, it is not clear that EA is actually effective at accomplishing this goal over the long term.

In contrast, reciprocal altruism and civic altruism (arguably both forms of eigenaltruism) have a long track record of building successful societies. Eigenaltruism gives people an incentive to be prosocial and altruistic if they want to get any goodies.

By aiding faraway people, there is an opportunity cost of not helping your own people. Furthermore, you are helping those faraway people accomplish their goals. This seems like an expression of loyalty towards the faraway group.

Whether EA is a good return on investment depends on your values and your time preference. The fact that you are “diversifying” your altruist strategy hints at the uncertainty of the EA position.

Maximizing strangers’ lives saved in 2015 is nice but seems to have a really low time preference. I am more interested in aiding people who are productive, altruistic, and share my values. This means investing in people and communities that I know.

I often wonder, in the toting up of how many lives saved in faraway Miseristan, how many of those lives saved belong to the same person. A vaccination on Monday saves him from Disease X. On Tuesday a parcel of food saves him from starvation. On Wednesday a net saves him from malaria. On Thursday some clinic saves him from dying from the poison he swallowed from using the net to catch fish. On Friday an improved net saves him from getting poisoned again. Next Wednesday the net wears out and he gets a new net. Etc.

I’d rather go, one person one life. Save a life that’s likely to stay saved, so that person can continue being productive and find some technical solution to removing the misery from Miseristan in the first place.

That’s a good point, actually, I hadn’t thought about that. I doubt it accounts for more than a factor of 10 or so, though–I think malaria nets last longer than a week. 🙂

Yeah, I should have said a parcel of food saves him from starving in January; another parcel of food saves him from starving in February. Etc.

Exactly. If you save someone’s life, what happens next? Effective altruists act like this question is not their department, but if they are really consequentialists, then it should be.

Say you save some guy’s life in faraway Miseristan. Then he goes and beats his wife, stones the local gay person, engages in slash-and-burn agriculture or other behavior that destroys the local carrying capacity, or joins a militia and kills people with a machete. Your money enabled him to do that.

Then he has 4 kids which have the same problems he does, and also need aid. This is really not an attractive scenario for the use of my money.

Of course, most aid recipients in most places won’t do horrible things. But the prevalence of antisocial population among recipient populations should be a concern to consequentialists. Unfortunately, the further away an aid recipient is, the less I know about their values and whether I actually want to be supporting them.

GiveWell can tell us who is most needy, but it can’t tell us who is most worthy.

Great point. I used to give money to AMF, but a friend explained that it was being used to save black people, so I switched all my donations to Stormfront. I now have seven Iron Crosses on my profile signature.

Good thing I had already finished my coffee. Literally lol’d.

It’s a shame you’re probably going to be banned. Since Jim was permabanned we haven’t really had a New Right counterweight to Multi & co. Plus snark is always appreciated.

This is one of the funniest reductios ad absurdum that I’ve ever seen.

On the question of who is most worthy, I go by the words of Sigrid Undset: “Customs change as time goes by, and people’s beliefs change, and they think differently of many things. But the hearts of men never change, not in any age.”

If I was born in Rwanda, I’d like to think that I wouldn’t have been among the murderers during the genocide. But I can’t know, can’t prove. I can’t confidently assert that my heart (in Undset’s terms) is any better than that of any genocidal Hutu.

I assume as a matter of principle that whatever variation there may be in situation-independent courage, laziness, or evil, cannot be credited to myself.

Maybe the recipient of the food package goes on to be a bad human, but that doesn’t matter. By the golden rule, I must ask: If I was that person, would I want to be given the food package? And the answer is still a conditional yes. Maybe that would indirectly lead to more evil, but then that’s on the recipient, not me.

Some peoples are more tribal than others. When was the last time that Scandinavians were chopping each other up? If you are from a population with low tribalness, then you and your population would not have the same values and behaviors as a highly tribal population like Rwandans. Humans don’t all have the same sort of hearts.

This really sounds like virtue ethics or some sort of Rawlsian veil of ignorance. Which is OK, but it doesn’t really seem particularly utilitarian or consequentialist, which I thought was the point of effective altruism.

– If indeed there are externalities of aid, then we shouldn’t just consider the perspectives of the recipients, we should also consider the perspectives of whoever suffers the externalities.

– There is an opportunity cost of giving. Should we also consider the perspective of other people we could give to?

– Lots of people want lots of things. This isn’t necessarily a reason to give it to them. A consequentialist should consider the long-term consequences and incentives from giving to one person over another. There are few socioeconomic pursuits where one can be “effective” without considering those things.

– You should care about someone if they are in your circle of concern. But why should someone be in your circle of concern in the first place? That’s the question I’m trying to raise by talking about worthiness. Focusing in on any one person’s perspective loses the big picture in any ethical question where we are trying to weigh multiple people’s interests.

– From a consequentialist perspective, if you knowingly enable someone to do evil, then isn’t that partly on you?

– Speaking of the golden rule, does it matter if it runs both ways? For instance, if their country were socioeconomically advantaged over yours, would they give you charity? Would their circles of concern include you? Chances are, they would not, because populations vary in charitable behavior and circle of empathy is a very Western thing. Note that propensity to charity and altruism is not merely a factor of wealth. Groups really differ in values, and they are not just richer or poorer versions of the same psychology.

Personally, I have trouble caring about the plight of people who wouldn’t help me if our socioeconomic statuses were reversed, at least, not enough to fork over significant percentages of my money.

Empathy is a limited resource and we should spend it wisely. Dwelling on some particular suffering can cause us to give it undue weight in relation to other factors like other people’s suffering and unintended consequences of aiding them. I know it sounds kind of cold to discount empathy in favor of opportunity cost and other game theoretic considerations, but I really do think that those considerations are more virtuous for the long-run.

In most interpersonal social interaction, we cooperate with altruistic, prosocial people and avoid cooperating with antisocial people. If we did anything else, it would create really perverse incentives.

If people want to be charitable for reasons of warm fuzzies, that’s fine, but the ethical arguments for standard effective altruism seem to show weak utilitarianism, consequentialism, and maybe even virtue ethics. Well, they aren’t terrible arguments by the standards of philosophy, but most philosophical arguments don’t try to take your money. It’s like people are exaggerating the quality of EA arguments for some other psychological or political reasons.

I’m worried that this is a mugging of scrupulous people.

Herald, you’re really missing the point here. If some people really are more tribal than others, then I can’t blame them, can I? I would also be more tribal if I was one of those people – or at least I can’t assume I wouldn’t be, which is the point.

That humans have the same sort of hearts means that none of us can take credit for our own good morals.

“Who should be in your circle of concern” is a classic question, but it’s more often asked in the form “who is my neighbour?”. Jesus answered by telling a story about an unsympathetic person (a hyperconservative scripture-only fundamentalist – what Samaritan meant before it came to mean compassionate person) who nonetheless acted with compassion.

The implied answer is, as I read it: If you can imagine them acting with compassion towards you, then it doesn’t matter if they otherwise are unsympathetic, your opponents etc. They are your neighbours.

No one is mugging anyone. You’re free to help distant people or nearby people or neither. Just don’t try justifying it by declaring some people more inherently deserving than others, if you consider them people at all.

Harald,

Whether humans have the same sort of hearts depends on what you mean by “same.” In some ways humans are the same, in other ways they are different.

I think humans are sufficiently different and conflicting in values that some humans end up at the periphery of my circle of concern, or even outside it. This doesn’t mean that I don’t view them as people, and it’s nothing to do with credit or blame. In fact, being tribal is totally normal for humans, even more normal than the leapfrogging of Westerners and EAs.

Just because I don’t think someone is worthy of getting my money, it doesn’t mean that they are unworthy in some kind of cosmic sense. Other people (such as people of their own tribe, or people with aligned interests) might view them as worthy.

I do make a claim that being altruistic towards altruistic people (instead of non-altruistic people) is more likely to advance human welfare over the long term (which I am calling “eigenaltruism”). There are numerous reasons you could disagree with that notion on empirical or moral grounds, but if you grant it, then some people are more worthy than others.

I believe that being selectively altruistic is typical throughout history and geography and only sounds harsh to fiercely anti-tribal Western ears.

Your view seems to rest on a level of similarity between people that I don’t believe in. Just because you would be a good Samaritan to someone, it doesn’t mean that they would do the same for you. Also, I reject Rawlsian veil of ignorance arguments about considering what you might think or do if you were a totally different person; I do not find these thought experiments useful for deciding what to do as the person you actually are.

There is a danger of typical mind fallacy when putting oneself in the shoes of a foreign population. Miseristan may be radically different in values from us. Surely there is a point where if people in Miseristan were sufficiently antisocial, and your ability to change their situation was so low, that you would think twice about helping them.

The difference in values between you and me alone underscores the differences between human hearts.

Is this at all alleviated when they estimate how many Quality-Adjusted-Life-Years are saved instead of how many lives are saved? I think some charities do that.

Well, if things are like that in Miseristan, then we won’t have to worry too much about Miseristan for long, because before too long everyone there will be dead. Conclusion: nowhere is like Miseristan.

As Ghatanathoah says, if you look at QALYs saved per dollar for your intervention in context (something the EA people seem to be keen on) then that sorts that problem out.

That’s part of my point; donating to charity no. 93 (providing fishing nets) instead of charity no. 1 (malaria nets) may work out more efficiently, as people with proper fishing nets will not be using malaria nets to catch fish and getting poisoned 🙂

That’s a fully general counterargument against expected utility.

Compare: “Schizophrenics aren’t insane, but they do have an unusual epistemology.”

One particularly important empirical question is “Are you isolated from your natural environment?” Because if not, don’t try leapfrogging loyalties. That shit will get you killed, son.

If your “value system” is extremely dangerous in your natural environment, this suggests (but does not prove) that there may be something wrong with you.

For a very small elite, leapfrogging loyalties is an effective machiavellian strategy to maintain power. For everyone else, it’s like worshipping John Frum, but without expecting to get any cargo.

Effective altruists are no more “insane” than ordinary progressives, but whether progressives are sane is another question. “Insane” is a strong claim. For now, it is enough to note that leapfrogging loyalties would be harmful in many past or present environments. We should also consider whether there might be negative externalities or opportunity cost in the modern Western environment.

Nicely said.

This is right. In my experience, if you peer into any leapfrogger what you will discover is either a serious case of self-hatred or a kind of poisonous othering of people nearly but not quite like the leapfrogger.

The phenomenon Scott discussed in “I Can Tolerate Anything Except The Outgroup” is also in play here. When they’re not actively self-hating, my observation is that many leapfroggers are Blue-tribers for whom leapfrogging is just another way of othering the Reds.

The inverse almost never occurs. In this respect, I – though not Red Tribe myself – consider the Reds to be more sane. And it’s why EA has almost no appeal for me. I hear the logic of the arguments, but the emotional sentiments driving them strike me as perverse. Anti-survival.

As AirGap says, “That shit will get you killed, son.” It’s fragile and decadent, a luxury of wealthy elites in a time when the Gods of the Copybook Headings have not yet fully returned in terror and fire.

Be nice–EAs aren’t “othering” anyone. They’re doing what logic says is the right thing to do, without flinching when it makes them sacrifice (which is more than can be said of me).

And while it’s entirely true that EA is a “luxury of wealthy elites,” it’s in no way “fragile and decadent.” You know what’s fragile and decadent? A Rolex watch. That’s the sort of thing that wealthy elites have been spending their money on for centuries. So when wealthy elites start using their money for good instead, don’t insult them for it. You may not want to participate yourself, but please at least realize that EAs are worthy of praise, not derision.

The claim “Rolex watches are fragile and decadent” (which I agree with) is in no way exclusive of the claim “Leapfrogging is fragile and decadent.”

You have made a weak, emotionally-loaded argument. That suggests, though it does not prove, that your beliefs in this area are unsound.

When I catch myself doing this, I self-audit for unsoundness. It’s a practice I recommend.

I think what esr is getting at is that EA grows out of the fragile, decadent, othering and machiavellian weltanschauung. Unlike the machiavellian elites, EAers are sincere. Or at least I believe that the sort who go to LW meetups are. Whether this exculpates or inculpates them is not clear.

@ESR: I suppose it’s true that giving your money to charitable causes is “decadent” in the sense that it requires spending a lot of money. But if that’s your definition, it’s awfully hard for a rich person to do anything not decadent without making use of their assets.

And I suppose it’s also true that donating money is more “fragile” than not doing so in the sense that it’s probably mildly dangerous for the donator (such as if the donator contracts a really expensive-to-treat disease).

But that doesn’t mean that giving your money to efficient charitable causes isn’t the right thing to do. In fact, the question of whether EA is fragile or decadent or both is completely orthogonal to whether or not it’s the right thing to do. And I guess since I think that one ought to do this thing, you attaching these negatively-loaded words to this thing (even if they may have accurately described said thing) bothered me, since I thought that you may have implicitly been arguing that it was not the right thing to do. I apologize if that wasn’t the case.

I’ll freely admit I do have emotions about this. I have a couple friends, who I deeply admire, who decided to be EAs (and even chose their career paths to be more effective). The implication that they did it in order to other people rather than to help people struck a nerve.

I guess, we probably don’t even disagree that much. I would appreciate it, though, if you would agree with me at least that EAs are being nice by doing what they’re doing, even if you don’t also want to do it.

P.S. I really like The Cathedral and the Bazaar. Thanks for writing it!

EDIT: Added “@ESR:” to the start of the comment to avoid confusion.

This is not what either esr or I said. You need to work on reading for content. Whereas esr bailed (wisdom of age, presumably), I tried to write you an explainer, which provoked

You’ll notice, if you read more carefully, that I did essentially say that. Your response did cause me to briefly consider taking it back, though.

I don’t think you’re stupid; your writing suggests otherwise. But something emotional or political or whatever is preventing you from engaging your brain fully when attempting to comprehend esr’s & my comments. Nothing to be ashamed of. It happens to the best of us. But it won’t go away on its own.

Apologies–your explainer appeared before I was done with my reply to ESR, so I didn’t see it until I’d already written my reply. I do appreciate that you called EAs “sincere,” and I consider that to be what I was asking for. In fact, I agree with pretty much everything you’ve said. ESR, on the other hand, was implying that EAs do what they do possibly because they hate themselves, possibly because they want to other their neighbors–and that characterization I do take issue with. And unless I’m quite a lot worse at reading comprehension than I thought (and maybe I am), ESR did, in fact, directly say that EA (a form of leapfrogging) was decadent. If I was in error about either interpretation, I’ll apologize to him.

The history of human progress is enabling strategies that get you killed in the ancestral environment. Without strategies that get you killed in the ancestral environment (occupational specialization and dense populations, most obviously) you don’t get OUT of the ancestral environment.

+1

I’m replying to my own comment because the blog interface is only showing me a “Hide” button for Baby Beluga’s.

Beluga, I think your dichotomy between “nice” and “self-hating” is mistaken. There are lots of reasons for prosocial behavior; one of them can be hating yourself enough that you only feel you are redeemed by how much you sacrifice to others. (Some sort of Ayn Rand quote seems indicated here.)

So I can assent to the claim “EA people are nice” while still strongly suspecting that there’s often a pit beneath of hating self and hating people that are enough like self without being so close to self that important affective and tribal bonds take over.

I think this is a common disease in what Scott calls the Blue tribe, and though I have no direct experience of EA people it looks very much to me like a movement that could not have been better designed to concentrate such tendencies.

Perhaps I am wrong about this. In fact, I would prefer to be wrong about it.