When I was younger, my father gave me some advice I will never forget.

“Scott,” he said, “when somebody tells you they want to help you figure out the evidence base behind different supplements, ask them about Vitamin D. If they say it’s useful for anything besides bone health, run away.”

(This actually happened. I know other people have fathers who give advice about Dealing With Adversity or Finding The Right Person, but I like mine just fine. You can pick up Dealing With Adversity as you go along, but evidence-based medicine is important.)

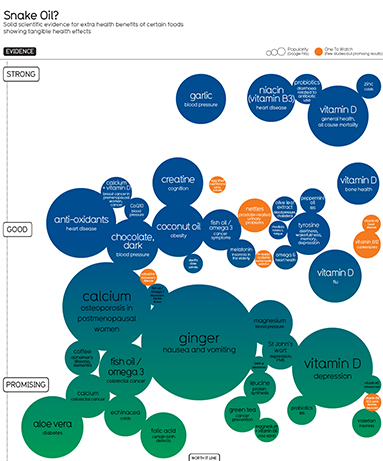

So when four people on my Facebook wall posted a link to a pretty graph with lots of circles about supplements:

Click on picture to see full version – but you might want to read the rest of this first

the first thing I did was look for Vitamin D. And there it was, all the way on the top, having “strong” evidence for “general health” and “all cause mortality”.

I have no doubt that the people putting this together are very smart and very well-intentioned. They drew from exactly the right places – they even checked Cochrane Review! They made all their research public and linked to a bunch of studies proving everything they said – including their claim that Vitamin D is effective for “general health”. So is my father wrong?

No. Their problem is that they are one-sided. Not in the sense of being deliberately biased or having an agenda, but in the sense that their entire procedure consists of searching for studies showing X, counting the number of such studies they find, and then making an “evidence strength” recommendation based on the number (and quality) of positive results.

But suppose we have a very popular supplement that dozens of good randomized studies have been done on. And suppose that ten of these studies have shown it prevents cancer, and so these sites put ten links to studies showing it prevents cancer, give it evidence grade A++, and put it at the top of their list as very highly recommended. This is no good if there are twenty much larger, better-quality studies that show it doesn’t prevent cancer, and this is in fact what’s going on in the vitamin D case.
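To make the difference between counting positive studies and weighing all of them concrete, here is a minimal sketch. Every number in it is hypothetical and chosen only to mirror the scenario above (ten small positive trials, twenty larger null ones); the function names are mine, and the pooling shown is plain fixed-effect inverse-variance weighting, not whatever method any particular site or meta-analysis actually uses.

```python
from math import exp, log, sqrt

# Hypothetical studies as (log relative risk, standard error).
# These are illustrative numbers, not data from any real trial.
small_positive = [(log(0.70), 0.15)] * 10  # ten small trials, each "significant"
large_null = [(log(1.00), 0.04)] * 20      # twenty larger trials finding nothing
all_studies = small_positive + large_null


def shows_significant_benefit(log_rr, se):
    """True if the upper end of the 95% interval is below RR = 1."""
    return log_rr + 1.96 * se < 0


def vote_count(studies):
    """Count studies reporting a significant benefit (the one-sided method)."""
    return sum(shows_significant_benefit(log_rr, se) for log_rr, se in studies)


def pooled_relative_risk(studies):
    """Fixed-effect inverse-variance pooling; returns (RR, lower, upper)."""
    weights = [1 / se ** 2 for _, se in studies]
    pooled = sum(w * log_rr for w, (log_rr, _) in zip(weights, studies)) / sum(weights)
    pooled_se = sqrt(1 / sum(weights))
    return (exp(pooled),
            exp(pooled - 1.96 * pooled_se),
            exp(pooled + 1.96 * pooled_se))


positive_votes = vote_count(all_studies)
rr, lower, upper = pooled_relative_risk(all_studies)
print(f"studies showing benefit: {positive_votes}")
print(f"pooled RR: {rr:.3f} (95% interval {lower:.3f} to {upper:.3f})")
```

Under these made-up inputs the vote count still finds ten "positive" studies to link to, while the pooled interval sits close to 1 and includes it, because the larger trials carry most of the weight.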

II.

Let’s look at this more closely. The pretty colored circle people say that Vitamin D increases “general health” and prevents all-cause mortality. I don’t know exactly what “general health” means, but all-cause mortality is clear enough, and they also have a circle saying it prevents cancer. The research in these two areas is closely linked, so I’ll deal with it together.

They give seven studies that they use to make their point. Of these, two (1, 2) are primarily theoretical works detailing the molecular mechanisms by which vitamin D might prevent cancer and heart disease – solid as far as they go, but the graveyards are littered with convincing theoretical explanations for effects that were later discovered not to exist. Another (1) notes that absurdly high dose vitamin D was found in a trial without a control group to be associated with good treatment response in existing prostate cancer, which doesn’t tell us anything about anything except that particular rare situation and probably not even that. Three (1, 2, 3) are correlational studies – which, again, are a valid type of study, but have some pitfalls. And they especially have pitfalls in vitamin D research where every couple of months someone breathlessly announces that their correlational study has found vitamin D protects against Disease X, when what they actually mean is that Disease X (like practically every other disease) decreases serum vitamin D levels and so the disease state is associated with low Vitamin D levels. A single study (1) is a very good randomized trial in a thousand women finding vitamin D administration decreased cancer.

[edit: a newer version of the pretty circle graph includes some different studies. I may rewrite this part later, but it doesn’t change their conclusion and so doesn’t change mine either]

This evidence isn’t 100% conclusive, but it’s certainly suggestive, and I would have no problem with their claim that vitamin D probably decreases cancer and heart disease if that was all we know. But…

The largest randomized trial ever done on the subject, a 36,282-person behemoth, found zero effect of vitamin D on its two measured endpoints of colon cancer or breast cancer and in fact the vitamin D group had nonsignificantly more cancer than controls. Another large case-control study of 1400 people looking at prostate cancer found that vitamin D didn’t prevent it and might actually make it worse. And a randomized controlled trial of 2700 people investigating all-cause mortality found zilch. And this is ignoring all the little correlational studies that find vitamin D increases cancer risk.

In cases where you have lots of conflicting studies, you go to the meta-analyses. A meta-analysis by the Systematic Evidence Reviews people (who know their stuff) concluded that “Vitamin D and/or calcium supplementation also showed no overall effect on CVD, cancer, and mortality.” Wang et al found much the same (although their conclusions section does a terrible job elucidating this). Autier looks at 172 randomized trials (!) and finds “Results from intervention studies did not show an effect of vitamin D supplementation on disease occurrence”. In a meta-analysis of eighteen trials, Pittas et al find “no clinically significant effect of vitamin D supplementation at doses given.” The Agency for Healthcare Research and Quality did a meta-meta-meta review of eleven systematic reviews (!) and found only that “the majority of the findings concerning vitamin D, calcium, or a combination of both nutrients on the different health outcomes were inconsistent.” And just last week, a very large analysis of hundreds of thousands of patients’ (!) worth of data concluded that Vitamin D supplementation does nothing for “skeletal, vascular, or cancer outcomes” and that the evidence is so clear that it is pointless doing further trials on the issue (this is the first time I’ve heard of a “futility analysis” in statistics, but I like it!). Although there is the occasional meta-analysis that finds in vitamin D’s favor, the medical community doesn’t find them very exciting.

So what is the field’s conclusion from all this disparate data?

UpToDate, the semi-canonical database of recommendations for doctors, says “A causal association between poor vitamin D status and nearly all major diseases (cancer, infections, autoimmune diseases, cardiovascular and metabolic diseases) has not been established. We suggest not administering vitamin D supplements above and beyond what is required for osteoporosis or fall prevention.”

The National Institutes of Health says: “Taken together, however, studies to date do not support a role for vitamin D, with or without calcium, in reducing the risk of cancer…with the exception of measures related to bone health, the health relationships examined were either not supported by adequate evidence to establish cause and effect, or the conflicting nature of the available evidence could not be used to link health benefits to particular levels of intake of vitamin D.”

The US Preventive Services Task Force says: “The USPSTF concludes that evidence is lacking regarding the benefit of vitamin D supplementation, with or without calcium, for the primary prevention of cancer” and gives the practice a grade of D, meaning they recommend against.

The Lancet, the second most prestigious medical journal in the world, ran an editorial by Karl Michaëlsson this month saying that “there is a legitimate fear that vitamin D supplementation might actually cause net harm”.

But what about someone really trustworthy? Wikipedia’s Vitamin D article says simply: “Research has found it unlikely that taking vitamin D supplements has any effect on cancer” and “It unlikely [sic] that taking vitamin D supplements has any effect on cardiovascular disease.”

I don’t want to come out too hard against vitamin D. There are just enough tantalizing hints that, futility analyses be damned, I would not be surprised if later research came out in its favor. And there are some proposed benefits – like depression and fatigue – that I haven’t even looked into, and some other benefits, like psoriasis, that seem to be on firmer ground. If your doctor told you to take vitamin D, or you’re doing well on it at the moment, don’t go off it just on my say-so.

But when the pretty colored circle people say it is the supplement with the most evidence? When they describe the evidence in its favor as “strong”? That’s just wrong.

(Or at least misleading. It probably is the supplement with the most evidence in its favor. It just has even more evidence against it. This is why you can’t just count the number of positive studies.)

III.

The recommendation for vitamin D is mostly harmless. The purported risks are on even shakier ground than the purported benefits, and unless you gobble vitamin D pills like candy, you probably won’t give yourself anything worse than a slightly increased risk of kidney stones (although I feel the need to add that kidney stones really hurt).

But go back to the pretty colored circle chart. Although vitamin D is on the highest echelon of their chart, it is not literally the highest circle. If you really squint, it looks like one other circle might be a few pixels higher than it. That circle is niacin.

…ie pharmacology’s most embarrassing disaster story of the past five years.

There was a time when niacin would have deserved that high spot. In 2010, a meta-analysis of niacin in treating cardiovascular disease found impressive success for the substance, building on about a thirty-year history of smaller, less conclusive trials that nevertheless showed similar levels of success. This is the study cited by the pretty circle chart.

In 2011, Merck wanted to market their own form of niacin and decided to do what they expected to be a tick-the-appropriate-checkboxes type study to prove it was effective (which, in accordance with the ancient tradition of cutesy study acronym names, was called AIM-HIGH). Instead, the niacin was so useless that everyone involved gave up and stopped the study early because it wasn’t even worth their time to continue (caveat: this result may have been influenced by a second drug, laropiprant, given with the niacin). This was likely a good decision, since around the same time they started to notice patients in the experimental group getting way more strokes than seemed natural (the significance of this finding continues to be debated).

This study was followed by a second (in accordance with tradition named THRIVE), which was a mixed success. On the one hand, niacin continued to be absolutely useless in promoting cardiovascular health. On the other, we learned all sorts of exciting new side effects of niacin, like skin rashes, diabetes, increased infection, and my favorite, internal bleeding in the brain. Something like 3% of study participants required hospitalization for niacin-related side effects.

So, long story short, now we don’t give people niacin so much. I won’t say doctors don’t give it at all, because a few do, especially in patients whose cholesterol can’t be controlled any other way, and if your doctor has you on niacin please at least ask why before going off it.

But once again, the pretty circle graph ignores all this subtlety and puts it on the highest rung of their chart, because their methodology of counting the studies that support a supplement ignores the very important ones that don’t.

IV.

In the discussion on Facebook, a friend agreed we should steer clear of this analysis and instead use examine.com, a very large and comprehensive database of supplement information.

But examine’s page on Vitamin D also gives it a Grade A evidence recommendation in preventing cardiovascular disease and grade B recommendation in preventing cancer. The cardiovascular disease entry says the scientific consensus is “50%” across “three studies” (there are dozens to hundreds), and the colorectal cancer risk says the scientific consensus is “100%” across “one study” (again, dozens to hundreds, with likely the majority against it). Perhaps mercifully, there is no page on niacin.

My father thinks examine.com is “a shill for the supplement industry”, but I disagree. I think they are good people, but they are trying to mass-produce medical recommendations. And at least the way they do it, that doesn’t work.

Examine boasts four hundred different supplements and 25000 references to scientific papers. Each supplement can have as many as forty different uses (vitamin D to prevent cancer, vitamin D to increase energy, vitamin D to strengthen bones, etc). Do you think they hired a doctor or biologist to laboriously go through every single one of those forty different uses of vitamin D, hunt down all the literature on it, read all the literature on it, read the systematic reviews of the literature, and come to a conclusion? And then repeat this process four hundred times?

I think someone Googled “vitamin D cancer”, took whatever studies appeared on the first page of results, and entered them into the database. If those studies were in favor of Vitamin D – even if they were from 1995, or had twenty people, or there were other studies on the second page of the Google results that contradicted them – then the scientific consensus is “100% in favor” and the evidence grade is “A”.

[EDIT: Sol Orwell from Examine.com has responded to this claim (1, 2). He says the important parts of his recommendations are not mass-produced, agrees that the Vitamin D information could be improved, and says he is going to fix the way it is presented. I still think you should use more academic or official sites like UpToDate and NIH first for recommendations, and then Wikipedia which is startlingly good for what it is, but I continue to think examine is a good and on-the-level supplementary (no pun intended) resource]

This doesn’t mean examine.com isn’t a useful resource – I purchased their Supplement Guide, and I constantly find them to be a good jumping-off point for further research. It doesn’t even mean the pretty circle graph doesn’t have something to teach you. But treat mass-produced medical recommendations like this the same way your high school teacher told you to treat Wikipedia, back in the days before everyone realized that Wikipedia was actually much more accurate than whatever crappy second-rate source your tech-illiterate high-school teacher wanted you to use: as something that can occasionally bring interesting facts to your attention but which should probably be double-checked with something more official before you start taking a chemical that has “bleeding into the brain” as a side effect.

And remember, when in doubt about any supplement, the best course of action is always to consult your doctor.

(…is A Sentence I Am Required To Say. In reality, your doctor will probably have never heard of the supplement before and just say ‘no’ out of arrogance or on general principle. And then you will not take it and so you will not get bleeding into your brain and die. In conclusion, the system works.)

Aaaaand now the infographic on the linked page (at informationisbeautiful.net) no longer shows Vitamin D as having “strong” evidence… it’s been demoted to “promising.”

Huh.

It might be useful to note in the main post that you distinguish between people who have a deficiency of (for example) vitamin D, and those who don’t. It wasn’t clear to me until I read your comments that you made this distinction.

Thanks for the analysis! I needed this.

I don’t understand the niacin study. They gave people extended-release no-flush niacin along with two other drugs. How is that a fair study to make any conclusions about niacin? The only thing that proves is that extended-release no-flush niacin plus those two other drugs cause strokes and other bad things.

The link “the occasional meta-analysis” is broken by having two spaces where it should have one. (My link is correct.)

I had a fairly favorable opinion of vitamin D and supplement it myself, so I found this quite interesting. For me, the hoped-for benefits are two-fold: I want some sort of insurance against deficiency & spending all my time indoors causing me either psychological/mood problems or overall net health problems; for the latter, all-cause mortality is the number I pay the most attention to as the gold standard of whether something helps or hurts, and some of the cites do indeed touch on all-cause mortality. So I’m going to take a close look and see if the negative impression I get is justified by the cites.

http://www.ncbi.nlm.nih.gov/pmc/articles/PMC150177/ Trivedi et al 2003

To point out the obvious, there aren’t going to be enough deaths among 2700 people to give statistical power to detect a plausible effect like a RR in the .90s. The best you can do is note the effect size (0.88) and wait for a meta-analysis with enough power to hopefully yield a result.
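The power point can be made concrete with a rough sketch. It assumes a two-arm trial with 1,350 people per arm and a hypothetical 18% control-group death rate over the trial period (an illustrative figure, not one taken from Trivedi et al), and uses a normal approximation for a two-sided test of two proportions. The function name and parameters are mine.

```python
from math import sqrt
from statistics import NormalDist


def power_two_proportions(n_per_arm, p_control, relative_risk, alpha=0.05):
    """Approximate power to detect a given relative risk between two equal arms.

    Normal approximation for a two-sided comparison of two proportions;
    the negligible chance of rejecting in the wrong direction is ignored.
    """
    p_treated = p_control * relative_risk
    se = sqrt(p_control * (1 - p_control) / n_per_arm
              + p_treated * (1 - p_treated) / n_per_arm)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    z_effect = abs(p_control - p_treated) / se
    return NormalDist().cdf(z_effect - z_crit)


# Hypothetical inputs: ~2700 people split evenly, 18% baseline mortality (assumed).
power_rr_090 = power_two_proportions(n_per_arm=1350, p_control=0.18, relative_risk=0.90)
print(f"approximate power to detect RR = 0.90: {power_rr_090:.2f}")
```

With inputs like these the power comes out well short of the conventional 80%, which is the sense in which a single trial of this size cannot settle the question either way.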

http://www.ncbi.nlm.nih.gov/books/n/es108/pdf/ pg 37

pg70’s forest plot indicates RECORD & Trivedi random-effects meta-analyze to an RR of 0.94 (.88-1.01).

Didn’t look at all-cause mortality, so I’ll skip this.

But Autier did find an all-cause mortality reduction! Looks like they dug up quite a few studies; besides Trivedi and RECORD above, they have Chapuy et al 1992, Lips et al 1996, Chapuy et al 2002, Meyer et al 2002, Porthouse et al 2005, Flicker et al 2004, & Jackson et al 2006. Yielding a RR of 0.92 (0.86-0.99), consistent with Trivedi & RECORD’s point-estimates in the mid-0.90s. Only 2 point estimates were in favor of increased mortality. (And one of them apparently was quasi-randomized and maybe not really appropriate for inclusion; incidentally, 1/8 is almost statistically-significant even by the lamest possible meta-analysis, vote-counting – binomial test of 1/8 is p=0.07 where null is P=0.5.)
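For anyone who wants to check the vote-counting figure, here is a small sketch of an exact two-sided binomial test using only the standard library. It treats each of the 8 remaining studies as a coin flip under the null (P=0.5) and asks how surprising it is that only 1 points toward increased mortality. The function name is mine, and the two-sided rule used (summing all outcomes no more likely than the observed one) is one common convention, assumed here.

```python
from math import comb


def binomial_two_sided_p(successes, trials, p_null=0.5):
    """Exact two-sided binomial p-value.

    Sums the probability of every outcome that is no more likely
    than the observed one under the null hypothesis.
    """
    probs = [comb(trials, k) * p_null ** k * (1 - p_null) ** (trials - k)
             for k in range(trials + 1)]
    observed = probs[successes]
    # Small tolerance so ties are not lost to floating-point rounding.
    return sum(p for p in probs if p <= observed * (1 + 1e-9))


# 1 of 8 point estimates in favor of increased mortality, null P = 0.5.
p_value = binomial_two_sided_p(successes=1, trials=8)
print(f"p = {p_value:.3f}")
```

This gives 18/256, i.e. about 0.07, matching the figure quoted above.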

Not all-cause mortality.

http://www.ncbi.nlm.nih.gov/books/n/erta183/pdf/

Re-analyzed…? pg127:

Well, of course if you exclude a third of the studies (excluded for only being an abstract? isn’t that like, the definition of publication bias…?) a previous statistically-significant result may no longer hit statistical-significance! Adding 1 more study doesn’t atone for that. Still a positive point-value.

http://press.thelancet.com/vitDfutility.pdf Bolland et al 2014

They seem to have pulled up quite a few studies that none of the previous ones did, which confuses me and makes me wonder whether they’re using ‘mortality’ and ‘death’ in a sense other than ‘all-cause mortality’… But assuming they aren’t, Figure 5 (pg9) still presents the same basic result all the other cites did: an RR of 0.96 (0.93-1.0).

Trial sequential analysis and futility curves are interesting but I’m not sure I understand them correctly, or they’re really appropriate or use reasonable parameters.

As I understand it, they’re basically a corrective against optional stopping – except is there really optional stopping at play with all-cause mortality? That’s not even a primary endpoint for most of the meta-analyzed studies, so it’s hard to imagine that a meta-analysis like Autier would put a stop to any future vitamin D trials and collection of all-cause mortality, especially inasmuch as you can hardly help collecting all-cause mortality data (‘how many of the subjects died? none? great, that’s our all-cause mortality data’), your subjects are either dead or not.

I also am not sure I agree with a 5% futility threshold for mortality: a 5% RR in all-cause mortality seems quite valuable to me. I’d be quite happy to spend $10 a year and a few seconds each morning to get a 5% reduction in risk of death that year!

You linked Autier previously, FWIW.

———–

I’m not sure why it wouldn’t find them interesting. I mean, look! It’s a fairly plausible result since we have at least one firm pathway for why vitamin D might help (falls); every review and meta-analysis turns in a beneficial point-estimate which is not absurdly powerful; the estimates are statistically-significant (when you aren’t playing dubious games in throwing out studies or jacking up alpha in the name of optional stopping); they generally note low heterogeneity among trials; and there’s minimal sign of publication bias. From the cost-benefit perspective, the costs are minimal both economic and in terms of side-effects while the benefit seems real (5% reduction in mortality? yes please!). Why isn’t this interesting?

At this point the question shouldn’t be so much one of internal validity (‘does vitamin D reduce all-cause mortality in studied groups’) because it looks like it does, but rather one of external validity (‘will vitamin D help you and me who are not >50yo women?’). This point I am much more dubious on. If you look back at Autier, 50yo is the youngest any subject was in any of the meta-analyzed trials; while Lyons 2007 had a mean age of 84; I didn’t look at the extra ones in Bolland too carefully but the table on pg3 shows no mean age < 53yo.

Unfortunately, I'd guess that in a younger population the mortality rates are going to be too low for any feasibly-funded RCT or set of RCTs to yield enough evidence to establish whether the benefits hold in that population as well. (I haven't done an explicit power calculation for an RR of 0.95 in a population of 20s/30s yos but I could try to figure out how to do it if anyone really wants me to.) So at least for younger people it remains an uncertain choice in the absence of other reasons like worries about seasonal depression etc.

In case anyone else, like me, *freaked out* about niacin causing bleeding into the brain, here’s the somewhat-tricky-to-find pdf detailing the study: http://www.ctsu.ox.ac.uk/~thrive/HPS2-THRIVE_MainDAP_V1p6.pdf

It was a 2 gram daily dose. By comparison, the WorX (Five Hour Energy clone) on my desk has 0.030 grams (niacin is Vitamin B3; energy drinks usually have somewhat high B-vitamin concentrations), and claims that the PDV is 150%.

Just noting something:

Your algorithm for evaluating medical validity seems to involve

1.) Classifying studies by type. Plausible biochemical mechanisms and correlational studies are weaker evidence than controlled trials

2.) Ranking studies by size. Big studies are better than small ones.

3.) Giving priority to meta-studies.

4.) Giving weight to the recommendations of official medical organizations and the most prestigious medical journals.

These are all heuristics I use (though they’re not the *only* ones I use.) They’re also pretty simple. Developing a “research quality checklist” might go a long way to making layman meta-analysis more effective.

Hi from Examine.com! A few things:

1. Shills? Your dad is a bit off 😉

2. You can see who is on our staff here: http://examine.com/about/#editors – we have 5 researchers + me. And they are all very very qualified individuals.

3. The 50% was on the meta-reviews. Everything in our HEM has been fully read – which includes methodology, and so forth. Our HEM has roughly ~3000 studies, and we’ve been at it for almost 3 years. You can even click on each to see demographic breakdown. So yes – a lot of laborious work. *A lot.* No Dr. Google here.

4. The letter grades and consensus are automatically generated. We do not put those in. The level of evidence (letter grades) is based on the body of research available. So both CVD and risk of falls are “A” for vitamin D because of how many studies are available.

5. Happenstance of timing, but we also often break down media-blown stories, the latest of which in fact covers vitamin D: http://examine.com/blog/vitamin-d-and-bone-mineral-density/

Hope that clears it all up. Any questions, ask away!

Sorry – one clarification, and I’ll admit this is our fault for not being more clear – but the A B C D grades just mean *how much* evidence there is. You can see that we say that taking all of the evidence (of which there is quite a bit), vitamin D likely has a minor effect in decreasing it.

On the other hand, one meta review (leading to a “B” level of evidence) found that vitamin D had a notable effect in decreasing colorectal cancer: http://examine.com/show_rubric_effect.php?id=44&effect=Colorectal%20Cancer%20Risk&selection=all

Hi Sol, thanks for your measured response and as I said above I love your site. I don’t think we’re actually disagreeing.

– What people see on your page and draw their conclusions from are the effect matrix, the letter grade, and maybe the scientific consensus thing.

– As you say, those are automatically generated, or to use my terminology, “mass produced”.

– Using only one meta-analysis is better than using only one study, but there are still probably a dozen meta-analyses, it’s unclear why you chose the ones you did, and other better ones you don’t mention seem to contradict the ones you do.

– You list both Vitamin D decreasing colorectal cancer (major effect size, grade B) and Vitamin D decreasing CVD (minor effect size, grade A). I’m saying there’s a moderately strong consensus in the research and medical communities that it doesn’t do either of those things, I feel like I cited more than enough sources to support this, and that your site doesn’t convey this at all. Do you think that my conclusion about the state of the evidence is wrong, or am I missing exactly what your recommendations are supposed to mean?

Sweet – all we want is your love!

1. That grade is going to go. We’re working on v6 (an internal re-write), and people focus on the letter grade instead of the No Change/Minor/Notable/Strong aspect. Again, our mistake is not being clear enough.

2. The *only* part automatically generated is the level of evidence. That’s it. Even then, all it means is “we have a lot of evidence.”

3. The *only* reason we would not include a meta is if we missed it. Any studies excluded are usually because they deal with multiple compounds, and we explicitly state we excluded them at the HEM. Our Vitamin D HEM has many many meta studies.

4. In regards to CVD, these are the two metas we have

http://www.ncbi.nlm.nih.gov/pubmed/20194238

http://www.ncbi.nlm.nih.gov/pubmed/20031348

In regards to colorectal cancer, this:

http://www.ncbi.nlm.nih.gov/pubmed/17296473

We then did *not* list the studies in the meta as we found the metas to adequately cover them

5. Crossing back, vitamin D is one of those headachy ones because there is *constantly* new research coming out. We tend to tackle each supplement page every 4 months, which means we haven’t had a chance to tackle the Lancet meta yet. So while I didn’t see any data that changes our viewpoint on colorectal cancer, we may downgrade our CVD to “no effect”

6. Lastly, that’s part of the headaches – even our comment on CVD says “The degree of prevention is borderline significant overall” – so by no means did we mean to say that vitamin D really helps with CVD, just that it *may* have a slight effect.

Hope that clears it up. Vitamin D and fish oil tend to be the two biggest headaches because of the plethora of research being published for them (and going through fulltexts instead of a quick bite off the abstract takes time). I think my only point of contention was really “I think someone Googled “vitamin D cancer”, took whatever studies appeared on the first page of results, and entered them into the database. If those studies were in favor of Vitamin D” – we start and end in pubmed, and then visit other sites to figure out any references and sources we may have missed. We have literally zero interest in being cheerleaders for any supplement (best example – fish oil. While the media still loves it, we’re not so enthusiastic).

Nice discussion guys! I’ll be visiting Examine.com instead of the snake oil chart to see if I want to include anything in my Soylent mix.

Thanks. I’ve updated the original post to include a link to your response.

Why do you trust USPSTF over Cochrane?

Just because USPSTF is a couple years later?

If they disagree in their meta-analyses, shouldn’t you ask why?

It appears to me that for all-cause mortality, USPSTF just looks at WHI, N=36k, gets HR=0.91, 95% CI=(.83,1.01), p=0.07 and declares that it doesn’t work. Whereas, Cochrane (p 3 / 6) throws in another 36k people and pushes it over the edge of statistical significance.

When it comes to Cochrane vs USPSTF, I trust the larger N. Maybe those extra 30 studies are crap (from Cochrane??), but it’s definitely not futile to do future studies to decide if a p=0.07 phenomenon is real!

I’m confused at what the Lancet article is saying. I think it is saying that vitamin D does reduce mortality by 4% and it would be really expensive to reduce the confidence interval to a single percentage point.

I don’t think Cochrane and USPSTF conflict. Cochrane said its findings were for institutionalized (probably nursing home-bound) elderly women. USPSTF talked about “premenopausal women or in men” and “noninstitutionalized postmenopausal women.”

It makes sense to me that elderly women in nursing homes would benefit more from vitamin D because they tend to have a LOT of problems with falls and bone fractures, which is where vitamin D has been best proven to be efficacious. Second paragraph of my post, I say “If they say it’s useful for anything besides bone health, run away” and the graph I’m critiquing has bone health in an entirely different circle.

So I don’t think I’m going against Cochrane too badly to say that in the population reading that graph and my blog – presumably not old grannies in nursing homes – the evidence for Vitamin D is lacking, and the evidence for extraskeletal effects is lacking even more.

No, Cochrane, meaning the document jsalvatier and I linked, said that its results were for all adults, not institutionalized women. Could you point to the document you are quoting and give a page number?

Also, the WHI p=0.07 result is healthy elderly women, not institutionalized. If Cochrane is generalizing to men from that, it is an error, but I haven’t determined what studies they are using.

I think the sample is heavily skewed towards elderly women, and I didn’t see cochrane breaking things up by age and gender.

Maybe I’m misinterpreting which document you meant? I thought you meant http://www.update-software.com/BCP/WileyPDF/EN/CD007470.pdf , where right in the abstract it says things like “The mean age of participants was 74 years”, “Most trials included elderly woman – older than 70 years”, “Conclusion: Vitamin D seems to decrease mortality in predominantly elderly women who are mainly in institutions and dependent care.”

Oops…I didn’t read the abstract because I already read the summary, which said that it was true regardless of institutional status. That might be a result of more data in 2012, but I think the 2011 abstract is simply in error. For example, in the discussion section on p27/30, it states the conclusion for “participants living independently or in institutional care.” And, again, the biggest input study, WHI, appears to me to be about independent living. Also, I don’t see anywhere that it tries to distinguish the institutionalized from the free-living.

But, yes, the 2011 study is pretty consistent that it is talking about women, except for the first place I looked. The 2012 summary seems to contradict itself about men.

What the FUCK is wrong with the system? Why is it so fucked up? What the FUCK is wrong with cochrane review? If you can’t trust cochrane to be competent, is there no one you can trust? I looked into vitamin D for several days a couple days last year and literally read nothing about what you’re talking about. What the FUCK?

I am so angry.

Cochrane is pretty competent. Are you talking about the study showing that there might be a very slight mortality decrease for elderly women taking vitamin D? I’m not against that, although I think the causes might be skeletal or might involve genuine vitamin D deficiency in that population.

OK, skeletal problems is a good reason not to generalize from elderly women to the general population. I don’t understand why you think that population would have special deficiency, though.

In general, you have to generalize from the elderly to the young because there’s no other way to get mortality data than to look at dying populations.

I’m calmer now.

I think I was misremembering the results a bit. I was remembering this (not Cochrane review) from 2007 and the Cochrane review. The article I was remembering estimated an effect size of 7% reduction, which would be quite large.

The 2011 Cochrane review also estimated the effect size at 6%.

Also, maybe I wasn’t taking seriously the fact that most of the data is on quite elderly women. Why is that anyway? Isn’t there a pretty solid reason to think that people across the board at high latitudes are getting less vitamin D than their body expects?

I’d really like to get a hold of the data used in these trials. The confidence intervals seem pretty large (9%-2%) given their large total sample size (74k). I get the sense that people aren’t really squeezing all that they can out of their data.

Women around 70 have an annual death risk of 1:65. Over 5 years, that’s about 7.5%. The studies have about 75k observations. Thus the posterior for each of the two groups should have a standard deviation of about

Sqrt( 7.5% * (1-7.5%) / 35000) = .14%
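
The arithmetic above can be checked with a short Python sketch. It assumes the annual risk compounds independently each year and that each arm has roughly 35,000 participants, as in the formula; the function names are made up for illustration.

```python
import math


def cumulative_risk(annual_risk, years):
    """Probability of dying within `years`, assuming a constant annual risk."""
    return 1 - (1 - annual_risk) ** years


def proportion_std_error(p, n):
    """Binomial standard error of an observed proportion p with n observations."""
    return math.sqrt(p * (1 - p) / n)


five_year_risk = cumulative_risk(1 / 65, 5)                  # about 7.5%
std_error = proportion_std_error(five_year_risk, 35_000)     # about 0.14%

print(f"5-year risk: {five_year_risk:.2%}")
print(f"standard error per arm: {std_error:.3%}")
```

Note that this is the uncertainty in each arm's death rate taken separately, not in the relative risk between arms.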

I’m not sure why these meta-analyses are pulling so little out of their data unless there is important heterogeneity, which I remember they did not.

Er, that Cochrane claimed there was no heterogeneity.

See my response to Douglas. Institutionalized elderly women are less likely to go outside (often can’t walk), more likely to be vitamin D deficient (general terrible health and diet), and much more susceptible to the conditions we have strong evidence D helps with: falls and bone fractures.

Why do you expect institutionalized people to have a terrible diet? I expect the institution to provide a balanced diet and that such people would be less likely to be missing micronutrients than people living on their own. But, yes, institutionalized people don’t go outside, so for that vitamin I would expect a problem. And, yes, institutionalized people don’t get enough exercise.

It turns out Cochrane publishes the data they collect for their meta-analyses!

Unfortunately, they only report effect sizes and sample sizes rather than observed statistics, which makes a Bayesian analysis harder.

Running my own Bayesian hierarchical meta-analysis basically confirmed the Cochrane results. Posterior probability of reduced risk is about 94%, with a mean of about .96 RR. The prior on the mean effect size has a significant impact. Evidence seems good, but not as good as I imagined. Publication bias makes me a little nervous.
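
For readers wondering what such an analysis might look like, here is a minimal sketch of one common approach: a normal-normal hierarchical model on the log relative-risk scale, integrated over a grid. It is not the commenter's actual model. It assumes flat priors on both the pooled mean and the between-study spread (the comment above used an informative prior on the mean), it assumes a standard error is available for each study, and the input numbers are invented placeholders rather than the Cochrane data.

```python
import math


def normal_cdf(x):
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def pooled_effect(log_rr, se, tau_max=1.0, grid_size=400):
    """Normal-normal hierarchical model on the log relative-risk scale.

    Assumes flat priors on the pooled mean mu and on the between-study
    standard deviation tau over (0, tau_max). Returns the posterior mean
    of mu and the posterior probability that mu < 0 (that is, RR < 1).
    """
    log_weights, cond_means, cond_vars = [], [], []
    for k in range(grid_size):
        tau = tau_max * (k + 0.5) / grid_size  # midpoint grid over tau
        precisions = [1.0 / (s * s + tau * tau) for s in se]
        var_mu = 1.0 / sum(precisions)
        mean_mu = var_mu * sum(p * y for p, y in zip(precisions, log_rr))
        # Log of the marginal posterior p(tau | data), up to a constant.
        log_w = 0.5 * math.log(var_mu)
        for p, y in zip(precisions, log_rr):
            log_w += 0.5 * math.log(p) - 0.5 * p * (y - mean_mu) ** 2
        log_weights.append(log_w)
        cond_means.append(mean_mu)
        cond_vars.append(var_mu)

    # Normalise the grid weights, subtracting the peak for numerical stability.
    peak = max(log_weights)
    weights = [math.exp(lw - peak) for lw in log_weights]
    total = sum(weights)

    # Average the conditional posterior of mu (normal given tau) over tau.
    post_mean = sum(w * m for w, m in zip(weights, cond_means)) / total
    prob_benefit = sum(
        w * normal_cdf(-m / math.sqrt(v))
        for w, m, v in zip(weights, cond_means, cond_vars)
    ) / total
    return post_mean, prob_benefit


# Hypothetical inputs for illustration only; NOT the Cochrane data.
toy_log_rr = [-0.10, -0.02, 0.05, -0.08]
toy_se = [0.05, 0.04, 0.10, 0.06]

post_mean, prob_benefit = pooled_effect(toy_log_rr, toy_se)
print(f"pooled RR ~ {math.exp(post_mean):.3f}, P(RR < 1) ~ {prob_benefit:.2f}")
```

Swapping the flat prior on the mean for an informative one would shift both outputs, which is the sensitivity the comment describes.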

I was surprised by the large number of tiny studies. Lots of studies with n < 100. Why do these studies get run?

Lots of reasons. People don’t do power analysis. They do it, but wrong and with highly optimistic values. They do it correctly, but can’t get funding. They stop early when some metric hits significance (both peeking & adaptive trials). They stop early when something goes wrong, an investigator leaves/dies/gets-bored, a funder stops funding. (Funny example: while I was googling trial sequential analysis to understand that particular vitamin D meta-analysis, I went looking for R code to see if maybe it would be useful for my own meta-analyses; one of the discussion threads I found was… someone having issues because their drug company had been acquired, was not interested in funding their clinical trial to completion, and wanted to stop halfway through.)

Re: institutional diets: people often don’t like the food an institution serves them. (Observe kids in any school cafeteria.) The tray may be fairly nutritious if you actually ate the whole thing, but someone who gets broccoli instead of the peas they would normally eat at home may just avoid vegetables altogether rather than eat something they didn’t choose.

Yes, there are scenarios in which they’d get a better diet at home, but I’m skeptical that’s typical. How about you survey your clients on whether they ate more vegetables at home?

See Yvain’s counterpoint/giant-neon-warning-sign: https://slatestarcodex.com/2014/01/25/beware-mass-produced-medical-recommendations/

… did you post this comment in the wrong place?

Yes…um. Huh. If everyone could ignore me, that would be great.

(Meant for Rock Star Research, got tabs on phone mixed up)

Hypothesis: certain people have too little vitamin D in their blood, other people have too much, and others have about the right amount (depending among other things on their diet, their skin colour and their exposure to sunshine). Giving them vitamin D would help them, harm them, and do little for them respectively. Studies that don’t take this into account are pretty much useless.

Or am I missing something obvious?

Out of curiosity, Scott, what supplements, if any, do you currently take?

Seconding this as a useful question that I’d love answered.

It always shocks people when they find out it costs money to review the medical literature. But it’s the truth. Evaluating a simple claim (“Is Vitamin D good for you?”) takes a few hours of a doctor’s time. Evaluating a much broader question (“I have chronic pain, what can I do?”) might take hundreds of hours to get a non-terrible attempt at an answer.

> your high school teacher told you to treat Wikipedia

“High school”. “Wikipedia”.

You young kids are funny.

It jarred me too, and I’m three years younger than Scott.

So, the moral of the story is: go to Google Scholar/Pubmed and look for the latest meta-analysis. 🙂

That is precisely the opposite of the lesson. The lesson is that unless you’re highly familiar with the literature, know what you’re looking for, good at statistics and willing to spend a long time on the topic, you’re wasting your time.

Googling will bring up the Cochrane review meta-analysis on vitamin D which shows a large all cause mortality reduction, which is apparently spurious (according to Yvain).

Uh, maybe ignore this.

I take vitamin D supplements because I’ve often found myself very vitamin D deprived during winters (according to actual blood tests rather than self-diagnosis) and that isn’t much fun (for me it manifested as extreme tiredness. I’m not sure that that’s typical). It “appears” to help with general energy levels in the normal case even when I’m not deficient, by which I suspect I just mean that the placebo effect is awesome. It does still make me feel rather guilty about effectively buying into the hype.

I also take vitamin D supplementation in the winter. I don’t know the research on tiredness, but it seems like a very cheap experiment.

Are you worried about bone health?

Or more seriously, is the lesson about supplementation above recommended amounts, assuming no deficiencies either way?

This is useful information to update on. I had heard the vitamin D hype but not the counter-evidence.

(Also, despite what marketers say, “antioxidants” are also pretty much useless for preventing or treating anything other than vitamin deficiency.)

My experience with vitamin D is that a daily dose of 3,000 IU has done wonders to help clear up my psoriasis. No kidney stones yet, and so long as I don’t develop any I don’t see much reason to stop taking the supplements.

I think vitamin D for psoriasis is on firmer ground than the things I mention above.

Very true, I just find it funny that this chart left out a condition with a stronger showing of evidence. Of course psoriasis isn’t nearly as headline-friendly as cancer or heart disease so I probably shouldn’t be surprised.

OK, so this is an entirely tangential question, but I’m curious — what is the difference between “internal bleeding in the brain” and a (hemorrhagic) stroke?

From what I understand, bleeding into the brain is slightly more general: hemorrhagic strokes result from bleeding in the brain (which leads to less blood supply), but bleeding into the brain can also result in other things, like subdural hematoma (depending, I guess, on how you define the “brain”). Note that there are also non-hemorrhagic strokes, so your parentheses seem a bit superfluous.

For example: http://en.wikipedia.org/wiki/Central_serous_retinopathy

Where liquid comes out of the blood vessels under the retina (sort of bleeding, more permeable).

Huh! I never knew that was possible.

Cool.

Or, well, not cool, exactly, but interesting.

And it answers my question. Thank you!

I’ve tried taking supplements now and then, and drifted away from them because they just don’t seem to make me feel better, something that I optimistically expect from things which are good for me.

This includes rhodiola rosea, which some people seem to get a lot out of. I do seem to be the only person who likes the taste, but that’s hardly a reason to keep taking it.

Actually, this reminds me that I should take another crack at vitamin C, which does seem to improve my mood. No double blind tests, but I’d occasionally feel happy for no reason– something which hadn’t been happening otherwise since childhood.